Allosteric conformational ensembles have unlimited capacity for integrating information

Abstract

Integration of binding information by macromolecular entities is fundamental to cellular functionality. Recent work has shown that such integration cannot be explained by pairwise cooperativities, in which binding is modulated by binding at another site. Higher-order cooperativities (HOCs), in which binding is collectively modulated by multiple other binding events, appear to be necessary but an appropriate mechanism has been lacking. We show here that HOCs arise through allostery, in which effective cooperativity emerges indirectly from an ensemble of dynamically interchanging conformations. Conformational ensembles play important roles in many cellular processes but their integrative capabilities remain poorly understood. We show that sufficiently complex ensembles can implement any form of information integration achievable without energy expenditure, including all patterns of HOCs. Our results provide a rigorous biophysical foundation for analysing the integration of binding information through allostery. We discuss the implications for eukaryotic gene regulation, where complex conformational dynamics accompanies widespread information integration.

Introduction

Cells receive information in different ways, of which molecular binding is the most diverse and widespread. Binding events influence downstream biological functions. In the biophysical treatment that we present here, biological functions, such as the output of a gene or the oxygen-carrying capacity of haemoglobin, are quantified as averages over the probabilities of microscopic states. We will be concerned with how binding events collectively determine these probability distributions and will refer to this process as the integration of binding information.

The most proximal form of such integration is pairwise cooperativity, in which binding at one site modulates binding at another site. This can arise through direct interaction, where one binding event creates a molecular surface which either stabilises or destabilises the other binding event. This situation is illustrated in Figure 1A, which shows the binding of ligand to sites on a target molecule. (In considering the target of binding, we use ‘molecule’ for simplicity to denote any molecular entity, from a single polypeptide to a macromolecular aggregate such as an oligomer or complex with multiple components.) We use the notation $K_{i,S}$ for the association constant (on-rate divided by off-rate, with dimensions of $(\text{concentration})^{-1}$), where $i$ denotes the binding site and $S$ denotes the set of sites which are already bound. This notation was introduced in previous work (Estrada et al., 2016) and is explained further in the Materials and methods. It allows binding to be analysed while keeping track of the context in which binding occurs, which is essential for making sense of how binding information is integrated.

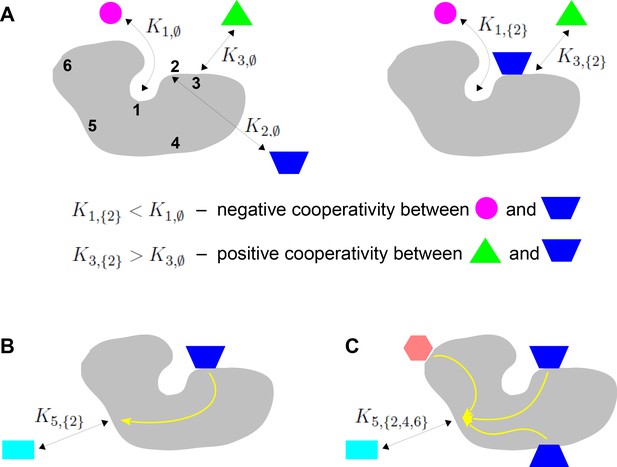

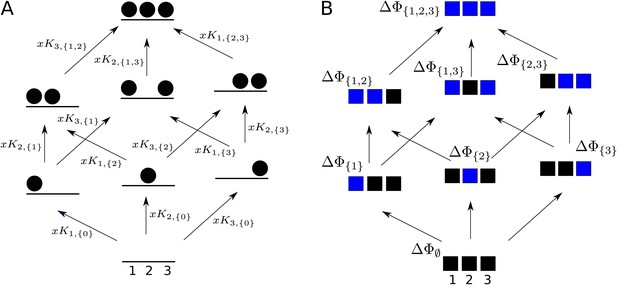

Binding cooperativity.

(A) Pairwise cooperativity by direct interaction on a target molecule (grey). As discussed in the text, the target could be any molecular entity. Left: target molecule with no ligands bound; numbers denote the binding sites. Right: target molecule after binding of blue ligand to site 2. (B) Indirect long-distance pairwise cooperativity, which can arise ‘effectively’ through allostery. (C) Higher-order cooperativity, in which multiple bound sites, 2, 4 and 6, affect binding at site 5.

Oxygen binding to haemoglobin is a classical example of integration of binding information, for which Linus Pauling gave the first biophysical definition of cooperativity (Pauling, 1935). At a time when the mechanistic details of haemoglobin were largely unknown, Pauling assumed that cooperativity arose from direct interactions between the four haem groups. He defined the pairwise cooperativity for binding to site $i$, given that site $j$ is already bound, as the fold change in the association constant compared to when site $j$ is not bound. In other words, the pairwise cooperativity is given by $K_{i,\{j\}}/K_{i,\emptyset}$, where $\emptyset$ denotes the empty set. (Pauling considered non-pairwise effects but deemed them unnecessary to account for the available data.) It is conventional to say that the cooperativity is ‘positive’ if this ratio is greater than 1 and ‘negative’ if this ratio is less than 1; the sites are said to be ‘independent’ if the cooperativity is exactly 1, in which case binding to site $j$ has no influence on binding to site $i$. This terminology reflects the underlying free energy (Equation 1). Association constants and cooperativities may be thought of as an alternative way of describing the free-energy landscape, as we will explain in more detail in the Results. Figure 1A depicts the situation in which there is negative cooperativity for binding to site 1 and positive cooperativity for binding to site 3, given that site 2 is bound.

Studies of feedback inhibition in metabolic pathways revealed that information to modulate binding could also be conveyed over long distances on a target molecule, beyond the reach of direct interactions (Changeux, 1961; Gerhart, 2014; Figure 1B). Monod and Jacob coined the term ‘allostery’ for this form of indirect cooperativity (Monod and Jacob, 1961). Monod, Wyman and Changeux (MWC) and, independently, Koshland, Némethy and Filmer (KNF) put forward equilibrium thermodynamic models, which showed how effective cooperativity could arise from the interplay between ligand binding and conformational change (Koshland et al., 1966; Monod et al., 1965). In the two-conformation MWC model (Figure 2B), there is no ‘intrinsic’ cooperativity—the binding sites are independent in each conformation—and ‘effective’ cooperativity arises as an emergent property of the dynamically interchanging ensemble of conformations.

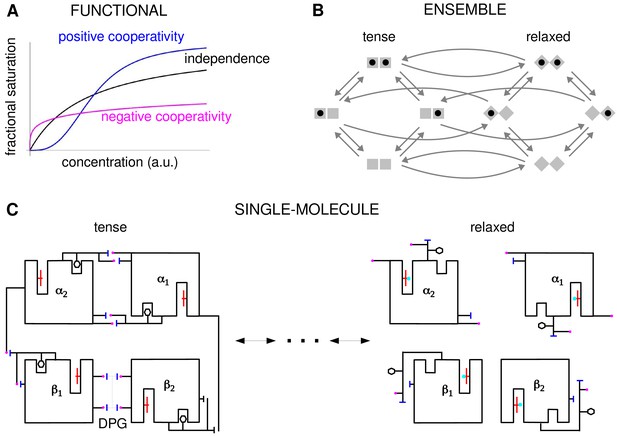

Cooperativity and allostery from three perspectives.

(A) Plots of the binding function, whose shape reflects the interactions between binding sites, as described in the text. (B) The Monod, Wyman and Changeux (MWC) model with a population of dimers in two quaternary conformations, with each monomer having one binding site and ligand binding shown by a solid black disc. The two monomers are considered to be distinguishable, leading to four microstates. Directed arrows show transitions between microstates. This picture anticipates the graph-theoretic representation used later in this paper. (C) Schematic of the end points of the allosteric pathway between the tense, fully deoxygenated and the relaxed, fully oxygenated conformations of a single haemoglobin tetramer, $\alpha_2\beta_2$, showing the tertiary and quaternary changes, based on Figure 4 of Perutz, 1970. Haem group (red); oxygen (cyan disc); salt bridge (positive, magenta disc; negative, blue bar); DPG is 2,3-diphosphoglycerate.

In these studies, the effective cooperativity between sites was not quantitatively determined. Instead, the presence of cooperativity was inferred from the shape of the binding function, which is the average fraction of bound sites, or fractional saturation, as a function of ligand concentration (Figure 2A). The famous MWC formula is an expression for this binding function (Monod et al., 1965). If the sites are effectively independent, the binding function has a hyperbolic shape, similar to that of a Michaelis–Menten curve. A sigmoidal curve, which flattens first and then rises more steeply, indicates positive cooperativity, while a curve which rises steeply first and then flattens indicates negative cooperativity. Surprisingly, despite decades of study, the effective cooperativity of allostery is still largely assessed in this way, through the shape of the binding function, which is sometimes quantified in terms of a sensitivity or Hill coefficient. However, the shape of the binding function, and any associated Hill coefficient, are measures which aggregate over conformations and binding states, and they give little insight into how binding information is being integrated. To put it another way, the underlying free-energy landscape cannot be inferred from the shape of the binding function: as we will see below, different free-energy landscapes can give rise to indistinguishable binding functions. One of the contributions of this paper is to show how effective cooperativities can be quantified, providing thereby a set of parameters which collectively describe the allosteric free-energy landscape and placing allosteric information integration on a similar biophysical foundation to that provided by Pauling for direct interactions between two sites.
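As a concrete illustration of how the shape of the binding function reflects effective cooperativity, the following Python sketch evaluates the classical two-conformation MWC binding function for identical sites (Monod et al., 1965) together with its Hill slope. The function names, the parameter names `L` (ratio of tense to relaxed conformations with nothing bound) and `c` (ratio of the tense to the relaxed association constant), and all numerical values are illustrative assumptions rather than quantities taken from this paper.

```python
import math

def mwc_binding(alpha, n, L, c):
    """Fractional saturation of the two-conformation MWC model with n identical sites.

    alpha is the ligand concentration multiplied by the association constant
    of the relaxed conformation, so it is a non-dimensional concentration.
    """
    bound = alpha * (1 + alpha) ** (n - 1) + L * c * alpha * (1 + c * alpha) ** (n - 1)
    total = (1 + alpha) ** n + L * (1 + c * alpha) ** n
    return bound / total

def hill_slope(alpha, n, L, c, h=1e-4):
    """Numerical slope of log(Y / (1 - Y)) against log(alpha), by central difference."""
    def logit(a):
        y = mwc_binding(a, n, L, c)
        return math.log(y / (1 - y))
    return (logit(alpha * math.exp(h)) - logit(alpha * math.exp(-h))) / (2 * h)

# Hypothetical parameter values, chosen only for illustration.
independent = hill_slope(5.0, n=4, L=1000.0, c=1.0)    # same affinity in both conformations
cooperative = hill_slope(5.0, n=4, L=1000.0, c=0.01)   # tense conformation binds 100-fold more weakly

print(f"Hill slope with c = 1:    {independent:.2f}")
print(f"Hill slope with c = 0.01: {cooperative:.2f}")
```

With equal affinities in the two conformations the curve is hyperbolic and the Hill slope is 1. With unequal affinities the slope exceeds 1 over part of the concentration range, which is the sigmoidal signature of positive effective cooperativity. As the text emphasises, such a slope is an aggregate measure and does not by itself identify the underlying free-energy landscape.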

The MWC and KNF models are phenomenological: effective cooperativity arises as an emergent property of a conformational ensemble. This leaves open the question of how information is propagated between distant binding sites across a single molecule. This question was particularly relevant to haemoglobin, for which it had become clear that the haem groups were sufficiently far apart that direct interactions were implausible. Perutz’s X-ray crystallography studies of haemoglobin revealed a pathway of structural transitions during cooperative oxygen binding which linked one conformation to another (Figure 2C), thereby relating the single-molecule viewpoint to the ensemble viewpoint (Perutz, 1970). These pioneering studies provided important justification for key aspects of the MWC model, which has endured as one of the most successful mathematical models in biology (Changeux, 2013; Marzen et al., 2013).

Allostery was initially thought to be limited to certain symmetric protein oligomers like haemoglobin and to involve only a few, usually two, conformations. But Cooper and Dryden's theoretical demonstration that information could be conveyed by fluctuations around a dominant conformation anticipated the emergence of a more dynamical perspective (Cooper and Dryden, 1984; Henzler-Wildman and Kern, 2007). At the single-molecule level, it has been found that binding information can be conveyed over long distances by complex atomic networks, of which Perutz’s linear pathway (Figure 2C) is only a simple example (Schueler-Furman and Wodak, 2016; Kornev and Taylor, 2015; Knoverek et al., 2019; Wodak et al., 2019). These atomic networks may in turn underpin complex ensembles of conformations in many kinds of target molecules and allosteric regulation is now seen to be common to most cellular processes (Nussinov et al., 2013; Changeux and Christopoulos, 2016; Motlagh et al., 2014; Lorimer et al., 2018; Wodak et al., 2019; Ganser et al., 2019). The unexpected finding of widespread intrinsic disorder in proteins has been particularly influential in prompting a reassessment of the classical structure-function relationship, with conformations which may only be fleetingly present providing plasticity of binding to many partners (Wrabl et al., 2011; Wright and Dyson, 2015; Berlow et al., 2018).

However, while ensembles have grown greatly in complexity from MWC’s two conformations and new theoretical frameworks for studying them have been introduced (Wodak et al., 2019), the quantitative analysis of information integration has barely changed beyond pairwise cooperativity. In the present paper, we will be particularly concerned with higher-order cooperativities (HOCs) in which multiple binding events collectively modulate another binding site (Figure 1C). Such higher-order effects can be quantified by association constants, $K_{i,S}$, where the set $S$ has more than one bound site. The size of $S$, denoted by $\#(S)$, is the order of cooperativity, so that pairwise cooperativity may be considered as HOC of order 1. For the example in Figure 1C, the ratio, $K_{5,\{2,4,6\}}/K_{5,\emptyset}$, defines the non-dimensional HOC of order 3 for binding to site 5, given that sites 2, 4 and 6 are already bound. The notation used here is essential to express such higher-order concepts.

Higher-order effects have been discussed in previous studies (Dodd et al., 2004; Peeters et al., 2013; Martini, 2017; Gruber and Horovitz, 2018) and treated systematically in the mutant-cycle strategy developed in Horovitz and Fersht, 1990 and recently reviewed (Carter, 2017). The latter approach relies on perturbing residues or modules to unravel networks of energetic couplings within a macromolecule. It focusses on the single-molecule scale in contrast to the ensemble scale of the present paper (Figure 2). Mutant-cycle studies have confirmed the presence of substantial higher-order interactions underlying information propagation in proteins (Jain and Ranganathan, 2004; Sadovsky and Yifrach, 2007; Carter et al., 2017). The two approaches may be seen as different ways of analysing the free-energy landscape, as we explain in the Results.

HOCs were introduced in Estrada et al., 2016, where it was shown that experimental data on the sharpness of gene expression could not be accounted for purely in terms of pairwise cooperativities (Park et al., 2019a). In this context, the target molecule is the chromatin structure containing the relevant transcription factor (TF) binding sites and the analogue of the binding function is the steady-state probability of RNA polymerase being recruited, considered as a function of TF concentration (Estrada et al., 2016; Park et al., 2019a). The Hunchback gene considered in Estrada et al., 2016 and Park et al., 2019a, which is thought to have six binding sites for the TF Bicoid, requires HOCs up to order 5 to account for the data, under the assumption that the regulatory machinery is operating without energy expenditure at thermodynamic equilibrium. An important problem emerging from this previous work, and one of the starting points for the present paper, is to identify a molecular mechanism capable of implementing such HOCs.

In the present paper, we show that allosteric conformational ensembles can implement any pattern of effective HOCs. Accordingly, they can implement any form of information integration that is achievable at thermodynamic equilibrium. We work at the ensemble level (Figure 2B) using a graph-based representation of Markov processes developed previously (below). We introduce a systematic method of ‘coarse graining’, which is likely to be broadly useful for other studies. This allows us to define the effective HOCs arising from any allosteric ensemble, no matter how complex. These effective HOCs provide a quantitative language in which the integrative capabilities of any ensemble can be specified. We show, in particular, that allosteric ensembles can account for the experimental data on Hunchback mentioned above, which was the problem that prompted the present study. It is straightforward to determine the binding function from the effective HOCs, and we derive a generalised MWC formula for an arbitrary ensemble, which recovers the functional perspective. Our results subsume and generalise previous findings and clarify issues which have been present since the concept of allostery was introduced. Our graph-based approach further enables general theorems to be rigorously proved for any ensemble (below), in contrast to calculation of specific models which has been the norm up to now.

Our analysis raises questions about how effective HOCs are implemented at the level of single molecules, similar to those answered by Perutz for haemoglobin and the MWC model (Figure 2C). This important problem lies outside the scope of the present paper and requires different methods (Wodak et al., 2019), such as the mutant-cycle approach mentioned above (Carter, 2017). Our analysis is also restricted to ensembles which are at thermodynamic equilibrium without expenditure of energy, as is generally assumed in studies of allostery. Energy expenditure may be present in maintaining a conformational ensemble, for example, through post-translational modification, but the significance of this has not been widely appreciated in the literature. Thermodynamic equilibrium sets fundamental physical limits on information processing in the form of ‘Hopfield barriers’ (Estrada et al., 2016; Biddle et al., 2019; Wong and Gunawardena, 2020). Energy expenditure can bypass these barriers and substantially enhance equilibrium capabilities. However, the study of non-equilibrium systems is more challenging and we must defer analysis of this interesting problem to subsequent work (Discussion).

The integration of binding information through cooperativities leads to the integration of biological functions. Haemoglobin offers a vivid example of how allostery implements this relationship. This one target molecule integrates two distinct functions, of taking up oxygen in the lungs and delivering oxygen to the tissues, by having two distinct conformations, each adapted to one of the functions, and dynamically interchanging between them. In the lungs, with a higher oxygen partial pressure, binding cooperativity causes the relaxed conformation to be dominant in the molecular population, which thereby takes up oxygen; in the tissues, with a lower oxygen pressure, binding cooperativity causes the tense conformation to be dominant in the population, which thereby gives up oxygen. Evolution may have used this integrative strategy more widely than just to transport oxygen, and we review in the Discussion some of the evidence for an analogy between functional integration by haemoglobin and by gene regulation.

Results

Construction of the allostery graph

Our approach uses the linear framework for timescale separation (Gunawardena, 2012), details of which are provided in the 'Materials and methods' along with further references. We briefly outline the approach here.

In the linear framework, a suitable biochemical system is described by a finite directed graph with labelled edges. In our context, graph vertices represent microstates of the target molecule and graph edges represent transitions between microstates, for which the edge labels are the instantaneous transition rates. A linear framework graph specifies a finite-state, continuous-time Markov process, and any reasonable such Markov process can be described by such a graph. We will be concerned with the probabilities of microstates at steady state. These probabilities can be interpreted in two ways, which reflect the ensemble and single-molecule viewpoints of Figure 2. From the ensemble perspective, the probability is the proportion of target molecules which are in the specified microstate, once the molecular population has reached steady state, considered in the limit of an infinite population. From the single-molecule perspective, the probability is the proportion of time spent in the specified microstate, in the limit of infinite time. The equivalence of these definitions comes from the ergodic theorem for Markov processes (Stroock, 2014). These different interpretations may be helpful when dealing with different biological contexts: a population of haemoglobin molecules may be considered from the ensemble viewpoint, while an individual gene may be considered from the single-molecule viewpoint. As far as the determination of probabilities is concerned, the two viewpoints are equivalent.

The graph representation may also be seen as a discrete approximation of a continuous energy landscape, as in Figure 3, in which the target molecule is moving deterministically on a high-dimensional landscape in response to a potential, while being buffeted stochastically through interactions with the surrounding thermal bath (Frauenfelder et al., 1991). In mathematics, this approximation goes back to the work of Wentzell and Freidlin on large deviation theory for stochastic differential equations in the low noise limit (Ventsel' and Freidlin, 1970; Freidlin and Wentzell, 2012). It has been exploited more recently to sample energy landscapes in chemical physics (Wales, 2006) and in the form of Markov State Models arising from molecular dynamics simulations (Noé and Fischer, 2008; Sengupta and Strodel, 2018). In this approximation, the vertices correspond to the minima of the free energy up to some energy cut-off, the edges correspond to appropriate limiting barrier crossings and the labels correspond to transition rates over the barrier.

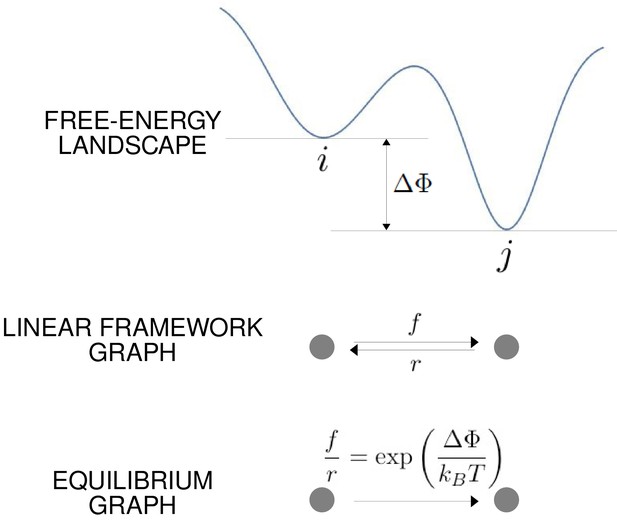

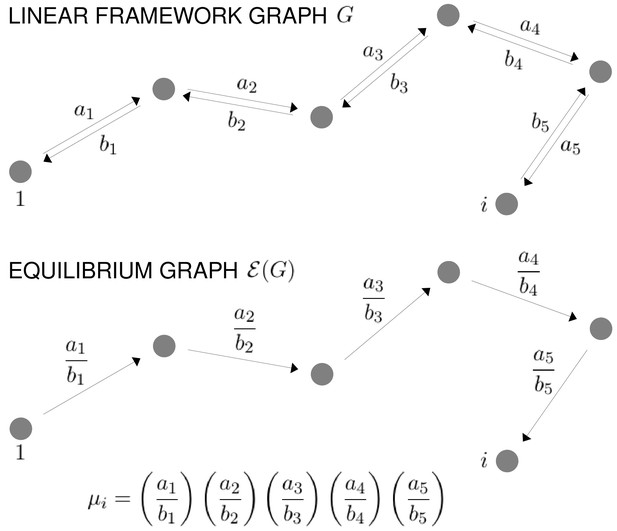

The free-energy landscape and corresponding graphs.

From the top, a hypothetical one-dimensional free-energy landscape, showing two graph vertices, $i$ and $j$, as local minima of the free energy; the corresponding linear framework graph showing the edges between $i$ and $j$ with respective transition rates; the corresponding equilibrium graph whose edge label is the ratio of the transition rates, which is determined by the free-energy difference between the vertices (Equation 1).

The linear framework graph, or the accompanying Markov process, describes the time-dependent behaviour of the system. Our concern in the present paper is with systems which have reached a steady state of thermodynamic equilibrium, so that detailed balance, or microscopic reversibility, is satisfied. The assumption of thermodynamic equilibrium has been standard since allostery was introduced (Koshland et al., 1966; Monod et al., 1965) but has significant implications, as pointed out in the Introduction, and we will return to this issue in the Discussion. At thermodynamic equilibrium, we can dispense with dynamical information and work with what we call ‘equilibrium graphs’ (Figure 3). These are also directed graphs with labelled edges but the edge labels no longer contain dynamical information in the form of rates but rather ratios of forward to reverse rates. These ratios are determined by the minima of the free-energy landscape, with the equilibrium label on the edge from vertex $i$ to vertex $j$ being given by the formula in Figure 3. Free energy is often expressed relative to a reference level, as we will do below, so it will be convenient to write the equilibrium label from $i$ to $j$ as

$$\exp\left(\frac{\Delta\Phi(i) - \Delta\Phi(j)}{k_B T}\right), \tag{1}$$

where $\Delta\Phi(i)$ is the relative free-energy of vertex $i$, $k_B$ is Boltzmann’s constant and $T$ is the absolute temperature (Figure 3). Note that if the edge in question involves components from outside the graph itself, such as a ligand which binds to $i$ to yield $j$, then the chemical potential of the ligand will contribute to the free energy. This contribution will manifest itself in the presence of a ligand concentration term in the edge label, as seen in Figure 4. The equilibrium edge labels are the only parameters needed at thermodynamic equilibrium and the free energies of the vertices can be recovered from them, up to an additive constant. From now on, in the main text, when we say ‘graph’, we will mean ‘equilibrium graph’.
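The relationship between free energies and equilibrium labels can be made concrete with a short Python sketch. It assumes the sign convention that the label on an edge is the exponential of the free-energy drop along that edge, in units of $k_BT$; the function name, vertex names and free-energy values are hypothetical.

```python
import math

def equilibrium_label(phi_from, phi_to, kT=1.0):
    """Ratio of forward to reverse rate on the edge from one vertex to another.

    Assumed convention: label = exp((phi_from - phi_to) / kT), so that a
    downhill edge has a label greater than 1.
    """
    return math.exp((phi_from - phi_to) / kT)

# Hypothetical relative free energies of three vertices, in units of kT.
phi = {"u": 0.0, "v": -1.2, "w": 0.4}

forward = equilibrium_label(phi["u"], phi["v"])
reverse = equilibrium_label(phi["v"], phi["u"])  # reciprocal of the forward label

# The product of labels around any cycle is 1, which expresses detailed balance.
cycle = (equilibrium_label(phi["u"], phi["v"])
         * equilibrium_label(phi["v"], phi["w"])
         * equilibrium_label(phi["w"], phi["u"]))

# A free-energy difference is recovered from the label alone.
recovered = -math.log(forward)  # phi["v"] - phi["u"], in units of kT

print(forward * reverse, cycle, recovered)
```

Only free-energy differences enter the labels, which is why the free energies can be recovered from the labels only up to an additive constant.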

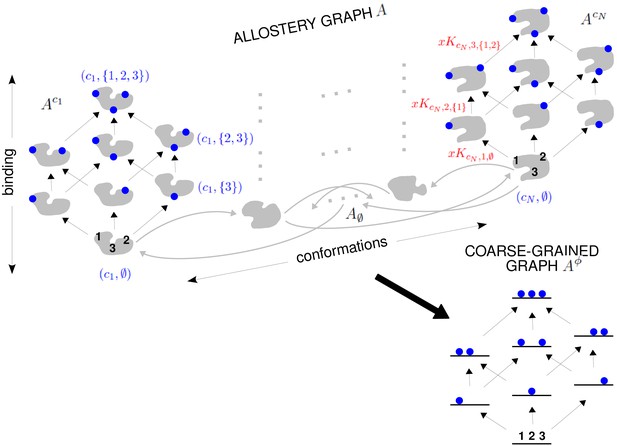

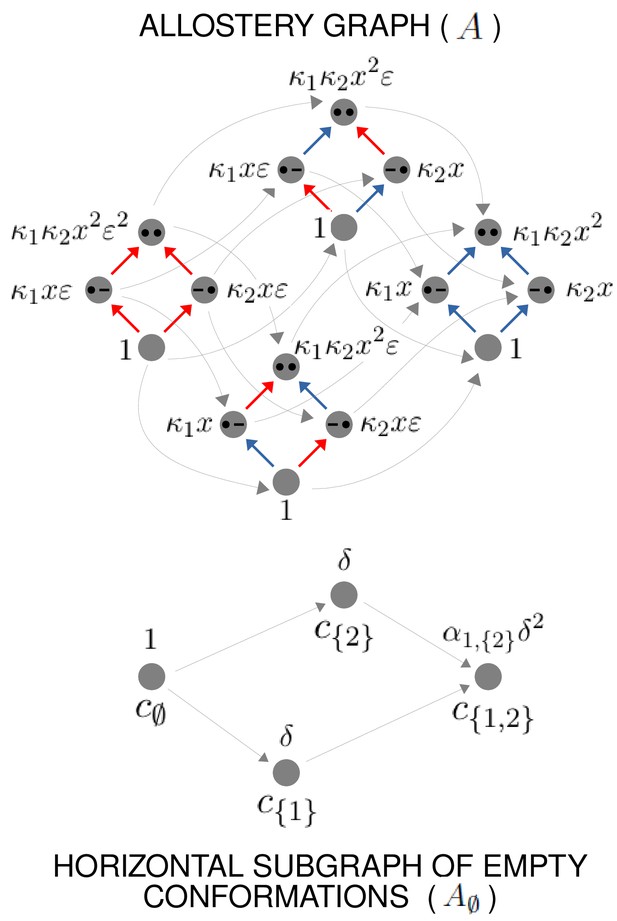

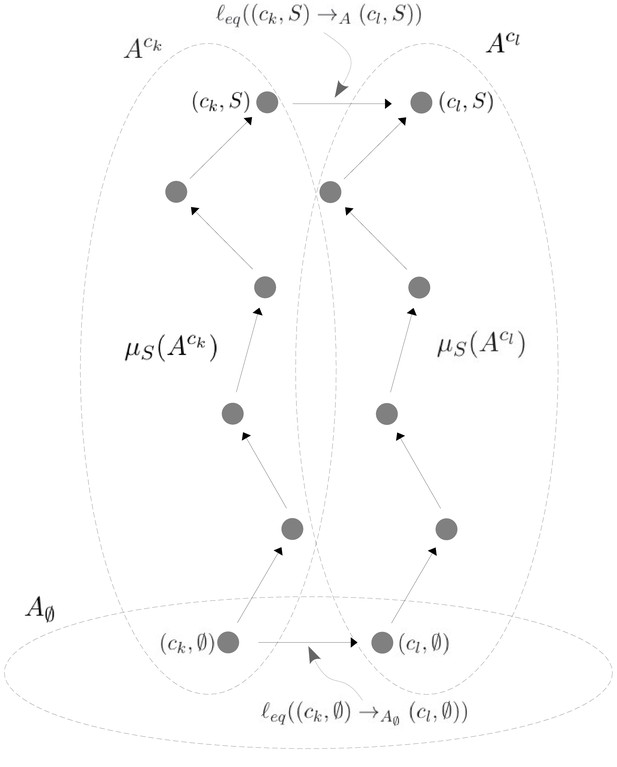

The allostery graph and coarse graining.

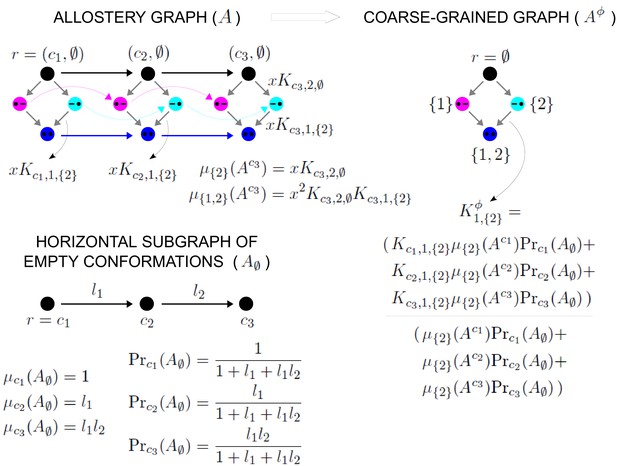

A hypothetical allostery graph (top) with three binding sites for a single ligand (blue discs) and conformations, $c_1, \ldots, c_N$, shown as distinct grey shapes. Binding edges (‘vertical’ in the text) are black and edges for conformational transitions (‘horizontal’) are grey. Similar binding and conformational edges occur at each vertex but are suppressed for clarity. Note that edges are shown in only one direction but are always reversible. All vertical subgraphs, $A^{c_k}$, have the same structure, as seen for the two vertical subgraphs shown (left and right), and all horizontal subgraphs, $A_S$, also have the same structure, shown schematically for the horizontal subgraph of empty conformations, $A_\emptyset$, at the base. Example notation is given for vertices (blue font) and edge labels (red font), with $x$ denoting ligand concentration and sites numbered as shown. The coarse-graining procedure coalesces each horizontal subgraph, $A_S$, into a new vertex and yields the coarse-grained graph (bottom right), which has the same structure as $A^{c_k}$ for any $k$. Further details in the text and the Materials and methods.

We explain such graphs using our main example. Figure 4 shows the graph, $A$, for an allosteric ensemble, with multiple conformations and multiple sites, $1, \ldots, n$, for binding of a single ligand ($n = 3$ in the example). The graph vertices represent abstract conformations with patterns of ligand binding, denoted $(c_k, S)$, where the index $k$ designates the conformation with $1 \leq k \leq N$, and $S \subseteq \{1, \ldots, n\}$ is the subset of bound sites. Directed edges represent transitions arising either from binding without change of conformation (‘vertical’ edges), $(c_k, S) \to (c_k, S \cup \{i\})$ where $i \notin S$, which occur for all conformations $c_k$, or from conformational change without binding (‘horizontal’ edges), $(c_k, S) \to (c_j, S)$ where $k \neq j$, which occur for all binding subsets $S$. Edges are shown in only one direction for clarity (when binding or unbinding is present, we use the direction of binding) but edges are always reversible, in accordance with thermodynamic equilibrium. Ignoring labels and thinking only in terms of vertices and edges, or ‘structure’, $A$ has a product form: the vertical subgraphs, $A^{c_k}$, consisting of those vertices with conformation $c_k$ and all edges between them, all have the same structure and the horizontal subgraphs, $A_S$, consisting of those vertices with binding subset $S$ and all edges between them, also all have the same structure (Figure 4). Structurally speaking, we can think of $A$ as the graph product (Ahsendorf et al., 2014) of a vertical subgraph, $A^{c_k}$, and a horizontal subgraph, $A_S$ (Figure 4).
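The product-form structure can be sketched in Python by enumerating vertices and edges directly. In this minimal sketch a vertex is a pair of a conformation index and a frozen set of bound sites, and every pair of conformations is assumed to be joined by a horizontal edge; that complete connectivity, like the function names, is an assumption made for illustration, since the horizontal subgraph may have any structure.

```python
from itertools import combinations

def binding_subsets(n):
    """All subsets of the sites 1..n, as frozensets."""
    sites = range(1, n + 1)
    return [frozenset(c) for r in range(n + 1) for c in combinations(sites, r)]

def allostery_graph(num_conformations, n):
    """Vertices and edges (structure only, no labels) of an allostery graph.

    Vertical edges are listed in the direction of binding; horizontal edges
    are listed once per pair of conformations. All edges are reversible.
    """
    conformations = range(1, num_conformations + 1)
    vertices = [(k, S) for k in conformations for S in binding_subsets(n)]
    vertical = [((k, S), (k, S | {i}))
                for (k, S) in vertices
                for i in range(1, n + 1) if i not in S]
    horizontal = [((k, S), (j, S))
                  for (k, S) in vertices
                  for j in conformations if j > k]
    return vertices, vertical, horizontal

vertices, vertical, horizontal = allostery_graph(num_conformations=3, n=3)
print(len(vertices), len(vertical), len(horizontal))
```

Each vertical subgraph is the same lattice of binding subsets and each horizontal subgraph is the same graph on conformations, so the counts factorise as expected for a graph product.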

In an allostery graph, ‘conformation’ is meant abstractly as any state for which binding association constants can be defined. It does not imply any particular atomic configuration of a target molecule nor make any commitments as to how the pattern of binding changes.

The product-form structure of the allostery graph reflects the ‘conformational selection’ viewpoint of MWC, in which conformations exist prior to ligand binding, rather than the ‘induced fit’ viewpoint of KNF, in which binding can induce new conformations. Considerable evidence now exists for conformational selection, in the form of transient, rarely populated conformations which exist prior to binding (Tzeng and Kalodimos, 2011). Induced fit may be incorporated within our graph-based approach by treating new conformations as always present but at extremely low probability. One of the original justifications for induced fit was that it enabled negative cooperativities, in contrast to conformational selection (Koshland and Hamadani, 2002), but we will show below that induced fit is not necessary for this and that negative HOCs arise naturally in our approach. Accordingly, the product-form structure of our allostery graphs is both convenient and powerful.

The edge labels are the non-dimensional ratios of the forward transition rate to the reverse transition rate; accordingly, the label for the reverse edge is the reciprocal of the label for the forward edge (Materials and methods). Labels may include the influence of components outside the graph, such as a binding ligand. For instance, the label for the binding edge $(c_k, S) \to (c_k, S \cup \{i\})$ is $x K_{c_k,i,S}$, where $x$ is the ligand concentration and $K_{c_k,i,S}$ is the association constant (Figure 1A), with dimensions of $(\text{concentration})^{-1}$, as described in the Introduction. Horizontal edge labels are not individually annotated and need only be specified for the horizontal subgraph of empty conformations, $A_\emptyset$, since all other labels are determined by detailed balance (Materials and methods).

The graph structure allows HOCs between binding events to be calculated, as suggested in the Introduction. We will define this first for the ‘intrinsic’ HOCs which arise in a given conformation and explain in the next section how ‘effective’ HOCs are defined for the ensemble. In conformation $c_k$, the intrinsic HOC for binding to site $i$, given that the sites in $S$ are already bound, denoted $\omega_{c_k,i,S}$, is defined by normalising the corresponding association constant to that for binding to site $i$ when nothing else is bound (Estrada et al., 2016),

$$\omega_{c_k,i,S} = \frac{K_{c_k,i,S}}{K_{c_k,i,\emptyset}}. \tag{2}$$

HOCs are non-dimensional quantities. If $S$ has only a single site, say $S = \{j\}$, then the intrinsic HOC of order 1, $\omega_{c_k,i,\{j\}}$, is the classical pairwise cooperativity between sites $i$ and $j$. There is positive or negative intrinsic HOC if $\omega_{c_k,i,S} > 1$ or $\omega_{c_k,i,S} < 1$, respectively, and independence if $\omega_{c_k,i,S} = 1$ (Figure 1A).
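A minimal Python sketch of this normalisation, for a single conformation, is given below. The dictionary layout, the function names and the association constants are hypothetical and chosen only to illustrate positive and negative HOCs.

```python
def hoc(K, i, S):
    """HOC for binding to site i given bound sites S.

    K maps (site, frozenset of already-bound sites) to an association constant;
    the HOC is that constant normalised to the one with nothing else bound.
    """
    return K[(i, frozenset(S))] / K[(i, frozenset())]

def classify(omega, tol=1e-12):
    """Name the kind of cooperativity indicated by a HOC value."""
    if abs(omega - 1.0) <= tol:
        return "independent"
    return "positive" if omega > 1.0 else "negative"

# Hypothetical association constants for site 1, in arbitrary (concentration)^-1 units.
K = {
    (1, frozenset()): 2.0,        # nothing else bound
    (1, frozenset({2})): 6.0,     # site 2 bound
    (1, frozenset({2, 3})): 0.5,  # sites 2 and 3 bound
}

pairwise = hoc(K, 1, {2})         # order 1
second_order = hoc(K, 1, {2, 3})  # order 2

print(pairwise, classify(pairwise))
print(second_order, classify(second_order))
```

In this made-up example, binding at site 2 alone enhances binding at site 1, whereas binding at sites 2 and 3 together inhibits it, a pattern that no single pairwise cooperativity can express.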

For any graph $G$, the steady-state probabilities of the vertices can be calculated from the edge labels. For each vertex, $v$, in $G$, the probability, $\Pr_v(G)$, is proportional to the quantity, $\mu_v(G)$, obtained by multiplying the edge labels along any directed path of edges from a fixed reference vertex to $v$. It is a consequence of detailed balance that $\mu_v(G)$ does not depend on the choice of path in $G$. This implies algebraic relationships among the edge labels. These can be fully determined from $G$ and independent sets of parameters can be chosen (Materials and methods). For the allostery graph, a convenient choice vertically is those association constants $K_{c_k,i,S}$ with $i$ less than all the sites in $S$, denoted $i < S$; horizontal choices are discussed in the Materials and methods but are not needed for the main text.

Since probabilities must add up to 1, it follows that

$$\Pr_v(G) = \frac{\mu_v(G)}{\sum_{u \in G} \mu_u(G)}. \tag{3}$$

Equation 3 yields the same result as equilibrium statistical mechanics, with the denominator being the partition function for the thermodynamic grand canonical ensemble. Equilibrium statistical mechanics typically focusses only on vertices and uses their free energies as the fundamental parameters. Directed graphs of the form considered here were previously used in Hill, 1966 and Schnakenberg, 1976 to study systems away from thermodynamic equilibrium, where the graph edges become essential to represent entropy production (Wong and Gunawardena, 2020). We find that the graph remains just as useful at thermodynamic equilibrium because binding and unbinding are the fundamental mechanisms through which information is integrated and these mechanisms must be represented by graph edges. Indeed, as the next section shows, graphs are invaluable for formulating higher-order concepts.
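The calculation of steady-state probabilities from edge labels can be sketched in Python as follows. The function name `steady_state`, the dictionary layout and the numerical labels are assumptions for illustration; the sketch also assumes a connected graph in which each reversible edge is listed once, with the reverse label taken to be the reciprocal.

```python
import math
from collections import deque

def steady_state(labels, reference):
    """Equilibrium probabilities of the vertices of an equilibrium graph.

    labels maps (u, v) to the equilibrium label of the edge u -> v.
    """
    neighbours = {}
    for (u, v), label in labels.items():
        neighbours.setdefault(u, []).append((v, label))
        neighbours.setdefault(v, []).append((u, 1.0 / label))

    # Multiply labels along one path from the reference vertex to each vertex.
    mu = {reference: 1.0}
    queue = deque([reference])
    while queue:
        u = queue.popleft()
        for v, label in neighbours[u]:
            if v not in mu:
                mu[v] = mu[u] * label
                queue.append(v)

    # Path independence holds only if every edge agrees with these products.
    for (u, v), label in labels.items():
        if not math.isclose(mu[u] * label, mu[v], rel_tol=1e-9):
            raise ValueError("edge labels are inconsistent with detailed balance")

    total = sum(mu.values())  # plays the role of the partition function
    return {v: m / total for v, m in mu.items()}

# Hypothetical example: two sites, one conformation, ligand concentration x.
x = 0.5
empty, s1, s2, both = frozenset(), frozenset({1}), frozenset({2}), frozenset({1, 2})
labels = {
    (empty, s1): x * 2.0,
    (empty, s2): x * 3.0,
    (s1, both): x * 12.0,
    (s2, both): x * 8.0,
}
probs = steady_state(labels, reference=empty)
print(probs[both])
```

The consistency check makes the role of detailed balance explicit: the four labels above are not independent, because the two paths from the empty vertex to the doubly bound vertex must give the same product.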

Our specification of an allostery graph allows for arbitrary conformational complexity and arbitrary interacting ligands (we consider only one ligand here for simplicity), with the independent association constants in each conformation being arbitrary and with arbitrary changes in these parameters between conformations. Moreover, the abstract nature of ‘conformation’, as described above, permits substantial generality. Allostery graphs can be formulated to encompass the two conformations of MWC (Marzen et al., 2013), nested models (Robert et al., 1987), the fluctuations of Cooper and Dryden, 1984 and more recent views of dynamical allostery (Tzeng and Kalodimos, 2011), the multiple domains of the Ensemble Allosteric Model developed by Hilser and colleagues (Hilser et al., 2012) and applied also to intrinsically disordered proteins (Motlagh et al., 2012), other ensemble models (LeVine and Weinstein, 2015; Tsai and Nussinov, 2014) and Markov State Models arising from molecular dynamics simulations (Noé and Fischer, 2008).

Relationships between higher-order measures

As mentioned in the Introduction, a systematic approach to higher-order effects using mutant-cycle analysis was developed in Horovitz and Fersht, 1990 and Horovitz and Fersht, 1992 and widely used subsequently (Carter, 2017). The HOCs presented above were introduced in our previous work (Estrada et al., 2016), and the present paper is concerned not with HOCs per se, but with effective HOCs that arise from an allosteric ensemble, as will be described below. Nevertheless, it may still be helpful to explain the relationship between our HOCs and the higher-order couplings arising from mutant-cycle analysis. We are grateful to an anonymous reviewer for making this point to us. The material which follows may be of particular interest to those familiar with the relevant literature but is not required for the main results of the paper.

Both HOCs and higher-order couplings can be seen as different ways of analysing the underlying free-energy landscape. Both approaches make essential use of directed graphs to organise this landscape. Figure 5A shows the labelled equilibrium graph for ligand binding to three sites in a single conformation, while Figure 5B shows a directed graph of the kind used in Horovitz and Fersht, 1990 for defining higher-order couplings for perturbations to three sites. The latter graphs are sometimes called ‘boxes’ (Horovitz and Fersht, 1990). We use ‘sites’ here for either individual residues or the modules described in Carter, 2017. Perturbations are typically mutations, such as replacement of an asparagine residue by alanine. The choice of replacement can make a difference to the results, but this is not usually depicted in graph representations like Figure 5B. The directed edges have rather different interpretations in the two examples in Figure 5: for the equilibrium graph in Figure 5A, a directed edge represents the biochemical process of ligand binding; for the coupling graph in Figure 5B, a directed edge represents an experimental perturbation. In both cases, the vertices have an associated free energy, denoted $\Delta\Phi(S)$, where $S$ is either the subset of bound sites in the equilibrium graph (Figure 5A) or the subset of perturbed sites in the coupling graph (Figure 5B). The $\Delta$ notation is conventionally used in the literature to signify a free-energy difference (Equation 1) or free energy relative to a chosen zero level. A frequent choice of zero is the free energy of empty binding or of the unperturbed state, in which case $\Delta\Phi(\emptyset) = 0$, but we have not assumed this here. Note that the free energies of the equilibrium graph have a contribution from the ligand, which manifests itself in the dependence of the edge labels on the ligand concentration, $x$, while the free energies of the coupling graph do not.
Despite this difference, the free energies provide in both cases the fundamental independent thermodynamic parameters, of which there are for sites, in terms of which both HOCs and higher-order couplings can be rigorously defined.

Figure 5. Graphs for defining higher-order measures.

(A) Equilibrium graph, similar to those in Figure 4, for binding of a ligand to three sites on a single conformation, ordered as shown at the base, and annotated with edge labels. The single conformation has been omitted from subscripts for clarity. (B) Directed graph used to define higher-order couplings, for a macromolecule with three sites or modules (solid squares), ordered as shown at the base, with perturbations indicated by blue colour in place of black. Vertices are annotated with the corresponding free energy.

The definition is easiest for HOCs. Equation 1 tells us that the edge label, , is given by

We omit the single conformation from subscripts for clarity. It follows from Equation 2 that HOCs can be written in terms of free energies as follows:

HOCs are non-dimensional quantities associated to graph edges. As noted above, there are algebraic relationships among them arising from detailed balance at thermodynamic equilibrium. An independent set of parameters is formed by restricting to those for which , of which there are . Taken together with the ‘bare’ association constants for initial ligand binding, , they form a complete set of independent parameters for the free-energy landscape. It follows from Equations 4 and 5 that these parameters can be used to recover the fundamental free energies, so that the two sets of parameters are mathematically equivalent.

Mutant-cycle studies often refer to both Horovitz and Fersht, 1990 and Horovitz and Fersht, 1992, which present apparently different measures of higher-order coupling. The second of these papers introduces what we will refer to as the ‘residual free energy’ of a vertex and denote . This is the free energy remaining at vertex after accounting for the contributions from all proper subsets of . The residual free energy may be concisely defined recursively, starting from , by

We see from Equation 6 that and that . may be calculated directly from but, as the previous example suggests, overlapping contributions of the actual free energies must be cancelled out (Horovitz and Fersht, 1992, Equation 4),

To see why Equation 7 is a consequence of Equation 6, note first that Equation 7 gives the correct result for . It may then be recursively checked by assuming it holds for and substituting into Equation 6 to check that it holds for . Each subset contributes a term arising from for each that satisfies . The sign of coming from Equation 7 is . These terms almost completely cancel each other out because, letting ,

Taking into account the additional sign coming from Equation 6, we recover Equation 7 for . This proves recursively that Equation 7 is the solution of Equation 6 in terms of free energies.
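The equivalence between the recursive definition and the alternating-sum formula can also be checked numerically. The sketch below assumes the recursion has the form described in the prose (the residual at a subset is its free energy minus the residuals of all its proper subsets, with the empty subset as the starting point) and that the closed form is an inclusion-exclusion sum over subsets. The function names and the randomly drawn free energies are illustrative and are not taken from the paper.

```python
from itertools import combinations
import random

def subsets(sites):
    """All subsets of `sites`, as frozensets, smallest first."""
    items = sorted(sites)
    return [frozenset(c) for r in range(len(items) + 1)
            for c in combinations(items, r)]

def residual_recursive(phi, S, cache=None):
    """Residual at S: free energy at S minus the residuals of all proper subsets."""
    cache = {} if cache is None else cache
    if S not in cache:
        proper = [T for T in subsets(S) if T != S]
        cache[S] = phi[S] - sum(residual_recursive(phi, T, cache) for T in proper)
    return cache[S]

def residual_closed_form(phi, S):
    """Alternating sum over subsets T of S, with sign (-1)^(|S| - |T|)."""
    return sum((-1) ** (len(S) - len(T)) * phi[T] for T in subsets(S))

random.seed(0)
sites = frozenset({1, 2, 3, 4})
# Hypothetical free energies, one per vertex of the graph.
phi = {S: random.uniform(-5.0, 5.0) for S in subsets(sites)}

max_gap = max(abs(residual_recursive(phi, S) - residual_closed_form(phi, S))
              for S in subsets(sites))
print(f"largest discrepancy between the two definitions: {max_gap:.2e}")
```

Under these assumptions the two definitions agree to floating-point precision for every subset, which is the numerical counterpart of the cancellation argument given above.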

We can go further to show how is expressed in terms of HOCs. For this, we must assume that . When , ligand binding contributes to , but when that is no longer the case, as we will see. Choose any site . The summation in Equation 7 involves terms . It can be reorganised into a sum of terms of the form , where . The sign of these terms is given by the sign of coming from Equation 7 and is therefore . It is easy to see that, because , there must be equal numbers of +1 and −1 signs. It follows from Equation 4 that

where the double exponent just means that the right-hand side is a ratio in which those terms for which is odd go in the numerator and those terms for which is even go in the denominator. Using Equation 2, we can rewrite as . Since there are equal numbers of each sign, we can cancel each occurrence of between numerator and denominator to yield a formula for residual free energies in terms of HOCs when :

The choice of in Equation 8 is arbitrary. As an illustration of Equation 8, recalling from Equation 5 that , we see that

Equations 8 and 9 show how the residual free energy is built up from binding at any given site to the hierarchy of subsets of the remaining sites.

Residual free energies can be thought of as a measure of collective synergy between sites (Horovitz and Fersht, 1992). They are associated to graph vertices and constitute independent parameters, with no algebraic relationships between them. It follows from Equations 6 and 7 that they are mathematically equivalent to the fundamental free energies. Residual free energies have also been independently described for other purposes in Equation 4 of Martini, 2017.

The higher-order couplings introduced in Horovitz and Fersht, 1990 appear at first sight to be quite different from the residual free energies introduced in Horovitz and Fersht, 1992. The couplings are described by examples for low orders, as are typically encountered in practice (Horovitz and Fersht, 1990). We provide a general definition here by introducing a slightly more complex version. A coupling is associated to a pair, consisting of, first, a vertex, , and, second, an ordered sequence of distinct sites, , none of which are in , so that . The vertex should be thought of as an ‘offset’ within the coupling graph and the sites, as specifying an ordered sequence of perturbations undertaken around . Higher-order couplings are conventionally used in the literature only for , but this more complex version is needed for the definition in Equation 11 below. Associated to such a pair is a th order coupling, which we will denote by . We start by defining the first-order coupling, , for any satisfying the restriction above, in terms of the free energy,

With that in hand, we can define for , again for any satisfying the restriction

where it is clear that must be disjoint from , so that the right-hand side of Equation 11 is recursively well defined. Unravelling Equations 11 and 10, we see that

which corresponds when to Equation 1 of Horovitz and Fersht, 1990. With some more work, it can be seen that Equation 11 reproduces the and examples in Horovitz and Fersht, 1990. Equation 12 expresses the intuition behind higher-order coupling, that it measures the effect of a perturbation relative to the unperturbed state, hierarchically for a sequence of perturbations.

It can be seen quite easily from Equations 5 and 12 that

We note from Equation 13 that ‘order’ is counted differently between HOCs and conventional higher-order couplings: when , Equation 13 relates a higher-order coupling with to a HOC of order 1. Substituting Equation 13 into Equation 11 and continuing the recursion, we find that

at which point the similarity with Equation 9 becomes evident and the pattern emerges. It can be shown by direct substitution in Equation 11 that the following general formula holds, which expresses higher-order couplings in terms of HOCs for any :

Comparing Equation 14 with Equation 8 we see that, despite their very different definitions in Equations 11 and 6, conventional higher-order couplings are the same as residual free energies. Indeed, for ,

Equation 15 may seem strange because a higher-order coupling is defined in terms of an ordered sequence of perturbations, , while a residual free energy depends only on the subset of sites, , without respect to the order of sites. It is a consequence of detailed balance at thermodynamic equilibrium that the order in which the perturbations are undertaken does not matter. For example, it is clear from Equation 12 that . More generally, if ρ is any permutation of the perturbed sites, so that ρ is a bijective function, , then it can be shown that

Note that Equation 16 follows from Equation 15 when . This property of invariance under permutation is referred to as ‘symmetry’ in Horovitz and Fersht, 1990 and is similar to the algebraic relations which give rise to the independent HOCs, with , as described previously.
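The permutation symmetry can be illustrated with a short numerical sketch. It assumes that a first-order coupling is the free-energy change on adding one perturbation to the offset vertex, and that each higher order is the lower-order coupling evaluated with the last site perturbed minus the same coupling without it. This sign convention is our reading of the prose and may differ from the one used in the literature. All names and values are hypothetical.

```python
from itertools import combinations, permutations
import random

def subsets(sites):
    """All subsets of `sites`, as frozensets."""
    items = sorted(sites)
    return [frozenset(c) for r in range(len(items) + 1)
            for c in combinations(items, r)]

def coupling(phi, offset, seq):
    """Coupling for the ordered perturbations `seq` around the vertex `offset`."""
    *rest, last = seq
    if not rest:
        # First order: effect of one perturbation relative to the offset vertex.
        return phi[offset | {last}] - phi[offset]
    # Higher order: lower-order coupling with `last` perturbed, minus without it.
    return coupling(phi, offset | {last}, rest) - coupling(phi, offset, rest)

def alternating_sum(phi, S):
    """Inclusion-exclusion sum over subsets of S, as for a residual free energy."""
    return sum((-1) ** (len(S) - len(T)) * phi[T] for T in subsets(S))

random.seed(1)
phi = {S: random.uniform(-5.0, 5.0) for S in subsets({1, 2, 3})}  # hypothetical
empty = frozenset()
values = [coupling(phi, empty, list(order)) for order in permutations([1, 2, 3])]
spread = max(values) - min(values)
gap = abs(values[0] - alternating_sum(phi, frozenset({1, 2, 3})))
print(f"spread over orderings: {spread:.2e}, gap to alternating sum: {gap:.2e}")
```

With these assumptions, all six orderings of three perturbations give the same third-order coupling, and it coincides with the alternating sum over subsets.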

The equality between the higher-order couplings introduced in Horovitz and Fersht, 1990 and the residual free energies introduced in Horovitz and Fersht, 1992, as described in Equation 15, is presumably well known to those in the field. It seems to be implicitly assumed in Horovitz and Fersht, 1992, but we have not found a clear statement of it in the literature. It would be difficult to formulate one in the absence of a general definition of higher-order coupling, as we have given in Equation 11. The formulas above may therefore be of some value in offering a rigorous treatment.

Each of the measures we have discussed, HOCs, residual free energies and higher-order couplings, offers a different way of analysing the free-energy landscape using the graphs in Figure 5. HOCs are associated to graph edges; residual free energies are associated to graph vertices; and higher-order couplings are associated to sequences of sites, at least when symmetries are ignored. As we have seen above, the three measures are mathematically equivalent. However, they are useful for different purposes. HOCs tell us about the integration of binding information; residual free energies capture the collective synergy between sets of sites; and higher-order couplings show how these same synergies can be extracted from a sequence of experimental perturbations. One advantage of HOCs is that they are non-dimensional quantities in terms of which it is straightforward to calculate the other measures. By doing so, we were able to show rigorously that higher-order couplings are also residual free energies (Equation 15).

Having explained how various higher-order measures are related to each other, we return to the question of how effective cooperativity arises from allosteric ensembles with multiple conformations. For this problem, HOCs are much easier to use than either residual free energies or higher-order couplings. With Equations 8 and 14 now available, effective residual free energies or effective higher-order couplings may be calculated from the effective HOCs that we construct below, but we will not exploit this capability in the present paper.

Coarse graining yields effective HOCs

As MWC showed, even if there is no intrinsic cooperativity in any conformation, an effective cooperativity can arise from the ensemble. This is usually detected in the shape of the binding function (Figure 2A). Here, we introduce a method of coarse graining through which effective cooperativities can be rigorously defined. We illustrate this for the allostery graph, , and explain the general coarse-graining method in the Materials and methods. For allostery, the idea is to treat the horizontal subgraphs, , as the vertices of a new coarse-grained graph, , (Figure 4, bottom right). There is an edge between two vertices in , if, and only if, there is an edge in between the corresponding horizontal subgraphs. It is not hard to see that is identical in structure to any of the vertical subgraphs . We can think of as if it represents a single effective conformation to which ligand is binding, and we can index each vertex of by the corresponding subset of bound sites, . The key point, as explained in detail in the Materials and methods, is that it is possible to assign labels to the edges in so that

with being at thermodynamic equilibrium under these label assignments. According to Equation 17, the probability of being in a coarse-grained vertex of is identical to the overall probability of being in any of the corresponding vertices of . This is exactly the property a coarse graining should satisfy at steady state. It is not difficult to see why a procedure like this should work. The coarse-graining formula in Equation 17 tells us the expected probability distribution on the coarse-grained graph, . Equation 3 can then be used to back out the equilibrium labels on the edges of which give rise to this probability distribution. We provide a more direct way of achieving the same result in Equation 40. This assignment of labels to is the only way to ensure Equation 17 at equilibrium, so that the coarse graining is both systematic and unique. The Materials and methods gives a more careful treatment for coarse graining any linear framework graph, which may not itself be at thermodynamic equilibrium.

Our coarse-graining procedure offers a general method for calculating how effective behaviour emerges, at thermodynamic equilibrium, from a more detailed underlying mechanism. This procedure is likely to be broadly useful for other studies. We note that it applies only to the steady state. It does not provide a coarse graining of the underlying dynamics, which is a much harder problem.

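Because the coarse-grained graph resembles the graph for ligand binding at a single conformation, we can calculate HOCs for it (equivalently, effective HOCs for the allostery graph) just as we did above, by normalising the effective association constants. Once the dust of calculation has settled (Materials and methods), we find that the allostery graph has effective association constants, $K^{\phi}_{i,S}$, and effective HOCs, $\omega^{\phi}_{i,S}$, given by

$$K^{\phi}_{i,S} = \frac{\langle K_{c_k,i,S}\,\mu_S(A^{c_k})\rangle}{\langle \mu_S(A^{c_k})\rangle}, \qquad \omega^{\phi}_{i,S} = \frac{\langle K_{c_k,i,S}\,\mu_S(A^{c_k})\rangle}{\langle K_{c_k,i,\emptyset}\rangle\,\langle \mu_S(A^{c_k})\rangle}. \tag{18}$$

The quantity $\mu_S(A^{c_k})$ is calculated by multiplying labels over paths, as above, within the vertical subgraph $A^{c_k}$. The terms within angle brackets, of the form $\langle X(c_k)\rangle$, where $X(c_k)$ is some function over conformations $c_k$, denote averages over the steady-state probability distribution of the horizontal subgraph of empty conformations, $A_{\emptyset}$: $\langle X(c_k)\rangle = \sum_k X(c_k)\,\mathrm{Pr}_{c_k}(A_{\emptyset})$. The right-hand formula in Equation 18 for the effective HOCs has a suggestive structure: it is an average of a product divided by the product of the averages. The effective parameters in Equation 18 provide a biophysical language in which the integrative capabilities of any ensemble can be rigorously specified.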
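The coarse-graining recipe can be made concrete with a small numerical sketch. It builds a hypothetical ensemble of three conformations and two independent sites, sums statistical weights over conformations to obtain the coarse-grained weights, backs out the effective association constants from those weights, and compares the result with the "average of a product" form described above. The parameter values and function names are illustrative assumptions, not values from the paper.

```python
from math import prod

# Hypothetical ensemble: 3 conformations, 2 sites, sites independent in each conformation.
lam = [1.0, 0.5, 0.2]          # weights of the empty conformations, relative to the first
K = [{1: 2.0, 2: 0.1},         # bare association constants in each conformation
     {1: 0.3, 2: 4.0},
     {1: 1.5, 2: 1.5}]
x = 0.7                        # ligand concentration, arbitrary units

def mu(k, S):
    """Product of labels along a path from empty to S within conformation k."""
    return prod(K[k][i] * x for i in S)

def coarse_weight(S):
    """Unnormalised weight of coarse-grained vertex S: sum over all conformations."""
    return sum(lam[k] * mu(k, S) for k in range(len(lam)))

def k_eff_from_weights(i, S):
    """Effective association constant backed out from the coarse-grained weights."""
    return coarse_weight(S | {i}) / (coarse_weight(S) * x)

def average(f):
    """Average over the probability distribution on empty conformations."""
    return sum(lam[k] * f(k) for k in range(len(lam))) / sum(lam)

def k_eff_from_averages(i, S):
    """Average of (K * mu) divided by the average of mu; sites are independent here."""
    return average(lambda k: K[k][i] * mu(k, S)) / average(lambda k: mu(k, S))

S = frozenset({2})
omega_eff = k_eff_from_averages(1, S) / k_eff_from_averages(1, frozenset())
print(k_eff_from_weights(1, S), k_eff_from_averages(1, S), omega_eff)
```

The two routes to the effective association constant agree, and the ligand concentration cancels from the ratio, so the effective HOC does not depend on `x`. In this particular example the effective HOC is below 1 even though the sites are independent in every conformation.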

Effective HOCs for MWC-like ensembles

The functional viewpoint is readily recovered from the ensemble. A generalised MWC formula can be given in terms of effective HOCs, from which the classical two-conformation MWC formula is easily derived (Materials and methods). Some expected properties of effective HOCs are also easily checked (Materials and methods). First, is independent of ligand concentration, . Second, there is no effective HOC for binding to an empty conformation, so that . Third, if there is only one conformation c1, then the effective HOC reduces to the intrinsic HOC, so that .

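More illuminating are the effective HOCs for the MWC model. We consider any conformational ensemble which is MWC-like: there is no intrinsic HOC in any conformation, so that $\omega_{c_k,i,S} = 1$ and $K_{c_k,i,S} = K_{c_k,i,\emptyset}$; and the bare association constants are identical at all sites, so that we can set $K_{c_k,i,\emptyset} = K_{c_k}$. There may, however, be any number of conformations, not just the two conformations of the classical MWC model. It then follows that $\omega^{\phi}_{i,S}$ depends only on the size of $S$, so that we can write $\omega^{\phi}_{i,S}$ as $\omega^{\phi}_{s}$, where $s = \#(S)$ is the order of cooperativity. Equation 18 then simplifies to (Materials and methods)

$$\omega^{\phi}_{s} = \frac{\langle (K_{c_k})^{s+1} \rangle}{\langle K_{c_k} \rangle\,\langle (K_{c_k})^{s} \rangle}. \tag{19}$$

We see that, although there is no intrinsic HOC in any conformation, effective HOC of each order arises from the moments of $K_{c_k}$ over the probability distribution on the horizontal subgraph of empty conformations. In particular, Equation 19 shows that the effective pairwise cooperativity is $\omega^{\phi}_{1} = \langle (K_{c_k})^{2} \rangle / \langle K_{c_k} \rangle^{2}$.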

In studies of G-protein coupled receptor (GPCR) allostery, Ehlert relates ‘empirical’ to ‘ultimate’ levels of explanation by a procedure similar to our coarse graining, but with only two conformations, and calculates a ‘cooperativity constant’ which is the same as (Ehlert, 2016). Gruber and Horovitz calculate ‘successive ligand binding constants’ for the two-conformation MWC model which are the same as effective association constants, , (Gruber and Horovitz, 2018) (Materials and methods). To our knowledge, these are the only other calculations of effective allosteric quantities. We note that Equation 19 applies to all HOCs, not just pairwise, and to any MWC-like ensemble, not just those with two conformations.

The classical MWC model yields only positive cooperativity (Koshland and Hamadani, 2002; Monod et al., 1965), as measured in the functional perspective (Figure 2A). We find that MWC-like ensembles yield positive effective HOCs of all orders. Strikingly, these effective HOCs increase with increasing order of cooperativity: provided the association constant, $K_{c_k}$, is not constant over conformations (Materials and methods), the effective HOCs of order $s$, $\omega^{\phi}_{s}$, satisfy

$$1 < \omega^{\phi}_{1} < \omega^{\phi}_{2} < \cdots < \omega^{\phi}_{n-1}. \tag{20}$$

This shows that ensembles with independent and identical sites, including the two-conformation MWC model, can effectively implement high orders and high levels of positive cooperativity. Equation 20 is very informative, and we return to it in the Discussion.
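The ordering of effective HOCs can be checked on a small example. The sketch assumes that, for identical and independent sites, the effective HOC of order s is the (s+1)-th moment of the association constant divided by the product of its first and s-th moments, taken over the probabilities of the empty conformations. This follows from summing statistical weights over conformations. The two-conformation parameters are hypothetical.

```python
def moment(K, p, s):
    """s-th moment of the association constant over conformations with probabilities p."""
    return sum(pk * Kk ** s for Kk, pk in zip(K, p))

def effective_hoc(K, p, s):
    """Effective HOC of order s for identical, independent sites (assumed moment form)."""
    return moment(K, p, s + 1) / (moment(K, p, 1) * moment(K, p, s))

# Hypothetical two-conformation ensemble with n = 6 sites.
K = [0.1, 10.0]     # association constant in each conformation
p = [0.9, 0.1]      # probabilities of the two empty conformations
n = 6
omegas = [effective_hoc(K, p, s) for s in range(1, n)]
print([round(w, 3) for w in omegas])
```

For this choice every effective HOC exceeds 1 and the sequence increases with order. Setting the two association constants equal makes every effective HOC equal to 1, as expected when the conformations are indistinguishable to the ligand.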

It is often suggested that negative cooperativity requires a different kind of ensemble to those considered here, such as one allowing KNF-style induced fit (Koshland and Hamadani, 2002). However, if two sites are independent but not identical, so that , then, with just two conformations, the effective pairwise cooperativity can become negative. Indeed, , if, and only if, the values of the association constants are not in the same relative order in the two conformations (Materials and methods). Negative effective cooperativity can arise from non-identical sites and does not need a special kind of ensemble.
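A two-site, two-conformation example shows how the sign of the effective pairwise cooperativity can flip. The sketch assumes independent sites, so that the effective pairwise HOC is the average of the product of the two association constants divided by the product of their averages. It reads "relative order" as whether each site's constant is larger in the same conformation, which is our interpretation of the condition. All values are hypothetical.

```python
def pairwise_effective_hoc(p, K1, K2):
    """<K1 * K2> / (<K1> <K2>) over conformations with probabilities p."""
    def avg(values):
        return sum(pk * vk for pk, vk in zip(p, values))
    return avg([a * b for a, b in zip(K1, K2)]) / (avg(K1) * avg(K2))

p = [0.5, 0.5]   # hypothetical probabilities of the two empty conformations

# Both sites bind more tightly in the second conformation.
same_order = pairwise_effective_hoc(p, [1.0, 5.0], [2.0, 8.0])

# Site 1 prefers the second conformation, site 2 prefers the first.
opposite_order = pairwise_effective_hoc(p, [1.0, 5.0], [8.0, 2.0])

print(same_order, opposite_order)
```

With two conformations, the numerator minus the denominator is the product of the two probabilities and the two differences in association constant between conformations, so the effective cooperativity falls below 1 exactly when those differences have opposite signs.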

Integrative flexibility of ensembles

Equation 18 shows that effective HOCs of any order can arise for a conformational ensemble but does not reveal what values they can attain. Can they vary arbitrarily? The question can be rigorously posed as follows. Suppose that we are considering binding sites and that numbers , for , and , for , are chosen at will. Does there exist a conformational ensemble such that the bare effective association constants satisfy , and the independent effective HOCs satisfy ?

To address this question, we assume that there is no intrinsic HOC, so as not to introduce cryptically what we want to generate. It follows that the sites cannot be identical, for otherwise Equation 20 shows that integrative flexibility is impossible. Accordingly, the bare association constants, for , can be treated as free parameters in each conformation ck. If there are conformations in the ensemble, then there are free parameters coming from the horizontal edges (Materials and methods). Dimensional considerations imply that the effective HOCs cannot take arbitrary values if . Conversely, we prove the following flexibility theorem: any pattern of values can be realised by an allosteric ensemble with no intrinsic cooperativity, to any required degree of accuracy, provided there are enough conformations with the right probability distribution and the right patterns of bare association constants.

To see why this is possible, we outline the argument here and give rigorous details in Theorem 1 in the Materials and methods. Other arguments may of course be possible and the details presented here should not be thought of as the only way for the results to hold. We will use an allostery graph whose conformations are indexed by subsets and denoted . Both binding subsets and conformations will then be indexed by subsets of . To avoid confusion, we will use to label binding subsets and to label conformations, so that a vertex of will be . The allostery graph for the case is shown in Figure 6. We will focus on the horizontal subgraph of empty conformations, , because that is what is needed for calculating effective HOCs using Equation 18. We will take the reference vertex of to be . Recall from what was explained previously that the product of the equilibrium labels along any path in from the reference vertex to the vertex is the quantity , in terms of which the steady-state probabilities of are given by Equation 3. Let . These quantities are free parameters whose values we are going to assign. They are more convenient for our purposes than an independent set of equilibrium labels for . By Equation 3,

Figure 6. Example allostery graph for the flexibility theorem.

There are sites and conformations, giving a 16-vertex allostery graph (top). Vertices indicate a bound site with a solid black dot and an unbound site with a black dash. Sites are indexed in increasing order, , from left to right. The red vertical binding edges carry a factor of ε in their equilibrium labels; the blue vertical binding edges do not, as specified in the text and Equation 60. The vertices of the allostery graph are annotated with the values of , as specified in the text and Equation 61. The horizontal subgraph of empty conformations is shown at the bottom, with conformations indexed below each vertex by subsets of and annotated above each vertex with the corresponding value of , as specified by Equation 24.

The other free parameters that we need are quantities, , to which we will subsequently assign values, in terms of which we will define the intrinsic association constants. We will assume that the sites are independent in each conformation, so that all intrinsic HOCs of are 1. It follows that . We then set if , and if . Here, ε is a small positive quantity which can be chosen to determine the degree of accuracy to which the and are approximated. In the calculations which follow, we will only be interested in terms which do not involve ε as a factor. Because the sites are independent in each conformation, it follows that, in the vertical subgraph, , at any conformation , , whenever . However, if , then acquires factors of ε and so , where ≈ means simply that the related quantities become equal as ε becomes very small. In this case, for our purposes, is negligible whenever . Figure 6 illustrates how this plays out in the allostery graph for .

To calculate the effective association constants, the left-hand formula in Equation 18 shows that we must evaluate the averages and . Using Equation 21,

The only terms in the sum which do not involve ε as a factor are those for which . Furthermore, the definition of given above shows that these terms do not depend on . Similarly, using Equation 21 again,

and the only terms in the sum which do not involve ε as a factor are those for which . These terms also do not depend on . It follows from Equation 18 that

where we have ignored all terms involving ε as a factor.

Equation 22 tells us two things. First, that the effective association constants are approximately proportional to the corresponding κ’s. Hence, if the proportionality constants, which depend only on the , are determined, we can choose the so as to make the bare effective association constants approximately equal to . Second, Equation 22 tells us that the effective HOCs, , are independent of the and depend only on the ,

It remains for us to assign values to the so that the effective HOCs become approximately equal to the α’s.

To do this, assume that, for the conformation , the subset is written as , where the indices are in increasing order, . Because of this ordering, the quantities are given to us by hypothesis. Hence, we can define

Here, δ is another small positive quantity, similar to ε, which can be chosen to set the degree of accuracy to which the β’s and α’s are approximated. As with ε, we will treat as negligible terms in which δ is a factor. Figure 6 illustrates Equation 24 for the case .

It can be seen from Equation 24 that , where has a factor of δ and is therefore negligible as δ becomes very small, . It then follows from Equation 23 that

where we have used as a generic symbol for quantities which are negligible as δ becomes very small. By Equation 24, , so that

This establishes part of what is required. For the other part, we can return to Equation 22 and set

from which it follows from Equation 22 that

Equations 26 and 27 show that the effective association constants and effective HOCs can take arbitrary positive values to any desired degree of accuracy, as determined by ε and δ. This establishes the flexibility theorem. The Materials and methods provides a more careful treatment in Theorem 1, which rigorously establishes the approximation as ε and δ become very small.

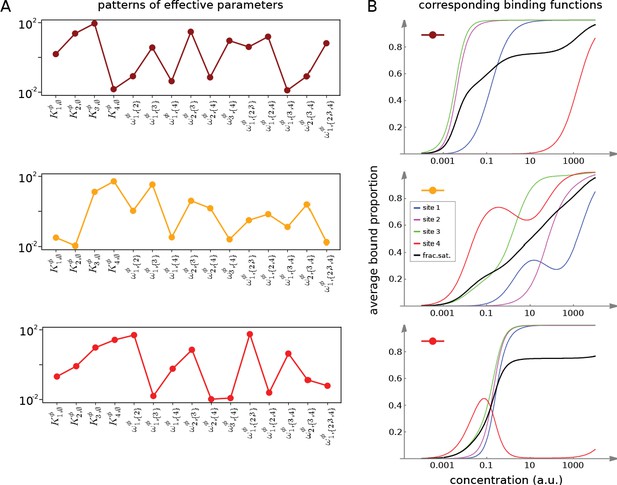

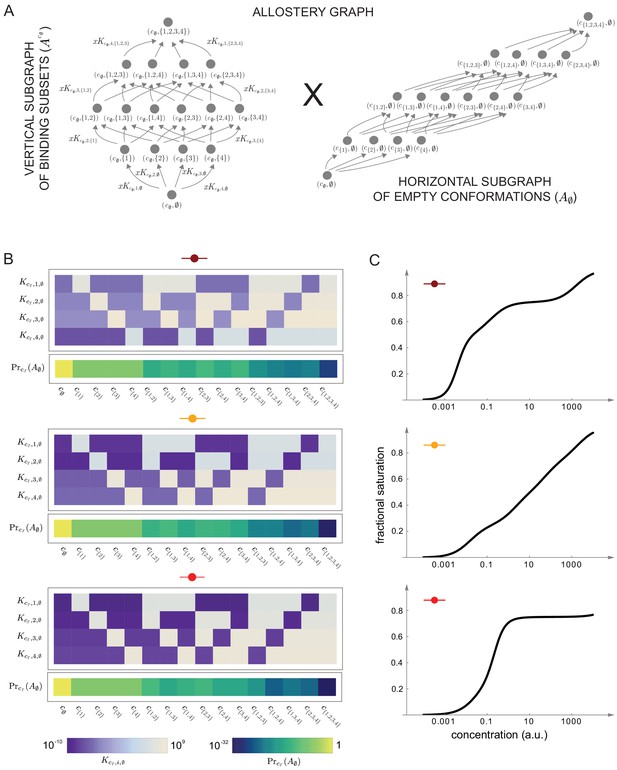

Figures 7 and 8 together illustrate the flexibility theorem. Figure 7A shows three arbitrarily chosen patterns of effective parameters for a target molecule with four ligand binding sites. Figure 7B shows the corresponding overall binding functions (black curves) together with the individual site-specific binding functions (coloured curves). As a matter of thermodynamics, the overall binding function is always an increasing function of ligand concentration. In contrast, the site-specific binding functions may increase or decrease depending on the combinations of positive and negative effective HOCs in Figure 7A, and thereby show more clearly the complexity arising from those different combinations. The implementation of the effective parameters by an allosteric ensemble, as specified by the flexibility theorem, is illustrated in Figure 8. Figure 8A shows the allosteric ensemble for four sites as a product graph with 16 binding patterns and 16 conformations. Figure 8B shows the intrinsic association constants in each conformation coming from the proof of the flexibility theorem, to an accuracy of 0.01. Figure 8C confirms that this allosteric ensemble closely reproduces the overall binding functions in Figure 7B, to within the resolution of the plot.
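The contrast between overall and site-specific binding functions can be reproduced with a minimal sketch. Rather than using the parameters of Figure 7, which are not reproduced here, it assumes a hypothetical three-site molecule described directly by a statistical weight for each binding subset at unit ligand concentration. The weights are chosen so that site 1 binds well on its own, sites 2 and 3 bind cooperatively, and full occupancy is disfavoured.

```python
def binding_functions(weights, n, x):
    """Return (per-site bound probabilities, fractional saturation) at concentration x.

    weights[S] is the statistical weight of binding subset S at unit concentration.
    """
    w = {S: weight * x ** len(S) for S, weight in weights.items()}
    Z = sum(w.values())
    per_site = [sum(v for S, v in w.items() if i in S) / Z for i in range(1, n + 1)]
    return per_site, sum(per_site) / n

# Hypothetical weights for a three-site molecule.
n = 3
weights = {
    frozenset(): 1.0,
    frozenset({1}): 10.0, frozenset({2}): 0.1, frozenset({3}): 0.1,
    frozenset({1, 2}): 1.0, frozenset({1, 3}): 1.0, frozenset({2, 3}): 100.0,
    frozenset({1, 2, 3}): 0.01,
}

xs = [10 ** (k / 10) for k in range(-30, 31)]        # log-spaced concentrations
curves = [binding_functions(weights, n, x) for x in xs]
site1 = [per_site[0] for per_site, _ in curves]
overall = [saturation for _, saturation in curves]
print(f"site 1 occupancy peaks at {max(site1):.3f} and is {site1[30]:.3f} at x = 1")
```

In this example the occupancy of site 1 rises and then falls over an intermediate range of concentrations, while the overall fractional saturation increases throughout.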

Figure 7. Integrative flexibility of allostery I.

(A) Three choices of effective bare association constants, , in arbitrary units of (concentration)−1, and independent effective higher-order cooperativities , , for , in non-dimensional units, for ligand binding to four sites. Each example is coded by a colour (maroon, orange, red) and exhibits a different pattern of positive and negative effective HOCs. (B) Corresponding plots of average bound proportion at each site (colour coded as in middle inset) and the overall binding function, or fractional saturation, (black), calculated directly from the effective parameters. Note that the latter is always increasing; see the text for more details.

Figure 8. Integrative flexibility of allostery II.

(A) The allostery graph, , which implements the choices of effective higher-order cooperativities (HOCs) in Figure 7, shown as the product of the vertical subgraph of binding patterns at conformation , , and the horizontal subgraph of empty conformations, . As required in the proof of the flexibility theorem, both conformations and binding subsets are indexed by subsets of , where is the number of binding sites. Since for the effective HOCs in Figure 7, there are 16 binding subsets and 16 conformations, . (B) Intrinsic bare association constants, , in each conformation, in arbitrary units of (concentration)−1, and the probability distribution on the subgraph of empty conformations, , for the allostery graph in (A), giving the three choices of effective parameters in Figure 7A to an accuracy of 0.01 (Materials and methods), colour coded on a log scale as shown in the respective legends below. (C) Overall binding functions for the three parameterised ensembles in (B) (black curves), overlaid on the overall binding functions from Figure 7B (red curves), which were calculated from the effective parameters. The match is too close for the red curves to be visible. Numerical values are given in the Materials and methods. Calculations were undertaken in a Mathematica notebook, available on request.

In respect of the dimensional argument made previously, the allostery graph used in the proof above has free parameters for and the are a further free parameters, giving free parameters in total. This is more than the minimal required number of but not by much. It remains an interesting open question whether a conformational ensemble can be constructed, perhaps with more free parameters, which gives the effective HOCs exactly, rather than only approximately. One consequence of the definitions of and of in Equation 24 is that the parameters of the allosteric ensemble become exponentially small, as is evident for the examples in Figure 8B. Another interesting question is whether alternative constructions can be found which do not exhibit such a broad range of parameter values. Irrespective of these questions, the proof given above confirms that there is no fundamental biophysical limitation to achieving any pattern of values to any desired degree of accuracy. Accordingly, a central result of the present paper is that sufficiently complex allosteric ensembles can implement any form of information integration that is achievable without energy expenditure.

Allosteric ensembles for Hill functions

As mentioned in the Introduction, the starting point for the present paper was to account for experimental data on gene expression. Studies in Drosophila have shown that the Hunchback gene, in response to the maternal TF Bicoid, is sharply expressed in a way that is well fitted, after appropriate normalisation, to a Hill function, . This sharp expression underlies the initial patterning of anterior-posterior stripes in the early Drosophila embryo. Estimated values for the Hill coefficient, , vary depending on the experimental construct and time of measurement but are typically in the range during early nuclear cycle 14 (Tran et al., 2018). The relevant promoter is believed to have Bicoid binding sites, and the mechanistic basis for the sharpness is the subject of considerable interest. We showed in previous work that, if the promoter was assumed to have six Bicoid binding sites and to be operating at thermodynamic equilibrium, then the highest Hill coefficient that could be achieved of , at the so-called Hopfield barrier, required HOCs for Bicoid binding of order up to 5 (Estrada et al., 2016). In particular, pairwise cooperativities, which had previously been invoked to account for the sharpness (Gregor et al., 2007), are not sufficient to explain the data. Left open by this previous work was a molecular mechanism which could create the high-order HOCs required for Hill functions. We have seen above that allosteric ensembles can create any pattern of HOCs, so it is natural to ask if there are allosteric ensembles which yield good approximations to Hill functions.

We implemented a numerical optimisation algorithm to find binding functions which approximated Hill functions (Materials and methods). Hill functions are naturally normalised so that , so we followed the procedure introduced previously (Estrada et al., 2016) of normalising concentration to its value at half-maximum: if the normalised binding function is denoted , then . Figure 9 shows results for an allosteric ensemble with four conformations for ligand binding to six sites. The ensemble has no intrinsic cooperativity in any conformation, so that for any binding subset , while the bare association constants, , differ between the conformations (Figure 9B). This gives free parameters together with an additional three free parameters for the independent equilibrium labels on the horizontal subgraph (Figure 9A). We limited the parameter ranges so that the were in the range and the equilibrium labels of were in the range . With these settings, it was not difficult to find normalised binding functions which are very well fitted by the Hill function, , for Hill coefficients , 5 and 6 (Figure 9D).
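The normalisation step can be sketched as follows. This is not the optimisation algorithm used in the paper. It only illustrates rescaling concentration to its half-maximal value so that a binding function can be compared with a Hill function, taken here in the standard form x^a / (1 + x^a). The ensemble is a hypothetical two-conformation one with identical, independent sites, chosen for brevity, and the bisection routine is an illustrative helper.

```python
def hill(x, a):
    """Hill function with coefficient a; hill(1, a) equals 0.5 for every a."""
    return x ** a / (1.0 + x ** a)

def saturation(x, K, p, n):
    """Fractional saturation for n identical, independent sites in each conformation."""
    # The weight of conformation k with all its binding states is p[k] * (1 + K[k] x)^n.
    Z = sum(pk * (1 + Kk * x) ** n for Kk, pk in zip(K, p))
    bound = sum(pk * n * Kk * x * (1 + Kk * x) ** (n - 1) for Kk, pk in zip(K, p))
    return bound / (n * Z)

def half_max(f, lo=1e-9, hi=1e9, iters=200):
    """Concentration at which the increasing function f reaches 0.5 (log-scale bisection)."""
    for _ in range(iters):
        mid = (lo * hi) ** 0.5
        if f(mid) < 0.5:
            lo = mid
        else:
            hi = mid
    return (lo * hi) ** 0.5

# Hypothetical two-conformation ensemble with six sites.
K, p, n = [0.01, 100.0], [0.999, 0.001], 6

def f(x):
    return saturation(x, K, p, n)

x_half = half_max(f)

def g(y):
    """Normalised binding function: concentration in units of its half-maximal value."""
    return f(y * x_half)

for y in (0.5, 1.0, 2.0):
    print(y, round(g(y), 3), round(hill(y, 4), 3))
```

After normalisation both curves pass through 0.5 at unit concentration, so any remaining difference reflects shape alone, which is what a fitting procedure would then minimise.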

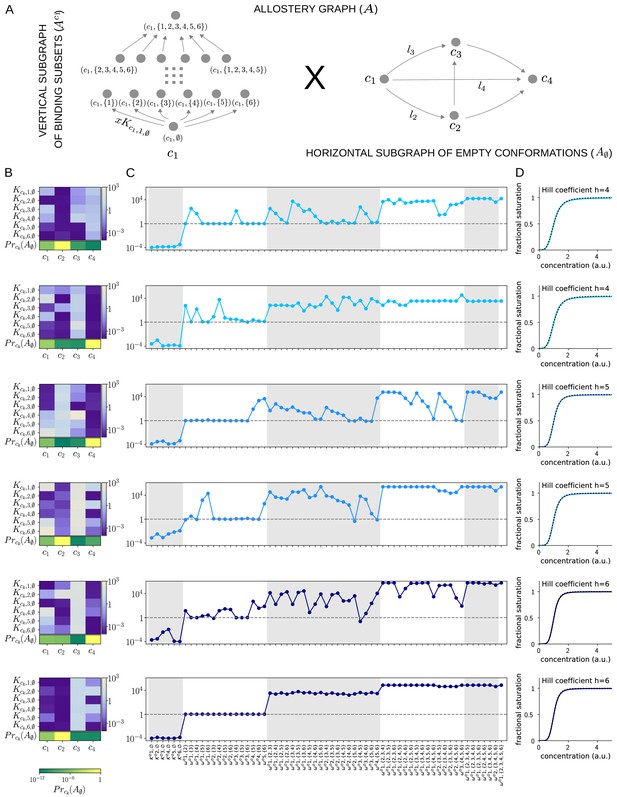

Figure 9. Allosteric ensembles for Hill functions.

(A) Allostery graph, , for representing Hill functions with six binding sites and four conformations, shown as the product of the vertical subgraph of binding subsets and the horizontal subgraph of empty conformations. Some vertices are annotated and some edges are labelled; the edge labels, and , on the horizontal subgraph are the independent labels coming from a spanning tree used in the algorithm described in the text. (B) Intrinsic bare association constants in each conformation, in arbitrary units of (concentration)−1 colour coded in the vertical bar on the right, and the probability distribution on the subgraph of empty conformations, colour coded in the horizontal bar at the bottom. (C) Corresponding effective association constants in arbitrary units of (concentration)−1 and the non-dimensional independent effective higher-order cooperativities arising from the ensemble. (D) Corresponding binding functions (blue curves) overlaid on the Hill function (black dashes) with the indicated Hill coefficient, . Two sets of parameter values are shown, with the same shade of blue, for each Hill coefficient , 5 and 6.

We were able to find multiple sets of parameters which yielded excellent fits; Figure 9 shows two representative examples for each Hill coefficient. It is evident that very different numerical ensembles (Figure 9B) can give almost identical binding functions (Figure 9D). This reinforces the point made in the Introduction that the binding function, or some associated measure of its shape, such as a Hill coefficient, is an aggregate measure which gives little insight into how binding information is being integrated. For this, the patterns of effective parameters provide more detailed information. As can be seen from Figure 9C, effective HOCs of all orders up to 5 are needed for each Hill function, as suggested previously (Estrada et al., 2016), with predominantly positive effective HOCs, , and varying amounts of independence, .

It is interesting to ask what role the size of the ensemble plays in approximating Hill functions. We cannot give a definitive answer but can make some observations. We were able to approximate with a two-conformation ensemble with six sites but only with much wider parametric ranges. It was also more difficult in terms of optimisation time to find a good fit, and we did not find multiple fits. This suggests that the larger the ensemble the easier it is to approximate Hill functions with limited parameter ranges. It is also conceivable that the size of the ensemble may have to increase with the number of binding sites to retain control over the parametric ranges. We must leave such issues to subsequent work. While our results are numerical, and therefore limited to the ensemble we have analysed, it seems clear that allosteric ensembles provide a molecular mechanism that can closely approximate Hill functions with the required high orders of effective cooperativity, thereby providing a solution to our original question. Since Hill functions are widely used to fit data, the potential for an underlying allosteric mechanism may be broadly useful.

Discussion

Jacques Monod famously described allostery as ‘the second secret of life’ (Ullmann, 2011). It is only relatively recently, however, that the prescience of his remark has been appreciated and the wealth of conformational ensembles present in most cellular processes has been revealed (Changeux and Christopoulos, 2016; Motlagh et al., 2014; Nussinov et al., 2013).

The present paper seeks to expand the existing allosteric perspective by providing a biophysical foundation for information integration by conformational ensembles. Equation 48 and Equation 49 in the Materials and methods (Equation 18 above) provide for the first time a rigorous definition of effective, higher-order quantities—the association constants, , and cooperativities, ,—arising from any ensemble. Since our methods are equivalent to those of equilibrium statistical mechanics (Materials and methods), these definitions correctly aggregate the free-energy contributions which emerge in the ensemble from ligand binding to a conformation, intrinsic cooperativity within a conformation and conformational change. As noted above, our results encompass recent work on effective properties of the classical, two-conformation MWC ensemble—for pairwise cooperativity (Ehlert, 2016) and higher-order association constants (Gruber and Horovitz, 2018)—but they hold more generally for ensembles of arbitrary complexity with any number of conformations, including those with intrinsic cooperativities.

The effective quantities introduced here provide a language in which the integrative capabilities of an ensemble can be rigorously expressed. To begin with, the overall binding function can be determined in terms of the effective quantities through a generalised MWC formula (Materials and methods), thereby recovering the functional viewpoint (Figure 2A) from the ensemble viewpoint (Figure 2B). This generalised MWC formula reduces to the usual MWC formula for the classical two-conformation MWC model (Equation 55). We also clarify issues which had been difficult to understand in the absence of a quantitative definition of effective quantities. We find that the classical MWC model exhibits effective HOCs of any order and that these are always positive. In other words, binding always encourages further binding. Moreover, these effective HOCs increase strictly with increasing order (Equation 20), so that the more sites which are bound, the greater the encouragement to further binding. We see that HOC has always been present, even for oxygen binding to haemoglobin, albeit unrecognised for lack of an appropriate quantitative definition. Equation 20 confirms in a more precise way the long-standing realisation from the functional perspective that the MWC model exhibits only positive cooperativity; at the same time it succinctly expresses the rigidity and limitations of this model.
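The positivity and strict increase of effective HOCs in the classical MWC model can be checked numerically with a short sketch. It assumes the effective association constant for binding with s sites already bound is the ratio of the total equilibrium weights of states with s + 1 and s bound sites, summed over both conformations, per site and per unit concentration. The symbols `K_R`, `K_T` and `L` follow standard MWC notation and are assumptions here, as are the example values.

```python
def mwc_effective_constants(K_R, K_T, L, n):
    """Effective association constants for s = 0..n-1 sites already bound.
    K_R, K_T: per-site association constants in the two conformations.
    L:        equilibrium weight of the empty T conformation relative to empty R.
    Assumes n identical, independent sites within each conformation."""
    return [
        (K_R ** (s + 1) + L * K_T ** (s + 1)) / (K_R ** s + L * K_T ** s)
        for s in range(n)
    ]

def mwc_effective_hocs(K_R, K_T, L, n):
    """Effective HOCs of order 1..n-1: each constant divided by the s = 0 constant."""
    K = mwc_effective_constants(K_R, K_T, L, n)
    return [k / K[0] for k in K[1:]]

# Hypothetical, haemoglobin-like illustrative values (not fitted to data).
hocs = mwc_effective_hocs(K_R=1.0, K_T=0.01, L=1000.0, n=4)
all_positive = all(w > 1.0 for w in hocs)
strictly_increasing = all(a < b for a, b in zip(hocs, hocs[1:]))
```

For these values both `all_positive` and `strictly_increasing` hold, in line with the behaviour described in the text. Setting `K_R == K_T` makes every effective HOC equal to 1, because the two conformations are then indistinguishable to the ligand.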

It is often stated in the allostery literature that negative cooperativity requires induced fit, in which binding induces conformations which are not present prior to binding. This view goes back to Koshland, who pointed to the emergence of negative cooperativity in the KNF model of allostery, which allows induced fit, and contrasted that to the positive cooperativity of the MWC model, which assumes conformational selection (Koshland and Hamadani, 2002). Our language of effective quantities permits a more discriminating analysis. It confirms, as just pointed out, that the classical MWC model exhibits only positive effective HOCs but also shows that induced fit is not required for negative effective HOC, which can arise just as readily from conformational selection (Materials and methods). What is required is not a different kind of ensemble but, rather, binding sites that are not identical.
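A two-site, two-conformation toy example makes this concrete. The sketch below assumes independent binding within each conformation and pure conformational selection, in which both conformations exist before binding. It takes the effective pairwise cooperativity to be the ratio of effective association constants with and without the other site bound. The parameter values and function names are hypothetical.

```python
import math

def weight(S, K, lam):
    """Total equilibrium weight (per unit concentration to the power |S|) of
    having exactly the sites in S bound, summed over conformations.
    K[c][i]: association constant of site i in conformation c.
    lam[c]:  equilibrium weight of empty conformation c."""
    return sum(lc * math.prod(Kc[i] for i in S) for Kc, lc in zip(K, lam))

def effective_cooperativity(i, S, K, lam):
    """Effective association constant for site i with S bound, divided by the
    effective association constant for site i with nothing bound."""
    S = tuple(S)
    with_S = weight(S + (i,), K, lam) / weight(S, K, lam)
    empty = weight((i,), K, lam) / weight((), K, lam)
    return with_S / empty

lam = [1.0, 1.0]  # two equally weighted empty conformations (hypothetical)

# Non-identical sites: each conformation favours a different site.
K_mixed = [[10.0, 1.0], [1.0, 10.0]]
omega_mixed = effective_cooperativity(1, (0,), K_mixed, lam)   # 40/121, below 1

# Identical sites, as in the classical MWC model.
K_ident = [[10.0, 10.0], [1.0, 1.0]]
omega_ident = effective_cooperativity(1, (0,), K_ident, lam)   # 202/121, above 1
```

When the sites are not identical and the conformations favour different sites, binding at one site shifts the ensemble towards the conformation in which the other site binds weakly, which gives an effective cooperativity below 1 without any induced fit.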

Our main result, on the flexibility of conformational ensembles, shows that positive and negative HOCs of any value can occur in any pattern whatsoever, provided that the conformational ensemble is sufficiently complex, with enough conformations (Figure 8). Since the effective quantities provide a complete parameterisation of an ensemble at thermodynamic equilibrium, we see that conformational ensembles can implement any form of information integration that is achievable without external sources of energy. In particular, allosteric ensembles can be found whose binding functions closely approximate Hill functions (Figure 9), thereby answering the question which prompted this study, as to how such functions might arise in gene regulation.

Eukaryotic gene regulation is one of the most complex forms of cellular information processing (Wong and Gunawardena, 2020). Information from the binding of multiple TFs at many sites, often widely distributed across the genome in distal enhancer sequences, must be integrated to determine whether, and in what manner, a gene is expressed. The results of the present paper offer a way to think further about how such integration takes place (Tsai and Nussinov, 2011). We focus on gene regulation, but our results may also be useful for analysing other mechanisms of information integration, such as GPCRs (Thal et al., 2018).

As pointed out in the Introduction, haemoglobin solves the problem of integrating two quite different physiological functions—picking up oxygen in the lungs and delivering oxygen to the tissues—by having two conformations, each adapted to one of these functions, and dynamically inter-converting between them (Figure 10A). The effective cooperativity of oxygen binding ensures that the appropriate conformation dominates the ensemble in the distinct contexts of the lungs, where oxygen is abundant, and the tissues, where oxygen is scarce, so that oxygen is transferred from the former to the latter.

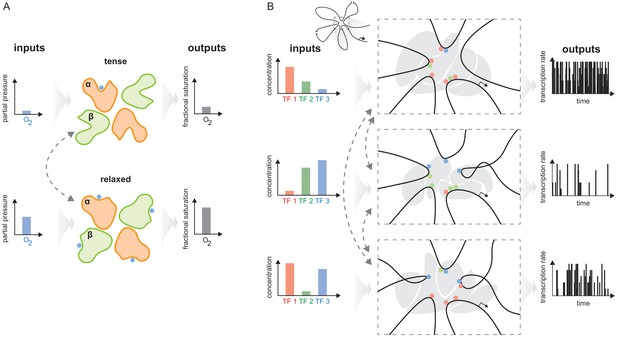

The haemoglobin analogy in gene regulation.

(A) The two conformations of haemoglobin are each adapted to one of the two input-output functions which haemoglobin integrates to solve the oxygen transport problem. These conformations dynamically interchange in the ensemble (grey dashed arrows). (B) The gene regulatory machinery couples input patterns of transcription factors (TFs) (left) to output patterns of stochastic expression of mRNA splice isoforms (right, showing bursting patterns of one isoform). Our results suggest that a sufficiently complex conformational ensemble, built out of chromatin, TFs, co-regulators and phase-separated condensates (centre, grey shapes in three distinct conformations), could integrate these functions at a single gene in an analogous way to haemoglobin. Chromatin is represented by the thick black curve, whose looped arrangement around the promoter is shown schematically (top).

Genes have to be regulated to achieve yet more elaborate forms of integration, with the same gene being expressed differently in different contexts. Such pleiotropy is particularly evident in developmental genes (Bolt and Duboule, 2020) but usually occurs in distinct cells within the developing organism. The same gene is present in these cells, but it may be difficult to know whether the corresponding regulatory machineries are also the same. More directly suitable examples for the present discussion arise in individual cells exposed to distinct stimuli (Molina et al., 2013; Kalo et al., 2015; Lin et al., 2015), which may be particularly the case for neurons or cells of the immune system (Marco et al., 2020; Smale et al., 2013).

Depending on the input pattern of TFs present in a given cellular context (Figure 10B, left), a gene may be expressed in a certain way, as a distribution of splice isoforms, each with an overall level of mRNA expression and a pattern of stochastic bursting (Lammers et al., 2020; Figure 10B, right). A different input pattern of TFs may elicit a different mRNA output. Our results suggest that one way in which these different input-output relationships could be integrated in the workings of a single gene is through allostery of the overall regulatory machinery. An allosteric analogy in gene regulation was previously made by Mirny (2010), building upon observations of indirect cooperativity between TFs that was mediated by nucleosomes (Miller and Widom, 2003). In the allosteric analogy, TF binding to DNA takes place in one of two conformations—nucleosome present or absent—which dynamically interchange, leading to the classical MWC model. Here, we build upon Mirny’s idea to suggest that not only indirect cooperativity but also, more broadly, information integration may be accounted for by the conformational dynamics of the gene regulatory machinery. The latter comprises not just individual nucleosomes but whatever other molecular entities are implicated in conveying information from TF binding sites to RNA polymerase and the transcriptional machinery (Figure 10B, centre), as discussed below. If this hypothesis is correct, then the flexibility result tells us that the overall regulatory conformational ensemble must exhibit sufficient complexity to implement the integration of binding information.

Studies of individual regulatory components have revealed many levels of conformational complexity. DNA itself exhibits conformational changes in respect of TF binding (Kim et al., 2013). Nucleosomes are moved or evicted to alter chromatin conformation and DNA accessibility (Mirny, 2010; Voss and Hager, 2014). TFs, in particular, show high levels of intrinsic disorder compared to other classes of proteins (Liu et al., 2006), especially in their activation domains, and these disordered regions exhibit dynamic multivalent interactions characteristic of higher-order effects (Chong et al., 2018; Clark et al., 2018). Hub TFs like p53 exhibit high levels of conformational flexibility in the context of specific DNA binding (Demir et al., 2017). Transcriptional co-regulators, which do not directly bind DNA but are recruited there by TFs, exhibit substantial conformational complexity: CBP/p300 has multiple intrinsically disordered regions which facilitate higher-order cooperative interactions (Dyson and Wright, 2016), while the Mediator complex exhibits quite remarkable conformational changes upon binding to TFs (Allen and Taatjes, 2015). Transcription initiation sub-complexes such as TFIID, which help assemble the transcriptional machinery, show conformational plasticity (Nogales et al., 2017), while the C-terminal domain of RNA Pol II, which is repetitive and intrinsically disordered, shows surprising local structural heterogeneity (Portz et al., 2017). The significance of RNA conformational dynamics during transcription is becoming clearer (Ganser et al., 2019). Finally, transcription may also be regulated within larger-scale entities, such as transcription factories (Edelman and Fraser, 2012), phase-separated condensates (Sabari et al., 2018) and topological domains (Benabdallah and Bickmore, 2015). The role of such entities remains a matter of debate (Mir et al., 2019), but they may play a significant role in conveying information over long genomic distances between distal enhancers and target promoters (Furlong and Levine, 2018). From the perspective taken here, in view of their size and extent, they may exhibit conformational dynamics on longer timescales.