By Kristen Ratan, Sarah Greene and Shilpa Rele (ICOR)

It has been more than six months since eLife launched its new approach to publishing, opening up for submissions at the end of January 2023. The team at Incentivizing Collaborative and Open Research (ICOR) is providing an independent review and analysis of the first six months of collected data with regard to submissions, process, and feedback from authors, editors, and reviewers.

Overall, the broader eLife community has remained supportive of the new model, though where there is disagreement, passions have run high, illustrating how difficult culture change can be in the sciences. In May 2023, we provided an initial three-month update on eLife’s new publishing model. This early data showed steady submission numbers, and many authors shared positive feedback on the ease, expediency and transparency of the new model. That checkpoint was very early in the process and, with six months of data, we are seeing a clearer set of results.

We will assess the data against the stated goals of eLife’s new model as well as its overall perceived success by the community.

The eLife goals:

- Provide meaningful feedback to more authors earlier in the process.

- Decrease the time to citable publication through Reviewed Preprints.

- Reduce the churn of repeated review cycles before the work can be shared.

- Provide authors with ongoing feedback and opportunities to revise and improve their work.

- Enable author control over when a final version of record (VOR) is published.

Executive summary of key findings

- Submissions are steady, and the proportion of papers sent for review is similar in the two models.

- Reviewed Preprints are visible earlier than traditionally peer-reviewed articles, which provides a middle ground between unreviewed preprints and published VORs.

- Author demographics have not changed significantly with regard to discipline or geography.

- Authors, Senior Editors, and Reviewing Editors are reporting largely favorable experiences with the new model, with some concerns about quality and suggestions regarding process being voiced.

Submissions are steady and papers sent to review are increasing monthly

Steady submissions

The rate of submissions to the new model continues to be steady, with 2,931 received between February and July 2023, compared with 4,242 submitted to the old model between February and July 2022. This year, when authors can select either model, there has been a decline in submissions to the old model (292), but it is important to point out that the submission interface prioritises the new model, so this is not surprising. However, total submissions in the past six months (2,931 + 292 = 3,223) were close to the forecast of 3,303.
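As a quick check of the arithmetic above, the following minimal sketch recomputes the six-month total and its gap to the forecast. The variable names are illustrative assumptions; only the three figures come from the text.

```python
# Figures quoted in the paragraph above (February to July 2023).
new_model_submissions = 2931
old_model_submissions = 292
forecast = 3303

# Combined submissions across both models.
total = new_model_submissions + old_model_submissions

# Gap to the forecast, in absolute terms and as a share of the forecast.
shortfall = forecast - total
shortfall_pct = 100 * shortfall / forecast

print(f"total = {total}")
print(f"shortfall = {shortfall} ({shortfall_pct:.1f}% below forecast)")
```

On these figures, the combined total falls 80 submissions short of the forecast, or roughly 2.4%.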

The median number of days it takes for the decision to invite a paper for review remains the same at seven days in both the old and new models.

Papers sent to review

The proportion of papers invited for review is similar when comparing Feb–Jul 2023 new-model data (29.7% with 799 papers sent for review) to Feb–Jul 2022 old-model data (31.8% with 1,478 papers sent for review), and the new-model proportion has risen steadily from 21.7% in February to 30% in July. More importantly, given that a central goal of the new model is to provide meaningful feedback earlier in the process, the time to first citable publication has decreased from 232 days to 79 days, as described below.

Author demographics are similar to prior years

Subject Areas

In order of ranking, the highest number of submissions has been to the subject area Neuroscience, followed by Cell Biology, Genetics and Genomics, Microbiology and Infectious Diseases, and Medicine. This is similar to prior years.

Geography

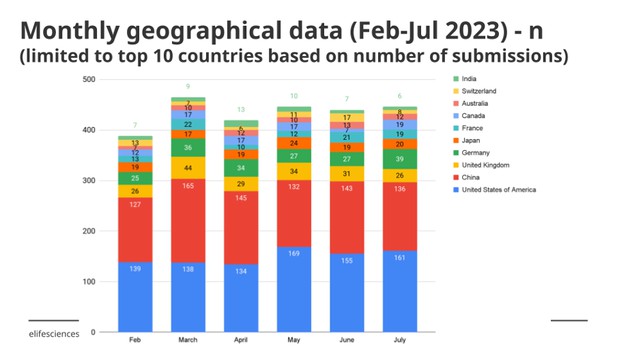

Geographically there has been little change in the number of submissions. In order of ranking, the United States was first, closely followed by China; together they were responsible for more than half the total submissions. Next, with much smaller numbers, were the United Kingdom, Germany and Japan.

Reviewed Preprints decrease the time to first citable publication

Days to publication in new and old models

In the new model, all papers that have been selected for review appear on the eLife website as Reviewed Preprints, accompanied by an eLife assessment and public reviews. By design this is faster than traditional peer review and gets a vetted version of the work to the public sooner. This central goal of the new model is being met, of course, as it is inherent in the new process.

The average number of days from submission to publication of the first version of a Reviewed Preprint is 79 days, whereas submission to publication of a VOR in the old model takes 232 days. It is true that the review models are different, but if a goal is to reduce the time until a vetted work is shared, then this is achieved. There are not yet any data or comparisons on the effectiveness of the eLife review relative to traditional peer review, however. This type of analysis could be done in the future and we recommend attempting it after there is at least a year’s worth of data.
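To put the two averages side by side, here is a minimal sketch of the comparison. The variable names are illustrative assumptions, and the calculation compares a Reviewed Preprint with a VOR, which are different outputs, so it shows time to a first vetted version rather than a like-for-like speed-up.

```python
# Average days from submission, as quoted in the paragraph above.
reviewed_preprint_days = 79   # new model: first version of a Reviewed Preprint
old_model_vor_days = 232      # old model: version of record (VOR)

# Absolute and relative reduction in time to a first vetted, citable version.
days_saved = old_model_vor_days - reviewed_preprint_days
reduction_pct = 100 * days_saved / old_model_vor_days
ratio = old_model_vor_days / reviewed_preprint_days

print(f"days saved = {days_saved}")
print(f"reduction = {reduction_pct:.1f}%")
print(f"old-model time is {ratio:.1f}x the new-model time")
```

On these figures, a vetted version appears 153 days sooner, a reduction of about 66%.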

Challenges in reviewing process

There are challenges with this new model in terms of reviewing. Of the Reviewing Editors surveyed, 44% found searching for and securing reviews to be more challenging than in the old model. They found writing up the eLife assessment together with reviews (48%) and engaging the original reviewers to assess revised papers (50%) to be as challenging as in the old model. They commented on the need to communicate the advantages of the new model better to the community, which might help secure more reviewers.

Author surveys show mostly positive feedback

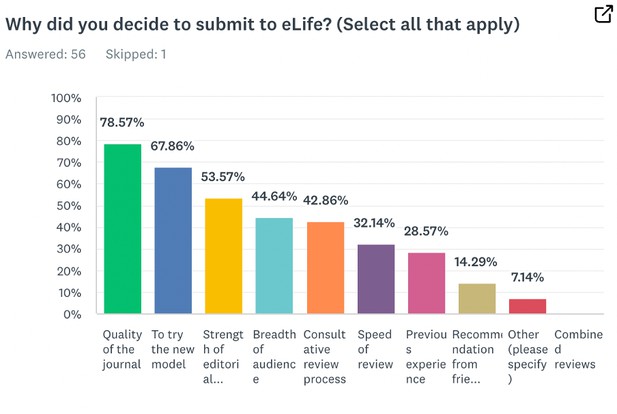

eLife sends surveys each month to the prior month’s submitters. Survey data revealed that authors chose to submit their work to eLife because of the quality of the journal as well as to try out the new process. This was often prompted by word of mouth or by reading about eLife content in the news.

It is significant that 68% of authors said that one of the reasons that they submitted to eLife was to try the new model. This implies that there is some appetite for change and, combined with the answers relating to eLife’s quality, that authors trust eLife.

When asked for comments on their experience, authors were largely positive with some trepidation:

“As a postdoc, I truly appreciate the new model and being able to have full control over paper revisions.”

“I wholly support what eLife is trying to accomplish. I found the review process fair and transparent. I also believe the "eLife assessment", in addition to the public peer reviews, are valuable additions to our manuscript. With the assessment and reviews in hand, I feel empowered to handle revisions as I see fit.”

“I am nervous for how well the paper will be received in the community now that it has not been through the "old fashioned" and painfull [sic] peer review process. We will see.”

There were several comments asking for more clarity and speed with the submission and eLife review process.

Senior Editors mostly support the new model but have concerns about maintaining quality

Senior Editors were surveyed about their experience with the new model. The survey response rate for Senior Editors was 73%, which is roughly in the same range as prior years.

While almost 85% of Senior Editors who answered the survey reported being either very or moderately supportive of the new model, there were concerns about the impact on quality of submissions, with more Senior Editors perceiving a decrease in quality than it remaining the same, and none perceiving an increase in quality.

However, approximately 54% of them either have submitted or plan to submit their own manuscripts to eLife under the new model.

In terms of process, over half of the responding Senior Editors (58%) also indicated that their tasks, such as obtaining feedback from Reviewing Editors on initial submissions, were more challenging than in the old model. It will be interesting to see whether the workflow and effort required smooth out over time.

Reviewing Editors are more favorable to the new model than Senior Editors

Most of the Reviewing Editors (71%) think the quality of submissions has improved or remained the same, a more favorable view than that of the Senior Editors (41.5% of whom think the quality of submissions has stayed the same, with 58.5% perceiving a decrease). However, fewer Reviewing Editors have submitted or plan to submit their own manuscripts to eLife (just under 50%, compared to 54% of Senior Editors).

As with Senior Editors, many Reviewing Editors (46.8%) found conducting the initial assessment to be more challenging than in the old model.

Readers’ perceptions are generally positive about the new model

Readers, in a recent reader survey, said that they find the new model helpful overall. Over half support preprints being accompanied by public peer reviews (57%), while around one in eight oppose it (12%).

Regarding the eLife assessments associated with the Reviewed Preprints, 50% had read at least one and 75% of those readers said they were useful to them. 81% of those who hadn’t read an eLife assessment said they could be useful to them.

Discussion

The first six months of data from the eLife shift to its new model show fledgling success in most areas. Community approval is relatively high, which is interesting given the reputation that researchers have for resisting change. The authors are self-selecting in to try the new model, so this is not a reflection of researchers writ large, but it seemed possible that submissions would trail off and there would not be enough data to do an analysis at all. Submission numbers and community engagement have in fact been quite high.

One caveat about the analysis is that it is proving difficult to compare to a control, since eLife also switched to a new version of its manuscript submission system at the end of January 2023. This has confounded our efforts to compare to last year’s data meaningfully. Also, our initial goal of comparing against old-model submissions is difficult to meet because most of the submitting authors are selecting the new model.

At ICOR we anticipate having a clearer picture after about two years of operation of the new model. While preliminary data is helpful and gives us an indication as to the direction eLife is headed, only after there is more awareness among authors regarding their options can we detect the thin wedge of true culture change.

We welcome comments and questions from researchers as well as other journals. Please annotate publicly on the article or contact us at hello [at] elifesciences [dot] org.
