Learning multiple variable-speed sequences in striatum via cortical tutoring

Figures

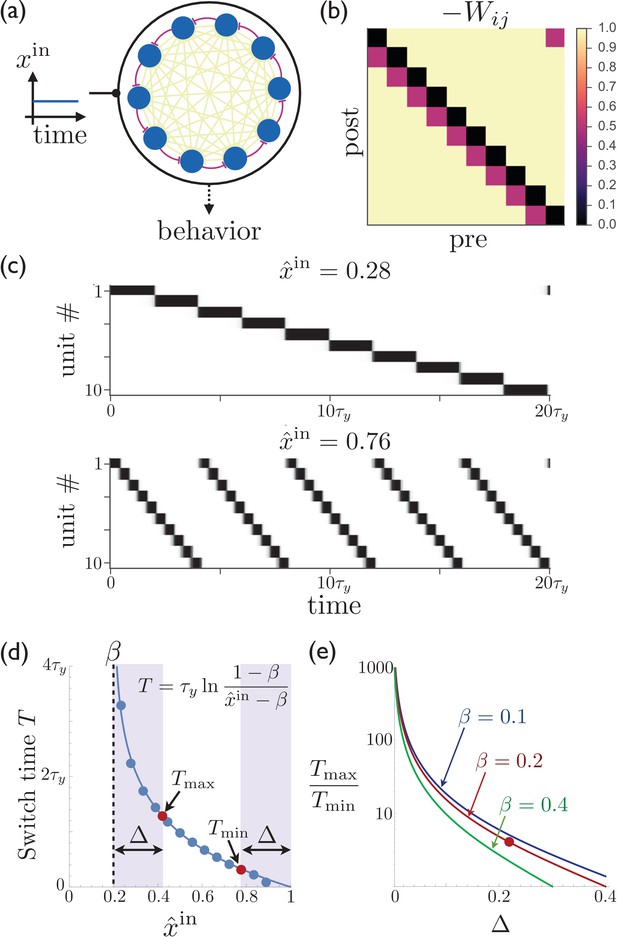

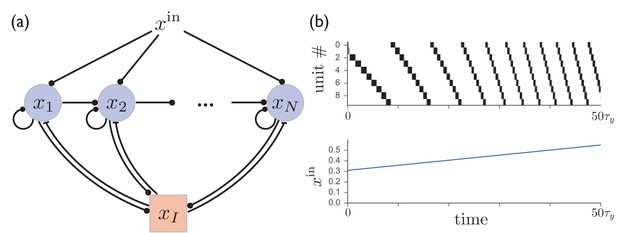

Rescalable sparse sequential activity in the striatum.

(a) Schematic diagram of a 10-unit striatal network. Units receive constant external excitatory input and mutually inhibit each other. The burgundy synapses correspond to a depotentiated path through the network that enables sequential activity. (b) The synaptic weight matrix for the network shown in ‘a’, with subdiagonal weights depotentiated by . (c) The magnitude of the constant input to the network controls the rate at which activity switches from one population to the next. The units in the network are active in sequential order, and the sequence speeds up as the excitatory input to the network increases. Parameters for the synaptic depression are and , the gain parameter is , synapses connecting sequentially active units are depotentiated by , and the effective input is . (d) The switch time as a function of the level of input to the network. Points are determined by numerically solving Equation 1; the curve is the theoretical result (equation shown in figure; see Appendix 1 for details). If the input is limited to the range (e.g. because reliable functioning in the presence of noise would require the input to stay away from the boundaries within which switching occurs), then and are the maximum and minimum possible switching periods that can be obtained. (e) The temporal scaling factor is shown as a function of for different values of the synaptic depression parameter . The red dot corresponds to the ratio of the red dots in ‘d’.
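The sequential switching in panel (c) can be reproduced qualitatively with a small simulation. The sketch below assumes a threshold-linear rate model with presynaptic depression, tau dx_i/dt = -x_i + max(x0 - sum_j W_ij y_j x_j, 0) and dy_j/dt = (1 - y_j)/tau_y - beta y_j x_j. This form is used only for illustration and is not necessarily the paper's Equation 1; the parameter values (w, eps, tau, tau_y, beta) are hypothetical rather than those used in the figure, and weakening the corner entry W[0, N-1] so that the sequence wraps around is likewise an assumption of this sketch.

```python
import numpy as np

def ring_weights(n_units=10, w=3.0, eps=1.5):
    """Uniform mutual inhibition w, with the synapse from unit i onto unit i+1 weakened by eps."""
    W = w * (np.ones((n_units, n_units)) - np.eye(n_units))
    for i in range(n_units):
        # The modulo also weakens W[0, n-1], closing the loop (an assumption of this sketch).
        W[(i + 1) % n_units, i] -= eps
    return W

def simulate(x0, W, tau=1.0, tau_y=20.0, beta=0.1, dt=0.01, t_max=250.0):
    """Forward-Euler integration; returns the index of the most active unit at each step.

    Assumed dynamics (illustrative, not necessarily the paper's Equation 1):
        tau * dx_i/dt = -x_i + max(x0 - sum_j W_ij * y_j * x_j, 0)
        dy_j/dt       = (1 - y_j) / tau_y - beta * y_j * x_j
    """
    n = W.shape[0]
    x = np.zeros(n)
    x[0] = x0                 # start with unit 0 active
    y = np.ones(n)            # synaptic resources start fully recovered
    n_steps = int(round(t_max / dt))
    winners = np.empty(n_steps, dtype=int)
    for step in range(n_steps):
        net = x0 - W @ (y * x)                      # tonic drive minus depressed inhibition
        dx = (-x + np.maximum(net, 0.0)) / tau
        dy = (1.0 - y) / tau_y - beta * y * x       # recovery minus use-dependent depression
        x = x + dt * dx
        y = y + dt * dy
        winners[step] = np.argmax(x)
    return winners

def switch_stats(winners, dt=0.01):
    """Order in which units become dominant, and the mean time between switches."""
    changes = np.flatnonzero(np.diff(winners)) + 1
    order = winners[np.concatenate(([0], changes))]
    return order, float(np.mean(np.diff(changes)) * dt)

W = ring_weights()
for x0 in (1.0, 2.0):
    order, period = switch_stats(simulate(x0, W))
    print(f"x0={x0}: order {order[:12].tolist()}, mean switch time {period:.2f}")
```

In this toy model a unit hands off to its successor once its own depression variable falls below 1/(w - eps), while all other units need it to fall below 1/w; the weakened path therefore decides who wins, and stronger tonic input speeds up depression and shortens each dwell.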

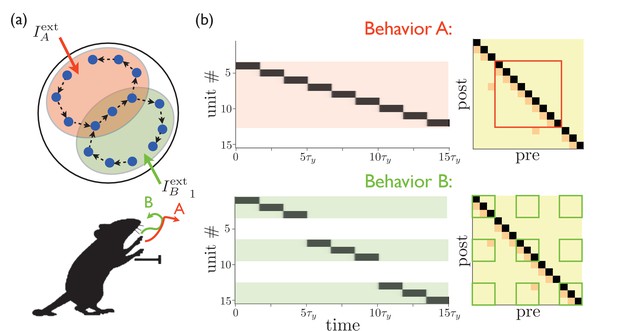

Targeted external input expresses one of several sequences.

(a) Schematic illustration of partially overlapping striatal activity sequences selectively activated by external input. The arrows do not represent synaptic connections, but rather the order of activity within an assembly. Overlapping parts of the striatal activity sequences encode portions shared by the corresponding behaviors (in this case, the middle portion of a paw movement trajectory). (b) Left panels show network activity when only the shaded units receive external input. Right panels show the weights; for each behavior, only the outlined weights are relevant to the network dynamics.
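Why only the outlined weights matter can be made explicit. Assuming threshold-linear units whose recurrent connections are purely inhibitory (non-negative W entering with a minus sign), a unit that receives no external input has a net input of at most zero and stays silent, so neither its incoming nor its outgoing synapses affect the dynamics. The helper names below are hypothetical and the random weights are illustrative only.

```python
import numpy as np

def targeted_input(n_units, driven, level):
    """External input vector: `level` for the driven units, zero for all others."""
    u = np.zeros(n_units)
    u[sorted(driven)] = level
    return u

def net_drive(u, W, x, y):
    """Rectified net input for purely inhibitory recurrence (W, x, y all non-negative)."""
    return np.maximum(u - W @ (y * x), 0.0)

def relevant_weights(W, driven):
    """Block of W among the driven units (rows postsynaptic, columns presynaptic)."""
    idx = np.array(sorted(driven))
    return W[np.ix_(idx, idx)]

rng = np.random.default_rng(0)
W = rng.uniform(0.0, 3.0, size=(10, 10))
np.fill_diagonal(W, 0.0)
driven = {2, 3, 4, 5}                      # e.g. the shaded units for one behavior
u = targeted_input(10, driven, level=1.0)
drive = net_drive(u, W, x=rng.uniform(0, 1, 10), y=rng.uniform(0, 1, 10))
print(drive.round(2))                      # undriven units get zero drive
print(relevant_weights(W, driven).shape)
```

Selecting a different driven set picks out a different block of the same matrix, so overlapping sets share some of their weights, just as overlapping sequences share part of a behavior.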

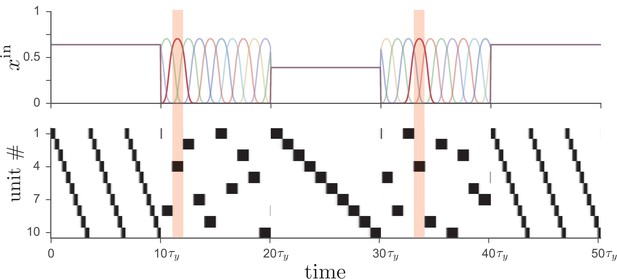

Driving the network from Figure 1 with two different types of input leads to distinct operational modes.

If input to the network (top) is tonic, the recurrent weights cause the network to produce a particular activity sequence (bottom), as in Figure 1, with the magnitude of the input controlling the speed of the sequence. Alternatively, if the input is strongly time-dependent, then the network dynamics are enslaved to the input pattern. In this case each unit receives a unique pulsed input, with the input to unit four highlighted for illustration.

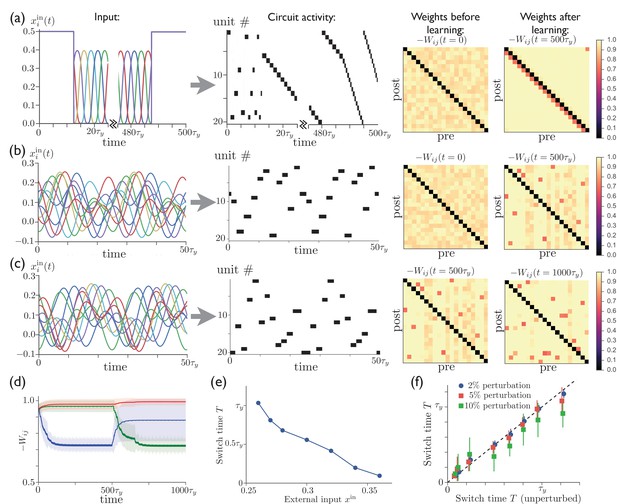

Repeated external input can tutor striatum to produce sparse activity sequences.

(a) A regular sequence of pulse-like inputs (only 10 inputs are shown for clarity; = 1 cycle) (left) leads to a sequential activity pattern (center) and, via anti-Hebbian plasticity, converts a random connectivity matrix (at ) into a structured matrix after 20 cycles (at ) (right). During the first half of the first cycle and the last half of the last cycle, the pulsed input is replaced by constant input to all units; this produces a short random sequence before training and a long specific sequence after training, with the sequence speed determined by the level of tonic input. (b) Starting with random connectivity between units (at ), each unit is driven with a distinct time-varying input consisting of a random superposition of sine waves (two cycles are shown for 10 inputs), which produces a repeating activity sequence. Anti-Hebbian learning results in a structured matrix after 20 cycles (at ). (c) Twenty cycles of a new input elicit a different activity pattern and overwrite the prior connectivity with a new structured matrix (at ). (d) The evolution of the synaptic weights during the learning in ‘b’ and ‘c’. The blue, green, and red lines show the average weights of synapses involved in the first pattern, the second pattern, and neither pattern, respectively. (Not all of the weights shown in blue are repotentiated in the second training period, because some of these synapses are from units that are not active in the second sequence. For these weights in Equation 2, and thus they do not learn.) (e) The average time for switching from one unit to the next as a function of the constant external input after learning. (f) The switch times are robust to random perturbations of the weights. Starting with the final weights in ‘c’, each weight is perturbed by , where is a normal random variable, and , 0.05, or 0.10. The perturbed switch times (slightly offset for visibility) are averaged over active units and realizations of the perturbation. Learning-related parameters are , , and .
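The tutoring procedure can be illustrated with a toy version in which the input fully enslaves the activity, so that exactly one unit is active at a time in a repeating order. The update below is a hypothetical anti-Hebbian rule, not the paper's Equation 2. Every change is gated by a low-pass filtered trace of presynaptic activity (so, as in the caption, synapses from silent units do not learn); weights relax toward w_max while the postsynaptic unit is silent and are pulled down while it is active and the presynaptic trace is still high. The parameters eta, kappa, tau_trace, and dwell are illustrative.

```python
import numpy as np

def train_anti_hebbian(n_units=6, dwell=20, cycles=200, eta=0.01, kappa=5.0,
                       w_max=1.0, tau_trace=10.0, seed=0):
    """Tutor a random inhibitory weight matrix with a repeating one-hot activity sequence.

    Hypothetical rule (not the paper's Equation 2), for post unit i and pre unit j:
        dW_ij = eta * trace_j * ((w_max - W_ij) - kappa * x_i * W_ij)
    """
    rng = np.random.default_rng(seed)
    W = rng.uniform(0.0, w_max, size=(n_units, n_units))
    np.fill_diagonal(W, 0.0)
    trace = np.zeros(n_units)              # low-pass filtered presynaptic activity
    for _ in range(cycles):
        for active in range(n_units):
            x = np.zeros(n_units)
            x[active] = 1.0                # input-enslaved activity: one unit at a time
            for _ in range(dwell):
                trace += (x - trace) / tau_trace
                # Columns are gated by the presynaptic trace: no trace, no learning.
                # Weights relax toward w_max while the postsynaptic unit is silent and
                # toward the lower value w_max / (1 + kappa) while it is active.
                W += eta * trace[None, :] * ((w_max - W) - kappa * x[:, None] * W)
                np.fill_diagonal(W, 0.0)   # no self-connections
    return W

W = train_anti_hebbian()
print(np.round(W, 2))
```

Starting from a random matrix, the weakest outgoing synapse of each unit ends up being the one onto its successor in the tutored order, which is the depotentiated path that supports the sequence once the input becomes tonic.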

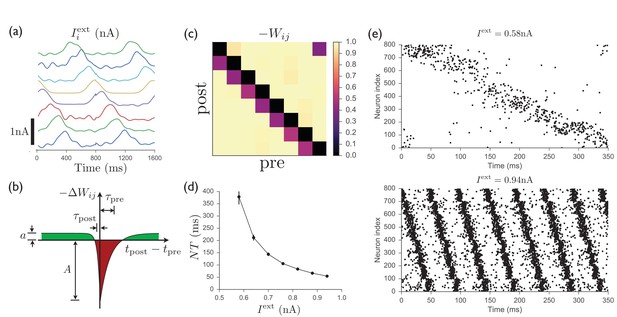

Spiking model of striatum.

(a) Input currents to each of 8 distinct clusters of 100 neurons (traces offset for clarity). This input pattern causes sequential activation of the clusters and is repeated several times, with noise, while the recurrent weights are learned. (b) Schematic anti-Hebbian spike-timing-dependent plasticity (STDP) rule for recurrent inhibitory synapses: synapses are depotentiated when pre- and postsynaptic spikes are coincident or sequential, and potentiated otherwise. (This STDP curve applies whenever there is a presynaptic spike, and there is no weight change in the absence of one; see Materials and methods for the mathematical details.) (c) Average recurrent inhibitory weights between clusters in a spiking network after learning with STDP. (d) After the weights have been learned, driving the network with tonic inputs of varying amplitudes rescales the period of the activity pattern. (e) Two examples of time-rescaled activity patterns in the trained network with different values of tonic input current.
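A window with the qualitative shape described in panel (b) can be sketched as follows. This is a hypothetical form chosen for illustration; the exact rule is given in Materials and methods and is not reproduced here. Each presynaptic spike contributes a fixed potentiation a_plus, minus a depression term that decays exponentially with the lag of every postsynaptic spike that coincides with or follows it. With a_minus > a_plus, near-coincident or sequential pairs produce net depotentiation, isolated presynaptic spikes produce potentiation, and without a presynaptic spike nothing changes.

```python
import numpy as np

def stdp_weight_change(pre_times, post_times, a_plus=1.0, a_minus=3.0, tau=0.02):
    """Total weight change for one synapse under a hypothetical anti-Hebbian window.

    Each presynaptic spike adds a_plus, minus a_minus * exp(-lag / tau) for every
    postsynaptic spike at lag = t_post - t_pre >= 0 (coincident or later).
    Times are in seconds; with no presynaptic spikes the change is zero.
    """
    pre = np.asarray(pre_times, dtype=float)
    post = np.asarray(post_times, dtype=float)
    if pre.size == 0:
        return 0.0
    lags = post[None, :] - pre[:, None]          # shape (n_pre, n_post)
    causal = lags >= 0.0
    # Zero out non-causal lags before exponentiating to avoid overflow.
    kernel = np.where(causal, np.exp(-np.where(causal, lags, 0.0) / tau), 0.0)
    return float(a_plus * pre.size - a_minus * kernel.sum())

print(stdp_weight_change([0.0], [0.002]))   # near-coincident pair: net depotentiation
print(stdp_weight_change([0.0], [-0.05]))   # post spike only before pre: potentiation
print(stdp_weight_change([], [0.01]))       # no presynaptic spike: no change
```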

A network of excitatory units with shared inhibition exhibits variable-speed sequences.

(a) A network of excitatory units connected by self-excitation, feedforward excitation, and shared inhibition. (b) As in the recurrent inhibitory network, this network exhibits sparse sequential firing when the excitatory synapses are made depressing, with the speed of the sequence controlled by the level of external input. Parameters are .

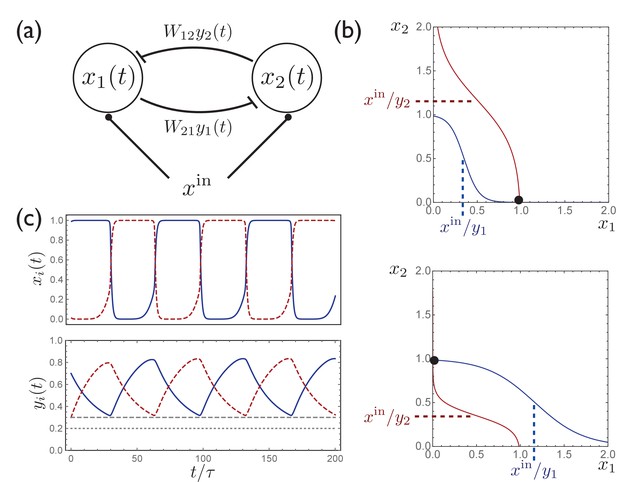

A simple circuit with two units exhibits activity switching.

(a) A simple two-unit network with activities and , symmetric inhibitory connectivity, and constant input . (b) Curves (nullclines) along which, for given fixed values of , the time derivative of each unit's activity vanishes; the intersection of these curves is a stable fixed point. Depending on the relative values of and , the fixed point occurs at either (top) or (bottom). (c) When are included as dynamical variables, synaptic depression leads to periodic switching between the two stable solutions.
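A back-of-the-envelope estimate of the dwell time follows from an assumed model of the same kind: threshold-linear units with presynaptic depression, unit 1 fully active at rate x0, and its depression variable y1 starting at 1. Then y1(t) = y_inf + (1 - y_inf) exp(-k t), with k = 1/tau_y + beta x0 and y_inf = 1/(1 + beta tau_y x0), and unit 2's net input x0 (1 - w y1) turns positive once y1 < 1/w. The sketch ignores the activity transients and is not the Appendix 1 result; w, tau_y, and beta are hypothetical values.

```python
import math

def two_unit_switch_time(x0, w=1.5, tau_y=20.0, beta=0.1):
    """Estimated dwell time of unit 1 before unit 2 escapes (hypothetical model).

    Assumes unit 1 sits at activity x0, its depression variable y1 starts at 1 and obeys
    dy1/dt = (1 - y1)/tau_y - beta*y1*x0, and unit 2's net input x0*(1 - w*y1)
    turns positive once y1 < 1/w. Activity transients are ignored.
    """
    k = 1.0 / tau_y + beta * x0                   # relaxation rate of y1
    y_inf = 1.0 / (1.0 + beta * tau_y * x0)       # depressed steady state of y1
    y_thresh = 1.0 / w                            # escape threshold for unit 2
    if y_inf >= y_thresh:
        return math.inf                           # depression too weak: no switch
    return math.log((1.0 - y_inf) / (y_thresh - y_inf)) / k

for x0 in (0.2, 1.0, 2.0, 4.0):
    print(x0, two_unit_switch_time(x0))
```

Within this approximation, switching requires y_inf < 1/w, i.e. x0 > (w - 1)/(beta tau_y) (0.25 with the defaults above), which gives a lower input boundary for switching; above it the dwell time shrinks as x0 grows, consistent with the input-controlled speed described for the full network.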

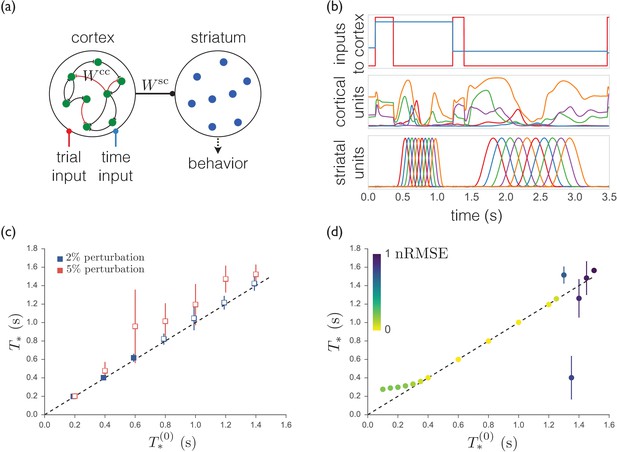

A trained recurrent network exhibits sequences that are less robust and less able to generalize.

(a) Schematic of the model. The cortical units receive trial-specific inputs and project to striatum. Striatal units are not recurrently connected. The corticocortical and corticostriatal weights and are set as described in the text. (b) Model simulation. Upper: inputs to the model. The red trace marks the initiation of trials; the blue trace indicates the target time for the trial. Middle: sample cortical units. Lower: striatal units. (c) Means and standard deviations of best-match times as a function of target time, with random weight perturbations of 2% or 5%. Open symbols denote target times for which the normalized root-mean-square error (nRMSE) exceeded 0.3 on more than 25% of trials. (d) Best-match times for a model trained on time intervals ranging from 0.4 s to 1.2 s and then tested from 0.1 s to 1.5 s. Colors indicate the mean nRMSE across trials at each target time.
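The caption relies on the nRMSE and on a best-match time without defining them here. The sketch below uses one common convention, normalizing the root-mean-square error by the RMS of the target, and defines a best-match time as the template time whose population activity is closest (lowest nRMSE) to a probe pattern. Both choices, and the function names, are assumptions for illustration; the paper's exact definitions may differ.

```python
import numpy as np

def nrmse(output, target):
    """Root-mean-square error normalized by the RMS of the target (one common convention)."""
    output = np.asarray(output, dtype=float)
    target = np.asarray(target, dtype=float)
    rmse = np.sqrt(np.mean((output - target) ** 2))
    return float(rmse / np.sqrt(np.mean(target ** 2)))

def best_match_time(template, times, probe):
    """Template time whose activity pattern has the lowest nRMSE relative to `probe`.

    template: (n_units, n_times) reference activity; times: (n_times,); probe: (n_units,).
    """
    errors = [nrmse(probe, template[:, k]) for k in range(template.shape[1])]
    return times[int(np.argmin(errors))]

times = np.array([0.1, 0.2, 0.3, 0.4])
template = np.eye(4)                 # unit k peaks at times[k]: a toy sparse sequence
print(best_match_time(template, times, probe=template[:, 2]))
```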