The nature of the animacy organization in human ventral temporal cortex

Figures

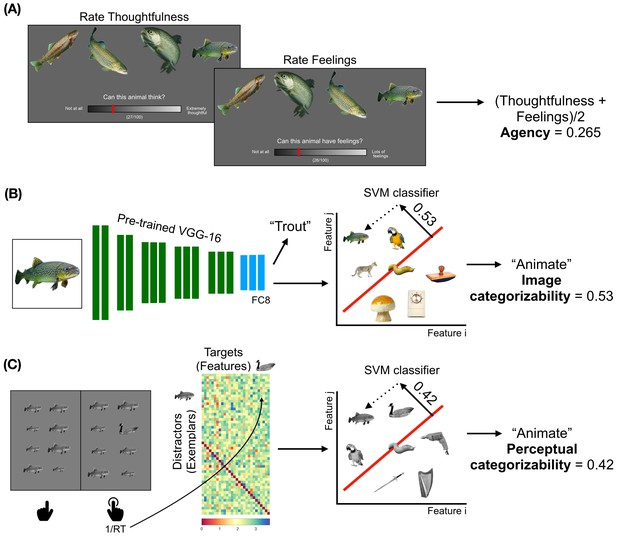

Obtaining the models to describe animacy in the ventral temporal cortex.

(A) Trials from the ratings experiment are shown. Participants were asked to rate 40 animals on three factors: familiarity, thoughtfulness, and feelings. The correlations between the thoughtfulness and feelings ratings are at the noise ceilings of both these ratings. Therefore, the average of these ratings was taken as a measure of agency. (B) A schematic of the convolutional neural network (CNN) VGG-16 is shown. The CNN contains 13 convolutional layers (shown in green), which are constrained to perform spatially local computations across the visual field, and three fully-connected layers (shown in blue). The network is trained to take RGB image pixels as inputs and output the label of the object in the image. Linear classifiers are trained on layer FC8 of the CNN to classify between the activation patterns in response to animate and inanimate images. The distance from the decision boundary, toward the animate direction, is the image categorizability of an object. (C) A trial from the visual search task is shown. Participants had to quickly indicate the location (in the left or right panel) of the oddball target among 15 identical distractors which varied in size. The inverses of the pairwise reaction times are arranged as shown. Either the distractors or the targets are assigned as features of a representational space on which a linear classifier is trained to distinguish between animate and inanimate exemplars (Materials and methods). These classifiers are then used to categorize the set of images relevant to subsequent analyses; the distance from the decision boundary, toward the animate direction, is a measure of the perceptual categorizability of an object.
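The idea of reading categorizability off a linear decision boundary can be illustrated with a minimal sketch. It assumes a simple mean-difference classifier as a stand-in for whichever linear classifier the study used (the caption does not specify it), and the function names and toy feature vectors are hypothetical.

```python
import math

def fit_mean_difference_classifier(animate, inanimate):
    """Fit a linear boundary w.x + b = 0 halfway between the two class means.

    This is a stand-in for the study's linear classifier (an assumption);
    w points from the inanimate mean toward the animate mean.
    """
    dim = len(animate[0])
    mean_a = [sum(x[i] for x in animate) / len(animate) for i in range(dim)]
    mean_i = [sum(x[i] for x in inanimate) / len(inanimate) for i in range(dim)]
    w = [ma - mi for ma, mi in zip(mean_a, mean_i)]
    midpoint = [(ma + mi) / 2 for ma, mi in zip(mean_a, mean_i)]
    b = -sum(wk * mk for wk, mk in zip(w, midpoint))
    return w, b

def categorizability(w, b, features):
    """Signed distance from the boundary; positive means the animate side."""
    norm = math.sqrt(sum(wk * wk for wk in w))
    return (sum(wk * xk for wk, xk in zip(w, features)) + b) / norm
```

Under these assumptions, an object whose feature vector lies far on the animate side of the boundary receives a large positive score, and one on the inanimate side receives a negative score.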

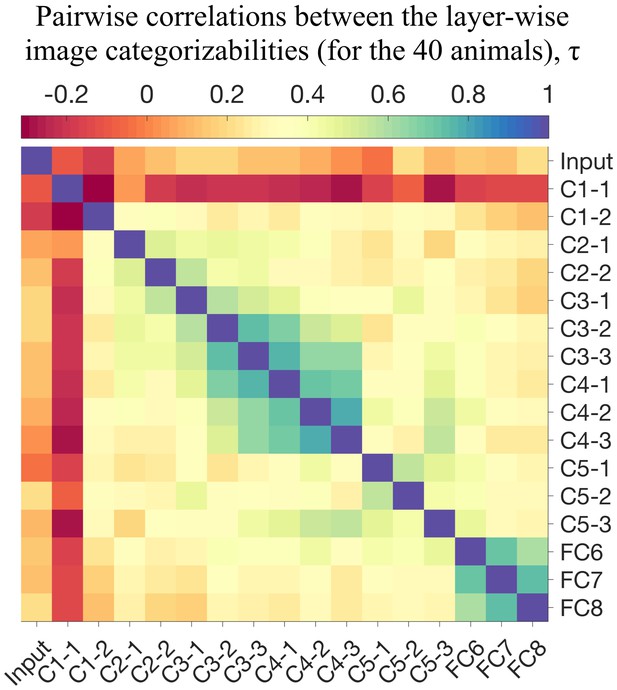

Pairwise similarities between the image categorizabilities of layers from VGG-16.

The image categorizabilities evolve as we go deeper into the network, as evidenced by the two middle and late clusters (in green) which are not similar to each other. The image categorizabilities of the fully connected layers (FCs) are highly similar and all the findings described in the paper are robust to a change in layer-selection among the FCs.

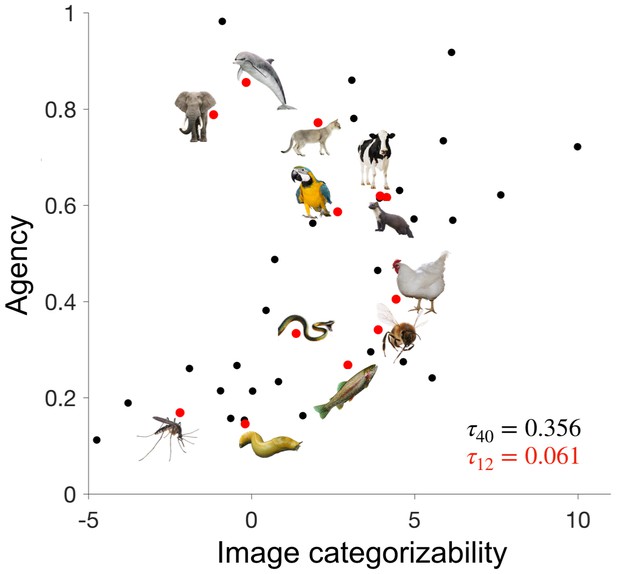

Disentangling image categorizability and agency.

The values of agency and image categorizability are plotted for the 40 animals used in the ratings experiment. We selected 12 animals such that the correlation between agency and image categorizability is minimized. Data-points corresponding to those 12 animals are highlighted in red.
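One way such a subset could be found is sketched below. The caption does not state the selection procedure, so the greedy backward elimination here is an assumption for illustration only; `select_decorrelated` and its arguments are hypothetical names.

```python
import math

def pearson(x, y):
    """Pearson correlation between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def select_decorrelated(agency, ic, k):
    """Greedily drop items until k remain, keeping |r(agency, ic)| small.

    At each step, remove the item whose removal yields the smallest
    absolute correlation among the remaining items. This is a heuristic,
    not a guaranteed global minimum.
    """
    keep = list(range(len(agency)))
    while len(keep) > k:
        def abs_r_without(drop):
            rest = [i for i in keep if i != drop]
            return abs(pearson([agency[i] for i in rest],
                               [ic[i] for i in rest]))
        keep.remove(min(keep, key=abs_r_without))
    return keep
```

Calling `select_decorrelated(agency, ic, 12)` on two lists of 40 scores would return the indices of 12 retained items. An exhaustive search over all 12-item subsets of 40 is impractical, which is why a heuristic is used in this sketch.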


Figure 2—source data 1

Agency and image categorizability scores for the 40 animals.

- https://doi.org/10.7554/eLife.47142.005

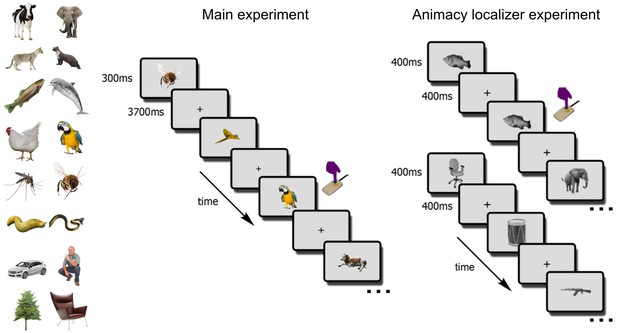

The fMRI paradigm.

In the main fMRI experiment, participants viewed images of the 12 selected animals and four additional objects (cars, trees, chairs, persons). Participants indicated, via button-press, one-back object repetitions (here, two parrots). In the animacy localizer experiment, participants viewed blocks of animal (top sequence) and non-animal (bottom sequence) images. All images were different from the ones used in the main experiment. Each block lasted 16 s, and participants indicated one-back image repetitions (here, the fish image).

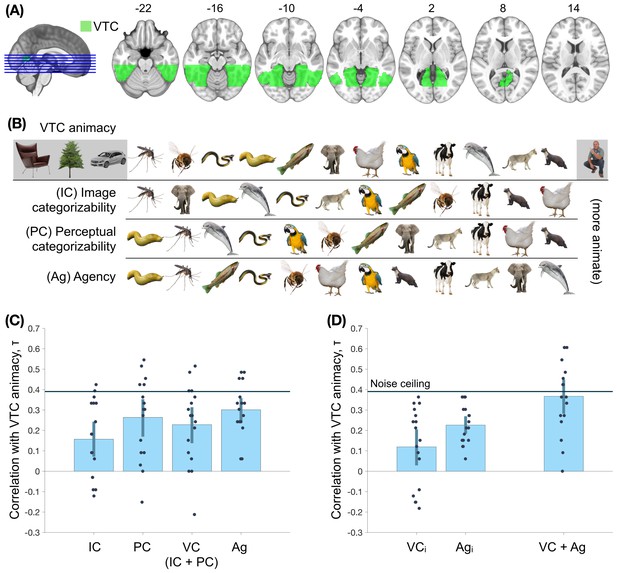

Assessing the nature of the animacy continuum in the ventral temporal cortex (VTC).

(A) The region-of-interest, VTC, is highlighted. (B) The order of objects on the VTC animacy continuum, image categorizability (IC), perceptual categorizability (PC), and agency (Ag) are shown. (C) The within-participant correlations between VTC animacy and image categorizability (IC), perceptual categorizability (PC), visual categorizability (VC, a combination of image categorizability and perceptual categorizability; Materials and methods), and agency (Ag) are shown. All four models correlated positively with VTC animacy. (D) The left panel shows the correlations between VTC animacy and VC and Ag after regressing out the other measure from VTC animacy. Both correlations are positive, providing evidence for independent contributions of both agency and visual categorizability. The right panel shows the correlation between VTC animacy and a combination of agency and visual categorizability (Materials and methods). The combined model does not differ significantly from the VTC animacy noise ceiling (Materials and methods). This suggests that visual categorizability and agency are sufficient to explain the animacy organization in VTC. Error bars indicate 95% confidence intervals for the mean correlations.
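The regress-then-correlate logic of panel (D) can be sketched as follows. This is a minimal illustration that assumes ordinary least-squares regression on the raw scores followed by a rank-order (Spearman) correlation; the exact procedure is given in the paper's Materials and methods, and all function names here are hypothetical.

```python
import math

def ranks(values):
    """Ranks starting at 1, with ties given their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    out = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            out[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return out

def pearson(x, y):
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def spearman(x, y):
    """Rank-order correlation: Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))

def residualize(y, x):
    """Residuals of y after a least-squares fit on x (with intercept)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    slope = (sum((a - mx) * (b - my) for a, b in zip(x, y))
             / sum((a - mx) ** 2 for a in x))
    return [b - (my + slope * (a - mx)) for a, b in zip(x, y)]

def independent_contribution(vtc_animacy, model, other):
    """Correlate `model` with VTC animacy after regressing out `other`."""
    return spearman(residualize(vtc_animacy, other), model)
```

For example, `independent_contribution(vtc_animacy, agency, visual_cat)` would estimate how much agency relates to VTC animacy beyond what visual categorizability already explains, under the assumptions stated above.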


Figure 4—source data 1

Values of the rank-order correlations shown in the figure, for each participant.

Also includes the MNI mask for ventral temporal cortex.

- https://doi.org/10.7554/eLife.47142.011

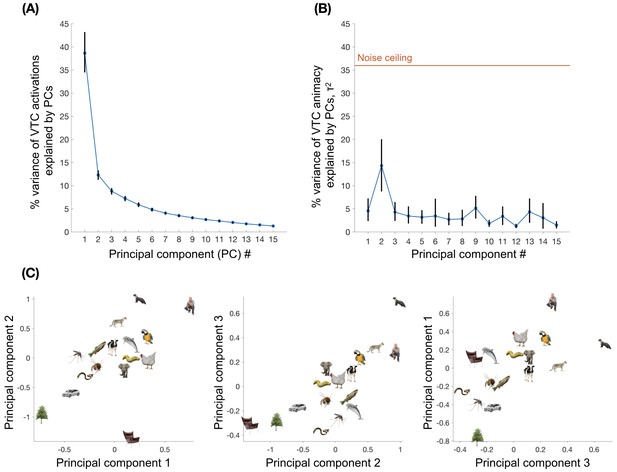

The contribution of the principal components of VTC activations to VTC animacy.

Principal component analysis was performed on the average of the regression weights (VTC activations) for each of the 16 objects, which were computed using a general linear model for each run of the main experiment. (A) The average of the participant-wise percentage variance in the VTC activations explained by each of the 15 principal components is shown. (B) The average participant-wise correlations between the principal components (PCs) and VTC animacy are shown. Although the first PC captures more variance than the second PC, the second PC contributes more to VTC animacy than the first. None of the individual PCs can fully account for VTC animacy. (C) The average scores of the 16 objects on the first three PCs are shown. Animacy organizations are seen in all three PCs, where humans and other mammals lie on one end, insects in the middle, and cars and other inanimate objects on the other end of the representational spaces.

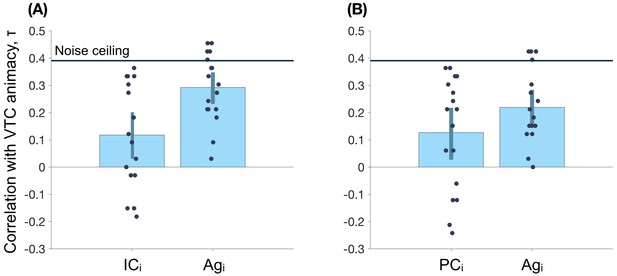

The contributions of image and perceptual categorizabilities (IC and PC), independent of agency (Ag), to VTC animacy.

Agency is regressed out of IC and PC and correlations of those two residuals (ICi and PCi) with VTC animacy are shown in (A) and (B), respectively. Both IC and PC explain variance in VTC animacy that is not accounted for by Ag (p < 0.05 for both correlations). IC and PC are also separately regressed out of Ag and the correlations of the two residuals with VTC animacy are shown in (A) and (B) respectively (p < 0.05 for both correlations). Echoing the observations in Figure 4C, agency contributes to VTC animacy independently of either IC, PC, or a combination of both (visual categorizability - VC in Figure 4C).

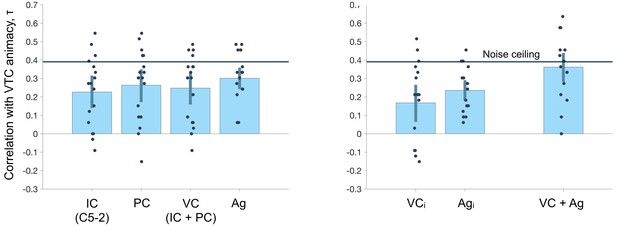

The robustness of our findings to the choice of the layer of VGG-16 used to quantify image categorizability.

Eberhardt et al. (2016) showed that the image categorizability scores of objects (different images than the ones we used) from the second layer of the fifth group of convolutional layers (C5-2) of VGG-16 correlated highest with how quickly humans categorized those objects as animate or inanimate in a behavioral experiment. Results were highly similar when the image categorizability (IC) as used in Figure 4 was replaced by the image categorizability of C5-2. Specifically, the independent contributions of visual categorizability and agency to VTC animacy remained significant and the correlation between the combined model and VTC animacy was at VTC animacy noise ceiling.

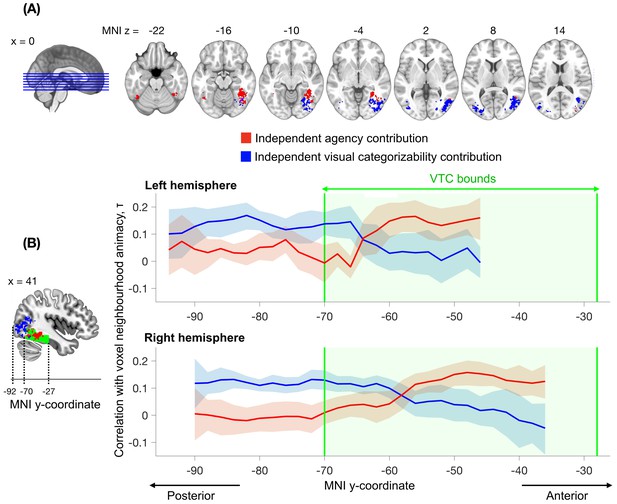

Searchlight analysis testing for the independent contributions of agency and visual categorizability to the animacy continuum.

(A) The analysis followed the approach performed within the VTC ROI (Figure 4C, middle panel) but now separately for individual spheres (100 voxels). The independent contribution of agency is observed within anterior VTC, while the independent contribution of visual categorizability extends from posterior VTC into the lateral occipital regions. Results are corrected for multiple comparisons (Materials and methods). (B) The correlations between agency and the animacy continuum in the searchlight spheres (variance independent of visual categorizability, in red) and the mean of the correlations between image and perceptual categorizabilities and the animacy continuum in the searchlight spheres (variance independent of agency, in blue), are shown as a function of the MNI y-coordinate. For each participant, the correlations are averaged across x and z dimensions for all the searchlight spheres that survived multiple comparison correction in the searchlight analysis depicted in (A). The blue and red bounds around the means reflect the 95% confidence bounds of the average correlations across participants. The green area denotes the anatomical bounds of VTC. Visual categorizability contributes more than agency to the animacy organization in the spheres in posterior VTC. This difference in contribution switches within VTC and agency contributes maximally to the animacy organization in more anterior regions of VTC.
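The per-participant averaging across x and z described for panel (B) can be sketched as a simple grouping by y-coordinate. The data layout (a list of coordinate and correlation pairs for the surviving spheres of one participant) and the function name are assumptions made for illustration.

```python
from collections import defaultdict

def mean_correlation_by_y(spheres):
    """Average sphere-wise correlations over x and z for each MNI y value.

    `spheres` is an iterable of ((x, y, z), correlation) pairs for the
    spheres of one participant that survived correction (assumed layout).
    Returns a dict mapping y to the mean correlation, ordered by y.
    """
    totals = defaultdict(lambda: [0.0, 0])  # y -> [sum, count]
    for (_x, y, _z), r in spheres:
        totals[y][0] += r
        totals[y][1] += 1
    return {y: total / count for y, (total, count) in sorted(totals.items())}
```

Applying this to each participant and then averaging the resulting profiles across participants would give curves of the kind plotted against the y-coordinate.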


Figure 5—source data 1

Values of the correlations shown in the figure, for each participant, and the maps of the significant independent contributions of agency and visual categorizability across the brain.

- https://doi.org/10.7554/eLife.47142.014

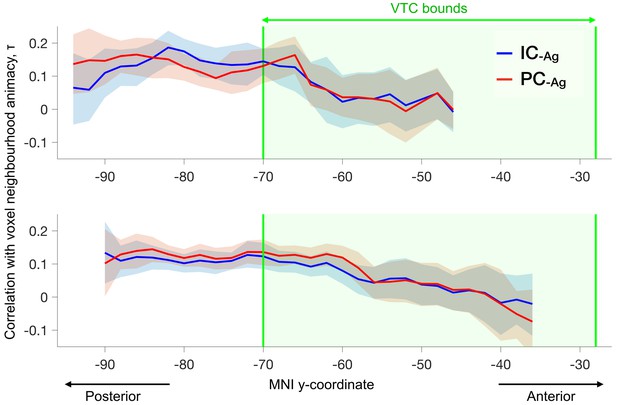

The contributions (independent of agency) of image and perceptual categorizabilities to the animacy continuum in the searchlight spheres are shown (ICAg and PCAg).

Both factors contribute to similar extents to the variance in the animacy continua.

Additional files


Transparent reporting form

- https://doi.org/10.7554/eLife.47142.015