Linguistic processing of task-irrelevant speech at a cocktail party

Figures

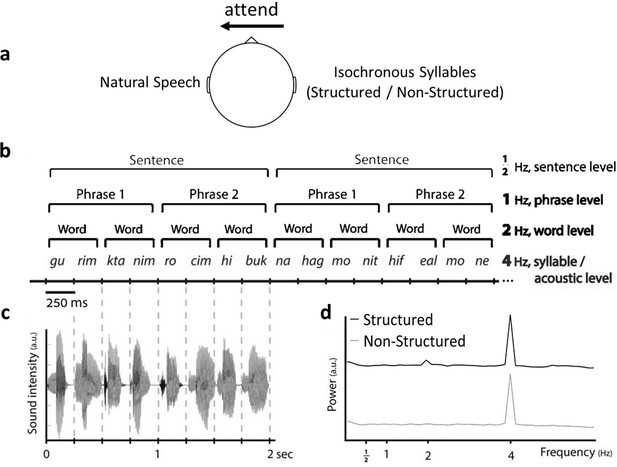

Illustration of the Dichotic Listening Paradigm.

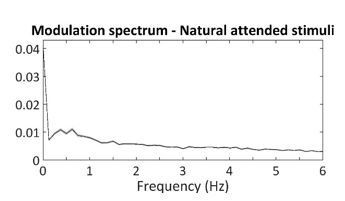

(a) Participants were instructed to attend to the right or left ear (counterbalanced) and ignore the other. The to-be-attended stimulus was always natural Hebrew speech, and multiple-choice questions about the content were asked at the end of each trial. The task-irrelevant ear was always presented with hierarchical frequency-tagging stimuli in two conditions: Structured and Non-Structured. (b) Example of intelligible (Structured) speech composed of 250 ms syllables in which 4 levels of information are differentiated based on their rate: acoustic/syllabic, word, phrasal, and sentential rates (at 4, 2, 1, and 0.5 Hz, respectively). Translation of the two Hebrew sentences in the example: ‘Small puppies want a hug’ and ‘A taxi driver turned on the meter.’ Control stimuli were Non-Structured syllable sequences with the same syllabic rate of 4 Hz. (c) Representative sound wave of a single sentence (2 s). Sound intensity fluctuates at a rate of 4 Hz. (d) Modulation spectra of the speech envelopes in each condition. Panels b and c are reproduced from Figure 1A and Figure 1B of Makov et al., 2017. Panel d has been adapted from Figure 1C of Makov et al., 2017.

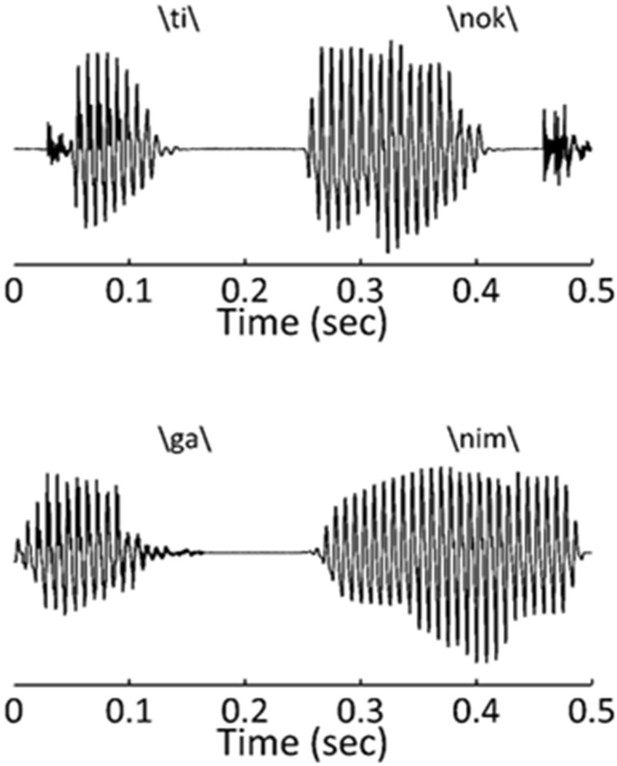

Two examples of start and end syllables for bi-syllabic Hebrew words.

This illustrates the natural differences in envelope shape between syllables. If short CV syllables systematically occur at the start vs. the end of words, this can potentially create a 2 Hz peak in the modulation spectrum.
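The reasoning above can be checked with a toy simulation. The sketch below is illustrative only and is not the analysis used in the study: the sampling rate, the number of words, and the two envelope shapes (`shape_a`, `shape_b`) are assumptions chosen for simplicity. It compares a sequence in which every 250 ms syllable has the same envelope with one in which two envelope shapes alternate, as would happen if one syllable type tended to occupy word-initial positions.

```python
import numpy as np

FS = 1000        # envelope sampling rate in Hz (assumed for this toy example)
SYL_LEN = 250    # samples per syllable: 250 ms at 1000 Hz
N_WORDS = 40     # number of bi-syllabic "words" in the toy sequence (assumed)

# Two hypothetical envelope shapes standing in for different syllable types.
shape_a = np.hanning(SYL_LEN)        # broad bump
shape_b = np.hanning(SYL_LEN) ** 3   # narrower bump

def modulation_amplitude(envelope, freq_hz):
    """Normalized FFT magnitude of the envelope at the bin closest to freq_hz."""
    spectrum = np.abs(np.fft.rfft(envelope - envelope.mean())) / len(envelope)
    freqs = np.fft.rfftfreq(len(envelope), d=1.0 / FS)
    return spectrum[np.argmin(np.abs(freqs - freq_hz))]

# Every syllable has the same envelope: periodicity only at 4 Hz and harmonics.
same_shape = np.tile(shape_a, 2 * N_WORDS)

# Shapes alternate by position within the word: the pattern repeats every 500 ms.
alternating = np.tile(np.concatenate([shape_a, shape_b]), N_WORDS)

for name, env in [("same shape", same_shape), ("alternating", alternating)]:
    print(name,
          "2 Hz:", modulation_amplitude(env, 2.0),
          "4 Hz:", modulation_amplitude(env, 4.0))
```

In this toy case the 2 Hz component is purely acoustic: it arises from the envelope shapes alone, with no linguistic content involved.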

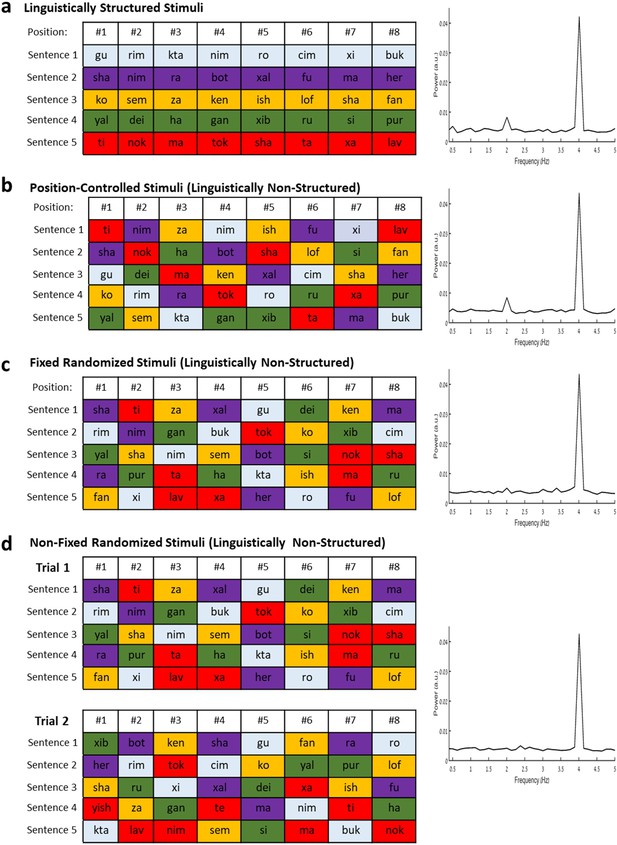

The effects of syllable-order on the modulation spectrum.

Each panel shows examples of sentences/pseudo-sentences constructed in different ways (left) and the resulting modulation spectrum for this type of stimulus (right). (a) Examples of five Structured sentences used in the current study. (b) Examples of Position-Controlled pseudo-sentences, where the syllables used in the Structured sentences are randomized across pseudo-sentences but keep the same position they had in the Structured sentences. (c) Examples of Fixed Randomized Stimuli, where a set of pseudo-sentences is constructed through random assignment of syllables (with no position constraints), and these fixed pseudo-sentences are used to construct all the stimuli across trials. (d) Examples of Non-Fixed Randomized Stimuli, where a different randomization of syllables is used to construct the stimuli in each trial.
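The three randomization schemes can be sketched as follows. This is a minimal illustration, not the stimulus-generation code used in the study: the function names, the placeholder syllable labels, and the way trials are assembled are all assumptions. Each sentence is represented as a list of syllables (8 per sentence, given 250 ms syllables and 2 s sentences).

```python
import random

def position_controlled(sentences, rng):
    """Shuffle syllables across sentences while keeping each one at its original position."""
    n_pos = len(sentences[0])
    columns = []
    for pos in range(n_pos):
        column = [sentence[pos] for sentence in sentences]
        rng.shuffle(column)  # shuffles a copy, so the input is left untouched
        columns.append(column)
    return [[columns[pos][i] for pos in range(n_pos)]
            for i in range(len(sentences))]

def randomized(sentences, rng):
    """Pool all syllables, shuffle them freely, and re-chunk into pseudo-sentences."""
    n_pos = len(sentences[0])
    pool = [syllable for sentence in sentences for syllable in sentence]
    rng.shuffle(pool)
    return [pool[i:i + n_pos] for i in range(0, len(pool), n_pos)]

# Placeholder labels: "s2p5" = syllable from sentence 2, position 5 (hypothetical).
structured = [[f"s{i}p{j}" for j in range(8)] for i in range(5)]
rng = random.Random(0)
n_trials = 3

pos_controlled = position_controlled(structured, rng)

# Fixed Randomized: one shuffle, and the same pseudo-sentences serve every trial
# (here each trial simply presents them in a new order, which is an assumption).
fixed_set = randomized(structured, rng)
fixed_trials = [rng.sample(fixed_set, k=len(fixed_set)) for _ in range(n_trials)]

# Non-Fixed Randomized: a fresh shuffle of the syllable pool for every trial.
non_fixed_trials = [randomized(structured, rng) for _ in range(n_trials)]
```

The distinction matters for the modulation spectrum because any fixed set of pseudo-sentences repeats the same syllable-order regularities across trials, whereas a fresh randomization per trial lets such incidental regularities average out.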

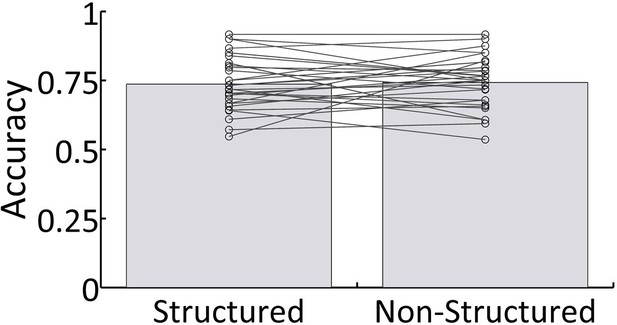

Behavioral results.

Mean accuracy across all participants for both Structured and Non-Structured conditions. Lines represent individual results.

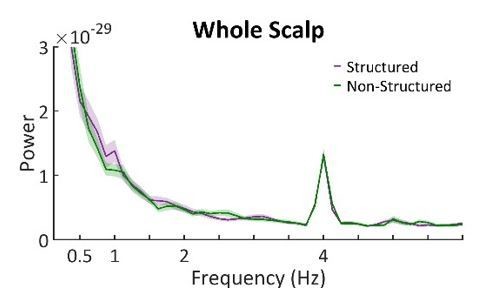

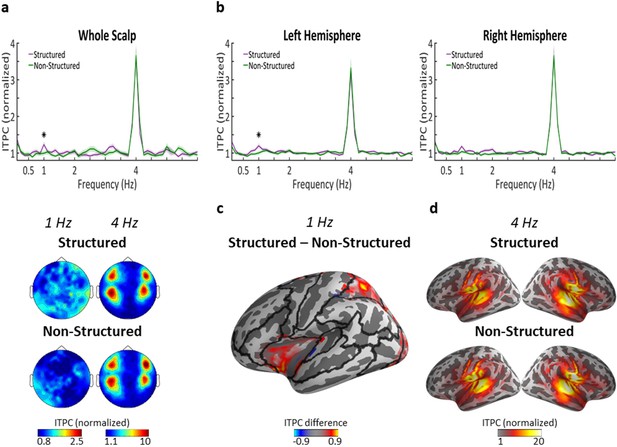

Neural tracking of linguistic structures in task-irrelevant speech.

(a) Top panel shows the ITPC spectrum at the scalp-level (average across all sensors) in response to Structured (purple) and Non-Structured (green) task-irrelevant speech. ITPC values were z-score normalized, as implemented in the circ_rtest function (see Materials and methods). Shaded areas indicate SEM across participants (n = 29). Asterisk represents a statistically significant difference (p<0.05) between conditions, indicating a significant response at 1 Hz for Structured task-irrelevant speech, which corresponds to the phrase-level. Bottom panel shows the scalp-topography of ITPC at 4 Hz and 1 Hz in the two conditions. (b) ITPC spectrum at the source-level, averaged across all voxels in each hemisphere. Shaded highlights denote SEM across participants (n = 23). Asterisk represents a statistically significant difference (p<0.05) between conditions, indicating a significant response at 1 Hz for Structured task-irrelevant speech in the left, but not the right, hemisphere. (c) Source-level map on a central inflated brain depicting the ROIs in the left hemisphere where significant differences in ITPC at 1 Hz were found for Structured vs. Non-Structured task-irrelevant speech. Black lines indicate the parcellation into the 22 ROIs used for source-level analysis. (d) Source-level maps showing localization of the syllabic-rate response (4 Hz) in both conditions.
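For readers unfamiliar with the measure, the following sketch shows how an ITPC spectrum and a Rayleigh-type z value can be computed. It is not the authors' pipeline: the function names, the simulated data, and all parameters are assumptions, and the normalization is assumed to correspond to the Rayleigh statistic z = n·R² (n trials, resultant length R), which is the statistic returned by Rayleigh tests such as circ_rtest.

```python
import numpy as np

def itpc(phases):
    """Inter-trial phase coherence: length of the mean unit phase vector across trials (axis 0)."""
    return np.abs(np.mean(np.exp(1j * np.asarray(phases)), axis=0))

def rayleigh_z(phases):
    """Rayleigh statistic z = n * R**2, with n trials and resultant length R."""
    phases = np.asarray(phases)
    return phases.shape[0] * itpc(phases) ** 2

def itpc_spectrum(epochs, fs):
    """ITPC at every FFT frequency for an array of shape (n_trials, n_samples)."""
    spectra = np.fft.rfft(epochs, axis=1)
    freqs = np.fft.rfftfreq(epochs.shape[1], d=1.0 / fs)
    return freqs, itpc(np.angle(spectra))

# Simulated data (all values assumed): a 1 Hz component with the same phase
# on every trial, buried in independent Gaussian noise.
rng = np.random.default_rng(0)
fs, duration_s, n_trials = 100, 8, 40
t = np.arange(fs * duration_s) / fs
epochs = np.sin(2 * np.pi * 1.0 * t) + rng.normal(0.0, 1.0, (n_trials, t.size))

freqs, itpc_vals = itpc_spectrum(epochs, fs)
idx_1hz = np.argmin(np.abs(freqs - 1.0))
print("ITPC at 1 Hz:", itpc_vals[idx_1hz])
print("Rayleigh z at 1 Hz:", n_trials * itpc_vals[idx_1hz] ** 2)
```

Because ITPC depends only on phase consistency across trials and not on response amplitude, a peak at 1 Hz indicates that the response is phase-locked to the phrase rate of the stimulus.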

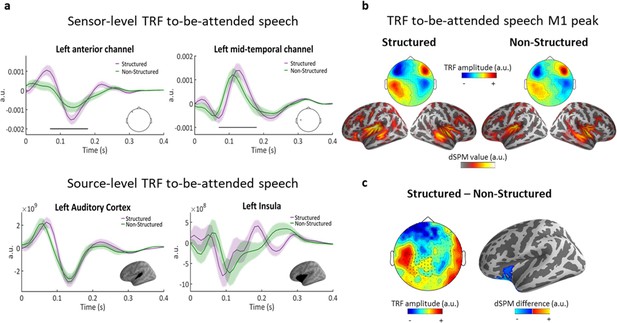

Speech tracking of to-be-attended speech.

(a) top, TRF examples from two sensors, showing the positive and negative poles of the TRF over the left hemisphere. Shaded highlights denote SEM across participants. Black line indicates the time points where the difference between conditions was significant (spatio-temporal cluster corrected). bottom, TRF examples from source-level ROIs in left Auditory Cortex and in the left Inferior Frontal/Insula region. (b) topographies (top) and source estimations (bottom) for each condition at M1 peak (140 ms). (c) left, topography of the difference between conditions (Structured – Non-Structured) at the M1 peak (140 ms). Asterisks indicate the MEG channels where this difference was significant (cluster corrected). right, significant cluster at the source-level.