As part of the eLife Continuum publishing platform, images on the online journal eLife are presented using the IIIF Image API. This allows the journal to serve image content at the resolution and size each user needs, and to stay responsive and efficient as the user zooms in and out. How this technology was implemented within eLife Continuum is described below. Continuum is open source and available for others to use and build on. For more about publishing with Continuum, see our resources for publishers.

Blogpost by Giorgio Sironi, Software Engineer in Test

The International Image Interoperability Framework, or IIIF, is a community-driven image framework with well-defined application programming interfaces (APIs) for making the world’s image repositories interoperable and accessible (as described in a previous Labs post). This includes the IIIF Image API, a technology that allows viewers to manipulate, transform and convert portions of very large images, minimizing the bandwidth required to transfer them over the Internet and making it possible to work with images whose dimensions run to tens of thousands of pixels.

All images on elifesciences.org are served through an image API that we set up on an open-source IIIF server, so the resolution of each displayed image is selected dynamically to best fit the user’s browser. In this blog post, I describe how this implementation was set up.

Software

Unlike relational databases, IIIF servers are not a commodity, and the choice of server implementation constrains other parameters such as image storage and the supported formats. Writing a server from scratch was not appropriate for us, as the work it performs is non-trivial: cutting, resizing, rotating and especially converting images between different formats. Compared with simpler domains such as text indexing, image-related software has a huge number of test cases to verify, covering images of all sizes, formats and colours.

The IIIF Image API 2.0 defines several levels of compliance:

- Level 0 only allows requesting the full image, at predefined sizes

- Level 1 adds the capability to request image portions, at any size

- Level 2 adds rotation, and multiple output options such as grayscale and the PNG format
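Every IIIF Image API 2.x request encodes its parameters as URL path segments, `{identifier}/{region}/{size}/{rotation}/{quality}.{format}`, and the compliance levels above determine which values a server accepts in each segment. The following minimal Python sketch assembles such URLs. The function name `iiif_image_url` is hypothetical, and leaving `:` and `/` unescaped in the identifier is an assumption made so that the output matches the eLife URLs shown later in this post (the specification asks for special characters such as `/` in identifiers to be URI-encoded).

```python
from urllib.parse import quote


def iiif_image_url(base, identifier, region="full", size="full",
                   rotation="0", quality="default", fmt="jpg"):
    """Build an IIIF Image API 2.x image request URL.

    Layout: {base}/{identifier}/{region}/{size}/{rotation}/{quality}.{fmt}
    Per the compliance levels: Level 0 serves only region="full";
    a region such as "x,y,w,h" needs Level 1; non-zero rotation,
    quality="gray" or fmt="png" need Level 2.
    """
    # Keep ':' and '/' as-is to match eLife's URLs (assumption, see above).
    encoded_id = quote(identifier, safe=":/")
    return f"{base.rstrip('/')}/{encoded_id}/{region}/{size}/{rotation}/{quality}.{fmt}"


# Rebuild the full-figure URL used in the Results section.
print(iiif_image_url("https://iiif.elifesciences.org",
                     "lax:13410/elife-13410-fig4-v2.tif", size=",1000"))
# https://iiif.elifesciences.org/lax:13410/elife-13410-fig4-v2.tif/full/,1000/0/default.jpg
```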

For our own implementation of IIIF on eLife, we chose Loris, an image server providing IIIF Image API Level 2. Loris is a small Python web application, backed by many Python and C libraries. We run Loris in uWSGI, as we would do with any standard Python web application.

We expose Loris to the outside world through an Nginx server, which is capable of solving the standard problems of HTTP traffic such as setting cache headers and performing redirects. Nginx can scale to thousands of concurrent connections, and adds minimal overhead on top of the computationally intensive work of image manipulation.

Storage

Once we had chosen an IIIF server, we evaluated a few storage solutions for supplying it with the original, high-resolution images. The trade-off in storage is between a medium that provides faster access and one that is less performant but offers a better cost per gigabyte.

In the context of our Amazon Web Services (AWS) infrastructure, the choice boils down to:

- Elastic File System (EFS, a glorified Network File System), shared external volumes attached to the virtual machines running Loris (EC2 instances)

- Elastic Block Store (EBS, glorified network-attached block storage), external volumes attached to a single EC2 instance

- S3 as an object storage

EFS would be a poor choice: from the IIIF server's side this storage is read-only, and the servers never need to interact with each other through it, so a shared filesystem brings no benefit.

Solid-state EBS volumes cost ~$0.10 per GB-month, although some of this cost could be cut by using slower magnetic disks, or throughput-optimised volumes if the quantity of data reaches some minimum threshold.

S3 costs ~$0.023 per GB-month, plus some API call charges, but no data transfer charge applies within the same region (us-east-1). This makes it very cost-effective for long-term, append-only storage such as a corpus of images. Moreover, Loris provides a resolver that can cache a copy of the original image on the local disk.
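To put these prices in perspective, the following Python arithmetic sketch applies them to the roughly 110 GB corpus mentioned in the Testing section. The choice of two servers, and the assumption that each server would need its own full EBS copy because an EBS volume is attached to a single instance, are illustrative; API call charges are ignored.

```python
# Approximate storage prices quoted above, in USD per GB-month.
EBS_SSD_PER_GB_MONTH = 0.10
S3_PER_GB_MONTH = 0.023


def monthly_storage_cost(size_gb, price_per_gb_month, copies=1):
    """Storage-only monthly cost; S3 API call charges are not included."""
    return size_gb * price_per_gb_month * copies


corpus_gb = 110  # approximate size of the eLife corpus, from the Testing section
servers = 2      # assumption: the minimum of "two or more" IIIF servers

# An EBS volume attaches to a single instance, so each server needs its own copy.
ebs = monthly_storage_cost(corpus_gb, EBS_SSD_PER_GB_MONTH, copies=servers)
# S3 is shared: one copy serves every server.
s3 = monthly_storage_cost(corpus_gb, S3_PER_GB_MONTH)
print(f"EBS (SSD), {servers} copies: ${ebs:.2f}/month")  # $22.00/month
print(f"S3, 1 copy: ${s3:.2f}/month")                    # $2.53/month
```

The gap widens with every server added, since S3 storage is paid once regardless of how many servers read from it.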

We therefore adopted S3 as the backend: it gives the IIIF servers a single source of truth for the reference images and fits perfectly as the lowest level of the storage hierarchy, with Loris's local filesystem cache above it and, at the top, a Content Delivery Network.

Loris always reads the entire source image onto the local disk before it starts manipulating it. There is, however, no reason to tie the servers to S3's proprietary protocol, so we used one of Loris's HTTP resolvers to load images through the `s3-external-1.amazonaws.com` hostname.
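The cache layer and the plain-HTTP approach can be illustrated with a minimal Python sketch of a caching resolver. This is not Loris's resolver code: the function names, the `example-bucket` URL and the cache layout are hypothetical. On a cache miss it downloads the whole original over plain HTTP and writes it to local disk before returning; later requests reuse the local copy.

```python
import os
import urllib.request
from urllib.parse import quote

# Hypothetical bucket URL; the real bucket name and key layout are not given.
SOURCE_ROOT = "https://s3-external-1.amazonaws.com/example-bucket/"


def http_fetch(url):
    """Download the whole object with a plain HTTP GET (no S3-specific API)."""
    with urllib.request.urlopen(url) as response:
        return response.read()


def resolve(identifier, cache_dir, fetch=http_fetch, source_root=SOURCE_ROOT):
    """Return a local path to the original image, downloading it on a cache miss."""
    # Flatten the identifier into a single, filesystem-safe file name.
    local_path = os.path.join(cache_dir, quote(identifier, safe=""))
    if not os.path.exists(local_path):
        # Read the entire source file to disk before any processing starts.
        data = fetch(source_root + quote(identifier, safe="/"))
        os.makedirs(cache_dir, exist_ok=True)
        tmp_path = local_path + ".part"
        with open(tmp_path, "wb") as f:
            f.write(data)
        # Rename into place so readers never see a half-written file.
        os.replace(tmp_path, local_path)
    return local_path
```

Passing `fetch` as a parameter keeps the sketch testable without network access.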

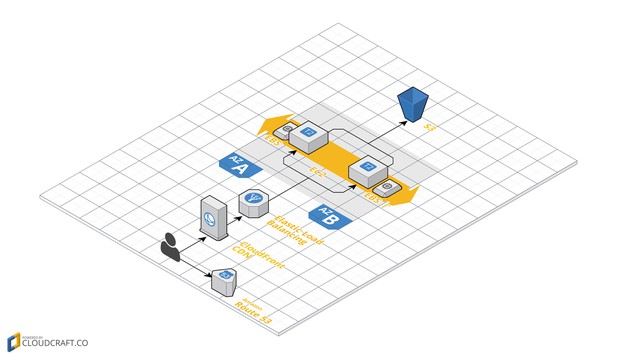

Infrastructure

In the physical world, infrastructure usually refers to the fundamental facilities of a country. In computing, it consists of all the (now virtualized) hardware components that connect users with the information they want to obtain. In the case of IIIF, the laundry list of required infrastructure is:

- Two or more virtual machines: `t2.medium` EC2 instances are inexpensive and good for bursts of increased compute power when there are peaks in traffic.

- Their volumes: not-particularly-fast hard drives can be used as a cache of original and generated images. The standard storage provided by EC2 instances is only ~7 GB, most of which is occupied by the operating system. Additional volumes can serve as the second level of storage, so that the cache does not need cleaning every half an hour.

- Load balancing: servers can be detached one at a time from Elastic Load Balancers (ELBs) to perform maintenance or cleaning operations such as cache pruning without interrupting traffic. ELBs can also perform HTTPS termination, making the individual IIIF servers easier to set up as they don't need to be configured with SSL certificates.

- Content Delivery Networks (CDNs): CloudFront can be used for edge caching, storing cached versions of popular images near the user’s location to reduce latency. CloudFront's benefit is its simplicity; its drawbacks are the lack of protection against cache stampedes and the fairly long time it takes to invalidate content and update its configuration.

Infrastructure required to deliver images using the IIIF Image API. Image composed using Cloudcraft.

Operations

Once the architecture had been set up, particular care was needed to keep entropy from breaking down the system and to ensure continued availability through the weeks and months following deployment. Without safeguards in place, a small problem like a disk filled up by a log could degenerate into errors visible to the user and an inability to access the images at all.

The first major task was to schedule periodic cache cleaning to reclaim disk space. We tried to perform this on a live server, but there is no reliable way to guarantee that traffic will not be affected, for example by the removal of an expired reference image that is currently being used to generate a response. Moreover, detecting the least recently modified or accessed files to implement a Least Recently Used (LRU) policy can be very intensive for the filesystem, due to the sheer number of files and directories being created. Therefore, cache cleaning is now performed after taking the instance off the load balancer, and in most cases it is a blunt operation that deletes the whole content of the cache folders.
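The rolling clean-up can be sketched in Python as follows. The `detach`/`attach` interface is a hypothetical stand-in for the load balancer; with a Classic ELB and boto3 it could wrap `deregister_instances_from_load_balancer` and `register_instances_with_load_balancer`. `clear_cache_dirs` is what would run on each instance, and `clean_instance` is a hypothetical hook for triggering it remotely. A production version would also wait for in-flight requests to drain before deleting anything, which this sketch omits.

```python
import os
import shutil


def clear_cache_dirs(cache_dirs):
    """Blunt cleaning: delete everything inside each cache folder, keep the folder."""
    for cache_dir in cache_dirs:
        for entry in os.scandir(cache_dir):
            if entry.is_dir(follow_symlinks=False):
                shutil.rmtree(entry.path)
            else:
                os.remove(entry.path)


def clean_cluster(instances, load_balancer, clean_instance):
    """Clean one instance at a time so the others keep serving traffic."""
    for instance in instances:
        load_balancer.detach(instance)  # no new requests reach this instance
        try:
            clean_instance(instance)
        finally:
            # Re-attach even if cleaning failed, so capacity is not lost.
            load_balancer.attach(instance)
```

Cleaning one instance at a time means at most one server is out of rotation, which is why the infrastructure above calls for two or more.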

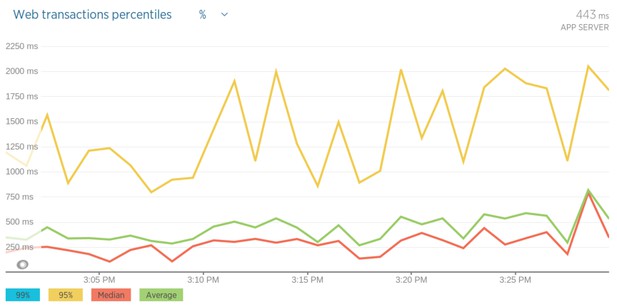

A second key aspect is monitoring: we want to be alerted if a sizeable percentage of requests is failing, if the latency for serving generated images becomes too high, or if a disk or CPU is approaching 100% utilisation. To this end, we set up New Relic on both the application and server side, gaining non-intrusive insight into what is happening to live traffic.
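The alert conditions themselves are configured in New Relic, but their logic can be summarised in a short Python sketch. The `Window` fields, the use of 95th-percentile latency and all threshold values are assumptions for illustration; the post does not state the values eLife uses.

```python
from dataclasses import dataclass


@dataclass
class Window:
    """Hypothetical metrics aggregated over a short time window."""
    requests: int
    errors: int
    p95_latency_ms: float
    max_disk_pct: float
    max_cpu_pct: float


# Hypothetical thresholds.
MAX_ERROR_RATE = 0.05        # 5% of requests failing
MAX_P95_LATENCY_MS = 2000    # generated images taking too long
MAX_UTILISATION_PCT = 95     # disk or CPU close to 100%


def alerts(w):
    """Return the names of the alert conditions triggered by this window."""
    triggered = []
    if w.requests and w.errors / w.requests > MAX_ERROR_RATE:
        triggered.append("error rate")
    if w.p95_latency_ms > MAX_P95_LATENCY_MS:
        triggered.append("latency")
    if w.max_disk_pct >= MAX_UTILISATION_PCT:
        triggered.append("disk")
    if w.max_cpu_pct >= MAX_UTILISATION_PCT:
        triggered.append("cpu")
    return triggered
```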

New Relic latency measurements.

Testing

The fifth and final concern, not to be ignored, is being able to upgrade without fear. We have set up a testing pipeline that takes a new version of Loris (usually just a commit), deploys it on a cluster of four servers and runs the whole corpus through it, requesting a sample resize and the `info.json` file for each image. It helps to have a template for creating a testing environment for your IIIF implementation. In our case the template is parameterised by the number of servers, so we can get enough throughput to complete the test run in an acceptable time of about three hours, and then shut down the testing environment to stop its operating expenses.
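A corpus check along these lines can be sketched in Python. The hostname, the sample size `,200` and the default worker count are hypothetical; `workers` controls how many requests are in flight at once and would need to grow with the number of test servers to keep them busy. The `fetch` parameter lets the HTTP client be swapped out, for example in tests.

```python
import urllib.request
from concurrent.futures import ThreadPoolExecutor

BASE = "https://iiif-test.example.org"  # hypothetical test-environment hostname
SAMPLE_SIZE = ",200"                    # hypothetical sample resize: 200 px high


def urls_for(identifier):
    """The two requests made for every image: info.json and a sample resize."""
    return [
        f"{BASE}/{identifier}/info.json",
        f"{BASE}/{identifier}/full/{SAMPLE_SIZE}/0/default.jpg",
    ]


def http_get(url):
    # urlopen raises HTTPError on 4xx/5xx responses, which counts as a failure.
    with urllib.request.urlopen(url, timeout=60) as response:
        return response.read()


def check_url(url, fetch):
    """Return None on success, or a description of the failure."""
    try:
        fetch(url)
        return None
    except Exception as exc:
        return f"{url}: {exc}"


def run_corpus(identifiers, fetch=http_get, workers=16):
    """Request every URL for every image concurrently; return the failures."""
    urls = [url for identifier in identifiers for url in urls_for(identifier)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = pool.map(lambda url: check_url(url, fetch), urls)
        return [r for r in results if r is not None]
```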

This test suite was extremely helpful at the start of the IIIF project, when we discovered that about 10% of the original TIFF files were incompatible with the libraries used by Loris, and introduced a fallback to the equivalent JPEG version. The eLife corpus was about 110 GB and growing at the time, and with this fallback we had to modify only two source images to comply with a more standard colour space.
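The post does not say how the TIFF-to-JPEG fallback was wired into Loris. A minimal Python sketch of the idea follows, assuming the JPEG sits next to the TIFF with the same name and a `.jpg` extension, and that `can_decode` is some check against the imaging libraries; both the naming scheme and `can_decode` are hypothetical.

```python
import os


def source_candidates(identifier):
    """Original TIFF first, then the equivalent JPEG (hypothetical naming scheme)."""
    stem, ext = os.path.splitext(identifier)
    candidates = [identifier]
    if ext.lower() in (".tif", ".tiff"):
        candidates.append(stem + ".jpg")
    return candidates


def pick_source(identifier, can_decode):
    """Return the first candidate that the imaging libraries can decode."""
    for candidate in source_candidates(identifier):
        if can_decode(candidate):
            return candidate
    raise ValueError(f"no decodable source for {identifier}")
```

Preferring the TIFF keeps the lossless original wherever possible; the JPEG is only used for files the libraries reject.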

Results

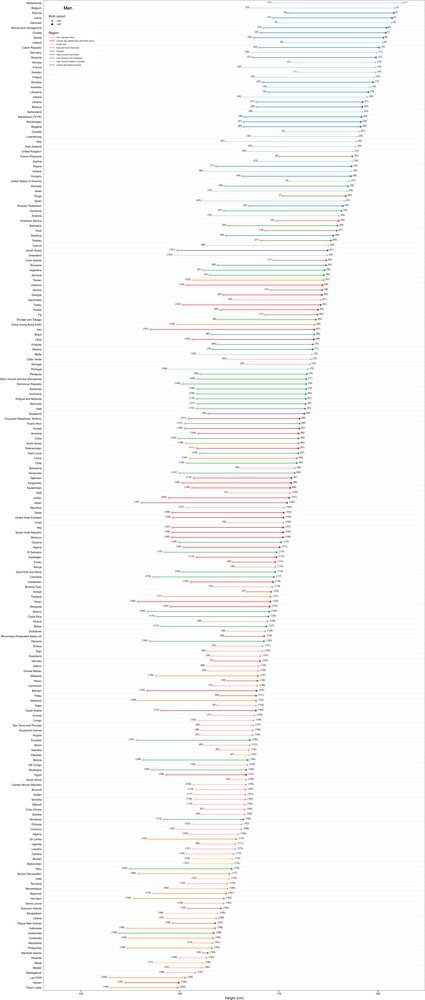

Since the eLife 2.0 launch, the IIIF Image API has been used to cut and serve the most suitable images and image portions in every single article and page on elifesciences.org. For example, we can display a full figure, scaled down in resolution for simplicity:

A full figure is accessed using https://iiif.elifesciences.org/lax:13410/elife-13410-fig4-v2.tif/full/,1000/0/default.jpg. NCD Risk Factor Collaboration, eLife 2016;5:e13410.

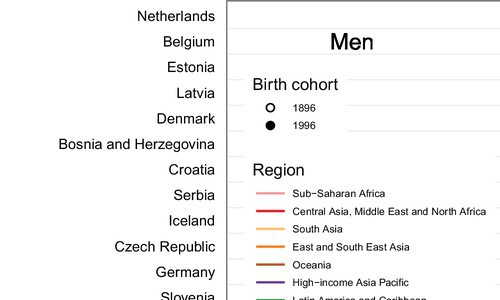

Or a closer view, nearer to the full resolution:

A section of the graph at higher resolution is accessed using https://iiif.elifesciences.org/lax:13410/elife-13410-fig4-v2.tif/0,0,1000,600/500,/0/default.jpg. NCD Risk Factor Collaboration, eLife 2016;5:e13410.
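The `size` segment of these URLs is interpreted relative to the requested region. The Python sketch below computes output dimensions for the size forms of IIIF Image API 2.x (`full`, `w,`, `,h`, `pct:n`, `w,h`, `!w,h`); the function name is hypothetical, and rounding to the nearest pixel is an assumption, since servers may round differently. In the second URL, the region `0,0,1000,600` is 1000×600 pixels, and `500,` scales it to 500 pixels wide.

```python
def output_size(region_w, region_h, size):
    """Output (width, height) for an IIIF Image API 2.x size parameter."""
    if size == "full":
        return region_w, region_h
    if size.startswith("pct:"):
        scale = float(size[4:]) / 100
        return round(region_w * scale), round(region_h * scale)
    best_fit = size.startswith("!")
    w_str, h_str = size.lstrip("!").split(",")
    if w_str and h_str:
        w, h = int(w_str), int(h_str)
        if not best_fit:
            return w, h  # exact size; the aspect ratio may change
        # "!w,h": largest size that fits inside w x h, keeping the aspect ratio.
        scale = min(w / region_w, h / region_h)
        return round(region_w * scale), round(region_h * scale)
    if w_str:  # "w,": fixed width, height follows the aspect ratio
        w = int(w_str)
        return w, round(region_h * w / region_w)
    h = int(h_str)  # ",h": fixed height, width follows the aspect ratio
    return round(region_w * h / region_h), h


print(output_size(1000, 600, "500,"))  # (500, 300)
```

For the first URL, `,1000` fixes the height at 1000 pixels and the width follows the figure's own aspect ratio.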

The Image API provides a dynamic way to serve pixels, and it also opens up the possibility of building more services on top, such as human annotations over image portions, or mashups and correlations of figures from disparate articles. All we persistently store is the full-resolution, lossless image; we let the IIIF pipeline do the hard work of reacting to the user’s display needs.

An earlier version of this post is at https://medium.com/@g.sironi/the-iiif-elife-implementation-d1f940005517.

For the latest in innovation, eLife Labs and new open-source tools, sign up for our technology and innovation newsletter. You can also follow @eLifeInnovation on Twitter.