Efficient recognition of facial expressions does not require motor simulation

Figures

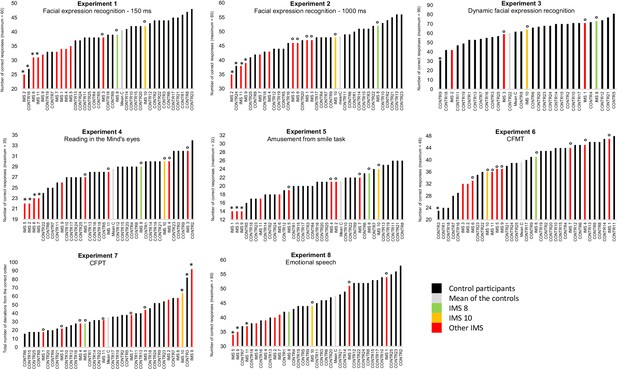

Results of Experiments 1–8 by individual participant.

In black: control participants; in light grey: mean of the controls; in green and yellow: IMS 8 and 10, who performed Experiments 1–5 with normotypical efficiency; in red: the nine other IMS. A small circle (°) indicates IMS participants whose score was ‘normotypical’ (above 0.85 standard deviations below the controls’ mean performance) once any control participant with an abnormally low score (more than 2 SD below the other control participants; indicated by an *) had been discarded. An asterisk (*) also indicates IMS participants with a score more than two standard deviations below the mean of the controls.

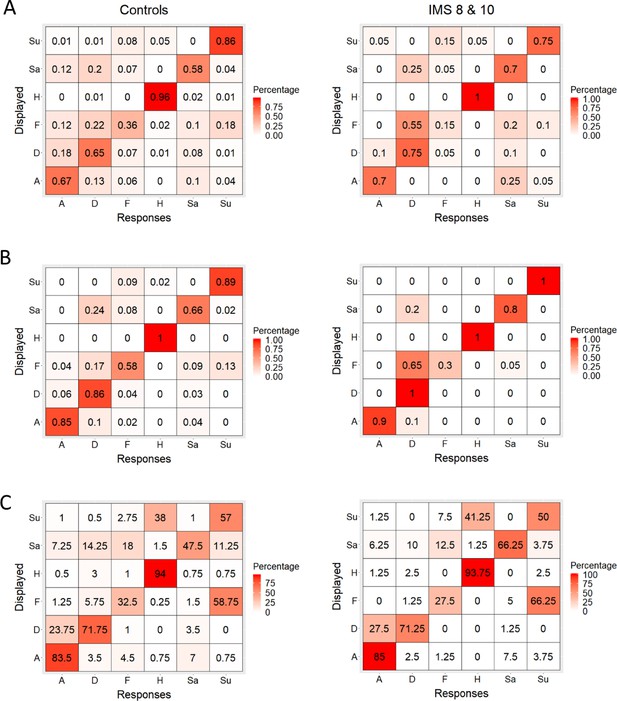

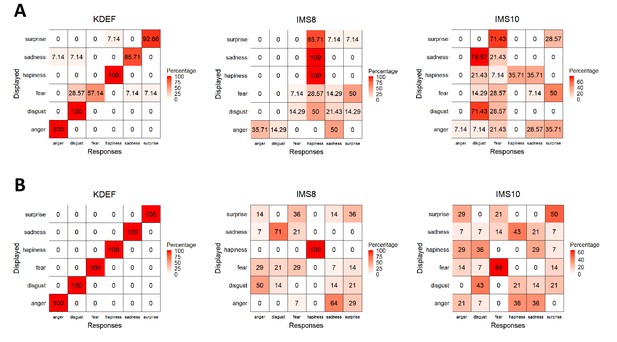

Confusion matrices.

(A, B, C) Distribution of control participants’ (left) and IMS 8 and 10’s (right) percentage of trials in which they chose each of the six response alternatives when faced with the six displayed facial expressions in Experiment 1 (A), 2 (B), and 3 (C).

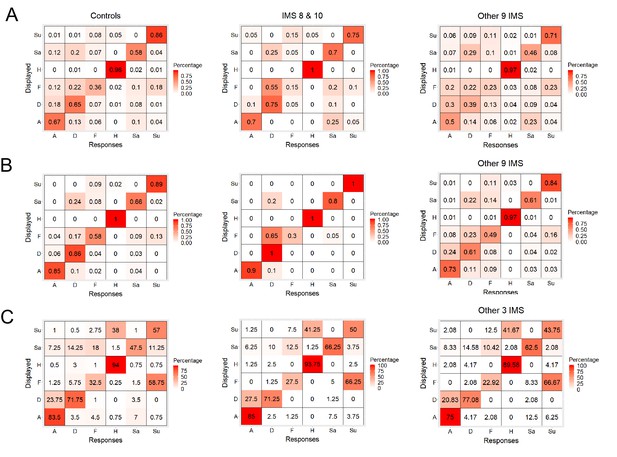

Confusion matrices.

(A, B, C) Distribution of control participants’ (left), IMS 8 and 10’s (middle) and the other IMS’s (right) percentage of trials in which they chose each of the six response alternatives when faced with the six displayed facial expressions in Experiment 1 (A), 2 (B), and 3 (C).

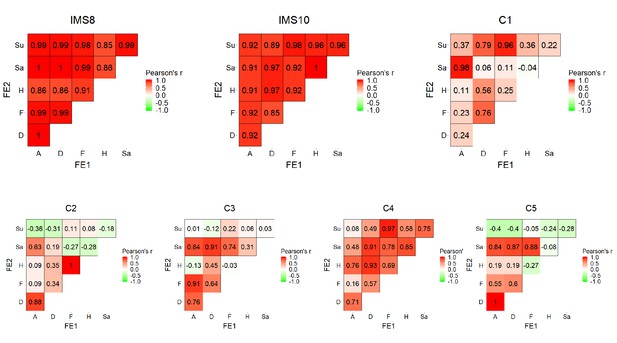

Analysis of participants' action units when imitating facial expressions.

IMS 8, IMS 10 and five control participants were asked to imitate pictures of an actor’s face expressing one of six facial expressions (anger, disgust, fear, happiness, sadness, and surprise). Their imitations were video-recorded and then analyzed offline with OpenFace 2.1.0, an open-source deep learning facial recognition system that automatically detects the presence and intensity of action units (AUs) (Amos et al., 2016; Baltrusaitis et al., 2016). For each participant and facial expression, we first computed the average intensity of 12 facial action units relevant for facial expressions (AU01, 02, 04, 05, 06, 07, 09, 12, 15, 20, 23 and 26). Then, to measure the similarity between the different facial expressions executed by each participant, we correlated the intensities of the 12 action units across the different facial expressions. This yielded an objective measure of how similar the intensity profiles of the relevant action units were when the IMS executed the different facial expressions. The results indicate that the intensities of the action units during the execution of the different facial expressions were highly correlated in the three IMS, and much more correlated than in typical control participants. This supports the claim that although IMS 8 and 10 could execute some subtle facial movements, these movements were largely unspecific.
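The similarity measure described in this caption can be sketched as follows. This is only an illustrative sketch: the caption does not state which correlation coefficient was used, so Pearson's r is an assumption here, as are the input layout (one array of per-frame AU intensities per expression), the function name `expression_similarity`, and the use of the mean pairwise correlation as a one-number summary.

```python
import numpy as np

# The 12 action units and six expressions named in the caption.
AUS = ["AU01", "AU02", "AU04", "AU05", "AU06", "AU07",
       "AU09", "AU12", "AU15", "AU20", "AU23", "AU26"]
EXPRESSIONS = ["anger", "disgust", "fear", "happiness", "sadness", "surprise"]


def expression_similarity(frames_by_expression):
    """Correlate average AU intensity profiles across expressions.

    frames_by_expression: dict mapping each expression name to an array of
    shape (n_frames, 12) holding AU intensities (hypothetical input layout).
    Returns the 6 x 6 correlation matrix and the mean off-diagonal value.
    """
    # Step 1: average intensity of each AU, per expression (one row each).
    profiles = np.vstack([
        np.asarray(frames_by_expression[e], dtype=float).mean(axis=0)
        for e in EXPRESSIONS
    ])
    # Step 2: Pearson correlation between the AU profiles of each pair of
    # expressions (assumption: the source does not name the coefficient).
    corr = np.corrcoef(profiles)
    # Step 3: summarize with the mean of the 15 distinct pairwise values.
    upper = corr[np.triu_indices(len(EXPRESSIONS), k=1)]
    return corr, float(upper.mean())
```

Under this sketch, a participant whose AU profile is the same shape for every expression (largely unspecific movements) yields pairwise correlations close to 1, whereas expression-specific movements yield lower or negative correlations.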

IMS 8 and 10's facial expression execution.

(A) Facial expression naming task. Control participants (six men, nine women, mean age = 26) were presented sequentially with a picture model from the Karolinska Directed Emotional Faces set (KDEF; Actress AF01, front view; Lundqvist et al., 1998) and with video-clips of IMS 8 and 10 (see Figure 2—figure supplement 1B-D) executing the six basic facial expressions (in rows), and were asked to choose the corresponding label (in columns) among six alternatives (anger, disgust, fear, happiness, sadness, and surprise). Control participants accurately categorized the facial expressions of the picture model but erred most of the time when asked to categorize the facial expressions of the IMS. Under the (minimal) assumption that a facial expression is recognized accurately if (1) it is more often correctly than incorrectly labelled and (2) its corresponding label is provided more often for that expression than for the other ones, only the facial expression of anger in IMS 8 (recognized by 36% of the control participants) was recognized accurately by the controls.

(B) Facial expression sorting task. Control participants (five men, nine women) were presented simultaneously with the six facial expressions executed by a model (Actress AF01, front view from the KDEF; Lundqvist et al., 1998), by IMS 8 or by IMS 10, and were asked to associate the six pictures with their corresponding labels (anger, disgust, fear, happiness, sadness, and surprise). Each label could be used only once. Control participants accurately categorized the facial expressions of the picture model but erred most of the time when asked to categorize the facial expressions of the two IMS. Under the assumption that a facial expression is recognized accurately if (1) it is more often correctly than incorrectly labelled and (2) its corresponding label is provided more often for that expression than for the other ones, only the facial expressions of happiness in IMS 8 (recognized by 100% of the control participants) and of disgust, fear and surprise in IMS 10 (recognized by 43%, 64% and 50% of the control participants, respectively) were recognized accurately by the controls.
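The two-part recognition criterion used in both tasks can be expressed on a confusion matrix of response counts. The sketch below rests on one interpretive assumption: since an expression recognized by 36% of the controls counts as accurately recognized, condition (1) is read as "the correct label is chosen more often than any single incorrect label", not as "more than half of the responses are correct". The function name `accurately_recognized` and the matrix layout (rows = displayed expression, columns = chosen label) are likewise illustrative.

```python
import numpy as np


def accurately_recognized(confusion):
    """Apply the two-part criterion to a square confusion matrix.

    confusion[i][j] = number (or percentage) of responses choosing label j
    when expression i was displayed. Returns one boolean per expression.
    """
    m = np.asarray(confusion, dtype=float)
    result = []
    for i in range(m.shape[0]):
        other_labels = np.delete(m[i, :], i)       # incorrect labels for i
        other_expressions = np.delete(m[:, i], i)  # label i given elsewhere
        # (1) correct label beats every incorrect label for this expression
        #     (assumed reading of "more often correctly than incorrectly").
        cond1 = m[i, i] > other_labels.max()
        # (2) label i is given more often to expression i than to any other.
        cond2 = m[i, i] > other_expressions.max()
        result.append(bool(cond1 and cond2))
    return result
```

For instance, in a three-expression toy matrix `[[5, 3, 2], [4, 4, 2], [1, 2, 7]]`, the first and third expressions meet both conditions, while the second fails condition (1) because its correct label ties with an incorrect one.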

Tables

Summary of the IMS participants’ facial movements.

| IMS | Inf. lip | Sup. lip | Nose | Eyebrows | Forehead | R. cheek | L. cheek | Sup. R. eyelid | Inf. R. eyelid | Sup. L. eyelid | Inf. L. eyelid |
|---|---|---|---|---|---|---|---|---|---|---|---|
| IMS1 | None | None | None | None | None | None | None | None | None | None | None |
| IMS2 | Slight | None | None | None | None | Slight | Slight | Slight | Slight | Slight | Slight |
| IMS3 | Slight | None | None | None | None | None | None | Slight | None | Slight | None |
| IMS4 | Slight | None | None | None | None | Slight | Slight | None | None | None | None |
| IMS5 | Slight | Slight | None | None | None | Slight | Slight | Slight | None | Slight | None |
| IMS6 | Mild | None | None | None | None | Mild | Mild | Slight | None | Slight | None |
| IMS7 | None | None | None | None | None | Mild | None | Mild | Slight | None | None |
| IMS8 | Slight | None | None | None | None | Mild | Slight | Slight | Slight | Slight | Slight |
| IMS9 | Mild | None | None | None | None | Mild | Slight | Slight | Slight | None | None |
| IMS10 | None | None | None | None | None | Slight | Slight | None | None | None | None |
| IMS11 | None | None | None | None | None | Slight | None | None | None | None | None |

Information regarding IMS participants’ visual and visuo-perceptual abilities.

| IMS | Vision | Reported best corrected acuity | Strabismus | Eye movements | Mid-level perception* (modified t-test)² |
|---|---|---|---|---|---|
| IMS1 | Hypermetropy, astigmatism | Mild vision loss (7/10) | Slight | H: Absent; V: Reduced | 0.9 |
| IMS2 | Hypermetropy, astigmatism | Normal vision | Slight | H: Absent; V: Reduced | −3.3 |
| IMS3 | Myopia | Normal vision | None | H: Absent; V: Reduced | −0.3 |
| IMS4 | Hypermetropy, astigmatism | Mild vision loss (5/10) | None | H: Absent; V: Typical | −2.9 |
| IMS5 | Hypermetropy, astigmatism | Mild vision loss (8/10) | Slight | H: Very limited; V: Typical | −0.3 |
| IMS6 | Hypermetropy, astigmatism | Normal vision | Slight | H: Typical; V: Typical | 0.1 |
| IMS7 | Hypermetropy, astigmatism | Moderate vision loss of the left eye (2/10) | None | H: Typical; V: Typical | 0.5 |
| IMS8 | Normal | Normal vision | None | H: Typical; V: Typical | 0.5 |
| IMS9 | Normal | Normal vision | Slight | H: Absent; V: Absent | −3.3 |
| IMS10 | Myopia | Mild vision loss (8/10) | None | H: Absent; V: Typical | −0.3 |
| IMS11 | Myopia, astigmatism | Mild vision loss: 6/10 right eye; 5/10 left eye | Slight | H: Reduced; V: Typical | −4.2 |


* Leuven Perceptual Organization Screening Test, L-POST (Torfs et al., 2014). ² Crawford and Howell (1998).
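For readers unfamiliar with the modified t-test of Crawford and Howell (1998) cited in the table, the statistic compares a single case with a small control sample by treating the control mean and standard deviation as sample estimates. A minimal sketch of the standard formula follows; the function name and the example scores are illustrative and are not taken from the study's data.

```python
import math
import statistics


def crawford_howell_t(case_score, control_scores):
    """Modified t-test for comparing one case with a control sample.

    t = (case - mean_controls) / (sd_controls * sqrt((n + 1) / n)),
    evaluated against a t distribution with n - 1 degrees of freedom.
    Returns (t, degrees_of_freedom).
    """
    n = len(control_scores)
    if n < 2:
        raise ValueError("at least two control scores are required")
    mean_c = statistics.mean(control_scores)
    sd_c = statistics.stdev(control_scores)  # sample SD (n - 1 denominator)
    t = (case_score - mean_c) / (sd_c * math.sqrt((n + 1) / n))
    return t, n - 1


# Illustrative scores only (not from the study).
t_value, df = crawford_howell_t(8, [10, 12, 14])
```

A negative value indicates that the case scored below the control mean; the resulting t can be compared with a t distribution on `df` degrees of freedom to obtain a p-value.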

Additional files


Supplementary file 1

Information regarding IMS participants’ demographic, neurological, psychiatric, medical and surgical/therapeutic history.

- https://cdn.elifesciences.org/articles/54687/elife-54687-supp1-v2.docx


Supplementary file 2

Facial action units corresponding to the facial expression of the six basic emotions and their presence/absence in the repertoire of the IMS 8 and 10.

- https://cdn.elifesciences.org/articles/54687/elife-54687-supp2-v2.docx


Transparent reporting form

- https://cdn.elifesciences.org/articles/54687/elife-54687-transrepform-v2.docx