SpikeInterface, a unified framework for spike sorting

Figures

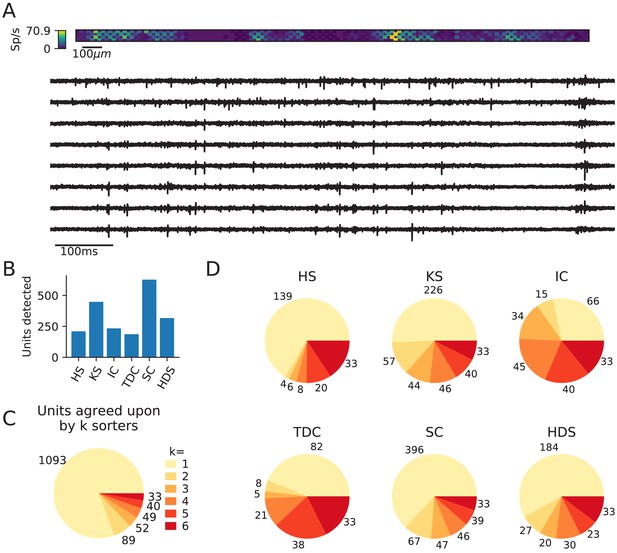

Comparison of spike sorters on a real Neuropixels dataset.

(A) A visualization of the activity on the Neuropixels array (top, color indicates spike rate estimated on each channel evaluated with threshold detection) and of traces from the Neuropixels recording (below). (B) The number of detected units for each of the six spike sorters (HS = HerdingSpikes2, KS = Kilosort2, IC = IronClust, TDC = Tridesclous, SC = SpyKING Circus, HDS = HDSort). (C) The total number of units for which k sorters agree (unit agreement is defined as 50% spike match). (D) The number of units (per sorter) for which k sorters agree; most sorters find many units that other sorters do not.

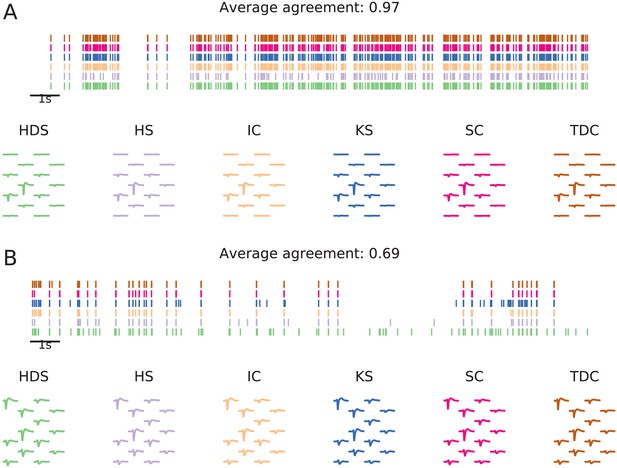

Examples of matched units in a Neuropixels recording.

The illustration shows units from six spike sorters that were matched by spike train comparison. Panel (A) shows a unit with high agreement score (0.97), and panel (B) a lower agreement score (0.69). In both panels, the top plot shows the spike trains (the first 20 s of the recording) found by each sorter, and below unit templates (estimated from waveforms of 100 spikes randomly sampled from each unit) are shown.

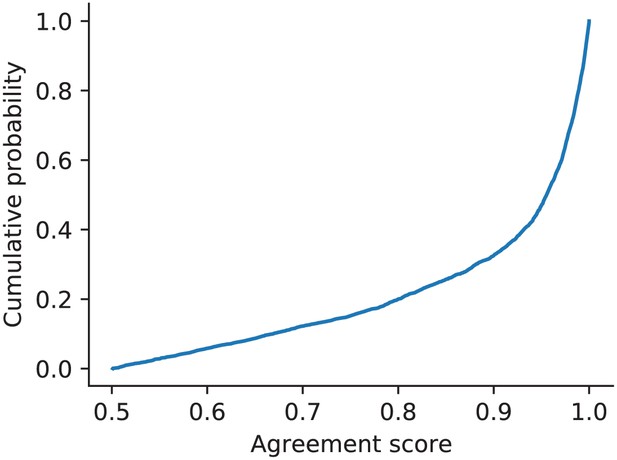

Cumulative histogram of agreement scores (above threshold of .5 that defines a match) for the ensemble sorting of the simulated ground-truth dataset.

This analysis was performed with the six chosen sorters and highlights how over 80% of the matched units had an agreement score greater than 0.8.

Comparison of spike sorters on a Neuropixels recording.

This dataset contains spontaneous neural activity from the rat cortex (motor and somatosensory areas) by Marques-Smith et al., 2018a; Marques-Smith et al., 2018b (dataset spe-c1). The dataset is also available at https://gui.dandiarchive.org/#/dandiset/000034/draft. (A) A visualization of the activity on the Neuropixels array (top, color indicates spike rate estimated on each channel evaluated with threshold detection) and of traces from the Neuropixels recording (below). (B) The number of detected units for each of the six spike sorters (HS = HerdingSpikes2, KS = Kilosort2, IC = IronClust, TDC = Tridesclous, SC = SpyKING Circus, HDS = HDSort). (C) The total number of units for which k sorters agree (unit agreement is defined as 50% spike match). (D) The number of units (per sorter) for which k sorters agree; Most sorters find many units that other sorters do not. The analysis notebook for this analysis can be found at https://spikeinterface.github.io/blog/ensemble-sorting-of-a-neuropixels-recording-2/.

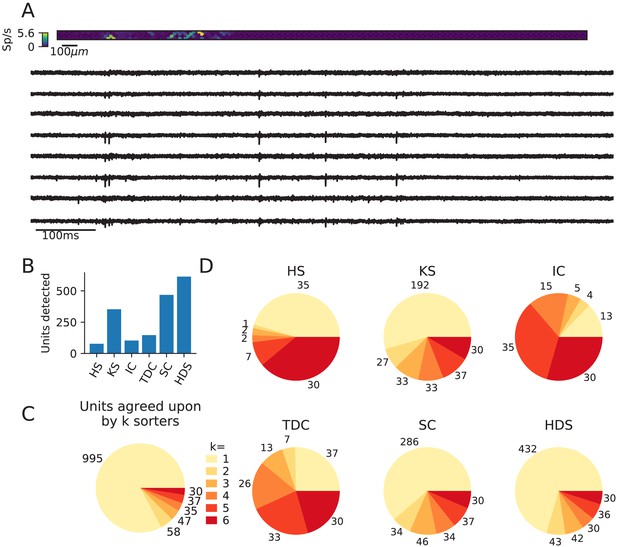

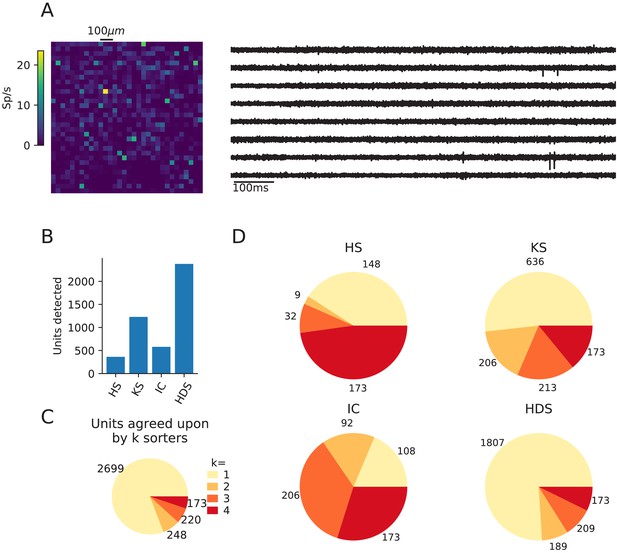

Comparison of spike sorters on a Biocam recording from a mouse retina.

This retina recording (Hilgen et al., 2017) has 1’024 channels in a square configuration, and a sampling frequency of 23199 Hz. The dataset can be found at https://gui.dandiarchive.org/#/dandiset/000034/draft. Only four spike sorters were capable of processing this data set (HS = HerdingSpikes2, KS = Kilosort2, IC = IronClust, HDS = HDSort). (A) A visualization of the activity on the Biocam array (top, color indicates spike rate estimated on each channel evaluated with threshold detection) and of traces from the recording (below). (B) The number of detected units for each of the four spike sorters. (C) The total number of units for which k sorters agree (unit agreement is defined as 50% spike match). (D) The number of units (per sorter) for which k sorters agree; most sorters find many units that other sorters do not. The analysis notebook for this analysis can be found at https://spikeinterface.github.io/blog/ensemble-sorting-of-a-3brain-biocam-recording-from-a-retina/.

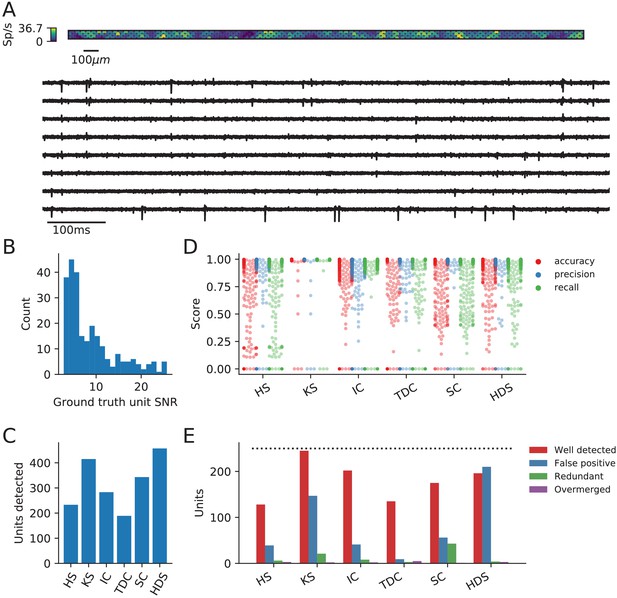

Evaluation of spike sorters on a simulated Neuropixels dataset.

(A) A visualization of the activity on and traces from the simulated Neuropixels recording. (B) The signal-to-noise ratios (SNR) for the ground-truth units. (C) The number of detected units for each of the six spike sorters (HS = HerdingSpikes2, KS = Kilosort2, IC = IronClust, TDC = Tridesclous, SC = SpyKING Circus, HDS = HDSort). (D) The accuracy, precision, and recall of each sorter on the ground-truth units. (E) A breakdown of the detected units for each sorter (precise definitions of each unit type can be found in the SpikeComparison Section of the Methods). The horizontal dashed line indicates the number of ground-truth units (250).

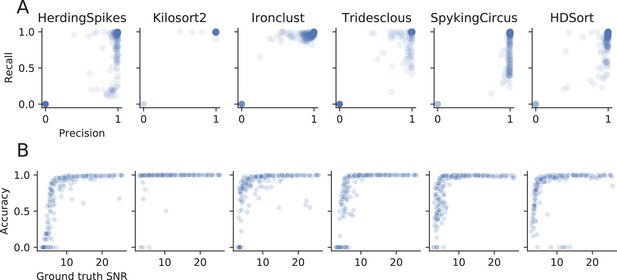

Evaluation of spike sorters performance metrics.

(A) Precision versus recall for the ground-truth comparison the simulated dataset. Some sorters seem to favor precision (HerdingSpikes, SpyKING Circus, HDSort), others instead have higher recall (Ironclust) or score well on both measures (Kilosort2). Tridesclous does not show a bias towards precision or recall. (B) Accuracy versus SNR. All the spike sorters (except Kilosort2) show a strong dependence of performance with respect to the SNR of the ground-truth units. Kilosort2, remarkably, is capable of achieving a high accuracy also for low-SNR units.

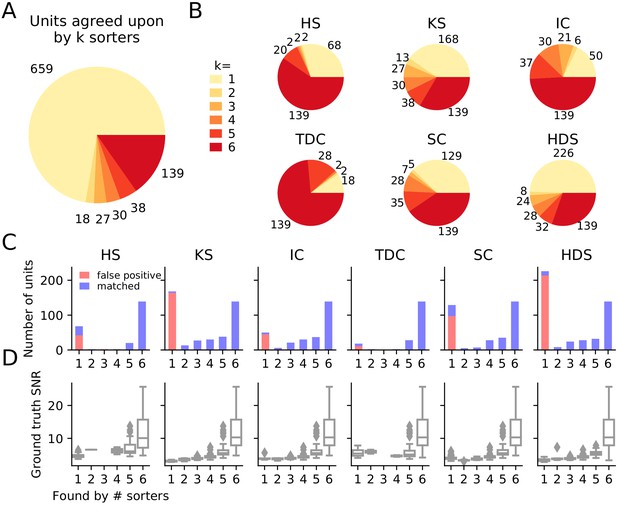

Comparison of spike sorters on a simulated Neuropixels dataset.

(A) The total number of units for which k sorters agree (unit agreement is defined as 50% spike match). (B) The number of units (per sorter) for which k sorters agree; Most sorters find many units that other sorters do not. (HS = HerdingSpikes2, KS = Kilosort2, IC = IronClust, TDC = Tridesclous, SC = SpyKING Circus, HDS = HDSort) (C) Number of matched ground-truth units (blue) and false positive units (red) found by each sorter on which k sorters agree upon. Most of the false positive units are only found by a single sorter. Number of false positive units found by sorters: HS = 4, KS = 4, IC = 4, SC = 2, TDC = 1, HDS = 2. (D) Signal-to-noise ratio (SNR) of ground-truth unit with respect to the number of k sorters agreement. Results are split by sorter.

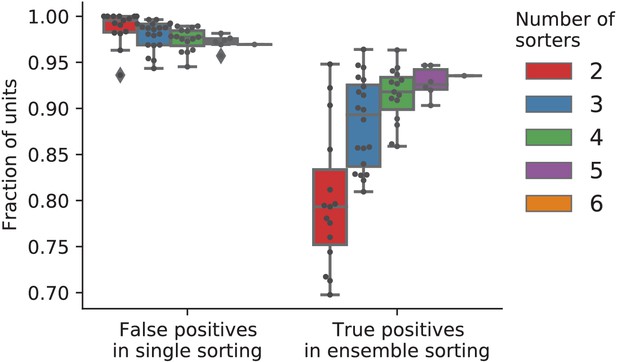

The fractions of predicted false and true positive units from ensembles using different numbers of sorters.

All possible subsets of two to five of the six sorters were tested by removing corresponding units from the full sorting comparison. Each dot corresponds to one unique combination of sorters. This analysis shows that false positive units are well-identified using pairs of sorters (almost all false positive units are only found by one sorter), indicating that the sorters are biased in different ways. However, the fraction of true positives in the ensemble (at least two sorters agree) can be significantly lower when only pairs of sorters are used. This is explained by the fact that, for this dataset, a fraction of true positive units are only found by one sorter (as expected since the quality of detection and isolation of the units varies among sorters). In contrast, using four or more sorters reliably identifies most true positive units. For two sorters, the most reliable identification of true positives was achieved by combining two of Kilosort2, Ironclust, and HDSort.

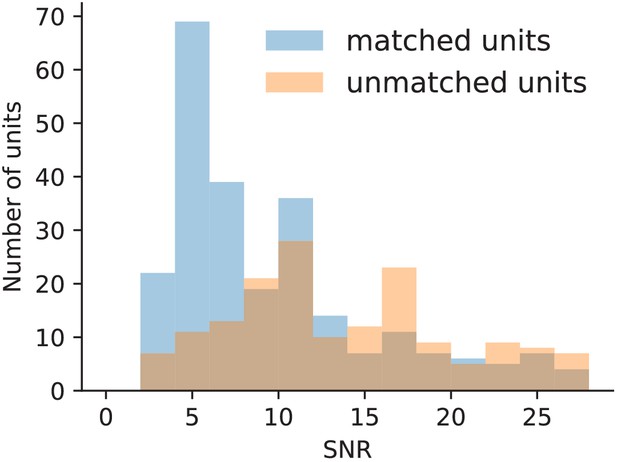

The SNR of all units found by Kilosort2 in the ground-truth data separated into those with and without matches in the ground-truth spike trains.

Many detected false positive units have an SNR above the mode of the ground-truth SNR, indicating that SNR is not a good measure to separate false and true positives in this case.

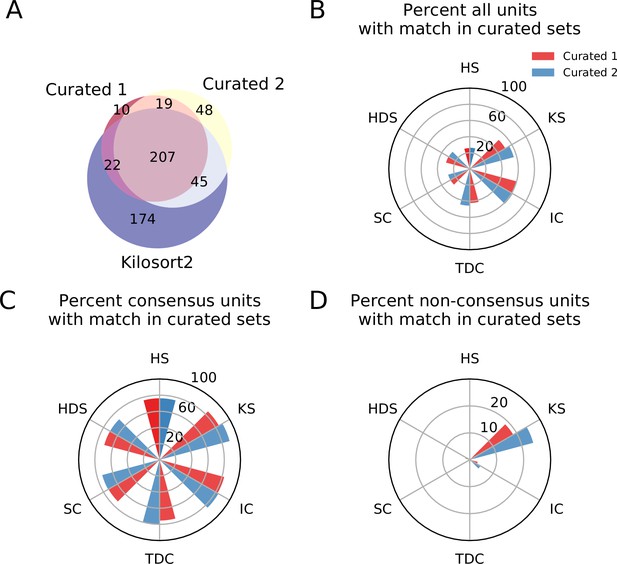

Comparison between consensus and manually curated outputs.

(A) Venn diagram showing the agreement between Curator 1 and 2. 174 units are discarded by both curators from the Kilsort2 output. (B) Percent of matched units between the output of each sorter and C1 (red) and C2 (blue). Ironclust has the highest match with both curated datasets. (C) Similar to C, but using the consensus units (units agreed upon by at least two sorters - ). The percent of matching with curated datasets is now above 70% for all sorters, with Kilosort2 having the highest match (KSc ∩C1 = 84.55%, KSc∩C2 = 89.55%), slightly higher than Ironclust (ICc ∩ C1 = 82.63%, ICc ∩ C2 = 83.83%). (D) Percent of non-consensus units () matched to curated datasets. The only significant overlap is between Curator one and Kilosort2, with a percent around 18% (KSnc ∩ C1 = 18.58%, KSnc ∩ C2 = 24.34%).

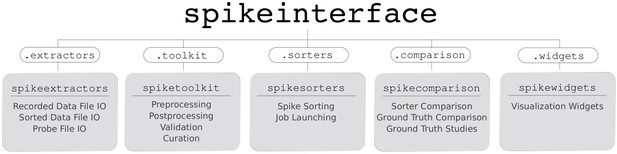

Overview of SpikeInterface’s Python packages, their different functionalities, and how they can be accessed by our meta-package, spikeinterface.

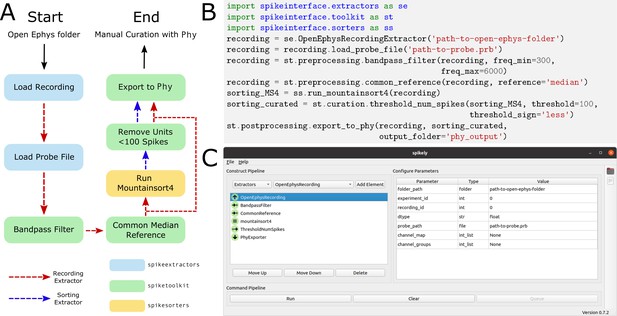

Sample spike sorting pipeline using SpikeInterface.

(A) A diagram of a sample spike sorting pipeline. Each processing step is colored to represent the SpikeInterface package in which it is implemented and the dashed, colored arrows demonstrate how the Extractors are used in each processing step. (B) How to use the Python API to build the pipeline shown in (A). (C) How to use the GUI to build the pipeline shown in (A).

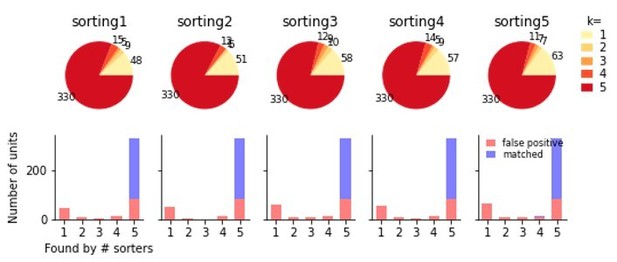

Comparison of five individual runs of Kilosort2 on the simulated Neuropixels recording.

The top shows the proportions of units from each sorting found in k other sortings. Below these units are split according to false and true positive units after comparison to the ground-truth data. While a sizable fraction of false positive units are unique to each run of the sorter, many are identical in all sortings, indicating that variability in multiple sorter outputs cannot be used to reliably separate false and true positive units.

Tables

Currently available file formats in SpikeInterface and if they are writable.

*The Phy writing method is implemented in spiketoolkit as the export_to_phy function (all other writing methods are implemented in spikeextractors).

Currently available quality metrics in Spikeinterface.

Re-implemented by researchers at Allen Institute for Brain and by the SpikeInterface team.

| Metric | Description | Reference |

|---|---|---|

| Signal-to-noise ratio | The signal-to-noise ratio computed on unit templates. | N/A |

| Firing rate | The average firing rate over a time period. | N/A |

| Presence ratio | The fraction of a time period in which spikes are present. | N/A |

| Amplitude Cutoff | An estimate of the miss rate based on an amplitude histogram. | N/A |

| Maximum drift | The maximum change in spike position (computed as the center of mass of the energy of the first principal component score) throughout a recording. | N/A |

| Cumulative drift | The cumulative change in spike position throughout a recording. | N/A |

| ISI violations | The rate of inter-spike-interval (ISI) refractory period violations. | Hill et al., 2011 |

| Isolation Distance | Radius of the smallest ellipsoid that contains all the spikes from a cluster and an equal number of spikes from other clusters (centered on the specified cluster). | Harris et al., 2001 |

| L-ratio | Assuming that the distribution of spike distances from a cluster center is multivariate normal, L-ratio is the average value of the tail distribution for non-member spikes of that cluster. | Schmitzer-Torbert and Redish, 2004 |

| D-Prime | The classification accuracy between two units based on linear discriminant analysis (LDA) | Hill et al., 2011 |

| Nearest-neighbors | A non-parametric estimate of unit contamination using nearest-neighbor classification. | Chung et al., 2017 |

| Silhouette score | The ratio between cohesiveness of a cluster (distance between member spikes) and its separation from other clusters (distance to non-member spikes). | Rousseeuw, 1987 |

Currently available spike sorters in Spikeinterface.

TM = Template Matching; SL = Spike Localization; DB = Density-based clustering.

| Name | Method | Notes | Reference |

|---|---|---|---|

| Klusta | DB | Python-based, semi-automatic, designed for low channel count, dense probes. | Rossant et al., 2016 |

| Mountainsort4 | DB | Python-based, fully automatic, unique clustering method (isosplit), designed for low channel count, dense probes and tetrodes. | Chung et al., 2017 |

| Kilosort | TM | MATLAB-based, GPU support, semi-automated final curation. | Pachitariu et al., 2016 |

| Kilosort2 | TM | MATLAB-based, GPU support, semi-automated final curation, designed to correct for drift. | Pachitariu et al., 2018 |

| SpyKING Circus | TM | Python-based, fast and scalable with CPUs, designed to correct for drift. | Yger et al., 2018 |

| HerdingSpikes2 | DB + SL | Python-based, fast and scalable with CPUs, scales up to thousands of channels. | Hilgen et al., 2017 |

| Tridesclous | TM | Python-based, graphical user interface, GPU support, multi-platform | Garcia and Pouzat, 2015 |

| IronClust | DB + SL | MATLAB-based, GPU support, designed to correct for drift. | Jun et al., 2020 |

| Wave clus | TM | Matlab-based, fully automatic, designed for single electrodes and tetrodes, multi-platform. | Chaure et al., 2018 |

| HDsort | TM | Matlab-based, fast and scalable, designed for large-scale, dense arrays. | Diggelmann et al., 2018 |