Efficient coding in biophysically realistic excitatory-inhibitory spiking networks

eLife Assessment

This study offers a valuable treatment of how the population of excitatory and inhibitory neurons integrates principles of energy efficiency in their coding strategies. The convincing analysis provides a comprehensive characterisation of the model, highlighting the structured connectivity between excitatory and inhibitory neurons. The role of the many free parameters is discussed and studied in depth.

https://doi.org/10.7554/eLife.99545.4.sa0

Valuable: Findings that have theoretical or practical implications for a subfield

Convincing: Appropriate and validated methodology in line with current state-of-the-art

During the peer-review process the editor and reviewers write an eLife Assessment that summarises the significance of the findings reported in the article (on a scale ranging from landmark to useful) and the strength of the evidence (on a scale ranging from exceptional to inadequate).

Abstract

The principle of efficient coding posits that sensory cortical networks are designed to encode maximal sensory information with minimal metabolic cost. Despite the major influence of efficient coding in neuroscience, it has remained unclear whether fundamental empirical properties of neural network activity can be explained solely based on this normative principle. Here, we derive the structural, coding, and biophysical properties of excitatory-inhibitory recurrent networks of spiking neurons that emerge directly from imposing that the network minimizes an instantaneous loss function and a time-averaged performance measure enacting efficient coding. We assumed that the network encodes a number of independent stimulus features varying with a time scale equal to the membrane time constant of excitatory and inhibitory neurons. The optimal network has biologically plausible biophysical features, including realistic integrate-and-fire spiking dynamics, spike-triggered adaptation, and a non-specific excitatory external input. The excitatory-inhibitory recurrent connectivity between neurons with similar stimulus tuning implements feature-specific competition, similar to that recently found in visual cortex. Networks with unstructured connectivity cannot reach comparable levels of coding efficiency. The optimal ratio of excitatory vs inhibitory neurons and the ratio of mean inhibitory-to-inhibitory vs excitatory-to-inhibitory connectivity are comparable to those of cortical sensory networks. The efficient network solution exhibits an instantaneous balance between excitation and inhibition. The network can perform efficient coding even when external stimuli vary over multiple time scales. Together, these results suggest that key properties of biological neural networks may be accounted for by efficient coding.

eLife digest

The networks of nerve cells that make up the brain are complex and versatile. They enable the information processing necessary for both simple and complex thought processes. However, the organization of nerve networks in the brain is a topic of great debate among scientists.

One idea is that nerve cell networks in the brain are organized to be as efficient as possible at transmitting information. Scientists supporting this idea say it allows the brain to send accurate information using as little energy as possible.

Scientists have developed mathematical models to explain how this efficient coding model of brain activity works. But how accurately these mathematical models capture complex brain tasks is up for debate. Some question how well these models explain how the brain makes sense of sensory information like sights or smells. Are they able to explain nerve cell organization or how nerve cells react to new information or experiences? Scientists also question how well the mathematical models capture biological and physical constraints on nerve cell activity.

To answer these questions, Koren et al. used mathematical models to systematically test whether the efficient coding model was consistent with what happens in realistic circumstances. The experiments show that mathematical models of efficient coding are consistent with actual brain cell behavior, organization and interconnections. The models also reflected the cells’ biological and physical constraints.

The experiments support the idea that brain networks are designed for efficiency. But the models used in the study are too simple to assess the full range and complexity of information processing in the brain. More studies are needed to test more complex mathematical models that better recreate more advanced brain activities. Further study of the biological and physical constraints on nerve cells in the brain may shed more light on how they behave in brain networks.

Introduction

Information about the sensory world is represented in the brain through the dynamics of neural population activity (Abbott et al., 2016; Thalmeier et al., 2016). One prominent theory about the principles that may guide the design of neural computations for sensory function is efficient coding (Barlow, 1961; Olshausen and Field, 1996; Deneve and Chalk, 2016a). This theory posits that neural computations are optimized to maximize the information that neural systems encode about sensory stimuli while at the same time limiting the metabolic cost of neural activity. Efficient coding has been highly influential as a normative theory of how networks are organized and designed to optimally process natural sensory stimuli in visual (Atick, 1992; Olshausen and Field, 1997; Simoncelli and Olshausen, 2001; Vinje and Gallant, 2000; Olshausen and Field, 2004; Li, 2014), auditory (Lewicki, 2002), and olfactory sensory pathways (Koulakov and Rinberg, 2011).

The first normative neural network models (Olshausen and Field, 1996; Olshausen and Field, 2004) designed with efficient coding principles had at least two major levels of abstraction. First, neural dynamics was greatly simplified, ignoring the spiking nature of neural activity. In contrast, biological networks often encode information through millisecond-precise spike timing (Bialek et al., 1991; Bialek and Rieke, 1992; Panzeri et al., 2001; Nemenman et al., 2008; Kayser et al., 2010; Ince et al., 2013; Panzeri et al., 2010). Second, these earlier contributions mostly considered encoding of static sensory stimuli, whereas the sensory environment changes continuously at multiple timescales and the dynamics of neural networks encodes these temporal variations of the environment (Fairhall et al., 2001; Wark et al., 2009; Mazzoni et al., 2008; Młynarski and Hermundstad, 2021).

Recent years have witnessed a considerable effort and success in laying down the mathematical tools and methodology to understand how to formulate efficient coding theories of neural networks with more biological realism (Koren et al., 2023). This effort has established the incorporation of recurrent connectivity (Lochmann et al., 2012; Zhu and Rozell, 2013), of spiking neurons, and of time-varying stimulus inputs (Boerlin et al., 2013; Bourdoukan et al., 2012; Moreno-Bote and Drugowitsch, 2015; Chalk et al., 2016; Deneve and Machens, 2016b; Gutierrez and Denève, 2019; Kadmon et al., 2020; Rullán Buxó and Pillow, 2020). In these models, the efficient coding principle has been implemented by designing networks whose activity maximizes the encoding accuracy, by minimizing the error between a desired representation and a linear readout of network’s activity, subject to a constraint on the metabolic cost of processing. This double objective is captured by a loss function that trades off encoding accuracy and metabolic cost. The minimization of the loss function is performed through a greedy approach, by assuming that a neuron will emit a spike only if this will decrease the loss. This, in turn, yields a set of leaky integrate-and-fire (LIF) neural equations (Boerlin et al., 2013; Bourdoukan et al., 2012), which can also include biologically plausible non-instantaneous synaptic delays (Koren and Denève, 2017; Rullán Buxó and Pillow, 2020; Kadmon et al., 2020). Although most initial implementations did not respect Dale’s law, further studies analytically derived efficient networks of excitatory (E) and inhibitory (I) spiking neurons that respect Dale’s law (Boerlin et al., 2013; Barrett et al., 2016; Chalk et al., 2016; Koren and Panzeri, 2022) and included spike-triggered adaptation (Koren and Panzeri, 2022). 
These networks take the form of generalized leaky integrate-and-fire (gLIF) model neurons, which are realistic models of neuronal activity (Brette and Gerstner, 2005; Mensi et al., 2012; Gerstner et al., 2014) and are capable of accurately predicting real neural spike times in vivo (Jolivet et al., 2008). Efficient spiking models thus have the potential to provide a normative theory of neural coding through spiking dynamics of E-I circuits (Brendel et al., 2020; Koren and Panzeri, 2022; Podlaski and Machens, 2024) with high biological plausibility.

However, despite the major progress described above, we still lack a thorough characterization of which structural, coding, biophysical and dynamical properties of excitatory-inhibitory recurrent spiking neural networks directly relate to efficient coding. Previous studies only rarely made predictions that could be quantitatively compared against experimentally measurable biological properties. As a consequence, we still do not know which, if any, fundamental properties of cortical networks emerge directly from efficient coding.

To address the above questions, we systematically analyze our biologically plausible efficient coding model of E and I neurons that respects Dale’s law (Koren and Panzeri, 2022). We make concrete predictions about experimentally measurable structural, coding and dynamical features of neurons that arise from efficient coding. We systematically investigate how experimentally measurable emergent dynamical properties, including firing rates, trial-to-trial spiking variability of single neurons and E-I balance (Vogels et al., 2011), relate to network optimality. We further analyze how the organization of the connectivity arising by imposing efficient coding relates to the anatomical and effective connectivity recently reported in visual cortex, which suggests competition between excitatory neurons with similar stimulus tuning. We find that several key and robustly found empirical properties of cortical circuits match those of our efficient coding network. This lends support to the notion that efficient coding may be a design principle that has shaped the evolution of cortical circuits and that may be used to conceptually understand and interpret them.

Results

Assumptions and emergent structural properties of the efficient E-I network derived from first principles

We study the properties of a spiking neural network in which the dynamics and structure of the network are analytically derived starting from first principles of efficient coding of sensory stimuli. The model relies on a number of assumptions, described next.

The network responds to time-varying features of a sensory stimulus, $\boldsymbol{s}(t) = [s_1(t), \dots, s_M(t)]^\top$ (e.g. for a visual stimulus, contrast, orientation, etc.), received as inputs from an earlier sensory area. We model each feature $s_k(t)$ as an independent Ornstein–Uhlenbeck (OU) process (see Materials and methods). The network’s objective is to compute a leaky integration of sensory features; the target representation of the network, $\boldsymbol{x}(t)$, is defined as

$$\frac{d\boldsymbol{x}(t)}{dt} = -\frac{1}{\tau}\,\boldsymbol{x}(t) + \boldsymbol{s}(t), \tag{1}$$

with $\tau$ a characteristic integration time-scale (Figure 1A(i)). We assumed leaky integration of sensory features for consistency with previous theoretical models (Barrett et al., 2016; Chalk et al., 2016; Gutierrez and Denève, 2019). This assumption stems from the finding that, in many cases, integration of sensory evidence by neurons is well described by an exponential kernel (Scott et al., 2017; Danskin et al., 2023). Additionally, a leaky integration of neural activity with an exponential kernel, implemented in models of neural activity readout, often explains perceptual discrimination results well (Gold and Shadlen, 2001; Usher and McClelland, 2001; Chong et al., 2020). This suggests that the assumption of leaky integration of sensory evidence, although possibly simplistic, captures relevant aspects of neural computations.
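As an illustration of the stimulus model described above, the following minimal sketch generates independent OU features and their leaky integration. It assumes the leaky integrator takes the standard form dx/dt = -x/tau + s, uses simple forward-Euler steps, and picks a step size, OU noise amplitude and OU time constant that are placeholders rather than values from the paper; the function names are hypothetical.

```python
import math
import random


def simulate_ou(n_steps, dt, tau_s, sigma_s, rng):
    """Euler-Maruyama simulation of a zero-mean Ornstein-Uhlenbeck process."""
    s = [0.0] * n_steps
    for t in range(1, n_steps):
        noise = sigma_s * math.sqrt(dt) * rng.gauss(0.0, 1.0)
        s[t] = s[t - 1] - dt * s[t - 1] / tau_s + noise
    return s


def leaky_integrate(s, dt, tau):
    """Forward-Euler solution of dx/dt = -x / tau + s(t), starting from x = 0."""
    x = [0.0] * len(s)
    for t in range(1, len(s)):
        x[t] = x[t - 1] + dt * (-x[t - 1] / tau + s[t - 1])
    return x


rng = random.Random(0)
dt = 1e-4       # integration step in seconds (assumed)
tau = 10e-3     # integration time constant in seconds (assumed 10 ms)
n_features = 3  # number of stimulus features (3 in the default parameters)

# One independent OU process per feature, then the target signal for each.
features = [simulate_ou(10_000, dt, tau_s=tau, sigma_s=2.0, rng=rng)
            for _ in range(n_features)]
targets = [leaky_integrate(s, dt, tau) for s in features]
```

For a constant input the integrator settles at tau times the input, which gives a quick sanity check of the implementation.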

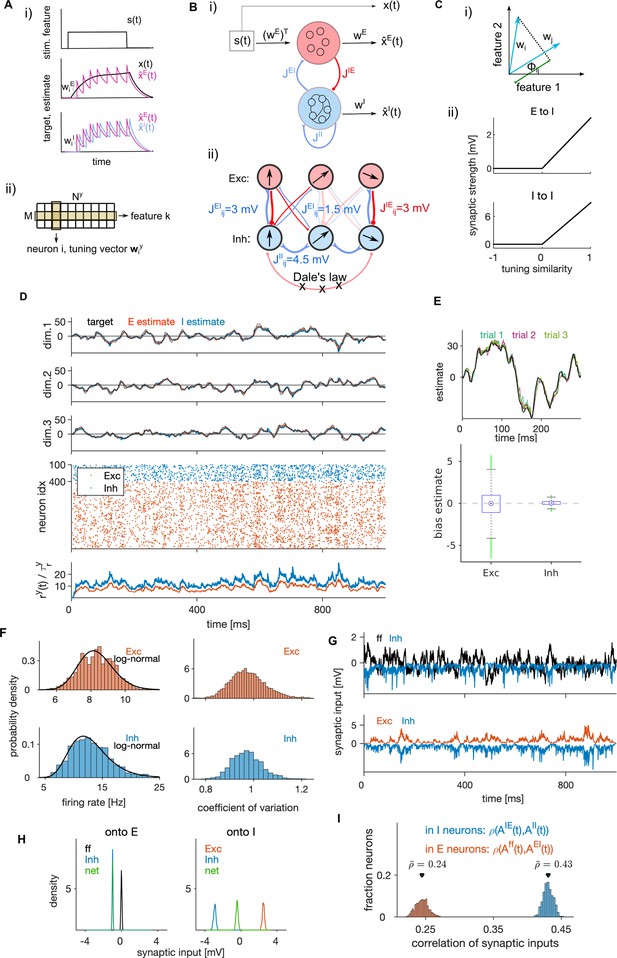

Figure 1. Structural and dynamical properties of the efficient E-I spiking network.

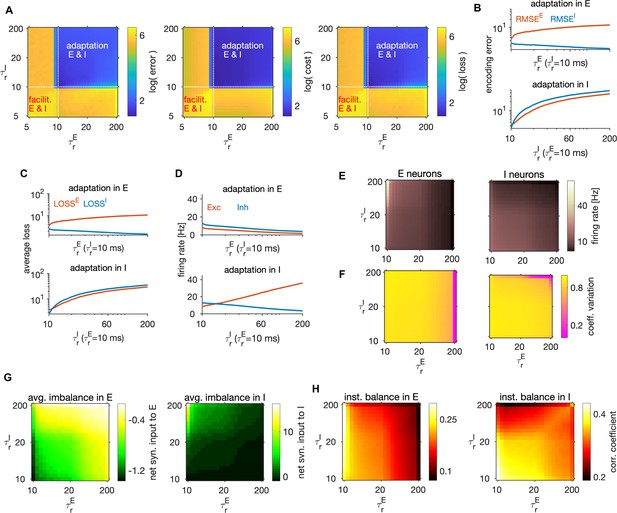

(A, i) Encoding of a target signal representing the evolution of a stimulus feature (top) with one E (middle) and one I spiking neuron (bottom). The target signal integrates the input signal . The readout of the E neuron tracks the target signal and the readout of the I neuron tracks the readout of the E neuron. Neurons spike to bring the readout of their activity closer to their respective target. Each spike causes a jump of the readout, with the sign and the amplitude of the jump being determined by neuron’s tuning parameters. (ii) Schematic of the matrix of tuning parameters. Every neuron is selective to all stimulus features (columns of the matrix), and all neurons participate in encoding of every feature (rows). (B, i) Schematic of the network with E (red) and I (blue) cell type. E neurons are driven by the stimulus features while I neurons are driven by the activity of E neurons. E and I neurons are connected through recurrent connectivity matrices. (ii) Schematic of E (red) and I (blue) synaptic interactions. Arrows represent the direction of the tuning vector of each neuron. Only neurons with similar tuning are connected and the connection strength is proportional to the tuning similarity. (C, i) Schematic of similarity of tuning vectors (tuning similarity) in a 2-dimensional space of stimulus features. (ii) Synaptic strength as a function of tuning similarity. (D) Coding and dynamics in a simulation trial. Top three rows show the signal (black), the E estimate (red) and the I estimate (blue) for each of the three stimulus features. Below are the spike trains. In the bottom row, we show the average instantaneous firing rate (in Hz). (E) Top: Example of the target signal (black) and the E estimate in three simulation trials (colors) for one stimulus feature. Bottom: Distribution (across time) of the time-dependent bias of estimates in E and I cell type. (F) Left: Distribution of time-averaged firing rates in E (top) and I neurons (bottom). 
Black traces are fits with log-normal distribution. Right: Distribution of coefficients of variation of interspike intervals for E and I neurons. (G) Distribution (across neurons) of time-averaged synaptic inputs to E (left) and I neurons (right). In E neurons, the mean of distributions of inhibitory and of net synaptic inputs are very close. (H) Sum of synaptic inputs over time in a single E (top) and I neuron (bottom) in a simulation trial. (I) Distribution (across neurons) of Pearson’s correlation coefficients measuring the correlation of synaptic inputs and (as defined in Materials and methods, Equation 43) in single E (red) and I (blue) neurons. All statistical results (E–F, H–I) were computed on 10 simulation trials of 10 s duration. For model parameters, see Table 1.

The network is composed of two neural populations of excitatory (E) and inhibitory (I) neurons, defined by their postsynaptic action, which respects Dale’s law. For each population, $y \in \{E, I\}$, we define a population readout of each feature, $\hat{\boldsymbol{x}}_y(t)$, as a filtered weighted sum of the spiking activity of neurons in the population,

$$\frac{d\hat{\boldsymbol{x}}_y(t)}{dt} = -\frac{1}{\tau}\,\hat{\boldsymbol{x}}_y(t) + \sum_{i=1}^{N^y} \boldsymbol{w}_i^y f_i^y(t), \tag{2}$$

where $\tau$ is the time constant of the population readout, $f_i^y(t)$ is the spike train of neuron $i$ of type $y$ and $\boldsymbol{w}_i^y$ is the vector of decoding weights of the neuron for features $k = 1, \dots, M$ (Figure 1A(ii)). We assume that every neuron encodes multiple ($M > 1$) stimulus features and that the encoding of every stimulus is distributed among neurons. As a result of the optimization, the decoding weights of the neurons are equivalent to the neuron’s stimulus tuning parameters (see Materials and methods and Brendel et al., 2020). We sampled tuning parameters uniformly from an $M$-dimensional hypersphere with unit radius, giving tuning vectors with unit length to all neurons (see Materials and methods). To control the amount of inhibition in the network, we then multiplied the tuning vectors of I neurons by a common factor, applied homogeneously across all I neurons. Normalization of decoding vectors preserves the heterogeneity of decoding weights across neurons, which may benefit coding efficiency (Zeldenrust et al., 2021).
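The sampling of tuning vectors described above can be sketched as follows. Normalizing a vector of independent Gaussian draws is a standard way to sample uniformly from the surface of a unit hypersphere; whether the authors used this method is not stated here, and the value of the inhibitory scaling factor (`i_scale`) is a hypothetical placeholder.

```python
import math
import random


def sample_tuning_vectors(n_neurons, n_features, scale, rng):
    """Sample vectors uniformly on a hypersphere of radius `scale`.

    Each vector is a normalized draw of independent standard Gaussians,
    which is uniformly distributed on the unit sphere, then rescaled.
    """
    vectors = []
    for _ in range(n_neurons):
        v = [rng.gauss(0.0, 1.0) for _ in range(n_features)]
        norm = math.sqrt(sum(c * c for c in v))
        vectors.append([scale * c / norm for c in v])
    return vectors


rng = random.Random(1)
i_scale = 2.0  # hypothetical factor applied to all I neurons

w_e = sample_tuning_vectors(400, 3, 1.0, rng)      # unit-length E tuning vectors
w_i = sample_tuning_vectors(100, 3, i_scale, rng)  # homogeneously scaled I vectors
```

Because only the length is rescaled, the directions of the I tuning vectors remain uniformly distributed, so the heterogeneity of tuning across neurons is preserved.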

Following previous work (Boerlin et al., 2013; Barrett et al., 2016; Chalk et al., 2016), we impose that E and I neurons have distinct normative objectives and we define specific loss functions relative to each neuron type. To jointly implement the constraints required by efficient coding, namely faithful stimulus representation with limited computational resources (Tavoni et al., 2019), we define the loss function of population $y \in \{E, I\}$ as a weighted sum of a time-dependent encoding error and a time-dependent metabolic cost:

$$L_y(t) = \epsilon_y^2(t) + \beta_y\,\kappa_y(t). \tag{3}$$

We refer to $\beta_y$, the parameter controlling the relative importance of the metabolic cost over the encoding error, as the metabolic constant of the network. We hypothesize that population readouts of E neurons, $\hat{\boldsymbol{x}}_E(t)$, track the target representations, $\boldsymbol{x}(t)$, and the population readouts of I neurons, $\hat{\boldsymbol{x}}_I(t)$, track the population readouts of E neurons, $\hat{\boldsymbol{x}}_E(t)$, by minimizing the squared error between these quantities (Koren and Panzeri, 2022; see also Boerlin et al., 2013; Denève et al., 2017 for related approaches). Furthermore, we hypothesize the metabolic cost to be proportional to the instantaneous estimate of the network’s firing frequency. We thus define the variables of the loss functions in Equation 3 as

$$\epsilon_E^2(t) = \lVert \boldsymbol{x}(t) - \hat{\boldsymbol{x}}_E(t) \rVert_2^2, \qquad \epsilon_I^2(t) = \lVert \hat{\boldsymbol{x}}_E(t) - \hat{\boldsymbol{x}}_I(t) \rVert_2^2, \qquad \kappa_y(t) = \sum_{i=1}^{N^y} r_i^y(t), \tag{4}$$

where $r_i^y(t)$ is the low-pass filtered spike train of neuron $i$ (single neuron readout) with time constant $\tau_r^y$, proportional to the instantaneous firing rate of the neuron: $\frac{dr_i^y(t)}{dt} = -\frac{1}{\tau_r^y}\, r_i^y(t) + f_i^y(t)$. We then impose the following condition for spiking: a neuron emits a spike at time $t$ only if this decreases the loss function of its population (Equation 3) in the immediate future. The condition for spiking also includes a noise term (Materials and methods) accounting for sources of stochasticity in spike generation (Faisal et al., 2008), which include the effect of non-specific inputs from the rest of the brain.
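The greedy spiking condition can be illustrated with a small sketch. It assumes that the loss is the squared encoding error plus the metabolic constant times the summed single-neuron readouts, that a spike shifts the population readout by the neuron's decoding vector, and that it increments the neuron's own readout by one. The noise term and the look-ahead into the "immediate future" are omitted, and all names and numbers are hypothetical.

```python
def population_loss(target, estimate, rates, beta):
    """Squared encoding error plus beta times the summed low-pass filtered spike trains."""
    error = sum((x - x_hat) ** 2 for x, x_hat in zip(target, estimate))
    return error + beta * sum(rates)


def spike_decreases_loss(target, estimate, rates, weights, neuron, beta):
    """Greedy test: would a spike of `neuron` lower the loss right now?"""
    w = weights[neuron]
    new_estimate = [x_hat + w_k for x_hat, w_k in zip(estimate, w)]
    new_rates = list(rates)
    new_rates[neuron] += 1.0  # assumed unit jump of the single-neuron readout
    return (population_loss(target, new_estimate, new_rates, beta)
            < population_loss(target, estimate, rates, beta))


weights = [[1.0, 0.0], [0.0, -1.0]]  # hypothetical decoding vectors, one per neuron
target, estimate, rates = [2.0, 0.5], [0.0, 0.0], [0.0, 0.0]

print(spike_decreases_loss(target, estimate, rates, weights, neuron=0, beta=1.0))  # True
print(spike_decreases_loss(target, estimate, rates, weights, neuron=1, beta=1.0))  # False
```

Under these assumptions, expanding the squared error shows that the comparison is equivalent to a threshold rule: the neuron should spike when the projection of the coding error onto its decoding vector exceeds half the sum of the squared vector length and the metabolic constant. This is how a loss comparison turns into a membrane potential crossing a firing threshold.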

We derived the dynamics and network structure of a spiking network that instantiates efficient coding (Figure 1B, see Materials and methods). The derived dynamics of the subthreshold membrane potentials $V_i^E(t)$ and $V_i^I(t)$ obey the equations of the generalized leaky integrate-and-fire (gLIF) neuron

$$\tau \frac{dV_i^y(t)}{dt} = -\left(V_i^y(t) - V_y^{\text{rest}}\right) + R_m \left( I_{y,i}^{\text{syn}}(t) + I_{y,i}^{\text{adapt}}(t) + I_{y,i}^{\text{ext}}(t) \right), \qquad y \in \{E, I\}, \tag{5}$$

where $I_{y,i}^{\text{syn}}$, $I_{y,i}^{\text{adapt}}$, and $I_{y,i}^{\text{ext}}$ are synaptic current, spike-triggered adaptation current and non-specific external current, respectively, $\tau$ is the membrane time constant, $R_m$ is the membrane resistance and $V_y^{\text{rest}}$ is the resting potential. This dynamics is complemented with a fire-and-reset rule: when the membrane potential reaches the firing threshold $\vartheta_y$, a spike is fired and $V_i^y$ is set to the reset potential $V_y^{\text{reset}}$. The analytical solution in Equation 5 holds for any number of neurons (with at least 1 neuron in each population) and predicts an optimal spike pattern to encode the presented external stimulus. Following previous work (Boerlin et al., 2013) in which physical units were assigned to derived mathematical expressions to interpret them as biophysical variables, we express computational variables (target stimuli in Equation 1, population readouts in Equation 2 and the metabolic constant in Equation 3) with physical units in such a way that all terms of the biophysical model (Equation 5) have realistic physical units.
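To make the fire-and-reset rule concrete, here is a minimal single-neuron sketch of a leaky integrate-and-fire unit with an optional spike-triggered adaptation variable. It is a generic forward-Euler integrator, not the authors' derived network equations. The absolute voltages are hypothetical, chosen so that the threshold lies 20 mV above rest and the reset 19 mV below threshold, in line with the distances reported for E neurons.

```python
def simulate_glif(drive_mv, dt, tau_m, v_rest, v_thresh, v_reset,
                  adapt_jump_mv=0.0, tau_adapt=0.010):
    """Forward-Euler integration of a leaky integrate-and-fire neuron.

    drive_mv holds R_m * (synaptic + external current) in mV, one value per step.
    Returns the membrane potential trace (mV) and the spike times (s).
    """
    v, adapt = v_rest, 0.0
    trace, spike_times = [], []
    for step, drive in enumerate(drive_mv):
        adapt -= dt * adapt / tau_adapt      # adaptation decays between spikes
        v += dt / tau_m * (-(v - v_rest) + drive - adapt)
        if v >= v_thresh:                    # fire-and-reset rule
            spike_times.append(step * dt)
            v = v_reset
            adapt += adapt_jump_mv           # spike-triggered adaptation
        trace.append(v)
    return trace, spike_times


# Hypothetical absolute potentials in mV; time in seconds.
params = dict(dt=1e-4, tau_m=10e-3, v_rest=-70.0, v_thresh=-50.0, v_reset=-69.0)
_, weak = simulate_glif([10.0] * 1000, **params)    # settles at -60 mV, below threshold
_, strong = simulate_glif([30.0] * 1000, **params)  # would settle at -40 mV, so it fires
print(len(weak), len(strong) > 0)
```

A positive `adapt_jump_mv` produces spike-frequency adaptation in this sketch, while a negative value would act as facilitation.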

The synaptic currents in E neurons consist of feedforward currents, obtained as stimulus features weighted by the tuning weights of the neuron, and of recurrent inhibitory currents (Figure 1B). Synaptic currents in I neurons consist of recurrent excitatory and inhibitory currents. Note that there are no recurrent connections between E neurons, a consequence of our assumption of no across-feature interaction in the leaky integration of stimulus features (Equation 1). This assumption is likely to be simplistic even for early sensory cortices (Emanuel et al., 2021). However, in other studies, we found that many properties of efficient networks implementing leaky integration hold also when input features are linearly mixed during integration (Koren and Panzeri, 2022; Koren et al., 2023).

The optimization of the loss function yielded structured recurrent connectivity (Figure 1B(ii)–C). Synaptic strength between two neurons is proportional to their tuning similarity, forming like-to-like connectivity, if the tuning similarity is positive; otherwise the synaptic weight is set to zero (Figure 1C(ii)) to ensure that Dale’s law is respected. A connectivity structure in which the synaptic weight is proportional to pairwise tuning similarity is consistent with some empirical observations in visual cortex (Znamenskiy et al., 2024) and has been suggested by previous models (Boerlin et al., 2013; Sadeh and Clopath, 2020). Such connectivity organization is also suggested by across-neuron influence measured with optogenetic perturbations of visual cortex (Chettih and Harvey, 2019; Oldenburg et al., 2024). While such connectivity structure is the result of optimization, the rectification of the connectivity that enforces Dale’s law does not emerge from imposing efficient coding, but from constraining the space of solutions to biologically plausible networks. Rectification also sets the overall connection probability to 0.5, which is consistent with empirically observed connection probability from pyramidal (E) neurons to parvalbumin-positive (I) neurons (Pala and Petersen, 2015; Campagnola et al., 2022), but likely overestimates the connection probability from parvalbumin-positive neurons to pyramidal neurons, which tends to be lower (Campagnola et al., 2022). (For a study of how efficient coding would be implemented if the above Dale’s law constraint were removed and each neuron were free to have either an inhibitory or excitatory effect depending on the postsynaptic target, see Appendix 1 and Figure 1—figure supplement 1A–E).
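The like-to-like connectivity rule can be sketched as a rectified dot product between tuning vectors. The proportionality constant is set to 1 here for simplicity, tuning vectors are drawn as normalized Gaussian vectors, and the function names are hypothetical; the paper's exact expression is given in its Materials and methods.

```python
import math
import random


def unit_vectors(n, dim, rng):
    """Draw n vectors uniformly from the unit sphere in `dim` dimensions."""
    out = []
    for _ in range(n):
        v = [rng.gauss(0.0, 1.0) for _ in range(dim)]
        norm = math.sqrt(sum(c * c for c in v))
        out.append([c / norm for c in v])
    return out


def rectified_similarity(pre, post):
    """Weights J[j][i] = max(0, w_post[j] . w_pre[i]); dissimilar pairs get zero."""
    return [[max(0.0, sum(a * b for a, b in zip(w_post, w_pre))) for w_pre in pre]
            for w_post in post]


rng = random.Random(2)
w_e = unit_vectors(400, 3, rng)
w_i = unit_vectors(100, 3, rng)

j_ei = rectified_similarity(pre=w_e, post=w_i)  # E-to-I weights, 100 rows x 400 columns
connected = sum(w > 0.0 for row in j_ei for w in row)
fraction = connected / (len(w_i) * len(w_e))
print(round(fraction, 2))
```

With randomly oriented tuning vectors, a pair has positive similarity with probability one half by symmetry, which is why rectification yields a connection probability close to 0.5.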

The spike-triggered adaptation current of neuron $i$ in population $y$, $I_{y,i}^{\text{adapt}}(t)$, is proportional to its low-pass filtered spike train. This current realizes spike-frequency adaptation or facilitation depending on the difference between the time constants of population and single neuron readout (see Results subsection ‘Weak or no spike-triggered adaptation optimizes network efficiency’).

Finally, non-specific external currents have a constant mean that depends on the metabolic constant $\beta$, and fluctuations that arise from the noise with strength $\sigma$ in the condition for spiking. The relative weight of the metabolic cost over the encoding error, $\beta$, controls how the network responds to feedforward stimuli, by modulating the mean of the non-specific synaptic currents incoming to all neurons. Together, the metabolic constant and the noise strength set the non-specific synaptic currents to single neurons, which are homogeneous across the network and akin to the background synaptic input discussed in Destexhe et al., 2003. By allowing a large part of the distance between the resting potential and the threshold to be taken by the non-specific current, we found a biologically plausible set of optimally efficient model parameters (Table 1), including a firing threshold at about 20 mV from the resting potential, which is within the experimentally observed range (Constantinople and Bruno, 2013), and average synaptic strengths of 0.75 mV (E-I and I-E synapses) and 2.25 mV (I-I synapses), which are consistent with measurements in sensory cortex (Campagnola et al., 2022). An optimal network without non-specific currents can be derived (see Materials and methods, Equation 25), but its parameters are not consistent with biology (see Appendix 2). The non-specific currents can be interpreted as synaptic currents that are modulated by larger-scale variables, such as brain states (see subsection ‘Non-specific currents regulate network coding properties’).

Table 1. Default model parameters for the efficient E-I network.

Parameters above the double horizontal line are the minimal set of parameters needed to simulate model equations (Equations 29a–29h in Materials and methods). Parameters below the double horizontal line are biophysical parameters, derived from the same model equations and from model parameters listed above the horizontal line. Parameters , , and were chosen for their biological plausibility and computational simplicity. Parameters , , , , ratio of mean I-I to E-I synaptic connectivity and are parameters that maximize network efficiency (see the section ‘Criterion for determining model parameters’ in Materials and methods). The metabolic constant and the noise strength are interpreted as global network parameters and are for this reason assumed to be the same across the E and I populations, i.e., $\beta_E = \beta_I = \beta$ and $\sigma_E = \sigma_I = \sigma$ (see Equation 3). The connection probability of 0.5 is the consequence of rectification of the connectivity (see Equation 24 in Materials and methods).

| Parameter | Notation | Value |
|---|---|---|
| Number of E neurons | | 400 |
| Ratio of E to I neuron numbers | | 4:1 |
| Number of input features | | 3 |
| Time constant of the population readout (E and I) | | 10 ms |
| Time constant of the single neuron readout | | 10 ms |
| Noise strength (non-specific current) | | 5.0 mV |
| Heterogeneity factor of tuning parameters in E | | 1.0 (mV)$^{1/2}$ |
| Ratio of mean I-I to E-I synaptic connectivity | mean I-I : mean E-I | 3:1 |
| Metabolic constant | | 14 mV |
| Threshold constant | | 18 mV |
| Distance threshold to reset potential (E neurons) | | 19 mV |
| Distance threshold to reset potential (I neurons) | | 21 mV |
| Connection probability (recurrent synapses) | | 0.5 |
| Mean E-I synaptic weight (EPSP to I at max) | | 0.75 mV |
| Mean I-E synaptic weight (IPSP to E at max) | | 0.75 mV |
| Mean I-I synaptic weight (IPSP at max) | | 2.25 mV |

To summarize, the analytical derivation of an optimally efficient network includes gLIF neurons (Burkitt, 2006; Jolivet et al., 2008; Gerstner et al., 2014; Schwalger et al., 2017; Harkin et al., 2021), a distributed code with linear mixed selectivity to the input stimuli (Chang and Tsao, 2017; Kaufman et al., 2014), spike-triggered adaptation, structured synaptic connectivity, and a non-specific external current akin to background synaptic input.

Encoding performance and neural dynamics in an optimally efficient E-I network

The equations for the E-I network of gLIF neurons in Equation 5 optimize the loss functions at any given time and for any set of parameters. In particular, the network equations have the same analytical form for any positive value of the metabolic constant $\beta$. To find a set of parameters that optimizes the overall performance, we minimized the loss function averaged over time and trials. We optimized the parameters by setting the metabolic constant such that the encoding error accounts for 70% and the metabolic cost for 30% of the average loss, and by choosing all other parameters so as to numerically minimize the average loss (see Materials and methods). The numerical optimization was performed by simulating a model of 400 E and 100 I units, a network size relevant for computations within one layer of a cortical microcolumn (Lefort et al., 2009). The set of model parameters that optimized network efficiency is detailed in Table 1. Unless otherwise stated, we use the optimal parameters of Table 1 in all simulations and only vary the parameters indicated on the figure axes.

With optimally efficient parameters, population readouts closely tracked the target signals (Figure 1D, M=3, for E and I neurons, respectively). When stimulated by our three-dimensional time-varying feedforward input, the optimal E-I network provided a precise estimator of target signals (Figure 1E, top). The average estimation bias ( and , see Materials and methods) of the network minimizing the encoding error was close to zero ( = 0.02 and = 0.03) while the bias of the network minimizing the average loss (and optimizing efficiency) was slightly larger and negative (= –0.15 and = −0.34), but still small compared to the stimulus amplitude (Figure 1E, bottom, Figure 1—figure supplement 1F). Time- and trial-averaged encoding error (RMSE) and metabolic cost (MC, see Materials and methods) were comparable in magnitude (, for E and I), but with smaller error and lower cost in I, leading to a better performance in I (average loss of 2.5) compared to E neurons (average loss of 3.7). We report both the encoding error and the metabolic cost throughout the paper, so that readers can evaluate how these performance measures may generalize when weighting differently the error and the metabolic cost.

Next, we examined the emergent dynamical properties of an optimally efficient E-I network. I neurons had higher average firing rates compared to E neurons, consistent with observations in cortex (Neske et al., 2015). The distribution of firing rates was well described by a log-normal distribution (Figure 1F, left), consistent with distributions of cortical firing observed empirically (Buzsáki and Mizuseki, 2014). Neurons fired irregularly, with mean coefficient of variation (CV) slightly smaller than 1 (Figure 1F, right; CV = [0.97, 0.95] for E and I neurons, respectively), compatible with cortical firing (Softky and Koch, 1993). We assessed E-I balance in single neurons through two complementary measures. First, we calculated the average (global) balance of E-I currents by taking the time-average of the net sum of synaptic inputs (shortened to net synaptic input, see Ahmadian and Miller, 2021). Second, we computed the instantaneous (Okun and Lampl, 2008; also termed detailed in Vogels et al., 2011) E-I balance as the Pearson correlation () over time of E and I currents received by each neuron (see Materials and methods).
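The two single-neuron statistics used above can be computed as in the following sketch: the coefficient of variation of interspike intervals, and the Pearson correlation over time between the excitatory and inhibitory currents of a neuron. The example currents are made-up numbers, and the sign convention for inhibitory currents (signed values versus magnitudes) follows the paper's Materials and methods, which is not reproduced here.

```python
import math
import statistics


def isi_cv(spike_times):
    """Coefficient of variation of interspike intervals (population std / mean)."""
    isis = [b - a for a, b in zip(spike_times, spike_times[1:])]
    return statistics.pstdev(isis) / statistics.mean(isis)


def pearson(x, y):
    """Pearson correlation coefficient between two equally long sequences."""
    mx, my = statistics.mean(x), statistics.mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


# A perfectly regular spike train has CV = 0; Poisson-like firing has CV near 1.
print(isi_cv([0, 1, 2, 3]))

# Hypothetical current traces: inhibition (negative) closely mirrors excitation.
exc = [1.0, 2.0, 3.0, 4.0]
inh = [-1.1, -1.9, -3.2, -3.8]
print(pearson(exc, inh) < 0)
```

With signed currents, tightly balanced excitation and inhibition give a strongly negative correlation; correlating excitation with the magnitude of inhibition instead gives the same value with the opposite sign.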

We observed an excess inhibition in both E and I neurons, with a negative net synaptic input in both E and I cells (Figure 1H), indicating an inhibition-dominated network according to the criterion of average balance (Ahmadian and Miller, 2021). In E neurons, net synaptic current is the sum of the feedforward current and recurrent inhibition and the mean of the net current is close to the mean of the inhibitory current, because feedforward inputs have vanishing mean. Furthermore, we found a moderate instantaneous balance (Xue et al., 2014), stronger in I compared to E cell type (Figure 1G, I, , for I and E neurons, respectively), similar to levels measured empirically in rat visual cortex (Tan et al., 2013).

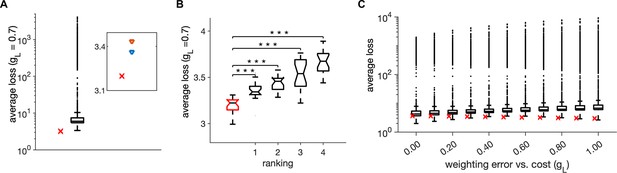

We determined optimal model parameters by optimizing one parameter at a time. To independently validate the resulting optimal parameter set (reported in Table 1), we varied all six model parameters explored in the paper with Monte-Carlo random joint sampling (10,000 random samples), drawing each parameter uniformly within a biologically plausible range (Table 2). We did not find any parameter configuration with lower average loss than the setting in Table 1 (Figure 2A–B) when using the weighting of the encoding error with metabolic cost between (Figure 2C). The three parameter settings that came the closest to our configuration in Table 1 had stronger noise but also stronger metabolic constant than our configuration (Table 3). The second, third and fourth configurations had longer time constants of both E and I single neurons. Ratios of E-I neuron numbers and of I-I to E-I connectivity in the second, third, and fourth best configurations were either jointly increased or jointly decreased with respect to our optimal configuration. This suggests that joint covariations in parameters may influence the network’s optimality. While our finite Monte-Carlo random sampling does not fully prove the global optimality of the configuration in Table 1, it shows that it is highly efficient.

Table of parameter ranges for Monte-Carlo sampling.

Minimum and maximum of the uniform distributions from which we randomly drew parameters during Monte-Carlo random sampling.

| Parameter | | | | | | mean I-I: mean E-I |
|---|---|---|---|---|---|---|
| minimum | 5 ms | 5 ms | 2 mV | 1 mV | 1 | 1 |
| maximum | 50 ms | 50 ms | 29 mV | 10 mV | 8 | 8 |
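The joint random search described above can be sketched as follows. This is a minimal illustration, not the authors' code: the dictionary keys are hypothetical column labels (the header symbols of the table are not reproduced here), and `toy_loss` is a placeholder that stands in for the trial- and time-averaged loss of a full network simulation.

```python
import random

# Sampling ranges copied from the table above, in column order.
# The keys are hypothetical labels, not the paper's symbols.
PARAM_RANGES = {
    "col1_ms": (5.0, 50.0),
    "col2_ms": (5.0, 50.0),
    "col3_mV": (2.0, 29.0),
    "col4_mV": (1.0, 10.0),
    "col5_ratio": (1.0, 8.0),
    "col6_ratio": (1.0, 8.0),
}


def sample_configuration(rng):
    """Draw every parameter independently and uniformly within its range."""
    return {name: rng.uniform(lo, hi) for name, (lo, hi) in PARAM_RANGES.items()}


def toy_loss(params, rng):
    """Placeholder loss (assumption): noisy quadratic bowl around the range midpoints."""
    total = 0.0
    for name, (lo, hi) in PARAM_RANGES.items():
        mid = 0.5 * (lo + hi)
        total += ((params[name] - mid) / (hi - lo)) ** 2
    return total + rng.gauss(0.0, 0.01)


def random_search(loss_fn, n_samples, n_trials, seed=0):
    """Return the sampled configuration with the lowest trial-averaged loss."""
    rng = random.Random(seed)
    best_params, best_loss = None, float("inf")
    for _ in range(n_samples):
        params = sample_configuration(rng)
        mean_loss = sum(loss_fn(params, rng) for _ in range(n_trials)) / n_trials
        if mean_loss < best_loss:
            best_params, best_loss = params, mean_loss
    return best_params, best_loss


best_params, best_loss = random_search(toy_loss, n_samples=200, n_trials=20)
```

With a real simulator in place of `toy_loss`, the settings reported in the text would correspond to `n_samples=10000` and `n_trials=20`.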

Monte-Carlo joint random sampling on six model parameters.

(A) Distribution of the trial-averaged loss, with weighting = 0.7, from 10,000 random simulations and using 20 simulation trials of duration of 1 s for each parameter configuration. The red cross marks the average loss of the parameter setting in Table 1. Inset: The average loss of the parameter setting in Table 1 (red cross) and of the first- and second-best parameter settings from the random search. (B) Distribution of the average loss across 20 simulation trials for the parameter setting in Table 1 (red) and for the first four ranked points according to the trial-averaged loss in A. Stars indicate a significant two-tailed t-test against the distribution in red (*** indicate ). (C) Same as in A, for different values of weighting of the error with the cost . Parameters for all plots are in Table 1.

Table of best four parameter settings from Monte-Carlo sampling.

The performance was evaluated using trial- and time-averaged loss. Each parameter setting was evaluated on 20 trials, with each trial using an independent realization of tuning parameters, noise in the non-specific current and initial conditions for the integration of the membrane potentials. We tested 10,000 parameter settings.

| Parameter | | | | | | mean I-I: mean E-I |
|---|---|---|---|---|---|---|
| First | 12.6 | 11.1 | 2.1 | 4.7 | 5.4 | 3.0 |
| Second | 11.4 | 10.0 | 2.9 | 6.2 | 6.1 | 3.3 |
| Third | 10.0 | 10.7 | 10.1 | 3.0 | 3.2 | 2.5 |
| Fourth | 12.5 | 13.5 | 2.9 | 5.4 | 4.9 | 3.5 |

Competition across neurons with similar stimulus tuning emerging in efficient spiking networks

We next explored coding properties emerging from recurrent synaptic interactions between E and I populations in the optimally efficient networks.

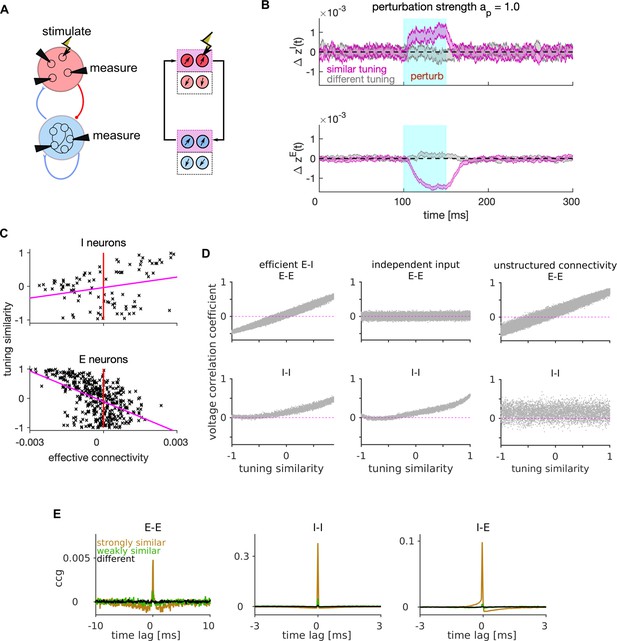

An approach that has recently provided empirical insight into local recurrent interactions is measuring effective connectivity with cellular resolution. Recent effective connectivity experiments photostimulated single E neurons in primary visual cortex and measured the effect on neighbouring neurons, finding that the photostimulation of an E neuron led to a decrease in the firing rate of similarly tuned nearby neurons (Chettih and Harvey, 2019). This effective lateral inhibition (Lochmann et al., 2012) between E neurons with similar tuning to the stimulus implements competition between neurons for the representation of stimulus features (Chettih and Harvey, 2019). Since our model instantiates efficient coding by design and because we removed connections between neurons with different selectivity, we expected that our network would implement lateral inhibition and thus give comparable effective connectivity results in simulated photostimulation experiments.

To test this prediction, we simulated photostimulation experiments in our optimally efficient network. We first performed experiments in the absence of the feedforward input to ensure all effects are only due to the recurrent processing. We stimulated a randomly selected single target E neuron and measured the change in the instantaneous firing rate from the baseline firing rate, , in all the other I and E neurons (Figure 3A, left). The photostimulation was modeled as an application of a constant depolarising current with a strength parameter, , proportional to the distance between the resting potential and the firing threshold ( means no stimulation, while indicates photostimulation at the firing threshold). We quantified the effect of the simulated photostimulation of a target E neuron on other E and I neurons, distinguishing neurons with either similar or different tuning with respect to the target neuron (Figure 3A, right; Figure 3—figure supplement 1A–D).
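The scaling of the simulated photostimulation can be illustrated with a minimal sketch of a leaky membrane without spiking or reset. The name `a_p` for the strength parameter, the voltage values, the time constant and the integration step are all assumptions made for illustration, and the current is expressed in voltage units. The sketch shows only that a strength of 0 leaves the membrane at rest and a strength of 1 drives it to the firing threshold.

```python
def photostim_current(a_p, v_rest, v_threshold):
    """Constant depolarising drive, proportional to the rest-to-threshold distance."""
    return a_p * (v_threshold - v_rest)


def subthreshold_voltage(a_p, v_rest=-70.0, v_threshold=-50.0,
                         tau_ms=10.0, dt_ms=0.1, duration_ms=200.0):
    """Euler-integrate a leaky membrane (no spiking, no reset) under the drive.

    All default values are hypothetical and chosen for illustration only.
    """
    drive = photostim_current(a_p, v_rest, v_threshold)
    v = v_rest
    for _ in range(int(round(duration_ms / dt_ms))):
        # Leak pulls v back to rest; the constant drive pushes it up.
        v += (dt_ms / tau_ms) * (-(v - v_rest) + drive)
    return v
```

In this simplified setting the steady-state voltage is `v_rest + a_p * (v_threshold - v_rest)`, so intermediate strengths place the membrane proportionally closer to threshold.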

Mechanism of lateral excitation/inhibition in the efficient spiking network.

(A) Left: Schematic of the E-I network and of the stimulation and measurement in a perturbation experiment. Right: Schematic of the propagation of the neural activity between E and I neurons with similar tuning. (B) Trial and neuron-averaged deviation of the firing rate from the baseline, for the population of I (top) and E (bottom) neurons with similar (magenta) and different tuning (gray) to the target neuron. Traces show the mean ± standard error of the mean, with the standard error of the mean on the variability across neurons and across trials. The stimulation strength corresponded to an increase in the firing rate of the stimulated neuron by 28.0 Hz. (C) Scatter plot of the tuning similarity vs. effective connectivity to the target neuron. Red line marks zero effective connectivity and magenta line is the least-squares line. Stimulation strength was . (D) Correlation of membrane potentials vs. the tuning similarity in E (top) and I cell type (bottom), for the efficient E-I network (left), for the network where each E neuron receives independent instead of shared stimulus features (middle), and for the network with unstructured connectivity (right). In the model with unstructured connectivity, elements of each connectivity matrix were randomly shuffled. We quantified voltage correlation using the (zero-lag) Pearson’s correlation coefficient, denoted as , for each pair of neurons. (E) Average cross-correlogram (CCG) of spike timing with strongly similar (orange), weakly similar (green) and different tuning (black). Statistical results (B–E) were computed on 100 simulation trials. The duration of the trial in D-E was 1 s. Parameters for all plots are in Table 1.

The photostimulation of the target E neuron increased the instantaneous firing rate of similarly-tuned I neurons and reduced that of other similarly-tuned E neurons (Figure 3B). We quantified the effective connectivity as the difference between the time-averaged firing rate of the recorded cell in presence or absence of the photostimulation of the targeted cell, measured during perturbation and up to 50 ms after. We found positive effective connectivity on I and negative effective connectivity on E neurons with similar tuning to the target neuron, with a positive correlation between tuning similarity and effective connectivity on I neurons and a negative correlation on E neurons (Figure 3C). We confirmed these effects of photostimulation in presence of a weak feedforward input (Figure 3—figure supplement 1E), similar to the experiments of Chettih and Harvey, 2019 in which photostimulation was applied during the presentation of visual stimuli with weak contrast. Thus, the optimal network replicates the preponderance of negative effective connectivity between E neurons and the dependence of its strength on tuning similarity found in Chettih and Harvey, 2019.
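The effective connectivity measure and its relation to tuning similarity can be sketched as below. The function names are hypothetical and the numbers are purely illustrative toy values, not results from the study; the least-squares slope is used here as a simple stand-in for the regression line shown in the scatter plots.

```python
def effective_connectivity(rates_stim_hz, rates_baseline_hz):
    """Per-neuron difference of time-averaged rates with and without photostimulation."""
    return [s - b for s, b in zip(rates_stim_hz, rates_baseline_hz)]


def least_squares_slope(x, y):
    """Slope of the ordinary least-squares line of y on x."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    s_xy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    s_xx = sum((a - mean_x) ** 2 for a in x)
    return s_xy / s_xx


# Toy example (illustrative values only): E neurons that are more similar
# to the target neuron are suppressed more strongly.
tuning_similarity = [-1.0, -0.5, 0.0, 0.5, 1.0]
baseline_hz = [4.0, 4.0, 4.0, 4.0, 4.0]
stimulated_hz = [4.0, 4.0, 3.9, 3.6, 3.2]

ec = effective_connectivity(stimulated_hz, baseline_hz)
slope = least_squares_slope(tuning_similarity, ec)  # negative for this toy data
```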

In summary, lateral excitation of I neurons and lateral inhibition of E neurons with similar tuning is an emergent coding property of the efficient E-I network, which recapitulates competition between neurons with similar stimulus tuning found in visual cortex (Chettih and Harvey, 2019; Oldenburg et al., 2024). An intuition of why this competition implements efficient coding is that the E neuron that fires first activates I neurons with similar tuning. In turn, these I neurons inhibit all similarly tuned E neurons (Figure 3A, right), preventing them from generating redundant spikes to encode the sensory information that has already been encoded by the first spike. Suppression of redundant spiking reduces metabolic cost without reducing encoded information (Boerlin et al., 2013; Koren and Denève, 2017).

While perturbing the activity of E neurons in our model qualitatively reproduces empirically observed lateral inhibition among E neurons (Chettih and Harvey, 2019; Oldenburg et al., 2024), these experiments have also reported positive effective connectivity between E neurons with very similar stimulus tuning. Our intuition is that our simple model cannot reproduce this finding because it lacks E-E connectivity.

To explore further the consequences of E-I interactions for stimulus encoding, we next investigated the dynamics of lateral inhibition in the optimal network driven by the feedforward sensory input but without perturbing the neural activity. Previous work has established that efficient spiking neurons may present strong correlations in the membrane potentials, but only weak correlations in the spiking output, because redundant spikes are prevented by lateral inhibition (Boerlin et al., 2013; Deneve and Machens, 2016b). We investigated voltage correlations in pairs of neurons within our network as a function of their tuning similarity. Because the feedforward inputs are shared across E neurons and weighted by their tuning parameters, they cause strong positive voltage correlations between E-E neuronal pairs with very similar tuning and strong negative correlations between pairs with very different (opposite) tuning (Figure 3D, top-left). Voltage correlations between E-E pairs vanished regardless of tuning similarity when we made the feedforward inputs independent across neurons (Figure 3D, top-middle), showing that the dependence of voltage correlations on tuning similarity occurs because of shared feedforward inputs. In contrast to E neurons, I neurons do not receive feedforward inputs and are driven only by similarly tuned E neurons (Figure 3A, right). This causes positive voltage correlations in I-I neuronal pairs with similar tuning and vanishing correlations in neurons with different tuning (Figure 3D, bottom-left). Such dependence of voltage correlations on tuning similarity disappears when removing the structure from the E-I synaptic connectivity (Figure 3D, bottom-right).

In contrast to voltage correlations, and as expected from previous studies (Boerlin et al., 2013; Deneve and Machens, 2016b), the coordination of spike timing of pairs of E neurons (measured with cross-correlograms or CCGs) was very weak (Figure 3E). For I-I and E-I neuronal pairs, the peaks of CCGs were stronger than those observed in E-E pairs, but they were present only at very short lags (lags < 1 ms). This confirms that recurrent interactions of the efficient E-I network remove the effect of membrane potential correlations at the spiking output level, and shows information processing with millisecond precision in these networks (Boerlin et al., 2013; Deneve and Machens, 2016b; Koren and Denève, 2017).
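For readers unfamiliar with cross-correlograms, the following sketch counts spike coincidences between two binned spike trains as a function of lag. It is a generic, unnormalized CCG written for illustration; the normalization and bin size used in the study are not specified in this section, so none is assumed here.

```python
def cross_correlogram(train_a, train_b, max_lag):
    """Raw coincidence counts between binary spike trains.

    For each lag, counts bins t where train_a has a spike at t and
    train_b has a spike at t + lag. Returns a dict mapping lag -> count.
    """
    n = min(len(train_a), len(train_b))
    ccg = {}
    for lag in range(-max_lag, max_lag + 1):
        count = 0
        for t in range(n):
            u = t + lag
            if 0 <= u < n:
                count += train_a[t] * train_b[u]
        ccg[lag] = count
    return ccg


# Toy example: train_b fires one bin after train_a.
a = [1, 0, 0, 1, 0]
b = [0, 1, 0, 0, 1]
ccg = cross_correlogram(a, b, max_lag=2)
```

A peak confined to lags near zero, as described above for I-I and E-I pairs, would show up as large counts only in the central entries of the returned dictionary.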

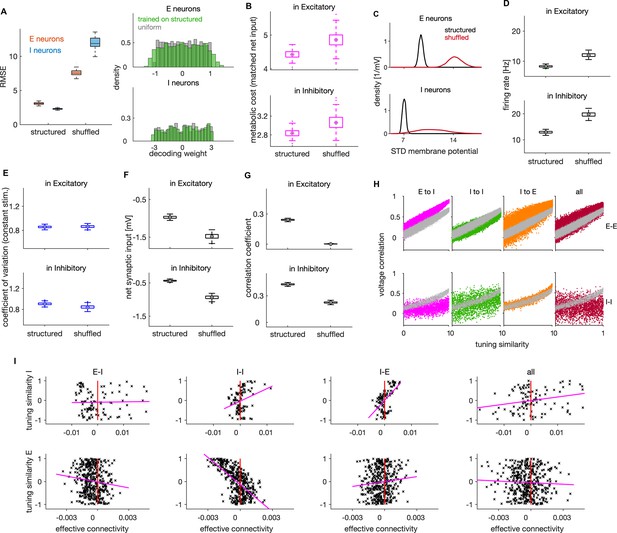

The effect of structured connectivity on coding efficiency and neural dynamics

The analytical solution of the optimally efficient E-I network predicts that recurrent synaptic weights are proportional to the tuning similarity between neurons. We next investigated the role of such connectivity structure by comparing the behavior of an efficient network with an unstructured E-I network, similar to the type studied in previous works (Brunel, 2000; Renart et al., 2010; Mazzoni et al., 2008). We removed the connectivity structure by randomly permuting synaptic weights across neuronal pairs (see Materials and methods). Such shuffling destroys the relationship between tuning similarity and synaptic strength (as shown in Figure 1C(ii)) while it preserves Dale’s law and the overall distribution of connectivity weights.
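The shuffling procedure can be sketched as a random permutation of the entries of one connectivity matrix. The sketch assumes that each matrix stores non-negative weight magnitudes for a single connection type, so that permuting within the matrix cannot change the sign of any connection; the function name is hypothetical.

```python
import random


def shuffle_connectivity(weights, rng):
    """Randomly permute all entries of one connectivity matrix (list of rows).

    The multiset of weights is preserved, while any relation between a
    neuronal pair and its weight is destroyed. The input is not modified.
    """
    n_cols = len(weights[0])
    flat = [w for row in weights for w in row]
    rng.shuffle(flat)
    return [flat[i:i + n_cols] for i in range(0, len(flat), n_cols)]
```

Because the permutation is applied separately to each connectivity type, the distribution of weights of that type is unchanged.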

We found that shuffling the connectivity structure significantly altered the efficiency of the network (Figure 4A, B), neural dynamics (Figure 4C, D, F–H) and lateral inhibition (Figure 4I). In particular, structured networks differ from unstructured ones by showing better encoding performance (Figure 4A), lower metabolic cost (Figure 4B), weaker variance of the membrane potential over time (Figure 4C), lower firing rates (Figure 4D) and weaker average (Figure 4F) and instantaneous balance (Figure 4G) of synaptic inputs. However, we found only a small difference in the variability of spiking between structured and unstructured networks (Figure 4E). While these results are difficult to test experimentally due to the difficulty of manipulating synaptic connectivity structures in vivo, they highlight the importance of the connectivity structure for cortical computations.

Effects of connectivity structure on coding efficiency, neural dynamics and lateral inhibition.

(A) Left: Root mean squared error (RMSE) in networks with structured and randomly shuffled recurrent connectivity. Random shuffling consisted of a random permutation of the elements within each of the three (E–I, I–I, I–E) connectivity matrices. Right: Distribution of decoding weights after training the decoder on neural activity from the structured network (green), and a sample from uniform distribution as typically used in the optimal network. (B) Metabolic cost in structured and shuffled networks with matched average balance. The average balance of the shuffled network was matched with the one of the structured network by changing the following parameters: , and by decreasing the amplitude of the OU stimulus by factor of 0.88. (C) Standard deviation of the membrane potential (in mV) for networks with structured and unstructured connectivity. Distributions are across neurons. (D) Average firing rate of E (top) and I neurons (bottom) in networks with structured and unstructured connectivity. (E) Same as in D, showing the coefficient of variation of spiking activity in a network responding to a constant stimulus. (F) Same as in D, showing the average net synaptic input, a measure of average imbalance. (G) Same as in D, showing the time-dependent correlation of synaptic inputs, a measure of instantaneous balance. (H) Voltage correlation in E-E (top) and I-I neuronal pairs (bottom) for the four cases of unstructured connectivity (colored dots) and the equivalent result in the structured network (grey dots). We show the results for pairs with similar tuning. (I) Scatter plot of effective connectivity versus tuning similarity to the photostimulated E neuron in shuffled networks. The title of each plot indicates the connectivity matrix that has been shuffled. The magenta line is the least-squares regression line and the photostimulation is at threshold (). Results were computed using 200 (A–G) and 100 (H–I) simulation trials of 1 s duration. 
Parameters for all plots are in Table 1.

We also compared structured and unstructured networks with respect to the relation between pairwise voltage correlations and tuning similarity, by randomizing connections within a single connectivity type (E-I, I-I or I-E) or within all three connectivity types at once (‘all’). We found the structure of E-I connectivity to be crucial for the linear relation between voltage correlations and tuning similarity in pairs of I neurons (Figure 4H, magenta).

Finally, we analyzed how the structure in recurrent connectivity influences the lateral inhibition that we observed in efficient networks. We found that the dependence of lateral inhibition on tuning similarity vanishes when the connectivity structure is fully removed (Figure 4I, ‘all’ on the right plot), thus showing that connectivity structure is necessary for lateral inhibition. While networks with unstructured E-I and I-E connectivity still show inhibition in E neurons upon single neuron photostimulation (because of the net inhibitory effect of recurrent connectivity; Figure 4—figure supplement 1F), this inhibition was largely unspecific to tuning similarity (Figure 4I, ‘E-I’ and ‘I-E’). Unstructured connectivity decreased the correlation between tuning similarity and effective connectivity from in I and E neurons in a structured network to and in networks with unstructured E-I and I-E connectivity, respectively. Removing the structure in I-I connectivity, in contrast, increased the correlation between effective connectivity and tuning similarity in E neurons (, Figure 4I, second from the left), showing that lateral inhibition takes place irrespective of the I-I connectivity structure.

Previous empirical (Znamenskiy et al., 2024) and theoretical work has established the necessity of strong E-I-E synaptic connectivity for lateral inhibition (Sadeh and Clopath, 2020; Mackwood et al., 2021). To refine this understanding, we asked what minimal connectivity structure is necessary to qualitatively replicate empirically observed lateral inhibition. We did so by considering a simpler connectivity rule than the one obtained from first principles. We assumed neurons to be connected (with random synaptic efficacy) if their tuning vectors are similar ( if ) and unconnected otherwise ( if ), relaxing the precise proportionality relationship between tuning similarity and synaptic weights (as in Figure 1C(ii)). We found that networks with such simpler connectivity respond to activity perturbation in a qualitatively similar way as the optimal network (Figure 3—figure supplement 1F) and still replicate experimentally observed activity profiles in Chettih and Harvey, 2019.
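A minimal sketch of such a simplified like-to-like rule is given below. Several choices are assumptions made for illustration: tuning similarity is taken to be the dot product of tuning vectors, the similarity threshold defaults to zero, and the random efficacy is drawn uniformly between 0 and a hypothetical maximum `w_max`. The exact criterion and weight distribution used in the study are not recoverable from this section.

```python
import random


def dot(u, v):
    """Dot product of two tuning vectors of equal length."""
    return sum(a * b for a, b in zip(u, v))


def like_to_like_weights(tuning_pre, tuning_post, rng, threshold=0.0, w_max=1.0):
    """Connect pre -> post with a random weight only if their tuning is similar.

    Returns a matrix indexed as weights[post][pre]. Pairs whose similarity
    does not exceed the threshold get a weight of exactly zero.
    """
    weights = []
    for post in tuning_post:
        row = []
        for pre in tuning_pre:
            similar = dot(pre, post) > threshold
            row.append(rng.uniform(0.0, w_max) if similar else 0.0)
        weights.append(row)
    return weights
```

Unlike the optimal solution, the weight of a connected pair here carries no information about how similar the two neurons are, only that they are similar.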

While optimally structured connectivity predicted by efficient coding is biologically plausible, it may be difficult to realise it exactly on a synapse-by-synapse basis in biological networks. Following Calaim et al., 2022, we verified the robustness of the model to small deviations from the optimal synaptic weights by adding a random jitter, proportional to the synaptic strength, to all synaptic connections (see Materials and methods). The encoding performance and neural activity were barely affected by weak and moderate levels of such perturbation (Figure 4—figure supplement 1G, H), demonstrating that the network is robust against random jittering of the optimal synaptic weights.
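One way to implement a jitter proportional to synaptic strength is sketched below. The multiplicative Gaussian form and the clipping at zero (to keep the sign of each connection) are assumptions made for illustration; the exact procedure is described in the Materials and methods and may differ.

```python
import random


def jitter_weights(weights, strength, rng):
    """Perturb non-negative weights by noise proportional to their magnitude.

    Each weight w becomes w * (1 + strength * xi) with xi ~ N(0, 1),
    clipped at zero so that no connection changes sign. Absent
    connections (w == 0) remain absent.
    """
    return [
        [max(0.0, w * (1.0 + strength * rng.gauss(0.0, 1.0))) for w in row]
        for row in weights
    ]
```

With `strength=0` the weights are returned unchanged, and increasing `strength` moves the network progressively further from the optimal connectivity.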

In summary, we found that some aspects of recurrent connectivity structure, such as the like-to-like organization, are crucial to achieve efficient coding. For other aspects, in contrast, there is considerable flexibility: the proportionality between tuning similarity and synaptic weights is not crucial for efficiency, and small random jitter of optimal weights has only minor effects. Structured E-I and I-E, but not I-I connectivity, is necessary for implementing the experimentally observed pattern of lateral inhibition whose strength is modulated by tuning similarity.

Weak or no spike-triggered adaptation optimizes network efficiency

We next investigated the role of within-neuron feedback triggered by each spike, , that emerges from the optimally efficient solution (Equation 5). A previous study (Gutierrez and Denève, 2019) showed that spike-triggered adaptation, together with structured connectivity, redistributes the activity from highly excitable neurons to less excitable neurons, leaving the population readout invariant. Here, we address model efficiency in presence of adapting or facilitating feedback as well as differential effects of adaptation in E and I neurons.

The spike-triggered within-neuron feedback has a time constant equal to that of the single neuron readout (E neurons) and (I neurons). The strength of the current is proportional to the difference in inverse time constants of single neuron and population readouts, . This spike-triggered current is negative, giving spike-triggered adaptation (Mensi et al., 2012), if the single-neuron readout has a longer time constant than the population readout (), or positive, giving spike-triggered facilitation, if the opposite is true (; Table 4). We expected that network efficiency would benefit from spike-triggered adaptation, because accurate encoding requires fast temporal dynamics of the population readouts, to capture fast fluctuations in the target signal, while we expected slower dynamics in the readout of a single neuron’s firing frequency, , a process that could be related to homeostatic regulation of a single neuron’s firing rate (Abbott and Nelson, 2000; Turrigiano and Nelson, 2004). In our optimal E-I network we indeed found that optimal coding efficiency is achieved in the absence of within-neuron feedback or with weak adaptation in both cell types (Figure 5A). The optimal set of time constants only weakly depended on the weighting of the encoding error with the metabolic cost (Figure 7—figure supplement 1A). We note that adaptation in E neurons promotes efficient coding because it forces every spike to be error-correcting, while a spike-triggered facilitation in E neurons would lead to additional spikes that might be redundant and reduce network efficiency. Contrary to previously proposed models of adaptation in LIF neurons (Brette and Gerstner, 2005; Schwalger and Lindner, 2013), the strength and the time constant of adaptation in our model are not independent, but they both depend on , with larger yielding both longer and stronger adaptation.
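The sign rule for the spike-triggered feedback can be made concrete with a short sketch. The argument names are hypothetical, and the proportionality constant is set to 1, which is an assumption: only the sign and the relative magnitude of the returned value are meaningful here.

```python
def feedback_strength(tau_single_ms, tau_population_ms):
    """Difference of inverse time constants (single-neuron minus population readout).

    The proportionality constant is assumed to be 1 for illustration.
    """
    return 1.0 / tau_single_ms - 1.0 / tau_population_ms


def feedback_type(tau_single_ms, tau_population_ms):
    """Classify the spike-triggered current by the sign of its strength."""
    strength = feedback_strength(tau_single_ms, tau_population_ms)
    if strength < 0.0:
        return "adaptation"    # single-neuron readout slower than population readout
    if strength > 0.0:
        return "facilitation"  # single-neuron readout faster than population readout
    return "none"              # equal time constants: no within-neuron feedback
```

For a fixed population time constant, a longer single-neuron time constant gives a more negative strength, consistent with the statement that adaptation becomes both longer and stronger.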

The relation between the time constants of the single-neuron and population readouts sets an adaptation or a facilitation current.

The population readout that evolves on a faster (slower) time scale than the single neuron readout determines a spike-triggered adaptation (facilitation) in its own cell type.

| Relative speed | Relation of time constants | Current |
|---|---|---|
| faster than | | adaptation in E |
| slower than | | facilitation in E |
| faster than | | adaptation in I |
| slower than | | facilitation in I |

Adaptation, network coding efficiency and excitation-inhibition balance.

(A) The encoding error (left), metabolic cost (middle) and average loss (right) as a function of single neuron time constants (E neurons) and (I neurons), in units of ms. These parameters set the sign, the strength, as well as the time constant of the feedback current in E and I neurons. Best performance (lowest average loss) is obtained in the top right quadrant, where the feedback current is spike-triggered adaptation in both E and I neurons. The performance measures are computed as a weighted sum of the respective measures across the E and I populations with equal weighting for E and I. All measures are plotted on the scale of the natural logarithm for better visibility. (B) Top: Log-log plot of the RMSE of the E (red) and the I (blue) estimates as a function of the time constant of the single neuron readout of E neurons, , in the regime with spike-triggered adaptation. Feedback current in I neurons is set to 0. Bottom: Same as on top, as a function of while the feedback current in E neurons is set to 0. (C) Same as in B, showing the average loss. (D) Same as in B, showing the firing rate. (E) Firing rate in E (left) and I neurons (right), as a function of time constants and . (F) Same as in E, showing the coefficient of variation. (G) Same as in E, showing the average net synaptic input, a measure of average imbalance. (H) Same as in E, showing the time-dependent correlation of synaptic inputs, a measure of instantaneous balance. All statistical results were computed on 100 simulation trials of 1 s duration. For other parameters, see Table 1.

To gain insight into the differential effect of adaptation in E vs I neurons, we set the adaptation in one cell type to 0 and varied the strength of adaptation in the other cell type by varying the time constant of the single neuron readout. With adaptation in E neurons (and no adaptation in I), we observed a slow increase of the encoding error in E neurons, while the encoding error increased faster with adaptation in I neurons (Figure 5B). Similarly, the average loss increased slowly with adaptation in E and faster with adaptation in I neurons (Figure 5C), showing that adaptation in E neurons degrades performance less than adaptation in I neurons. With increasing adaptation in E neurons, the firing rate in E neurons decreased (Figure 5D), leading to E estimates with smaller amplitude. Because E estimates are target signals for I neurons and because weaker E signals imply weaker drive to I neurons, the average loss of the I population decreased with increasing adaptation in E neurons (Figure 5C top, blue trace).

Firing rates and variability of spiking were sensitive to the strength of adaptation. As expected, adaptation in E neurons caused a decrease in the firing levels in both cell types (Figure 5D, E). In contrast, adaptation in I neurons decreased the firing rate in I neurons, but increased the firing rate in E neurons, due to a decrease in the level of inhibition. Furthermore, adaptation decreased the variability of spiking, in particular in the cell type with strong adaptation (Figure 5F), a well-known effect of spike-triggered adaptation in single neurons (Schwalger and Lindner, 2013).

In regimes with adaptation, time constants of single neuron readout influenced the average balance (Figure 5G) as well as the instantaneous balance (Figure 5H) in both E and I cell types. To gain a better understanding of the relationship between adaptation, E-I interactions and network optimality, we measured the instantaneous and time-averaged E-I balance while varying the adaptation parameters and studied their relation with the loss. With increasing adaptation in E neurons, the average imbalance became weaker in E neurons (Figure 5G, left), but stronger in I neurons (Figure 5G, right). Regimes with precise average balance in both cell types were suboptimal (compare Figure 5A, right and G), while regimes with precise instantaneous balance were highly efficient (compare Figure 5A, right and H).

To test how well the average balance and the instantaneous balance of synaptic inputs predict network efficiency, we concatenated the column-vectors of the measured average loss and of the average imbalance in each cell type and computed the Pearson correlation between these quantities. The correlation between the average imbalance and the average loss was weak in the E cell type () and close to zero in the I cell type (), suggesting almost no relation between efficiency and average imbalance. In contrast, the average loss was negatively correlated with the instantaneous balance in both E () and in I cell type (), showing that instantaneous balance of synaptic inputs is positively correlated with network efficiency. When measured for varying levels of spike-triggered adaptation, unlike the average balance of synaptic inputs, the instantaneous balance is thus mildly predictive of network efficiency.
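The correlation analysis described above amounts to flattening two grids of measurements, taken over the same parameter combinations, into vectors and computing their Pearson correlation. The sketch below uses hypothetical function names and assumes that both grids are stored as lists of rows of equal shape.

```python
import math


def concatenate_columns(grid):
    """Stack the columns of a 2D grid (list of rows) into a single vector."""
    n_rows, n_cols = len(grid), len(grid[0])
    return [grid[r][c] for c in range(n_cols) for r in range(n_rows)]


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    s_xy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    s_xx = sum((a - mean_x) ** 2 for a in x)
    s_yy = sum((b - mean_y) ** 2 for b in y)
    return s_xy / math.sqrt(s_xx * s_yy)


def grid_correlation(loss_grid, balance_grid):
    """Correlate two measures evaluated on the same parameter grid."""
    return pearson(concatenate_columns(loss_grid), concatenate_columns(balance_grid))
```

The order of concatenation does not affect the result as long as the same order is used for both grids.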

In sum, our results show that the optimally efficient solution does not include within-neuron feedback, while a model with weak and short-lasting spike-triggered adaptation is slightly suboptimal, although still highly efficient. Our results predict that information coding would be more efficient with adaptation than with facilitation. Assuming that our I neurons describe parvalbumin-positive interneurons, our results suggest that the weaker adaptation in I compared to E neurons, reported empirically (Pala and Petersen, 2015), may be beneficial for the network’s encoding efficiency.

Spike-triggered adaptation in our model captures adaptive processes in single neurons that occur on time scales lasting from a couple of milliseconds to tens of milliseconds after each spike. However, spiking in biological neurons triggers adaptation on multiple time scales, including much slower time scales on the order of seconds or tens of seconds (Pozzorini et al., 2013). Our model does not capture adaptive processes on these longer time scales (but see Gutierrez and Denève, 2019).

Non-specific currents regulate network coding properties

In our derivation of the optimal network, we obtained a non-specific external current (in the following, non-specific current) . Non-specific current captures all synaptic currents that are unrelated and unspecific to the stimulus features. This non-specific term collates effects of synaptic currents from neurons untuned to the stimulus (Levy et al., 2020; Zylberberg, 2018), as well as synaptic currents from other brain areas. It can be conceptualized as the background synaptic activity that provides a large fraction of all synaptic inputs to both E and I neurons in cortical networks (Destexhe and Paré, 1999), and which may modulate feedforward-driven responses by controlling the distance between the membrane potential and the firing threshold (Destexhe et al., 2003). Likewise, in our model, the non-specific current does not directly convey information about the feedforward input features, but influences the network dynamics.

The non-specific current comprises a mean and fluctuations (see Materials and methods). The mean is proportional to the metabolic constant and its fluctuations reflect the noise that we included in the condition for spiking. Since governs the trade-off between encoding error and metabolic cost (Equation 3), higher values of imply that more importance is assigned to the metabolic efficiency than to coding accuracy, yielding a reduction in firing rates. In the expression for the non-specific current, we found that the mean of the current is negatively proportional to the metabolic constant (see Materials and methods). Because the non-specific current is typically depolarizing, this means that increasing yields a weaker non-specific current and increases the distance between the mean membrane potential and the firing threshold. Thus, an increase of the metabolic constant is expected to make the network less responsive to the feedforward signal.

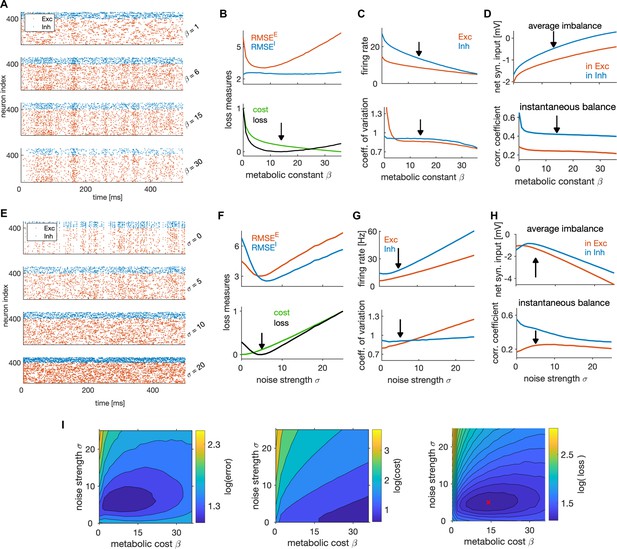

We found the metabolic constant to significantly influence the spiking dynamics (Figure 6A). The optimal efficiency was achieved for non-zero levels of the metabolic constant (Figure 6B), with the mean of the non-specific current spanning more than half of the distance between the resting potential and the threshold (Table 1). Stronger weighting of the loss of I compared to E neurons and stronger weighting of the error compared to the cost yielded a weaker optimal metabolic constant (Figure 7—figure supplement 1B). The metabolic constant modulated the firing rate as expected, with the firing rate in E and I neurons decreasing as the metabolic constant increased (Figure 6C, top). It also modulated the variability of spiking, as increasing the metabolic constant decreased the variability of spiking in both cell types (Figure 6C, bottom). Furthermore, it modulated the average balance and the instantaneous balance in opposite ways: larger values of led to regimes that had stronger average balance, but weaker instantaneous balance (Figure 6D). We note that, even with suboptimal values of the metabolic constant, the neural dynamics remained within biologically relevant ranges.

State-dependent coding and dynamics are controlled by non-specific currents.

(A) Spike trains of the efficient E-I network in one simulation trial, with different values of the metabolic constant . The network received an identical stimulus across trials. (B) Top: RMSE of E (red) and I (blue) estimates as a function of the metabolic constant. Bottom: Normalized average metabolic cost and average loss as a function of the metabolic constant. Black arrow indicates the minimum loss and therefore the optimal metabolic constant. (C) Average firing rate (top) and the coefficient of variation of the spiking activity (bottom), as a function of the metabolic constant. Black arrow marks the metabolic constant leading to optimal network efficiency in B. (D) Average imbalance (top) and instantaneous balance (bottom) as a function of the metabolic constant. (E) Same as in A, for different values of the noise strength . (F) Same as in B, as a function of the noise strength. The noise is a Gaussian random process, independent over time and across neurons. (G) Same as C, as a function of the noise strength. (H) Top: Same as in D, as a function of the noise strength. (I) The encoding error measured as RMSE (left), the metabolic cost (middle) and the average loss (right) as a function of the metabolic constant and the noise strength . Metabolic constant and noise strength that are optimal for the single parameter search (in B and F) are marked with a red cross in the figure on the right. For plots in B-D and F-I, we computed and averaged results over 100 simulation trials of 1 s duration. For other parameters, see Table 1.

The fluctuation part of the non-specific current, modulated by the noise strength that we added in the definition of spiking rule for biological plausibility (see Materials and methods), strongly affected the neural dynamics as well (Figure 6E). The optimal performance was achieved with non-vanishing noise levels (Figure 6F), similarly to previous work showing that the noise prevents excessive network synchronization that would harm performance (Chalk et al., 2016; Koren and Denève, 2017; Timcheck et al., 2022). The optimal noise strength depended on the weighting of the error with the cost, with strong weighting of the error predicting stronger noise (Figure 7—figure supplement 1C).

The average firing rate of both cell types, as well as the variability of spiking in E neurons, increased with noise strength (Figure 6G), and some level of noise in the non-specific inputs was necessary to establish the optimal level of spiking variability. Nevertheless, we measured significant levels of spiking variability already in the absence of noise, with a coefficient of variation of about 0.8 in E and 0.9 in I neurons (Figure 6G, bottom). This indicates that the recurrent network dynamics generates substantial variability even in the absence of an external source of noise. The average and instantaneous balance of synaptic currents depended non-linearly on the noise strength (Figure 6H). Due to decorrelation of membrane potentials by the noise, instantaneous balance in I neurons decreased with increasing noise strength (Figure 6H, bottom).
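The coefficient of variation (CV) quoted above can be illustrated with a minimal sketch. It assumes the standard definition, the standard deviation of inter-spike intervals divided by their mean; the function name `coefficient_of_variation` and the two synthetic spike trains are illustrative assumptions and are not taken from the network simulations.

```python
import numpy as np


def coefficient_of_variation(spike_times):
    """CV of inter-spike intervals (ISIs): std(ISI) / mean(ISI)."""
    spike_times = np.sort(np.asarray(spike_times, dtype=float))
    isi = np.diff(spike_times)
    if isi.size < 2 or isi.mean() == 0:
        return float("nan")  # not enough spikes to estimate variability
    return float(isi.std() / isi.mean())


rng = np.random.default_rng(0)

# Perfectly regular spike train (one spike every 10 ms): CV close to 0.
regular = np.arange(0.0, 1.0, 0.01)

# Poisson-like spike train (exponential ISIs with 10 ms mean): CV close to 1.
poisson = np.cumsum(rng.exponential(scale=0.01, size=5000))

cv_regular = coefficient_of_variation(regular)
cv_poisson = coefficient_of_variation(poisson)
```

On this scale, the values of about 0.8 and 0.9 reported without external noise sit much closer to the irregular, Poisson-like end than to the regular end.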

Next, we investigated the joint impact of the metabolic constant and the noise strength on network optimality. We expected these two parameters to be related, because a larger noise strength requires a stronger metabolic constant to prevent the activity of the network from being dominated by noise. We thus performed a two-dimensional parameter search (Figure 6I). As expected, the optima of the metabolic constant and the noise strength were positively correlated: weaker noise required a lower metabolic constant, and vice versa. While achieving maximal efficiency at non-zero levels of the metabolic constant and noise (see Figure 6I) might seem counterintuitive, we speculate that such a setting is optimal because some noise in the non-specific current prevents over-synchronization and over-regularity of firing that would harm efficiency, similarly to what was shown in previous works (Chalk et al., 2016; Koren and Denève, 2017; Timcheck et al., 2022). In the presence of noise, a non-zero metabolic constant is needed to suppress inefficient spikes that are purely induced by noise, do not contribute to coding, and increase the error. This gives rise to a form of stochastic resonance, where an optimal level of noise helps to detect the signal coming from the feedforward currents.
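The two-dimensional search described above can be sketched as a plain grid search. The weighted form of `average_loss` is an assumption that combines the weightings mentioned in the figure captions (error versus cost, and E versus I loss); `grid_search_2d` and `toy_evaluate` are hypothetical names, and `toy_evaluate` is a placeholder surface with a known minimum, not a simulation of the network.

```python
import numpy as np


def average_loss(rmse_e, rmse_i, cost_e, cost_i, g_e=0.5, g_l=0.7):
    """Assumed form: weighted sum of error and cost, then of E and I losses."""
    loss_e = g_l * rmse_e + (1.0 - g_l) * cost_e
    loss_i = g_l * rmse_i + (1.0 - g_l) * cost_i
    return g_e * loss_e + (1.0 - g_e) * loss_i


def grid_search_2d(evaluate, values_a, values_b):
    """Evaluate the loss on a grid and return the minimizing parameter pair."""
    losses = np.array([[evaluate(a, b) for b in values_b] for a in values_a])
    ia, ib = np.unravel_index(np.argmin(losses), losses.shape)
    return values_a[ia], values_b[ib], losses


def toy_evaluate(metabolic_constant, noise_strength):
    """Placeholder loss surface (NOT the network): minimum at (3.0, 1.0)."""
    rmse = 1.0 + (metabolic_constant - 3.0) ** 2 + (noise_strength - 1.0) ** 2
    cost = 0.5  # constant placeholder for the metabolic cost
    return average_loss(rmse, rmse, cost, cost)


metabolic_values = np.linspace(0.0, 6.0, 13)  # hypothetical grid, arbitrary units
noise_values = np.linspace(0.0, 2.0, 9)       # hypothetical grid, arbitrary units
best_metabolic, best_noise, loss_grid = grid_search_2d(
    toy_evaluate, metabolic_values, noise_values
)
```

In an actual analysis, `toy_evaluate` would be replaced by a function that runs the network for each parameter pair and averages the error and cost over trials.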

In summary, non-specific external currents derived in our optimal solution have a major effect on coding efficiency and on neural dynamics. In qualitative agreement with empirical measurements (Destexhe and Paré, 1999; Destexhe et al., 2003), our model predicts that more than half of the average distance between the resting potential and firing threshold is accounted for by non-specific synaptic currents. Similarly to previous theoretical work (Chalk et al., 2016; Koren and Denève, 2017), we find that some level of external noise, in the form of a random fluctuation of the non-specific synaptic current, is beneficial for network efficiency. This remains a prediction for experiments.

Optimal ratio of E-I neuron numbers and of the mean I-I to E-I synaptic efficacy coincide with biophysical measurements

Next, we investigated how coding efficiency and neural dynamics depend on the ratio of the number of E and I neurons (the E-I ratio) and on the relative synaptic strengths between E-I and I-I connections.

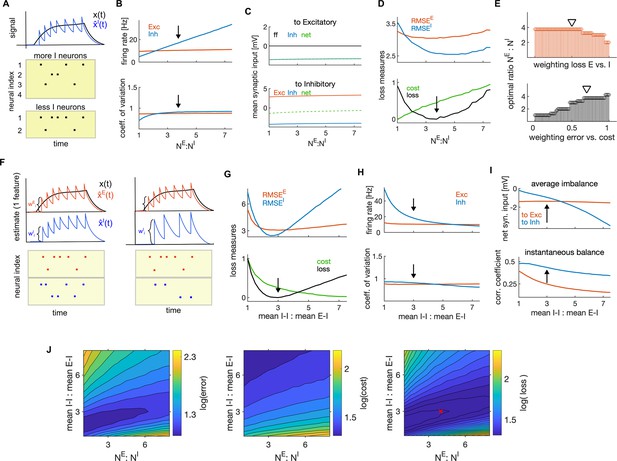

Efficiency objectives (Equation 3) are based on population, rather than single-neuron, activity. Our efficient E-I network thus realizes a computation of the target representation that is distributed across multiple neurons (Figure 7A). Following previous reports (Barrett et al., 2016), we predict that, if the number of neurons within the population decreases, neurons have to fire more spikes to achieve an optimal population readout because the task of tracking the target signal is distributed among fewer neurons. To test this prediction, we varied the number of I neurons while keeping the number of E neurons constant. As predicted, a decrease of the number of I neurons (and thus an increase in the ratio of the number of E to I neurons) caused a linear increase in the firing rate of I neurons, while the firing rate of E neurons stayed constant (Figure 7B, top). However, the variability of spiking and the average synaptic inputs remained relatively constant in both cell types as we varied the E-I ratio (Figure 7B, bottom, C), indicating a compensation for the change in the ratio of E-I neuron numbers through adjustment in the firing rates. These results are consistent with the observation in neuronal cultures of a linear change in the rate of postsynaptic events but unchanged postsynaptic current in either E or I neurons for variations in the E-I neuron number ratio (Sukenik et al., 2021).
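The linear dependence of the I firing rate on the E-I ratio follows from simple arithmetic under one simplifying assumption: the I population as a whole needs a fixed total number of spikes per second to track the signal. The sketch below illustrates this; the population size and the total rate are hypothetical numbers chosen for illustration only.

```python
def per_neuron_rate(total_rate_hz, n_neurons):
    """Mean firing rate per neuron when a fixed total rate is shared equally."""
    return total_rate_hz / n_neurons


n_e = 400               # hypothetical number of E neurons, kept constant
total_i_rate = 1000.0   # hypothetical total spikes/s required from the I population
ei_ratios = [1, 2, 4, 8]

# Fewer I neurons (larger E-I ratio) means each I neuron must fire more.
i_rates = [per_neuron_rate(total_i_rate, n_e / ratio) for ratio in ei_ratios]
```

With the number of E neurons fixed, the per-neuron I rate equals the total rate times the E-I ratio divided by the number of E neurons, so it grows in proportion to the ratio.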

Optimal ratios of E-I neuron numbers and of mean I-I to E-I efficacy.

(A) Schematic of the effect of changing the number of I neurons on firing rates of I neurons. As encoding of the stimulus is distributed among more I neurons, the number of spikes per I neuron decreases. (B) Average firing rate as a function of the ratio of the number of E to I neurons. Black arrow marks the optimal ratio. (C) Average net synaptic input in E neurons (top) and in I neurons (bottom). (D) Top: Encoding error (RMSE) of the E (red) and I (blue) estimates, as a function of the ratio of E-I neuron numbers. Bottom: Same as on top, showing the cost and the average loss. Black arrow shows the minimum of the loss, indicating the optimal parameter. (E) Top: Optimal ratio of the number of E to I neurons as a function of the weighting of the average loss of E and I cell type (using the weighting of the error and cost of 0.7 and 0.3, respectively). Bottom: Same as on top, measured as a function of the weighting of the error and the cost when computing the loss. (The weighting of the losses of E and I neurons is 0.5.) Black triangles mark weightings that we typically used. (F) Schematic of the readout of the spiking activity of E (red) and I population (blue) with equal amplitude of decoding weights (left) and with stronger decoding weight in I neurons (right). Stronger decoding weight in I neurons results in a stronger effect of spikes on the readout, leading to fewer spikes by the I population. (G–H) Same as in D and B, as a function of the ratio of mean I-I to E-I efficacy. (I) Average imbalance (top) and instantaneous balance (bottom), as a function of the ratio of mean I-I to E-I efficacy. (J) The encoding error (RMSE; left), the metabolic cost (middle) and the average loss (right) as a function of the ratio of E-I neuron numbers and the ratio of mean I-I to E-I connectivity. The optimal ratios obtained with single parameter search (in D and G) are marked with a red cross.
All statistical results were computed on 100 simulation trials of 1 second duration. For other parameters, see Table 1.

The ratio of the number of E to I neurons had a significant influence on coding efficiency. We found a unique minimum of the encoding error of each cell type, while the metabolic cost increased linearly with the ratio of the number of E and I neurons (Figure 7D). Using the usual weighting, we found the optimal ratio of E to I neuron numbers to be in the range observed experimentally in cortical circuits (Figure 7D, bottom, black arrow; Markram et al., 2004). The optimal ratio depended on the weighting of the error relative to the cost, decreasing as the cost of firing was weighted more strongly (Figure 7E, bottom). The encoding error (RMSE) alone, without considering the metabolic cost, also predicted an optimal ratio of the number of E to I neurons within a plausible physiological range, with stronger weightings of the encoding error by I neurons predicting higher ratios (Figure 7E, top).

Next, we investigated the impact of the strength of E and I synaptic efficacy (EPSPs and IPSPs). As evident from the expression for the population readouts (Equation 2), the magnitude of tuning parameters (which are also decoding weights) determines the amplitude of jumps of the population readout caused by spikes (Figure 7F). The larger these weights are, the larger is the impact of spikes on the population signals.
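The effect of the decoding weights on the readout can be sketched with a leaky integration of spikes. The leaky form used here, the Euler discretization, and the names `population_readout`, `tau` and `dt` are assumptions made for illustration; the authoritative definition is Equation 2 of the paper, which is not reproduced in this section.

```python
import numpy as np


def population_readout(spikes, weights, tau=0.01, dt=0.0001):
    """Leaky readout of spikes (assumed form, Euler steps).

    spikes:  array (n_steps, n_neurons) of 0/1 spike indicators
    weights: array (n_features, n_neurons) of decoding weights
    returns: array (n_steps, n_features)
    """
    n_steps = spikes.shape[0]
    readout = np.zeros((n_steps, weights.shape[0]))
    x = np.zeros(weights.shape[0])
    for t in range(n_steps):
        # Leak towards zero, then jump by the decoding weight of each spiking neuron.
        x = x - dt * x / tau + weights @ spikes[t]
        readout[t] = x
    return readout


# One neuron, one feature, a single spike at time step 10.
spikes = np.zeros((100, 1))
spikes[10, 0] = 1.0

small = population_readout(spikes, np.array([[1.0]]))  # decoding weight 1
large = population_readout(spikes, np.array([[3.0]]))  # decoding weight 3
```

In this sketch the same spike moves the readout three times further when the decoding weight is three times larger, which is why a population with larger weights needs fewer spikes to cover the same signal excursion.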

E and I synaptic efficacies depend on the tuning parameters. We parametrized the distribution of tuning parameters as uniform distributions centered at zero, but allowed the spread of the distributions to differ between E and I cell types (Materials and methods). In the optimally efficient network, as found analytically (Materials and methods section ‘Dynamic equations for the membrane potentials’), the E-I connectivity is the transpose of the I-E connectivity, which implies that these connectivities are exactly balanced and have the same mean. We also showed analytically that, with tuning parameters drawn from uniform distributions, the scaling of the E-I (equal to I-E) and I-I synaptic connectivity is controlled by the variance of the tuning parameters of the pre- and postsynaptic populations. Using these insights, we were able to analytically evaluate the mean E-I and I-I synaptic efficacy (see Materials and methods section ‘Parametrization of synaptic connectivity’).
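The transpose relation and the scaling with the spread of the tuning parameters can be checked numerically in a minimal sketch. Several choices here are assumptions for illustration: connectivity is taken as the rectified similarity of tuning vectors, the spread is interpreted as a standard deviation, and the population sizes, the spreads and the names `sample_tuning`, `w_e`, `w_i`, `j_ei`, `j_ie` are hypothetical.

```python
import numpy as np


def sample_tuning(n_features, n_neurons, sigma, rng):
    """Tuning parameters from a zero-centered uniform distribution with std sigma."""
    half_width = np.sqrt(3.0) * sigma  # U(-a, a) has standard deviation a / sqrt(3)
    return rng.uniform(-half_width, half_width, size=(n_features, n_neurons))


rng = np.random.default_rng(1)
n_features, n_e, n_i = 3, 400, 100   # hypothetical sizes
sigma_e, sigma_i = 1.0, 3.0          # hypothetical spreads

w_e = sample_tuning(n_features, n_e, sigma_e, rng)
w_i = sample_tuning(n_features, n_i, sigma_i, rng)

# Assumed form: connection strength is the rectified tuning similarity.
j_ei = np.maximum(w_e.T @ w_i, 0.0)   # E-I block, shape (n_e, n_i)
j_ie = np.maximum(w_i.T @ w_e, 0.0)   # I-E block, shape (n_i, n_e)
j_ii = np.maximum(w_i.T @ w_i, 0.0)   # I-I block, shape (n_i, n_i)

# Doubling the spread of the I tuning parameters doubles the E-I strengths.
j_ei_doubled = np.maximum(w_e.T @ (2.0 * w_i), 0.0)
```

Under these assumptions, `j_ei` equals the transpose of `j_ie`, so both blocks share the same mean, and the mean E-I strength scales linearly with the spread of either population's tuning parameters.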

We next searched for the optimal ratio of the mean I-I to E-I efficacy as the parameter that maximizes network efficiency. Network efficiency was maximized when this ratio was about 3:1 (Figure 7G). Our results suggest an optimal E-I and I-E synaptic efficacy, averaged across neuronal pairs, of 0.75 mV, and an optimal I-I efficacy of 2.25 mV, values that are consistent with empirical measurements in the primary sensory cortex (Cossell et al., 2015; Pala and Petersen, 2015; Campagnola et al., 2022). The optimal ratio of mean I-I to E-I connectivity decreased when the error was weighted more with respect to the metabolic cost (Figure 7—figure supplement 1D).

Similarly to the ratio of E-I neuron numbers, a change in the ratio of mean I-I to E-I synaptic efficacy was compensated for by a change in firing rates, with stronger I-I synapses leading to a decrease in the firing rate of I neurons (Figure 7H, top). Conversely, weakening the E-I (and I-E) synapses resulted in an increase in the firing rate in E neurons (Figure 7—figure supplement 1E, F). This is easily understood by considering that weaker E-I and I-E synapses activate the lateral inhibition in E neurons less strongly (Figure 3) and thus lead to an increase in the firing rate of E neurons. We also found that single neuron variability remained almost unchanged when varying the ratio of mean I-I to E-I efficacy (Figure 7H, bottom) and that the optimal ratio yielded optimal levels of average and instantaneous balance of synaptic inputs, as found previously (Figure 7I). The instantaneous balance monotonically decreased with increasing ratio of I-I to E-I efficacy (Figure 7I, bottom, Figure 7—figure supplement 1G).

Further, we tested the co-dependency of network optimality on the above two ratios with a two-dimensional parameter search. We expected the optimal values of these two parameters to be positively correlated, because both of them regulate the level of instantaneous E-I balance in the network. We found that a lower ratio of E-I neuron numbers indeed predicts a lower ratio of the mean I-I to E-I connectivity (Figure 7J). This is because fewer E neurons bring less excitation into the network, thus requiring less inhibition to achieve optimal levels of instantaneous balance. The co-dependency of the two parameters in affecting network optimality might be informative as to why E-I neuron number ratios may vary across species (for example, it is reported to be 2:1 in human cortex [Fang et al., 2022] and 4:1 in mouse cortex). Our model predicts that lower E-I neuron number ratios require weaker mean I-I to E-I connectivity.

In summary, our analysis suggests that optimal coding efficiency is achieved with more E neurons than I neurons and with mean I-I synaptic efficacy stronger than the E-I and I-E efficacy, and that these two parameters are positively correlated. Optimal ratios of E to I neurons and of connection strengths are broadly consistent with empirical measurements of these parameters in biological networks. The optimal network has fewer I than E neurons, but the impact of spikes of I neurons on the population readout is stronger, which also suggests that spikes of I neurons convey more information.

Dependence of efficient coding and neural dynamics on the stimulus statistics

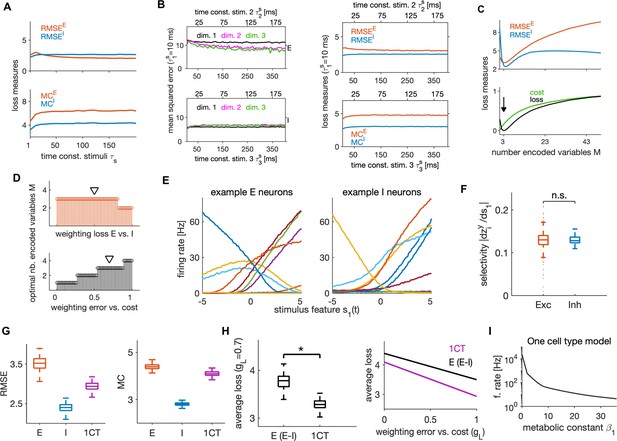

We further investigated how the network’s behavior depends on the timescales of the input stimulus features. We manipulated the stimulus timescales by changing the time constants of the Ornstein-Uhlenbeck (OU) processes that generate the stimulus features. The network efficiently encoded stimulus features when their time constants varied between 1 and 200 ms, with stable encoding error, metabolic cost (Figure 8A) and neural dynamics (Figure 8—figure supplement 1A, B). To examine whether the network can also efficiently encode stimuli that evolve on different timescales, we tested its performance in response to three input variables, each with a different timescale. We kept the timescale of the first variable fixed, while we varied the time constants of the other two, keeping the time constant of the third twice as long as that of the second. The network performed well in response to such stimuli, and its performance was stable across timescales (Figure 8B). The prediction that the network can encode information effectively over a wide range of timescales can be tested experimentally, by measuring the sensory information encoded by the activity of a set of neurons while varying the sensory stimulus timescales over a wide range.
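Stimulus features with different timescales can be generated with a minimal sketch of an OU process, integrated with the Euler-Maruyama scheme. The discretization, the noise amplitude `sigma`, the time step, and the specific time constants below are assumptions for illustration and are not the parameters used in the paper (see Table 1 for those).

```python
import numpy as np


def simulate_ou(tau, sigma=1.0, dt=1e-4, duration=1.0, rng=None):
    """Euler-Maruyama simulation of ds/dt = -s / tau + sigma * white noise."""
    rng = np.random.default_rng() if rng is None else rng
    n_steps = int(round(duration / dt))
    s = np.zeros(n_steps)
    for t in range(1, n_steps):
        drift = -s[t - 1] / tau
        s[t] = s[t - 1] + dt * drift + sigma * np.sqrt(dt) * rng.standard_normal()
    return s


rng = np.random.default_rng(2)

# Three features: the first time constant is fixed, the third is twice the second.
tau_first = 0.010    # hypothetical value, in seconds
tau_second = 0.020   # hypothetical value, varied across conditions
time_constants = [tau_first, tau_second, 2.0 * tau_second]

stimulus = np.stack([simulate_ou(tau, rng=rng) for tau in time_constants])
```

Each row of `stimulus` is one feature; longer time constants give more slowly varying traces, which is the property manipulated in the analysis above.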

Dependence of efficient coding and neural dynamics on stimulus parameters and comparison of E-I versus one cell type model architecture.