A simple regulatory architecture allows learning the statistical structure of a changing environment

Abstract

Bacteria live in environments that are continuously fluctuating and changing. Exploiting any predictability of such fluctuations can lead to an increased fitness. On longer timescales, bacteria can ‘learn’ the structure of these fluctuations through evolution. However, on shorter timescales, inferring the statistics of the environment and acting upon this information would need to be accomplished by physiological mechanisms. Here, we use a model of metabolism to show that a simple generalization of a common regulatory motif (end-product inhibition) is sufficient both for learning continuous-valued features of the statistical structure of the environment and for translating this information into predictive behavior; moreover, it accomplishes these tasks near-optimally. We discuss plausible genetic circuits that could instantiate the mechanism we describe, including one similar to the architecture of two-component signaling, and argue that the key ingredients required for such predictive behavior are readily accessible to bacteria.

eLife digest

Associations inferred from previous experience can help an organism predict what might happen the next time it faces a similar situation. For example, it could anticipate the presence of certain resources based on a correlated environmental cue.

The complex neural circuitry of the brain allows such associations to be learned and unlearned quickly, certainly within the lifetime of an animal. In contrast, the sub-cellular regulatory circuits of bacteria are only capable of very simple information processing. Thus, in bacteria, the ‘learning’ of environmental patterns is believed to mostly occur by evolutionary mechanisms, over many generations.

Landmann et al. used computer simulations and a simple theoretical model to show that bacteria need not be limited by the slow speed of evolutionary trial and error. A basic regulatory circuit could, theoretically, allow a bacterium to learn subtle relationships between environmental factors within its lifetime. The essential components for this simulation can all be found in bacteria – including a large number of ‘regulators’, the molecules that control the rate of biochemical processes. And indeed, some organisms often have more of these biological actors than appears to be necessary. The results of Landmann et al. provide new hypothesis for how such seemingly ‘superfluous’ elements might actually be useful.

Knowing that a learning process is theoretically possible, experimental biologists could now test if it appears in nature. Placing bacteria in more realistic, fluctuating conditions instead of a typical stable laboratory environment could demonstrate the role of the extra regulators in helping the microorganisms to adapt by ‘learning’.

Introduction

Organisms that live in changing environments evolve strategies to respond to the fluctuations. Many such adaptations are reactive, for example sensory systems that allow detecting changes when they occur and responding to them. However, adaptations can be not only reactive, but also predictive. For example, circadian clocks allow photosynthetic algae to reorganize their metabolism in preparation for the rising sun (Bell-Pedersen et al., 2005; Husain et al., 2019). Another example is the anticipatory behavior in E. coli, which allows it to prepare for the next environment under its normal cycling through the mammalian digestive tract (Savageau, 1983); similar behaviors have been observed in many species (Tagkopoulos et al., 2008; Mitchell et al., 2009).

All these behaviors effectively constitute predictions about a future environment: the organism improves its fitness by exploiting the regularities it ‘learns’ over the course of its evolution (Mitchell and Lim, 2016). Learning such regularities can be beneficial even if they are merely statistical in nature. A prime example is bet hedging: even if the environment changes stochastically and without warning, a population that learns the statistics of switching can improve its long-term fitness, for example, by adopting persistor phenotypes with appropriate probability (Kussell and Leibler, 2005; Veening et al., 2008). The seemingly limitless ingenuity of evolutionary trial-and-error makes it plausible that virtually any statistical structure of the environment that remains constant over an evolutionary timescale could, in principle, be learnt by an evolving system, and harnessed to improve its fitness (Watson and Szathmáry, 2016).

However, the statistical structure of the environment can itself change, and this change can be too quick to be learned by evolution (Figure 1A). For example, an organism might experience a period of stability followed by a period of large fluctuations, or an environment where two resources are correlated, and then another where they are not. Note that there are two key timescales here – that of the fluctuations themselves (which we assume to be fast), and the slower timescale on which the structure of those fluctuations changes. One expects such scenarios to be common in an eco-evolutionary context. As an example, consider a bacterium in a small pool of water. Its immediate environment, shaped by local interactions, is fluctuating on the timescale at which the bacterium changes neighbors. The statistical properties of these fluctuations depend on the species composition of the pool. As such, the fast fluctuations are partially predictable, and learning their structure could help inform the fitness-optimizing strategy: a neighbor encountered in a recent past is likely to be seen again in the near future. However, these statistics change on an ecological timescale, and such learning would therefore need to be accomplished by physiological, rather than evolutionary, mechanisms.

Learning environment statistics can benefit living systems, but is a difficult problem.

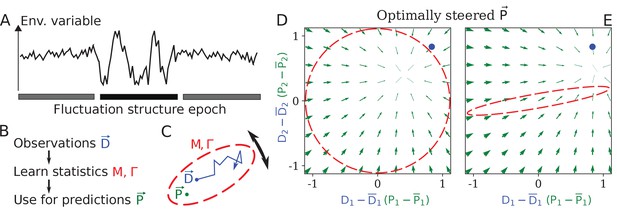

(A) An environment is characterized not only by its current state, but also by its fluctuation structure, such as variances and correlations of fluctuating environmental parameters. In this work, we consider an environment undergoing epochs that differ in their fluctuation structure. Epochs are long compared to the physiological timescale, but switch faster than the evolutionary timescale. (B) The fluctuation structure can inform the fitness-maximizing strategy, but cannot be sensed directly. Instead, it would need to be learned from past observations, and used to inform future behavior. (C) To formalize the problem, we consider a situation where some internal physiological quantities must track fluctuating external factors undergoing a random walk. Since it is impossible to react instantaneously, always lags behind . The dashed ellipse illustrates the fluctuation structure of (encoded in parameters and Γ, see text), and changes on a slower timescale than the fluctuations of . (D, E) The optimal behavior in the two-dimensional version of our problem, under a constrained maximal rate of change . For a given current (blue dot), the optimal control strategy would steer any current (green arrows) toward the best guess of the future , which depends on the fluctuation structure (red ellipse: (D) fluctuations are uncorrelated and isotropic; (E) fluctuations have a preferred direction). The optimal strategy is derived using control theory (Appendix 1, section 'Control theory calculation').

On a physiological timescale, this problem is highly nontrivial: the organism would have to perform inference from prior observations, encode them in memory, and act upon this knowledge (Figure 1B). It is clear that solutions to this problem do exist: such behaviors, common in neural systems, can be implemented by neural-network-like architectures; and these known architectures can be translated into biochemical networks (Hjelmfelt et al., 1991; Kobayashi, 2010; Fages et al., 2017; Katz and Springer, 2016; Katz et al., 2018). But single-celled organisms operate in a severely hardware-limited regime rarely probed by neuroscience. Streamlined by evolution, bacterial genomes quickly shed any unused complexity. Whether we could expect learning-like behaviors from bacteria depends on whether useful networks could be simple enough to plausibly be beneficial.

Known examples of phenotypic memory, for example, when the response is mediated by a long-lived protein, can be interpreted as a simple form of learning (Lambert et al., 2014; Hoffer et al., 2001); circuits capable of adapting to the current mean of a fluctuating signal, as in bacterial chemotaxis (Barkai and Leibler, 1997), also belong in this category. Prior theory work has also proposed that simple genetic circuits could learn more subtle binary features, such as a (transient) presence or absence of a correlation between two signals (Sorek et al., 2013).

Here, we show that a simple generalization of a ubiquitous regulatory motif, the end-product inhibition, can learn, store, and ‘act upon’ the information on continuous-valued features such as timescales and correlations of environmental fluctuations, and moreover, can do so near-optimally. We identify the key ingredients giving rise to this behavior, and argue that their applicability is likely more general than the simple metabolically inspired example used here.

Results

The setup

For a simple model capturing some of the challenges of surviving in a fluctuating environment, consider a situation where some internal physiological quantities must track fluctuating external variables . For example, the expression of a costly metabolic pathway would ideally track the availability of the relevant nutrient, or the solute concentration in the cytoplasm might track the osmolarity of the environment. In abstract terms, we describe these environmental pressures by the time-dependent , and postulate that the organism fitness is determined by the average mismatch , a quantity we will henceforth call ‘performance’. Here and below, angular brackets denote averaging over time.

In this simple model, a given static clearly defines a unique optimal state ; the regulatory challenge is entirely due to being a fluctuating quantity. The challenges faced by real organisms are certainly vastly more rich: even in the static case, the optimal behavior may not be unique, or even well-defined (optimal under what constraints?); and in the dynamic case, the future state of the environment could be affected by past actions of the organism. These considerations can add layers of complexity to the problem, but our minimal model is sufficient to focus on the basic issues of sensing, learning and responding to changing fluctuation statistics of external factors.

If changes sufficiently slowly, the organism can sense it and adapt accordingly. We, instead, are interested in the regime of rapid fluctuations. When changes in are too rapid for the organism to match to exactly, it can rely on statistical structure. At the simplest level, the organism could match the mean, setting . However, information on higher-order statistics, for example correlations between D1 and D2, can further inform the behavior and improve fitness.

To see this, in what follows, we will consider the minimal case of such structured fluctuations, namely a -dimensional vector undergoing a random walk in a quadratic potential (the Ornstein—Uhlenbeck process):

with mean , fluctuation strength Γ, independent Gaussian random variables with zero mean and unit variance, and the matrix defining the potential.

In this system, the relevant ‘fluctuation structure’ is determined by and Γ. In one dimension, Equation (1) gives a variance of . In two dimensions, denoting the eigenvalues of as , the stationary distribution of the fluctuating is a Gaussian distribution with principal axes oriented along the eigenvectors of , and standard deviations along these directions given by and . Intuitively, we can think of the fluctuating as filling out an ellipse (Figure 1C). Going forward, when we refer to learning fluctuation structure, we mean learning properties of and Γ.

If and Γ are known, the optimal strategy minimizing , where is set by Equation (1), can be computed exactly, as a function of the maximum allowed rate of change (Liberzon, 2011). (If we do not constrain , the optimal behavior is of course .) Briefly, the optimal behavior is to steer toward the best guess of the expected future (see Appendix 1, section 'Control theory calculation'). This best guess depends on the fluctuation structure, as illustrated by the comparison between Figure 1D and E for an isotropic and an anisotropic .

However, in our problem, we will assume that and Γ do not stay constant long enough to be learned by evolution, and thus are unknown to the system. In this regime, it is not clear that the behavior of an - and Γ-optimized system is relevant. Nevertheless, we will describe a regulatory architecture consisting of common regulatory elements that will adapt its responsiveness to the fluctuation structure of its input (‘learn’); for example, in the two-dimensional case, it will indeed develop the anisotropic response shown in Figure 1E. Moreover, we will find the steady-state performance of our architecture to be near-optimal, when compared to the theoretical ceiling of a system that knows and Γ perfectly.

Proposed architecture: end-product inhibition with an excess of regulators

The section above was intentionally very general. To discuss solutions available to cells, it is convenient to restrict the scope from this general formulation to a more specific metabolically-inspired case. From here onwards, let be the instantaneous demand in metabolite xi (determined by external factors), and be the rate at which the metabolite is produced, with both defined in units of metabolite concentration per unit time. The number of components of the vector now has the meaning of the number of metabolites, and we will denote it as . The cell needs to match to (or, equivalently, maintain the homeostasis of the internal metabolite concentrations xi).

The simplest way to solve this problem is via feedback inhibition. Consider first the case of a single metabolite . If an accumulation of inhibits its own synthesis, a decreased demand will automatically translate into a decreased production. For our purposes, we will model this scenario by placing the synthesis of metabolite under the control of a regulator (e.g. a transcription factor), which is, in turn, inhibited by (Figure 2A). For simplicity, we will measure regulator activity directly in units of equivalent production of . The dynamics of this system, linearized for small fluctuations of metabolite concentration , can be written in the following form (see Appendix 1, section 'Simple end-product inhibition):

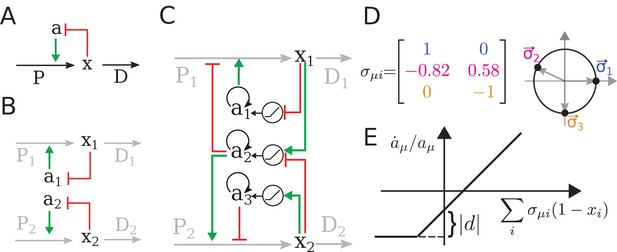

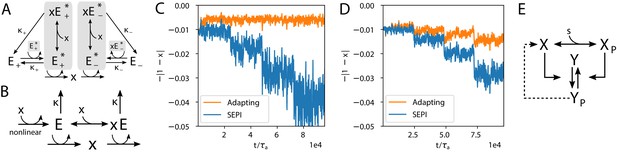

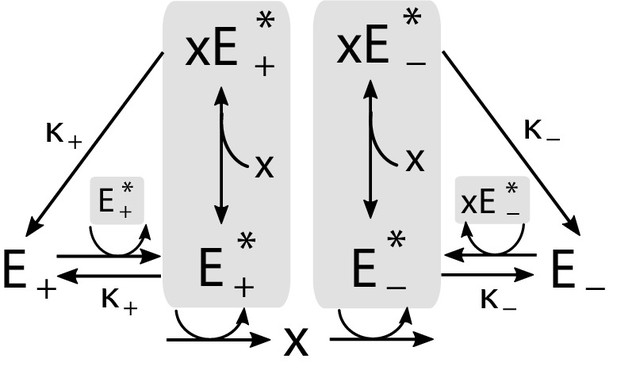

The regulatory architecture we consider is a simple generalization of end-product inhibition.

(A) Simple end-product inhibition (SEPI) for one metabolite. Green arrows show activation, red arrows inhibition. (B) Natural extension of SEPI to several metabolites. (C) We consider regulatory architectures with more regulators than metabolites, with added self-activation (circular arrows) and a nonlinear activation/repression of regulators by the metabolite concentrations xi (pictograms in circles). (D) Visualizing a regulation matrix for two metabolites. In this example, the first regulator described by activates the production of x1; the second inhibits x1 and activates x2. For simplicity, we choose vectors of unit length, which can be represented by a dot on the unit circle. This provides a convenient way to visualize a given regulatory architecture. (E) The nonlinear dependence of regulator activity dynamics on metabolite concentrations xi in our model (see Equation 4).

Here, we introduced P0 with dimension of production (concentration per time) to render dimensionless. In Equation 2c, λ has the units of concentration × time, and setting defines a time scale for changes in regulator activity. Assuming the dynamics of metabolite concentrations are faster than regulatory processes, and choosing the units so that and , we simplify the equations to:

We will refer to this architecture as simple end-product inhibition (SEPI). For two metabolites , the straightforward generalization is to have two independent copies of this circuit, with two regulators a1, a2 (Figure 2B). Denoting the number of regulators as , we note that in the SEPI architecture, there are as many regulators as there are metabolites: .

The architecture we will describe builds on this widely used regulatory motif, and relies on three added ingredients:

An excess of regulators: ;

Self-activation of regulators;

Nonlinear activation/repression of the regulators by the metabolite concentrations xi.

Here and below, we use index μ for regulators () and index for metabolites ().

These three ingredients, we claim, will be sufficient for the circuit to both learn higher order statistics and to use this information appropriately when matching the production to demand. It is important to emphasize that all three are readily accessible to cells. In fact, there are multiple ways to build regulatory circuits exhibiting the proposed behavior using common regulatory elements. To focus on the general mechanism rather than any one particular implementation, we will defer describing these example circuits until later in the text (Figure 6); here, we will consider a minimal modification of Equation (3) that contains the required ingredients:

This architecture bears a similarity to neural networks, and, as we will see, the familiar intuition about the value of extra ‘hidden nodes’ indeed holds. However, we caution the reader not to rely too heavily on this analogy. For example, here is a constant matrix describing how the activities of regulators control the synthesis of metabolites xi.

For two metabolites () as in Figure 2C, each regulator is summarized by a 2-component vector ; its components can be of either sign (or zero) and specify how strongly the regulator is activating or repressing the synthesis of metabolite xi. For simplicity, below, we will choose these vectors to be of unit length. Then, each regulator is fully characterized by an angle in the plane, which allows for a convenient visualization of the regulatory systems (Figure 2D). The defines the regulatory logic of our system and does not change with time. The parameter allows us to tune the strength of the simple nonlinearity (Figure 2E); below we set (strong nonlinearity) unless explicitly stated otherwise. As we will show later, the learning behavior is also observed for more realistic functions such as the Hill function, but the simple piece-wise linear form of Equation (4) will help us relate the observed behavior to specifically nonlinearity as opposed to, for example, cooperativity (the Hill parameter tunes both simultaneously). Finally, the parameter κ reflects degradation and is assumed to be small: . Previously, for SEPI, it could be neglected, but here, it will matter due to the nonlinearity; for more details, see Appendix 1, section 'Simple end-product inhibition'. The parameters used in simulations are all listed in Appendix 1, section 'Parameters used in figures'.

Just like simple end-product inhibition in Equation (3), the modified system Equation (4) will correctly adapt production to any static demand (see Appendix 1, section 'Adaptation to static demand'). In the following, we will show that the added ingredients also enable learning the structure of fluctuating environments. For this purpose, we expose our system to demands with fixed means () but a changing fluctuation structure.

The regulatory architecture described above outperforms simple end-product inhibition by learning environment statistics

To show that our system is able to adapt to different fluctuation structures, we probe it with changing environmental statistics, and show that it, first, learns these statistics, and, second, is able to make use of this information in its behavior.

For simplicity, we start with the 1-dimensional case (Figure 3A–F). In dimension , an excess of regulators means we have both an activator and a repressor for the production of (Figure 3A). This is reminiscent of paradoxical regulation (Hart et al., 2012). We probe our system with changing environmental statistics by exposing it to a demand with an increasing variance (Figure 3B,C). As a reminder, here and below, the mean demand is fixed at 1.

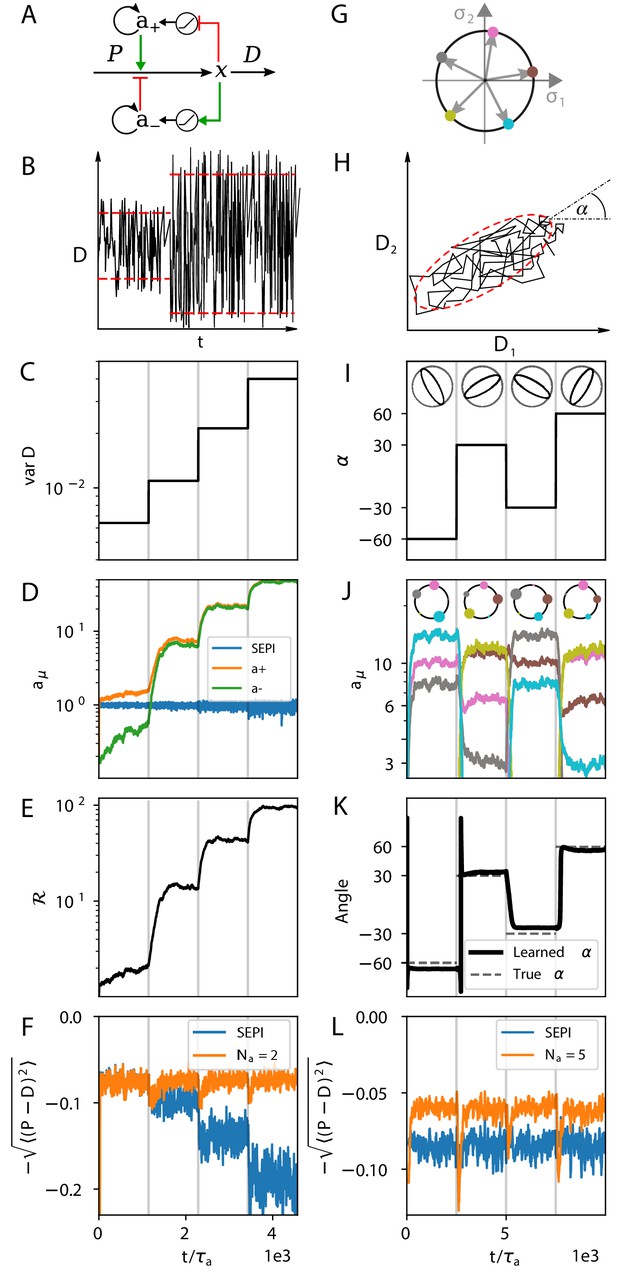

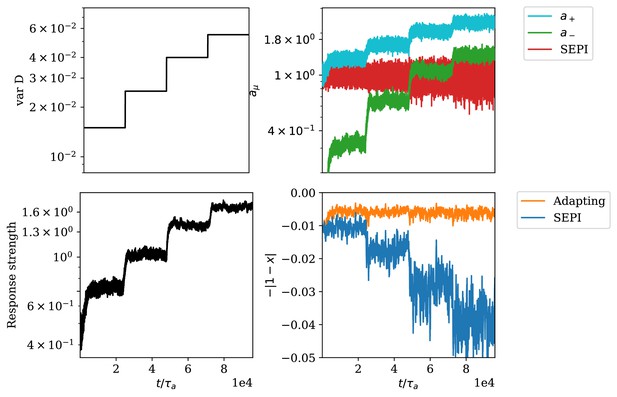

The regulatory architecture we consider successfully learns environment statistics, and outperforms simple end-product inhibition.

Left column in one dimension, right column in two. (A) Regulation of a single metabolite with one activator and one repressor . (B, C) The variance of is increased step-wise (by increasing Γ). (D) Regulator activities respond to the changing statistics of . For SEPI, the activity of its single regulator is unchanged. (E) Faced with larger fluctuations, our system becomes more responsive. (F) As fluctuations increase, SEPI performance drops, while the circuit of panel A retains its performance. (G) In the 2d case, we consider a system with regulators; visualization as in Figure 2D. (H) Cartoon of correlated demands with a dominant fluctuation direction (angle α). (I) We use α to change the fluctuation structure of the input. (J) Regulator activities respond to the changing statistics of . Colors as in panel G. (K) The direction of largest responsiveness (‘learned angle’; see text) tracks the α of the input. (L) The system able to learn the dominant direction of fluctuations outperforms the SEPI architecture, even if the timescale of SEPI is adjusted to match the faster responsiveness of the system (see Appendix 1, section 'Parameters used in figures'). Panels B and H are cartoons.

Faced with a faster fluctuating input, our system upregulates both and while keeping constant (; Figure 3D). In this way, the two activity levels and encode both the mean and the variance of fluctuations. Crucially, the system makes use of the information it stores: The increased regulator activities allow future changes in to be faster. The system’s responsiveness, which we can define as , increases as (Figure 3E; see also Appendix 1, section 'Defining the system's responsiveness'). As a result, as shown in Figure 3F, our system is able to perform approximately equally well (after adaptation time) in each environment, unlike a system like simple end-product inhibition, which is unable to adapt its sensitivity. In summary, Figure 3D–F show that the simple architecture of Figure 3A can not only learn the statistics of environment fluctuations, but also ‘act upon this knowledge,’ effectively performing both computations of Figure 1B.

The idea of learning the fluctuation structure is perhaps clearer in dimension , since the two demands can now be correlated with each other, and it seems intuitive that a system able to learn the typical direction of fluctuations (the angle α in Figure 3H) should be able to track the input better. Indeed, as we saw in Figure 1D–E, when environment fluctuations are anisotropic, the responsiveness of a well-adapted strategy must be anisotropic as well: the preferred direction must elicit a stronger response. Mathematically, the responsiveness is now a matrix , and for a well-adapted system we expect its eigenvectors to align with the principal directions of . In Figure 3G–L, Figure 4 and Figure 5, our discussion will focus on this two-dimensional case.

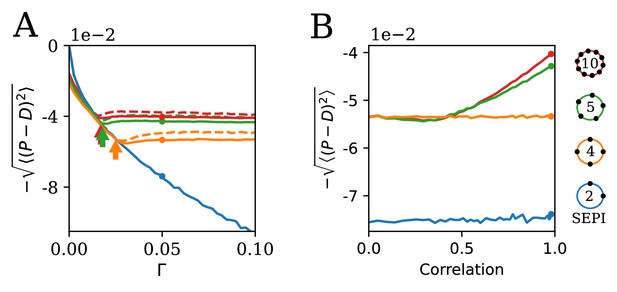

The ability to learn statistics is most useful when fluctuations are large and/or strongly correlated.

(A) The performance of different circuits shown as a function of Γ, which scales the fluctuation magnitude (input is two-dimensional and correlated, angle , anisotropy ). Once the fluctuations become large enough to activate the learning mechanism, performance stabilizes; in contrast, the SEPI performance continues to decline. Arrows indicate the theoretical prediction for the threshold value of Γ; see Appendix 1, section 'The minimal Γ needed to initiate adaptation'. Dashed lines indicate the theoretical performance ceiling (calculated at equivalent Control Input Power, see text). (B) Comparison of circuit performance for inputs of the same variance, but different correlation strengths. regulators arranged as shown can learn the variance but not correlation; the SEPI architecture is unable to adapt to either. Parameter Γ is held constant at 0.05; the marked points are identical to those highlighted in panel A (and correspond to fluctuation anisotropy ).

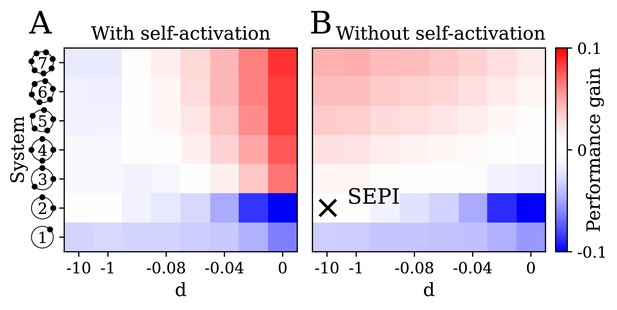

The key ingredients enabling learning are an excess of regulators, nonlinearity, and self-activation.

(A) System performance in the two-dimensional case , shown as a function of the number of regulators (vertical axis) and the strength of nonlinearity (horizontal axis; is indistinguishable from a linear system with ). Color indicates performance gain relative to the SEPI architecture; performance is averaged over angle α (see Figure 3H). (B) Same as panel A, for a model without self-activation (see text). The SEPI-like architecture (linear with ) is highlighted.

Figure 3G–L show the behavior of our system (Equation 4) with regulators (Figure 3G), exposed to an input structured as shown in Figure 3H, where we vary α. As represented pictorially in Figure 3I, we rotate the fluctuation structure matrix in Equation (1), keeping its eigenvalues fixed with (this fixes the ratio of major to minor semi-axis lengths).

With regulators, matching the mean value of would leave degrees of freedom that can be influenced by other parameters (such as variance in each dimension and correlation between different demands). And indeed, changing environment statistics induces strong changes in the regulator state adopted by the system, with regulators better aligned to the input fluctuations reaching higher expression (Figure 3J; note the diagrams at the top, where dot size reflects the activity reached by the corresponding regulator at the end of each epoch; compare to the diagrams in Figure 3I). This activity pattern shapes the responsiveness matrix . Figure 3K plots the ‘learned angle’, defined as the direction of the dominant eigenvector of ; we find that it tracks the stimulus angle. Finally, Figure 3L demonstrates that our architecture is able to make use of this learning, outperforming the SEPI system, whose responsiveness is isotropic and fixed.

The performance is near-optimal

In the previous section, we have shown by example (Figure 3) that the proposed regulatory architecture can learn the statistics of the environment. We now characterize systematically the conditions under which learning improves performance and compare our system to the theoretical performance ceiling. Note that unlike the general statement that learning correlations improves performance, the optimal performance ceiling is necessarily specific to a given model of the environmental fluctuations. Nevertheless, this comparison is informative.

The fluctuation structure in our model is defined by Γ and . We first investigate the dependence of performance on Γ (Figure 4A), exposing our system to a two-dimensional input structured as in Figure 3H with as before, , and a changing Γ.

Although the input is two-dimensional, changing Γ scales the overall magnitude of fluctuations, and the behavior is analogous to the simpler one-dimensional example shown in the first column of Figure 3. At (static input), and by extension, for Γ finite but small, examining the steady state of Equation (4) shows that only out of regulators can be active. In this regime, our system is essentially identical to SEPI—the extra regulators, though available, are inactive—and in fact performs slightly worse. This is because at nonzero κ, the steady state of Equation (4) is slightly offset from the ideal state . (While this effect can be corrected, it is only relevant in the parameter regime where no learning occurs, so we chose to keep Equation (4) as simple as possible; for additional discussion, see Appendix 1, section 'Performance penalty from the degradation term').

When Γ becomes sufficiently large, the first term in Equation (4) (proportional to the fluctuation size ) for one of the inactive regulators finally exceeds, on average, the degradation term. At this point, the system enters the regime where the number of active regulators exceeds , and its performance deviates from the SEPI curve. Beyond this point, further changes to the stimulus no longer affect performance, as our system is able to adapt its responsiveness to the changing fluctuation magnitude (compare to Figure 3F). The threshold value of Γ satisfies ; the proportionality coefficient of order 1 depends on the specific arrangement of regulators but can be estimated analytically (see Appendix 1, section 'The minimal Γ needed to initiate adaptation'). The theoretically predicted deviation points are indicated with arrows, and are in agreement with the simulation results. When a regulator in the system is particularly well-aligned with the dominant direction of fluctuations, the deviation occurs sooner, explaining the better performance of our system when the regulators are more numerous.

To better assess the performance of our system, we compare it to the theoretical optimum derived from control theory, which we represent with dotted lines in Figure 4A. For given and Γ, the family of optimal behaviors is parameterized by Control Input Power (CIP), defined as . If could react infinitely fast, it would track perfectly, but increasing response speed necessarily comes at a cost (of making more sensors, or more enzymes for faster synthesis / degradation of xi); constraining the CIP is thus a proxy for specifying the maximum tolerable cost. In order to compare our system with the optimal family of solutions, we compute of our system at each Γ ( is the simulation time), and compare to the performance of the optimally steered solution with a matched CIP; details of the calculation can be found in Appendix 1, section 'Control theory calculation'. Figure 4A demonstrates that the simple architecture we described not only benefits from matching its responsiveness to its input, but is in fact near-optimal when compared to any system of equivalent responsiveness.

It is important to note that for low Γ, the performance of the SEPI architecture also tracks the optimal curve. Throughout this work, our intention is not to demonstrate that SEPI is a ‘poor’ architecture. To the contrary, the surprising efficiency of SEPI has been noted before (Goyal et al., 2010; Pavlov and Ehrenberg, 2013), and Figure 4 similarly shows that at its own CIP, its performance is virtually optimal. The advantage of our learning-capable architecture derives from its ability to increase responsiveness when necessary, in the correct direction. Our simplified treatment of the SEPI architecture is not a strawman we seek to dismiss, but an example of a system that exhibits no learning.

Having investigated the effect of fluctuation variance (changing Γ), we turn to the effect of their correlation. Up to now, we subjected our system to a strongly correlated two-dimensional input with anisotropy (illustrated, to scale, in Figure 1E). We will now consider a range of anisotropy values, down to anisotropy 1 (uncorrelated fluctuations, Figure 1D), keeping the variances of D1 and D2 constant, as before, and .

The result is presented in Figure 4B. With or larger, our system is able to take advantage of the correlation, assuming it is strong enough to activate the learning mechanism. (In fact, its performance can reach values that exceed the theoretical ceiling achievable by any system that assumes the two dimensions of to be independent, and thus must be exploiting the correlation in its inputs; see Appendix 1, section 'The system makes use of correlations in the input' and Appendix 1—figure 1). For , the performance curve remains flat. This is because the four regulators are arranged as two independent copies of the system shown in Figure 3A (one pair for each of the two inputs); this architecture can take advantage of the learned variance, but not the correlation. Finally, the SEPI architecture can adapt to neither variance nor correlation; its performance curve is also flat, but is lower. As expected, the advantage of our architecture manifests itself in environments with periods of large and/or strongly correlated fluctuations.

The behavior is generalizable

The model described above was a proof of principle, showing that simple regulatory circuits could learn the fluctuation structure of their inputs. Given the simplicity of our model, it is not to be expected that the exact dynamics of Equation (4) are replicated in real cells. However, the benefit of this simplicity is that we can now trace this behavior to its key ingredients, which we expect to be more general than the model itself: an excess of regulators, nonlinearity, and self-activation. In this section, we examine their role: first in our model (Figure 5), and then in more realistic circuits, relaxing our simplifying assumptions (Figure 6).

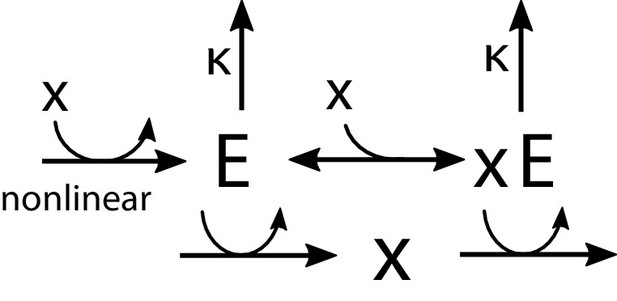

Realistic implementations.

(A) An alternative circuit that implements the logic described above, but with different forms of the key ingredients, including a Hill-function nonlinearity. Here, the circuit is based on a pair of self-activating enzymes which can be in an active () or inactive state (). For details see Equation (5). (B) Another circuit capable of learning fluctuation variance to better maintain homeostasis of a quantity . Synthesis and degradation of are catalyzed by the same bifunctional enzyme, whose production is regulated nonlinearly by itself. For more details see Equations (6) and (7). (C) The circuit in panel A performs well at the homeostasis task of maintaining ’s concentration at 1, despite the changing variance of the input. For comparison, we’ve included a SEPI analogue of the circuit, described in Appendix 1, section 'Realistic biochemical implementations'. (D) Same as panel C, but with the circuit from panel B. Note that the ’SEPI’ line is different here, and is now a SEPI analogue of the circuit in panel B. (E) Solid arrows: a common two-component architecture of bacterial sensory systems with a bifunctional histidine kinase (X) and its cognate response regulator (Y). Adding an extra regulatory link (nonlinear auto-amplification, dashed arrow) can endow this system with self-tuned reactivity learning the statistics of the input; see text.

In Figure 5, the parameter on the horizontal axis is the strength of nonlinearity (see Figure 2E), from perfectly linear at , to strongly nonlinear at . The vertical axis corresponds to an increasing number of regulators , which we label as in Figure 2D; for completeness, we also include the simplest system with a single regulator co-activating both x1 and x2 (bottom row). Panel A examines the performance of our system as defined in Equation (4), that is, with self-activation included. In panel B, we remove self-activation by omitting the prefactor in front of the max function in Equation (4). The color scheme is chosen so that red indicates an improvement, and blue a deterioration, of the circuit performance relative to the SEPI architecture, which approximately corresponds to the point highlighted in Figure 5B. The difference between the labeled point and the SEPI architecture is that all models represented in Figure 5 include a small degradation term, which becomes important in the nonlinear regime. For the SEPI-like case, its effect on performance is negligible (see Appendix 1, section 'Performance penalty from the degradation term') . Performance is averaged over five angles α; see Appendix 1, section 'Parameters used in figures'.

Unsurprisingly, the performance of the simple SEPI-like architecture can be improved by adding extra regulators (pink region in Figure 5B): each new regulator allows the system to respond more quickly in a yet another direction of perturbation, with which it is ‘aligned’. However, such a strategy would have limited utility in a biological setting, since the marginal improvement per regulator must offset the cost of added complexity. The mechanism described here corresponds to the red area in Figure 5A. Importantly, in the presence of both nonlinearity and self-activation, even a single extra regulator () can already provide a significant benefit.

Figure 5A shows that in the context of our model, the reported behavior requires to exceed , and to be sufficiently large. However, these ingredients are more general than the specific implementation in Equation (4). In our model, additional regulators were required because they supplied the slow degrees of freedom to serve as memory; such degrees of freedom could be implemented in other ways, for example, as phosphorylation or methylation (Barkai and Leibler, 1997). Similarly, while nonlinearity is essential (linear dynamics cannot couple to higher-order terms, such as fluctuation magnitude), its exact functional form may be changed while retaining the learning behavior (see Appendix 1, section 'Nonlinearity acts as a sensor of fluctuation variance'). Finally, the explicitly self-catalytic behavior of in our model is only one possible strategy for translating the stored memory into a faster response.

To demonstrate the generality of these ingredients, we constructed two circuits with very different architectures (Figure 6A,B), both reproducing the results of Figure 3C–F. These are not the only ways that the logic described above can be implemented; rather, our intention is to show that as long as we keep the key elements, we can relax our simplifying assumptions, such as the form of the nonlinearity and self-activation, while still retaining the ability to learn.

The first of these proposed circuits (Figure 6A) is based on a pair of allosteric enzymes with the toy nonlinearity of Figure 2E replaced by more realistic cooperative binding, and implements dynamics otherwise very similar to those shown above. In this circuit, the enzymes and can be in an active or inactive state: The active form of , which we denote , catalyzes the production of ; similarly, catalyzes degradation of . In addition, the active enzymes can bind to molecules of the metabolite to control the self-catalytic activity. The total concentration of , bound and unbound, then plays the role of the activating regulator from above (), while plays the role of the inhibitor (). The equations defining the dynamics are then:

Despite the extensive changes relative to Figure 3A, the system is still able to learn. Figure 6C compares its performance to a non-learning version with only the activating branch , which is analogous to the single-activator SEPI architecture (compare to Figure 3F). For a detailed discussion of this more biologically plausible model, see Appendix 1, section 'A pair of allosteric enzymes'.

Our other proposed circuit (Figure 6B) differs significantly. Here, instead of seeking to match to , the system maintains the homeostasis of a concentration perturbed by external factors. In this implementation, the production and degradation of are both catalyzed by a single bifunctional enzyme; the responsiveness of this circuit scales with the overall expression of the enzyme , and larger fluctuations of lead to upregulation of due to the nonlinearity, as before. (For a detailed discussion, see e Appendix 1, section 'An architecture based on a bifunctional enzyme'.) Defining as the total concentration of the enzyme in both its bound and unbound states, the bound and unbound fractions are described by Hill equations,

The dynamics of our system are:

Despite its compactness, this circuit is also able to learn (Figure 6D; compare to Figures 3F and 6C).

Interestingly, this particular logic is very similar to a small modification of the standard two-component signaling architecture (Figure 6E). In this architecture, the signal determines the concentration of the phosphorylated form of the response regulator ; the rapidity of the response is determined by the expression of the histidine kinase , present at a much lower copy number. Although the signaling architecture of Figure 6E, at least in some parameter regimes, is known to be robust to the overall concentrations of and (Batchelor and Goulian, 2003), this robustness property applies only to the steady-state mapping from to , not the kinetics. Thus, much like in Figure 6B,a nonlinear activation of by (known as autoregulation [Goulian, 2010] or autoamplification [Hoffer et al., 2001], and shown as a dashed arrow in Figure 6E) would endow this signaling system with self-tuned reactivity that learns the statistics of the input.

Discussion

In this paper, we have studied a regulatory architecture which is able to infer higher-order statistics from fluctuating environments and use this information to inform behavior. For concreteness, we phrased the regulatory task as seeking to match the production of one or two metabolites to a rapidly fluctuating demand . Alternatively, and perhaps more generally, the circuits we constructed can be seen as maintaining the homeostasis in a quantity that is continually perturbed by external factors. We demonstrated that a simple architecture was capable of learning the statistics of fluctuations of its inputs and successfully using this information to optimize its performance. We considered one-dimensional and two-dimensional examples of such behavior.

In one dimension, learning the statistics of the input meant our circuit exhibited a self-tuned reactivity, learning to become more responsive during periods of larger fluctuations. Importantly, we have shown that this behavior can be achieved by circuits that are highly similar to known motifs, such as feedback inhibition (Figure 2A–C) or two-component signaling (Figure 6B,E). The latter connection is especially interesting: There are at least a few examples of two-component systems where autoamplification, a necessary ingredient for the learning behavior discussed here, has been reported (Shin et al., 2006; Williams and Cotter, 2007). Moreover, in the case of the PhoR/PhoB two-component system in E. coli, such autoamplification has been experimentally observed to allow cells to retain memory of a previously experienced signal (phosphate limitation; Hoffer et al., 2001), a behavior the authors described as learning-like. As reported, this behavior constitutes a response to the signal mean and is similar to other examples of simple phenotypic memory (e.g. Lambert et al., 2014); however, our analysis demonstrates that a similar architecture may also be able to learn more complex features. Such a capability would be most useful in contexts where the timescale of sensing could plausibly be the performance bottleneck. Since transcriptional processes are generally slower than the two-component kinetics, we expect our discussion to be more relevant for two-component systems with non-transcriptional readout, such as those involved in chemotaxis or efflux pump regulation.

In the two-dimensional case, our simple circuit was able to learn and unlearn transient correlation structures of its inputs, storing this information in expression levels of different regulators. Our argument was a proof of principle that, for example, the gut bacteria could have the means to not only sense, but also predict nutrient availability based on correlations learned from the past, including correlations that change over faster-than-evolutionary timescales, such as the life cycle (or dietary preferences) of the host. Importantly, we showed that this ability could come cheaply, requiring only a few ingredients beyond simple end-product inhibition.

The mechanism described here could suggest new hypotheses for the functional role of systems with an excess of regulators, as well as new hypotheses for bacterial function in environments with changing structure.

Materials and methods

All simulations performed in Python 3.7.4. Simulation scripts reproducing all figures are included as Source code 1.

Appendix 1

This text contains supplementary information on modeling details, the analytical calculations, and the exact parameters used in figures.

S1 Simple end-product inhibition

As the starting point of our regulatory architecture, we consider a basic form of end-product inhibition (SEPI). The environment is modeled by a time-dependent (externally induced) demand for metabolite which is produced at a rate (controlled by the system); both and are defined in units of metabolite concentration per unit time. The depletion of the metabolite depends on its concentration and the demand . Assuming first-order kinetics (or, alternatively, expanding a general function to linear order in small fluctuations of ) the dynamics of is:

Further, we consider the temporal evolution of the regulator activity

By linearizing around the stationary values we get

To examine this equation, we introduce the Fourier transforms and and get

Equation (S4) shows that if the support of is restricted to high frequencies, , then the degradation term in Equation (S3) is negligible. Including it would only add a restoring force, reducing the amplitude of fluctuations of , and decreasing the performance of the system. Since we are interested in the regime of fast fluctuations of , we choose to omit this term in the SEPI system. With we arrive at the dynamics used in the main text:

In the nonlinear system (Equation (3) of the main text), however, fast fluctuations of can cause the growth of (as discussed in the section ‘The nonlinearity as a sensor of the fluctuation variance’), thereby inducing slow frequency modes to its dynamics. Thus, in the nonlinear case, we cannot omit the degradation term.

S2 Performance penalty from the degradation term

The model considered in the main text modifies the SEPI architecture as follows:

Consider the case of a static input. We observe that if x0 is set to 1, as in the main text, the presence of the degradation term causes the equilibrium point of these dynamics to be displaced away from . Therefore, for a static input, the performance of this system—the degree of mismatch between and , or, equivalently, the deviation of xi from 1—is actually worse than the performance of the original SEPI.

While the case of a static input is irrelevant for the discussion in the main text, this slight offset leads to a performance penalty also for a fluctuating input. Indeed, time-averaging Equation (S7) shows that for any active regulator, we must have

where is the nonlinearity in our equation, . Clearly, we will in general again have ; this is particularly obvious in the limit of small fluctuations when the nonlinearity is ‘not active’, such that .

This effect could be corrected by shifting x0. In the interest of keeping our model as close to SEPI as possible, we chose not to do so: this penalty is only significant in the regime where no learning occurs, and is otherwise outweighed by the performance increase due to self-tuned responsiveness, with the additional benefit of simplifying the discussion in the main text. Even if optimizing x0 could make the performance slightly closer to the optimal bound, this kind of fine-tuning seems irrelevant in a biological context.

S3 Defining the system’s responsiveness

In the main text, we use a measure for the ‘responsiveness’ of our system to changes in the demand . In this section it is shown in detail how this measure is defined. The central aim of the considered regulatory model is to match the time-dependent demand with the regulated production . The temporal evolution of is given by:

with

For a static demand the production relaxes to a constant value (where we assumed small κ) and consequently . A deviation from the static demand will lead to a change of the production - the larger , the faster the response to the changed demand. Therefore, we define the responsiveness of the production to the demand as . When assuming small fluctuations the explicit expression for the responsiveness is then given by:

As an example we consider the one-dimensional system studied in Figure 3A-F in the main text for which the responsiveness is

where we used that and .

S4 Control theory calculation

The calculation below follows the standard procedure known as Linear-Quadratic Optimal Control (LQC). For more details, we refer the reader to standard sources (e.g. Liberzon, 2011).

The problem we set is minimizing , where follows the dynamics of Equation (1) in the main text,

We then wish to calculate the optimal way of steering . For simplicity, we will set the mean (in the context of this abstract problem, this is equivalent to working with mean-shifted variables and similarly ). We can start by discretizing the above equation,

where , and ξ has variance . We seek to determine the optimal way to steer ; in other words, the function (‘control policy’) dictating how should be changed in a given time step:

We then can define our cost function, which combines a cost for the magnitude of ut (how quickly we can change ), and the difference between and :

The describing the optimal behavior is the one that minimizes this cost. In order to solve for , we follow standard control theory techniques and define the ‘cost-to-go’ function,

This function defines the smallest cost of all remaining steps; in particular, the total cost that we are trying to minimize is . The cost-to-go satisfies the boundary condition

and the following recursive relation:

Since our system is Gaussian, this recursive relation can be solved by adopting a quadratic ansatz:

Solving for the matrices , , , and , gives us the following recursive relations:

Since our problem is to minimize the cost at steady state (known in control theory as an ‘infinite horizon’ problem, ), we are interested in the fixed point of this mapping, specifically the matrices to which this mapping converges when we start from and (as defined by Equation (S19)).

Since is the identity matrix, all are proportional to the identity matrix as well: , where . The fixed point of this mapping is . Similarly, the fixed point of is . Expressing this in terms of α only:

With these expressions, the optimal ‘control policy’ is defined by the value of that minimizes Equation S20. This defines the optimal way to change for a given observed :

or, restoring the notations of the main text, including a non-zero :

This is the exact solution to the discrete version of the problem we considered. Since our simulations in this work use a discrete timestep, this is the form we use. Nevertheless, it is instructive to consider the small-, large-CIP limit such that and are both small compared to inverse eigenvalues of . In this case we have, to first order in :

This leads to the following, and very intuitive, form of the optimal control dynamics:

In other words, at every step the change in mirrors the average expected change in , with an extra term seeking to reduce their deviation. Note also that setting (infinite CIP) corresponds to steering directly to the expected value of at the next timestep, as expected.

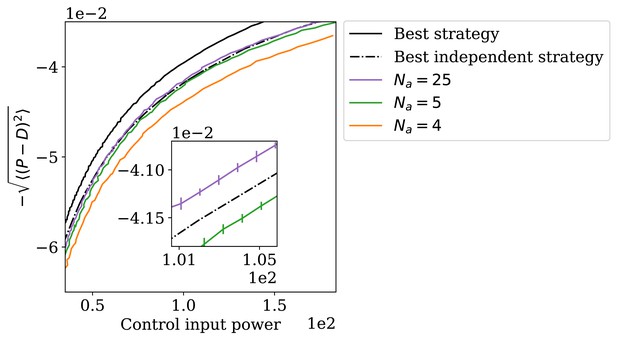

S5 The system makes use of correlations in the input

Figure 4B in the main text demonstrated that, as the fluctuating inputs become increasingly correlated, our architecture is able to outperform SEPI by an increasingly wide margin. The natural interpretation of this result is that the system is able to learn and exploit this correlation. Technically, however, one might note that this observation alone does not yet prove that our architecture is able to appropriately use the information it learned about specifically correlation. For example, it could be that strongly correlated inputs are somehow inducing a stronger increase in reactivity, causing the system to be generally faster, but without benefiting specifically from the correlated nature of its inputs.

Rather than focusing on excluding this specific scenario (which could be done by comparing the CIP values along the curves shown in Figure 4B), we will show that with a sufficient number of regulators, our architecture can perform better than the theoretical ceiling achievable by any strategy that assumes the inputs to be independent. This will formally prove that, at least for some parameters, our system’s improved performance must necessarily make use of the correlation of its inputs. Although the argument is somewhat academic in nature (we will prove our point using regulators), it is theoretically pleasing, and so we present it here.

Specifically, we consider a system subjected to inputs structured as shown in Figure 3H, with angle so that the two inputs have the same variance. Appendix 1—figure 1 shows the performance of our architecture for several values of the number of regulators , plotted as curves parameterized by the degradation rate κ. The degradation rate controls how large the can become: a high value of κ leads to lower average steady-state values of the regulator activities, causing the system to be less responsive to changes in . Thus, κ can be used to set the CIP of the regulatory system, allowing us to plot these performance curves in the ‘performance vs. CIP’ axes traditional for control theory.

The adapting system can perform better than the best independence-assuming strategy.

Reassuringly, all these performance curves are located below the optimal control-theory ceiling computed for the true correlation structure of the input. However, the plot also shows the ‘best independent’ curve, defined as follows. Consider all possible matrices corresponding to uncorrelated inputs: . Each such defines a family of control strategies (that would have been optimal if this were the true governing the dynamics of the input); this family is indexed by a parameter ρ as described above. A system following an (independence-assuming) strategy while faced with the actual (partially correlated) inputs will exhibit a certain performance and a certain CIP, which we denote . With these notations, the ‘best independent’ curve is defined as

We note that the correlated-input CIP is different from the independent-input CIP that a given strategy would have incurred if faced by the input for which it is optimal. In particular, while the latter can be computed analytically, the former has to be evaluated in simulations. This makes the optimization procedure computationally costly; thankfully, the symmetry ensured by choosing allows restricting the search to isotropic strategies , reducing the problem dimensionality from three parameters to more manageable two .

The result is shown in Appendix 1—figure 1 as a dashed line. As highlighted in the inset, with enough regulators, our architecture is indeed able to outperform the theoretical ceiling of the best independence-assuming strategy. Although regulators is of course a regime irrelevant for biological applications, the aim of this argument was to formally prove a theoretical point, namely that the system as constructed must necessarily be making use of the correlation in the input signal, at least for some values of the parameters; by construction, the ‘best independent’ curve is a high bar to clear.

S6 Model predictive control

When framing the problem in the main text, we discussed it as consisting of two tasks, learning the fluctuation structure of the environment and ‘applying that knowledge’ (Figure 1B), and treated the two as conceptually separate. In particular, the calculation in section ‘S4 Control theory calculation’ is known as linear-quadratic Gaussian control (LQG) that assumes the correct model of the environment to already be known. This separation was done to simplify presentation, allowing us to encapsulate the control theory discussion, potentially less familiar to our audience, to a smaller portion of the narrative. In addition, the LQG calculation is simple, exact, and provides an upper bound on how well any other control strategy could perform.

Mechanistically, however, the two tasks are inseparable. In the language of control theory, our architecture implements an example of model predictive control: a strategy where the system response is governed by a continually updated internal model of the environment (here, the activity levels of the regulators, which encode the learned correlations between the inputs).

How could one distinguish a predictive vs. non-predictive control scheme in practice, when measuring all internal variables to decipher whether or not they encode an ‘internal model of the environment’ is infeasible? For our scheme, its ‘predictive’ ability manifests itself as follows. Imagine exposing our system to two inputs which for a period of time are strongly correlated, with . The learning process will drive the responsiveness matrix from one that was initially isotropic to one aligned with the correlated direction (in the notation of the main text, ). Compare now the response of the system to an increase in D1: . The naïve (untrained) system would respond by increasing P1 only, as would the SEPI architecture. In contrast, the ‘trained’ system, having learned the correlation between D1 and D2, responds by upregulating both P1 and P2 together. In this hypothetical example, our analysis predicts that deleting seemingly superfluous regulators would hinder or remove this ability (depending on the implementation, possibly without even affecting fitness in a static environment).

This is the behavior expected of a predictive controller: Under a model where and are strongly correlated, one expects this state of the environment to relax back to the diagonal in the near future. This kind of associative learning is experimentally measurable and, on an evolutionary scale, is well-known. Our scheme provides a mechanism for implementing this behavior on a physiological timescale. Another mechanism focusing on binary associations was previously described by Sorek et al., 2013.

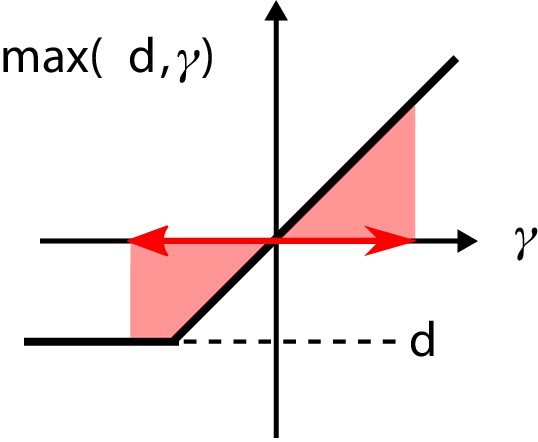

S7 Nonlinearity acts as a sensor of fluctuation variance

In the main text, we argue that the nonlinearity in the dynamics of the regulator concentrations acts as a senor for the variance of the fluctuations. To see this, we consider the dynamics of one regulator that is controlling the production of one metabolite:

To simplify notation, we define . Since the dynamics of are slow compared to , the fluctuations of γ are on a faster timescale than the regulator dynamics. If the fluctuations of γ are small, the nonlinearity in the function is ‘not activated’: . In this case, the temporal average of is zero. In contrast, if the fluctuations are strong enough, the nonlinearity is activated (see Appendix 1—figure 2). Then, the temporal average is positive, leading to an additional growth of . Due to the resulting larger values of , the response of the system becomes faster, making the match between and better and thus serving to decrease the variance of γ. As a result, the final average steady-state regulator concentration is reached if the system has decreased the variance of γ sufficiently by increasing the rapidity of its response.

The nonlinearity in the regulatory architecture.

If the fluctuations of the input are large enough, the average over the nonlinearity is positive, causing additional growth of the regulator concentration .

This argument makes it clear why the specific choice of nonlinearity is not particularly important. If , then the static steady state satisfies . For a fast-fluctuating input this becomes

For any nonlinear , as long as , the displacement of the original steady state will be determined by higher order statistics of the input. In particular, the rectified-linear nonlinearity in our equations can be replaced, for example, by a Hill function. Note that for the argument presented here, the eventual saturation of the response at large argument is irrelevant: the system will retain all the relevant behavior as long as the new nonlinearity is cup-convex in a sufficiently large vicinity of its static fixed point; see section ‘S9.1 A pair of allosteric enzymes’ for an explicit example.

The assumption is not innocuous; in general, of course, the value of is sensitive not only to the variance of (or other higher-order terms), but also to its mean, and building a system that is sensitive to specifically the variance requires adapting to the mean first. In our model, this is automatically accomplished by the underlying end-product inhibition architecture, which adapts the mean to mean , after which fluctuates around 1, no matter the value of .

S8 The minimal Γ needed to initiate adaptation

Figure 4A in the main text includes arrows indicating theoretically derived threshold values of Γ above which our system (with a given ) will begin to adapt its timescale of response, deviating from SEPI in its behavior. Here, we show how this threshold value of Γ can be determined.

As discussed in the main text, at static input () only out of regulators can be active. Consider the regulators that remain inactive in the static case, and imagine gradually increasing the fluctuation magnitude. Recall the equation for regulator activity dynamics:

After introducing we can write

If we chose as one of the regulators that remained inactive in the static case, we have at ; as we begin increasing the fluctuation magnitude, the time-averaged will at first remain negative. The threshold Γ we seek is the one where the first (time-averaged) crosses into positive values. It is clear that if the fluctuations of are so small that at all times, the system does not adapt. On the other hand, if the fluctuations are large enough and fast compared to the response of the system, they generate an effective additional growth of . To first order, this additional growth term is proportional to the standard deviation of . Therefore, we expect the fluctuations to cause a growth of if the additional growth term is large compared to κ, i.e. , with a constant of order 1.

The approximate value of can be determined using the following argument. With , and assuming that is, first, fluctuating on a fast timescale compared to and, second, is Gaussian with mean and variance , we can average over the fluctuations in Equation (S28):

The system is in a stable steady state if either and or and . In the non-trivial first case we get the condition . Approximating one sees that the average growth rate is positive if , so that . If this condition is satisfied, continues its growth until the separation of timescales between and becomes invalid and decreases; this is the mechanism by which the system adapts to fast fluctuations.

The variance can be derived from the statistical properties of . If the fluctuations of the demand are small it holds that where is the covariance matrix of the stationary probability distribution of the fluctuations with and . The variance is then given by and the minimal value of Γ is set by the largest of the considered system.

S9 Realistic biochemical implementations

In the main text, we proposed a simple model of metabolic regulation which highlighted the necessary properties for learning environment statistics, namely an excess of regulators , self-activation, and a nonlinear regulation of by the metabolite concentrations xi. To show how these properties can enable more realistic systems to learn the statistics of a fluctuating environment, here we present two biochemical implementations. The first of these implements dynamics nearly identical to those described in the main text, and the second, illustrated in Figure 5b, bears resemblance to two-component systems. As described in the main text, we do not necessarily expect either of these networks to be implemented in real biological systems ‘as is’. Instead, we use these to illustrate the diversity of systems that could use the logic described in this paper to learn statistics of their environment. For simplicity, we consider the one-dimensional case (first column of Figure 3 in the main text).

S9.1 A pair of allosteric enzymes

The circuit is shown in Appendix 1—figure 3. The enzymes and can be in an active or inactive state: The active form of , which we denote , catalyzes the production of ; similarly, catalyzes degradation of . In addition, we postulate that active enzymes can bind to molecules of the metabolite , which controls self-catalytic activity (see diagram). The total concentration of , bound and unbound, plays the role of the activating regulator from the main text (), while plays the role of the inhibitor ().

Implementation of the regulatory mechanism based on a pair of self-activating enzymes which can be in an active () or inactive state ().

Gray shading indicates catalysts of reactions.

The same regulatory structure could be realized with transcription factor regulation, with the role of the active enzymes ( and ) played by two transcription factors. In this version, activation/deactivation of enzymes is replaced by the simpler process of transcription factor synthesis/degradation. For concreteness, here, we focus on the enzymatic case, largely because we expect architectures like ours to be more relevant in fast-responding circuits, which tend to be non-transcriptional. However, except for the difference of timescales, the dynamics of the two versions would otherwise be identical; in particular, both implementations would ‘learn’ in the same way.

For this discussion, it is convenient to have timescales of dynamics of and xi encoded as explicit parameters. Assuming first-order kinetics, the dynamics of the network can then be described by:

Here, we assume that the metabolite is much more abundant than the active enzymes and , meaning that the relative amount of bound is very small. This allows us to neglect, in the dynamics of , the source and sink terms due to binding and unbinding of to the enzymes. We also assume that this binding and unbinding occurs on a much faster timescale than all other processes.

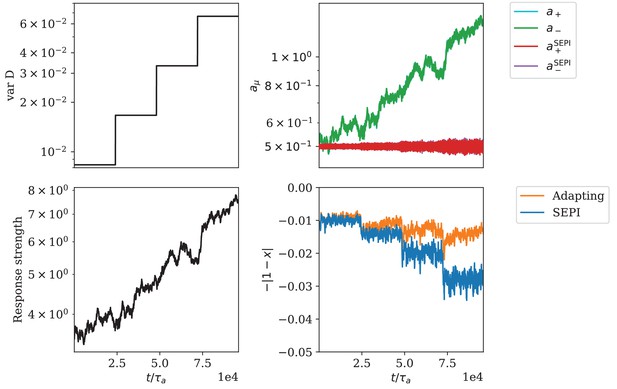

Appendix 1—figure 4 shows an example of simulation results for these dynamics (for the full list of parameters used, see section ‘S11 Parameters used in figures’). We see that the system reacts to an increasing variance of environmental fluctuations (A) by increasing regulator activities (B). The figure also shows the behavior of a SEPI system which only consists of one regulator described by the dynamics in Equation (S30). Appendix 1—figure 4C shows that the response strength, defined as discussed in the SI section ‘Defining the system’s responsiveness,’

is increasing due to the changed regulator activities. Finally, Appendix 1—figure 4D compares the performance of the system Equation (S30) with the corresponding SEPI system (which, again, we define by the same equations as Equation (S30), except without the regulator). Similar to Figure 3F in the main text, the performance of the adapting system does not change as the variance of the stimulus increases, while the SEPI system becomes worse.

Adaptation of responsiveness to an increasing variance of environmental fluctuations.

(A) Step-wise increase of the variance of . (B) Time-series of regulator concentrations, where and correspond to the total concentrations of and respectively. (C) The responsiveness of the system as defined in Equation (S31). (D) The deviation of the metabolite concentration from its target value.

S9.2 An architecture based on a bifunctional enzyme

For the reader’s convenience, we reproduce this circuit in Appendix 1—figure 5 (identical to Figure 6B in the main text). As described in the main text, for greater generality, we will here rephrase the task: instead of matching production to demand, we will think of maintaining the homeostasis of a quantity perturbed by external factors. For example, instead of being a metabolite concentration, could be osmolarity mismatch, and our circuit a hypothetical architecture for active control of osmotic pressure. In this interpretation, the enzyme might be a mechanosensor triggered by tension in the membrane or cell wall, while ‘production’ and ‘degradation’ of could be activities of opposing pumps, or regulators of glycerol export or metabolism.

Regulation by allosteric forms of one enzyme .

The unbound form activates the production of , while the bound form promotes its degradation. The synthesis of is regulated nonlinearly by the metabolite concentration .

To simplify our language when describing the terms in the specific equations we simulate, we will still talk of a metabolite being produced and degraded. However, to better accommodate alternative interpretations, the regulator activities will now be defined so that and would be equal on average (for example, the activities of pumps in opposite directions should, on average, balance). This homeostasis-maintaining formulation is in contrast to Figure 3D in the main text, where regulators satisfied the constraint .

The production and degradation of are catalyzed by a bifunctional enzyme that changes its activity when bound to forming the compound . The concentration of the unbound form corresponds to the activating regulator, , and increases the production of , while plays the role of the inhibiting regulator, , and promotes the degradation of .

As above, we assume first-order kinetics for the production and degradation of , and that the binding kinetics are fast compared to the other timescales in the problem. Defining as the total concentration of the enzyme in both its bound and unbound states, the bound and unbound fractions are described by Hill equations:

These expressions make it explicit that a small change in the concentration induces a change in and that is proportional to their sum, . In this way, the circuit shown in Appendix 1—figure 6 does include an element of self-activation (one of our ‘key ingredients’), even though no interaction in the diagram is explicitly self-catalytic.

Adaptation of responsiveness for the circuit architecture based on a bifunctional enzyme.

(A) The variance of is increased step-wise. (B) Change of regulator activities. The regulator activities and overlap strongly and cannot be distinguished in this panel. (C) The response strength of the system. (D) The mismatch of the metabolite concentration from its target value.

With these notations, the dynamics of our system are:

where we assumed that modifying the enzyme does not significantly affect the quantity . (In the metabolic formulation, this corresponds to the assumption that is much more abundant than , so that the sequestration of by has negligible effect on the free concentration of ; in the osmotic formulation, the act of triggering a mechanosensor has negligible instantaneous effect on pressure). In the second equation, the synthesis of the enzyme depends nonlinearly on the metabolite concentration . The specific form of nonlinearity does not significantly affect the results, as long as it is sufficiently cup-convex in the vicinity of the operating point: As described in the section ‘S7 Nonlinearity acts as a sensor of fluctuation variance’, we can think of the nonlinearity as a ‘sensor’ for the variance of environmental fluctuations. Whenever fluctuations in increase such that the current responsiveness of the system fails to maintain the homeostasis of within previous bounds of fluctuation magnitude, the fluctuations of will lead to growth of , increasing the responsiveness until it is again able to reduce the fluctuations of . In our simulations we chose a Hill function with cooperativity 4 (see section ‘S11 Parameters used in figures’).

Appendix 1—figure 6 shows simulation results for this system. As in the first column of Figure 3, the variance of is increased and the response of the system to this change is monitored. We see that the regulator concentrations correspondingly increase, causing a larger response strength . The increase in response strength is able to compensate for most of the performance loss, which shows that the system successfully adapts its timescale of response. This is in contrast to the ‘SEPI-like’ system with a fixed value , which cannot adapt its responsiveness and whose performance drops with every increase in fluctuation variance.

S10 Adaptation to static demand

In the main text, we argue that the production of the proposed system Equation (3) adapts to any static demand . The full dynamics of the system is

With a static demand, Equation (S34) possesses the same fixed points as the simplified dynamics:

These dynamics have a Lyapunov-function

This can be verified by considering the temporal change of

with . Thus, always increases and is obviously bound from above. For small κ, the maximum of is reached for , showing that the system adapts to any static demand.

S11 Parameters used in figures

If not stated otherwise, we use the following parameters: , , , , , , , , . Since the demand is modeled by a stochastic process which is, in principle, unbounded, there is a non-zero probability that the demand becomes negative. To prevent this behavior in the simulations we set if .

Figure 3C–F

Fluctuations: In 1D the matrix only has one element which we set to , .

System: .

Timescales: .

Figure 3F shows a running average over 100 steps.

Figure 3I–L

Fluctuations:

System: , , .

SEPI: For a fair comparison, the timescale of SEPI is chosen such that its responsiveness matches the faster responsiveness of the adapting system (measured in an environment with an isotropic with the same determinant as used in Figure 3J–L): .

For visualization purposes, to prevent long transients after changes of the activation angle, the regulator activities were capped from below at .

Figure 3L shows a running average over 100 steps.

Figure 4A

Fluctuations: Γ from 0 to 0.1 in 40 equidistant steps.

Timescale SEPI: .

Simulation length: 5 · 107 steps.

Figure 4B

Fluctuations: , anisotropy A=[ 1, 1.05,1.1, 1.15, 1.2 , 1.25, 1.3 , 1.35, 1.4, 1.45, 1.5, 1.55, 1.6 , 1.65, 1.7, 1.75, 1.8, 1.85, 1.9, 1.95, 2, 2.1, 2.2, 2.3, 2.4, 2.5, 2.6, 2.7, 2.8, 2.9, 3.25, 3.5, 4, 4.5, 5, 6, 7, 8, 9, 10]. For each value of and are chosen as: , with .

Timescale SEPI: .

Simulation length: 5 · 107 steps.

Figure 5A and B

Fluctuations: Results averaged over activation angles .

System: , .

Simulation length: 107 steps.

Figure 6C and Appendix 1—figure 4

The parameters in the simulation were chosen so as to ensure that, first, ; and second, the steady-state stays at 1 (this is analogous to setting in the main text). Specifically, we used: , , , , , , , , . The parameters describing the fluctuations of are chosen as: , , .

A brief explanation: While the parameter list is long, there is a simple reasoning which sets most of these choices, and explains how the parameters of this model need to be related to each other in order for the adaptation of responsiveness to occur. First of all, we assume that the concentration changes on a much faster timescale than the regulator concentrations ; here we choose and . Further, the average of is chosen to be equal to one. Then, for small fluctuations of we have . On the other hand, the non-trivial fixed points of the regulator concentration dynamics are reached if

Thus, we can set the equilibrium point of by choosing , , and . Here, without loss of generality, we choose that the fixed point is reached at by setting

For the sought-after effect to occur, the fast fluctuations of around need to result in an effective additional growth of and , providing a ‘sensor’ for the variance of . One possibility to get this behavior is to set and . To avoid the regulator concentrations to grow indefinitely, and need to be a little larger than the determined values in Equation (S39). Finally, the parameter γ can be chosen rather freely; here we choose . Simulation length: 3.2 · 107 steps with . Panels 6C and S4 D show a running average over 100 timesteps.

Figure 6D and Appendix 1—figure 6:

We used the following parameters for the simulations: , , , , , , . The nonlinearity was chosen as: with , , , . The parameters describing the fluctuations of are set to: , . For the mechanism to work, the timescales need to fulfill . The parameters P0, and are set by the choice of the fixed-point x0 (here ). Simulation length: 3.2 · 107 steps with . Panels 6D and S6 D show a running average over 100 timesteps.

Appendix 1—figure 1

System: κ from 0.01 to 0.025 in steps of size 1.25 · 10−4.

Simulation length = 5 · 107 steps.

For each simulation, the performance was averaged over the last 107 time steps.

Appendix 1—figure 1 inset

All system parameters as in Figure S1 except for: κ from 0.013 to 0.014 in steps of size 2.5 · 10−5.

Simulation length: 108 steps.

For each simulation, the performance was averaged over the last 2 · 107 steps.

The results are binned in 20 equal-width bins.

Data availability

Python simulation scripts reproducing all figures in the paper from scratch, as well as pre-computed simulation data files for faster plotting, are provided as Source code 1 (and are also publicly available at https://doi.org/10.17632/5xngwv5kps.2).

References

-

Circadian rhythms from multiple oscillators: lessons from diverse organismsNature Reviews Genetics 6:544–556.https://doi.org/10.1038/nrg1633

-

ConferenceStrong turing completeness of continuous chemical reaction networks and compilation of mixed analog-digital programsInternational Conference on Computational Methods in Systems Biology. pp. 108–127.

-

Two-component signaling circuit structure and propertiesCurrent Opinion in Microbiology 13:184–189.https://doi.org/10.1016/j.mib.2010.01.009

-

Achieving optimal growth through product feedback inhibition in metabolismPLOS Computational Biology 6:e1000802.https://doi.org/10.1371/journal.pcbi.1000802

-

Autoamplification of a two-component regulatory system results in "learning" behaviorJournal of Bacteriology 183:4914–4917.https://doi.org/10.1128/JB.183.16.4914-4917.2001

-

Implementation of dynamic Bayesian decision making by intracellular kineticsPhysical Review Letters 104:228104.https://doi.org/10.1103/PhysRevLett.104.228104

-

BookCalculus of Variations and Optimal Control Theory: A Concise IntroductionPrinceton University Press.

-

Escherichia coli Habitats, Cell Types, and Molecular Mechanisms of Gene ControlThe American Naturalist 122:732–744.https://doi.org/10.1086/284168

-