Recurrent neural networks enable design of multifunctional synthetic human gut microbiome dynamics

Abstract

Predicting the dynamics and functions of microbiomes constructed from the bottom-up is a key challenge in exploiting them to our benefit. Current models based on ecological theory fail to capture complex community behaviors due to higher order interactions, do not scale well with increasing complexity, and are difficult to extend to multiple functions. We develop and apply a long short-term memory (LSTM) framework to advance our understanding of community assembly and health-relevant metabolite production using a synthetic human gut community. A mainstay of recurrent neural networks, the LSTM learns a high dimensional data-driven non-linear dynamical system model. We show that the LSTM model can outperform the widely used generalized Lotka-Volterra model based on ecological theory. We build methods to decipher microbe-microbe and microbe-metabolite interactions from an otherwise black-box model. These methods highlight that Actinobacteria, Firmicutes and Proteobacteria are significant drivers of metabolite production whereas Bacteroides shape community dynamics. We use the LSTM model to navigate a large multidimensional functional landscape to design communities with unique health-relevant metabolite profiles and temporal behaviors. In sum, the accuracy of the LSTM model can be exploited for experimental planning and to guide the design of synthetic microbiomes with target dynamic functions.

Editor's evaluation

The ultimate goal of this work is to apply machine learning to learn from experimental data on temporal dynamics and functions of microbial communities to predict their future behavior and design new communities with desired functions. Using a significant amount of experimental data, the authors suggest a method that outperforms the state-of-the-art approach. The work is of broad interest to those working on microbiome prediction and design.

https://doi.org/10.7554/eLife.73870.sa0

Introduction

Microbial communities perform chemical and physical transformations to shape the properties of nearly every environment on Earth from driving biogeochemical cycles to mediating human health and disease. These functions performed by microbial communities are shaped by a multitude of abiotic and biotic interactions and vary as a function of space and time. The complex dynamics of microbial communities are influenced by pairwise and higher order interactions, wherein interactions between pairs of species can be modified by other community members (Sanchez-Gorostiaga et al., 2019; Mickalide and Kuehn, 2019; Hsu et al., 2019). In addition, the interactions between community members can change as a function of time as the community continuously reacts to and modifies its environment (Hart et al., 2019). Therefore, flexible modeling frameworks that can capture the complex and temporally changing interactions that determine the dynamic behaviors of microbiomes are needed. These predictive modeling frameworks could be used to guide the design of interventions to precisely manipulate community-level functions to our benefit.

The generalized Lotka-Volterra (gLV) model has been widely used to predict community dynamics and deduce pairwise microbial interactions shaping community assembly (MacArthur, 1970). For example, the gLV model has been used to predict the assembly of tens of species based on absolute abundance measurements of lower species richness (i.e. number of species) communities (Venturelli et al., 2018; Mounier et al., 2008; Clark et al., 2021). The parameters of the gLV model can be efficiently inferred based on properly collected absolute abundance measurements and can provide insight into significant microbial interactions shaping community assembly (Bucci et al., 2016). However, this model does not represent higher order interactions or microbial community functions beyond species growth. To capture such microbial community functions, composite gLV models have been developed to predict a community-level functional activity based on species abundance at an endpoint (Clark et al., 2021; Stein et al., 2018). However, these approaches have been limited to the prediction of a single community-level function at a single time point. Therefore, new modeling frameworks are needed to capture temporal changes in multiple community-level functions, such as tailoring the metabolite profile of the human gut microbiome (Fischbach and Sonnenburg, 2011).

Neural network architectures, such as recurrent neural networks (RNNs), are universal function approximators (Dambre et al., 2012; Schäfer and Zimmermann, 2006) that enable greater flexibility compared to gLV models for modeling dynamical systems. However, neural network based models often require significantly more model parameters, which poses additional challenges to model fitting and generalizability. A particular RNN model architecture called long short-term memory (LSTM) addresses challenges associated with training on sequential data by incorporating gating mechanisms that learn to regulate the influence of information from previous instances in the sequence (Lipton et al., 2015). From their initial successes in speech recognition (Graves et al., 2005) and computer vision (Byeon et al., 2015), LSTMs have recently been applied to modeling biological data such as subcellular localization of proteins (Sonderby et al., 2015) and prediction of biological age from activity collected from wearable devices (Rahman and Adjeroh, 2019). Related to microbiomes, deep learning frameworks have been applied to predict gut microbiome metabolites based on community composition data (Le et al., 2020), final community composition based on microbial interactions (Larsen et al., 2012) and end-point community composition based on the presence/absence of species (Michel-Mata et al., 2021). In addition, RNN architectures have been used to model phytoplankton (Jeong et al., 2001) and macroinvertebrate (Chon et al., 2001) community dynamics. Despite achieving reasonable prediction performance, previous efforts at modeling ecological system dynamics using RNNs are typically limited to a handful of organisms (<10), have provided limited model interpretation and have not been leveraged to predict temporal changes in community behaviors. In addition, RNN architectures have not been used for bottom-up community design, which could be exploited for applications in bioremediation, bioprocessing, agriculture, and human health (Leggieri et al., 2021; Lawson et al., 2019; Clark et al., 2021).

Here, we apply LSTMs to model time dependent changes in species abundance and the production of key health-relevant metabolites by a diverse 25-member synthetic human gut community. LSTMs are a good model for microbiomes because (1) LSTMs are a natural choice for a neural network based model of time-series data (Goodfellow et al., 2016); (2) LSTMs are highly flexible models that can capture complex interaction networks that are often neglected in ecological models; (3) LSTMs can be modified to capture additional system variables such as environmental factors (e.g. metabolites). In addition, LSTMs have some advantages over traditional RNNs because they can capture long-term dependencies. LSTMs have additional parameters that adjust the effects of earlier time points on the predictions at later time points in a time-series. We use the trained LSTM model to elucidate significant microbe-microbe and microbe-metabolite interactions.

The flexibility and accuracy of the LSTM model enabled systematic integration into our experimental planning process in two stages. First, the LSTM was fit to data from a previous study with low temporal resolution involving a moderate number of synthetic microbial communities (Clark et al., 2021). The distribution of LSTM metabolite predictions was then used to identify sparse sub-communities in the tails of the distribution, communities that we refer to as ‘corner cases’. A second experiment was then performed that expanded the training data for the LSTM in the vicinity of these corner cases with higher time resolution. The LSTM-guided two-stage experimental planning procedure substantially reduced the number of experiments compared to random sampling of the functional landscape with temporal resolution in a single stage experiment. Therefore, the LSTM analysis enabled our main findings on dynamical behaviors of communities and identified the key species critical for community assembly and metabolite profiles. Compared to the gLV model, the proposed LSTM framework provides a better fit to the experimental data, captures higher order interactions and provides higher accuracy predictions of species abundance and metabolite concentrations. In addition, our approach preserves model interpretability through a suitably developed gradient-based framework and locally interpretable model-agnostic explanations (LIME) (Ribeiro et al., 2016a). Using our time-series data of species abundance and metabolite concentrations, we demonstrate that the temporal behaviors of the communities cluster into distinct groups based on the presence and absence of sets of species. Our results highlight that LSTM models are powerful tools for predicting and designing the dynamic behaviors of microbial communities.

Results

LSTM outperforms the generalized Lotka-Volterra ecological model

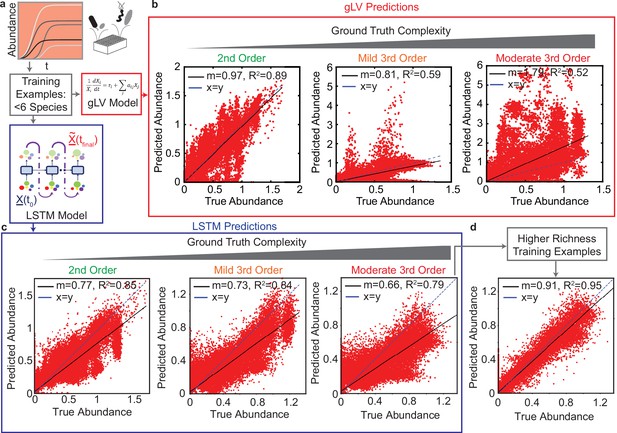

Our first objective was to compare the predictive performance of the LSTM model to a commonly used ecological modeling approach. The gLV model is a widely used ecological model consisting of a coupled set of ordinary differential equations that captures the growth dynamics of members of a community based on their intrinsic growth rate and interactions between all pairs of community members (Venturelli et al., 2018). Therefore, the gLV model is not suited to capture higher order interactions among species or changes in inter-species interactions resulting from variation in the environment. By contrast, the LSTM modeling framework is flexible and can capture complex relationships between species as well as time-dependent changes in inter-species interactions. To evaluate the strengths and limitations of these modeling frameworks, we characterized the performance of the gLV and LSTM models in learning the behavior of a ground truth model that included pairwise and third-order interactions between species (Methods).

Our ground truth model is based on a gLV model of a 25-member synthetic gut community from a previous study (Clark et al., 2021). To perturb our ground truth model with higher order interactions, we add third-order interaction terms with either mild or moderate parameter magnitudes (Methods). Using this model, we simulate sub-communities that vary in the number of species. Of all the randomly simulated communities, those containing six or fewer species are used to train both the gLV and LSTM models (624 training communities), while the remaining communities (3299 test communities with ≥10 species) are used as a hold-out test set. The 624 training communities include 25 monospecies, 300 unique pairwise communities, 100 unique three-member communities, 100 unique five-member communities, and 99 unique six-member communities. The simulated data span 48 hr and are sampled at 8 hr intervals, reflecting an experimentally feasible periodic sampling interval.
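The ground truth simulation described above can be illustrated with a minimal sketch. It assumes the third-order perturbation enters the per-capita growth rate as an additional sum over species pairs; the exact functional form is given in the Methods and may differ. The three-species parameters, the single non-zero third-order coefficient and the fixed-step RK4 integrator are hypothetical choices for illustration, not values or methods from the study.

```python
import numpy as np

def glv_rhs(x, r, A, B):
    """dx_i/dt = x_i * (r_i + sum_j A[i,j] x_j + sum_{j,k} B[i,j,k] x_j x_k)."""
    pairwise = A @ x
    third_order = np.einsum("ijk,j,k->i", B, x, x)
    return x * (r + pairwise + third_order)

def simulate(x0, r, A, B, t_end=48.0, sample_every=8.0, dt=0.01):
    """Integrate with fixed-step RK4; return abundances at t = 0, 8, ..., 48 hr."""
    steps_per_sample = int(round(sample_every / dt))
    n_samples = int(round(t_end / sample_every))
    x = np.asarray(x0, dtype=float).copy()
    trajectory = [x.copy()]
    for _ in range(n_samples):
        for _ in range(steps_per_sample):
            k1 = glv_rhs(x, r, A, B)
            k2 = glv_rhs(x + 0.5 * dt * k1, r, A, B)
            k3 = glv_rhs(x + 0.5 * dt * k2, r, A, B)
            k4 = glv_rhs(x + dt * k3, r, A, B)
            x = x + (dt / 6.0) * (k1 + 2 * k2 + 2 * k3 + k4)
        trajectory.append(x.copy())
    return np.array(trajectory)

# Hypothetical toy parameters (assumption: not the fitted values from the study).
r = np.array([0.4, 0.3, 0.2])
A = np.array([[-1.0, 0.1, -0.2],
              [-0.1, -1.0, 0.1],
              [0.2, -0.1, -1.0]])
B = np.zeros((3, 3, 3))
# "Mild" perturbation: magnitude capped at 25% of the largest |pairwise coefficient|.
B[0, 1, 2] = -0.25 * np.abs(A).max()

x0 = np.array([0.01, 0.01, 0.01])
traj = simulate(x0, r, A, B)
print(traj.shape)  # (7, 3): seven sampling times, three species
```

Because each growth term is multiplied by the species' own abundance, a species that starts at zero stays at zero, so sub-communities can be simulated by zeroing entries of the initial condition.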

Recall that we restrict our attention to simpler (fewer species) communities for training to determine if the behavior of lower order communities can be used to predict higher order communities. Further, pairwise inter-species interactions are easier to decipher in lower order communities due to potential co-variation among parameters (correlations between parameters) as a consequence of model structure or methods of data collection. A similar training/test partitioning was used to generate predictive models of complex community behaviors (Venturelli et al., 2018; Clark et al., 2021; Hromada et al., 2021).

The prediction performance of the trained gLV and LSTM models on the hold-out test set is similar for the ground truth model containing only pairwise interactions (Pearson R² of 0.89 and 0.85 for gLV and LSTM models, respectively) (Figure 1b, c left). For the ground truth model with mild third-order interactions (interaction coefficients that do not exceed 25% of the maximum of the absolute values of the coefficients for the second-order interactions), the performance of the LSTM model is substantially better than that of the gLV model, with an R²-score of 0.85 as opposed to 0.52 for the gLV model (Figure 1b, c, middle). In addition, the LSTM model performs significantly better than the gLV model for higher magnitude (moderate) third-order perturbations (third-order interaction coefficients that do not exceed 50% of the maximum of the absolute values of the coefficients for second-order interactions) (Figure 1b, c, right).

Comparison of generalized Lotka-Volterra (gLV) and Long Short-Term Memory (LSTM) model prediction performance of species abundance in a 25-member microbial community in response to third-order perturbations of varying magnitude.

For both models, training data consists of low species richness communities (≤6 species; Pearson correlation p-value < 0.0001). (a) & (d): Data was generated using a gLV model that captures monospecies growth and pairwise interactions. Scatter plots of true versus predicted species abundance using the gLV and LSTM models, respectively. Species abundances are represented as a vector. (b) & (e) Scatter plot of true versus predicted species abundance of the gLV and LSTM models, respectively, when the simulated data is subjected to low magnitude (mild) third-order interactions. (c) & (f) Scatter plot of true versus predicted species abundance of gLV and LSTM models, respectively, when the simulated data is further subjected to moderately large third-order interactions. (g) Scatter plot of true versus predicted species abundance for the LSTM model. The training set included a set of higher richness communities (50 each of 11 and 19 member communities). All predictions are forecasted from the species abundance at time 0.

This in silico analysis highlights the advantages of adopting more expressive neural network models over severely constrained ecological models such as gLV. In addition, a key advantage of the proposed LSTM model over the gLV model is the amount of time required for training the two models. The gLV equations are coupled nonlinear ordinary differential equations, and thus training gLV models requires substantial computational time (nearly 5–6 hr), whereas the LSTM models can be trained in minutes on the same platform. Therefore, the LSTM approach is highly suited for real-time training and planning of experiments. Note that both the composite as well as the LSTM model require tuning of hyperparameters for optimal performance. The details of the computational implementation are provided in the Methods section.

To further leverage this in silico experimental approach, we aimed to identify what type of datasets are required for building predictive models of high richness community behaviors depending on the nature of their underlying interactions. In further analyzing our results, we observed a crescent shaped prediction profile, representing an inherent bias, which we hypothesized was due to the training data containing only communities with ≤6 species (Figure 1c). To test this hypothesis, we augmented the training set with 100 communities enriched with a larger number of species (randomly sampled 11 and 19-member communities). Using this enriched training set, the LSTM model accurately predicts the community dynamics of the hold-out set with an R² of 0.95 (Figure 1d). In sum, the LSTM has difficulty predicting the behavior of high richness communities when the training data consists of only low richness communities. However, adding a moderate number of high richness communities to the training set eliminates the prediction bias and improves the prediction performance of the LSTM.

LSTM accurately predicts experimentally measured microbial community assembly

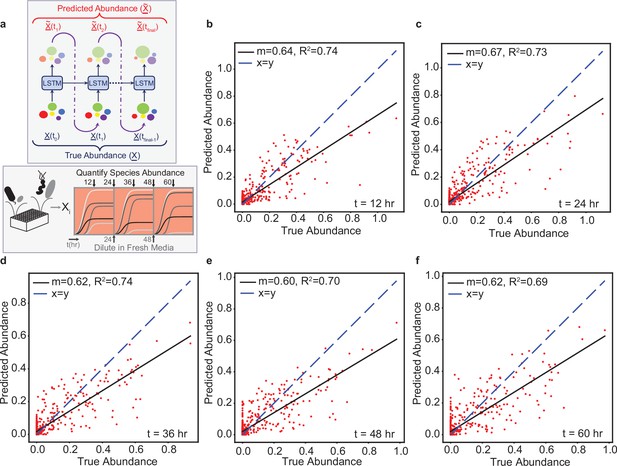

After validating our methods using the ground truth modeling approach described above, we evaluated the ability of the LSTM to capture the dynamics of experimentally characterized synthetic human gut microbial communities. We tested the effectiveness of the LSTM on time-resolved species abundance data from a previous study of a well-characterized twelve-member synthetic human gut community (Venturelli et al., 2018). The experimental data consists of species abundance sampled approximately every 12 hr. A total of 175 microbial communities with sizes varying from 2 to 12 were used to train and evaluate the LSTM model. Of the 175 microbial communities, 102 microbial communities were selected randomly to constitute the training set, while the remaining 73 microbial communities constituted the hold-out test set (Supplementary file 1). This train/test split was similar to that used to train a gLV model in the previous study (Venturelli et al., 2018). The previous study represented perturbations in cell densities and nutrient availability by diluting the community 20-fold every 24 hr into fresh media (i.e. passaging of the communities) (Figure 2a). The sequential dilutions of the communities are external perturbations that introduce further complexity to model training.

The LSTM model can predict the temporal changes in species abundance in a 12-member synthetic human gut community in response to periodic dilution (passaging).

(a) Proposed LSTM modeling methodology for the dynamic prediction of species abundance in a microbial community. The initial abundance information is an input to the first LSTM cell, the output of which is trained to predict abundance at the next time point. Consequently, the predicted abundance becomes an input to another LSTM cell with shared weights to predict the abundance at the subsequent time point. The process is repeated until measurements at all time points are available. Each input and output is a vector of species abundances. Thus, all predictions are forecasted from the abundance at time 0. (b) Scatter plot of measured (true) and predicted species abundance of a 12-member synthetic human gut community at 12 hr. (c) Scatter plot of measured (true) and predicted abundance at 24 hr. (d) Scatter plot of measured (true) and predicted abundance at 36 hr. (e) Scatter plot of measured (true) and predicted abundance at 48 hr. (f) Scatter plot of measured (true) and predicted abundance at 60 hr.

We trained an LSTM network to predict species abundances at various time points given the information of initial species abundance. We found that a total of five LSTM units can predict species abundance at different time points (12, 24, 36, 48, and 60 hr) based on the initial species abundance. The output of each LSTM unit is used as an input to the next unit. However, in the randomized teacher forcing mode of training, the input to the current LSTM unit is chosen at random between the output of the previous LSTM unit and the true abundance at the current time point, in order to eliminate temporal bias in the prediction of end-point species abundances. We did not model the passaging perturbations explicitly, since the experimental procedure was consistent across all communities. This also highlights the advantage of using black-box approaches, such as the LSTM network, where physical parameters such as dilution do not need to be explicitly modeled. Here, each LSTM unit consists of a single hidden layer comprising 2048 hidden units with ReLU activation. The details on hyperparameter tuning, learning rates, and choice of optimizer are provided in the Methods section.
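The randomized teacher forcing scheme can be sketched independently of the network itself. In the minimal example below, `step_fn` is a hypothetical stand-in for one LSTM unit with shared weights; hidden and cell states are omitted for brevity, and the mixing probability `p_teacher` is an assumed parameter that the text does not specify.

```python
import numpy as np

def rollout(step_fn, x0, n_steps, targets=None, p_teacher=0.5, rng=None):
    """Unroll a one-step predictor from the initial abundance vector x0.

    targets: optional (n_steps, n_species) array of measured abundances. When
    given, the input to the next step is the measurement with probability
    p_teacher and the model's own prediction otherwise.
    """
    if rng is None:
        rng = np.random.default_rng()
    current = np.asarray(x0, dtype=float)
    predictions = []
    for t in range(n_steps):
        pred = step_fn(current)
        predictions.append(pred)
        use_truth = targets is not None and rng.random() < p_teacher
        current = np.asarray(targets[t], dtype=float) if use_truth else pred
    return np.array(predictions)

# Toy one-step model (assumption): every abundance decays by 10% per step.
decay = lambda x: 0.9 * x
x0 = np.array([1.0, 2.0])

# Inference: no targets, so all five time points are forecast from time 0.
forecast = rollout(decay, x0, n_steps=5)
print(forecast.shape)  # (5, 2)
```

During training, `targets` would hold the measured abundances at 12 to 60 hr; at test time it is left as `None`, which matches the statement that all predictions are forecast from the abundance at time 0.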

Despite the passaging perturbations and variation in the sampling times, the LSTM accurately predicts (Pearson R²-scores of 0.74, 0.73, 0.74, 0.70, and 0.69 at time points 12, 24, 36, 48, and 60 hr, respectively) not only the end-point species abundance, but also the abundances at intermediate time points on hold-out test sets (Figure 2b-f). These results demonstrate that the LSTM model can accurately predict the temporal changes in species abundance of multi-species communities in the presence of external perturbations. Representative communities that were accurately or poorly predicted by the LSTM are shown in Figure 2—figure supplement 1.
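A per-time-point score of this kind could be computed as follows. This is a sketch that assumes the reported scores are squared Pearson correlation coefficients pooled over all species and hold-out communities at each time point; the array shapes and the synthetic data are illustrative only.

```python
import numpy as np

def pearson_r2(y_true, y_pred):
    """Squared Pearson correlation between flattened true and predicted values."""
    r = np.corrcoef(np.ravel(y_true), np.ravel(y_pred))[0, 1]
    return float(r ** 2)

def r2_per_time_point(true, pred):
    """true, pred: arrays of shape (n_communities, n_time_points, n_species)."""
    return [pearson_r2(true[:, t, :], pred[:, t, :]) for t in range(true.shape[1])]

# Synthetic stand-in data: 73 hold-out communities, 5 time points, 12 species.
rng = np.random.default_rng(0)
true = rng.random((73, 5, 12))
pred = true + 0.1 * rng.standard_normal(true.shape)
scores = r2_per_time_point(true, pred)
print(len(scores))  # 5, one score per sampled time point
```

Note that the squared Pearson correlation measures linear association only, so it can remain high even when predictions are systematically offset or scaled.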

LSTM enables end-point design of multifunctional synthetic human gut microbiomes

The chemical transformations (i.e. functions) performed by the community are the key design variables for microbiome engineering goals, as evidenced by their major impacts on human health (Sharon et al., 2014). Thus, we explored prediction of microbial community functions by applying the LSTM framework to design health-relevant metabolite profiles using synthetic human gut communities.

A core function of gut microbiota is to transform complex dietary substrates into fermentation end products such as the beneficial metabolite butyrate, which is a major determinant of gut homeostasis (Litvak et al., 2018). In a previous study, we designed butyrate-producing synthetic human gut microbiomes from a set of 25 prevalent and diverse human gut bacteria using a composite gLV and statistical model (Clark et al., 2021). While the composite model approach was successful in predicting butyrate concentration, designing community-level profiles of multiple metabolites adds substantial complexity, and the composite modeling approach offers limited flexibility for this task. Thus, we leveraged the accuracy and flexibility of LSTM models to design the metabolite profiles of synthetic human gut microbiomes. We focused on the fermentation products butyrate, acetate, succinate, and lactate, which play important roles in the gut microbiome’s impact on host physiology and interactions with constituent community members (Fischbach and Sonnenburg, 2011).

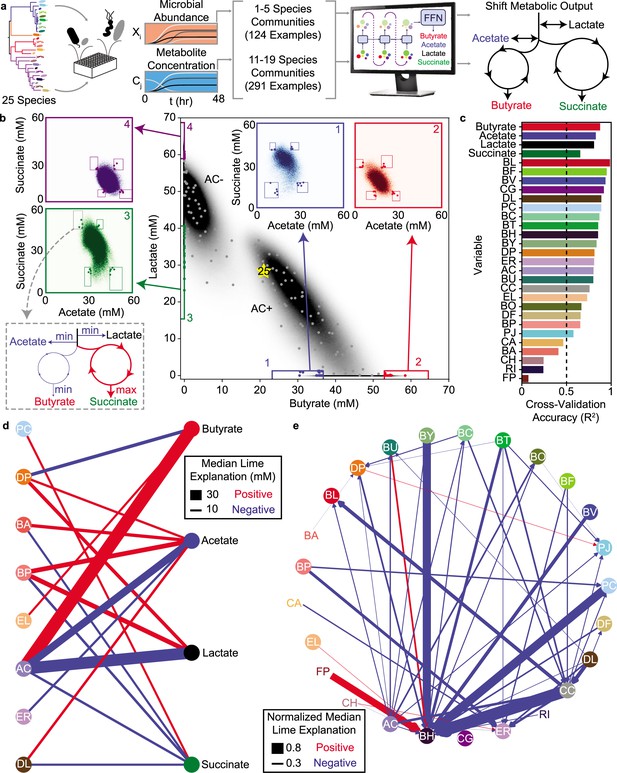

We used the species abundance and metabolite concentrations from our previous work (Clark et al., 2021) to train an initial LSTM model. This model uses a feed-forward network (FFN) at the output of the final LSTM unit that maps the endpoint species abundance (a 25-dimensional vector) to the concentrations of the four metabolites (Figure 3a). The entire neural network model comprising LSTM units and a feed-forward network is learned in an end-to-end manner during the training process (i.e. all the network weights are trained simultaneously). Cross-validation of this model (Model M1, Supplementary file 1) on a set of hold-out community observations shows good agreement between the model predictions and experimental measurements for metabolite concentrations and microbial species abundances (Figure 3—figure supplement 1). Thus, we used this model to design high species richness (i.e. >10 species) communities with tailored metabolite profiles (Figure 3a).

LSTM-guided design and interpretability of community-level metabolite production profiles (a) Schematic of model-training and design of communities with tailored metabolite outputs.

(b) Heatmap of butyrate and lactate concentrations of all possible communities predicted by the LSTM model M1. Grey points indicate communities chosen via k-means clustering to span metabolite design space. Colored boxes indicate ‘corner’ regions defined by percentile values on each axis with points of the corresponding color indicating designed communities within that ‘corner’. Insets show heat maps of acetate and succinate concentrations for all communities within the corresponding boxes on the main figure. Boxes on the inset indicate ‘corners’ defined by percentile values on each axis with colored points corresponding to the same points indicated on the main plot. (c) Cross-validation accuracy of LSTM model trained and validated on a random 90/10 split of all community observations (model M2), evaluated as the Pearson correlation between predicted and measured values for each variable (all p-values < 0.05, N and p-value for each test reported in Supplementary file 1). The dashed line indicates the cutoff used for including a variable in the subsequent network diagrams. (d) and (e) Network representation of median LIME explanations of the LSTM model M2 from (c) for prediction of each metabolite concentration (d) or species abundance (e) by the presence of each species. Edge widths are proportional to the median LIME explanation across all communities from (b) used to train the model in units of concentration (for (d)) or normalized to the species’ self-impact (for (e)). Only explanations for variables whose cross-validated predictions met this cutoff are shown. Networks were simplified by using lower thresholds for edge width (5 mM for (d), 0.2 for (e)). Red and blue edges indicate positive and negative contributions, respectively.

We first used the LSTM model M1 to simulate every possible combination of >10 species (26,434,916 total communities). The simulated communities separate into two regions: one centered around a dense ellipse of high butyrate concentration characterized by communities containing the butyrate-producing species Anaerostipes caccae (AC) and a second dense ellipse of communities that produce low levels of butyrate and lack AC (Figure 3b). This bimodality due to the presence/absence of AC is consistent with our previous finding that AC is the strongest driver of butyrate production in this system (Clark et al., 2021). In addition, the strong negative correlation between lactate and butyrate in the AC+ cluster of communities (N=14,198,086) is consistent with the ability of AC to transform lactate into butyrate (Clark et al., 2021). These results demonstrate that the LSTM model can capture the major microbial drivers of metabolite production as well as the correlations between different metabolites.
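The two community counts quoted above follow directly from the combinatorics of choosing more than 10 species from a pool of 25, which can be checked with a short calculation. The variable names are illustrative.

```python
from math import comb

N_SPECIES = 25

# All communities with more than 10 (i.e. 11 to 25) of the 25 species.
total = sum(comb(N_SPECIES, k) for k in range(11, N_SPECIES + 1))

# Communities of the same sizes that contain one fixed species (here AC):
# the remaining k - 1 members are chosen from the other 24 species.
with_ac = sum(comb(N_SPECIES - 1, k - 1) for k in range(11, N_SPECIES + 1))

print(total)    # 26434916
print(with_ac)  # 14198086
```

The first number matches the size of the simulated landscape and the second matches the N reported for the AC+ cluster.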

We used our simulated metabolite production landscape to plan informative experiments for testing the predictive capabilities of our model. First, we designed a set of ‘distributed’ communities that spanned the range of typical metabolite concentrations predicted by our model. To this end, we selected 100 communities closest to the centroids of 100 clusters determined using k-means clustering of the four-dimensional metabolite space. Second, we designed a set of communities to test our model’s ability to predict extreme shifts in metabolite outputs. To do so, we identified four ‘corners’ of the distribution in the lactate and butyrate space (Figure 3b). We next examined the relationship between acetate and succinate within each of these corners and found that the distributions varied depending on the given corner (Figure 3b, inset). The total carbon concentration in the fermentation end products across all predicted communities displayed a narrow distribution (mean 316 mM, standard deviation 20 mM, Figure 3—figure supplement 2). The production of the four metabolites is coupled due to the structure of metabolic networks and fundamental stoichiometric constraints (Oliphant and Allen-Vercoe, 2019). Therefore, the model learned the inherent ‘trade-off’ relationships between these fermentation products based on the patterns in our data. We chose a final set of 80 ‘corner’ communities for experimental validation (five communities from each combination of maximizing or minimizing each metabolite, Methods).
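The selection of ‘distributed’ communities can be sketched as k-means clustering followed by picking the point nearest to each centroid. The example below uses a plain Lloyd's-algorithm implementation in NumPy and a small random landscape as a stand-in for the predicted four-dimensional metabolite space; the function names, the random data and the choice of 10 clusters are assumptions for illustration (the study used 100 clusters over all simulated communities).

```python
import numpy as np

def kmeans(points, k, n_iter=50, seed=0):
    """Lloyd's algorithm; returns the (k, n_dims) array of centroids."""
    rng = np.random.default_rng(seed)
    centroids = points[rng.choice(len(points), size=k, replace=False)].copy()
    for _ in range(n_iter):
        # Squared distance from every point to every centroid.
        d2 = ((points[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
        labels = d2.argmin(axis=1)
        for j in range(k):
            members = points[labels == j]
            if len(members) > 0:  # leave an empty cluster's centroid unchanged
                centroids[j] = members.mean(axis=0)
    return centroids

def representatives(points, centroids):
    """Index of the data point nearest to each centroid."""
    d2 = ((points[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
    return d2.argmin(axis=0)

# Stand-in landscape: 500 communities x 4 predicted metabolite concentrations.
rng = np.random.default_rng(1)
landscape = rng.random((500, 4))
chosen = representatives(landscape, kmeans(landscape, k=10))
print(len(chosen))  # 10 community indices, one per cluster
```

Choosing the nearest real community rather than the centroid itself guarantees that every selected design corresponds to an actual species combination.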

By experimentally characterizing the endpoint community composition and metabolite concentrations of the 180 designed communities, we found that the LSTM model M1 accurately predicted the rank order of metabolite concentrations and microbial species abundances. The LSTM model substantially outperformed the composite model (gLV and regression, model from previous work [Clark et al., 2021]) trained on the same data for the majority (59%) of output variables (Figure 3—figure supplement 3a). Additionally, replacing the regression module of the composite model with either a Random Forest Regressor or a Feed Forward Network did not improve the metabolite prediction accuracy beyond that of the LSTM (Figure 3—figure supplement 3a). One of the key limitations of the composite models is that the metabolite variables are a function of the endpoint species abundance, but the species abundances are not a function of the metabolite concentrations. By contrast, the LSTM model can capture such feedbacks between metabolites and species. Notably, the LSTM model prediction accuracy for the metabolites was similar for both the ‘distributed’ and ‘corner’ communities (Figure 3—figure supplement 3b–e). These results indicate that our model is useful for designing communities with a broad range of metabolite profiles that includes those at the extremes of the metabolite distributions.

To determine if the LSTM model could separate groups of communities with extreme behaviors, we treated the ‘corners’ as classes and quantified the classification accuracy of our model. The model accurately classified the communities when considering only butyrate and lactate concentrations. However, the model had poorer separation when acetate and succinate were also considered in defining the classes (Figure 3—figure supplement 3f). The misclassification rate was higher for small Euclidean distances between classes and decreased with the Euclidean distance (Figure 3—figure supplement 3g). This implies that the insufficient variation in concentrations due to fundamental stoichiometric constraints limited our ability to define 16 distinct classes that maximized/minimized each metabolite. While model M1 accurately predicted metabolite concentrations and the majority of species abundances, several individual species abundances were poorly predicted (Figure 3—figure supplement 3a). Thus, we used the dataset to improve the LSTM model. To this end, we combined the new observations with the original observations and randomly partitioned the data into 90% for training and 10% for cross-validation. The resulting model (M2, Supplementary file 1) was substantially more predictive of species abundances, with all but five species (FP, RI, CA, BA, CH) well predicted (Figure 3c).

Using local interpretable model-agnostic explanations to decipher interactions

One of the commonly noted limitations of machine learning models is their lack of interpretability for extracting biological information about a system. Fortunately, generally applicable tools have been developed to aid in model interpretation. Thus, we sought to use such methods to decipher key relationships among variables within the LSTM to deepen our biological understanding of the system. We used local interpretable model-agnostic explanations (LIME) (Ribeiro et al., 2016b) to quantify the impact of each species’ presence on each metabolite and species in each of the sub-communities used to train model M2. We used the median impact of each species’ presence on each metabolite or species across all training instances to generate networks that revealed microbe-metabolite (Figure 3d) and microbe-microbe (Figure 3e) interactions. In general, these networks represent broad design principles for the community metabolic outputs by indicating which species have the most consistent and strong impacts on each metabolite and species abundance across a wide range of sub-communities. For instance, the metabolite network highlights Anaerostipes caccae (AC) as having the largest positive effect on butyrate production with an additional positive contribution from EL and a negative contribution from DP, consistent with the previous composite gLV model of butyrate production by this community (Clark et al., 2021).
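The idea behind a LIME explanation for a single community can be illustrated with a simplified local surrogate: perturb the presence/absence vector, weight the perturbed samples by their proximity to the original community, and fit a weighted linear model. The sketch below is not the `lime` package and not necessarily the configuration used in the study; the kernel, its width, the sample count and the toy predictor `toy_butyrate` are assumptions.

```python
import numpy as np

def lime_style_explanation(predict_fn, x, n_samples=2000, kernel_width=0.75, seed=0):
    """Locally weighted linear surrogate around a binary presence vector x.

    Returns one coefficient per species: the estimated change in the predicted
    output when that species is present rather than absent.
    """
    rng = np.random.default_rng(seed)
    x = np.asarray(x, dtype=float)
    present = np.flatnonzero(x)
    # Perturb by randomly dropping species that are present in the community.
    masks = rng.integers(0, 2, size=(n_samples, present.size))
    masks[0] = 1  # keep the unperturbed community as one sample
    Z = np.zeros((n_samples, x.size))
    Z[:, present] = masks
    y = np.array([predict_fn(z) for z in Z])
    # Proximity weights: exponential kernel on the fraction of species removed.
    dist = 1.0 - masks.mean(axis=1)
    w = np.exp(-(dist ** 2) / kernel_width ** 2)
    # Weighted least squares with an intercept column.
    X = np.hstack([np.ones((n_samples, 1)), masks])
    sw = np.sqrt(w)
    coef, *_ = np.linalg.lstsq(X * sw[:, None], y * sw, rcond=None)
    effects = np.zeros(x.size)
    effects[present] = coef[1:]
    return effects

# Hypothetical predictor: species 0 adds 5 mM, species 1 removes 2 mM.
def toy_butyrate(z):
    return 5.0 * z[0] - 2.0 * z[1]

community = np.array([1, 1, 1, 0])
effects = lime_style_explanation(toy_butyrate, community)
print(np.round(effects, 3))
```

Repeating this for every training community and taking the median coefficient per species and output would give edge weights of the kind used in the network diagrams.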

In addition, the number of microbial species impacting each metabolite in these networks trended with the number of microbial species that individually produced or consumed each metabolite (Figure 3—figure supplement 4). For example, butyrate displayed the fewest edges (3) and was produced by the lowest number of individual species (4). By contrast, acetate had the most edges (6) and was produced by the largest number of individual species (19). The inferred microbe-metabolite network consisted of diverse species including Proteobacteria (DP), Actinobacteria (BA, BP, EL), Firmicutes (AC, ER, DL) and one member of Bacteroidetes (PC), but excluded members of Bacteroides. Therefore, while Bacteroides exhibited high abundance in many of the communities, they did not substantially impact the measured metabolite profiles but instead modulated species growth and thus community assembly (Figure 3e). We explored the consistency of LIME explanations for the full 25-member community in response to random partitions of the training data to provide insights into the sensitivity of the LIME explanations given the training data (Figure 3—figure supplement 5, Figure 3—figure supplement 6). These results demonstrated that the direction of the strongest LIME explanations of the full community were consistent in sign despite variations in magnitude. One exception is for the species Roseburia intestinalis (RI), which had high variability across different test/train splits. This is consistent with previous observations that RI has substantial growth variability across experimental communities (Clark et al., 2021). In sum, these results demonstrate that in general the LIME explanations were robust to variations in the training data.

The LIME explanations of inter-species interactions exhibited a statistically significant correlation with their corresponding inter-species interaction parameters from a previously parameterized gLV model of this system (Clark et al., 2021; Figure 3—figure supplement 7a). The sign of the interaction was consistent in 80% of the interactions with substantial magnitude (>0.05 in both the LIME explanations and gLV parameters) (Figure 3—figure supplement 7b). This consistency with previous observations suggests that the LSTM model was able to capture similar broad trends in inter-species relationships as gLV (interpreted through the average LIME explanation across all observed communities). The LSTM model captured more nuanced context-specific behaviors (interpreted as the LIME explanation for one specific community context) than the mathematically restricted gLV model, which substantially improved the predictive capability of the LSTM model. These results demonstrate that the LSTM framework is useful for developing high accuracy predictive models for the design of precise community-level metabolite profiles. Our approach also preserves the ability to decipher different types of interactions in the LSTM model that are explicitly encoded in less accurate and flexible ecological models such as gLV.
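The sign-consistency check described above can be sketched as follows, assuming the LIME explanations and gLV interaction parameters are available as arrays of matching shape. The function name `sign_agreement` and the toy values are hypothetical; only the 0.05 magnitude cutoff applied to both quantities comes from the text.

```python
import numpy as np

def sign_agreement(lime, glv, min_magnitude=0.05):
    """Fraction of interactions whose sign matches in both models.

    Only interactions whose absolute value exceeds min_magnitude in BOTH
    arrays are counted. Returns (fraction, number of interactions compared).
    """
    lime = np.asarray(lime, dtype=float)
    glv = np.asarray(glv, dtype=float)
    mask = (np.abs(lime) > min_magnitude) & (np.abs(glv) > min_magnitude)
    if not mask.any():
        return float("nan"), 0
    agree = np.sign(lime[mask]) == np.sign(glv[mask])
    return float(agree.mean()), int(mask.sum())

# Toy interaction values (made-up numbers, not data from the study).
lime_values = [0.2, -0.3, 0.01, 0.4, -0.1]
glv_values = [0.5, -0.1, 0.9, -0.2, -0.6]
fraction, n_compared = sign_agreement(lime_values, glv_values)
print(fraction, n_compared)
```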

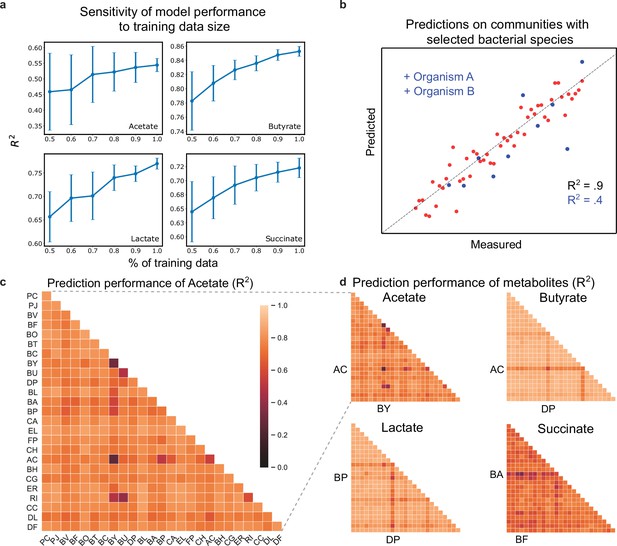

Sensitivity of LSTM model prediction accuracy highlights poorly understood species and pairwise interactions

Identification of species that limit prediction performance could guide selection of informative experiments to deepen our understanding of the behaviors of poorly predicted communities. Therefore, we evaluated the sensitivity of the LSTM model (model M2) prediction accuracy to species presence/absence and the amount of training data. High sensitivity of model prediction performance to the number of training communities indicates that collection of additional experimental data would continue to improve the model. Additionally, identifying poorly understood communities will guide machine learning-informed planning of experiments. To evaluate the model’s sensitivity to the size of the training dataset, we computed the hold-out prediction performance () as a function of the size of the training set by sub-sampling the data (Figure 4a). We used 20-fold cross-validation to predict metabolite concentrations and species abundance. Our results show that the ability to improve prediction accuracy as a function of the size of the training data set was limited by the variance in individual species abundance in the training set (Figure 4—figure supplement 1). For instance, certain species with low variance (e.g. FP, EL, DP, RI) in abundance in the training set displayed low sensitivity to the amount of training data and were poorly predicted by the model. The high sensitivity of specific metabolites (e.g. lactate) and species (e.g. AC, BH) to the amount of training data indicates that further data collection would likely improve the model’s prediction performance.

Hold-out prediction performance on sub-communities provides information about poorly understood species and interactions between species.

(a) Sensitivity of metabolite prediction performance () to the amount of training data. Training datasets were randomly subsampled 30 times using 50–100% of the total dataset in increments of 10%. Each subsampled training set was subject to 20-fold cross-validation to assess prediction performance. Line plot of the mean prediction performance over the 30 trials for each percentage of the data. Error bars denote 1 s.d. from the mean. (b) Schematic scatter plot representing how communities containing species A and B define a poorly predicted subsample of the full sample set. (c) Heatmap of prediction performance () of acetate for each subset of communities containing a given species (diagonal elements) or pair of species (off-diagonal elements). (d) Heatmap of prediction performance for acetate, butyrate, lactate, and succinate. A sample subset containing a given species or pair of species included all communities in which the species were initially present. Predictions for each community were determined using 20-fold cross-validation so that for each model the predicted samples were excluded from the training samples. N and p-values are reported in Supplementary file 1.

To determine how pairwise combinations of species impacted model prediction performance, we used 20-fold cross-validation to evaluate the prediction performance () on subsets of the total dataset, where subsets were selected based on the presence of individual species or pairs of species (Figure 4b). Using this approach, we identified individual species and species pairs that had the greatest impact on the prediction performance of metabolite concentrations. Sample subsets with poor prediction performance highlight individual species and species pairs whose presence reduced the model’s ability to accurately predict metabolite concentrations. Although the subsets were smaller than the total data set (), calculation of prediction performance was not limited by small sample sizes, where the number of communities in each subset ranged from to .
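The subset analysis described above can be sketched as follows, assuming hold-out predictions for every community have already been collected from cross-validation. The use of the Pearson correlation as the performance metric, the function names, the `min_size` guard and the toy data are assumptions made for illustration.

```python
import numpy as np

def pearson_r(a, b):
    """Pearson correlation between two 1-D arrays."""
    return float(np.corrcoef(a, b)[0, 1])

def subset_performance(presence, y_true, y_pred, min_size=3):
    """Prediction performance on communities containing a species or a pair.

    presence: boolean array (n_communities, n_species) of initial presence.
    Diagonal entries score single-species subsets; off-diagonal entries score
    subsets in which both species are present. Subsets smaller than min_size
    (a hypothetical guard) are left as NaN.
    """
    n_species = presence.shape[1]
    perf = np.full((n_species, n_species), np.nan)
    for i in range(n_species):
        for j in range(i, n_species):
            mask = presence[:, i] & presence[:, j]
            if mask.sum() >= min_size:
                perf[i, j] = perf[j, i] = pearson_r(y_true[mask], y_pred[mask])
    return perf

# Toy data: 7 communities and 2 species (made-up numbers).
presence = np.array(
    [[1, 0], [1, 0], [1, 1], [1, 1], [0, 1], [0, 1], [1, 1]], dtype=bool
)
y_true = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0])
y_pred = np.array([1.0, 2.0, 3.0, 4.0, 6.0, 5.0, 7.0])
perf = subset_performance(presence, y_true, y_pred)
print(np.round(perf, 2))
```

Comparing an off-diagonal entry with the two corresponding diagonal entries indicates whether a pair is predicted differently from its constituent single-species subsets.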

The interaction network in Figure 3d shows the impact of individual species on each metabolite, but does not indicate whether the effect is due to individual species or pairwise interactions. To determine whether pairwise interactions influence metabolite concentrations, we quantified how prediction performance changed in response to the presence of individual species and pairs of species. Specifically, if prediction performance over a subset of communities containing a given species pair was markedly different from prediction performance for the subsets corresponding to the individual species, this implies that the given pairwise interaction impacts metabolite production. Using equation 5 (Methods), we found that the prediction performance of lactate and butyrate was the least sensitive to species pairs (average decrease in prediction performance for subsets with species pairs of 0.72% and 1.10% compared to corresponding single species subsets). By contrast, the prediction performance of acetate and succinate was the most sensitive to the presence of species pairs (increase in prediction performance of 6.68% for acetate and a decrease of 2.951% for succinate). This difference in prediction performance suggests that pairwise interactions influence the production of acetate and succinate, while the production of lactate and butyrate is primarily driven by the action of single species. The sensitivity of acetate and succinate to pairwise interactions is consistent with the inferred interaction network shown in Figure 3d, which highlights multiple species-metabolite interactions for acetate and succinate and sparse and strong species-metabolite interactions for butyrate and lactate.

Pairs of certain Bacteroides and butyrate producers including BY-RI, BU-RI, and BY-AC resulted in reduced prediction performance of acetate. This suggests that interactions between specific Bacteroides and butyrate producers were important for acetate transformations, which is consistent with the conversion of acetate into butyrate. Based on the LIME analysis in Figure 3d, AC, DP, and BP had the largest impact on lactate. Thus, the hold-out prediction performance for lactate was primarily impacted by specific pairs that include these species. In sum, these results demonstrate how the LSTM model can be used to identify informative experiments for investigating poorly understood species and interactions between species, where collection of more data would likely improve model prediction performance.

Time-resolved measurements of communities reveal design rules for qualitatively distinct metabolite dynamics

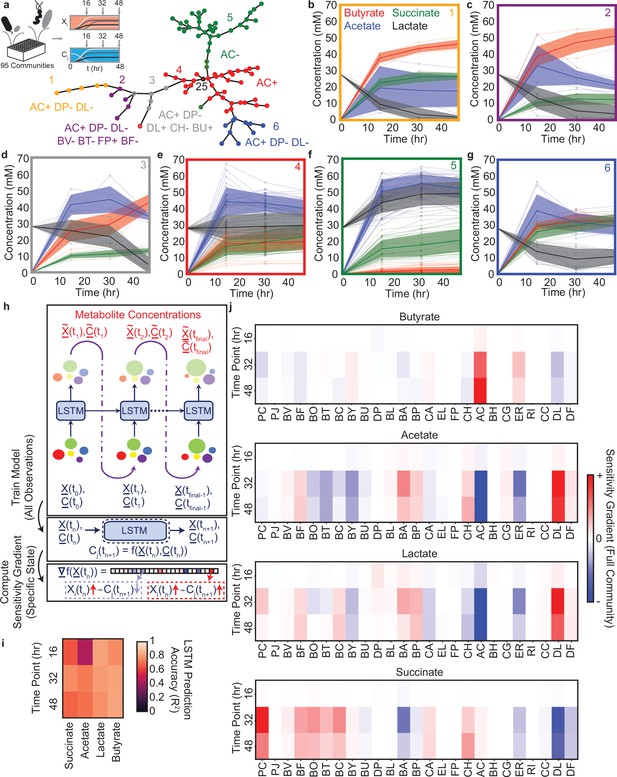

We next leveraged the LSTM model’s dynamic capabilities to understand the temporal changes in metabolite concentrations and community assembly of the 25-member synthetic gut microbiome. To this end, we chose a representative subset of 95 of the 180 communities (Figure 3b, Figure 5—figure supplement 1a): 60 communities for training, 34 for validation, and the full 25-member community. We experimentally characterized species abundance and metabolite concentrations every 16 hr during community assembly (Figure 5a). We analyzed the dynamic behaviors of these communities using a clustering technique to extract high-level design rules of species presence/absence that determined qualitatively distinct temporal metabolite trajectories (i.e. broad trends consistent across a set of communities) and exploited the LSTM framework to identify context-specific impacts of individual species on metabolite production (i.e. a more fine-tuned case-by-case analysis).

Community metabolite trajectories cluster into qualitatively distinct groups which can be classified based on presence and absence of key microbial species.

(a) Schematic of experiment and network representing a minimal spanning tree across the 95 communities where weights (indicated by edge length) are equal to the Euclidean distance between the metabolite trajectories for each community. Node colors indicate clusters determined as described in the Materials and methods. Red node with black outline annotated with ‘25’ represents the 25-member community. Annotations indicate the most specific microbial species presence/absence rules that describe most data points in the cluster of the corresponding color as determined by a decision tree classifier (Materials and methods). Communities that deviate from the rules for their cluster are indicated with a border matching the color of the closest cluster whose rules they do follow. Network visualization generated using the draw_kamada_kawai function in networkx (v2.1) for Python 3. (b–g) Temporal changes in metabolite concentrations for communities within each cluster (indicated by sub-plot border color), with individual communities denoted by transparent lines. Solid lines and shaded regions represent the mean ±1 s.d. of all communities in the cluster. (h) Schematic of LSTM model training and computation of gradients to evaluate impact of species abundance on metabolite concentrations in a specific community context. (i) Heatmap of model M3 prediction accuracy for four metabolites in the 34 validation communities at each time point (Pearson correlation , N=34 for all tests). (j) Heatmap of the gradient analysis of model M3 as described in (h) for the full 25-species community. N and p-values are reported in Supplementary file 1.

The temporal trajectories of species abundance and metabolite concentrations showed a wide range of qualitatively distinct trends across the 95 communities (Figure 5b–g). For example, some metabolite concentrations monotonically increased (e.g. butyrate in Figure 5b, c, e and g), monotonically decreased (e.g. lactate in Figure 5b, c) or exhibited biphasic dynamics (e.g. acetate in Figure 5c). To determine if there were communities with similar temporal changes in metabolite concentrations, we clustered communities using a minimal spanning tree (Grygorash et al., 2006) on the Euclidean distance between the metabolite trajectories of each pair of communities (Figure 5a). The resulting six clusters exhibited high quantitative within-cluster similarity and qualitatively distinct metabolite trajectories (Figure 5b–g). Clusters 4 and 5, which contained the largest number of communities, had a high fraction of ‘distributed’ communities (Figure 3b). Clusters with a smaller number of communities contained a higher percentage of ‘corner’ communities (Figure 5—figure supplement 1b,c). Therefore, the LSTM model informed by endpoint measurements of species abundance and metabolite concentrations elucidated ‘corner’ communities with qualitatively distinct temporal behaviors. These communities were unlikely to be discovered via random sampling of sub-communities due to the high density of points towards the center of the distribution and low density of communities in the tails of the distribution (Figure 3b). Additionally, some ‘corner’ communities that were similar in metabolite profiles when considering the endpoint measurement separated into different clusters when considering the dynamic data (e.g. Clusters 2 and 3, which have similar metabolite profiles at 48 hr but qualitatively distinct dynamics) (Figure 5b). This demonstrates that using a community design approach to explore the extremes of system behaviors with a limited time resolution enabled the identification of new features when the communities with extreme functions were characterized with higher time resolution.
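One way to cluster trajectories with a minimal spanning tree is sketched below: build the tree on pairwise Euclidean distances, then remove the longest edges so that the remaining connected components form the clusters. The exact rule the authors used to define clusters is given in their Materials and methods and is not reproduced here, so the edge-cutting rule, the function names and the toy trajectories are assumptions.

```python
import numpy as np

def minimum_spanning_tree(dist):
    """Prim's algorithm on a dense symmetric distance matrix.

    Returns a list of (i, j, weight) edges.
    """
    n = dist.shape[0]
    in_tree = np.zeros(n, dtype=bool)
    in_tree[0] = True
    best_dist = dist[0].copy()          # cheapest link from the tree to each node
    best_from = np.zeros(n, dtype=int)  # tree node providing that link
    edges = []
    for _ in range(n - 1):
        candidates = np.where(in_tree, np.inf, best_dist)
        j = int(np.argmin(candidates))
        edges.append((int(best_from[j]), j, float(best_dist[j])))
        in_tree[j] = True
        closer = dist[j] < best_dist
        best_from[closer] = j
        best_dist[closer] = dist[j][closer]
    return edges

def mst_clusters(trajectories, n_clusters):
    """Cut the (n_clusters - 1) longest tree edges and label the components."""
    flat = trajectories.reshape(len(trajectories), -1)
    dist = np.linalg.norm(flat[:, None, :] - flat[None, :, :], axis=-1)
    edges = sorted(minimum_spanning_tree(dist), key=lambda e: e[2])
    kept = edges[: len(edges) - (n_clusters - 1)]
    labels = np.arange(len(flat))
    for i, j, _ in kept:
        labels[labels == labels[j]] = labels[i]  # merge the two components
    _, labels = np.unique(labels, return_inverse=True)
    return labels

# Toy trajectories of one metabolite at 4 time points (made-up numbers):
# three communities with increasing and three with decreasing concentrations.
traj = np.array([
    [0.0, 1.0, 2.0, 3.0],
    [0.0, 1.1, 2.1, 3.1],
    [0.1, 1.0, 2.0, 2.9],
    [3.0, 2.0, 1.0, 0.0],
    [3.1, 2.0, 1.1, 0.0],
    [2.9, 2.1, 1.0, 0.1],
])
labels = mst_clusters(traj, n_clusters=2)
print(labels)
```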

To identify general patterns in species presence/absence of these communities that could explain the temporal behaviors of each cluster, we used a decision tree analysis to identify an interpretable classification scheme (Figure 5—figure supplement 1d). Using this approach, the large clusters were separated by relatively simple classification rules (i.e. AC+ for Cluster 4 and AC- for Cluster 5), whereas the smaller clusters had more complex classification rules involving larger combinations of species (3–7 species), all involving AC, DP, and DL (Figure 5a). The influential role of DP was corroborated by a previous study showing that DP substantially inhibits butyrate production (Clark et al., 2021). In addition, the inferred microbe-metabolite networks based on the LSTM model M2 demonstrated that the presence of DL was linked to higher acetate and lower succinate production (Figure 3d), consistent with its key role in shaping metabolite dynamics in this system. The variation in the number of communities across clusters is consistent with previous observations that species-rich microbial communities tend towards similar behavior(s) (e.g. Clusters 4 and 5 contained many communities). By contrast, more complex species presence/absence design rules are required to identify communities that deviate from this typical behavior (e.g. Clusters 1–3 and 6 contained few communities) (Clark et al., 2021).

Using LSTM with higher time-resolution to interpret contextual interactions

While our clustering analysis identified general design rules for metabolite trajectories, there remained unexplained within-cluster variation. Thus, we used the LSTM framework to identify those effects beyond these general species presence/absence rules that determine the precise metabolite trajectory of a given community. Simultaneous prediction of species abundance and the concentration of all four metabolites at all time points necessitates specific modifications to the LSTM architecture shown in Figure 2a. In particular, we consider a 29-dimensional input vector whose first 25 components correspond to the species abundance, while the remaining four components correspond to the concentration of metabolites (Figure 5h). The 29-dimensional feature vector is normalized so that each component has zero mean and unit variance. This feature scaling is important to prevent dominance of high-abundance species. The output of each LSTM unit is fed into the input block of the subsequent LSTM unit in order to advance the model forward in time. The reason for concatenating instantaneous species abundances with metabolite concentrations can be understood as follows. Prediction of metabolite concentrations at various time points requires a time-series model (either using ODEs or LSTM in this case). Further, the future trajectory of metabolite concentrations is a function of both the species abundance and the metabolite concentrations at the current time instant. Therefore, we concatenate both the metabolite concentrations and species abundances to create a 29-dimensional feature vector. The LSTM framework trained on the 60 training communities (model M3) displayed good prediction performance on the metabolite concentrations of the 34 validation communities plus the full 25-species community (Figure 5i). The prediction accuracy of species abundance was lower than that of metabolite concentrations, presumably due to the limited number of training set observations of each species (Figure 5—figure supplement 2).
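The feature construction and time-stepping described above can be sketched as follows. The sketch only covers per-feature standardization of the 29-dimensional vector and feeding each predicted state back in as the next input. The `step` callable is a hypothetical stand-in for a trained LSTM unit (its hidden and cell states are omitted), and the random arrays are synthetic placeholders, not measurements.

```python
import numpy as np

N_SPECIES, N_METABOLITES = 25, 4

def fit_scaler(X):
    """Per-feature mean and standard deviation of X, shape (n_samples, 29)."""
    mean = X.mean(axis=0)
    std = X.std(axis=0)
    std[std == 0] = 1.0  # avoid division by zero for constant features
    return mean, std

def standardize(X, mean, std):
    """Scale each feature to zero mean and unit variance."""
    return (X - mean) / std

def rollout(step, x0, n_steps):
    """Advance in time by feeding each predicted state back in as the input."""
    states = [x0]
    for _ in range(n_steps):
        states.append(step(states[-1]))
    return np.stack(states)

# Synthetic placeholder data for 60 communities.
rng = np.random.default_rng(0)
abundance = rng.random((60, N_SPECIES))
metabolites = 50.0 * rng.random((60, N_METABOLITES))
X = np.concatenate([abundance, metabolites], axis=1)  # shape (60, 29)

mean, std = fit_scaler(X)
Z = standardize(X, mean, std)

# A trivial decay map stands in for the trained model in this sketch.
trajectory = rollout(lambda z: 0.9 * z, Z[0], n_steps=3)
print(Z.shape, trajectory.shape)
```

Without the scaling step, features with larger numerical ranges (here the metabolite columns) would dominate a squared-error training loss.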

We used a gradient-based sensitivity analysis of the LSTM model M3 to provide biological insights into the contributions of individual species based on the temporal changes in metabolite concentrations (Figure 5h and j, Methods). This method involves computing partial derivatives of output variables of interest with respect to input variables, which are readily available through a single backpropagation pass (LeCun et al., 1988; Peurifoy et al., 2018). As an example case, we applied this analysis approach to the full 25-species community, which was grouped into Cluster 4, with the design rule ‘AC+’ (Figure 5a). Consistent with this design rule, we observed strong sensitivity gradients between the abundance of AC and the concentrations of butyrate, acetate, and lactate, consistent with our biological understanding of the system (Clark et al., 2021). Beyond the ‘AC+’ design rule, there was a strong sensitivity gradient between DL and acetate and succinate, consistent with the inferred networks based on the LSTM model M2 that used endpoint measurements (Figure 3d). Further, the contributions of certain species on metabolite production varied as a function of time. For instance, in the initial time point, species abundances were similar and thus the contribution of individual species to metabolite production was uniform. However, interactions between species during community assembly enhanced the contribution of specific metabolite driver species such as AC. In addition, the contributions of individual species such as PC and BA to succinate production peaked at 32 hr and then decreased by 48 hr, highlighting that the effects of these species were maximized at intermediate time points. In sum, the gradient-based method identified the quantitative contributions of each species to the temporal changes in metabolite concentrations for a representative 25-member community, identifying context-specific behaviors beyond the previously identified broader design rules. 
These two complementary approaches are useful for identifying design rules governing metabolite dynamics. The clustering method can identify broad design rules for species presence/absence, and the LSTM gradient analysis can uncover fine-tuned quantitative contributions of species to the temporal changes in community-level functions.
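The quantity computed in the gradient-based analysis is the matrix of partial derivatives of model outputs with respect to model inputs. The text obtains it by backpropagation. The sketch below instead approximates the same derivatives with central finite differences so that it runs without a deep learning library. The function name `sensitivity` and the toy `model` are assumptions made for illustration.

```python
import numpy as np

def sensitivity(model, x, eps=1e-5):
    """Approximate d model(x)[k] / d x[i] by central finite differences.

    Returns an array of shape (n_outputs, n_inputs).
    """
    x = np.asarray(x, dtype=float)
    y0 = np.asarray(model(x))
    jac = np.zeros((y0.size, x.size))
    for i in range(x.size):
        step = np.zeros_like(x)
        step[i] = eps
        jac[:, i] = (model(x + step) - model(x - step)) / (2 * eps)
    return jac

# Toy differentiable map from 3 "species" inputs to 2 "metabolite" outputs.
W = np.array([[2.0, -1.0, 0.0],
              [0.0, 0.5, 1.0]])

def model(x):
    return np.tanh(W @ x)

jac = sensitivity(model, np.zeros(3))
print(np.round(jac, 3))
```

At the origin the derivative of `tanh` is 1, so the computed sensitivities recover `W`. Finite differences need two model evaluations per input, whereas backpropagation obtains the gradient of one output with respect to all inputs in a single backward pass.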

To directly compare the performance of the gLV and LSTM models, we trained a discretized version of the gLV model (approximate gLV model) on the same dataset using the same training algorithm as the LSTM. The approximate gLV model was augmented with a two-layer feed-forward neural network with a hidden dimension equal to that of the LSTM model to enable metabolite predictions (Figure 5—figure supplement 3a, b). The approximate gLV model enables the computation of gradients via the backpropagation algorithm, which is also used to train the LSTM. By contrast, computation of gradients of the continuous-time gLV model requires numerical integration. This approximate gLV model does not perform as well as the LSTM model at species abundance predictions using the same data used to train LSTM model M3 (Figure 5—figure supplement 3c, d). In addition, the LSTM outperforms the approximate gLV augmented with the feed-forward network at metabolite predictions (Figure 5—figure supplement 3e, f). In sum, the LSTM outperforms the discretized gLV model using the same training algorithm, highlighting the power of the LSTM model in accurately predicting the temporal changes in microbiome composition and metabolite concentrations.
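A discretized gLV model replaces numerical integration of the continuous-time equations with an explicit update rule, so each time step is a simple differentiable function of the previous state. The sketch below uses a forward Euler step of the standard gLV form dx_i/dt = x_i (r_i + Σ_j a_ij x_j). This is one possible discretization and is not necessarily the form used by the authors. The parameter values, the step size `dt` and the clipping at zero are assumptions, and the feed-forward network for metabolite prediction is omitted.

```python
import numpy as np

def glv_step(x, r, A, dt):
    """One forward Euler step of dx_i/dt = x_i * (r_i + sum_j A_ij * x_j)."""
    return np.maximum(x + dt * x * (r + A @ x), 0.0)  # keep abundances non-negative

def simulate(x0, r, A, dt, n_steps):
    """Iterate the discrete update and return all states, shape (n_steps + 1, n)."""
    xs = [np.asarray(x0, dtype=float)]
    for _ in range(n_steps):
        xs.append(glv_step(xs[-1], r, A, dt))
    return np.stack(xs)

# Toy two-species system (made-up parameters); the second species starts absent.
r = np.array([1.0, 1.0])
A = np.array([[-1.0, -0.5],
              [-0.5, -1.0]])
traj_glv = simulate([0.1, 0.0], r, A, dt=0.1, n_steps=200)
print(np.round(traj_glv[-1], 4))
```

Because the growth term is multiplied by the current abundance, a species that is initially absent stays absent, and the first species here follows logistic growth towards its carrying capacity of 1.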

Discussion

The LSTM modeling framework trained on species abundance and metabolite concentrations accurately predicted multiple health-relevant functions of complex synthetic human gut communities. This model is powerful for designing communities with target metabolite profiles. Microbial communities continuously impact metabolites by releasing or consuming them. Therefore, by modeling both microbial growth and the metabolites they produce/consume together, the LSTM captured the interconnections between these variables. Due to its flexibility, the LSTM model outperforms the widely used gLV model in the presence of higher-order interactions. We leveraged the computational efficiency of the LSTM model to predict the metabolite profiles of tens of millions of communities. We used these model predictions to identify sparsely represented ‘corner case’ communities that maximized/minimized community-level production of four health-relevant metabolites. In the absence of a predictive model, these infrequent communities would have been difficult to discover among the vast metabolite profile landscape of possible communities.

Beyond the model’s predictive capabilities, we showed that biological information, including significant microbe-metabolite and microbe-microbe interactions, can be extracted from LSTM models. These biological insights could enable the discovery of key species and interactions driving community functions of interest. Further, this could inform the design of microbial communities from the bottom-up or interventions to manipulate community-level behaviors. For example, the inferred microbe-metabolite network highlighted AC as a major ecological driver of several metabolites including butyrate, acetate and lactate in our system. In addition, this microbe-metabolite network did not include species of the highly abundant genus Bacteroides but instead featured members of Firmicutes (AC, ER, DL), Actinobacteria (BA, BP, EL), Proteobacteria (DP) and Bacteroidetes (PC). Notably, Bacteroides displayed numerous interactions in the microbe-microbe interaction network, suggesting that they played a key role in the growth of constituent community members as opposed to production of specific metabolites. Therefore, our model suggests that Bacteroides influence broad ecosystem functions such as community growth dynamics whereas species highlighted in the microbe-metabolite network contribute to specialized functions such as the production of specific measured metabolites (Rivett and Bell, 2018). Thus, the microbe-metabolite interaction network could be used to identify key species that could be targeted for manipulating the dynamics of specific metabolites.

We performed time-resolved measurements of metabolite production and species abundance using a set of designed communities and demonstrated that communities tend towards a typical dynamic behavior (i.e. Clusters 4 and 5). Therefore, randomly sampled sub-communities from the 25-member system would likely exhibit behaviors similar to Clusters 4 and 5. We used the LSTM model to identify ‘corner case’ communities that displayed metabolite concentrations near the tails of the metabolite distributions at the endpoint. The model allowed us to identify unique sub-clusters with disparate dynamic behaviors. We demonstrated that the endpoint model predictions were confirmatory (Figure 3c) and also led to new discoveries when additional measurements were made in the time dimension. Specifically, certain ‘corner case’ communities identified based on prediction of a single time-point displayed distinct dynamic trajectories. For instance, Clusters 2 and 3 based on the decision tree classifier displayed similar end-point metabolite concentrations (Figure 5c, d). However, lactate decreased immediately over time in Cluster 2 communities but remained high until approximately 30 hr and then decreased in Cluster 3 communities. The design rule for Cluster 3 included the presence of lactate producers BU and DL (Figure 3—figure supplement 4), suggesting that these individual species’ lactate producing capabilities enabled the community to maintain a high lactate concentration for an extended period of time in the context of the Cluster 3 communities. While we focused on the production of four health-relevant metabolites produced by gut microbiota, a wide range of health-relevant compounds are produced by gut bacteria. Therefore, communities that cluster together based on dynamic trends in the four measured metabolites could separate into new clusters based on the temporal patterns of other compounds produced or degraded by the communities.

Time-resolved measurements were required to reveal the different dynamic behaviors of communities in Clusters 2 and 3 to improve our understanding and the design of community functions. The ability to resolve differences in the dynamic trajectories of communities requires time sampling when the system behavior is changing as a function of time as opposed to time sampling once the system has reached a steady-state (i.e. saturated as a function of time). The time to reach steady-state varied across different communities and metabolites of interest. For instance, lactate reached steady-state at an earlier time point (12 hr) in Cluster 4 communities whereas communities in Cluster 3 approached steady-state at a later time point (48 hr). Therefore, model-guided experimental planning could be used to identify the optimal sampling times to resolve differences in community dynamic behaviors. Achieving a highly predictive LSTM model required substantially less training data than a previous study that approximated the behavior of mechanistic biological systems models with RNNs (Figure 2; Wang et al., 2019). While the performance of any data-driven algorithm improves with the quantity and quality of available data, we demonstrate that the LSTM can translate learning on lower-order communities to accurately predict the behavior of higher-order communities given a limited and informative training set that is experimentally feasible. For synthetic microbial communities, the quality of the training set depends on the frequency of time-series measurements within periods in which the system displays rich dynamic behaviors (i.e. excitation of the dynamic modes of the system), the range of initial species richness, representation of each community member in the training data and sufficient variation in species abundances or metabolite concentrations (Figure 4—figure supplement 1). 
The dynamic behaviors of the synthetic communities characterized in vitro will likely differ significantly from their behaviors in new environments such as the mammalian gut. However, communities in sub-clusters whose behaviors deviated substantially from the typical community behaviors (e.g. Clusters 2 and 3 versus Clusters 4 and 5) may be more likely than random to display unique dynamic behaviors in vivo. Future work will investigate whether the in vitro dynamic behavior cluster patterns can be used as prior information to guide the design of informative communities in new environments for building predictive models.

The current implementation of the LSTM model lacks uncertainty quantification for individual predictions, which could be used to guide experimental design (Radivojević et al., 2020). Recent progress in Bayesian recurrent neural networks has led to the emergence of Bayesian LSTMs (Fortunato et al., 2017; Li et al., 2021), which provide uncertainty quantification for each prediction in the form of a posterior variance or posterior confidence interval. However, the implementation and training of such Bayesian neural networks can currently be significantly more difficult than training the LSTM model developed here. In addition, we benchmarked the performance of the LSTM against a widely used gLV model which has been demonstrated to accurately predict community assembly in communities with up to 25 species (Venturelli et al., 2018; Clark et al., 2021). The gLV model has been modified mathematically to capture more complex system behaviors (McPeek, 2017). However, implementation of these gLV models to represent the behaviors of microbiomes with a large number of interacting species poses major computational challenges.

While our current approach treated microbiome species composition as the sole set of design variables in a constant environmental background, microbiomes in reality are impacted by differences in the physicochemical composition of their environment (Thompson et al., 2017). Given sufficient observations of community behavior under varied environmental contexts (e.g. presence/absence of certain nutrients), our LSTM approach could be further leveraged to design complementary species and environmental compositions for desired microbiome functional dynamics. Further, we can leverage the wealth of biological information stored in the sequenced genomes of the constituent organisms. Integrating methods such as genome scale models (Magnúsdóttir et al., 2017) with our LSTM framework could leverage genomic information to enable predictions when the genomes of the organisms are varied (i.e. alternative strains of the same species with disparate metabolic capabilities). In this case, introducing variables representing the presence/absence of specific metabolic reactions would potentially enable the model to predict the impact of a species with a varied set of metabolic reactions on a given set of functions without new experimental observations. Integrating this information into the model could thus enable a mapping between genome information and community-level functions.

While previous approaches have used machine learning methods to predict microbiome functions based on microbiome species composition (Le et al., 2020; Larsen et al., 2012; Thompson et al., 2019), our approach is a major step forward in predicting the future temporal trajectory of microbiome functions from an initial species composition. The dynamic nature of our approach enables the design of optimal initial community compositions or interventions to steer a community to a desired future state. The flexibility of our approach to various time resolutions could be especially useful in scenarios where a microbiome may display undesired transients on the path from an initial state to a desired final state. For instance, in treatment of gut microbiome dysbiosis, it is important to ensure that any transient states of the microbiome are not harmful to the host (e.g. pathogen blooms or overproduction of toxic metabolites) as the system approaches a desired healthy state (Xiao et al., 2020). However, because predictions with increased time resolution require more data for model training, the ability of our approach to predict system behaviors based on initial and final observations is useful for scenarios where transient states may be less important, such as in bioprocesses where the concentration of products at the time of harvest is the primary design objective (Yenkie et al., 2016). Finally, the computational efficiency and accuracy of the LSTM model could be exploited in the future for autonomous design and optimization of multifunctional communities via computer-controlled design-test-learn cycles (King et al., 2009).

Materials and methods

| Reagent type (species) or resource | Designation | Source or reference | Identifiers | Additional information |
|---|---|---|---|---|
| Strain, strain background (Prevotella copri CB7) | PC | DSM 18205 | | |
| Strain, strain background (Parabacteroides johnsonii M-165) | PJ | DSM 18315 | | |
| Strain, strain background (Bacteroides vulgatus NCTC 11154) | BV | ATCC 8482 | | |
| Strain, strain background (Bacteroides fragilis EN-2) | BF | DSM 2151 | | |
| Strain, strain background (Bacteroides ovatus NCTC 11153) | BO | ATCC 8483 | | |
| Strain, strain background (Bacteroides thetaiotaomicron VPI 5482) | BT | ATCC 29148 | | |
| Strain, strain background (Bacteroides caccae VPI 3452 A) | BC | ATCC 43185 | | |
| Strain, strain background (Bacteroides cellulosilyticus CRE21) | BY | DSMZ 14838 | | |
| Strain, strain background (Bacteroides uniformis VPI 0061) | BU | DSM 6597 | | |
| Strain, strain background (Desulfovibrio piger VPI C3-23) | DP | ATCC 29098 | | |
| Strain, strain background (Bifidobacterium longum subsp. infantis S12) | BL | DSM 20088 | | |
| Strain, strain background (Bifidobacterium adolescentis E194a (Variant a)) | BA | ATCC 15703 | | |
| Strain, strain background (Bifidobacterium pseudocatenulatum B1279) | BP | DSM 20438 | | |
| Strain, strain background (Collinsella aerofaciens VPI 1003) | CA | DSM 3979 | | |
| Strain, strain background (Eggerthella lenta 1899 B) | EL | DSM 2243 | | |
| Strain, strain background (Faecalibacterium prausnitzii A2-165) | FP | DSM 17677 | | |
| Strain, strain background (Clostridium hiranonis T0-931) | CH | DSM 13275 | | |
| Strain, strain background (Anaerostipes caccae L1-92) | AC | DSM 14662 | | |
| Strain, strain background (Blautia hydrogenotrophica S5a33) | BH | DSM 10507 | | |
| Strain, strain background (Clostridium asparagiforme N6) | CG | DSM 15981 | | |
| Strain, strain background (Eubacterium rectale VPI 0990) | ER | ATCC 33656 | | |
| Strain, strain background (Roseburia intestinalis L1-82) | RI | DSM 14610 | | |
| Strain, strain background (Coprococcus comes VPI CI-38) | CC | ATCC 27758 | | |
| Strain, strain background (Dorea longicatena 111-35) | DL | DSMZ 13814 | | |
| Strain, strain background (Dorea formicigenerans VPI C8-13) | DF | DSM 3992 | | |
| Sequence-based reagent | Forward Primer Index: ATCACG | IDT | AATGATACGGCGACCACCGAGATCTACAC ATCACG ACACTCTTTCCCTACACGACGCTCTTCCGATCT ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: CGATGT | IDT | AATGATACGGCGACCACCGAGATCTACAC CGATGT ACACTCTTTCCCTACACGACGCTCTTCCGATCT T ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: TTAGGC | IDT | AATGATACGGCGACCACCGAGATCTACAC TTAGGC ACACTCTTTCCCTACACGACGCTCTTCCGATCT GT ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: TGACCA | IDT | AATGATACGGCGACCACCGAGATCTACAC TGACCA ACACTCTTTCCCTACACGACGCTCTTCCGATCT CGA ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: ACAGTG | IDT | AATGATACGGCGACCACCGAGATCTACAC ACAGTG ACACTCTTTCCCTACACGACGCTCTTCCGATCT ATGA ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: GCCAAT | IDT | AATGATACGGCGACCACCGAGATCTACAC GCCAAT ACACTCTTTCCCTACACGACGCTCTTCCGATCT TGCGA ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: CAGATC | IDT | AATGATACGGCGACCACCGAGATCTACAC CAGATC ACACTCTTTCCCTACACGACGCTCTTCCGATCT GAGTGG ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: ACTTGA | IDT | AATGATACGGCGACCACCGAGATCTACAC ACTTGA ACACTCTTTCCCTACACGACGCTCTTCCGATCT CCTGGAG ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: GATCAG | IDT | AATGATACGGCGACCACCGAGATCTACAC GATCAG ACACTCTTTCCCTACACGACGCTCTTCCGATCT ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: TAGCTT | IDT | AATGATACGGCGACCACCGAGATCTACAC TAGCTT ACACTCTTTCCCTACACGACGCTCTTCCGATCT T ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: GGCTAC | IDT | AATGATACGGCGACCACCGAGATCTACAC GGCTAC ACACTCTTTCCCTACACGACGCTCTTCCGATCT GT ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: CTTGTA | IDT | AATGATACGGCGACCACCGAGATCTACAC CTTGTA ACACTCTTTCCCTACACGACGCTCTTCCGATCT CGA ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: AGTCAA | IDT | AATGATACGGCGACCACCGAGATCTACAC AGTCAA ACACTCTTTCCCTACACGACGCTCTTCCGATCT ATGA ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: AGTTCC | IDT | AATGATACGGCGACCACCGAGATCTACAC AGTTCC ACACTCTTTCCCTACACGACGCTCTTCCGATCT TGCGA ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: ATGTCA | IDT | AATGATACGGCGACCACCGAGATCTACAC ATGTCA ACACTCTTTCCCTACACGACGCTCTTCCGATCT GAGTGG ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: CCGTCC | IDT | AATGATACGGCGACCACCGAGATCTACAC CCGTCC ACACTCTTTCCCTACACGACGCTCTTCCGATCT CCTGGAG ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: GTAGAG | IDT | AATGATACGGCGACCACCGAGATCTACAC GTAGAG ACACTCTTTCCCTACACGACGCTCTTCCGATCT ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: GTCCGC | IDT | AATGATACGGCGACCACCGAGATCTACAC GTCCGC ACACTCTTTCCCTACACGACGCTCTTCCGATCT T ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: GTGAAA | IDT | AATGATACGGCGACCACCGAGATCTACAC GTGAAA ACACTCTTTCCCTACACGACGCTCTTCCGATCT GT ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: GTGGCC | IDT | AATGATACGGCGACCACCGAGATCTACAC GTGGCC ACACTCTTTCCCTACACGACGCTCTTCCGATCT CGA ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: GTTTCG | IDT | AATGATACGGCGACCACCGAGATCTACAC GTTTCG ACACTCTTTCCCTACACGACGCTCTTCCGATCT ATGA ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: CGTACG | IDT | AATGATACGGCGACCACCGAGATCTACAC CGTACG ACACTCTTTCCCTACACGACGCTCTTCCGATCT TGCGA ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: GAGTGG | IDT | AATGATACGGCGACCACCGAGATCTACAC GAGTGG ACACTCTTTCCCTACACGACGCTCTTCCGATCT GAGTGG ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: GGTAGC | IDT | AATGATACGGCGACCACCGAGATCTACAC GGTAGC ACACTCTTTCCCTACACGACGCTCTTCCGATCT CCTGGAG ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: ACTGAT | IDT | AATGATACGGCGACCACCGAGATCTACAC ACTGAT ACACTCTTTCCCTACACGACGCTCTTCCGATCT ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: ATGAGC | IDT | AATGATACGGCGACCACCGAGATCTACAC ATGAGC ACACTCTTTCCCTACACGACGCTCTTCCGATCT T ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: ATTCCT | IDT | AATGATACGGCGACCACCGAGATCTACAC ATTCCT ACACTCTTTCCCTACACGACGCTCTTCCGATCT GT ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: CAAAAG | IDT | AATGATACGGCGACCACCGAGATCTACAC CAAAAG ACACTCTTTCCCTACACGACGCTCTTCCGATCT CGA ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: CAACTA | IDT | AATGATACGGCGACCACCGAGATCTACAC CAACTA ACACTCTTTCCCTACACGACGCTCTTCCGATCT ATGA ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: CACCGG | IDT | AATGATACGGCGACCACCGAGATCTACAC CACCGG ACACTCTTTCCCTACACGACGCTCTTCCGATCT TGCGA ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: CACGAT | IDT | AATGATACGGCGACCACCGAGATCTACAC CACGAT ACACTCTTTCCCTACACGACGCTCTTCCGATCT GAGTGG ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: CACTCA | IDT | AATGATACGGCGACCACCGAGATCTACAC CACTCA ACACTCTTTCCCTACACGACGCTCTTCCGATCT CCTGGAG ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: CAGGCG | IDT | AATGATACGGCGACCACCGAGATCTACAC CAGGCG ACACTCTTTCCCTACACGACGCTCTTCCGATCT ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: CATGGC | IDT | AATGATACGGCGACCACCGAGATCTACAC CATGGC ACACTCTTTCCCTACACGACGCTCTTCCGATCT T ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: CATTTT | IDT | AATGATACGGCGACCACCGAGATCTACAC CATTTT ACACTCTTTCCCTACACGACGCTCTTCCGATCT GT ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: CCAACA | IDT | AATGATACGGCGACCACCGAGATCTACAC CCAACA ACACTCTTTCCCTACACGACGCTCTTCCGATCT CGA ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: CGGAAT | IDT | AATGATACGGCGACCACCGAGATCTACAC CGGAAT ACACTCTTTCCCTACACGACGCTCTTCCGATCT ATGA ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: CTAGCT | IDT | AATGATACGGCGACCACCGAGATCTACAC CTAGCT ACACTCTTTCCCTACACGACGCTCTTCCGATCT TGCGA ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: CTATAC | IDT | AATGATACGGCGACCACCGAGATCTACAC CTATAC ACACTCTTTCCCTACACGACGCTCTTCCGATCT GAGTGG ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: CTCAGA | IDT | AATGATACGGCGACCACCGAGATCTACAC CTCAGA ACACTCTTTCCCTACACGACGCTCTTCCGATCT CCTGGAG ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: GACGAC | IDT | AATGATACGGCGACCACCGAGATCTACAC GACGAC ACACTCTTTCCCTACACGACGCTCTTCCGATCT ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: TAATCG | IDT | AATGATACGGCGACCACCGAGATCTACAC TAATCG ACACTCTTTCCCTACACGACGCTCTTCCGATCT T ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: TACAGC | IDT | AATGATACGGCGACCACCGAGATCTACAC TACAGC ACACTCTTTCCCTACACGACGCTCTTCCGATCT GT ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: TATAAT | IDT | AATGATACGGCGACCACCGAGATCTACAC TATAAT ACACTCTTTCCCTACACGACGCTCTTCCGATCT CGA ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: TCATTC | IDT | AATGATACGGCGACCACCGAGATCTACAC TCATTC ACACTCTTTCCCTACACGACGCTCTTCCGATCT ATGA ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: TCCCGA | IDT | AATGATACGGCGACCACCGAGATCTACAC TCCCGA ACACTCTTTCCCTACACGACGCTCTTCCGATCT TGCGA ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: TCGAAG | IDT | AATGATACGGCGACCACCGAGATCTACAC TCGAAG ACACTCTTTCCCTACACGACGCTCTTCCGATCT GAGTGG ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Forward Primer Index: TCGGCA | IDT | AATGATACGGCGACCACCGAGATCTACAC TCGGCA ACACTCTTTCCCTACACGACGCTCTTCCGATCT CCTGGAG ACTCCTACGGGAGGCAGCAGT | |
| Sequence-based reagent | Reverse Primer Index: ATCACGAG | IDT | CAAGCAGAAGACGGCATACGAGAT ATCACGAG GTGACTGGAGTTCAGACGTGTGCTCTTCCGATCT ggactaccagggtatctaatcctgt | |
| Sequence-based reagent | Reverse Primer Index: CGATGTTC | IDT | CAAGCAGAAGACGGCATACGAGAT CGATGTTC GTGACTGGAGTTCAGACGTGTGCTCTTCCGATCT A ggactaccagggtatctaatcctgt | |
| Sequence-based reagent | Reverse Primer Index: TTAGGCGA | IDT | CAAGCAGAAGACGGCATACGAGAT TTAGGCGA GTGACTGGAGTTCAGACGTGTGCTCTTCCGATCT TC ggactaccagggtatctaatcctgt | |
| Sequence-based reagent | Reverse Primer Index: TGACCAAT | IDT | CAAGCAGAAGACGGCATACGAGAT TGACCAAT GTGACTGGAGTTCAGACGTGTGCTCTTCCGATCT CTA ggactaccagggtatctaatcctgt | |
| Sequence-based reagent | Reverse Primer Index: ACAGTGCT | IDT | CAAGCAGAAGACGGCATACGAGAT ACAGTGCT GTGACTGGAGTTCAGACGTGTGCTCTTCCGATCT GATA ggactaccagggtatctaatcctgt | |
| Sequence-based reagent | Reverse Primer Index: GCCAATGT | IDT | CAAGCAGAAGACGGCATACGAGAT GCCAATGT GTGACTGGAGTTCAGACGTGTGCTCTTCCGATCT ACTCA ggactaccagggtatctaatcctgt | |
| Sequence-based reagent | Reverse Primer Index: CAGATCGA | IDT | CAAGCAGAAGACGGCATACGAGAT CAGATCGA GTGACTGGAGTTCAGACGTGTGCTCTTCCGATCT TTCTCT ggactaccagggtatctaatcctgt | |
| Sequence-based reagent | Reverse Primer Index: ACTTGAAA | IDT | CAAGCAGAAGACGGCATACGAGAT ACTTGAAA GTGACTGGAGTTCAGACGTGTGCTCTTCCGATCT CACTTCT ggactaccagggtatctaatcctgt | |
| Sequence-based reagent | Reverse Primer Index: GATCAGTG | IDT | CAAGCAGAAGACGGCATACGAGAT GATCAGTG GTGACTGGAGTTCAGACGTGTGCTCTTCCGATCT ggactaccagggtatctaatcctgt | |
| Sequence-based reagent | Reverse Primer Index: TCTACCTC | IDT | CAAGCAGAAGACGGCATACGAGAT TCTACCTC GTGACTGGAGTTCAGACGTGTGCTCTTCCGATCT A ggactaccagggtatctaatcctgt | |
| Sequence-based reagent | Reverse Primer Index: CTTGTATG | IDT | CAAGCAGAAGACGGCATACGAGAT CTTGTATG GTGACTGGAGTTCAGACGTGTGCTCTTCCGATCT TC ggactaccagggtatctaatcctgt | |
| Sequence-based reagent | Reverse Primer Index: TAGCTTCC | IDT | CAAGCAGAAGACGGCATACGAGAT TAGCTTCC GTGACTGGAGTTCAGACGTGTGCTCTTCCGATCT CTA ggactaccagggtatctaatcctgt | |
| Sequence-based reagent | Reverse Primer Index: GGCTACCA | IDT | CAAGCAGAAGACGGCATACGAGAT GGCTACCA GTGACTGGAGTTCAGACGTGTGCTCTTCCGATCT GATA ggactaccagggtatctaatcctgt | |
| Sequence-based reagent | Reverse Primer Index: ATGCACTT | IDT | CAAGCAGAAGACGGCATACGAGAT ATGCACTT GTGACTGGAGTTCAGACGTGTGCTCTTCCGATCT ACTCA ggactaccagggtatctaatcctgt | |
| Sequence-based reagent | Reverse Primer Index: GACGGAAC | IDT | CAAGCAGAAGACGGCATACGAGAT GACGGAAC GTGACTGGAGTTCAGACGTGTGCTCTTCCGATCT TTCTCT ggactaccagggtatctaatcctgt | |
| Sequence-based reagent | Reverse Primer Index: AGCCTTGG | IDT | CAAGCAGAAGACGGCATACGAGAT AGCCTTGG GTGACTGGAGTTCAGACGTGTGCTCTTCCGATCT CACTTCT ggactaccagggtatctaatcctgt | |
| Sequence-based reagent | Reverse Primer Index: CCGTAGAG | IDT | CAAGCAGAAGACGGCATACGAGAT CCGTAGAG GTGACTGGAGTTCAGACGTGTGCTCTTCCGATCT ggactaccagggtatctaatcctgt | |
| Sequence-based reagent | Reverse Primer Index: GTGAGACT | IDT | CAAGCAGAAGACGGCATACGAGAT GTGAGACT GTGACTGGAGTTCAGACGTGTGCTCTTCCGATCT A ggactaccagggtatctaatcctgt | |
| Sequence-based reagent | Reverse Primer Index: AATGCTCA | IDT | CAAGCAGAAGACGGCATACGAGAT AATGCTCA GTGACTGGAGTTCAGACGTGTGCTCTTCCGATCT TC ggactaccagggtatctaatcctgt | |
| Sequence-based reagent | Reverse Primer Index: GCATCGTA | IDT | CAAGCAGAAGACGGCATACGAGAT GCATCGTA GTGACTGGAGTTCAGACGTGTGCTCTTCCGATCT CTA ggactaccagggtatctaatcctgt | |
| Sequence-based reagent | Reverse Primer Index: CGAACAGC | IDT | CAAGCAGAAGACGGCATACGAGAT CGAACAGC GTGACTGGAGTTCAGACGTGTGCTCTTCCGATCT GATA ggactaccagggtatctaatcctgt | |
| Sequence-based reagent | Reverse Primer Index: TCGGAAGG | IDT | CAAGCAGAAGACGGCATACGAGAT TCGGAAGG GTGACTGGAGTTCAGACGTGTGCTCTTCCGATCT ACTCA ggactaccagggtatctaatcctgt | |
| Sequence-based reagent | Reverse Primer Index: TTCTGTCG | IDT | CAAGCAGAAGACGGCATACGAGAT TTCTGTCG GTGACTGGAGTTCAGACGTGTGCTCTTCCGATCT TTCTCT ggactaccagggtatctaatcctgt | |
| Sequence-based reagent | Reverse Primer Index: GTACTCAC | IDT | CAAGCAGAAGACGGCATACGAGAT GTACTCAC GTGACTGGAGTTCAGACGTGTGCTCTTCCGATCT CACTTCT ggactaccagggtatctaatcctgt | |