Visualization of currents in neural models with similar behavior and different conductance densities

Abstract

Conductance-based models of neural activity produce large amounts of data that can be hard to visualize and interpret. We introduce visualization methods to display the dynamics of the ionic currents and to display the models’ response to perturbations. To visualize the currents’ dynamics, we compute the percent contribution of each current and display them over time using stacked-area plots. The waveform of the membrane potential and the contribution of each current change as the models are perturbed. To represent these changes over a range of the perturbation control parameter, we compute and display the distributions of these waveforms. We illustrate these procedures in six examples of bursting model neurons with similar activity but that differ as much as threefold in their conductance densities. These visualization methods provide heuristic insight into why individual neurons or networks with similar behavior can respond widely differently to perturbations.

https://doi.org/10.7554/eLife.42722.001eLife digest

The nervous system contains networks of neurons that generate electrical signals to communicate with each other and the rest of the body. Such electrical signals are due to the flow of ions into or out of the neurons via proteins known as ion channels. Neurons have many different kinds of ion channels that only allow specific ions to pass. Therefore, for a neuron to produce an electrical signal, the activities of several different ion channels need to be coordinated so that they all open and close at certain times.

Researchers have previously used data collected from various experiments to develop detailed models of electrical signals in neurons. These models incorporate information about how and when the ion channels may open and close, and can produce numerical simulations of the different ionic currents. However, it can be difficult to display the currents and observe how they change when several different ion channels are involved.

Alonso and Marder used simple mathematical concepts to develop new methods to display ionic currents in computational models of neurons. These tools use color to capture changes in ionic currents and provide insights into how the opening and closing of ion channels shape electrical signals.

The methods developed by Alonso and Marder could be adapted to display the behavior of biochemical reactions or other topics in biology and may, therefore, be useful to analyze data generated by computational models of many different types of cells. Additionally, these methods may potentially be used as educational tools to illustrate the coordinated opening and closing of ion channels in neurons and other fundamental principles of neuroscience that are otherwise hard to demonstrate.

https://doi.org/10.7554/eLife.42722.002Introduction

Experimental and computational studies have clearly demonstrated that neurons and circuits with similar behaviors can, nonetheless, have very different values of the conductances that control intrinsic excitability and synaptic strength. Using a model of the crustacean stomatogastric ganglion (STG), Prinz et al. (2004) showed that similar network activity can arise from widely different sets of membrane and synaptic conductances. Recent experimental measurements have shown two- to six-fold variability in individual components in the same identified neurons (Schulz et al., 2006; Schulz et al., 2007; Roffman et al., 2012; Swensen and Bean, 2005). The use of RNA sequencing and other molecular measurements have shown significant cell-to-cell variability in the expression of ion channels (Temporal et al., 2012; Temporal et al., 2014; Tobin et al., 2009). Together these results suggest that similar activities arise from different cellular and network mechanisms. Here, we use conductance-based models to explore how different these mechanisms are and how they respond to perturbation.

Because of the intrinsic variability, canonical models that capture the mean behavior of a set of observations are not sufficient to address these issues (Golowasch et al., 2002; Balachandar and Prescott, 2018). To incorporate intrinsic biophysical variability Prinz et al. (2004) introduced an ensemble modeling approach. They constructed a database with millions of model parameter combinations, analyzed their solutions to assess network function, and screened for conductance values for which the activity resembled the data (Calabrese, 2018). An alternative was used by Achard and De Schutter (2006). They combined evolutionary strategies with a fitness function based on a phase-plane analysis of the models’ solutions to find parameters that reproduce complex features in electrophysiological recordings of neuronal activity, and applied their procedure to obtain 20 very different computational models of cerebellar Purkinje cells. Here, we adopt a similar approach and apply evolutionary techniques to optimize a different family of landscape functions that rely on thresholds or Poincaré sections to characterize the models’ solutions.

In some respects, biological systems are a black-box because one cannot read out the values over time of all their underlying components. In contrast, computational models allow us to inspect how all the components interact and this can be used to develop intuitions and predictions about how these systems will respond to perturbations. Despite this, much modeling work focuses on the variables of the models that are routinely measured in experiments, such as the membrane potential. While in the models we have access to all state variables, this information can be hard to represent when many conductances are at play. Similarly, the effect of perturbations – such as the effect of partially or completely blocking or removing a particular channel – can be complex and also hard to display in a compact fashion. Here, we address these difficulties and illustrate two novel visualization methods. We represent the currents in a model neuron using stacked area plots: at each time step, we display the shared contribution of each current to the total current through the membrane. This representation is useful to visualize which currents are most important at each instant and allows the development of insight into how these currents behave when the system is perturbed. Perturbation typically results in drastic changes of the waveform of the activity and these changes depend on the kind of perturbation under consideration. Additionally, we developed a novel representation that relies on computing the probability of , which allows a visualization of these changes. We illustrate the utility of these procedures using models of single neuron bursters or oscillators.

Results

Finding parameters: landscape optimization

The numerical exploration of conductance-based models of neurons is a commonplace approach to address fundamental questions in neuroscience (Dayan and Abbott, 2001). These models can display much of the phenomenology exhibited by intracellular recordings of single neurons and have the major advantage that many of their parameters correspond to measurable quantities (Herz et al., 2006). However, finding parameters for these models so that their solutions resemble experimental observations is a difficult task. This difficulty arises because the models are nonlinear, they have many state variables and they contain a large number of parameters (Bhalla and Bower, 1993). These models are complex, and we are not aware of a general procedure that would allow the prediction of how an arbitrary perturbation in any of the parameters will affect their solutions. The problem of finding sets of parameters so that a nonlinear system will display a target behavior is ubiquitous in the natural sciences. A general approach to this problem consists of optimizing a score function that compares features of the models’ solutions to a set of target features. Consequently, landscape-based optimization techniques for finding parameters in compartmental models of neurons have been proposed before (Achard and De Schutter, 2006; Druckmann et al., 2007; Ben-Shalom et al., 2012). Here, we employ these ideas to develop a family of score functions that are useful to find parameters so that their activities reach a desired target.

In this work, we started with a well-studied model of neural activity described previously (Liu et al., 1998; Goldman et al., 2001; Prinz et al., 2004; O'Leary et al., 2014). The neuron is modeled according to the Hodgkin-Huxley formalism using a single compartment with eight currents. Following Liu et al. (1998), the neuron has a sodium current, ; transient and slow calcium currents, and ; a transient potassium current, ; a calcium-dependent potassium current, ; a delayed rectifier potassium current, ; a hyperpolarization-activated inward current, ; and a leak current .

We explored the space of solutions of the model using landscape optimization. The procedure consists of three steps. First, we generate voltage traces by integration of Equation 5 (Materials and methods). We then score the traces using an objective or landscape function that defines a target activity. Finally, we attempt to find minima of the objective function. The procedures used to build objective functions whose minima correspond to sets of conductances that yield the target activities are shown in Figure 1. Voltage traces were generated by integration of Equation 5 and were then scored according to a set of simple measures. The procedure is efficient in part because we chose measures that require little computing power and yet are sufficient to build successful target functions. For example, we avoid the use of Spike Density Functions (SDF) and Fourier transforms when estimating burst frequencies and burst durations. In this section, we describe target functions whose minima correspond to bursting and tonic activity in single compartment models. This approach can also be applied to the case of small circuits of neurons (Prinz et al., 2004).

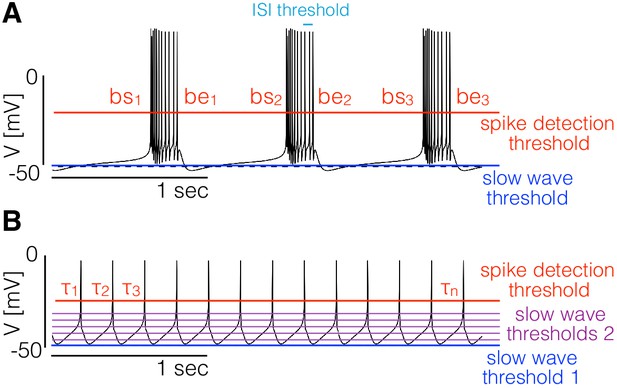

Landscape optimization can be used to find models with specific sets of features.

(A) Example model bursting neuron. The activity is described by the burst frequency and the burst duration in units of the period (duty cycle). The spikes detection threshold (red line) is used to determine the spike times. The ISI threshold (cyan) is used to determine which spikes are bursts starts (bs) and bursts ends (be). The slow wave threshold (blue line) is used to ensure that slow wave activity is separated from spiking activity. (B) Example model spiking neuron. We use thresholds as before to measure the frequency and the duty cycle of the cell. The additional slow wave thresholds (purple) are used to control the waveform during spike repolarization.

We begin with the case of bursters (Figure 1A). We targeted this type of activity by measuring the bursting frequency, the duty cycle, and the number of crossings at a threshold value to ensure that spiking activity is well separated from slow wave activity. To measure the burst frequency and duty cycle of a solution, we first compute the time stamps at which the cell spikes. Given the sequence of values we determine that a spike occurs every time that crosses the spike detection threshold (red in Figure 1). We build a sequence of spike times by going through the sequence of voltages and keeping the values of for which (we consider upward crossings). Each element of the sequence contains the time step at which the i-th spike is detected. Bursts are determined from the sequence of spike times ; if two spikes happen within a temporal interval shorter than they are part of a burst. Using this criterion we can find which of the spike times in correspond to the start and end of bursts. The starts (bs) and ends (be) of bursts are used to estimate the duty cycle and burst frequency. We loop over the sequence of spike times and determine that a burst starts at if . After a burst starts, we define the end of the burst at if . When a burst ends we can measure the burst duration as and since the next burst starts (by definition) at we also can measure the ‘period’ (if periodic) of the oscillation as . Every time a burst starts and ends we get an instance of the burst frequency and the duty cycle . We build distributions of these quantities by looping over the sequence and define the burst frequency and duty cycle as the mean values and . Finally, we count downward crossings in the sequence with two slow wave thresholds (with ) and the total number of bursts in .

For any given set of conductances, we simulated the model for s and dropped the first s to mitigate the effects of transient activity. We then computed the burst frequency , the duty cycle , the number of crossings with the slow wave thresholds and the number of bursts . We discard unstable solutions; a solution is discarded if or . If a solution is not discarded, we can use the following quantities to measure how close it is to the target behavior,

Here, measures the mismatch of the bursting frequency of the model cell with a target frequency and accounts for the duty cycle. measures the difference between the number of bursts and the number of crossings with the slow wave thresholds . Because we want a clear separation between slow wave activity and spiking activity, we ask that . Note that if during a burst goes below this solution would be penalized (factor accounts for using two slow wave thresholds). Let denote a set of parameters, we can then define an objective function

where the weights determine the relative importance of the different sources of penalties. In this work we used , , , and the penalties were calculated using seconds with msecs. The target behavior for bursters was defined by (duty cycle ) () and bursting frequency .

We can use similar procedures to target tonic spiking activity. Note that the procedure we described previously to determine bursts from the sequence of spike times is also useful in this case. If a given spike satisfies the definition of burst start and it also satisfies the definition of burst end then it is a single spike and the burst duration is zero. Therefore, we compute the bursts and duty cycles as before and ask that the the target duty cycle is zero.

There are multiple ways to produce tonic spiking in this model and some solutions display very different slow wave activity. To further restrict the models, we placed a middle threshold at and detected downward crossings at this value. We defined as the lag between the upward crossings at the spiking threshold () and downward crossings at is useful because it takes different values for tonic spikers than it does for single-spike bursters even though their spiking patterns can be identical. Finally, we found that the model attempts to minimize at the expense of hyperpolarizing the membrane beyond and introducing a wiggle that can be different in different solutions. To penalize this we included additional thresholds between and , counted the number of downward crossings at these values , and asked that these numbers are equal to the number of spikes . With these definitions, we define the partial errors as before,

The total error as a function of the conductances reads as follows,

The values , , , and , produce solutions that are almost identical to the one displayed in Figure 1B.

In all cases, evaluation of the objective functions requires that the models are simulated for a number of seconds and this is the part of the procedure that requires most computing power. Longer simulations will provide better estimations for the burst frequency and duty cycle of the cells, but will linearly increase the time it takes to evaluate the objective function. If the simulations are shorter, evaluations of the objective function are faster but the minimization may be more difficult due to transient behaviors and its minima may not correspond to stable solutions. In this work, we minimized the objective function using a standard genetic algorithm (Holland, 1992; Goldberg and Holland, 1988). The choice of the optimization routine and the choice of the numerical scheme for the simulations are independent of the functions. See Materials and methods for details on the how we performed this optimization. The same functions can be utilized to estimate parameters in models with different channel types.

Visualizing the dynamics of ionic currents: currentscapes

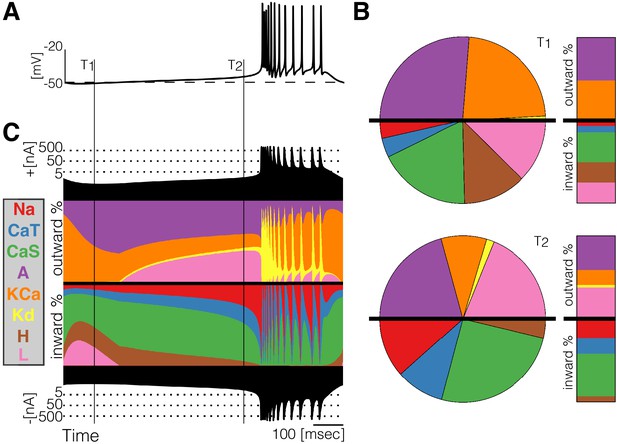

Most modeling work focuses on the variables of the models that are routinely measured in experiments such as the membrane potential as is shown in Figure 2A for a bursting neuron. While in the models we have access to all state variables, this information can be hard to represent when several current types are at play. One difficulty is that some currents like and vary over several orders of magnitude, while other currents like the and span smaller ranges. Additionally, the relative contribution of each current to the total flux through the membrane varies over time. Here, we introduce a novel representation that is simple and permits displaying the dynamics of the currents in a cohesive fashion.

Currentscape of a model bursting neuron.

A simple visualization of the dynamics of ionic currents in conductance-based model neurons. (A) Membrane potential of a periodic burster. (B) Percent contribution of each current type to the total inward and outward currents displayed as pie charts and bars at times and (C) Percent contribution of each current to the total outward and inward currents at each time stamp. The black filled curves on the top and bottom indicate total inward outward currents respectively on a logarithmic scale. The color curves show the time evolution of each current as a percentage of the total current at that time. For example, at the total outward current is and the orange shows a large contribution of . At the total outward current has increased to and the current is contributing less to the total.

At any given time stamp, we can compute the total inward and outward currents. We can then express the values of each current as a percentage of this quantity. The normalized values of the currents at any time can be displayed as a pie chart representing the share of each current type (Figure 2B). Because we want to observe how these percentages change in time, we display the shares in a bar instead of a disk. The currentscapes are constructed by applying this procedure to all time stamps and stacking the bars. These types of plots are known as stacked area plots and their application to this problem is novel. Figure 2C shows the currentscape of a periodically bursting model neuron over one cycle. The shares of each current type to the total inward and outward currents are displayed in colors, and the total inward and outward currents are represented by the filled black curves in logarithmic scale in the top and bottom.

Visualizing changes in the waveforms as a parameter is changed

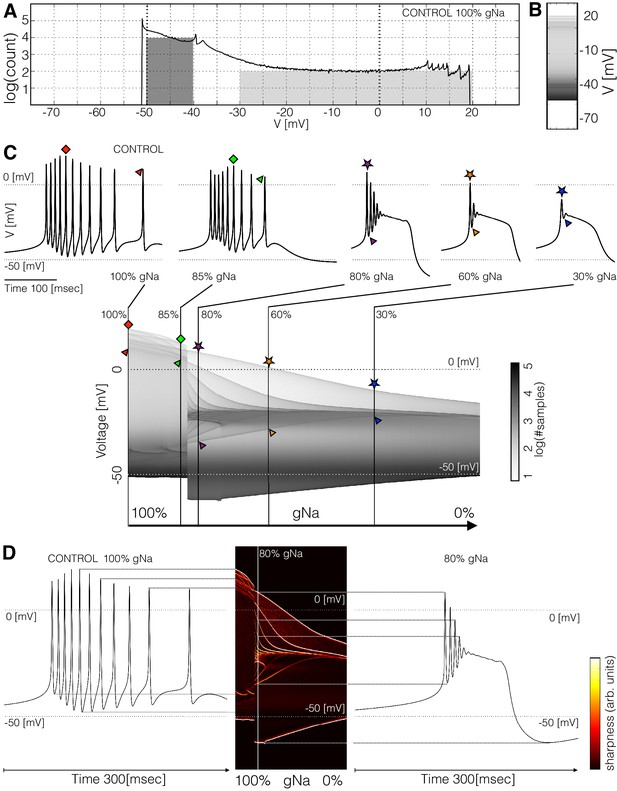

To visualize changes in the activity as a conductance is gradually removed we computed the distribution of membrane potential values. This reduction contains information about the waveform of the membrane potential, while all temporal information such as frequency can no longer be recovered. The number of times that a given value of is sampled is proportional to the time the system spends at that value. Figure 3A shows the distribution of for a periodic burster with and sampled from s of simulation. The count is larger than for values between and , and smaller than for between and . The areas of the shaded regions are proportional to the probability that the system will be observed at the corresponding range (Figure 3B). Note that the area of the dark gray region is while the light gray is , so the probability that the cell is, at any given time, in a hyperpolarized state is more than times larger than the probability that the cell is spiking.

Membrane potential distributions.

(A) Distribution of membrane potential values. The total number of samples is . Y-axis scale is logarithmic. The area of the dark shaded region can be used to estimate of the probability that the activity is sampled between and , and the area of the light shaded region is proportional to the probability that is sampled between and . The area of the dark region is times larger than the light region. (B) The same distribution in (A) represented as a graded bar. (C) Distribution of as a function of and , and waveforms for several values.The symbols indicate features of the waveforms and their correspondence to the ridges of the distribution of . (D) Waveforms under two conditions and their correspondence to the ridges of the distribution of . The ridges were enhanced by computing the derivative of the distribution along the direction.

The distribution of features sharp peaks. In many cases, the peaks in these distributions correspond to features of the waveform, such as the amplitudes of the individual spikes, or the minimum membrane potential (see Figure 3—figure supplement 1). This happens because every time the membrane potential reaches a maxima or minima (in time) the derivative is close to zero. The system spends more time close to values of where the velocity is small than in regions where is large, as it occurs during the flanks of spikes. Therefore, when we sample a solution at a random instant, it is more likely that corresponds to the peak of a spike than to either flank of the spike, while the most likely outcome is that is in the hyperpolarized range (). In this particular burster, there are spikes in the burst but there are only peaks in the distribution (between and ); some spikes have similar amplitudes so they add to a larger peak in the distribution. The overall or total amplitude of the oscillation can be read from the distribution since the count of is zero outside a range ( to ). These distributions can also be represented by a graded bar as shown in Figure 3B. As conductances are gradually removed the waveform of the activity changes and so does the distribution of values.

Figure 3C shows how the distribution of changes as is decreased. The waveforms at a few values of are shown for reference. For each value in the range ( to with values) we computed the count and display in gray scales. In this example, the cell remains in a bursting regime up to and transitions abruptly into a single-spike bursting mode for further decrements (). The spikes produce thin ridges in the distribution that show how their individual amplitudes change. The colored symbols indicate the correspondence between features in the waveform and ridges in the distribution. In this example, the peak amplitudes of the spikes are similar for values of greater than . After the transition, the amplitudes of the spikes are very different; two spikes go beyond and the rest accumulate near . As the oscillations collapse onto a small band at and only one spike is left.

The distributions allow the visualization of the amplitudes of the individual spikes, the slow waves, and other features as the parameter is changed. To highlight ridges in the distributions, the center panel in Figure 3D shows the derivative in color. This operation is similar to performing a Sobel filtering (Sobel and Feldman, 1968) of the image in Figure 3C. The traces on each side of this panel correspond to the control (left) and conditions. Notice how the amplitudes of each spike, features of the slow wave, and overall amplitude correspond to features in the probability distribution. This representation permits displaying how the features of the waveform change for many values of the perturbation parameter .

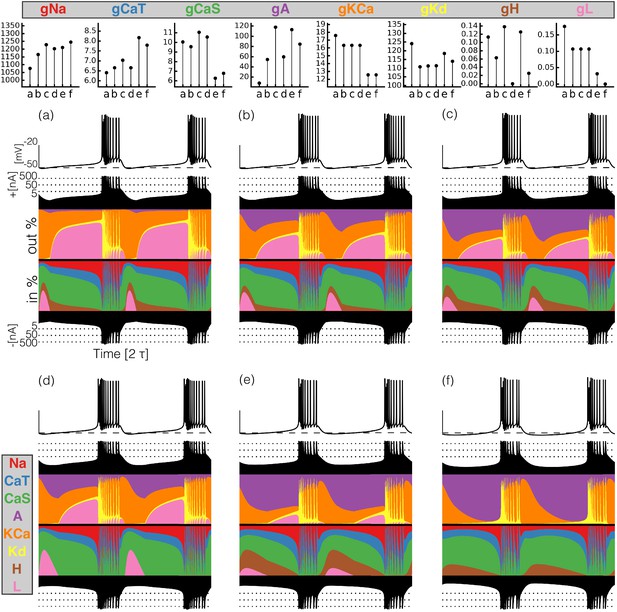

The maximal conductances do not fully predict the currentscapes

We explored the solutions of a classic conductance-based model of neural activity using landscape optimization and found many sets of parameters that produce similar bursting activity. Inspired by intracellular recording performed in the Pyloric Dilator () neurons in crabs and lobsters we targeted bursters with frequencies and duty cycles . We built bursting model neurons and visually inspected the dynamics of their currents using their currentscapes. Based on this, we selected six models that display similar membrane activity via different current compositions for further study. Because the models are nonlinear, the relationship between the dynamics of a given current type and the value of its maximal conductance is non-trivial. Figure 4 shows the values of the maximal conductances in the models (top) and their corresponding activity together with their currentscapes (bottom).

Currentscapes of model bursting neurons.

(top) Maximal conductances of all model bursters. (bottom) The panels show the membrane potential of the cell and the percent contribution of each current over two cycles.

It can be difficult to predict the currentscapes based on the values of the maximal conductances. In most cases, it appears that the larger the value of the maximal conductance, the larger the contribution of the corresponding current. However, this does not hold in all cases. For example, burster (f) shows the largest current contribution, but bursters (c) and (e) have larger values of . The maximal conductance of the current is low in model (f) but the contribution of this current to the total is similar to that in models (a) and (b). The values of are similar for bursters (e) and (f) but the contribution of this current is visibly different in each model.

Response to current injection

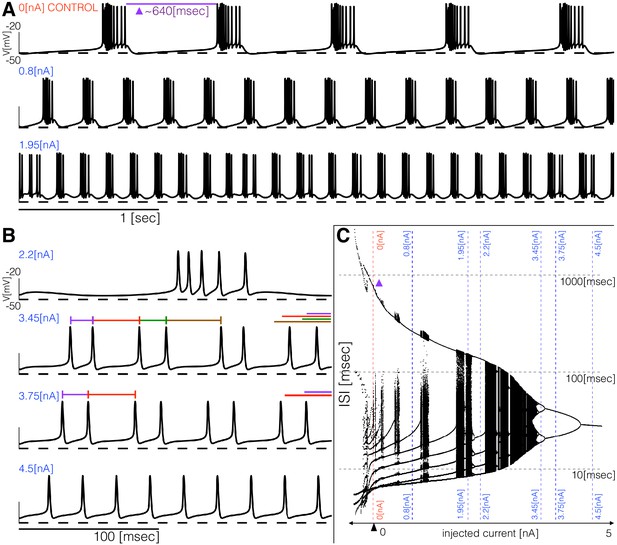

The models produce similar activity with different current dynamics. To further reveal differences in how these activities are generated, we subjected the models to simple perturbations. We begin describing the response to constant current injections in Figure 5. Figure 5A and Figure 5B show the membrane potential of model (a) for different values of injected current. In control, the activity corresponds to regular bursting and larger depolarizing currents result in a plethora of different regimes. The distributions of inter-spike intervals (ISI) provide a means to characterize these regimes (Figure 5C). When the cell is bursting regularly such as in control and in the condition, the interspike interval distributions consist of one large value that corresponds to the interburst interval ( in control) and several smaller values around which correspond to the ISI within a burst. There are values of current for which the activity appears irregular and correspondingly, the ISI values are more diverse. Figure 5B shows the response of the model to larger depolarizing currents. The activity undergoes a sequence of interesting transitions that result in tonic spiking. When the activity is periodic and there are ISI values. Larger currents result in ISI values and tonic spiking produces one ISI value. Figure 5C shows the ISI distributions (y-axis, logarithmic scale) for each value of injected current (x-axis).

Response to current injections and interspike-intervals (ISI) distributions of model (a).

(A) (top) Control traces (no current injected ), regular bursting (), irregular bursting . (B) (top) Fast regular bursting (), quadruplets (), doublets () and singlets () (tonic spiking). (C) ISI distributions over a range of injected current.

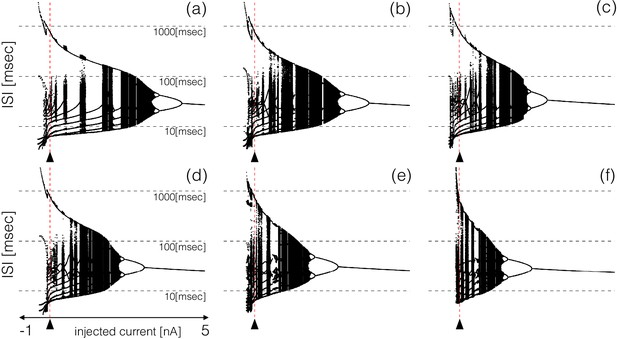

All these bursters transition into tonic spiking regimes for depolarizing currents larger than but they do so in different ways. To explore these transitions in detail, we computed the inter-spike interval (ISI) distributions over intervals of for different values of the injected current. Figure 6 shows the ISI distributions for the six models at equally spaced values of injected current over the shown range. The y-axis shows the values of all ISIs on a logarithmic scale and the x-axis corresponds to injected current. In the control, the ISI distribution consists of a few small values () that correspond to the ISIs of spikes within a burst, and a single larger value () that corresponds to the interval between the last spike of a burst and the first spike of the next burst. When the cell fires tonically the ISI distributions consist of a single value. The ISI distributions exhibit complicated dependences on the control parameter that result in beautiful patterns. For some current values, the cells produce small sets of ISI values indicating that the activity is periodic. However, this activity is quite different across regions. Interspersed with the regions of periodicity there are regions where the ISI distributions densely cover a band of values indicating non-periodic activity. Overall the patterns feature nested forking structures that are reminiscent of classical period doubling routes to chaos (Feigenbaum, 1978; Canavier et al., 1990).

ISI distributions of the six model bursting neurons over a range of injected current.

The panels show all ISI values of each model burster over a range on injected currents (vertical axis is logarithmic). All bursters transition into tonic spiking regimes for injected currents larger than and the details of the transitions are different across models.

Extracting insights from these visualization tools

Detailed conductance-based models show complex and rich behaviors in response to all kinds of perturbations. There is a vast amount of information that can be seen in these models and their visualizations in Figures 7 - 15. It is entirely impossible for us to point out even a fraction of what can be seen or learned from these figures. Nonetheless, we will illustrate a few examples of what can be seen using these methods, knowing that these details will be different for models that are constructed in the future and analyzed using these and similar methods.

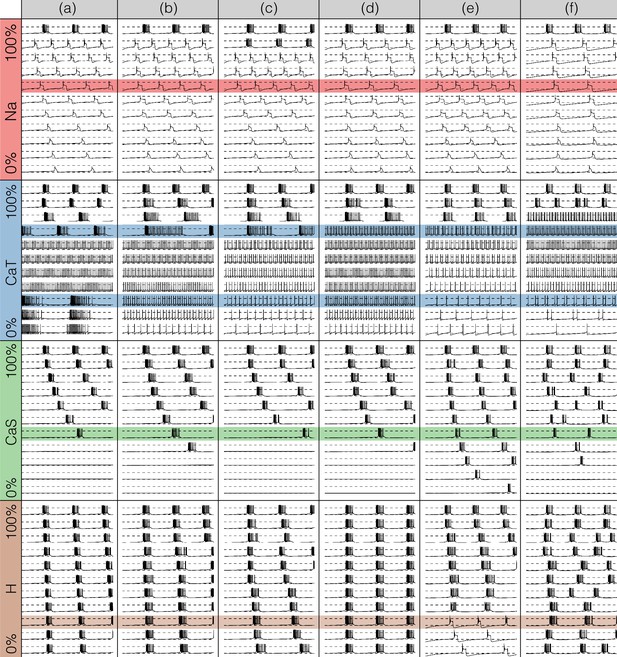

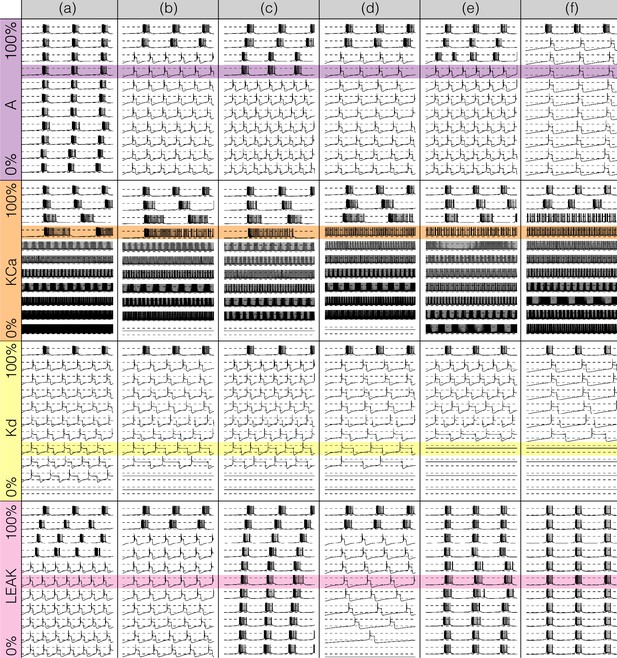

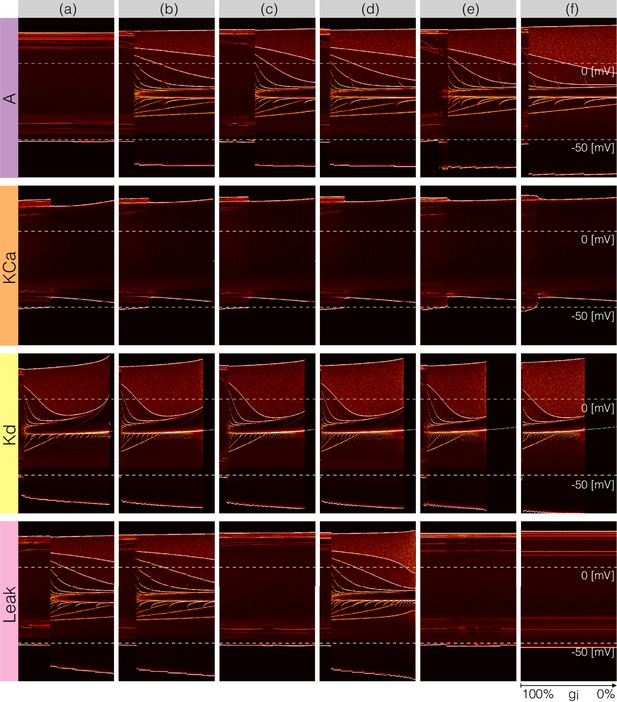

Perturbing the models with gradual decrements of the maximal conductances

Figures 7 and 8 show the effects of gradually decreasing each of the currents in these bursters from to for all six models. This type of analysis might be relevant to some kinds of pharmacological manipulations or studies of neuromodulators that decrease a given current. The figures show s of data for each condition. In all panels, the top traces correspond to the control condition () and the traces below show the activity that results from decreasing the maximal conductance. The dashed lines are placed for reference at and . Each panel shows the traces for values of the corresponding maximal conductance equally spaced between (control) and (completely removed). Each row of panels corresponds to a current type and the columns correspond to the different model bursters. Figure 7 displays the perturbations for the inward currents and Figure 8 shows the outward and leak currents.

Effects of decreasing maximal conductances: inward currents.

The figure shows the membrane potential of all model cells as the maximal conductance of each current is gradually decreased from to . Each panel shows traces with a duration of 3 s. Dashed lines are placed at and . The shading indicates values of maximal conductance for which the activity the models differs the most.

Effects of decreasing maximal conductances: outward currents.

The figure shows the membrane potential of all model cells as the maximal conductance of each current is gradually decreased from to . Each panel shows traces with a duration of 3 s. Dashed lines are placed at and . The shading indicates values of maximal conductance for which the activity the models differs the most.

Taken together Figures 7 and 8 illustrate that each model (a-f) changes its behavior differently in response to decreases in each current. Additionally, decreases in some currents have only relatively small effects but decreases in others have much more profound effects. Because the description of all that can be seen in these figures is beyond the scope of this paper, we chose to focus on the effects of decreasing the because it has rich and unexpected behaviors.

The effect of decreasing the conductance is quite diverse across models. The activities of the models at the intermediate values of shows visible differences. When models (a), (b) and (c) show bursting activity at different frequencies and with different duty cycles. Models (d), (e) and (f) become tonic spikers at this condition, but their frequencies are different. Note that in the case of model (e) the spiking activity is not regular and the ISIs take several different values. When most models spike tonically but now (e) is regular and (f) shows doublets. When is completely removed, most models transition into a tonic spiking regime with the exception of model (a), that displays a low frequency bursting regime with duty cycle .

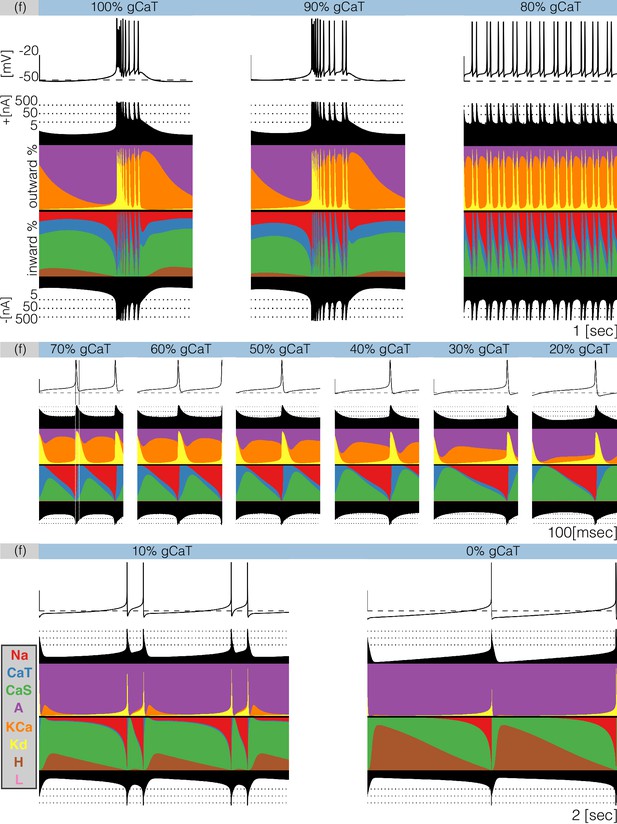

Gradually removing one current impacts the dynamics of all currents

Decreasing any conductance can trigger qualitative changes in the waveform of the membrane potential and in the contributions of each current to the activity. In Figure 9 we plot currentscapes for the effects of decreasing in model (f). This allows us to examine at higher resolution the changed contributions of currents that give rise to the interesting dynamics seen in Figure 7. Each panel in Figure 9 corresponds to a different decrement value and shows the membrane potential on top, and the currentscapes at the bottom. The top panels show second of data and correspond to the (control), and conditions. The center panels show s of data for decrements ranging from to and the bottom panels show s for the and conditions. As is gradually removed the activity transitions from a bursting regime to a tonic spiking regime.

Decreasing in model (f).

The figure shows the traces and the currentscapes of model (f) as is gradually decreased. Top panels show second of data, center panels show seconds and the bottom panels show seconds (see full traces in Figure 8).

When the neuron produces bursts but these become irregular and their durations change. Decreasing the conductance to results in completely different activity. The spiking pattern appears to be periodic but there are at least three different ISI values. It is hard to see changes in the contribution across these conditions, but changes in other currents are more discernible. The contribution of the current that is large in the control and conditions, is much smaller in the condition. Additionally, the and currents show larger contributions, the current contributes less and the current is negligible. Further increments in simulated blocker concentration result in tonic spiking regimes with frequencies ranging from to . The center panels in Figure 9 show the currentscapes for these conditions on a different time scale to highlight the contributions of . The leftmost panel shows the condition. In this panel, we placed vertical lines indicating the time stamps at which the peak of the spike and the minimum occur. Notice the large contribution of the current prior to the peak of the spike, and the large contribution of the current for the next . When the membrane potential is at its minimum value the current dominates the inward currents and remains the largest contributor for the next . The current reduces its share drastically by the time the current is visible and takes over. The contribution of remains approximately constant during repolarization and vanishes as the membrane becomes depolarized and the current becomes dominant. The effect of removing is visible on this scale. The waveform of the contribution remains qualitatively the same: largest at the minimum voltage and approximately constant until the next spike. However, the contribution of during repolarization becomes smaller, and for larger conductance decrements results in a thinner band. Finally, the bottom panels show the cases and which correspond to a two-spike burster and a tonic spiker, respectively. Note that even though the contribution of is barely visible, complete removal of this current results in a very different pattern. The activity switched from bursting to spiking and the current composition is different; disappeared in the condition and the current takes over. Notice also the larger contribution of the current.

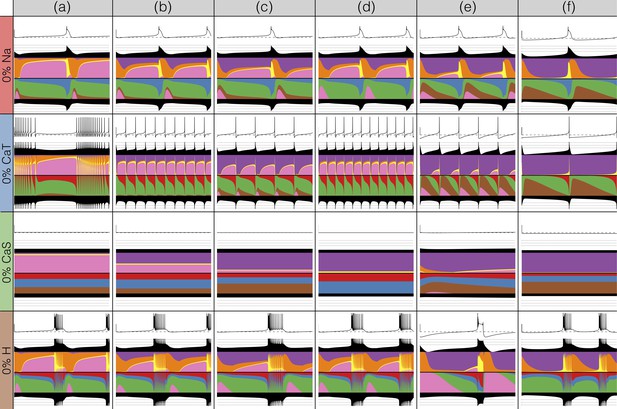

Modeling current deletions

There has been a great deal of work studying the effects of genetic and/or pharmacological deletions of currents. One of the puzzles is why some currents, known to be physiologically important, can have relatively little phenotype in some, or all individuals. For this reason in Figures 10 and 11, we show the effects of deletion of each current in all six models. Each panel shows seconds of data. The inward currents are portrayed in Figure 10 and the outward and leak currents are shown in Figure 11.

Complete removal of one current: inward currents.

The figure shows the traces and currentscapes for all bursters when one current is completely removed.

Complete removal of one current: outward currents.

The figure shows the traces and currentscapes for all bursters when one current is completely removed.

Removal of some currents has little obvious phenotype differences across the population although the currentscapes are different, such as seen for the and cases. Removal of some currents produces similar phenotypes in most, but not all of the six models as seen in the and cases. Removal of had virtually identical effects both on the phenotype and the currents. For other currents, such as and the , we find two types of responses with nearly half of the models for each case (the exception is model (d) ). In the case of the current both the phenotype and the currents composition are very diverse across models.

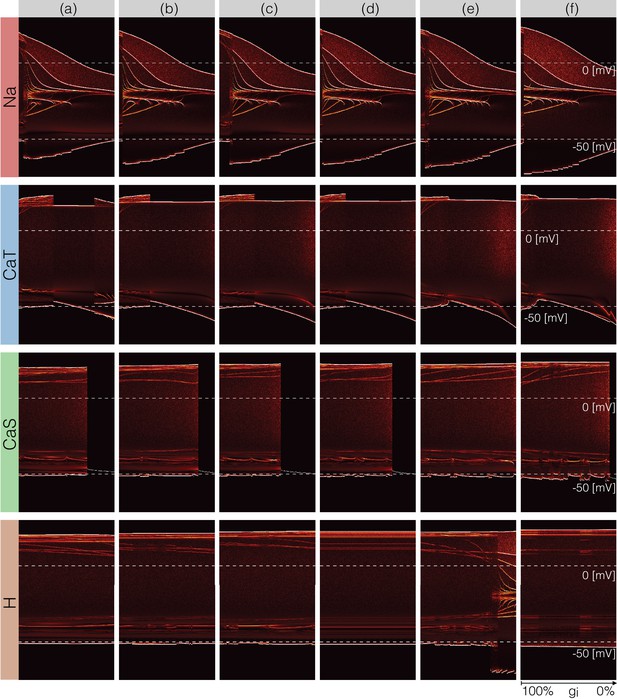

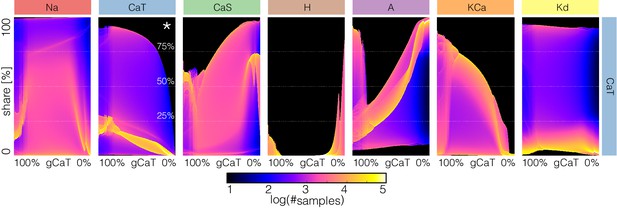

Changes in waveform as conductances are gradually decreased

A fuller description of the behavior/phenotype of all of the models for all values of conductance decrements can be seen in Figures 12 and 13. These figures use the probability scheme described in Figure 3 and Figure 3—figure supplement 1. Using these methods, it is possible to see exactly how the waveforms change and the boundaries of activity for each model and each conductance. The panels show the ridges of the probability distributions of the membrane potential for values of maximal conductance values (see Materials and methods). The probability of was computed using 30 s of data after dropping a transient period of s. It was estimated using bins in the range and samples for each maximal conductance value. The system spends more time in regions where and is sampled more at those values. Therefore, features such as the amplitudes of the spikes appear as sharp peaks in the probability distributions. To highlight these peaks and visualize how they change as currents are gradually decreased, we plot the derivative or sharpness of the distribution in colors (see color scale in Figure 3D). Overall, these plots show that for any given current, there are ranges of the conductance values where a small change results in a smooth deformation of the waveform, and there are specific values at which abrupt transitions take place. As before there is too much detail to describe everything in these figures so we will discuss a subset of the features highlighted by this representation.

Changes in waveform as currents are gradually removed.

Inward currents. The figure shows the ridges of the probability distribution of as a function of and each maximal conductance . The ridges of the probability distributions appear as curves and correspond to values of where the system spends more time, such as extrema. The panels show how different features of the waveform such as total amplitude, and the amplitude of each spike, change as each current is gradually decreased.

Changes in waveform as currents are gradually removed.

Outward and leak currents. The figure shows the ridges of the probability distribution of as a function of and each maximal conductance . See Figure 12.

The top rows in Figure 12 correspond to removing the current in the models. Note that the minimum value of in control (left) is close to and a small decrement in results in larger amplitude. The colored curves inside the envelopes correspond to the spikes’ amplitudes and features of the slow waves. For instance, when the current is completely removed (right) the amplitude of the oscillation is and the activity corresponds to a single-spike bursting mode. The spike amplitude is given by the top edge of the colored region and the curve near indicates the burst ‘belly’: the membrane hyperpolarizes slowly after spike termination and there is a wiggle at this transition.

Removing in model (a) does not disrupt bursting activity immediately. Notice that the amplitude of the bursts remains approximately constant over a range of values. The dim red and yellow lines at show that the amplitudes of the spikes are different and have different dependences with . When the model transitions into a tonic spiking regime, the amplitude of the spikes is the same and there is only one amplitude value. This value stays constant over a range but the minimum membrane potential decreases and the overall amplitude therefore increases. The model returns to a bursting regime for values of smaller than . Notice that in model (a) the membrane potential during bursts goes below , unlike in the control condition. Notice that the waveform of the membrane potential changes abruptly as is reduced and the models transition into a spiking regime. Model (f) is less resilient to this perturbation and this transition takes place at lower conductance values.

Removing does not much change the waveform, but it alters the temporal properties of the activity. The models remain bursting up to a critical value and the amplitude of the spikes was changed little. The features of the slow wave do not much change either except in model (f). Model (c) is less resilient to this perturbation since it becomes quiescent for lower decrements of the maximal conductance than the other models. The effect of gradually removing appears similar to in this representation. In this case again, the morphology of the waveform is less altered than its temporal properties (except in model (e) where a transition takes place).

Figure 13 shows the same plots for the outward and leak currents. The current in model (a) is very small () and its removal has little effect on the activity. This translates into curves that appear as parallel lines indicating spikes with different amplitudes that remain unchanged. The rest of the models exhibit a transition into a different regime. The waveforms of this regime appears similar to the waveforms which result from removing (see Figure 7) but in this representation it is easier to observe differences such as the overall amplitude of the oscillation. The amplitude decreases as is decreased and increases as is decreased. Removing has a similar effect to removing in that the models transition into tonic spiking regimes. The difference is that the spiking regimes that result from removing have smaller amplitudes and also correspond to more depolarized states.

All models are very sensitive to removing and low values result in single-spike bursting modes with large amplitudes. Model (c) is least fragile to this perturbation and exhibits a visible range ( to ) with bursting modes. These oscillations break down in a similar way to the case and display similar patterns. However, an important difference is that unlike in the case, the overall amplitude of the oscillation increases as is decreased. As before, the top edge corresponds to the amplitude of the large spike and the curves in the colored region correspond to extrema of the oscillation. After spiking, the membrane remains at a constant depolarized value () for a long period and produces a high-frequency oscillation before hyperpolarization. The amplitude of this oscillation increases as is further decreased, and this results in a white curve that starts above and ends above . The beginning of this curve corresponds to a high-frequency oscillation that occurs after spike termination. This type of activity is termed plateau oscillations and was reported in models of leech heart interneurons (Cymbalyuk and Calabrese, 2000) and in experiments in lamprey spinal neurons (Wang et al., 2014). These features are hardly visible in the traces in Figure 8 and are highlighted by this representation. Finally, the case appears similar to mixture of the and cases. The cells remain bursting over a range of values and some of them transition into a single-spike bursting mode that is different from the case.

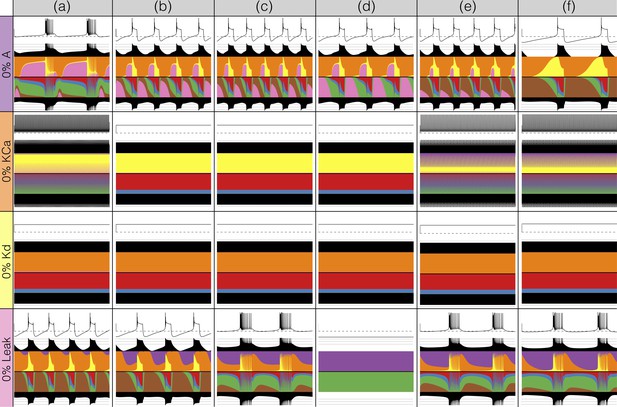

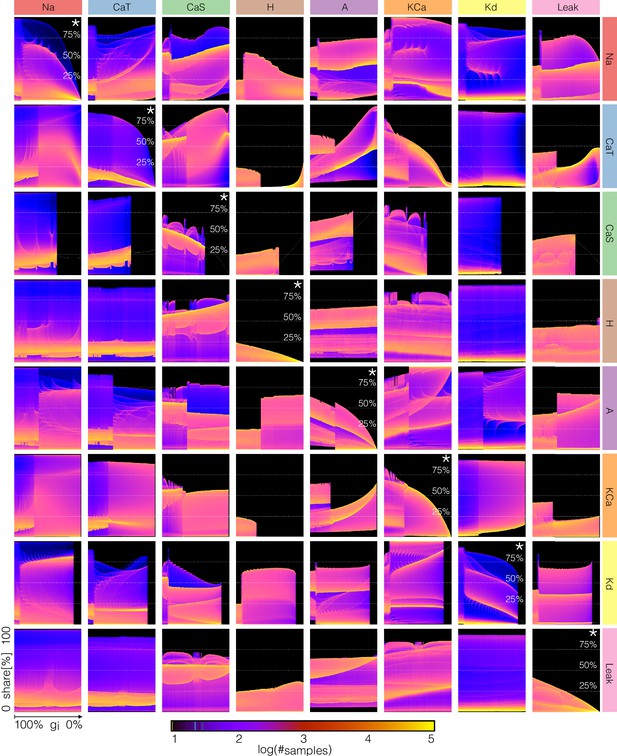

Changes in current contributions as conductances are gradually decreased

The key to the visualization method in Figures 12 and 13 is to consider not as a time series but as a stochastic variable with a probability distribution (see Figure 3 and supplement). The same procedure can be applied to the time series of each current. However, because the contributions of the currents are different at different times, and at different decrements of conductance values, it is not possible to display this information using the same scale for all channels. To overcome this, we proceed as in the currentscapes and instead focus on the normalized currents or shares to the total inward and outward currents (the rows of matrices and , see Materials and methods). The current shares correspond to the width of the color bands in the currentscapes and can also be represented by a time series that is normalized to the interval . The probability distribution of permits displaying changes in the contributions of each current to the activity as one current is gradually removed. Interpreting these distributions is straightforward as before: the number of times the system is sampled in a given current share configuration is proportional to the time the system spends there. The aim of plotting these distributions is to visualize how the currentscapes would change for all values of the conductance decrement. To illustrate this procedure, we return to to explore further the causes of the complex behavior of model (f) (see Figure 9).

Figure 14 shows the probability distributions of the current shares as is gradually decreased in model (f) (see also Figure 9 and Figure 14—figure supplement 1). The panels show the share of each current as is gradually decreased and the probability is indicated in colors. In control the and current shares are distributed in a similar way. Both currents can at times be responsible for of the inward current, but most of the time they contribute . The current is larger right before spike repolarization and the amounts to of the small () total inward current. For larger decrements, the system transitions into tonic spiking and the contribution of the current is more evenly distributed over a wider range. The contribution of the current is predominantly and trends to zero as . Note also that as the contribution of decreases, the contribution of increases to values larger than while in control it contributes with . The contribution of the current is small () between and ; it becomes negligible between and and becomes dominant after . The current behaves similarly to the . It contributes of the (small ) total outward current before burst initiation and its contribution decreases drastically when the system transitions into tonic spiking. As is removed further the current is more likely to contribute with a larger share. The contribution of the current decreases as is decreased and some of it persists even when is completely removed. In contrast, the contribution of the current does not appear to change much and nor does its role in the activity.

Changes in waveform of current shares as one current is gradually decreased.

The panels show the probability distribution of the share of each current for model (f) as is decreased (see Figure 14—figure supplement 1).

Performing the same analysis for all conductances results in a large amount of information. Despite this and because we are plotting the normalized currents or current shares, our representation allows us to display this information in a coherent fashion. As an example, in Figure 15 we show the effect of gradually decreasing each current on all the currents in model (c). The rows indicate which conductance is decreased and the columns show the effect of this perturbation on the corresponding current. The first row shows how the shares of each current change as the current is decreased. For instance, the effect of decreasing on the current (indicated by *) is as expected, with the maxima of the distribution trending to zero as . The effect of removing on the other currents is non-trivial and is displayed along the same row. Notice that while the effect of removing a current on that same current (diagonal panels) is relatively predictable, the rest of the currents become rearranged in complicated ways.

Changes in waveform of current shares as each current is gradually decreased.

The panels show the probability distribution of the share of each current for model (c) as each current is decreased.

Again, a full description of these diagrams is beyond the scope of this work so we will only make some observations. When the pertubations are negligible or weak ( to ) all currents play a role because there are periods of time in which they contribute to at least of the total current. There are ranges of the conductances over which small changes result in smooth transformations of the current configuration, there are specific values at which sharp transitions take place, and these values are different depending on the current that is decreased. While some of this information can also be extracted from Figures 12 and 13, the diagrams in Figure 15 show how the currents get reorganized at these transitions. In addition, this arrangement is convenient for comparing the effect of decreasing each conductance on a given current. For example, the contributions of the and currents change little for most perturbations (except when these conductances are decreased). In contrast, the contributions of , , , , and the change more noticeably. Finally, the contribution of the current increases for most conductance decrements of any type, except at the transition values where it can grow or shrink in an abrupt manner.

Discussion

There is an ever larger availability of experimental data to inform detailed models of identified neuron types (McDougal et al., 2017). Experimenters have determined the kinetics of many channel types, both in vertebrate and invertebrate neurons. There are also model databases with thousands of parameters which permit the development of large scale models of neural tissue (Bezaire et al., 2016). One difficulty in ensemble modeling is the necessity of incorporating the biological variability observed in some of the parameters – such as the conductances – at the same time that we require the models to capture some target activity. In other words, we may be interested in modeling a type of cell that displays some sterotypical behavior, and would like to obtain many different versions of such models. Two main approaches to this problem were introduced in the past. One consists of building a database of model solutions over a search domain and screening for target solutions: this considers all possible value combinations within an allowed range up to a numerical resolution and then applies quantitative criteria to determine which solutions correspond to the target activity (Prinz et al., 2004). An alternative approach consists of designing a target function that assigns a score to the models’ solutions in such a way that lower scores correspond to solutions that meet the targets, and then optimizing these functions (Achard and De Schutter, 2006; Druckmann et al., 2007; Ben-Shalom et al., 2012).

Both approaches have advantages and shortcomings. In the case of the database approach, trying all posible parameter combinations in a search range becomes prohibitively expensive as more parameters are allowed to vary. One advantage of this approach is that it provides a notion of how likely it is to find conductances within a search range that will produce the activity. In the landscape approach, we find solutions by optimization and – without further analysis – we do not know how likely a given solution type is. This approach has the advantage that it can be scaled to include large numbers of parameters. Additionally, if a particular solution is interesting, we can use genetic algorithms on successful target functions to ‘breed’ as many closely related models as desired. Ultimately, any optimization heuristic requires blind testing random combinations of the parameters, and developing quantitative criteria for screening solutions in a database results in some sort of score function, so the two approaches are complementary. A successful target function can determine if a random perturbation results in disruption of the activity and this can be used to perform population-based sensitivity analyses (Devenyi and Sobie, 2016).

Regardless of the optimization approach, most work is devoted to the design of successful target functions. Different modeling problems require different target functions (Roemschied et al., 2014; Fox et al., 2017; Migliore et al., 2018) and one challenge in their design is that sometimes we do not know a priori if the model contains solutions that will produce good minima. In addition, a poorly constrained target function can feature multiple local minima that could make the optimization harder, so even if there are good minima they may be hard to find. One difference between the landscape functions in Achard and De Schutter (2006) and the ones utilized here is that in their setup model solutions are compared to a target time series via a phase-plane method. The functions introduced in this work use an analysis based on Poincaré sections or thresholds to characterize the waveform and to define an error or score. Instead of targeting a particular waveform, we ask that some features of the waveform – such as the frequency and the burst duration – are tightly constrained, while other features – such as the concavity of the slow waves – can be diverse. This is motivated by the fact that across individuals and species, the activity of the pyloric neurons can be diverse but the neurons always fire in the same sequence and the burst durations have a well-defined mean. Our approach is successful in finding hundreds of models that display a target activity in minutes using a commercially available desktop computer. Application of evolutionary techniques to optimize these functions provides a natural means to model the intrinsic variability observed in biological populations.

One of the main benefits of computational modeling is that once a behavior of interest is successfully captured we then possess a mechanistic description of the phenomena that can be used to test ideas and inform experiments (Coggan et al., 2011; Lee et al., 2016; Devenyi and Sobie, 2016; Gong and Sobie, 2018). As the models gain biophysical detail these advantages wane in the face of the complexity imposed by larger numbers of variables and parameters. Conductance-based models of neural activity generate large amounts of data that can be hard to visualize and interpret. The development of novel visualization procedures has the potential to assist intuition into the details of how these models work (Gutierrez et al., 2013). Here, we introduced a novel representation of the dynamics of the ionic currents in a single compartment neuron. Our representation is simple and displays in a concise way the contribution of each current to the activity. This representation is easily generalizable to multi-compartment models and small networks, and to any type of electrically excitable cell, such as models of cardiac cells (Britton et al., 2017).

We employed these procedures to build many similar bursting models with different conductance densities and to study their response to perturbations. The responses of the models to current injections and gradual decrements of their conductances can be diverse and complex. Inspection of the ISI distributions revealed wide ranges of parameter values for which the activity appears irregular, and similar regimes can be attained by gradually removing some of the currents. Period doubling routes to chaos in neurons have been observed experimentally and in conductance-based models (Hayashi et al., 1982; Hayashi and Ishizuka, 1992; Szücs et al., 2001; Canavier et al., 1990; Xu et al., 2017). The sort of bifurcation diagrams displayed by these models upon current injection are qualitatively similar to those exhibited by simplified models of spiking neurons for which further theoretical insight is possible (Touboul and Brette, 2008). Period doubling bifurcations and low dimensional chaos arise repeatedly in neural models of different natures including rate models (Ermentrout, 1984; Alonso, 2017). The bursters studied here are close (in parameter space) to aperiodic or irregular regimes suggesting that such regimes are ubiquitous and not special cases.

We showed that in these model neurons similar membrane activities can be attained by multiple mechanisms that correspond to different current compositions. Because the dynamical mechanisms driving the activity are different in different models, perturbations can result in qualitatively different scenarios. Our visualization methods allow us to gather intuition on how different these responses can be and to explore the contribution of each current type to the neural activity. Even in the case of single compartment bursters, the response to perturbations of a population can be diverse and hard to describe. To gain intuition into the kind of behaviors the models display upon perturbation, we developed a representation based on the probability of the membrane potential . This representation permits displaying changes in the waveform of as each current is blocked. This representation shows that the models respond to perturbations in different ways, but that there are also similarities among their responses. A concise representation of the effect of a perturbation is a necessary step towards developing a classification scheme for the responses.

Materials and methods

Numerical data and data analysis and plotting code, sufficient to reproduce the figures in the paper are available on Dryad Digital Repository (https://dx.doi.org/10.5061/dryad.d0779mb).

Model equations

Request a detailed protocolThe membrane potential of a cell containing channels and membrane capacitance is given by:

Each term in the sum corresponds to a current and is externally applied current. The maximal conductance of each channel is given by , and are the activation and inactivation variables, the integers and are the number of gates in each channel, and is the reversal potential of the ion associated with the i-th current. The reversal potential of the Na, K, H and leak currents were kept fixed at , , and while the calcium reversal potential was computed dynamically using the Nernst equation assuming an extracellular calcium concentration of . The kinetic equations describing the seven voltage-gated conductances were modeled as in Liu et al. (1998),

The functions , , and are based on the experimental work of Turrigiano et al., 1995 and are listed in refs. (Liu et al., 1998; Turrigiano et al., 1995). The activation functions of the current require a measure of the internal calcium concentration (Liu et al., 1998). This is an important state variable of the cell and its dynamics are given by,

Here, is a current-to-concentration factor and . These values were originally taken from Liu et al. and were kept fixed. Finally, . The number of state variables or dimension of the model is . We explored the solutions of this model in a range of values of the maximal conductances and calcium buffering time scales. The units for voltage are , the conductances are expressed in and currents in . Voltage traces were obtained by numerical integration of Equation 5 using a Runge-Kutta order (RK4) method with a time step of (Press et al., 1988). We used the same set of initial conditions for all simulations in this work , and . For some values of the parameters, the system (Equation 5) can display multistability (Cymbalyuk et al., 2002; Shilnikov et al., 2005).

Optimization of target function

Request a detailed protocolOptimization of the objective function Equation 2 is useful to produce sets of parameters that result in bursting regimes. In this work, the optimization was performed over a search space of allowed values listed here: we searched for ([]), , , , , , , , ([msecs]). We minimized the objective function using a standard genetic algorithm Holland (1992). This is optimization technique is useful to produce large pools of different solutions and is routinely utilized to estimate parameters in biophysical models (see for example Assaneo and Trevisan, 2010). The algorithm was started with a population of random seeds that were evolved for generations. The mutation rate was . Fitter individuals were chosen more often to breed new solutions (elitism parameter was with corresponding to equal breeding probability). The computation was performed on a multicore desktop computer ( threads) and takes about hr to produce good solutions.

Currentscapes

Request a detailed protocolThe currentscapes are stacked area plots of the normalized currents. Although it is easy to describe their meaning, a precise mathematical definition of the images in Figure 2 can appear daunting in a first glance. Fortunately, the implementation of this procedure results in simple python code.

The time series of the currents can be represented by a matrix with rows and columns. For simplicity, we give a formal definition of the currentscapes for positive currents. The definition is identical for both current signs and is applied independently for each. We construct a matrix of positive currents by setting all negative elements of to zero, and . Summing over rows results in a normalization vector with elements . The normalized positive currents can be obtained as (element by element or entry-wise product). Matrix is hard to visualize as it is. The columns of correspond to the shares of each positive current and can be displayed as pie charts (see Figure 2). Here, instead of mapping the shares to a pie we map them to a segmented vertical ‘churro’. The currentscapes are generated by constructing a new matrix whose number of rows is given by a resolution factor , and the same number of columns as . Each column of produces one column of . Introducing the auxiliary variable we can define the currentscape as,

The current types are indexed by and we assume . The black filled curve in Figure 2B corresponds to the normalization vector plotted in logarithmic scale. We placed dotted lines at , and for reference throughout this work. The currentscapes for the negative currents are obtained by applying definition (Equation 8) to a matrix of negative currents defined in an analogous way as . Finally, note that matrices and are difficult to visualize as they are. The transformation given by definition (Equation 8) is useful to display their contents.

ISI distributions

Request a detailed protocolWe inspected the effects of injecting currents in our models by computing the inter-spike interval ISI distributions. For this, we started the models from the same initial condition and simulated them for s. We dropped the first s to remove transient activity and kept the last s for analysis. Spikes were detected as described before. We collected ISI values for values of injected current equally spaced between and .

V distributions

Request a detailed protocolTo sample the distributions of we simulated the system with high temporal resolution ( ) for s, after dropping the first s to remove transients. We then sampled the numerical solution at random time stamps and kept samples for each percent value. We took values between and 0.

Parameters

Model parameters used in this study are listed in Table 1.

Parameters used in this study and error value.

https://doi.org/10.7554/eLife.42722.020| gNa | gCaT | gCaS | gA | gKCa | gKd | gH | gL | |||

|---|---|---|---|---|---|---|---|---|---|---|

| model (a) | 1076.392 | 6.4056 | 10.048 | 8.0384 | 17.584 | 124.0928 | 0.11304 | 0.17584 | 653.5 | 0.051 |

| model (b) | 1165.568 | 6.6568 | 9.5456 | 54.5104 | 16.328 | 110.7792 | 0.0628 | 0.10676 | 813.88 | 0.053 |

| model (c) | 1228.368 | 7.0336 | 11.0528 | 117.5616 | 16.328 | 111.2816 | 0.13816 | 0.10676 | 605.98 | 0.027 |

| model (d) | 1203.248 | 6.6568 | 10.5504 | 59.5344 | 16.328 | 111.4072 | 0.0 | 0.10676 | 653.5 | 0.471 |

| model (e) | 1210.784 | 8.164 | 6.28 | 113.04 | 12.56 | 118.4408 | 0.1256 | 0.0314 | 393.13 | 0.109 |

| model (f) | 1245.952 | 7.7872 | 6.7824 | 84.6544 | 12.56 | 113.9192 | 0.02512 | 0.0 | 174.34 | 0.047 |

| model (Figure 2) | 1228.368 | 7.0336 | 11.0528 | 117.5616 | 16.328 | 110.7792 | 0.13816 | 0.10048 | 605.98 | 0.007 |

| model (Figure 3) | 895.528 | 3.8936 | 16.5792 | 116.4312 | 21.352 | 115.6776 | 0.0 | 0.08792 | 828.73 | 0.058 |

Data availability

All data generated or analysed during this study are included in the manuscript and supporting files. Source data files have been provided for Figures 2 through 15. Data package available in Dryad: doi:10.5061/dryad.d0779mb.

-

Dryad Digital RepositoryData from: Visualization of currents in neural models with similar behavior and different conductance densities.https://doi.org/10.5061/dryad.d0779mb

References

-

Complex parameter landscape for a complex neuron modelPLOS Computational Biology 2:e94.https://doi.org/10.1371/journal.pcbi.0020094

-

Nonlinear resonances and multi-stability in simple neural circuitsChaos: An Interdisciplinary Journal of Nonlinear Science 27:013118.https://doi.org/10.1063/1.4974028

-

Optimizing ion channel models using a parallel genetic algorithm on graphical processorsJournal of Neuroscience Methods 206:183–194.https://doi.org/10.1016/j.jneumeth.2012.02.024

-

Inconvenient truth to principle of neuroscienceTrends in Neurosciences 41:488–491.https://doi.org/10.1016/j.tins.2018.05.006

-

Routes to chaos in a model of a bursting neuronBiophysical Journal 57:1245–1251.https://doi.org/10.1016/S0006-3495(90)82643-6

-

Bursting in leech heart interneurons: cell-autonomous and network-based mechanismsThe Journal of Neuroscience 22:10580–10592.https://doi.org/10.1523/JNEUROSCI.22-24-10580.2002

-

There and back again: iterating between population-based modeling and experiments reveals surprising regulation of calcium transients in rat cardiac myocytesJournal of Molecular and Cellular Cardiology 96:38–48.https://doi.org/10.1016/j.yjmcc.2015.07.016

-

Period doublings and possible chaos in neural modelsSIAM Journal on Applied Mathematics 44:80–95.https://doi.org/10.1137/0144007

-

Quantitative universality for a class of nonlinear transformationsJournal of Statistical Physics 19:25–52.https://doi.org/10.1007/BF01020332

-

Mechanisms of generation of membrane potential resonance in a neuron with multiple resonant ionic currentsPLOS Computational Biology 13:e1005565.https://doi.org/10.1371/journal.pcbi.1005565

-

Genetic Algorithms and Machine Learning95–99, Machine Learning and Its Applications, Genetic Algorithms and Machine Learning, 3, Springer.

-

Global structure, robustness, and modulation of neuronal modelsThe Journal of Neuroscience 21:5229–5238.https://doi.org/10.1523/JNEUROSCI.21-14-05229.2001

-

Failure of averaging in the construction of a conductance-based neuron modelJournal of Neurophysiology 87:1129–1131.https://doi.org/10.1152/jn.00412.2001

-

Population-based mechanistic modeling allows for quantitative predictions of drug responses across cell typesNpj Systems Biology and Applications 4:11.https://doi.org/10.1038/s41540-018-0047-2

-

Chaotic nature of bursting discharges in the onchidium pacemaker neuronJournal of Theoretical Biology 156:269–291.https://doi.org/10.1016/S0022-5193(05)80676-9

-

A model neuron with activity-dependent conductances regulated by multiple calcium sensorsThe Journal of Neuroscience 18:2309–2320.https://doi.org/10.1523/JNEUROSCI.18-07-02309.1998

-

Twenty years of ModelDB and beyond: building essential modeling tools for the future of neuroscienceJournal of Computational Neuroscience 42:1–10.https://doi.org/10.1007/s10827-016-0623-7

-

Similar network activity from disparate circuit parametersNature Neuroscience 7:1345–1352.https://doi.org/10.1038/nn1352

-

Animal-to-animal variability of connection strength in the leech heartbeat central pattern generatorJournal of Neurophysiology 107:1681–1693.https://doi.org/10.1152/jn.00903.2011

-

A 3x3 isotropic gradient operator for image processingA Talk at the Stanford Artificial Project, Pages.

-

Nonlinear behavior of sinusoidally forced pyloric pacemaker neuronsJournal of Neurophysiology 85:1623–1638.https://doi.org/10.1152/jn.2001.85.4.1623

-

Dynamics and bifurcations of the adaptive exponential integrate-and-fire modelBiological Cybernetics 99:319–334.https://doi.org/10.1007/s00422-008-0267-4

-

Selective regulation of current densities underlies spontaneous changes in the activity of cultured neuronsThe Journal of Neuroscience 15:3640–3652.https://doi.org/10.1523/JNEUROSCI.15-05-03640.1995

-

Hyperpolarization-Activated current induces Period-Doubling cascades and chaos in a cold thermoreceptor modelFrontiers in Computational Neuroscience 11:12.https://doi.org/10.3389/fncom.2017.00012

Article and author information

Author details

Funding

National Institutes of Health (R35 NS097343)

- Eve Marder

Swartz Foundation (2017)

- Leandro M Alonso

National Institutes of Health (MH046742)

- Eve Marder

National Institutes of Health (T32 NS07292)

- Leandro M Alonso

The funders had no role in study design, data collection and interpretation, or the decision to submit the work for publication.

Acknowledgements

LMA acknowledges Marcos Trevisan for early discussions on genetic algorithms and Francisco Roslan for programming training.

Copyright

© 2019, Alonso and Marder

This article is distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use and redistribution provided that the original author and source are credited.

Metrics

-

- 3,698

- views

-

- 556

- downloads

-

- 128

- citations

Views, downloads and citations are aggregated across all versions of this paper published by eLife.

Citations by DOI

-

- 128

- citations for umbrella DOI https://doi.org/10.7554/eLife.42722