Stimulus-dependent relationships between behavioral choice and sensory neural responses

Abstract

Understanding perceptual decision-making requires linking sensory neural responses to behavioral choices. In two-choice tasks, activity-choice covariations are commonly quantified with a single measure of choice probability (CP), without characterizing their changes across stimulus levels. We provide theoretical conditions for stimulus dependencies of activity-choice covariations. Assuming a general decision-threshold model, which comprises both feedforward and feedback processing and allows for a stimulus-modulated neural population covariance, we analytically predict a very general and previously unreported stimulus dependence of CPs. We develop new tools, including refined analyses of CPs and generalized linear models with stimulus-choice interactions, which accurately assess the stimulus- or choice-driven signals of each neuron, characterizing stimulus-dependent patterns of choice-related signals. With these tools, we analyze CPs of macaque MT neurons during a motion discrimination task. Our analysis provides preliminary empirical evidence for the promise of studying stimulus dependencies of choice-related signals, encouraging further assessment in wider data sets.

Introduction

How perceptual decisions depend on responses of sensory neurons is a fundamental question in systems neuroscience (Parker and Newsome, 1998; Gold and Shadlen, 2001; Romo and Salinas, 2003; Gold and Shadlen, 2007; Siegel et al., 2015; van Vugt et al., 2018; O’Connell et al., 2018; Steinmetz et al., 2019). The seminal work of Britten et al., 1996 showed that responses from single cells in area MT of monkeys during a motion discrimination task covaried with behavioral choices. Similar activity-choice covariations have been found in many sensory areas during a variety of both discrimination and detection two-choice tasks (see Nienborg et al., 2012; Cumming and Nienborg, 2016, for reviews). Identifying which cells encode choice, and how and when they encode it, is essential to understand how the brain generates behavior based on sensory information.

With two-choice tasks, Choice Probability (CP) has been the most prominent measure (Britten et al., 1996; Parker and Newsome, 1998; Nienborg et al., 2012) used to quantify activity-choice covariations. Although early studies (Britten et al., 1996; Dodd et al., 2001) explored potential dependencies of the CP on the stimulus content, they found no significant evidence of a CP stimulus dependency. Accordingly, it has become common to report for each neuron a single CP value to quantify the strength of activity-choice covariations. This scalar CP value has typically been calculated either only from trials with a single, non-informative stimulus level (e.g. Dodd et al., 2001; Parker et al., 2002; Krug et al., 2004; Wimmer et al., 2015; Katz et al., 2016; Wasmuht et al., 2019), or by pooling trials across stimulus levels (so-called grand CP [Britten et al., 1996]) under the assumption that choice-related neural signals are separable from stimulus-driven responses (e.g. Verhoef et al., 2015; Pitkow et al., 2015; Smolyanskaya et al., 2015; Bondy et al., 2018). Alternatively, a single CP is sometimes obtained simply by averaging CPs across stimulus levels (e.g. Cai and Padoa-Schioppa, 2014; Latimer et al., 2015; Liu et al., 2016). Even when activity-choice covariations are modeled jointly with other covariates of the neural responses using Generalized Linear Models (GLMs) (Truccolo et al., 2005; Pillow et al., 2008), the stimulus level and the choice are usually entered as separate predictors of the responses (Park et al., 2014; Runyan et al., 2017; Scott et al., 2017; Pinto et al., 2019; Minderer et al., 2019).

This focus on characterizing a neuron by a single CP value is mirrored in the existing theoretical studies. Existing theoretical results rely on a standard feedforward model of decision making, in which a neural representation of the stimulus is converted by a threshold mechanism into a behavioral choice (Shadlen et al., 1996; Cohen and Newsome, 2009b; Haefner et al., 2013), and assume a single, zero-signal stimulus level, hence ignoring stimulus dependencies of CPs. Furthermore, no analytical mechanistic model has so far accounted for the feedback contributions to activity-choice covariations that are known empirically to be important (Nienborg and Cumming, 2009; Cumming and Nienborg, 2016; Bondy et al., 2018).

The main contribution of this work is to extend CP analysis from reporting a single CP value for each cell to a more complete characterization of within-cell patterns of choice-related activity across stimulus levels. First, we extended the analytical results of Haefner et al., 2013 to the general case of informative stimuli and to include both feedforward and feedback sources of the covariation between the choice and the response of each cell. Our results predict that CP stimulus dependencies can appear in a cell-specific way because of stimulus dependencies of cross-neuronal correlations. We show that they can also appear for all neurons because of the transformation of the neural representation of the stimulus into a binary choice, if the decision-making process relies on a threshold mechanism (or threshold criterion) to convert a continuous decision variable into a binary choice. Second, we developed two new analytical methods (a refined CP analysis and a new generalized linear model with stimulus-choice interactions) with increased power to detect stimulus dependencies in activity-choice covariations. Our new CP analysis isolates within-cell stimulus dependencies of activity-choice covariations from across-cell heterogeneity in the magnitude of the CP values, which may hinder their detection (Britten et al., 1996). Third, we applied this analysis framework to the classic dataset of Britten et al., 1996, containing recordings from neurons in visual cortical area MT, and found evidence for our predicted population-level threshold-induced dependency, but also additional cell-specific dependencies. Our refined CP analysis and generalized linear models with interaction terms gave consistent results on the existence of stimulus-choice interactions in neural activity.
Finally, we show that the main properties of these additional dependencies can be explained by modeling the cross-neuronal correlation structure induced by gain fluctuations (Goris et al., 2014; Ecker et al., 2014; Kayser et al., 2015; Schölvinck et al., 2015), which have been shown to explain a substantial amount of response variability in visual area MT (Goris et al., 2014).

Results

We will first present the analysis of a theoretical model of how informative stimuli modulate choice probabilities. We will then analyze MT visual cortex neuronal responses from Britten et al., 1996, applying new methods developed to quantify stimulus-dependent activity-choice covariations with CPs and GLMs. This analysis provides preliminary empirical evidence in support of using these new methods for studying stimulus dependencies of activity-choice covariations.

A general account for choice-related neural signals in the presence of informative stimuli

In a two-choice psychophysical task, such as a stimulus discrimination or detection task, a neuron is said to contain a ‘choice-related signal’, or ‘decision-related signal’, when its activity carries information about the behavioral choice above and beyond the information that it carries about the stimulus (Britten et al., 1996; Parker and Newsome, 1998; Nienborg et al., 2012). The interpretation of choice-related signals in terms of decision-making mechanisms is, however, difficult. Much progress in our understanding of their meaning has relied on models used to derive mathematically the relationship between the underlying decision-making mechanisms and the measures of activity-choice covariation commonly used to quantify choice-related signals (Haefner et al., 2013; Pitkow et al., 2015).

The most widely used measure of activity-choice covariation for tasks involving two choices is the choice probability, $CP_i$. It is defined as the probability that a random sample of neural activity from all trials with behavioral choice $D = 1$ is larger than one sample randomly drawn from all trials with choice $D = -1$ (Britten et al., 1996; Parker and Newsome, 1998; Nienborg et al., 2012; Haefner et al., 2013):

$$CP_i \equiv p\left(r_i^{D=1} > r_i^{D=-1}\right) \tag{1}$$

where $r_i$ is any measure of the neural activity, which we will here consider to be the neuron’s per-trial spike count. Another prominent measure of choice-related signals is the choice correlation, $CC_i$ (Pitkow et al., 2015). This quantity is defined under the assumption that the binary choice is mediated by an intermediate continuous decision variable, $d$. This variable may represent the brain’s estimate of the stimulus, or an internal belief about the correct choice. The definition of CC further assumes that the categorical choice $D$ is related to $d$ via a thresholding operation, such that the choice depends on whether $d$ is smaller or larger than a threshold θ (Gold and Shadlen, 2007). Its expression is as follows:

$$CC_i \equiv \mathrm{corr}(r_i, d) = \frac{\mathrm{cov}(r_i, d)}{\sqrt{\sigma^2_{r_i}\,\sigma^2_d}} \tag{2}$$

where $\mathrm{cov}(r_i, d)$ is the covariance of the neural responses with $d$, and $\sigma^2_{r_i}$ and $\sigma^2_d$ are their variances across trials. Perhaps the simplest measure of activity-choice covariation, which has been used in empirical studies (Mante et al., 2013; Ruff et al., 2018), is what we call the choice-triggered average, $CTA_i$, defined as the difference between a neuron’s average spike count across trials with behavioral decision $D = 1$ and its average spike count across trials with decision $D = -1$:

$$CTA_i \equiv \langle r_i \rangle_{D=1} - \langle r_i \rangle_{D=-1} \tag{3}$$
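
As an illustration of Equations 1 and 3, a minimal Python sketch that computes both measures from per-trial spike counts split by choice is given below. It assumes the counts are already grouped into trials with $D = 1$ and $D = -1$; the function names are hypothetical, and counting tied pairs as one half (the usual convention for the area under the ROC curve, needed because spike counts are discrete) is an assumption added on top of the strict inequality in Equation 1.

```python
from itertools import product
from statistics import mean


def choice_probability(r_pos, r_neg):
    """CP of Equation 1 from spike counts, with ties counted as one half.

    r_pos: spike counts from trials with choice D = 1
    r_neg: spike counts from trials with choice D = -1
    Every (D = 1, D = -1) pair of trials is compared (Mann-Whitney form).
    """
    if not r_pos or not r_neg:
        raise ValueError("both choices need at least one trial")
    score = 0.0
    for a, b in product(r_pos, r_neg):
        if a > b:
            score += 1.0
        elif a == b:
            score += 0.5  # tie convention (assumption for discrete counts)
    return score / (len(r_pos) * len(r_neg))


def choice_triggered_average(r_pos, r_neg):
    """CTA of Equation 3: mean count for D = 1 minus mean count for D = -1."""
    return mean(r_pos) - mean(r_neg)
```

For example, `choice_probability([5, 7, 9, 6], [4, 6, 5, 3])` returns 0.875 and the corresponding CTA is 2.25 spikes. The exhaustive pairwise comparison is quadratic in the number of trials, which is harmless at typical trial counts; a rank-based Mann-Whitney computation gives the same value for larger samples.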

The CP and CTA quantify activity-choice covariations without assumptions about the underlying decision-making mechanisms. However, their interpretation has commonly (Nienborg et al., 2012) been informed by previous analytical and computational studies that assume a specific feedforward decision-threshold model of choice-related signals (Shadlen et al., 1996; Cohen and Newsome, 2009b). Haefner et al., 2013 used that model to derive an analytical expression for the CP that is valid under two assumptions often violated in practice: first, the model assumes a causally feedforward structure in which sensory responses cause the decision, and second, it assumes that both decisions are equally likely. However, informative stimuli make one choice more likely than the other, hampering the application of the analytical results to grand CPs and to detection tasks (Bosking and Maunsell, 2011; Smolyanskaya et al., 2015), which involve informative stimuli. Furthermore, decision-related signals have empirically been shown to reflect substantial feedback components (Nienborg and Cumming, 2009; Nienborg et al., 2012; Macke and Nienborg, 2019). We will next extend this previous model (Haefner et al., 2013) to obtain a general expression of the CP that is valid for informative stimuli, regardless of the feedforward or feedback origin of the dependencies between the neural responses and the decision variable.

We first consider the most generic model, in which we simply assume that the response $r_i$ of the sensory neurons covaries with the behavioral decision $D$, without making any assumption about the origin of that covariation (Figure 1A). We find that, to a first approximation (the exact solution is provided in Methods), the CP of cell $i$ captures the difference between the distributions $p(r_i \mid D = 1)$ and $p(r_i \mid D = -1)$ resulting from a difference in their means, and hence is related to the CTA:

$$CP_i \approx \frac{1}{2} + \frac{1}{2\sqrt{\pi}}\,\frac{CTA_i}{\sigma_{r_i}} \tag{4}$$

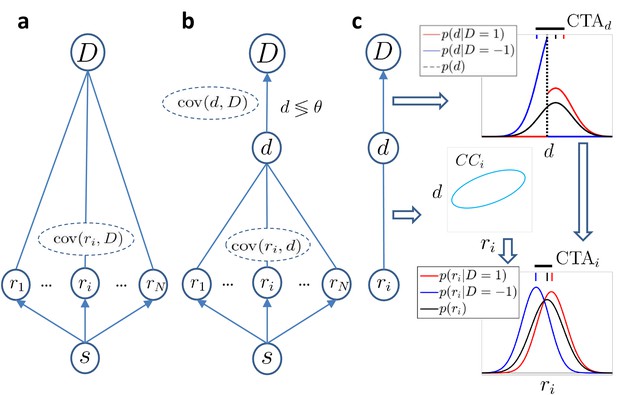

Figure 1. Models of choice probabilities.

Arrows indicate causal influences. Undirected edges indicate relationships that may be due to feedforward, feedback, and/or common inputs. (a) A model agnostic to the causal origin of the choice–response covariation: the response $\mathbf{r}$ of sensory neurons encoding a stimulus $s$ covaries with choice $D$. (b) Threshold model with a continuous decision variable $d$ mediating the relationship between responses and choice. The binary decision $D$ is made by comparing $d$ to a threshold θ. (c) The threshold mechanism (vertical dashed black line) dichotomizes the $d$-space, resulting in a difference between the means of the conditional distributions $p(d \mid D)$ associated with $D = \pm 1$ (red and blue vertical dashes on top of figure). This difference is quantified by $CTA_d$ (horizontal thick black line) and implies a non-zero difference between the choice-triggered average responses ($CTA_i$) in the presence of a correlation, $CC_i$, between $d$ and $r_i$.

The $CTA_i$ generically quantifies the linear dependencies between responses and choice, and this approximation of the CP does not depend on their feedforward or feedback origin (Figure 1A). We next add the assumption that the relationship between a neuron’s response and the choice is mediated by the continuous variable $d$, as commonly assumed by previous studies and described above (Figure 1B). This splits any correlation between the neural response $r_i$ and choice $D$ into the product of the two respective correlations: $\mathrm{corr}(r_i, D) = CC_i\,\mathrm{corr}(d, D)$, where $CC_i$ is the choice correlation as defined in Equation 2. It follows (see Methods) that:

$$\frac{CTA_i}{\sigma_{r_i}} = CC_i\,\frac{CTA_d}{\sigma_d} \tag{5}$$

where $CTA_d$ is the average difference in $d$ between the two choices, in analogy to $CTA_i$ for neuron $i$. Equation 5 describes how activity-choice covariations appear in the model (Figure 1C): the threshold mechanism dichotomizes the space of the decision variable, resulting in a different mean of $d$ for each choice, which is quantified in $CTA_d$. If the activity of cell $i$ is correlated with the decision variable (non-zero $CC_i$), $CTA_d$ is then reflected in the $CTA_i$ of the cell. In previous theoretical work (Haefner et al., 2013), the distribution of $d$ was assumed to be fixed and centered on the threshold value θ. Here, we remove that assumption and consider that $p(d)$ may not be centered on the threshold if the stimulus is informative, containing evidence in favor of one of the two choices, or if the choice is otherwise biased. In those cases, the normalized $CTA_d$ in Equation 5, namely $CTA_d/\sigma_d$, can be determined (see Materials and methods) in terms of the probability of choosing choice 1, $P(D = 1)$, which we call the ‘choice rate’, $p_{CR}$. Since the decision variable is determined as the combination of the responses of many cells, its distribution is well approximated by a Gaussian distribution, whose mean is no longer at the threshold but is determined by the stimulus content. With this assumption, the normalized $CTA_d$ for $p_{CR} = 0.5$ is equal to $2\sqrt{2/\pi}$, and for any other value it differs by a scaling factor $h(p_{CR})$:

$$\frac{CTA_d}{\sigma_d} = 2\sqrt{\frac{2}{\pi}}\,h(p_{CR}), \qquad h(p_{CR}) \equiv \frac{\phi\left(\Phi^{-1}(p_{CR})\right)}{4\,\phi(0)\,p_{CR}\,(1 - p_{CR})} \tag{6}$$

where $\phi$ is the density function of a zero-mean, unit-variance Gaussian distribution, and $\Phi^{-1}$ is the corresponding inverse cumulative distribution function. By construction, $h(p_{CR}) = 1$ for $p_{CR} = 0.5$, where it has its minimum. Given the factor $h(p_{CR})$, combining Equations 4 and 5 we can relate CP and CC across different choice rates $p_{CR}$, corresponding to different stimulus levels, irrespective of whether the CP is caused by feedforward or feedback signals. In the linear approximation (see Methods for the exact formula and derivation with the decision-threshold model), this relationship reads:

$$CP_i \approx \frac{1}{2} + \frac{\sqrt{2}}{\pi}\,h(p_{CR})\,CC_i(p_{CR}) \tag{7}$$

For equal fractions of choices, $p_{CR} = 0.5$, this CP expression corresponds to the linear approximation derived in Haefner et al., 2013. Extending the CP formula to $p_{CR} \neq 0.5$ also required us to make explicit the dependency of the choice correlations on the choice rate, $CC_i(p_{CR})$. Unlike $h(p_{CR})$, which is an effect of the decision-making threshold mechanism and is shared by all neurons, $CC_i(p_{CR})$ is specific to and generally different for each neuron, reflecting its role in the perceptual decision-making process. A CC stimulus dependence may arise as a result of stimulus-dependent decision feedback (Haefner et al., 2016; Bondy et al., 2018; Lange and Haefner, 2017), or from other sources of stimulus-dependent cross-neuronal correlations (Ponce-Alvarez et al., 2013; Orbán et al., 2016) such as shared gain fluctuations (Goris et al., 2014). In fact, we will show below that gain-induced stimulus-dependent cross-neuronal correlations account for observed features in our empirical data. We do not distinguish here between CC stimulus dependencies and a dependence of the CC on $p_{CR}$, because in general a change in the stimulus level results in a change of $p_{CR}$, and the two cannot be disentangled. However, $p_{CR}$ more generally depends on other factors such as the reward value, attention level, or arousal state, and in Equation 7 the separate dependencies on the stimulus and on $p_{CR}$ can be explicitly indicated as $CC_i(s, p_{CR})$ when the experimental paradigm allows these two influences to be separated.
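
The threshold-induced factor and the linear CP prediction are easy to evaluate numerically. The Python sketch below (function names hypothetical) implements $h(p_{CR})$ from Equation 6 and the linear approximation of Equation 7, and checks the step behind Equation 6: for a Gaussian decision variable thresholded so that $P(D = 1) = p_{CR}$, the difference between the truncated-Gaussian means on either side of the threshold equals $2\sqrt{2/\pi}\,h(p_{CR})$ in units of $\sigma_d$. It relies only on the standard library's `statistics.NormalDist`.

```python
import math
from statistics import NormalDist

STD = NormalDist()  # zero-mean, unit-variance Gaussian: phi = STD.pdf, Phi^-1 = STD.inv_cdf


def h(p_cr):
    """Threshold-induced factor of Equation 6; equals 1 at p_cr = 0.5."""
    if not 0.0 < p_cr < 1.0:
        raise ValueError("p_cr must lie strictly between 0 and 1")
    return STD.pdf(STD.inv_cdf(p_cr)) / (4.0 * STD.pdf(0.0) * p_cr * (1.0 - p_cr))


def normalized_cta_d(p_cr):
    """CTA_d / sigma_d for a Gaussian d thresholded so that P(D = 1) = p_cr."""
    z = STD.inv_cdf(1.0 - p_cr)              # standardized threshold position
    mean_above = STD.pdf(z) / p_cr            # E[z | z > threshold]  (D = 1)
    mean_below = -STD.pdf(z) / (1.0 - p_cr)   # E[z | z < threshold]  (D = -1)
    return mean_above - mean_below


def cp_linear(cc, p_cr):
    """Linear approximation of Equation 7 for a given choice correlation."""
    return 0.5 + math.sqrt(2.0) / math.pi * h(p_cr) * cc
```

With a stimulus-independent $CC_i = 0.2$, `cp_linear` gives about 0.590 at $p_{CR} = 0.5$ and about 0.610 at $p_{CR} = 0.9$, which illustrates how weak the threshold-induced modulation is for moderate choice rates.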

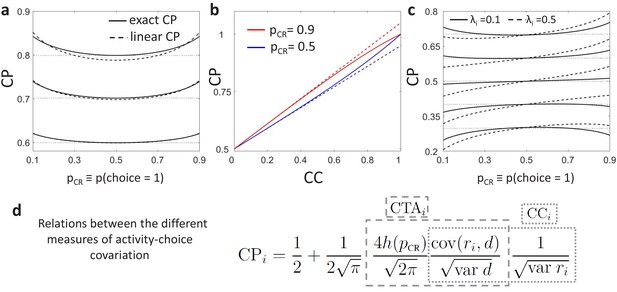

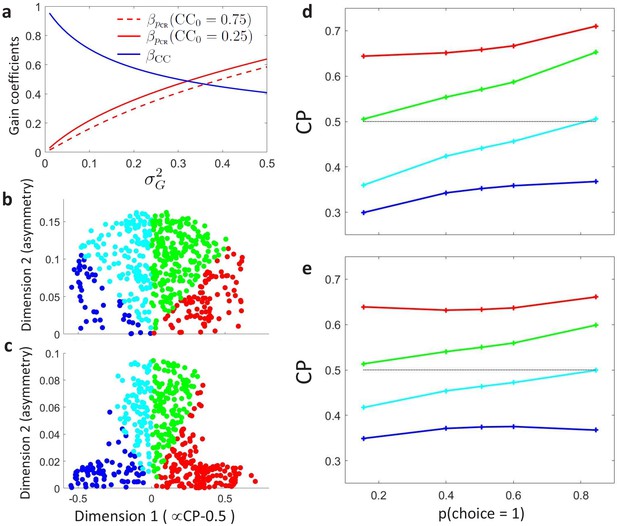

For simplicity, we presented above only the general relationship between the CP and CC in Equation 7, derived as a linear approximation for weak activity-choice covariations, as this is the regime relevant for single sensory neurons. See Methods for the exact analytical solution from the threshold model (Equation 16) and Appendix 1 for its derivation. Despite the assumption of weak activity-choice covariations, this approximation remains close to the exact solution over the empirically relevant range of CCs (Figure 2A–B). Below we will focus on a concrete type of CC stimulus dependence, namely one originating from gain fluctuations, but it is clear from Equation 7 that any CC stimulus dependence will modify the shape induced purely by the threshold effect. A summary of the overall relation between the CP, $CTA_i$, and $CC_i$ is provided in Figure 2D.
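
The accuracy of the linear approximation can also be probed by simulation. The sketch below is a toy version of the threshold model built on stated assumptions, not the derivation in Methods: the decision variable $d$ is a unit-variance Gaussian whose mean is shifted so that $P(d > θ) = p_{CR}$ with θ = 0, and the response $r_i$ is jointly Gaussian with $d$ with correlation $CC_i$. The function name `simulate_cp` and the trial count are hypothetical choices; the empirical CP from the simulated trials can then be compared with Equation 7.

```python
import bisect
import math
import random
from statistics import NormalDist

STD = NormalDist()


def h(p_cr):
    """Threshold-induced factor of Equation 6."""
    return STD.pdf(STD.inv_cdf(p_cr)) / (4.0 * STD.pdf(0.0) * p_cr * (1.0 - p_cr))


def simulate_cp(cc, p_cr, n_trials=40000, seed=0):
    """Empirical CP of a response correlated with d (corr = cc), threshold at 0."""
    rng = random.Random(seed)
    mu = STD.inv_cdf(p_cr)  # mean of d such that P(d > 0) = p_cr
    r_pos, r_neg = [], []
    for _ in range(n_trials):
        z = rng.gauss(0.0, 1.0)
        d = mu + z
        # unit-variance response with corr(r, d) = cc
        r = cc * z + math.sqrt(1.0 - cc * cc) * rng.gauss(0.0, 1.0)
        (r_pos if d > 0.0 else r_neg).append(r)
    r_neg.sort()
    # continuous responses: ties have probability zero, so count strict wins only
    wins = sum(bisect.bisect_left(r_neg, x) for x in r_pos)
    return wins / (len(r_pos) * len(r_neg))


def cp_linear(cc, p_cr):
    """Linear approximation of Equation 7."""
    return 0.5 + math.sqrt(2.0) / math.pi * h(p_cr) * cc
```

For $CC_i = 0.2$, the simulated CPs at $p_{CR} = 0.5$ and $0.9$ should agree with `cp_linear` up to Monte Carlo error, which grows as $p_{CR}$ moves away from 0.5 because fewer trials fall on the minority side of the threshold.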

Figure 2. Predictions for stimulus dependencies from the threshold model.

(a) CP dependence on $p_{CR}$ through the threshold-induced factor $h(p_{CR})$. Results are shown for three values of a stimulus-independent choice correlation, $CC_i$, isolating the shape of $h(p_{CR})$ from other stimulus dependencies. Solid curves represent the exact solution of the CP obtained from our model (see Methods, Equation 16) and dashed curves its linear approximation (Equation 7). (b) Comparison of the exact solution of the CP (solid) and its linear approximation (dashed) as a function of the magnitude of a stimulus-independent choice correlation. Results are shown for two values of $p_{CR}$, 0.5 and 0.9. (c) CP dependence on $p_{CR}$ when, together with the factor $h(p_{CR})$, stimulus dependencies also appear through stimulus-dependent choice correlations induced by response gain fluctuations (Equation 11). Results are shown for five baseline levels of activity-choice covariation (dotted horizontal lines) and, in each case, for two values of the fraction of the variance of a cell caused by the gain fluctuations (Methods). (d) Summary of the derived relationships, as provided by Equations 4–7.

The model provides a concrete prediction, testable with data, of a stereotyped dependence of CP on $p_{CR}$ through $h(p_{CR})$ when the choice-related signals are mediated by an intermediate decision variable $d$. First, under the assumption that CC is constant, and therefore $h(p_{CR})$ is the only source of CP dependence on $p_{CR}$, for a positive CC ($CC_i > 0$) the CP should have a minimum at $p_{CR} = 0.5$ and increase symmetrically as $p_{CR}$ deviates from 0.5 as the result of a change in the stimulus in either direction (Figure 2A). When the CC is negative ($CC_i < 0$), the CP should instead have a maximum at $p_{CR} = 0.5$ and analogously decrease symmetrically as $p_{CR}$ deviates from 0.5. Second, since the influence of $h(p_{CR})$ is multiplicative, it creates larger absolute differences in the CP across stimulus levels for cells with a stronger CP (either larger or smaller than 0.5). Third, the dependence on $p_{CR}$ is weak over a wide range of $p_{CR}$ values (Figure 2A), making it empirically detectable only when highly informative stimuli are included in the analysis to obtain $p_{CR}$ values very different from 0.5. However, for those values CP estimates are less reliable, because the choice is expected to be inconsistent with the sensory information in only a few trials, meaning that one of the two distributions $p(r_i \mid D = 1)$ or $p(r_i \mid D = -1)$ is poorly sampled. This means that to detect the $h(p_{CR})$ modulation for single cells, many trials would be needed for each value of $p_{CR}$ to obtain good CP estimates. Because $h(p_{CR})$ is common to all cells, averaging $CP(p_{CR})$ profiles across cells can also improve the estimation. This averaging may also help to isolate the $h(p_{CR})$ modulation, assuming that cell-specific stimulus dependencies introduced through choice correlations are heterogeneous across cells and average out. We refer to Appendix 1 for a detailed analysis of the statistical power for the detection of $h(p_{CR})$ as a function of the number of trials and cells used to estimate an average $CP(p_{CR})$ profile.
We will present below (Section ‘Stimulus dependencies of choice-related signals in the responses of MT cells’) evidence for the $h(p_{CR})$ modulation from a re-analysis of the data in Britten et al., 1996.

The structure of CP stimulus dependencies induced by response gain fluctuations

We will now focus on a concrete source of stimulus-dependent correlations that leads to a non-constant $CC_i(p_{CR})$, namely the effect of gain fluctuations on the stimulus-response relationship (Goris et al., 2014; Ecker et al., 2014; Kayser et al., 2015; Schölvinck et al., 2015). Goris et al., 2014 showed that 75% of the variability in the responses of monkey MT cells to drifting gratings could be explained by gain fluctuations. We derive the CP dependencies on $p_{CR}$ in a feedforward model of decision-making (Shadlen et al., 1996; Haefner et al., 2013) that also models the effect of gain fluctuations on the responses. The feedforward model considers a population of sensory neurons with tuning functions $\mathbf{f}(s)$, responses $\mathbf{r}$, and a covariance $\Sigma(s)$ of the neurons’ intrinsic variability. The responses are read out into the decision variable $d$ with a linear decoder

$$d = \mathbf{w}^\top \mathbf{r} = \sum_i w_i\, r_i \tag{8}$$

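where $\mathbf{w}$ are the read-out weights. The categorical choice $D$ is made by comparing $d$ to a threshold θ. With this model, the general expression of Equation 7 reduces to

$$CP_i \approx \frac{1}{2} + \frac{\sqrt{2}}{\pi}\,h(p_{CR})\,\frac{\left(\Sigma(s)\,\mathbf{w}\right)_i}{\sigma_{r_i}(s)\,\sigma_d(s)} \tag{9}$$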

where $\sigma^2_{r_i}(s) = \Sigma_{ii}(s)$ and $\sigma^2_d(s) = \mathbf{w}^\top \Sigma(s)\,\mathbf{w}$. This expression corresponds to the one derived by Haefner et al., 2013, except for the factor $h(p_{CR})$ and for the fact that we now explicitly indicate the dependence of the correlation structure on the stimulus. The expression relates the CP magnitude to single-unit properties such as the neurometric sensitivity, as well as to population properties, such as the decoder pooling size and the magnitude of the cross-neuronal correlations, which determine CC (Shadlen et al., 1996; Haefner et al., 2013). In particular, if the decoding weights are optimally tuned to the structure of the covariability at the decision boundary, the choice correlation of each cell becomes proportional to its neurometric sensitivity, $CC_i \propto f_i'(s_0)/\sigma_{r_i}(s_0)$ (Haefner et al., 2013), as has been experimentally observed (Britten et al., 1996; Parker and Newsome, 1998). While this feedforward model is generic, we concretely study CC stimulus dependencies induced by global response-gain fluctuations acting on cross-neuronal correlations. Following Goris et al., 2014, we modeled the response of cell $i$ in trial $k$ as its stimulus-driven response multiplicatively modulated by $g_k$, a gain factor shared by the population. We assume that the read-out weights are stimulus-independent. As a consequence, the covariance of population responses has a component due to the gain fluctuations:

$$\Sigma(s) = \sigma_g^2\,\mathbf{f}(s)\,\mathbf{f}(s)^\top + \Sigma^{(0)} \tag{10}$$

where $\sigma_g^2$ is the variance of the gain and $\Sigma^{(0)}$ is the covariance not associated with the gain, which for simplicity we assume to be stimulus independent. The component of the cross-neuronal covariance matrix induced by gain fluctuations is proportional to the product of the tuning curves, $\mathbf{f}(s)\,\mathbf{f}(s)^\top$. A deviation of the stimulus from the uninformative stimulus $s_0$ changes the population firing rates, which in turn changes the variability of the responses, the variance of the decoder, and their covariance. Because the variance of the decoder and the covariance both depend on the concrete form of the read-out weights, the effect of gain-induced stimulus dependencies on the CP is specific to each decoder. Under the assumption of an optimal linear decoder at the decision boundary $s_0$ ($\mathbf{w} \propto \Sigma^{-1}(s_0)\,\mathbf{f}'(s_0)$), we obtain an approximation of the CC dependence on the stimulus deviation from $s_0$ (see Methods for details):

where the slope is determined by a cell-specific coefficient that depends, among other factors, on the fraction of the variance of cell $i$ caused by the gain fluctuations (Methods). The choice rate is determined by the stimulus as characterized by the psychometric function. For the form of the slope coefficient obtained with an optimal decoder, all the factors contributing to it are positive (Figure 2C). In Appendix 4 we further describe analytically how gain fluctuations introduce CP stimulus dependencies not only for an optimal decoder, but also for any unbiased decoder. Unlike the factor $h(p_{CR})$, the pattern of $CP(p_{CR})$ profiles produced by the gain fluctuations is cell-specific, with a stronger asymmetric component for cells with a larger fraction of gain-induced variance (Figure 2C). Furthermore, while the direction of the multiplicative modulation by $h(p_{CR})$ depends on whether $CC > 0$ or $CC < 0$, the gain-induced contribution in Equation 11 is additive. As seen in Figure 2C, for cells with a weak activity-choice covariation for uninformative stimuli ($CP$ at $p_{CR} = 0.5$ close to 0.5), this implies that the CP of a neuron can actually change from below 0.5 to above 0.5 across the stimulus range presented in the experiment.
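
The gain mechanism of Equations 9 and 10 can be explored in a small numerical sketch. Everything about the population is hypothetical: three neurons with linear tuning $f_i(s) = \mathrm{base}_i + \mathrm{slope}_i\,s$, a diagonal stimulus-independent $\Sigma^{(0)}$, and a gain variance $\sigma_g^2 = 0.05$. The read-out weights are fixed at the optimal linear decoder for $s_0 = 0$, $\mathbf{w} = \Sigma(s_0)^{-1}\mathbf{f}'(s_0)$, computed with the Sherman-Morrison formula because $\Sigma(s_0)$ is diagonal plus rank one, and the choice correlations $CC_i(s) = (\Sigma(s)\mathbf{w})_i / (\sigma_{r_i}(s)\,\sigma_d(s))$ are then evaluated at other stimulus values.

```python
import math

# Hypothetical population: linear tuning f_i(s) = BASE_i + SLOPE_i * s.
BASE = [20.0, 15.0, 10.0]
SLOPE = [10.0, 4.0, -6.0]
NOISE_VAR = [20.0, 15.0, 10.0]  # diagonal, stimulus-independent Sigma^(0)


def tuning(s):
    return [b + k * s for b, k in zip(BASE, SLOPE)]


def gain_covariance(f, var_gain, noise_var):
    """Equation 10 with diagonal Sigma^(0): var_gain * f f^T + diag(noise_var)."""
    n = len(f)
    return [[var_gain * f[i] * f[j] + (noise_var[i] if i == j else 0.0)
             for j in range(n)] for i in range(n)]


def optimal_weights(f0, df0, var_gain, noise_var):
    """w = Sigma(s0)^-1 f'(s0), inverting diag + rank one via Sherman-Morrison."""
    dinv_df = [g / v for g, v in zip(df0, noise_var)]   # D^-1 f'
    dinv_f = [x / v for x, v in zip(f0, noise_var)]     # D^-1 f
    num = sum(x * y for x, y in zip(f0, dinv_df))       # f^T D^-1 f'
    den = 1.0 + var_gain * sum(x * y for x, y in zip(f0, dinv_f))
    return [a - var_gain * b * num / den for a, b in zip(dinv_df, dinv_f)]


def choice_correlations(cov, w):
    """CC_i = (Sigma w)_i / sqrt(Sigma_ii * w^T Sigma w), as in Equation 9."""
    sigma_w = [sum(c * wj for c, wj in zip(row, w)) for row in cov]
    var_d = sum(wi * x for wi, x in zip(w, sigma_w))
    return [x / math.sqrt(cov[i][i] * var_d) for i, x in enumerate(sigma_w)]


def cc_profile(s_values, var_gain, s0=0.0):
    """CC of each neuron across stimuli, with weights fixed at the optimum for s0."""
    w = optimal_weights(tuning(s0), SLOPE, var_gain, NOISE_VAR)
    return {s: choice_correlations(gain_covariance(tuning(s), var_gain, NOISE_VAR), w)
            for s in s_values}
```

At $s_0$ the resulting $CC_i$ are proportional to $f_i'(s_0)/\sigma_{r_i}$, as expected for an optimal decoder. Moving the stimulus away from $s_0$ changes the $CC_i$ cell by cell (in this example, the CC of the first, positively tuned neuron rises for $s > 0$), whereas setting $\sigma_g^2 = 0$ makes them stimulus independent.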

Stimulus dependencies of choice-related signals in the responses of MT cells

In light of our findings above, we re-analyzed the classic Britten et al., 1996 data containing responses of neurons in area MT during a coarse motion direction discrimination task (see Methods for a description of the data set). Our objective is to identify any patterns of CP dependence on the choice rate or stimulus level. First, we describe our results testing for the threshold-induced CP stimulus dependence, $h(p_{CR})$, and then, more generally, we characterize the patterns found in the data using clustering analysis. Finally, as an alternative to CP analysis, we show how to extend Generalized Linear Models (GLMs) of neural activity to include stimulus-choice interaction terms that incorporate the stimulus dependencies of activity-choice covariations derived with our theoretical approach and found above in the MT data.
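
To make the idea of a stimulus-choice interaction concrete, the following minimal Python sketch shows the predictors such a GLM could contain. It is a hypothetical illustration, not the model specification used in our analyses: it assumes a Poisson GLM with log link, a signed coherence $s$, choice coded as $D = \pm 1$, and an interaction predictor $s \cdot D$, with weights ordered as $(\beta_0, \beta_s, \beta_D, \beta_{sD})$. With a nonzero interaction weight, the choice-related log-rate difference, $2(\beta_D + \beta_{sD}\,s)$, varies with the stimulus level instead of being a single constant.

```python
import math


def design_row(coherence, choice):
    """Predictors for one trial: intercept, stimulus, choice, stimulus x choice."""
    if choice not in (-1, 1):
        raise ValueError("choice must be coded as -1 or +1")
    return [1.0, coherence, float(choice), coherence * choice]


def linear_predictor(beta, row):
    """Weighted sum of the predictors (log of the expected spike count)."""
    return sum(b * x for b, x in zip(beta, row))


def expected_count(beta, coherence, choice):
    """Poisson GLM with log link: expected spike count for one trial."""
    return math.exp(linear_predictor(beta, design_row(coherence, choice)))


def choice_modulation(beta, coherence):
    """Log-rate difference between D = +1 and D = -1 at a given coherence."""
    return (linear_predictor(beta, design_row(coherence, 1))
            - linear_predictor(beta, design_row(coherence, -1)))
```

Fitting such a model to data would use a standard Poisson GLM routine; the sketch only shows why the interaction column is what allows the choice-related signal to change across stimulus levels.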

Testing the presence of a threshold-induced CP stimulus dependence in experimental data

We start by describing how to analyze within-cell $CP(p_{CR})$ profiles to test for the existence of the threshold-induced $h(p_{CR})$ modulation. The theoretically derived properties of $h(p_{CR})$ suggest several empirical signatures that will be reflected in the within-cell $CP(p_{CR})$ profiles. First, because $h(p_{CR})$ introduces a multiplicative modulation of the choice correlation, for informative stimuli it leads to an increase of the CP for cells with positive choice correlation ($CC_i > 0$) and to a decrease for cells with negative choice correlation ($CC_i < 0$). Second, because $h(p_{CR})$ is multiplicative, the absolute magnitude of the modulation will be higher for cells with stronger choice correlation, that is, with CPs most different from 0.5. Third, the effect of $h(p_{CR})$ is strongest when one choice dominates and hence most noticeable for highly informative stimuli.

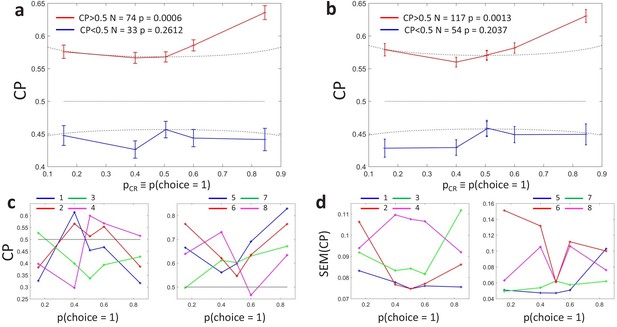

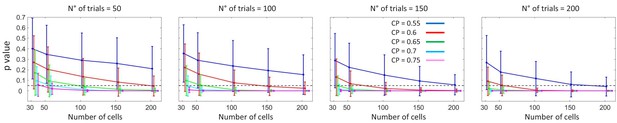

These properties of $h(p_{CR})$ indicate that, to detect this modulation, it is necessary to examine within-cell $CP(p_{CR})$ profiles isolated from across-cell heterogeneity in the magnitude of the CP values. Ideally, we would calculate a $CP(p_{CR})$ profile for each cell and analyze the shape of these single-cell profiles. However, given the available number of trials, estimates of $CP(p_{CR})$ profiles for single cells are expected to be noisy. The estimation error of the CP is higher when $p_{CR}$ is close to 0 or 1, the same values for which the $h(p_{CR})$ modulation would be most noticeable. The standard error of the CP can be approximated as $\sigma_{CP} \approx 1/\sqrt{12\,n\,p_{CR}\,(1 - p_{CR})}$ (Bamber, 1975; Hanley and McNeil, 1982; see Methods), where $n$ is the number of trials. In the Britten et al. data set the number of trials varies across stimulus levels, and for highly informative stimuli only three trials are expected for the less frequent choice, which yields a large standard error. As can be seen from Figure 2A, this error exceeds the order of magnitude of the CP modulations expected from $h(p_{CR})$. This means that we need to combine CP estimates from adjacent $p_{CR}$ values, and/or combine estimated $CP(p_{CR})$ profiles across neurons, to reduce the standard error (see Appendix 1 for a detailed analysis of the statistical power for the detection of $h(p_{CR})$).
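
The orders of magnitude involved can be checked with the standard-error approximation above. The sketch below (hypothetical function names) compares the standard error of a CP estimate at a given $p_{CR}$ with the modulation predicted by Equation 7 for a constant $CC_i$, and returns how many cells would be needed for the standard error of the averaged CP to fall below that modulation. It assumes independent and equally reliable cells, so that averaging $N$ cells divides the standard error by $\sqrt{N}$.

```python
import math
from statistics import NormalDist

STD = NormalDist()


def cp_standard_error(n_trials, p_cr):
    """Approximate SE of a CP estimate near CP = 0.5: 1 / sqrt(12 n p (1 - p))."""
    return 1.0 / math.sqrt(12.0 * n_trials * p_cr * (1.0 - p_cr))


def h(p_cr):
    """Threshold-induced factor of Equation 6 (equals 1 at p_cr = 0.5)."""
    return STD.pdf(STD.inv_cdf(p_cr)) / (4.0 * STD.pdf(0.0) * p_cr * (1.0 - p_cr))


def expected_modulation(cc, p_cr):
    """CP(p_cr) - CP(0.5) from Equation 7 for a stimulus-independent CC."""
    return math.sqrt(2.0) / math.pi * cc * (h(p_cr) - 1.0)


def cells_needed(cc, p_cr, n_trials):
    """Smallest N for which SE / sqrt(N) drops below the expected modulation."""
    delta = abs(expected_modulation(cc, p_cr))
    if delta == 0.0:
        raise ValueError("no modulation is expected at this choice rate")
    se = cp_standard_error(n_trials, p_cr)
    return math.ceil((se / delta) ** 2)
```

For instance, with $CC_i = 0.2$, $p_{CR} = 0.9$ and 40 trials per stimulus level (numbers chosen for illustration, not taken from the data set), several dozen cells are required, which is why the analysis below pools CPs across stimulus levels and across neurons.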

When averaging CPs across neurons, two considerations are important. First, cells with a CP higher or lower than 0.5 should be separated, given that the sign of the CC leads to an inversion of the profile resulting from $h(p_{CR})$ (Equation 7). If they are not separated, the $h(p_{CR})$ dependence would average out, or the average profile would reflect the proportion of cells with CPs higher or lower than 0.5 in the data set. Second, the average should correspond to an average across cells of within-cell $CP(p_{CR})$ profiles, and hence it should only include cells for which a full profile can be calculated. This is important because for each cell the $h(p_{CR})$ modulation is relative to the cell’s CP for uninformative stimuli. If a different subset of cells were included in the average of the CP at each $p_{CR}$ value, the resulting shape of the averaged CPs across $p_{CR}$ values would not be an average of within-cell profiles. Instead, it would reflect the heterogeneity in the magnitude of the CP values across the subsets of cells averaged at each $p_{CR}$ value. In the single-cell recordings from Britten et al., the range of stimulus levels used varies across neurons, and for a substantial part of the cells a full profile cannot be constructed. Following the second consideration, those cells were excluded from the analysis so that they would not contribute to the average only at certain $p_{CR}$ values.

We derived the following refined procedure to analyze $CP(p_{CR})$ profiles. As a first step, we constructed a $CP(p_{CR})$ profile for each cell. First, for each cell and each stimulus coherence level, we calculated a CP estimate if at least four trials were available for each decision. For the experimental data set, CPs are always estimated from their definition (Equation 1), and we only use the theoretical expression of $h(p_{CR})$ to fit the modulation of the experimentally estimated profiles. Second, as a first way to improve the CP estimates, we binned $p_{CR}$ values into five bins and assigned stimulus coherence levels to the bins according to the psychometric function that maps stimulus levels to $p_{CR}$, with the central bin containing the trials from the zero-signal stimulus. A single CP value per bin for each cell was then obtained as a weighted average of the CPs from the stimulus levels assigned to that bin. The weights were inversely proportional to the standard error of the estimates, giving more weight to the most reliable CPs (see Methods). All the results presented hereafter are robust to the choice of the minimum number of trials and of the binning intervals. Unless otherwise stated, all following analyses include all the cells for which we had data to compute CPs in all five bins, thus allowing us to estimate a full within-cell $CP(p_{CR})$ profile. As a second step, we averaged the within-cell profiles across cells, taking into account the two considerations above. As before, averages were weighted by inverse estimation errors.
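
The two-step procedure can be summarized in code. The sketch below is a simplified reading of it under stated assumptions: the five $p_{CR}$ bin edges in `BIN_EDGES` are hypothetical, each CP's standard error uses the approximation above with $p_{CR}$ replaced by the observed fraction of $D = 1$ trials, the standard error of a weighted average assumes independent estimates, and cells are assigned to the two groups by the mean of their binned CPs.

```python
import math
from statistics import mean

# Hypothetical p_CR bin edges; the central bin is meant to hold the zero-signal stimulus.
BIN_EDGES = (0.0, 0.2, 0.45, 0.55, 0.8, 1.0)
MIN_TRIALS = 4  # minimum number of trials per choice for a CP estimate


def choice_probability(r_pos, r_neg):
    """CP from spike counts, with ties counted as one half."""
    wins = sum((a > b) + 0.5 * (a == b) for a in r_pos for b in r_neg)
    return wins / (len(r_pos) * len(r_neg))


def cp_se(n_pos, n_neg):
    """Approximate SE of a CP estimate from the number of trials per choice."""
    n = n_pos + n_neg
    p = n_pos / n
    return math.sqrt(1.0 / (12.0 * n * p * (1.0 - p)))


def bin_index(p_cr):
    for k in range(len(BIN_EDGES) - 1):
        if BIN_EDGES[k] <= p_cr < BIN_EDGES[k + 1]:
            return k
    return len(BIN_EDGES) - 2  # p_cr == 1.0 falls in the last bin


def weighted(values, ses):
    """Inverse-SE weighted mean and its SE (estimates assumed independent)."""
    w = [1.0 / s for s in ses]
    total = sum(w)
    avg = sum(wi * v for wi, v in zip(w, values)) / total
    se = math.sqrt(sum((wi * s) ** 2 for wi, s in zip(w, ses))) / total
    return avg, se


def cell_profile(levels):
    """levels: (p_cr, r_pos, r_neg) per stimulus level -> per-bin (CP, SE), or None."""
    per_bin = {}
    for p_cr, r_pos, r_neg in levels:
        if len(r_pos) < MIN_TRIALS or len(r_neg) < MIN_TRIALS:
            continue
        cp = choice_probability(r_pos, r_neg)
        per_bin.setdefault(bin_index(p_cr), []).append((cp, cp_se(len(r_pos), len(r_neg))))
    n_bins = len(BIN_EDGES) - 1
    if len(per_bin) < n_bins:
        return None  # incomplete profile: excluded so it cannot bias only some bins
    return [weighted(*zip(*per_bin[k])) for k in range(n_bins)]


def population_profiles(cells):
    """Average complete within-cell profiles, separately for mean CP above / at-or-below 0.5."""
    groups = {"above": [], "below": []}
    for levels in cells:
        prof = cell_profile(levels)
        if prof is not None:
            key = "above" if mean(cp for cp, _ in prof) > 0.5 else "below"
            groups[key].append(prof)
    return {key: [weighted([p[k][0] for p in profs], [p[k][1] for p in profs])[0]
                  for k in range(len(BIN_EDGES) - 1)]
            for key, profs in groups.items() if profs}
```

Excluding incomplete profiles inside `cell_profile` is what implements the second consideration above: every cell that enters the average contributes to all five bins.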

Figure 3A shows the averaged $CP(p_{CR})$ profiles. To assess the statistical significance of the CP dependence on $p_{CR}$, we developed a surrogate method to test whether a pattern consistent with the predicted CP increase for informative stimuli could appear under the null hypothesis that the CP has a constant value independent of $p_{CR}$ (see Methods). For the cells with average CP higher than 0.5, we found that the modulation of the CP was significant, with higher CPs obtained for $p_{CR}$ close to 0 or 1, in agreement with the model. For cells with average CP lower than 0.5, the modulation was not significant. While a true absence of modulation would imply that the choice-related signals in these neurons are not mediated by a continuous intermediate decision variable but may instead be due, for example, to categorical feedback, we note that this statistical test has lower power for this group, both because it contains fewer neurons and because the expected effect size is smaller. First, there were 74 cells with CP higher than 0.5 but only 33 with CP lower than 0.5, meaning that the estimation error is larger for the average profile of the cells with $CP < 0.5$. Second, as the modulation predicted by $h(p_{CR})$ is multiplicative, its impact is expected to be smaller when the magnitude of $CC_i$ is smaller. Figure 3A shows that CP values are on average closer to 0.5 for the cells with $CP < 0.5$, in agreement with Figure 5 of Britten et al., 1996. This means that fewer cells in the group with $CP < 0.5$ have choice-related responses. Therefore, our inability to validate the prediction of an inverted symmetric modulation for the cells with $CP < 0.5$, relative to the cells with $CP > 0.5$, is not strong evidence against the existence of a threshold-induced CP stimulus dependence. We further confirmed the robustness of the results in a wider set of cells. For this purpose, we repeated the analysis separately for the subset of cells with a computable CP in the three bins with $p_{CR} \le 0.5$ and for the subset with a computable CP in the three bins with $p_{CR} \ge 0.5$.
Also in this case the observed pattern was significant () for cells with average CP higher than 0.5 (Figure 3B, ), and non-significant for cells with CP lower than 0.5 (p=0.20).
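One simple way to build surrogates under the constant-CP null is sketched below. It assumes that each cell's true CP equals its inverse-SE weighted mean across bins and that each bin estimate carries Gaussian noise with the estimated standard error. The test statistic (the correlation between the weighted average profile and a predicted shape template), the template values, and all function names are illustrative assumptions; the procedure actually used is described in the Methods. For cells with CP < 0.5 the template would be negated, since the predicted modulation is inverted.

```python
import numpy as np


def weighted_mean_profile(profiles, ses):
    """Across-cell average of CP profiles, weighted by inverse standard errors."""
    w = 1.0 / ses
    return np.sum(w * profiles, axis=0) / np.sum(w, axis=0)


def shape_statistic(profiles, ses, template):
    """Pearson correlation between the average profile and the predicted shape."""
    m = weighted_mean_profile(profiles, ses)
    if np.std(m) == 0:
        return 0.0  # a flat average profile carries no shape information
    return float(np.corrcoef(m, template)[0, 1])


def surrogate_pvalue(profiles, ses, template, n_surrogates=1000, seed=0):
    """One-sided p-value of the shape statistic against constant-CP surrogates."""
    profiles, ses = np.asarray(profiles, float), np.asarray(ses, float)
    rng = np.random.default_rng(seed)
    observed = shape_statistic(profiles, ses, template)
    # Null hypothesis: each cell has a constant CP (its inverse-SE weighted mean over bins),
    # measured with Gaussian noise of the estimated SE in every bin.
    w = 1.0 / ses
    constant = np.sum(w * profiles, axis=1, keepdims=True) / np.sum(w, axis=1, keepdims=True)
    null = np.array([
        shape_statistic(constant + rng.normal(0.0, ses), ses, template)
        for _ in range(n_surrogates)
    ])
    return (1 + np.sum(null >= observed)) / (1 + n_surrogates)
```

In practice the template could be the predicted threshold-induced factor evaluated at the bin centers; the U-shaped vector used to check the sketch is only a placeholder.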

Choice probability as a function of the choice rate for MT cells during a motion direction discrimination task (Britten et al., 1996).

(a) Average CP as a function of p_CR. The average across cells was calculated separately for cells with average CP higher or lower than 0.5. Dotted curves reflect the relationship predicted by the threshold-induced factor (Equation 6). Significance of the stimulus dependencies was evaluated against the null hypothesis of a constant CP value using surrogate data (see Methods). (b) Same analysis but with a less strict inclusion criterion (see main text). (c) CP(p_CR) profiles for four example cells with average CP lower and higher than 0.5, respectively. (d) Standard errors of the estimated CP for the example cells as a function of p_CR.

Interestingly, the significant dependence identified for the cells with CP > 0.5 goes beyond the symmetric threshold-induced shape, both in magnitude and in shape (Figure 2A), since the increase is bigger for p_CR values close to 1 than for values close to 0. This implies that the choice correlation of each neuron must systematically change with p_CR as well, contributing to the overall CP stimulus dependency observed. In particular, the observed average profile indicates that the CP increase is higher for p_CR > 0.5. This asymmetry is consistent with results reported in Britten et al., 1996, who found a significant but modest effect of coherence direction on the CP (see their Figure 3). By experimental design, the motion direction corresponding to the choice whose probability is p_CR was set for each cell separately to coincide with the cell's most responsive direction. The asymmetry therefore indicates that CPs tend to increase more when the stimulus provides evidence for the direction eliciting a higher response. However, Britten et al., 1996 found no significant relation between the global magnitude of the firing rate and the CP (see their Figure 3), and we confirmed this lack of relation specifically for the subset of cells with CP > 0.5 (no significant correlation between average rate and average CP values). This rules out the possibility that higher CPs for high p_CR values are due only to higher responses, and suggests a richer underlying structure of CP(p_CR) patterns, which we investigate next using cluster analysis to identify the predominant patterns shared by the within-cell profiles.

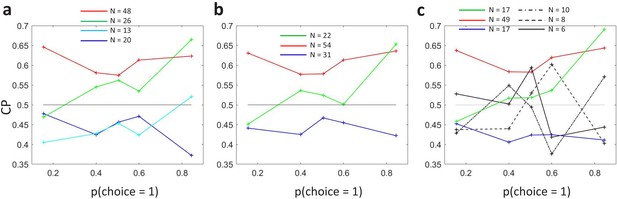

Characterizing the experimental patterns of CP stimulus dependencies with cluster analysis

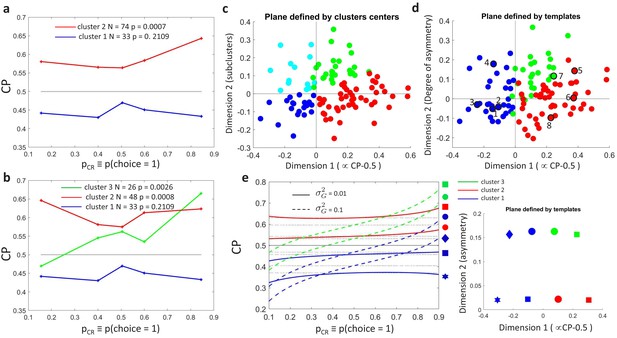

We carried out unsupervised k-means clustering (Bishop, 2006) to examine the CP(p_CR) patterns without a priori assumptions about a modulation associated with the threshold effect. Clustering was performed on the within-cell CP(p_CR) profiles, with each cell represented as a vector in a five-dimensional space, where five is the number of bins used to summarize the data as described above. To consider both the shape and the sign of the modulation, distances between neurons were calculated as the cosine distance between their profiles (one minus the cosine of the angle between the two vectors). Clustering was performed for a range of specified numbers of clusters. Specifying two clusters, we naturally recovered the distinction between cells with CP higher or lower than 0.5 (Figure 4A). The statistical significance of any p_CR modulation was again assessed by constructing surrogate profiles and repeating the clustering analysis on those surrogates. As before, a significant dependence of the CP on p_CR was found only for the cluster associated with CP higher than 0.5.
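k-means with cosine distance is equivalent to spherical k-means on unit-normalized vectors: each point is assigned to the center with the largest cosine similarity, and each center is the normalized sum of its members. The sketch below assumes that profiles are centered at 0.5 before normalization, so that the cosine distance reflects the sign of the choice-related signal; this centering, the random restarts, and the function name `cosine_kmeans` are assumptions rather than details from the text.

```python
import numpy as np


def cosine_kmeans(profiles, k, n_init=20, n_iter=100, seed=0):
    """Spherical k-means: k-means with cosine distance on (CP - 0.5) profiles."""
    rng = np.random.default_rng(seed)
    x = np.asarray(profiles, dtype=float) - 0.5  # centre at chance so that the sign matters
    x /= np.maximum(np.linalg.norm(x, axis=1, keepdims=True), 1e-12)
    best = (np.inf, None, None)
    for _ in range(n_init):
        centers = x[rng.choice(len(x), size=k, replace=False)]
        for _ in range(n_iter):
            labels = np.argmax(x @ centers.T, axis=1)  # max cosine similarity = min cosine distance
            new = np.array([x[labels == j].sum(axis=0) if np.any(labels == j) else centers[j]
                            for j in range(k)])
            new /= np.maximum(np.linalg.norm(new, axis=1, keepdims=True), 1e-12)
            converged = np.allclose(new, centers)
            centers = new
            if converged:
                break
        sim = x @ centers.T
        labels = np.argmax(sim, axis=1)  # labels consistent with the final centers
        cost = np.sum(1.0 - sim[np.arange(len(x)), labels])  # total cosine distance
        if cost < best[0]:
            best = (cost, labels, centers)
    return best[1], best[2]
```

The returned centers live in the normalized space; mean CP profiles per cluster, of the kind plotted as cluster centers, can be obtained by averaging the original profiles within each label.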

Clustering analysis of choice probability as a function of p_CR.

(a–b) CP as a function of p_CR for clusters of the MT cells determined by k-means clustering. Each profile corresponds to the center of a cluster. Significance of the modulation was quantified as in Figure 3. (a) Two clusters for all cells. (b) Further subclustering of cells with average CP > 0.5 into two subclusters. (c) Representation of the profiles in a two-dimensional space spanned by the cluster means. The horizontal axis is defined by clusters 1 and 2 and is closely aligned with the departure of the average CP from 0.5. The vertical axis is defined as perpendicular to the horizontal axis in the plane defined by the subcluster means. Colors correspond to the clusters of panel b, with blue and cyan further indicating subclusters of cells with average CP < 0.5 (see Appendix 3—figure 1A). (d) Space defined by projection onto two templates: a constant relationship (x-axis) representing the overall CP magnitude, and a monotonic relationship with slope 1 (y-axis) representing CP asymmetry. Colors correspond to the clusters of panel b and numbers indicate example cells shown in Figure 3C. (e) Modeling the influence of neuronal gain modulation on CP(p_CR) profiles, for different combinations of the strength of the gain fluctuations and of the choice correlation that would be obtained for the uninformative stimulus s0 with no gain fluctuations. We display CP(p_CR) for four values of this baseline choice correlation (curves vertically separated) and two values of the gain-fluctuation strength (solid vs dashed). Each curve corresponds to a point in the two-dimensional space defined by the symmetric and asymmetric templates introduced in panel d. See Methods for model details.

As mentioned above, the divergence of the average profile for cells with CP > 0.5 from the threshold-induced prediction suggests that cell-specific modulations are introduced through the choice correlation. While the variability of individual profiles (Figure 3C) is expected to largely reflect the high estimation errors of the CP for single cells (Figure 3D), the presence of subclusters can reveal patterns common across cells.

We next examined subclusters within the cluster with a significant CP(p_CR) profile (CP > 0.5), excluding from this analysis the cells in the CP < 0.5 cluster (analogous results were found when increasing the number of clusters in a nonhierarchical way, without a priori excluding these cells; see Appendix 3—figure 1A). Average profiles obtained when inferring two subclusters of cells with CP > 0.5 are shown in Figure 4B. For both subclusters (clusters 2 and 3 in Figure 4B), the p_CR dependence is significant. The larger cluster has a more symmetric shape of dependence on p_CR, with an increase of CP in both directions when the stimulus is informative, consistent with the prediction of a threshold-induced CP stimulus dependence. For the smaller cluster, the dependence is asymmetric, with a CP increase when the stimulus direction is consistent with the preferred direction of the cells and a decrease in the opposite direction. We verified that the firing rates of the cells in the two subclusters do not differ significantly (Wilcoxon rank-sum test). The monotonic shape of the second subcluster mirrors the dependency produced by response gain fluctuations, as predicted by the gain model described above. This suggests that the neurons in this subcluster differ from those in the other subcluster by a substantially larger gain-induced variability, a testable prediction for future experiments that is further discussed below.

Introducing the subclusters allows each neuron's p_CR dependency to be represented in the two-dimensional space (Figure 4C) spanned by the mean profiles of the three clusters. The horizontal axis corresponds to the separation between the two initial clusters and is closely aligned with the departure of the average CP from 0.5. The vertical axis is defined by the vectors corresponding to the centers of the two subclusters and is hence determined separately for the cells with average CP higher and lower than 0.5 (see Methods for details, and Appendix 3—figure 1A). The vertical axis is associated with the degree to which the dependence is symmetric or asymmetric with respect to p_CR = 0.5. Cells whose CP increases with motion coherence in their preferred direction lie in the upper half-plane. To further support this interpretation of the axis, we repeated the clustering procedure, replacing the nonparametric k-means procedure with a parametric procedure that defines the subclusters with a symmetric and an asymmetric template, respectively. The data are distributed similarly in both spaces (Figure 4C–D).
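The template space can be illustrated by projecting each profile's deviation from 0.5 onto a constant template and a slope-1 template. The bin centers, the centering of the slope template (which makes the two axes orthogonal), and the normalization by the template norms are assumptions made for this sketch, and `template_coordinates` is a hypothetical name.

```python
import numpy as np


def template_coordinates(profile, bin_centers):
    """Coordinates of a CP(p_CR) profile on a constant and a slope-1 template."""
    dev = np.asarray(profile, dtype=float) - 0.5  # deviation of the CP from chance
    p = np.asarray(bin_centers, dtype=float)
    constant = np.ones_like(p)                    # symmetric template: overall CP magnitude
    slope = p - p.mean()                          # slope-1 template, centred to be orthogonal to the constant one
    x = dev @ constant / np.linalg.norm(constant)
    y = dev @ slope / np.linalg.norm(slope)
    return float(x), float(y)
```

A flat profile above 0.5 lands on the positive x-axis with y close to 0, whereas a profile rising with p_CR has a positive y coordinate, matching the reading of the upper half-plane as asymmetric in favor of the preferred direction.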

Similar results were also obtained when increasing the number of clusters non-hierarchically. Introducing a third cluster for all cells leaves the cluster of cells with CP lower than 0.5 almost unaltered (Appendix 3—figure 1B), while the cluster of cells with CP higher than 0.5 splits into two subclusters analogous to the ones found from the cells with CP higher than 0.5 alone. The distinction between cells with more symmetric and more asymmetric dependencies is robust to the selection of a larger number of clusters; that is, clusters with these types of dependencies remain large when allowing for the discrimination of more patterns (Appendix 3—figure 1C). However, we do not claim that the variety of profiles across cells can be reduced to three separable clusters. As reflected in the distributions in Figure 4C–D, the clusters are not neatly separable. Indeed, a richer variety of profiles would be expected if the properties of CP(p_CR) profiles across cells were associated with their tuning properties and with the structure of feedback projections, as we further argue in the Discussion. The predominance of a symmetric and an asymmetric pattern only reflects which shapes are most commonly shared across cells.

This clustering analysis confirms the presence of shared patterns of CP stimulus dependence across cells, whose shapes are compatible with the analytical predictions from the threshold- and gain-related dependencies. The symmetric component of CP stimulus dependence is congruent with the threshold-induced factor (Equation 6), albeit with a larger magnitude than predicted (Figures 2A and 3A, and additional analysis of the statistical power in Appendix 1). This stronger modulation suggests an additional symmetric contribution from the choice correlation and/or a dynamic feedback reinforcing the modulation for highly informative stimuli. However, while the cluster analysis separates the predominant patterns, the Britten et al. data lack the statistical power to further distinguish between the threshold-induced factor and other symmetric contributions with a similar shape.

Gain-induced CP stimulus dependencies in the MT responses

Three key features of the p_CR dependencies observed for the MT cells are qualitatively explained by introducing shared gain fluctuations in the decision threshold model described above (Figure 4E): the first two manifest themselves at the population (cluster) level and the third at the level of individual neurons. First, shared gain variability predicts the existence of the asymmetric CP stimulus dependence seen in cluster 3 (Equation 11 and Figure 2C). Second, the average CP of the asymmetric cluster 3 is lower than that of the symmetric cluster 2 (compare the red and green profiles in Figure 4B and E). Third, if gain variability is indeed a driving factor for the observed asymmetry in cluster 3, then within this cluster neurons with more gain variability should also have a steeper CP(p_CR) profile, a prediction we could confirm as described in the next paragraph.

To test this prediction, for each neuron in cluster 3 we first computed the degree of asymmetry of its CP(p_CR) profile directly from the data, by fitting a quadratic function to the profile (Methods). Next, and independently, we used the method of Goris et al., 2014 to estimate the amount of gain variability for each neuron. Knowing each neuron's gain variability allowed us to predict its degree of asymmetry (the slope of CP(p_CR) determined by the gain variability, using Equation 11). We indeed found a significant correlation between the predicted and the observed slopes, supporting the conclusion that shared gain variability underlies the observed asymmetric shape of CP(p_CR) for the neurons in cluster 3. For cluster 2, in which the symmetric pattern is predominant, no analogous correlation was found. It is important to note that the asymmetry predicted by the gain variability overestimates the observed one by an order of magnitude. However, this is not surprising given our simplifying assumption of a single global gain factor across the whole population, whereas in practice gain fluctuations are likely inhomogeneous across the population. Furthermore, the actual read-out used by the brain may deviate from the optimal one, further reducing the expected match between predictions and observations. More precise modeling of CP stimulus dependencies would require measurements of the cross-neuronal correlation structure, which are not available from the single-unit recordings of Britten et al., 1996 but will be available from future population recordings.
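A minimal sketch of the slope comparison, assuming that the degree of asymmetry is the linear coefficient of a quadratic fit centered at p_CR = 0.5; the exact parameterization is given in the Methods, and the gain-based predicted slopes (Equation 11) are simply passed in as an array here. Both function names are hypothetical.

```python
import numpy as np


def asymmetry_slope(profile, bin_centers):
    """Linear coefficient of a quadratic fit to CP(p_CR), centred at p_CR = 0.5."""
    x = np.asarray(bin_centers, dtype=float) - 0.5
    _, a1, _ = np.polyfit(x, np.asarray(profile, dtype=float), deg=2)  # coefficients, highest power first
    return float(a1)  # slope of the fitted curve at p_CR = 0.5


def slope_agreement(observed_slopes, predicted_slopes):
    """Pearson correlation between observed and gain-predicted slopes across cells."""
    return float(np.corrcoef(observed_slopes, predicted_slopes)[0, 1])
```

Centering the quadratic at 0.5 separates the symmetric (curvature) and asymmetric (slope) parts of each profile, so the linear coefficient can be compared directly with a slope prediction.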

Modeling stimulus-dependent choice-related signals with GLMs

The implications of a stimulus-dependent relationship between behavioral choice and sensory neural responses are not restricted to CPs, which quantify activity-choice covariations without incorporating other explanatory factors of neural responses. To further substantiate the existence of this stimulus-dependent relationship in MT data, and to understand how our model predictions could help to refine other analytical approaches, we examined how representing that relationship can improve statistical models of neural responses. In particular, we studied how the stimulus-dependent choice-related signals that we discovered may inform the refinement of Generalized Linear Models (GLMs) of neural responses (Truccolo et al., 2005; Pillow et al., 2008). In recent years, GLMs have been used to model choice dependencies together with the dependence on other explanatory variables, such as the external stimulus, response memory, or interactions across neurons (Park et al., 2014; Runyan et al., 2017). Typically, in a GLM of firing rates each explanatory variable contributes a multiplicative factor that modulates the mean of a Poisson process. In the classical implementation, the choice modulates the firing rate as a binary gain factor, with a different gain for each of the two choices (Park et al., 2014; Runyan et al., 2017; Pinto et al., 2019). The multiplicative nature of this factor already introduces some covariation between the impact of the choice on the rate and that of the other explanatory variables. However, a single regression coefficient for the effect of the choice on the neural responses may be insufficient if choice-related signals are stimulus dependent, as suggested by our theoretical and experimental analyses.

We developed a GLM (see Methods) that can model stimulus dependencies of choice signals (in other words, stimulus-choice interactions) by including multiple choice-related predictors, which allow the strength of the dependence of the firing rate on the choice to differ across subsets of stimulus levels (grouped via the choice rate, p_CR). We fitted this model, which we call the stimulus-dependent-choice GLM, to MT data and compared its cross-validated performance against two traditional GLMs. In the first, called the stimulus-only GLM, the rate in each trial is predicted only from the external stimulus level. In the second, which we call the stimulus-independent-choice GLM and which corresponds to the traditional way of including choice signals in a GLM (Park et al., 2014; Runyan et al., 2017; Scott et al., 2017; Pinto et al., 2019; Minderer et al., 2019), the effect of the choice is additionally included, but using only a single, stimulus-independent choice predictor.
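The three model variants can be sketched as design matrices for a Poisson GLM with a log link. This illustration rests on assumptions not stated in the text: one indicator regressor per stimulus level, a +1/-1 choice coding, one choice gain per p_CR group for the stimulus-dependent-choice model, and a plain Newton-Raphson fit with a tiny ridge term. The model names mirror the text; the function names are hypothetical.

```python
import numpy as np


def design_matrix(stim, choice, p_cr_group, model, n_groups):
    """Predictors for the three GLM variants (hypothetical coding).

    stim: stimulus level per trial; choice: 1 / 0 per trial;
    p_cr_group: index of the p_CR group of each trial's stimulus level.
    """
    stim, choice, p_cr_group = map(np.asarray, (stim, choice, p_cr_group))
    cols = [(stim == s).astype(float) for s in np.unique(stim)]  # one gain per stimulus level
    c = np.where(choice == 1, 1.0, -1.0)                           # +1 / -1 choice coding
    if model == "stimulus_independent_choice":
        cols.append(c)                                             # a single choice gain
    elif model == "stimulus_dependent_choice":
        cols += [c * (p_cr_group == g) for g in range(n_groups)]   # one choice gain per p_CR group
    elif model != "stimulus_only":
        raise ValueError(f"unknown model: {model}")
    return np.column_stack(cols)


def fit_poisson_glm(X, y, n_iter=100, ridge=1e-6, tol=1e-10):
    """Poisson GLM with log link, fitted by Newton-Raphson with a tiny ridge penalty."""
    y = np.asarray(y, dtype=float)
    beta = np.linalg.lstsq(X, np.log(y + 0.5), rcond=None)[0]  # rough starting point
    for _ in range(n_iter):
        mu = np.exp(X @ beta)
        grad = X.T @ (y - mu) - ridge * beta
        hess = X.T @ (X * mu[:, None]) + ridge * np.eye(X.shape[1])
        step = np.linalg.solve(hess, grad)
        beta = beta + step
        if np.max(np.abs(step)) < tol:
            break
    return beta
```

Because each interaction regressor is nonzero only for trials in one p_CR group, the fitted choice coefficients can change sign across groups, which a single choice gain cannot express.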

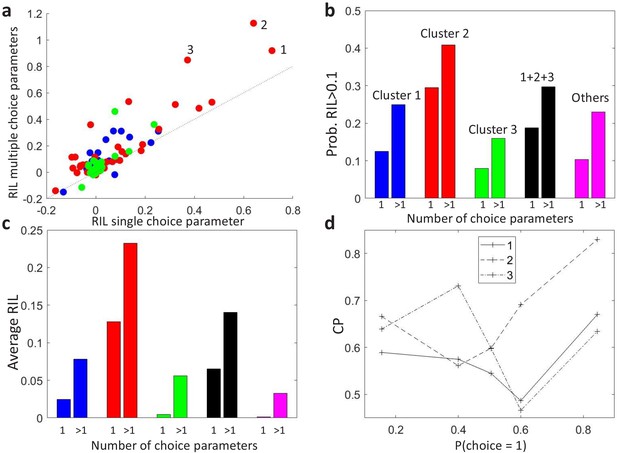

To compare the models, we separated the trials recorded from each MT cell (Britten et al., 1996) into training and testing sets, and calculated the average cross-validated likelihood of each type of model on the held-out testing set. To quantify the gain in predictability obtained when adding the choice as a predictor, we defined the relative increase in likelihood (RIL) as the increase in likelihood obtained by further adding the choice as a predictor, relative to the increase previously obtained by adding the stimulus as a predictor. RIL thus measures the relative influence of the choice and of the sensory input on the neural responses. Figure 5A compares the cross-validated RIL values obtained on MT neural data when fitting either the stimulus-independent-choice or the stimulus-dependent-choice GLM. RIL values were mostly higher when allowing for multiple choice parameters, both in terms of average RIL values (Figure 5C) and in terms of the proportion of cells in each cluster for which the RIL exceeded a threshold, here set at 10% (Figure 5B).
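The RIL can be written compactly in terms of held-out log-likelihoods. The sketch below assumes Poisson log-likelihoods and a constant-rate model as the baseline for the stimulus gain; these choices and the function names are assumptions. `ll_null`, `ll_stim`, and `ll_choice` would be computed on the testing set with the constant-rate, stimulus-only, and one of the two choice GLMs, respectively.

```python
import numpy as np
from math import lgamma


def poisson_loglik(y, mu):
    """Poisson log-likelihood of spike counts y under predicted means mu."""
    y, mu = np.asarray(y, dtype=float), np.asarray(mu, dtype=float)
    log_fact = np.array([lgamma(v + 1.0) for v in y])  # log(y!)
    return float(np.sum(y * np.log(mu) - mu - log_fact))


def relative_increase_in_likelihood(ll_null, ll_stim, ll_choice):
    """Gain from adding the choice, relative to the gain from adding the stimulus."""
    return (ll_choice - ll_stim) / (ll_stim - ll_null)
```

With this definition, an RIL of 0.1 means that adding the choice improves the held-out fit by one tenth of the improvement contributed by the stimulus.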

Modeling stimulus-dependent choice-related signals with GLMs.

(a) Scatter plot of the cross-validated relative increase in likelihood (RIL), with respect to a stimulus-only model, of the stimulus-dependent-choice GLMs (multiple choice parameters) versus the stimulus-independent-choice GLMs (a single choice parameter). (b) Proportion of cells with RIL > 0.1 for the two types of models, grouped by the clusters as in Figure 4B. Cells not included in the set of 107 cells for which a CP value could be estimated for each bin of p_CR are labeled as ‘Others’. (c) Average RIL values, grouped as in b. (d) CP(p_CR) profiles of the three cells with the highest RIL in the stimulus-dependent-choice GLMs, as numbered in panel a.

GLMs that include stimulus-choice interaction terms can be used not only to better describe the firing rate of neural responses, but also to characterize neurons or areas more precisely by their choice signals. To illustrate this point, we show how adding the interaction terms may change the relative comparison of cells by their RIL values. Consider the three neurons with the highest RIL for the stimulus-dependent-choice GLM (Figure 5A; the corresponding CP(p_CR) profiles are shown in Figure 5D). The ranking of cells 1 and 2 by RIL flips with respect to the stimulus-independent-choice GLM because of the stronger p_CR modulation of cell 2. Similarly, while the RIL values with multiple choice parameters are close for cells 1 and 3, the RIL of cell 3 is substantially lower with a single choice parameter, indicating that its pattern of stimulus dependence is poorly captured by a single parameter. The degree to which a model with interaction terms improves predictability will depend on the shape of the CP(p_CR) patterns, which are themselves expected to vary across areas or across cells with different tuning properties. For example, Figure 5C shows that for the cluster with an asymmetric profile (cluster 3), the average RIL with only one choice parameter suggests that cells of this type are not choice driven. The reason is that for these cells the sign of the choice influence on the rate can be stimulus dependent, which cannot be modeled by a single choice parameter. Furthermore, the profile of the GLM choice parameters across stimulus levels provides a characterization of stimulus-dependent choice-related signals analogous to the CP(p_CR) profile, in this case within the GLM framework, which allows efficient inference, principled regularization, and the inclusion of a range of factors beyond choices and stimuli.
Overall, we expect that accounting for stimulus-choice interactions in GLMs will allow for a more accurate assessment of the relative importance of stimulus and choice on neural responses.

Discussion

Our work makes several contributions to the understanding of how choice and stimulus signals in neural activity are coupled. The first is that we derived a general analytical model of perceptual decision-making predicting how the relationship between sensory responses and choice should depend on stimulus strength, regardless of whether this relationship is due to feedforward or feedback choice-related signals. The key model assumption is that the link between sensory responses and choices is mediated by a continuous decision variable and a thresholding mechanism. Second, we designed new, more powerful methods to measure within-cell dependencies of choice probabilities (CPs) on stimulus strength. Third, we studied CP stimulus dependencies in the classic dataset by Britten et al., 1996. Interestingly, we found a rich and previously unknown structure in how CPs in MT neurons depend on stimulus strength. In addition to a symmetric dependence predicted by the thresholding operation, we found an asymmetric dependence which we could explain by incorporating previously proposed gain fluctuations (Goris et al., 2014) in our model, thereby introducing a stimulus-dependent component in the cross-neuronal covariance. Finally, we showed that generalized linear models (GLMs) that account for stimulus-choice interactions better explain sensory responses in MT and allow for a more accurate characterization of how stimulus-driven and how choice-driven a cell’s response is.

Advances on analytical solutions of choice probabilities

Previous work has demonstrated that analytically solving models of perceptual decision-making can lead to important new insights into how the relationship between neural activity and choice should be interpreted in terms of decision-making computations (Bogacz et al., 2006; Gold and Shadlen, 2007; Haefner et al., 2013). In particular, previous analytical work on CPs has shown how experimentally measured CPs relate to the read-out weights by which sensory neurons contribute to the internal stimulus decoder in a feedforward model, assuming both choices are equally likely (Haefner et al., 2013; Pitkow et al., 2015). Here, we provided an analytical solution of CPs in a more general model, in which informative stimuli result in an unbalanced choice rate, and which is valid for both feedforward and feedback choice signals. We derived the analytical dependency of the CP on the probability of one of the choices (the choice rate, p_CR), which mediates the dependence of the CP on stimulus strength. Our model is therefore directly applicable to both discrimination and detection tasks, for any stimulus strength that elicits both choices. As we demonstrated, these advances in the analytical solution of the decision-threshold model allowed us to detect and interpret stimulus dependencies of choice-related signals in neural activity.
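Why a threshold makes the CP depend on p_CR can be seen from a short weak-correlation sketch of our own, which is not necessarily identical to Equation 6. Assume a standardized Gaussian decision variable d, with choice 1 made when d > θ, so that p_CR = 1 − Φ(θ), and a Gaussian response r with standard deviation σ_r and correlation ρ with d. The conditional means then differ by Δ = ρ σ_r φ(θ) / [p_CR (1 − p_CR)], and treating both conditional response distributions as Gaussian with standard deviation close to σ_r gives CP ≈ Φ(Δ / (√2 σ_r)) ≈ 1/2 + ρ φ(θ) / [2√π p_CR (1 − p_CR)]. At p_CR = 0.5 this reduces to the familiar balanced-choice approximation CP ≈ 1/2 + (√2/π) ρ. The function names below are hypothetical.

```python
import math
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal distribution


def threshold_factor(p_cr):
    """Weak-correlation factor phi(theta) / (2 sqrt(pi) p (1 - p)), with theta set by p_CR."""
    theta = STD_NORMAL.inv_cdf(p_cr)  # phi is symmetric, so using p or 1 - p gives the same pdf value
    return STD_NORMAL.pdf(theta) / (2.0 * math.sqrt(math.pi) * p_cr * (1.0 - p_cr))


def approx_cp(p_cr, rho):
    """CP ~ 1/2 + factor(p_CR) * rho for a small response-decision correlation rho."""
    return 0.5 + threshold_factor(p_cr) * rho
```

The factor is symmetric around p_CR = 0.5 and grows toward 0 and 1, which is the symmetric CP increase for informative stimuli discussed in the Results; since it multiplies ρ, its effect shrinks for cells whose CP is close to 0.5 and flips direction for cells with CP < 0.5.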

Characterization of patterns of choice probability stimulus-dependencies from sensory neurons

Characterizing within-cell stimulus dependencies of activity-choice covariations at the population level requires isolating these dependencies from across-cell heterogeneity in the magnitude of the CP values. Our analytical results suggest possible reasons why previous attempts failed to find stimulus dependencies of CPs in real neural data. First, the magnitude of the CP dependence on p_CR is proportional to the magnitude of the choice-related signals (i.e. to how different CPs are from 0.5). This implies that neuron-specific dependencies need to be characterized for each cell individually, relative to the CP obtained with the uninformative stimulus. Only neurons for which a full individual CP profile can be estimated should be averaged to determine stimulus dependencies at the population level; otherwise, the overall average CP profile will be dominated by variability associated with the different subsets of neurons contributing to the CP estimate at each stimulus level. Second, the threshold-induced direction of the CP dependence on p_CR is opposite for neurons with CP larger or smaller than 0.5, that is, for neurons more responsive to opposite choices. These opposite modulations can cancel out the overall threshold-induced dependence of the CP on stimulus strength when averaging over all neurons, as done in previous analyses (Britten et al., 1996). Informed by these insights, we characterized the within-cell dependencies of choice-related signals on stimulus strength. Applying our refined methods to the classic data of Britten et al., 1996, recorded from MT neurons during a perceptual decision-making task, allowed us to find stimulus dependencies of CPs, whereas previous analyses had not detected a significant effect.

Our understanding of how CP stimulus dependencies may arise within the decision-making process, and the methods we used to measure these dependencies in existing data, will allow future studies to perform more fine-grained analyses and to interpret choice-related signals more appropriately. Traditional analyses computed a single CP value for each neuron, either by concentrating on zero-signal trials (e.g. Dodd et al., 2001; Parker et al., 2002; Krug et al., 2004; Wimmer et al., 2015; Katz et al., 2016; Wasmuht et al., 2019) or by calculating grand CPs (Britten et al., 1996) across stimulus levels (e.g. Cai and Padoa-Schioppa, 2014; Verhoef et al., 2015; Latimer et al., 2015; Pitkow et al., 2015; Smolyanskaya et al., 2015; Liu et al., 2016; Bondy et al., 2018). Grand CPs are calculated either directly as a weighted average of the CPs estimated for each stimulus level, or by pooling the responses from trials of all stimulus levels after subtracting an estimate of the stimulus-related component (Kang and Maunsell, 2012). Our theoretical CP analysis shows that the latter procedure also corresponds to a specific type of weighted average (Appendix 2). Using the individual CP values computed in this way for each cell, areas or populations were then often ranked by their CP values averaged across neurons, and areas with higher CP values were identified as key areas for decision-making (e.g. Nienborg and Cumming, 2006; Cai and Padoa-Schioppa, 2014; Pitkow et al., 2015; Yu et al., 2015).
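The two ways of computing a grand CP can be contrasted in a short sketch. The pooled version below simply z-scores responses within each stimulus level before pooling; refinements of this pooling procedure (e.g. Kang and Maunsell, 2012) are not implemented, and the function names are hypothetical.

```python
import numpy as np


def roc_area(a, b):
    """P(a > b) + 0.5 * P(a == b) over all pairs of trials."""
    a = np.asarray(a, dtype=float)[:, None]
    b = np.asarray(b, dtype=float)[None, :]
    return float(np.mean(a > b) + 0.5 * np.mean(a == b))


def grand_cp_zscored(responses, choices, stim):
    """Pool trials across stimulus levels after z-scoring responses within each level."""
    responses, choices, stim = (np.asarray(v) for v in (responses, choices, stim))
    responses = responses.astype(float)
    z = np.empty_like(responses)
    for s in np.unique(stim):
        m = stim == s
        sd = responses[m].std()
        z[m] = (responses[m] - responses[m].mean()) / (sd if sd > 0 else 1.0)  # removes the stimulus-driven mean
    return roc_area(z[choices == 1], z[choices == 0])


def grand_cp_weighted(cps, weights):
    """Grand CP as a weighted average of per-stimulus-level CPs."""
    w = np.asarray(weights, dtype=float)
    return float(np.sum(w * np.asarray(cps, dtype=float)) / np.sum(w))
```

In a toy case where responses are perfectly ordered by choice within each of two stimulus levels (responses 1 to 4 and 11 to 14, with the two highest in each level on choice 1), the z-scored grand CP is 1, whereas pooling the raw responses mixes stimulus-driven differences into the comparison and gives 0.75.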

However, if CPs depend on p_CR, a single grand CP value cannot summarize this dependence. The use of single average CPs may thus introduce confounds in their comparison and miss important cell-specific information. For example, CP(p_CR) patterns whose sign differs across p_CR values will result in average CP values closer to 0.5. Similarly, a comparison of the grand CP of a cell across tasks may mostly reflect differences in how each task samples stimulus levels, which change how much the CP(s) associated with each stimulus level contributes to the grand CP. As a result, a change in the grand CP may be interpreted as indicating the existence of task-dependent choice-related signals, even if the CP(s) profile is invariant. In the same way, if the structure of CP(p_CR) patterns covaries with tuning properties, the comparison of the grand CP across cells with different tuning properties may mostly depend on the sampling of stimulus levels. This limitation is not specific to average CP values, and applies to other measures that treat the choice-related and stimulus-driven components of the response as separable, such as partial correlations (e.g. Zaidel et al., 2017). Our work instead indicates that the shape of the patterns cannot be summarized by an average, and that this shape may be informative about the role of activity-choice covariations when comparing cells with different tuning properties, cells from different areas, or tasks (e.g. Romo and Salinas, 2003; Nienborg and Cumming, 2006; Nienborg et al., 2012; Krug et al., 2016; Sanayei et al., 2018; Shushruth et al., 2018; Jasper et al., 2019; Steinmetz et al., 2019). Our new methods allow quantifying these CP patterns to better characterize the covariations between neural activity and choice across neurons and populations.
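The sampling confound can be made concrete with a toy calculation. The profile values and trial counts below are invented for illustration, and the grand CP is taken as a trial-count weighted average of a fixed CP(p_CR) profile, which is only one of several possible weightings.

```python
import numpy as np

# Hypothetical invariant CP(p_CR) profile of one cell, one value per p_CR bin (illustrative numbers).
cp_profile = np.array([0.60, 0.56, 0.55, 0.57, 0.63])

# Two hypothetical tasks that sample the same bins with different numbers of trials.
trials_task_a = np.array([10, 40, 100, 40, 10])  # mostly weak stimuli
trials_task_b = np.array([60, 20, 20, 20, 60])   # mostly strong stimuli


def grand_cp(profile, n_trials):
    """Trial-count weighted average of a CP profile (an assumed weighting)."""
    return float(np.sum(n_trials * profile) / np.sum(n_trials))


grand_a = grand_cp(cp_profile, trials_task_a)
grand_b = grand_cp(cp_profile, trials_task_b)
```

With these numbers, task A gives a grand CP of 0.5625 and task B about 0.597, although the underlying profile is identical. Likewise, a profile that crosses 0.5, such as 0.44, 0.47, 0.50, 0.53, 0.56, averages to exactly 0.5 under uniform weights despite clear choice-related modulation.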

A key novelty introduced in our study is the development of a model-inspired procedure for identifying genuine within-cell profiles, which would otherwise be masked by across-cell heterogeneity in the magnitude of the CP values. As representative examples of how our procedure may find previously unnoticed patterns of CP dependencies, we discuss the previous analyses in Britten et al., 1996 and in Dodd et al., 2001. Britten et al., 1996 analyzed the dependence of the CP on stimulus strength at the population level (see their Figure 3). In particular, for each stimulus level they averaged the CP of all cells for which an estimate of the CP was calculated, without separating cells with CP higher or lower than 0.5. Furthermore, in their data set the stimulus levels vary across cells, and hence in their analysis different subsets of cells contribute to the CP average at each stimulus level. Dodd et al., 2001 presented a scatter plot of the CPs for all cells and stimulus levels (see their Figure 6). Although this analysis did not average cells with CP > 0.5 and CP < 0.5, the scatter plot does not represent the cell identity of each dot, so the within-cell profiles cannot be traced. As in Britten et al., the sampled stimulus levels in Dodd et al., 2001 varied across cells, further confounding the within-cell profiles with heterogeneity of CP magnitudes across cells. As shown by our analysis of the data of Britten et al., 1996, our analytical tools can extract additional findings from such data by removing some potential confounds that may have obscured the presence of within-cell CP patterns. It is important to note, however, that our model-based results in no way imply that these previous papers reached inaccurate conclusions, as their analyses were done for purposes other than discovering the within-cell patterns predicted by our models.
In particular, most of the analyses of Dodd et al., 2001 used only CPs calculated from trials with non-informative stimuli, and their main results did not rely on the evaluation of CP stimulus dependencies. Similarly, while Britten et al., 1996 used z-scoring to calculate grand CPs combining all stimulus levels, their analysis did not involve the comparison of grand CPs across areas or types of cells with different tuning properties. As discussed above, it is for this kind of comparison, when the CP(p_CR) profiles may themselves vary across the groups of cells compared, that reducing profiles to a single CP value may confound the comparison.

Generalized linear models with stimulus-choice interactions

Our work also has implications for improving generalized linear models (GLMs) of neural activity, which are very widely used to describe neural responses in the presence of many explanatory variables that could predict the neuron's firing rate, such as the external stimulus, motor variables, autocorrelations or refractory periods, and the interaction with other neurons (Truccolo et al., 2005). While the stimulus and the choice are usually treated as separate explanatory variables (e.g. Park et al., 2014; Runyan et al., 2017; Scott et al., 2017; Pinto et al., 2019; Minderer et al., 2019), we used GLMs including explicit interactions between choice and stimulus to show that, consistent with the finding of non-constant $CP(p_{CR})$ patterns, these models improved the goodness of fit for the responses of MT cells. Importantly, making the choice term depend on the choice rate, $p_{CR}$, affected the assessment of how stimulus-driven or choice-driven different cells are, measured as the increase in predictive power obtained when the choice is added as a predictor after the stimulus. This suggests a more fine-grained way to compare the degree of a neuron's association with the behavioral choice or the stimulus, for example across neuron types or brain areas (Runyan et al., 2017; Pinto et al., 2019; Minderer et al., 2019). Our GLMs with multiple choice parameters associated with subsets of stimulus levels also allow characterizing the patterns in the vector of choice parameters, analogously to our analysis of $CP(p_{CR})$ patterns. Furthermore, our approach can be extended straightforwardly to GLMs that model the influence of the choice across the time course of the trials (Park et al., 2014), by making the stimulus-choice interaction terms time-dependent. GLMs with time-dependent stimulus-choice interaction terms can also be useful in experimental settings with multiple sensory cues presented at different times (e.g. Romo and Salinas, 2003; Sanayei et al., 2018) or with a continuous time-dependent stimulus (Nienborg and Cumming, 2009), to account for differences in how stimuli interact with the choice depending on when they are presented. Similarly, interaction terms may also help to model the influence of choice history on the processing of sensory evidence in subsequent trials (Tsunada et al., 2019; Urai et al., 2019), in which case the interaction terms would be between the stimulus and the choice from the previous trial.
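A minimal sketch of how a design matrix with stimulus-choice interaction terms could be laid out, assuming a Poisson GLM with log link, one-hot coded stimulus levels, and choices coded as 1 and -1. The function names, the coherence values, and the grouping of stimulus levels into choice parameters are hypothetical illustrations, not the specification used in this study; fitting (maximizing the log-likelihood) is not shown.

```python
import math


def design_row(stim_level, choice, levels, choice_groups):
    """One row of a hypothetical GLM design matrix with stimulus-choice interactions.

    levels: ordered stimulus levels, one-hot encoded with levels[0] as baseline.
    choice_groups: list of sets of stimulus levels; each set has its own choice
        parameter, so the effect of the choice can differ across stimulus subsets.
        A single set containing all levels recovers the usual single choice term.
    """
    row = [1.0]  # intercept
    # stimulus indicators (baseline level dropped to avoid collinearity)
    row += [1.0 if stim_level == lv else 0.0 for lv in levels[1:]]
    # interaction terms: the choice enters only through the group of this level
    row += [float(choice) if stim_level in group else 0.0 for group in choice_groups]
    return row


def poisson_log_likelihood(rows, counts, beta):
    """Log-likelihood of spike counts under a Poisson GLM with log link."""
    ll = 0.0
    for x, y in zip(rows, counts):
        eta = sum(b * xi for b, xi in zip(beta, x))
        ll += y * eta - math.exp(eta) - math.lgamma(y + 1)
    return ll


# Hypothetical coherence levels: the uninformative level gets one choice
# parameter, the informative levels share a second one.
levels = [0.0, 0.032, 0.128]
groups = [{0.0}, {0.032, 0.128}]
print(design_row(0.0, 1, levels, groups))
print(design_row(0.128, -1, levels, groups))
```

Comparing the fit, ideally cross-validated, of a model with a single choice group against one with several groups is one way such interaction terms could be used to test for stimulus-dependent choice effects.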

Patterns of stimulus-choice interactions as a signature of mechanisms of perceptual decision-making

Theoretical and experimental evidence suggests that the patterns of stimulus dependence of choice-related signals may be informative about the mechanisms of perceptual decision-making. Activity-choice covariations have been characterized in terms of the structure of cross-neuronal correlations and of feedforward and feedback weights (Shadlen et al., 1996; Cohen and Newsome, 2009b; Nienborg and Cumming, 2010; Haefner et al., 2013; Cumming and Nienborg, 2016). Stimulus dependencies may be inherited from the dependence of cross-neuronal correlations on the stimulus (Kohn and Smith, 2005; Ponce-Alvarez et al., 2013), or from decision-related feedback signals (Bondy et al., 2018). Experimental (Nienborg and Cumming, 2009; Cohen and Maunsell, 2009a; Bondy et al., 2018) and theoretical (Lee and Mumford, 2003; Maunsell and Treue, 2006; Wimmer et al., 2015; Haefner et al., 2016; Ecker et al., 2016) work indicates that top-down modulations of sensory responses play an important role in the perceptual decision-making process. In particular, feedback signals are expected to show cell-specific stimulus dependencies associated with the tuning properties of each cell (Lange and Haefner, 2017). Different coding theories attribute different roles to the feedback signals, for example, conveying prediction errors (Rao and Ballard, 1999) or prior information for probabilistic inference (Lee and Mumford, 2003; Fiser et al., 2010; Haefner et al., 2016; Tajima et al., 2016; Bányai and Orbán, 2019; Bányai et al., 2019; Lange and Haefner, 2020). Accordingly, characterizing the stimulus dependencies of activity-choice covariations in connection with the tuning properties of cells is expected to provide insights into the role of feedback signals and may help to discriminate between alternative proposals. Such an analysis would require simultaneous recordings of populations of neurons tiling the space of receptive fields, and the joint characterization of the cross-neuronal correlations and tuning properties. Although this is beyond the scope of this work, we have shown that the analysis methods we proposed are capable of identifying a nontrivial structure in the stimulus dependencies of choice-related signals. A better understanding of how these dependencies differ across brain areas, across cells with different tuning properties, or across types of sensory stimuli promises further insights into the mechanisms of perceptual decision-making.

While we here analyzed single-cell recordings, our conclusions apply to any type of recording used to study activity-choice covariations. This spans the range from single units (Britten et al., 1996) to multiunit activity (Sanayei et al., 2018) and measurements from imaging techniques at different spatial scales, such as intrinsic imaging or fMRI (Choe et al., 2014; Thielscher and Pessoa, 2007; Runyan et al., 2017; Michelson et al., 2017). Given the increasing availability of population recordings, the larger numbers of trials afforded by chronic recordings, and the advent of stimulation techniques that help discriminate the origin of choice-related signals (Cicmil et al., 2015; Tsunada et al., 2016; Yang et al., 2016; Lakshminarasimhan et al., 2018; Fetsch et al., 2018; Yu and Gu, 2018), we expect our tools to help gain new insights into the mechanisms of perceptual decision-making.

Materials and methods

Here we describe the derivation of the analytical CP solutions, our new methods to analyze stimulus dependencies in choice-related responses, and the data set from Britten et al., 1996 in which we test for the existence of stimulus dependencies.

An exact CP solution for the threshold model

We first derive our analytical CP expression, valid in the presence of informative stimuli, decision-related feedback, and top-down sources of activity-choice covariation such as prior bias, trial-to-trial memory, or internal state fluctuations. We follow Haefner et al., 2013 and assume a threshold model of decision making, in which the choice is triggered by comparing a decision variable $d$ with a threshold θ, so that choice $D = 1$ is made if $d > \theta$, and $D = -1$ otherwise. The identification of the binary choices as $D \in \{1, -1\}$ is arbitrary, and an analogous expression would hold with another mapping of the categorical variable. The choice probability (Britten et al., 1996) of cell $i$ is defined as

$$\mathrm{CP}_i = p\left(r_i^{(D=1)} > r_i^{(D=-1)}\right) = \int \mathrm{d}r \, p(r_i = r \mid D = -1) \int_r^{\infty} \mathrm{d}r' \, p(r_i = r' \mid D = 1) \qquad (12)$$

and measures the separation between the two choice-specific response distributions $p(r_i \mid D = 1)$ and $p(r_i \mid D = -1)$. It quantifies the probability that a response from a trial with choice $D = 1$ is higher than a response from a trial with choice $D = -1$. If there is no dependence between the choice and the responses, this probability is 0.5. To obtain an exact solution of the CP, we assume that the joint distribution of the responses $r_i$ of cell $i$ and the decision variable $d$ can be well approximated by a bivariate Gaussian. Under this assumption, following Haefner et al., 2013 (see their Supplementary Material), the responses on trials with choice $D = 1$ follow the distribution

$$p(z_i \mid D = 1) = \frac{\varphi(z_i)}{p(D = 1)}\, \Phi\left(\frac{\langle d \rangle - \theta + \frac{\mathrm{cov}(r_i, d)}{\sigma_{r_i}} z_i}{\sigma_{d \mid r_i}}\right) \qquad (13)$$

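where a more parsimonious expression is obtained using the z-score $z_i = (r_i - \langle r_i \rangle)/\sigma_{r_i}$. This distribution is a skew-normal (Azzalini, 1985), where φ is the standard normal probability density with zero mean and unit variance, and Φ is its cumulative distribution function. Furthermore, $\mathrm{cov}(r_i, d)$ is the covariance of $r_i$ and $d$, $\sigma_{d \mid r_i}$ is the conditional standard deviation of $d$ given $r_i$, and the probability of $D = 1$ is

$$p_{CR} \equiv p(D = 1) = \Phi\left(\frac{\langle d \rangle - \theta}{\sigma_d}\right) \qquad (14)$$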

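which determines the rate of each choice over trials. The choice $D = -1$ could equally be taken as the reference choice, resulting in an analogous formulation. Intuitively, $p_{CR}$ exceeds 0.5 when the mean of the decision variable is higher than the threshold θ, and it approaches 0.5 as the standard deviation of $d$ increases. The form of the distribution of Equation 13 can be synthesized in terms of $p_{CR}$ and the correlation coefficient $CC_i = \mathrm{corr}(r_i, d)$, which Pitkow et al., 2015 named choice correlation (CC). In particular, using $\sigma_{d \mid r_i} = \sigma_d \sqrt{1 - CC_i^2}$ and $\mathrm{cov}(r_i, d) = CC_i\, \sigma_{r_i} \sigma_d$, the distributions for the two choices can be written as

$$p(z_i \mid D = \pm 1) = \frac{\varphi(z_i)}{p(D = \pm 1)}\, \Phi\left(\pm \frac{\Phi^{-1}(p_{CR}) + CC_i\, z_i}{\sqrt{1 - CC_i^2}}\right) \qquad (15)$$

with $p(D = 1) = p_{CR}$ and $p(D = -1) = 1 - p_{CR}$.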

The CP is completely determined by $p(z_i \mid D = 1)$ and $p(z_i \mid D = -1)$, and these distributions depend only on $p_{CR}$ and the correlation coefficient $CC_i$. Plugging the distributions of Equation 15 into the definition of the CP (Equation 12), an analytical solution is obtained:

where $T$ is Owen's T function (Owen, 1956). In Appendix 1, we provide further details of how this expression is derived. For an uninformative stimulus ($p_{CR} = 0.5$), the $T$ function reduces to the arctangent and the exact result obtained in Haefner et al., 2013 is recovered, $\mathrm{CP}_i = \frac{1}{2} + \frac{2}{\pi} \arctan\left(CC_i / \sqrt{2 - CC_i^2}\right)$. The dependence on $CC_i$ can be understood because, under the Gaussian assumption, the linear correlation captures all the dependence between the responses $r_i$ and the decision variable $d$. The dependence on $p_{CR}$ reflects the influence of the threshold mechanism, which maps the dependence of $r_i$ on $d$ into a dependence on the choice by partitioning the space of $d$ into two regions.
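Because the closed form involves Owen's T function, a quick numerical check can be useful. The following Python sketch, a hypothetical illustration rather than the authors' code, evaluates the CP definition (Equation 12) by direct numerical integration of the choice-conditioned skew-normal densities of Equation 15 and compares the result with the arctangent expression for $p_{CR} = 0.5$. The function names, the integration range of ±8 standard deviations, and the grid size are assumptions chosen for illustration.

```python
import math
from statistics import NormalDist

STD = NormalDist()  # standard normal: pdf, cdf, inv_cdf


def cp_numeric(p_cr, cc, z_max=8.0, n=4000):
    """CP of Equation 12 using the choice-conditioned densities of Equation 15.

    Integrates CP = int p(z | D=-1) P(z' > z | D=1) dz with a midpoint rule.
    """
    gamma = STD.inv_cdf(p_cr)
    s = math.sqrt(1.0 - cc * cc)
    dz = 2.0 * z_max / n
    cp, mass_above = 0.0, 0.0
    # Sweep from the largest z downwards so the D=1 mass above each cell accumulates.
    for k in reversed(range(n)):
        z = -z_max + (k + 0.5) * dz
        x = (gamma + cc * z) / s
        f_plus = STD.pdf(z) * STD.cdf(x) / p_cr            # p(z | D = 1)
        f_minus = STD.pdf(z) * STD.cdf(-x) / (1.0 - p_cr)  # p(z | D = -1)
        cell_plus = f_plus * dz
        # D=1 mass above this z: all higher cells plus half of the current cell.
        cp += f_minus * dz * (mass_above + 0.5 * cell_plus)
        mass_above += cell_plus
    return cp


def cp_uninformative(cc):
    """Closed form for p_CR = 0.5 (Haefner et al., 2013)."""
    return 0.5 + (2.0 / math.pi) * math.atan(cc / math.sqrt(2.0 - cc * cc))


print(cp_numeric(0.5, 0.2), cp_uninformative(0.2))
print(cp_numeric(0.9, 0.2))
```

With a fixed choice correlation, the numerically integrated CP moves further from 0.5 when $p_{CR}$ is moved away from 0.5, which is the kind of stimulus dependence the exact solution describes.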

While Equation 16 provides an exact solution of the CP, in the Results section we present, and mostly focus on, a linear approximation to understand how the stimulus content modulates the choice probability. This approximation is derived (Appendix 1) in the limit of a small $CC_i$, which leads to CPs close to 0.5, as usually measured in sensory areas (Nienborg et al., 2012). However, as we show in the Results and further justify in Appendix 1, this approximation is robust for a wide range of $CC_i$ values. The linear approximation relates the choice probability to the Choice Triggered Average (CTA) (Haefner, 2015; Chicharro et al., 2017), defined as the difference of the mean responses for each choice (Equation 3), $CTA_i = \langle r_i \mid D = 1 \rangle - \langle r_i \mid D = -1 \rangle$. Given the binary nature of the choice $D$, $CTA_i$ is directly proportional to the covariance of the responses and the choice: $\mathrm{cov}(r_i, D) = 2\, p_{CR}(1 - p_{CR})\, CTA_i$. [Note: This relation holds for the covariance between any variable $x$ and a binary variable $D$, independently of the convention adopted for the values of $D$: for $D \in \{a, b\}$ instead of $\{1, -1\}$, with $a$ coding choice 1, the factor 2 has to be replaced by $a - b$.] This relation between $CTA_i$ and $\mathrm{cov}(r_i, D)$, given the factorization resulting from the mediating decision variable in the threshold model, allows expressing $CTA_i$ as in Equation 5, connecting it to the choice-triggered average of the decision variable, $CTA_d$. This connection indicates that in the threshold model $CTA_i$ is expected to be stimulus dependent, since an informative stimulus shifts the mean of $d$, thus altering the dichotomization of $d$ produced by the threshold θ. The exact form of $CTA_d$ depends on the distribution $p(d)$. However, since $d$ is determined by a whole population of neurons, its distribution is expected to be well approximated by a Gaussian, even if the distribution of responses of any single neuron is not Gaussian. With this Gaussian approximation, the normalized $CTA_d$ in Equation 5, namely $CTA_d/\sigma_d$, is specified in terms of the probability of choosing choice 1, $p_{CR}$, by the factor $h(p_{CR})$ (Equation 6). In more detail, the CTA is

$$CTA_i = \frac{\mathrm{cov}(r_i, d)}{\sigma_d^2}\, CTA_d = CC_i\, \sigma_{r_i}\, h(p_{CR}), \qquad h(p_{CR}) = \frac{\varphi\left(\Phi^{-1}(p_{CR})\right)}{p_{CR}(1 - p_{CR})}$$

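To make the linear approximation concrete, the following Python sketch computes the modulatory factor of Equation 6 in the form it takes for a Gaussian decision variable, written here as $h(p_{CR}) = \varphi(\Phi^{-1}(p_{CR}))/(p_{CR}(1 - p_{CR}))$, and the first-order CP, $0.5 + CC_i\, h(p_{CR})/(2\sqrt{\pi})$, which follows from approximating the two choice-conditioned response distributions as slightly shifted standard normals. The notation $h$, the function names, and the simulation parameters are our own illustrative assumptions and may not match the paper's exact normalization; a small Monte Carlo simulation checks that $CTA_d/\sigma_d$ matches $h(p_{CR})$.

```python
import math
import random
from statistics import NormalDist

STD = NormalDist()


def h_factor(p_cr):
    """h(p_CR) = phi(Phi^-1(p_CR)) / (p_CR (1 - p_CR)): CTA_d / sigma_d for Gaussian d."""
    return STD.pdf(STD.inv_cdf(p_cr)) / (p_cr * (1.0 - p_cr))


def cp_linear(cc, p_cr):
    """First-order CP under the threshold model: 0.5 + CC_i h(p_CR) / (2 sqrt(pi))."""
    return 0.5 + cc * h_factor(p_cr) / (2.0 * math.sqrt(math.pi))


def cta_d_monte_carlo(mu, sigma, theta, n=200_000, seed=1):
    """Simulate Gaussian d, apply the threshold, return (CTA_d, empirical p_CR)."""
    rng = random.Random(seed)
    above, below = [], []
    for _ in range(n):
        d = rng.gauss(mu, sigma)
        (above if d > theta else below).append(d)
    cta = sum(above) / len(above) - sum(below) / len(below)
    return cta, len(above) / n


for p in (0.5, 0.7, 0.9):
    print(p, h_factor(p), cp_linear(0.2, p))

# With sigma = 1, the simulated CTA_d and h(p_CR) should be close.
cta, p_hat = cta_d_monte_carlo(mu=0.5, sigma=1.0, theta=0.0)
print(cta, h_factor(p_hat))
```

At $p_{CR} = 0.5$ the sketch gives $h = 4/\sqrt{2\pi}$, so the first-order CP reduces to $0.5 + (\sqrt{2}/\pi)\, CC_i$, the small-$CC_i$ limit of the arctangent expression; since $h$ grows as $p_{CR}$ moves away from 0.5, the same $CC_i$ yields CPs further from 0.5 for informative stimuli.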
Neuronal data

To study stimulus dependencies in the relationship between the responses of sensory neurons and the behavioral choice, we analyzed the data from Britten et al., 1996, publicly available in the Neural Signal Archive (http://www.neuralsignal.org). In particular, we analyzed data from file nsa2004.1, which contains single-unit responses of macaque MT cells during a random-dot discrimination task. This file contains 213 cells from three monkeys. We also used file nsa2004.2, which contains paired single-unit recordings from 38 sites from one monkey. In the experimental design, for the single-unit recordings the direction tuning curve of each neuron was used to assign a preferred-null axis of stimulus motion, such that opposite directions along the axis yield a maximal difference in responsiveness (Bair et al., 2001). For paired recordings, the direction of stimulus motion was selected based on the direction tuning curves of the two neurons, and the criterion used to assign it varied depending on the similarity between the tuning curves. For cells with similar tuning, a compromise between the preferred directions of the two neurons was made. For cells with different tuning, the axis was chosen to match the preference of the more responsive cell. To minimize the influence of this selection of the motion direction on our analysis, we only analyzed the more responsive cell from each site. Accordingly, our initial data set consisted of a total of 251 cells. The same qualitative results were obtained when limiting the analysis to data from nsa2004.1 alone. Further criteria regarding the number of trials per stimulus level were used to select the cells. Unless indicated otherwise, we present the results from the 107 cells that fulfilled all the required criteria, as discussed below.

Analysis of stimulus-dependent choice probabilities

Our analysis of the stimulus dependencies of choice probabilities is based on examining the patterns in the $CP(p_{CR})$ profile, that is, the CP as a function of the choice rate $p_{CR}$. We here describe how these profiles are constructed, the surrogate-based method used to assess the significance of stimulus dependencies, and the clustering analysis used to identify different stimulus dependence patterns. Matlab functions to calculate weighted average CPs, to obtain CP profiles, and to generate surrogates consistent with the null hypothesis of a constant CP are available at https://github.com/DanielChicharro/CP_DP (Chicharro, 2021; copy archived at swh:1:rev:5850c573860eb04317e7dc550f96b1f47ca91c6a).
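The Matlab functions referenced above are the authors' implementation. As a rough, hypothetical illustration of the per-level CP computation and the combination of CPs within a $p_{CR}$ bin described in the next subsection, the following Python sketch applies the trial criteria (at least 15 trials per stimulus level and at least four per choice), approximates the standard error as $1/\sqrt{12\, n\, p_{CR}(1 - p_{CR})}$ (the large-sample null variance of the ROC area), and averages CPs with inverse-variance weights. The function names are ours, the inverse-variance weighting is our reading of the method, and, for simplicity, $p_{CR}$ is taken as the empirical fraction of choice-1 trials rather than estimated from the psychometric function.

```python
import math


def roc_cp(r_choice1, r_choice_minus1):
    """P(response on a choice-1 trial > response on a choice-(-1) trial), ties count 1/2."""
    score = sum(1.0 if a > b else 0.5 if a == b else 0.0
                for a in r_choice1 for b in r_choice_minus1)
    return score / (len(r_choice1) * len(r_choice_minus1))


def cp_for_level(responses, choices, min_total=15, min_per_choice=4):
    """CP and approximate SE for one stimulus level, or None if trial criteria fail."""
    r1 = [r for r, c in zip(responses, choices) if c == 1]
    r0 = [r for r, c in zip(responses, choices) if c == -1]
    n = len(responses)
    if n < min_total or min(len(r1), len(r0)) < min_per_choice:
        return None
    p_cr = len(r1) / n  # empirical choice rate, a stand-in for the psychometric estimate
    se = 1.0 / math.sqrt(12.0 * n * p_cr * (1.0 - p_cr))  # small |CP - 0.5| approximation
    return roc_cp(r1, r0), se


def combine_bin(estimates):
    """Inverse-variance weighted average of (cp, se) pairs assigned to one p_CR bin."""
    weights = [1.0 / se ** 2 for _, se in estimates]
    return sum(w * cp for w, (cp, _) in zip(weights, estimates)) / sum(weights)


# Hypothetical stimulus level: 20 trials, 10 per choice, choice-1 responses all larger.
responses = list(range(10, 20)) + list(range(0, 10))
choices = [1] * 10 + [-1] * 10
print(cp_for_level(responses, choices))
print(cp_for_level(responses[:10], choices[:10]))  # too few trials: None
print(combine_bin([(0.6, 0.1), (0.5, 0.2)]))
```

Because the approximate standard error grows as $p_{CR}$ approaches 0 or 1 and as $n$ decreases, levels with highly informative stimuli and few trials receive less weight in the bin average.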

Profiles of CP as a function of the choice rate

We constructed $CP(p_{CR})$ profiles, rather than profiles of CP as a function of stimulus coherence, based on the prediction of the theoretical threshold model that the stimulus modulates the CP through a factor that depends on $p_{CR}$. We estimated the $p_{CR}$ value associated with each random-dot coherence level using the psychometric function of each monkey separately. For each coherence level, we calculated a CP value if at least 15 trials were available in total, and at least four for each choice. In the original analysis of Britten et al., 1996, stimulus dependencies were examined by averaging the CP across cells at each coherence level. This analysis did not separate within-cell stimulus dependencies from variability due to differences in choice probabilities across cells. In particular, in the data set the stimulus levels presented vary across cells, which means that for each coherence level the average CP reflects not only any potential stimulus dependence of the CP but also which subset of cells contributes to the average at that level. Therefore, we binned the range of $p_{CR}$ such that for each cell at least one stimulus level mapped to each bin of $p_{CR}$. We here present the results using five $p_{CR}$ bins, defined with a parameter ε selected such that only trials with the uninformative (zero-coherence) stimulus fall in the central bin. Results are robust to the exact definition of the bins. We selected larger bins for highly informative stimulus levels for two reasons. First, the stimulus levels used in the experimental design do not uniformly cover the range of $p_{CR}$: there are more stimulus levels corresponding to values close to $p_{CR} = 0.5$. Second, the CP estimates are less reliable for highly informative stimuli. In particular, the standard error of the CP estimates depends on the magnitude of the CP itself (Bamber, 1975; Hanley and McNeil, 1982), but for small $|\mathrm{CP} - 0.5|$ it can be approximated as

$$\mathrm{SE}(\mathrm{CP}) \approx \frac{1}{\sqrt{12\, n\, p_{CR}(1 - p_{CR})}}$$

where $n$ is the number of trials. The product $p_{CR}(1 - p_{CR})$ is maximal at $p_{CR} = 0.5$ and decreases quadratically with the distance of $p_{CR}$ from 0.5, vanishing as $p_{CR}$ approaches 0 or 1. Furthermore, in the data set the number of trials is higher for stimuli with low information than for highly informative stimuli. We used these estimates of the error to combine the CPs of different stimulus levels assigned to the same bin of $p_{CR}$. The average $\mathrm{CP}_k$ for bin $k$ was calculated as $\mathrm{CP}_k = \sum_j w_j \mathrm{CP}_j$, where $j$ runs over the stimulus levels assigned to bin $k$ and the normalized weights $w_j$ are proportional to $1/\mathrm{SE}^2(\mathrm{CP}_j)$. A full $CP(p_{CR})$ profile could be constructed for 107 cells, while for the remaining cells a CP value could not be calculated for at least one of the bins because of the criteria on the number of trials. Together with the $CP(p_{CR})$ profile, we also obtained an estimate of its error as a weighted average of the errors, which corresponds to