Geometric models for robust encoding of dynamical information into embryonic patterns

Abstract

During development, cells gradually assume specialized fates via changes of transcriptional dynamics, sometimes even within the same developmental stage. For anterior-posterior (AP) patterning in metazoans, it has been suggested that the gradual transition from a dynamic genetic regime to a static one is encoded by different transcriptional modules. In that case, the static regime has an essential role in pattern formation in addition to its maintenance function. In this work, we introduce a geometric approach to study such transition. We exhibit two types of genetic regime transitions arising through local or global bifurcations, respectively. We find that the global bifurcation type is more generic, more robust, and better preserves dynamical information. This could parsimoniously explain common features of metazoan segmentation, such as changes of periods leading to waves of gene expressions, ‘speed/frequency-gradient’ dynamics, and changes of wave patterns. Geometric approaches appear as possible alternatives to gene regulatory networks to understand development.

Introduction

Development from one zygote to a viable animal is a complex process (Wolpert et al., 2006), comprising multiple dynamical sub-processes, including cell movements, tissue morphogenesis, dynamical gene expressions, and cellular differentiations. Eventually, cell identities are fixed by various mechanisms, such as multistable gene regulatory networks and epigenetic markers. Little is known about how this transition from a dynamic/initiation phase to a static/maintenance one is mediated. Are there general characteristics that should be matched between dynamic and static phases to mediate a robust transition?

In dynamical systems theory, a transition between different regimes is called a ‘bifurcation’, defined as a qualitative change in the dynamics of a system driven by a so-called ‘control parameter’ (Strogatz, 2015). Bifurcations are of many types but can be systematically classified. For instance, generic families of potentials driving the dynamics have been identified as different ‘catastrophes’ (Poston and Stewart, 2012). While such mathematical descriptions are highly technical, they are reminiscent of the theory of epigenetic landscapes pushed forward by Waddington, 1957. It is thus natural to ask if such classifications can be done for development. Could dynamical systems theory help us in this pursuit, and in studying development in general? Here, the main issue is to find a way to frame the problem to derive general results.

In recent years, numerous experimental studies have revealed that quantitative changes of gene expressions during development often followed standard predictions from dynamical systems theory (Huang et al., 2007). The Waddington landscape’s analogy (Jaeger and Monk, 2014) has led to many insights in cell differentiation (Graf and Enver, 2009), and recent data on cell reprogramming quantitatively validated the associated ‘landscape picture’ (Pusuluri et al., 2018). Geometric models of development have been developed in particular cases, precisely predicting the general phenotypes of wildtype and mutants (e.g. the development of Caenorhabditis elegans vulva [Corson and Siggia, 2012] and Drosophila bristle patterns [Corson et al., 2017]).

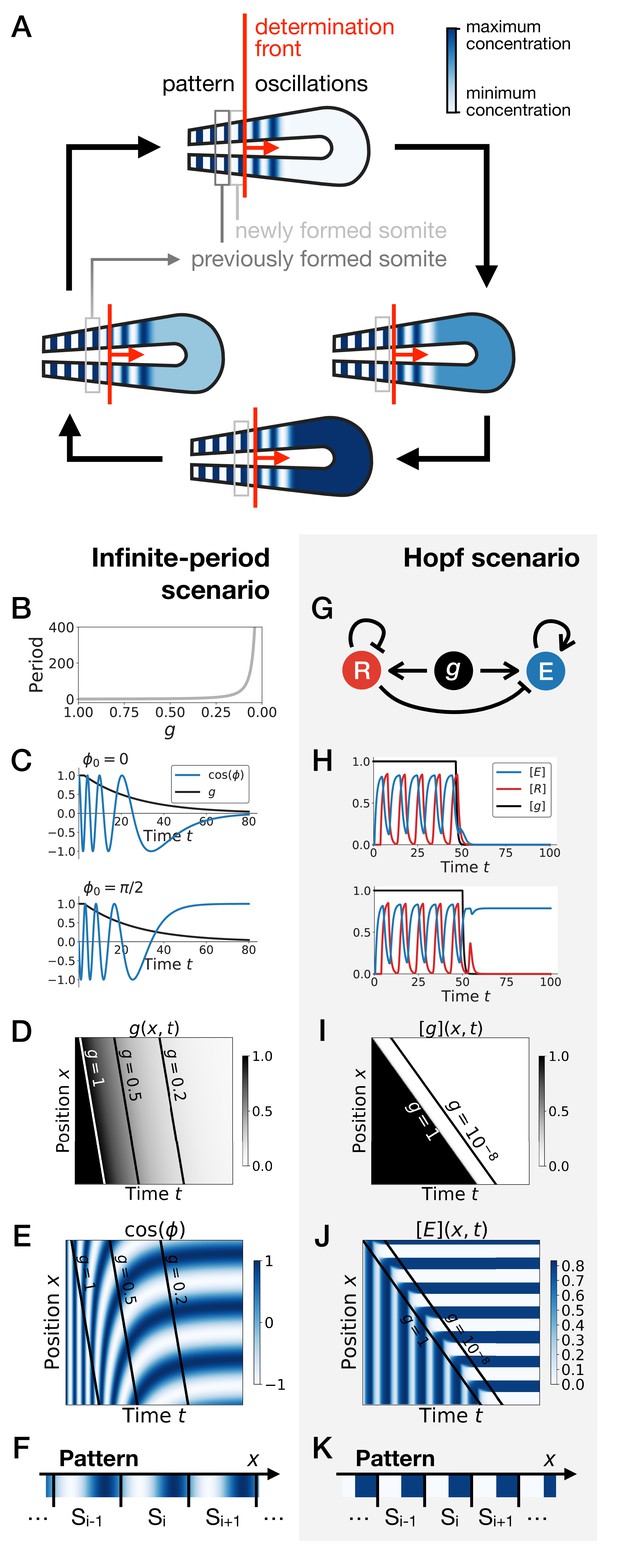

The Clock-and-Wavefront model (Cooke and Zeeman, 1976), accounting for the observed dynamical somite (vertebrae precursors) formation, was inspired by catastrophe theory. The model predicted that a retracting wavefront translates the periodic expression of a genetic clock into a spatial pattern via ‘catastrophic’ transitions demarcating the positions of the somites (Figure 1A). Identification of the predicted clock in 1997 (Palmeirim et al., 1997) has since led to many subsequent theoretical and experimental works, including observation of similar clocks in multiple arthropods (El-Sherif et al., 2012; Sarrazin et al., 2012). Cooke and Zeeman, 1976 originally assumed that the clock is an external process, blind to the subsequent segmentation process it directs. However, it has been very clear from the early experiments in Palmeirim et al., 1997 that cellular oscillators increase their period prior to segmentation, leading to traveling waves of various signalling pathways such as Notch (Giudicelli et al., 2007; Morelli et al., 2009; Figure 1A). Importantly, Notch waves eventually stabilize into a pattern of delta ligand stripes (Giudicelli and Lewis, 2004; Jiang et al., 2000), with a functional continuity between the dynamic and the static regime. Indeed, it has been shown that the dynamical phase of the clock is encoded into static rostro-caudal identities (Oginuma et al., 2010). This suggests that the observed oscillation is not a simple external pacemaker for segment formation: rather, clocks, associated waves and the resulting stripes combine into an emergent process leading to proper fate encoding. Segmentation thus possibly appears as the canonical example of transition from a dynamical gene expression regime to a static functional one.

Scenarios for segment formation.

(A) General phenomenology of segment or somite formation. The white to blue gradient represents the oscillating system (e.g. some Notch signaling pathway gene). The determination front (red vertical line) sweeps the embryo in the posterior direction (red arrow) and translates the periodic expression of a genetic clock into a spatial pattern. (B–F) Pattern formation with the infinite-period scenario. (B) Period divergence is imposed as control parameter decreases from 1 to 0. (C) Two simulated cells with the same dynamics of end up with different final values of the phase. (D–E) Kymographs showing respectively the dynamics of parameter used in the simulated embryo and the dynamics of the genetic clock. (F) Schematic of the final pattern. (G–K) Pattern formation with the Hopf scenario. (G) Schematic of the gene regulatory network. (H) Depending on the dynamics of , simulated cells can end up with either a high or a low concentration of protein E. (I–J) Kymograph showing respectively the dynamics of parameter g used in the simulated embryo and the dynamics of protein E. (K) Schematic of the final pattern. The boundary between two segments (‘Si’) is set arbitrarily at the transition from high to low concentrations of protein E.

Two broad scenarios have been proposed to model this process (see Figure 1) . In the first scenario, the period of the individual oscillators is diverging to infinity as they become more anterior (or similarly, the frequency of the clock is approaching zero), automatically giving rise to a fixed pattern (Figure 1B–F). This model corresponds to Julian Lewis’ model for c-hairy one expression pattern in somitogenesis (appendix of [Palmeirim et al., 1997]), and it is possible to experimentally quantify the period divergence within this model (Giudicelli et al., 2007). This also corresponds to the implicit scenario of many theoretical models assuming that the frequency of the clock approaches zero as cells get more anterior, such as the models in Ares et al., 2012; Morelli and Jülicher, 2007, possibly with a sharp discontinuity suppressing period divergence (Jörg et al., 2015; Soroldoni et al., 2014). Those models are appealing owing to their simplicity, since all behaviour is encoded in a dynamical frequency gradient (possibly mediated by FGF [Dubrulle and Pourquié, 2004]). However, it is unclear what happens from a dynamical systems theory standpoint (a noteworthy exception being the proposal that the gradient emerges through a singularity in phase similar to the Burger’s equation [Murray et al., 2013]). In particular, the pattern in this scenario literally corresponds to a frozen clock, such that there is an infinite number of local steady states corresponding to the frozen phases of the oscillators.

A second scenario grounded in dynamical systems theory has been proposed (François and Siggia, 2012). In this scenario, a genetic network transits from an oscillatory state to an ensemble of (stable) epigenetic states (in Waddington’s sense) fixing the pattern. Possible examples include the initial reaction-diffusion based model by Meinhardt, 1986, or the cell-autonomous model under morphogen control evolved in François et al., 2007; Figure 1G. Based on geometric arguments, if bifurcations are local, the most generic model of this transition is expected to present two steps as explained in François and Siggia, 2012. As a steep control parameter (possibly controlled by a morphogen such as FGF) decreases, the oscillation dies out through a Hopf bifurcation, leading to a single transient intermediate state. Then, for even lower values of the morphogen, one or several new (stable) states appear (technically through saddle-node bifurcations, see Figure 1—figure supplement 1). If the system translates rapidly enough from the oscillatory regime to the multistable regime, a pattern can be fixed (Figure 1H–K). Contrary to the previous scenario where the period of the clock goes to infinity, a Hopf bifurcation is associated to a finite period when the clock stops. The pattern of gene expression itself is laid thanks to multiple expression states discretizing the phase of the clock (Figure 1—figure supplement 1). Importantly, a finite number of states are observed, for example anterior and posterior fates within one somite (as first pointed out by Meinhardt, 1982).

In this paper, we revisit those ideas with explicit modeling to characterize the behavior of systems transitioning from a dynamical regime (such as an oscillation) to a static multistable regime. We introduce two new assumptions: 1. the two different phases of developmental expression (dynamic and static) can be separated into two independent sets of transcriptional modules acting on several genes simultaneously, and 2. the system smoothly switches from one set to the other. This proposal is motivated by the recent suggestion in insects that different sets of enhancers control waves of gap genes at different phases of embryonic growth (El-Sherif and Levine, 2016). Such assumptions explain the so-called ‘speed-gradient’ model suggested to explain the gene expression wave dynamics observed during AP patterning in the beetle Tribolium (Zhu et al., 2017) (see Figure 1—figure supplement 2) and (with some additional assumptions) the more subtle gene expression dynamics observed during AP patterning in Rudolf et al., 2020; El-Sherif and Levine, 2016. Using both gene-network and geometric formalisms, we characterize the types of bifurcations found in systems transitioning from a dynamic to a static regime. Surprisingly, we find that if the transition is smooth enough, global bifurcations appear. This situation is different from the standard scenario (Hopf and saddle-nodes) that we nevertheless recover if the transition is more non-linear. This is a generic result that is better studied and understood using geometric models. We further show that the transition through a global bifurcation is more robust than the sequence of Hopf and saddle-node bifurcations with respect to several perturbations that we simulate. Finally, we find that this model can explain many features of metazoan segmentation, such as ‘speed-gradient’ mechanisms or changes of spatial wave profiles due to relaxation-like oscillations, and we discuss biological evidence and implications. This geometric approach thus offers a plausible scenario underlying embryonic patterning with many associated experimental signatures.

Model

In the following, we consider a class of developmental models based on the combination of (at least) two different transcriptional modules. Biologically, those two modules correspond to two sequential developmental phases. The main assumptions are that those transcriptional modules are globally regulated for multiple genes at the same time (which could be done for instance through chromatin global regulations) and that there is a continuous transition from one to the other. Here, we focus on metazoan segmentation and regionalization, but the formalism might be applicable to other patterning processes where both an initiation phase and a maintenance phase have been described.

We use ordinary differential equations to model our system. Calling a vector encoding the state of all proteins in any given cell (typically corresponds to concentrations of proteins), a generic single-cell equation describing all models presented in the following is:

In Equation 1, variable encodes an external control parameter of the developmental transition. For example, could be an external morphogen concentration driving patterning, but more complex situations with feedback are possible, where could also be part of the system (e.g. the phase difference between oscillators [Beaupeux and François, 2016; Sonnen et al., 2018]). For simplicity, we rescale variables so that is constrained between 0 and 1. The terms and correspond to different sets of modules, their influence on the dynamics being weighted by functions and , respectively. The term encodes the noise. Finally, represents dynamical terms that are independent of the transcriptional modules, such as protein degradation.

We focus here on the simplest two-module case, where encodes a multistable system (i.e. with multiple fixed points at steady state) and a dynamic system (i.e. oscillatory). In this situation we will assume , , , and , meaning that for the network is in a pure dynamic phase, while for the network is multistable. Details on the specific forms of , , and are given in the following and in the Appendix. We study two types of models: gene-network like models where and explicitly model biochemical interactions between genes (such as transcriptional repression), and geometric models where and directly encode flows in an abstract 2D phase space, similarly to Corson and Siggia, 2017.

We model an embryo as a line of cells, corresponding to the antero-posterior axis. The dynamics within each cell (position ) is described by Equation 1. The only difference between cells is that the dynamics of is a prescribed function of , for example we assume that there is a function describing the dynamics of a morphogen. We focus on the transition between the two regimes as continuously changes from 1 to 0 in different cells as a function of time. We will typically consider a sliding morphogen gradient moving along the antero-posterior axis with speed , described by where the function encodes the shape of the morphogen, and parameter is a measure of the gradient’s spatial steepness.

We also include noise in the system with the help of an additive Gaussian white noise. For gene networks, we follow an approach similar to the τ-leaping algorithm (Gillespie, 2001), where the variance of the noise corresponds to the sum of the production and the degradation terms (approximating independent Poisson noises). A multiplicative noise intensity term is introduced, where Ω can be interpreted as the typical concentration of the proteins in the system, so that bigger Ω corresponds to lower noise. In addition, we add diffusion coupling the cells in the stochastic gene network models. For the geometric model, the variance of the noise is held independent of the position . A more detailed description of the noise and diffusion terms is provided in the Appendix.

All source codes and data used for this paper are available at: https://github.com/laurentjutrasdube/Dual-Regime_Geometry_for_Embryonic_Patterning (copy archived at https://github.com/elifesciences-publications/Dual-Regime_Geometry_for_Embryonic_Patterning).

Relation to existing biological networks

The model described above aims at being generic, but one can relate it to existing developmental networks. A two modules dichotomy was initially proposed in the Drosophila context, where two enhancers (early and late) were observed for Krüppel and knirps (El-Sherif and Levine, 2016). An extension of this model has been proposed in Zhu et al., 2017 for a gap gene cascade under control of the maternal gene Caudal, which would thus play the role of (a detailed model is reproduced in the Supplement, see Figure 1—figure supplement 2). The dynamic module corresponds to a genetic cascade comprising hunchback, Krüppel, mille-pates, and giant. Each gene in this sequence activates the next one, and later genes repress earlier ones. For the static module, each gene self-activates, and hunchback and Krüppel repress one another. The situation is less clear for vertebrates, given the plethora of oscillating genes and possible redundancy in three different pathways, specifically Notch, Wnt and FGF (Dequéant et al., 2006). The two-module system we consider assumes both the dynamic and static regimes are realized by the same set of genetic components (genes and/or signalling pathways). Notch signaling is an ideal candidate to be a component of both a dynamic and a static regime that might mediate vertebrate somitogenesis, since Notch is implicated in both the core segmentation clock of vertebrates (e.g. via Lfng [Dale et al., 2003], or the hes/her family in zebrafish [Lewis, 2003]) and oscillation stabilization (Jiang et al., 2000; Oginuma et al., 2010). Importantly, several genes of the Notch signalling pathways (e.g. DeltaC in zebrafish) are first expressed in an oscillatory manner then stabilize in striped patterns, as expected in our model (Giudicelli and Lewis, 2004; Wright et al., 2011). There are also multiple genetic interactions between members of the Notch pathway, in particular again Delta genes (Schwendinger-Schreck et al., 2014), with different roles and changes of regulations in the dynamic vs static phase (see e.g. [Wright et al., 2011]).The oscillation itself could be mediated through one or several negative feedback loops in this pathway (Lewis, 2003), and stabilization could be realized through one of the multiple Notch signaling interactions (possibly via cell coupling, similarly to what is observed in other systems [Corson and Siggia, 2017]).

Results

A model for the transition between two genetic modules: Hopf vs. SNIC

In Zhu et al., 2017, it was suggested that the transition from a ‘wave-like’ behaviour to a static pattern during Tribolium segmentation was mediated by a smooth transition from one set of modules (corresponding to the oscillatory phase) toward another (corresponding to the fixed pattern). This explained the ‘speed-gradient’ mechanism where the typical time-scale of the dynamical system depends strongly on an external gradient (in this case, the concentration of the transcription factor Caudal). In the Appendix, we further study the associated bifurcation, and observe that new fixed points corresponding to the stabilization of gap gene expressions appear on the dynamical trajectory of those gap genes (Figure 1—figure supplement 2F). In simple words, the gap gene expression pattern slowly ‘freezes’ without any clear discontinuity in its behaviour from the dynamic to the static phase, which is reminiscent of the ‘infinite-period’ scenario displayed on Figure 1.

We first aim to generalize this observed property. A simple way to generate waves of gene expressions (as in the gap-gene system described above) is to consider an oscillatory process, so that each wave of the oscillation corresponds to a wave of gap genes. We are not saying here that the gap-gene system is an oscillator, but rather that its dynamics can be encompassed into a bigger oscillator (Verd et al., 2018). The other advantage of considering oscillators is that we can better leverage dynamical systems theory to identify and study the bifurcations. Furthermore, it allows for a better connection with oscillatory segmentation processes in vertebrates and arthropods.

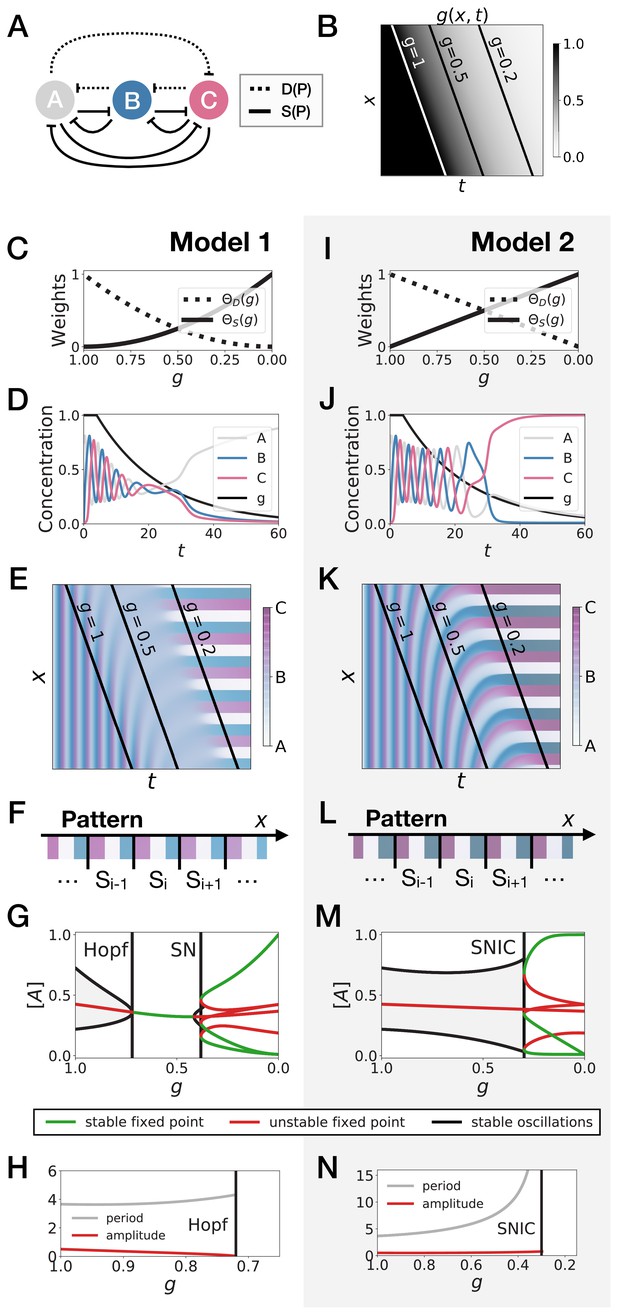

We thus start with an idealized gene regulatory network with three genes under the control of two regulatory modules (Figure 2). In the dynamic phase , we assume that the three genes are oscillating with a repressilator dynamics (Elowitz and Leibler, 2000), so that the system keeps a reference dynamical attractor and an associated period. In the static phase , we assume that the module encodes a tristable system via mutual repression (Figure 2A).

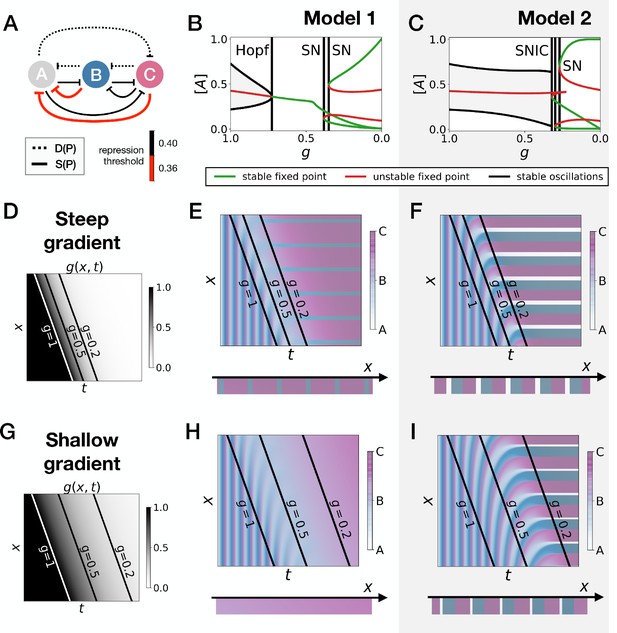

3-gene models for pattern formation.

(A) Schematic of the gene regulatory networks encoded by the dynamic term (dotted line) and the static term (solid line). (B) Kymograph showing the dynamics of parameter used in the simulated embryos for both Models 1 and 2. (C–H) Simulation results for Model 1. (C) Weights of the dynamic (dotted line) and static (solid line) modules as a function of parameter . (D) Gene concentration and value of parameter inside a representative simulated cell as a function of time. (E) Kymograph showing the dynamics of gene expression in the simulated embryo. Transparent colors are used to represent the concentration of the three genes, so that mixes of the three genes can be easily perceived. Genes A, B, and C are shown in transparent white, blue and purple, respectively. Simulated cells with intermediate concentrations of all genes appear grey. (F) Schematic of the final pattern. (G) Bifurcation diagram showing the types of dynamics available to the simulated embryo as a function of parameter . The maximum and minimum concentrations of gene A on the stable limit cycles are shown in black. Stable and unstable fixed points are shown in green and red, respectively. ‘SN’ stands for saddle-node bifurcation. (H) Period (grey line) and amplitude (red line) of the oscillations along the stable limit cycle. (I–N) Simulation results for Model 2.

We study the dynamics in a simulated embryo under the control of a regressing front of (Figure 2B). Transition from the dynamic module to the static module is expected to form a pattern by translating the phase of the oscillator into different fates, implementing a clock and wavefront process similar in spirit to the one in François et al., 2007. We compare two versions of this model, presenting the two different behaviors that we found. In Model 1 (Figure 2C–H), the weights of the two modules are non-linear in : and (Figure 2C). In Model 2 (Figure 2I–N), the weights of the two modules are linear in : and (Figure 2I). We note that the initial and final attractors of both models are identical. Importantly, only the transition from one set of modules (and thus one type of dynamics) to the other is different. This two-module system thus offers a convenient way to compare the performance of different modes of developmental transition while keeping the same ‘boundary conditions’ (i.e. the same initial and final attractors).

Figure 2E and Figure 2K show the kymographs for both models without noise, with behaviors of individual cells in Figure 2D and Figure 2J. While the final patterns of both models are the same (Figure 2F and Figure 2L), giving rise to a repeated sequence of three different fates, it is visually clear that the pattern formed with Model 2 is more precise and sharper along the entire dynamical trajectory than the one formed with Model 1, which goes through a ‘blurry’ transitory phase (compare mid-range values of on Figure 2E and Figure 2K).

To better understand this result, we plot the bifurcation diagram of both models as a function of in Figure 2G and Figure 2M. As decreases, Model 1 is the standard case of a local Hopf bifurcation (Strogatz, 2015), which happens at . Three simultaneous saddle-node bifurcations appear for lower values of , corresponding to the appearance of the fixed points defining the three regions of the pattern. The behaviour of Model 2 is very different: the fixed points form on the dynamical trajectory, via three simultaneous Saddle Node on Invariant Cycle (or SNIC) bifurcations (Strogatz, 2015). Both models display waves corresponding to the slowing down of the oscillators, leading to a static regime. In Model 1, the time-scale disappears with a finite value because of the Hopf bifurcation (Figure 2H). For Model 2, it diverges because of the SNIC (Figure 2N), suggesting an explicit mechanism for the infinite-period scenario of Figure 1.

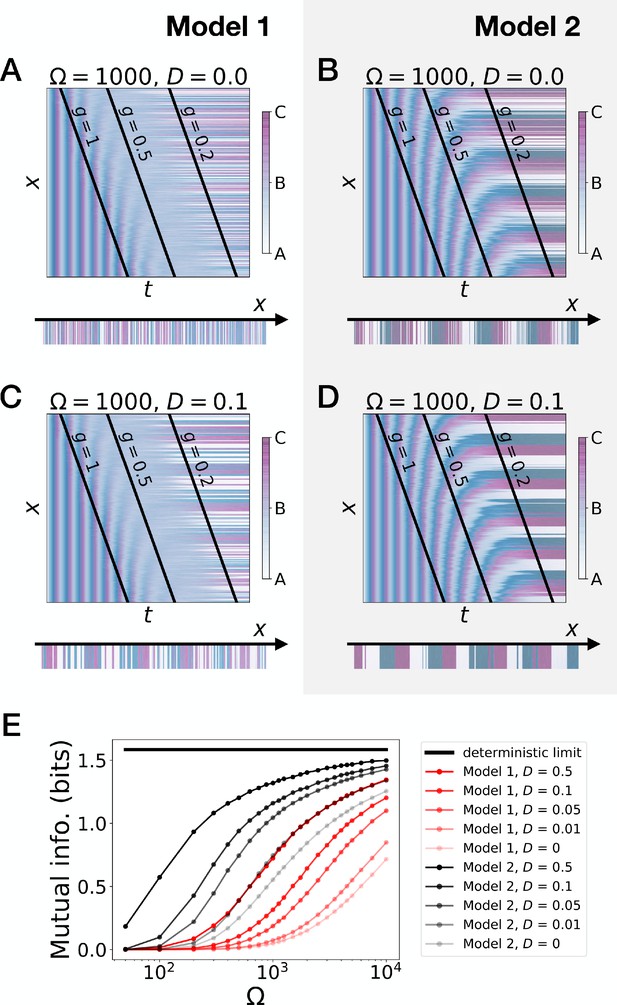

To further quantify the differences of performance between the two models, we introduce noise (encoded with variable Ω, see the Model section and the Appendix) and diffusion (Figure 3A–D). We also define a mutual information metric measuring how precisely the phase of the oscillator is read to form the final pattern (Figure 3E, see the Appendix for details), consistent with the experimental observation in vertebrate segmentation that oscillatory phases and pattern are functionally connected (Oginuma et al., 2010). Intuitively, this metric quantifies in a continuous way the number of fates encoded by the system at steady state. Ideal mutual information for the three mutually exclusive genes of Models 1 and 2 gives bits of mutual information, meaning that the pattern deterministically encodes the phase of the cycle into three static fates with equal weights. While addition of noise decreases this mutual information as expected (Figure 3E), Model 2 (black curves) always outperforms Model 1 (red curves). For a reasonable level of noise corresponding to a few thousands of proteins in the system, Model 2 can encode 21.3 ∼ 2.5 fates, close to the optimum 3. Furthermore, for a given diffusion constant, Model 1 requires a ten times smaller noise level than Model 2 to encode the same amount of mutual information, which thus indicates much better noise resistance for Model 2.

Stochastic simulations of the 3-gene models.

(A–D) Kymographs showing the stochastic dynamics of gene expression in simulated embryos. The specific values of the typical concentration Ω and of the diffusion constant D used to generate each kymograph are indicated on the panels. The concentration of the three genes at the last simulated time point is shown schematically in the lower part of each panel. (E) Mutual information as a function of typical concentration Ω for Model 1 (red lines) and Model 2 (black lines). Paler colors correspond to lower values of the diffusion constant D. The thick horizontal black line indicates the ideal mutual information for three mutually exclusive genes. Note that higher values of Ω correspond to lower noise levels.

Those observations suggest that appearance of stable fixed points through SNIC bifurcations rather than through Hopf bifurcations generates a more robust pattern. The superiority of Model 2 can be rationalized in the following way: when there is a Hopf bifurcation, only one fixed point exists for a range of values, so that all trajectories are attracted towards it. This corresponds to the ‘blurred’ zone in the kymographs of Figure 2 and Figure 3. In presence of noise, the effect is to partially erase the memory of the phase of the oscillation when only one fixed point is present for the dynamics. Conversely, a SNIC bifurcation directly translates the phase of the oscillation into fixed points, without any erasure of phase memory, ensuring higher information transfer from the dynamic to the static phase, and therefore more precise patterning.

The Hopf bifurcation of Model 1 occurs when the weights of the dynamic and static modules become small compared to the degradation term, which generates an ‘intermediate regime’ with one single fixed point after the oscillations of the dynamic module and before the multistability of the static module. The specific form of the weights is not what determines the bifurcation, but rather the presence or absence of an intermediate regime. We confirm this observation with similar 3-gene models that used Hill functions for the weights and (Figure 2—figure supplement 1 and Figure 3—figure supplement 1). Interestingly, we get both Hopf and SNIC bifurcations with the same shape for the two weights; the Hopf is obtained by shifting the weight of the dynamic term toward larger values of the control parameter. This effectively generates the required intermediate regime where both weights are small compared to the degradation term.

Gene-free models present a similar geometry of transition

Hopf and saddle-node bifurcations are ‘local’ bifurcations, in the sense that changes of the flow in phase space are confined to an arbitrarily small region of phase space as the bifurcation is approached. They do not in principle require complex changes of the flow or fine-tuning of the parameters to happen. As such, they are the most standard cases in many natural phenomena and in most theoretical studies. Conversely, SNIC bifurcations are ‘global’ bifurcations (Strogatz, 2015; Ermentrout, 2008): they are associated to changes of the flow in large regions of phase space (e.g. when a limit cycle disappears with a non-zero amplitude) and usually require some special symmetries or parameter adjustments to occur (e.g. to ensure that a saddle-node collides with a cycle).

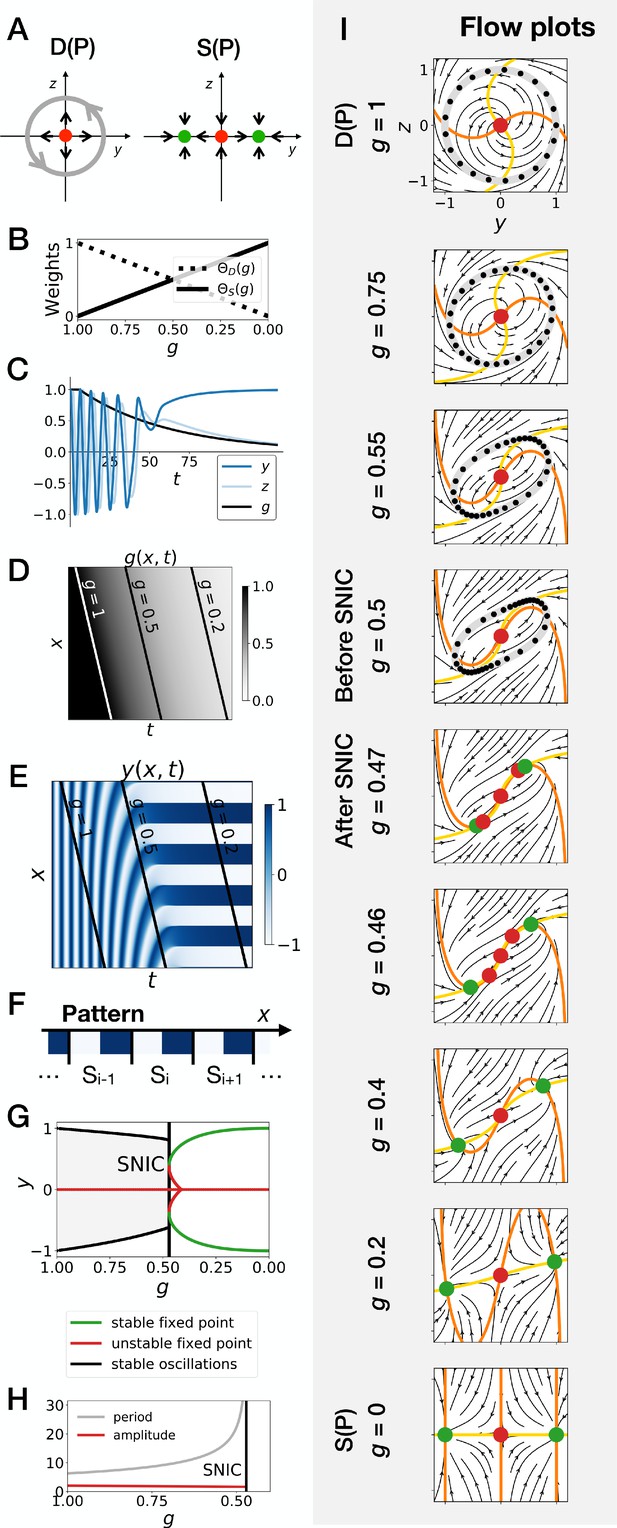

It is therefore a surprise that SNIC bifurcations spontaneously appear in the models considered here. To better understand how this is possible and if this is a generic phenomenon, we follow ideas first proposed by Corson and Siggia, 2012 and consider geometric (or gene-free) systems. We aim to see if: 1. SNIC bifurcations are generically observed, and 2. a model undergoing a SNIC bifurcation is in general more robust to perturbations than a model undergoing a Hopf bifurcation, with initial and final attractors being held fixed. We thus build 2D geometric versions of the system (variables and ). The dynamic module is defined by a non-harmonic oscillator on the unit circle, while the static module is defined by two stable fixed points, at , (see Figure 4A, and the Appendix for the equations). Like previously, we build a linear interpolation between the two systems as a function of and explore the consequence on the bifurcations (Figure 4B–H). Since the flow in the system is 2D, we can also easily visualize it (Figure 4I and Figure 4—video 2).

Gene-free geometric model for pattern formation (Model 2).

(A) Schematic of the flow encoded by the dynamic and static terms. The grey circle represents oscillations on the unit circle. Green and red dots represent unstable and stable fixed points, respectively. (B) Weights of the dynamic (dotted line) and static (solid line) modules as a function of parameter . (C) Values of geometric coordinates and and of parameter in a simulated cell as a function of time. (D–E) Kymographs showing respectively the dynamics of parameter used in the simulated embryo and the dynamics of coordinate . (F) Schematic of the final pattern. (G) Bifurcation diagram showing the types of dynamics available to the simulated embryo as a function of parameter . The maximum and minimum values of coordinate on the stable limit cycles are shown in black. Stable and unstable fixed points are shown in green and red, respectively. (H) Period and amplitude of the oscillations. (I) Flow in phase space for different values of parameter . The same color scheme than panel A is used to represent the cycles and the fixed points. Positions along the limit cycle at time points separated by a fixed time interval are indicated with black dots, so that variations of the speed of the oscillations along the limit cycle can be visualized. The yellow and orange lines represent the and nullclines, respectively.

In brief, this geometric approach confirms all the observations made on the gene network model of the previous section, and further clarifies the origin of the SNIC bifurcation. Because of the smooth transition between modules, the entire flow in 2D needs to interpolate from a cycle to a bistable situation. When both modules have close to equal weights (around ), the flow and associated cycle concentrate around two future fixed points. This appears in retrospect as the most natural way to interpolate between the two situations since both types of attractor (stable limit cycle, and multiple stable fixed points) are effectively present at the same time around . For this reason, the oscillations are also more similar to relaxation oscillations, rapidly jumping between two values corresponding to the future fixed points. When is further lowered, the weight of the static module dominates and ‘tears apart’ the cycle, forming two fixed points.

This situation is so generic that in fact, to obtain a Hopf bifurcation, we need to mathematically reinforce the fixed point at for intermediate values of . More precisely, three things are required to get a Hopf bifurcation. First, we need to add an extra term, the ‘intermediate module’, characterized by a single fixed point at and . Without this module, we consistently get a SNIC bifurcation, for all forms of coupling tested, including linear and non-linear couplings (Figure 4—figure supplement 1A–D). Second, we need to set the weight of the intermediate module to 0 for and , so that we get the oscillations of the dynamic module at the beginning of the simulation and the bistability of the static module at the end of the simulation. We achieve this by setting the weight of the intermediate term to , which is of second order in . Third, we need to make the weights of the dynamic and static modules smaller than the weight of the intermediate module for intermediate values of the control parameter, that is for around 0.5. This is achieved by using cubic weights for the dynamic and static modules (Figure 4—figure supplement 1G and Figure 4—figure supplement 2). Weights of the fourth order in also lead to a Hopf bifurcation (Figure 4—figure supplement 1H), while linear weights lead to a SNIC bifurcation (Figure 4—figure supplement 1E). Interestingly, quadratic weights lead to simultaneous supercritical Hopf and pitchfork bifurcations (Figure 4—figure supplement 1F). The non-linearity of the coupling helps reinforce the relative weight of the intermediate module for values of around 0.5, but the exact shape of the non-linearity is not crucial. Furthermore, our mutual information metric confirms that the pattern is more robustly encoded when the system goes through a SNIC bifurcation rather than through a sequence of Hopf and pitchfork bifurcations (Figure 4—figure supplement 5F).

Detailed simulations of the gene-free model with the intermediate module and with cubic weights for the dynamic and static modules are shown on Figure 4—figure supplement 2. As expected for a Hopf bifurcation, the flow first concentrates on the central fixed point at , before re-emerging in a bistable pattern for lower (Figure 4—figure supplement 2I and Figure 4—video 1). There are technically two types of Hopf bifurcations, depending on the stability of the limit cycle. A Hopf bifurcation is supercritical (resp. subcritical) if a stable (resp. unstable) limit cycle becomes a stable (resp. unstable) fixed point. All Hopf bifurcations discussed previously are supercritical. However, by changing slightly the dynamic module of the gene-free model (and including the intermediate module as well as cubic weights for the dynamic and static modules) we can also obtain a subcritical Hopf bifurcation (Figure 4—figure supplement 3). During this bifurcation, an unstable limit cycle forms around the origin. This unstable limit cycle coexists with the stable limit cycle of the dynamic module for some range of values before they annihilate each other during a so-called ‘saddle-node of limit cycles’ bifurcation. Finally, the two fixed points of the static module form during two simultaneous saddle-node (of fixed points) bifurcations (Figure 4—figure supplement 3I and Figure 4—video 3). Again, our mutual information metric confirms that the pattern is more precise when the system goes through a SNIC bifurcation (Model 2), rather than through supercritical or subcritical Hopf bifurcations (Models 1 and 3, resp.) (Figure 4—figure supplement 5E). Taken together, these results suggest that keeping the static and dynamic attractors fixed, patterning is both more generic and more robustly encoded through a SNIC bifurcation than through a Hopf bifurcation, at least in simple low-dimension models.

Robustness and asymmetry in the fixed points

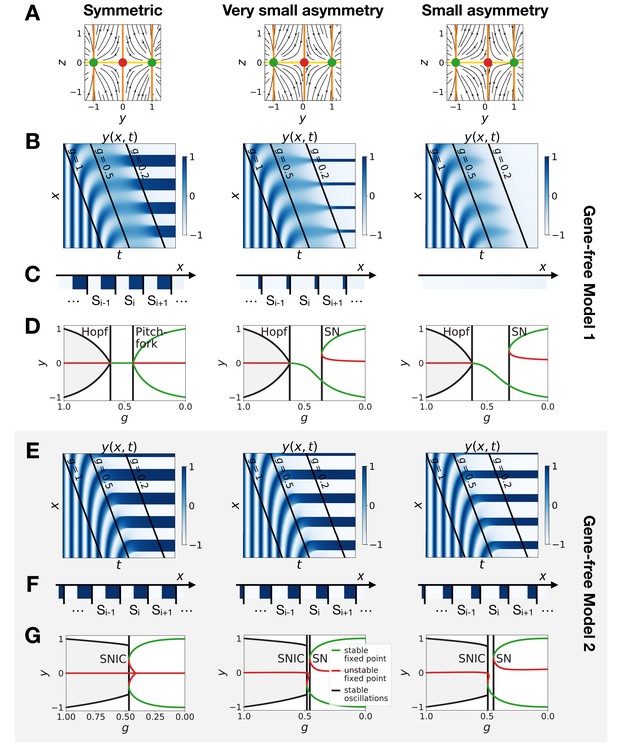

A concern with the results of the previous section might be that those mathematical models are in fact fine-tuned and too symmetrical, so that in particular when the transition occurs, both new fixed points appear for the same value of the control parameter. Furthermore, real biological networks have no reason to be perfectly symmetrical (although evolution itself might select for more symmetrical dynamics if needed). We thus relax our hypotheses to study a system where parameters and trajectories are not symmetrical (Figures 5 and 6).

Perturbation of the morphogen gradient steepness in asymmetric 3-gene models.

(A) Schematic of the gene regulatory networks encoded by the dynamic term (dotted line) and the static term (solid line). The thick red lines indicate stronger repression than the black lines (see the parameters in the Appendix). (B–C) Bifurcation diagram showing the types of dynamics available in Models 1 and 2. The maximum and minimum concentrations of gene A on the stable limit cycles are shown in black. Stable and unstable fixed points are shown in green and red, respectively. The main bifurcations are identified with vertical lines. ‘SN’ stands for saddle-node bifurcation. (D–F) Simulation results for a steep gradient of parameter . (D) Kymograph showing the dynamics of parameter used in the simulated embryos for both Models 1 and 2. (E–F) Kymographs showing the dynamics of gene expression in the simulated embryos of Models 1 and 2. The concentration of the three genes at the last simulated time point is shown schematically in the lower part of the panels. (G–I) Simulation results for a shallow gradient of parameter .

Perturbation of the morphogen gradient steepness in the gene-free geometric models.

(A) Flow plots showing the changes of geometry of the static module. (B–C) Corresponding kymographs and final patterns for Model 1. (D) Associated bifurcations diagrams. ‘SN’ stands for saddle-node bifurcation. (E–F) Corresponding kymographs and final patterns for Model 2. (G) Associated bifurcation diagrams.

Going back first to the gene network model, we induce an asymmetry between the fixed points by changing thresholds of repression in the static phase (Figure 5A). The bifurcation diagrams of Figure 5B–C indicate that the asymmetry of the fixed points indeed breaks the simultaneity of appearance of all fixed points in both scenarios. We nevertheless notice that for those changes of parameters, all bifurcations still happen in a very narrow range of for the SNIC model.

Asymmetry of the fixed points might therefore destroy the advantage of SNIC vs Hopf by creating a transient zone where one of the fixed points always dominates. We thus perform a comparison between Models 1 and 2 with the same asymmetric static enhancers (Figure 5, see also Figure 5—figure supplements 1 and 2, and the Appendix for details). To compare the two cases, we consider different time-scales of the morphogen gradient. The reasoning is that the slower the decay of , the more time the system spends in a region of parameter space without all three final fixed points, allowing the system to relax and ‘lose’ phase information. Conversely, a faster decay of means that less time is spent in a region with few fixed points, and therefore the patterns are expected to be more robust.

We first decrease the thresholds of repression of gene A by both genes B and C (Figure 5A). Results of these simulations are shown in Figure 5: Model 2 with a SNIC bifurcation still outperforms Model 1 with Hopf and saddle-node bifurcations. In particular, it is again visually clear on kymographs that Model 2 produces a robust and well-defined pattern at any time point of the simulations, while Model 1 gives rise to a much ‘fuzzier’ pattern before the transition. Model 1 produces a robust static pattern only for a steep gradient (allowing to quickly move through the ‘fuzzy’ phase) and a weak asymmetry in the static module (Figure 5E). It is brittle to any change of the dynamics of (Figure 5H) or to stronger asymmetry in the static module (Figure 5—figure supplement 1E,H). Conversely, Model 2 is robust to different shapes of the morphogen (Figure 5F,I). Only for a strong asymmetry does the system lose one fixed point (Figure 5—figure supplement 1I), but even in this case transitions through a SNIC bifurcation appear superior to transitions through a Hopf bifurcation.

The fragility of the Hopf bifurcation to asymmetries in the parameters can be understood as follows. In the asymmetric versions of Model 1, one of the fixed points of the static term forms at the Hopf bifurcation, way before the two other fixed points form. It is therefore the only attractor available for a large range of values. However, in Model 2 the same asymmetry only favors one of the fixed points for a small range of values, generating a robust pattern. Again, we can use the mutual information metric defined above to quantify the robustness of the pattern and confirm the superiority of Model 2 (Figure 5—figure supplement 2J). We also confirmed these results for the case of random modifications of the repression thresholds of all interactions in the static term (Figure 5—figure supplement 2).

The asymmetry introduced in Figure 5 changes the shapes of the basins of attraction and the positions of the fixed points. The geometric model allows to change those features independently. The most generic way to introduce an asymmetry in the system is to fix the positions of the fixed points of the static regime and change only the positions of the basins of attraction (the reason is that the future fates depend on the position of the separatrix between different regimes [Corson and Siggia, 2012]). To replicate this situation in the 2D gene-free models, we move the unstable fixed point of the static term along the axis. Results of this procedure are shown on Figure 6 and confirm our results on the gene-network based models: Model 2 bifurcates via a SNIC and is always more robust than Model 1. When we change the positions of the fixed points in the static regime to move them away from the limit cycle (still in an asymmetric way), interestingly both Models 1 and 2 now bifurcate via SNICs (Figure 6—figure supplement 1). Furthermore, we see that for Model 1, the amplitude of the limit cycle decreases before the bifurcation, while for Model 2, the amplitude increases (Figure 6—figure supplement 1E).

We conclude from all those numerical perturbations that even with asymmetric basins of attraction and asymmetric parameters, transitions based on SNIC bifurcations are both more generic and more robust than the ones based on Hopf bifurcations, at least in simple low-dimension models.

Spatial wave profiles: Hopf vs SNIC

Our theoretical study suggest that SNIC based transitions are both more robust and more generic than Hopf/saddle-nodes ones. We thus now examine ways to distinguish between Hopf and SNIC based transitions experimentally. The natural method would be to modify the value of the control parameter of the bifurcations (in our case parameter ) and check how the attractors of the system change. A recent experimental example can be found in the auditory hair-bundle context where it has been suggested that Hopf bifurcations can be distinguished from SNIC bifurcations by tuning the external driving force (Salvi et al., 2016). However, the situation in development is different from any sensory system since the actual control parameters are not known and are likely combinations of various signaling systems (such as FGF or Wnt). There could also be many compensatory developmental mechanisms, making it difficult to fully take control of the system, as well known by developmental geneticists.

In the absence of a direct control, we have to rely on indirect measurements. The most obvious choice is to use the antero-posterior position along the embryo (or along the PSM in the case of somite formation) as a proxy for the control parameter. In situs are informative of what locally happens in insects (El-Sherif et al., 2012; Zhu et al., 2017). In vertebrates, it is possible to monitor in real-time segmentation oscillators in embryos (Aulehla et al., 2008; Webb et al., 2016; Delaune et al., 2012) and in tissue cultures (Lauschke et al., 2013; Matsuda et al., 2020; Diaz-Cuadros et al., 2020), so that many properties of the cycles can be inferred.

A first relevant metric (used in Salvi et al., 2016) is the shape of the limit cycle as a function of the control parameter. There is a clear contrast between Hopf and SNIC bifurcations on our simulated kymographs: for Hopf bifurcations, as mentioned above the transition zone is ‘blurred’ because the damped oscillations relax toward the unique fixed point, while for SNIC bifurcations the oscillations localize close to the fixed points, and as a consequence the pattern is gradually reinforced (Figures 2, 3, 4, 5; damped oscillations are especially visible in the geometric model). To better visualize the ‘blurry’ transition zone characteristic of Hopf bifurcations in the gene-free model (resp. in the 3-gene model), we compute the distribution of the values of the geometric variables (resp. of the gene concentrations) for different values of parameter (Figure 7—figure supplement 2). In models with Hopf bifurcations, the distribution becomes very narrow for intermediate values of , which is a direct consequence of the damped oscillations past the Hopf bifurcations. This suggests that measuring experimentally the distribution of gene concentrations across developmental time at fixed antero-posterior positions can help distinguish SNIC from Hopf bifurcations during actual pattern formation processes. The experimental picture, both from in situs and live imaging, does not support a narrowing of the distribution: it shows clear increases of amplitude that seems more consistent with a gradual reinforcement of the pattern, similar to a SNIC (see e.g. [Delaune et al., 2012]).

Another metric that could help identify the type of bifurcation is the time evolution of the period (resp. of the frequency), which presents a discontinuity for both types of Hopf bifurcations, but continuously goes to infinity (resp. zero) for SNIC bifurcations. The local spatial wavelength of the pattern provides a continuous measurement related to the local period (as first used and derived in Giudicelli et al., 2007). Calling the somite size (or the wavelength of anterior/posterior markers within somites after clock stopping), and the respective period and local wavelength of the pattern at position (with being the tail and the front), and assuming the cells move towards the anterior with constant speed, we have

In the posterior so that is infinite: all cells are synchronized locally. As cells move towards the anterior, decays and decreases but stays bigger than . If there is a Hopf bifurcation, one expects that the clock will stop with a final period , corresponding to a critical wavelength of the wave , which is strictly greater than . There is therefore in principle a discontinuity between the wavelength of the propagating wave in the PSM and the wavelength of the static pattern. Conversely, if goes continuously to infinity, decays continuously to converge towards (as measured in Giudicelli et al., 2007). This is a simple and intuitive experimental outcome: in this situation the wavelength of the propagating wave in the PSM decreases until it exactly matches the pattern of anterior markers. Therefore, a signature of the finite vs infinite period bifurcation might be visible by comparing the local wavelength of propagating waves in the PSM to the wavelength of anterior markers in the pattern. Practically, monitoring the distance between the peaks of the oscillations does not give a clear difference between Hopf and SNIC, the reason being that such a discrete measurement can smooth out an abrupt change in the period and that past the Hopf bifurcation, damped oscillations actually give rise to a ‘ghost’ frequency which can decrease quite significantly (see Figure 2—figure supplement 2). Therefore, a measurement of the local wavelength might be less conclusive than a measurement of the amplitude. Nevertheless, we notice that a SNIC scenario gives rise to a combination of an increase of the amplitude with a continuous shrinking of the wavelength, meaning that many refining waves (corresponding to broad variations of periods) will simultaneously propagate.

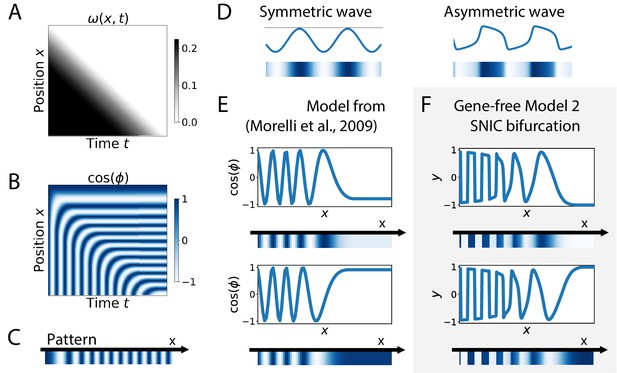

Finally, SNIC bifurcations are also accompanied by characteristic changes of the shape of spatial wave profiles that might be observable in experiments. We further compare a SNIC-based model to a phase model where an infinite-period behaviour is explicitly assumed, namely the model of a collection of coupled oscillators from Morelli et al., 2009. A kymograph of the spatio-temporal profile of the frequency imposed on the oscillators is shown in Figure 7A, and the dynamics of the resulting pattern formation process is shown on the kymograph of Figure 7B, with the final pattern on Figure 7C. The most striking difference is observed on the shape of the spatial wave profile as it moves towards the region where the pattern stabilizes. In the infinite-period scenario of Morelli et al., 2009, the phase profile is by construction symmetric (albeit stretched in the posterior compared to the anterior, see Figure 7E). In the SNIC scenario, we see a clear asymmetry in the peaks of the wave profile (as shown schematically in Figure 7D). Indeed, following the peaks of oscillations spatially from anterior to posterior (left to right), we see that the transition from positive to negative values of occurs in two steps. During the first step, the system stays close to for some time, with values decreasing slowly, while in the second step the system goes rapidly towards negative values and reaches fast (Figure 7F). This phenomenon, which gives rise to a ‘sawtooth’ pattern of propagating waves, is observed in all our versions of Model 2 (and is notably absent from all our versions of Model 1, see Figure 7—figure supplement 1). Those spatial asymmetries are likely due to the asymmetries of the limit cycles of relaxation oscillators, where a system jumps between two (or more) steady states in an asymmetric way (which can also be observed in systems close to criticality, see Tufcea and François, 2015). Our model thus offers a simple explanation of wave asymmetry, solving the long-standing problem of the asymmetry of AP vs PA transitions, which is possibly crucial for segment polarity as first suggested by Meinhardt, 1982.

Wave pattern in different models for the infinite-period scenario.

(A) Frequency profile for the simulation of the model of coupled oscillators from Morelli et al., 2009. (B–C) Kymograph showing the dynamics of the phase of the oscillators and the corresponding final pattern. (D) Two schematic examples of possible wave patterns (symmetrical vs asymmetrical). The symmetric wave is obtained with a sine function. The asymmetric wave is retrieved from simulations of a Van der Pol oscillator. (E) Wave pattern for the model of Panels (A–C) for two different time points. (F) Wave pattern for Model 2 of Figure 4 for two different time points.

Discussion

In this work, we have explored the dynamical properties of generic two-module systems, where one module corresponds to a dynamic phase of genetic expression and the other corresponds to a static phase controlling embryonic patterning. The unexpected result is that those models typically present global bifurcations where new fixed points appear on the trajectories in phase space. Such SNIC bifurcations come from the smooth interpolation between a flow defining an oscillator in phase space and a landscape characterized by several fixed points. The oscillating attractor then gets continuously deformed until it breaks into several fixed points, leading to the SNIC. This interpolation is a direct consequence of the assumed two-module control as shown on multiple examples above. Importantly, the overall developmental sequence in this context is emergent, since the dynamics close to the bifurcation cannot be understood independently from the static or dynamic modules only. SNIC bifurcations also provide robustness to various perturbations (since fixed points appearing on cycles better preserve information on the oscillatory phase). Below we detail how this model also recapitulates many observed features of metazoan segmentation.

Experimental evidence

In absence of a direct handle on the control parameter, comparison of SNIC and Hopf bifurcations in simulated systems reveal three possible signatures of SNIC bifurcations in the propagating antero-posterior waves of genetic expression: 1. oscillations gradually reinforcing the pattern for SNIC (instead of damped oscillations for Hopf) 2. relaxation-like oscillations leading to some asymmetry in the wave pattern, and 3. a continuous shrinking of the spatial wavelength during the dynamic phase to match the static pattern for SNIC, with many well-defined propagating waves.

For prediction 1, decades of in situs of oscillating genes and of experimental monitoring of Notch signalling pathways argue against damped oscillations in somitogenesis. Weak waves gradually refine into well-defined stripes in the anterior PSM, in most species (see for example the comparison made in Gomez et al., 2008), suggesting an increase of amplitude in oscillations. Consistent with this, visualising segmentation clock oscillations in live vertebrate embryos suggests an increase of oscillation amplitude as cells get more anterior in the PSM (Delaune et al., 2012; Lauschke et al., 2013). A similar observation was also made for gap genes during short-germ segmentation in Tribolium (Zhu et al., 2017).

For prediction 2, in somitogenesis, there is an asymmetry in the wave pattern before stabilization. The transition from the high to low phase within one somite is shallower than the transition between those two phases from one somite to the other (i.e. posterior of one somite to anterior of the next), as detailed in Shih et al., 2015. A possible read-out is the downstream pattern of Cadherin controlling segment boundary formation, which presents a sawtooth profile (McMillen et al., 2016), consistent with the observed asymmetry in the wave pattern. It has been proposed that the main reason for the clock control was precisely to generate such periodic sawtooth pattern, and a SNIC bifurcation offers a plausible mechanism. In insects, it has been proposed that one of the roles of traveling waves of segmentation genes (e.g. eve genes shift) is indeed to provide segment polarity (Rothschild et al., 2016; Clark, 2017; Clark and Akam, 2016), which is consistent with this proposal, although precise quantification of wave asymmetry has not been done in this context to our knowledge. In situs of gap genes in short germ insects show asymmetry between the anterior and posterior borders of the propagating gene expression waves as well (Zhu et al., 2017).

For prediction 3, the slowdown of oscillations during vertebrate segmentation appears quite variable between species. Mouse segmentation clock seems at first more consistent with a relatively sudden Hopf scenario. There is roughly a 2π phase shift between the posterior and the front, consistent with only a moderate change of the period/wavelength (Lauschke et al., 2013). Other species have several propagating waves within the PSM, seemingly more consistent with a broader period regime. In zebrafish, in vivo real-time imaging suggests a wavelength of propagating waves in the anterior PSM of roughly twice the segment length, meaning that the period of the clock at the front is at least half of the period in the tail bud (Shih et al., 2015). However, this measurement likely overestimates the wavelength and thus underestimates the period at the front since it is based on a peak to peak measurement between the last two waves. Thus, the period of the clock might further increase in the anterior, consistent with a period divergence and with measurements of Giudicelli et al., 2007 on in situs, who proposed that the period indeed diverges (Giudicelli et al., 2007). Importantly, as said above, while the period increases the amplitude increases too Shih et al., 2015, which is more consistent with a SNIC bifurcation. In situs in snake PSM clearly show a decrease of the wavelength that seems to continuously match the pattern in the anterior, possibly more consistent with an infinite-period bifurcation as well (Gomez et al., 2008).

A direct experimental measure of the wavelength of the pattern for the last oscillations can be found with Mesp2 (Takahashi et al., 2000; Saga and Takeda, 2001). The consensus on Mesp2 is that it is expressed in the last few waves of oscillations, and that its pattern continuously regresses to reach exactly the wavelength of the anterior pattern. This is consistent with an infinite-period bifurcation. In Oginuma et al., 2010 it is argued that Mesp helps setting the segment boundary in mouse by precisely reading the Notch wave, explaining both its biological role and why it is a convenient marker of the wavelength. We notice that the model used to explain the experiments in Oginuma et al., 2010 is Julian Lewis’ model from the appendix of Palmeirim et al., 1997, thus presenting an infinite-period divergence.

One cannot completely exclude from all those experimental observations a Hopf scenario where waves would not be very damped (or would be amplified for some reason) past the bifurcation, while the period of damped oscillations would become long until the saddle-node bifurcation is reached. A way to experimentally falsify this scenario is to induce a smoother transition and see if damped oscillations appear. Interestingly, both in vertebrates and in short germ insects, changes of Wnt signalling indeed give rise to smoother transitions from the dynamic to the static regime (suggesting that Wnt could be related to the control parameter ). In mouse, beta-catenin gain of function mutants give rise to considerably extended PSM toward the anterior (Aulehla et al., 2008), with up to five waves of oscillating Lfng (compared to only one in WT). Thus, this extended PSM qualitatively looks much more like a zebrafish or a snake PSM, with reinforcement of Lfng in situs from anterior to posterior (suggesting a refinement of the pattern and not damped oscillations, see Figure 4g,h of Aulehla et al., 2008). In the anterior PSM of such mutants, the wavelength of the oscillations decreases to match exactly the Mesp2 wavelength (Aulehla et al., 2008, see e.g. Figure 3), and the very last waves move extremely slowly towards the anterior (Alexander Aulehla, private communication), consistent with a divergence of the time-scale. In Tribolium, axin RNAi similarly changes the wave pattern, where smoother and more spatially extended propagation of gap gene expressions are observed in those mutants, with reduced wavelengths compared to the WT (see Figure 4 of Zhu et al., 2017). Those phenotypes are exactly what is expected from an infinite-period bifurcation, again consistent with the SNIC scenario.

Lastly, damped oscillations would also be absent from Hopf scenarios where the bifurcation that destroys the limit cycle occurs at a value of the control parameter that is almost exactly the value at which the system becomes multistable. This happens for example when the supercritical Hopf bifurcation that kills the limit cycle happens at the same value of parameter than the pitchfork bifurcation that generates bistability (Figure 4—figure supplement 1F). A similar phenomenon occurs in the model presented on Figure 4—figure supplement 4: the two simultaneous subcritical Hopf bifurcations that generate the bistability and the saddle-node of limit cycles that destroys the stable limit cycle happen in a very narrow range of values. We cannot fully exclude those cases from the experimental data, but we notice they are intrinsically less generic since multiple bifurcations have to occur at the same time, which happens only in our models because of specific parameter choices, symmetries in the equations or specific choices of non-linearity for the weights of the modules.

Model predictions

The most straightforward prediction of the model proposed here is the presence of several global transcriptional modules between strongly interacting genes, directly controlling the smooth changes of developmental time-scale (in a similar way to the ‘speed-gradient’ model in Zhu et al., 2017). Many developmental genes are regulated by multiple ‘shadow’ enhancers (Cannavò et al., 2016). A smooth transition between different enhancers has even been observed for gap genes in Drosophila (El-Sherif and Levine, 2016). Global regulation of transcriptional modules could be biologically achieved through ‘super enhancers’ regulating many genes at the same time (Hnisz et al., 2017). A non-trivial prediction of our model is that the intrinsic time-scale of the system is a function of the relative balance of transcriptional activities of the modules. The transcriptional control described here naturally allows for infinite-period bifurcations, an implicit mechanism in several models of metazoan segmentation. This is to be contrasted with classical models of negative feedback oscillators such as the Goodwin model, where the time-scale is entirely controlled by degradation and is independent from transcription and translation rates (Forger, 2011), and with models of delayed oscillators, where the time-scale is essentially controlled by transcriptional delays (Lewis, 2003).

Our model is controlled by an external parameter . The most natural hypothesis would be that corresponds to an actual morphogen gradient. As said above, Wnt is a natural candidate, but feedbacks clearly exist with Caudal in Tribolium (Zhu et al., 2017) and FGF in vertebrates. However, in the spirit of the initial wavefront proposal by Cooke and Zeeman, could also be in some context a temporal variable, for example an effective timer. Recent works on somitogenesis have suggested that the segmentation front could also be coupled to the slowing down of two oscillators (Lauschke et al., 2013), possibly one corresponding to Notch/FGF and the other to Wnt (Sonnen et al., 2018), so that the oscillation could feedback on itself to define . One could also imagine that is related to more biophysical variables (density, elasticity) (Hubaud et al., 2017). It is important to point out that in our framework the nature of the bifurcation does not depend on the nature of . While it might be difficult to experimentally disentangle feedbacks between the bifurcations and the control parameter from actual properties of the bifurcations themselves, our predictions on the nature of the bifurcations would not change.

An assumption of this two-module framework is that the same genes interact to control the system in both the dynamic and static regimes, giving rise to a smooth dynamical transition during development. This is consistent with what is observed for gap gene dynamics in short germ insects (Zhu et al., 2017). For vertebrate segmentation, we do not know yet mechanistically how both regimes are controlled, but the Notch signalling pathway is implicated in all steps of somitogenesis and in particular is known to gate information from the oscillatory to the segmented regime (Oginuma et al., 2010). An opposite view would be that the transition from dynamic to static regime is de facto sudden (even if it appears smooth for other reasons). Such scenario could be realized in different ways. For instance, different enhancers could regulate completely different sets of genes in the dynamic vs static regimes. The ‘static’ genes would then interact with the ‘dynamic’ genes only briefly during development, ensuring transmission of positional information between the static and dynamic regimes in a very localized region of time and space. In somitogenesis, as said above specific genes are indeed expressed at the so-called ‘front’ (such as Mesp2) and could act like gating processes transferring the information from the clock to an independent patterning system. In this case, we would be back to a sequential point of view where different regimes of development live in different regions of phase space, and the local bifurcation scenario would then be more plausible.

Evolution and developmental plasticity

Evolutionary simulations for the evolution of patterning have favored a scenario based on Hopf and saddle-node bifurcations (François et al., 2007). Those simulations did not include multiple enhancers like here, and all transcriptional regulations had essentially to evolve from scratch, possibly suggesting a ‘Ur-segmentation clock’ based on Hopf and saddle-node bifurcations. This scenario is not excluded by our model: in particular there would be no difference between Hopf and SNIC bifurcations under the control of steep gradients of , which shortcut the region where only one fixed point is present. So Hopf/saddle node bifurcations under control of a steep gradient are likely the easiest solution found by (simulated) evolution. However, adding a more complex, enhancer-based evolutionary grammar might allow for more combinatorial use of dynamical attractors, and associated robustness to external perturbations (such as the shape of the gradient). A SNIC bifurcation might then plausibly evolve from the modularization (West-Eberhard, 2003) of a system based on Hopf and saddle-node bifurcations (both in vivo and in silico). First, since the SNIC bifurcation is the generic scenario that we observe in our framework, it should be easy to discover by evolution once a multi-enhancer system combining an oscillator and a bistable system has evolved. Second, SNIC-controlled segmentation is plastic in the sense that changes of dynamics of the control parameter would change the transient phenotypes (such as the number of oscillating waves) but would still generate a pattern. Modularizations leading to developmental plasticity has been suggested to be an important engine for evo-devo (West-Eberhard, 2003), since it allows for intra-specific variability without impinging on the most important phenotypes (here, segmentation).

Importantly, such plasticity is indeed observed experimentally both for short germ and vertebrate segmentation. For instance, in Tribolium one can considerably modify Caudal gradient dynamics and still see proper patterning (Zhu et al., 2017). It could thus explain how and why there is so much quantitative variability in segmentation mechanisms, such as short vs intermediate germ band segmentation (as suggested in Zhu et al., 2017). In somitogenesis, there is a lot of interspecies quantitative variability in the numbers of waves and rescaled periods (Gomez et al., 2008) while the qualitative dynamics itself appears very conserved see for example (Krol et al., 2011). In other words, a two-module mechanism makes the dynamics both more robust – a generic bifurcation scenario gives precise phase encoding – and more evolvable – one can vary many features of the system (e.g. basins of attractions, dynamics of the control parameter) and still get proper patterning, again a hallmark of developmental plasticity.

In brief, we have discussed a two-module based model of the smooth transition in development from a dynamical regime to a static one. This model explains time-scale divergence, as well as robustness to changes of morphogen dynamics (Zhu et al., 2017) and to noise. It provides a possible explanation for smooth robust transitions in metazoan segmentation, with a non-trivial (global) bifurcation. Further experimental and theoretical studies are required to assess the importance of smooth transitions for encoding dynamic information into spatial patterns of genetic expressions.

Appendix 1

1 A two-enhancer model reproduces dynamical features of tribolium segmentation

In Zhu et al., 2017, two of us proposed a model of Tribolium segmentation relying on the interplay of two sets of enhancers. In short, two sets of enhancers (static , dynamic ) were used, where the role of parameter g is played by morphogen Caudal (cad) (El-Sherif et al., 2012; Figure 1—figure supplement 2). encodes a multistable system and a sequential cascade of genetic expression of gap genes (, , , ). This system was found to implement a ‘speed gradient’ model, where the speed of traveling waves of gap genes from posterior to anterior depended on the level of cad concentration (Figure 1—figure supplement 2B–D). This led to robust patterning of the embryo (Figure 1—figure supplement 2E) but the mathematical origin of the speed gradient was not explained.

To better understand the underlying dynamics of the system, we consider the time courses of multiple cells at different positions and thus with different final fates. Figure 1—figure supplement 2F shows the projection of the cells’ dynamics on a 2D plane corresponding to the first two genes expressed in the cascade ( and ), as well as a typical flow for different values of while keeping the other genes (, and ) at zero. Importantly, for , we see the appearance of a new fixed point (green disk on Figure 1—figure supplement 2F).

We make four observations:

The flow of the system is canalized. The trajectories of the cells stay very close to one another in phase space.

As cad is lowered, the new fixed point appears very close to the common trajectory of all cells (Figure 1—figure supplement 2F, top row), and clearly separates the trajectories of cells ending up at different fates (Figure 1—figure supplement 2F, bottom row).

When cad further decreases, the new fixed point moves in the high hb, low Kr region, corresponding to the eventual fate of Cell 1.

When the new fixed point appears, the flow of cells past this fixed point is slowed down (Figure 1—figure supplement 2G).

These four observations offer a concise explanation to the ‘speed gradient’ model: as the system gets closer to the bifurcation happening at , the system is slowing down because of the future fixed points appearing on the trajectory. Intuitively, this is due to the fact that a fixed point corresponds to a frozen state, and thus to an infinite time-scale (static). When cad varies, the system has to interplay between a non-zero time-scale (dynamics) and such infinite time-scale, and it thus makes sense a priori that in between, the time-scale of the system diverges. This mechanism is close in principle to the critical timing proposed in Tufcea and François, 2015.

2 List of the functions used for the dynamics of each model

2.1 Gene network models

In the gene network models, biochemical interactions between genes are modeled explicitly. Ordinary differential equations (ODEs) represent the dynamics of the concentration of the proteins that are encoded by the genes in the network. The deterministic part of the dynamics is composed of a protein production term and a protein degradation term. The production rate of a given protein can be altered by the interactions between the genes. Hill functions are use to model repression and activation of the production of a given protein by the genes. When multiple genes affect the concentration of a protein, the Hill functions corresponding to each interaction are multiplied. In the simulations, we set to one the maximal production rate of all proteins. Similarly, we set the degradation rate of all proteins to 1. In Equation 1 of the main text, encodes the degradation term, and represents the production term.

2.1.1 3-gene models

The proteins associated to the three genes are named arbitrarily , and :

Appendix 1—table 1 lists the values of the parameters used in the repression interactions of all versions of the 3-gene models: the symmetric version used to generate the results of Figure 2, Figure 2—figure supplements 1 and 2, Figure 3, Figure 3—figure supplement 1 and Figure 7—figure supplement 2, the version with a weak asymmetry used in Figure 5, the version with a strong asymmetry used in Figure 5—figure supplement 1 and the version with a randomized asymmetry used in Figure 5—figure supplement 2. In the latter version, we randomly picked the values of the repression interactions of the static term from a Gaussian distribution with mean 0.4 and standard deviation 0.04. Appendix 1—table 2 lists the weights and used for all 3-gene models: Models 1 and 2 used to generate the results of Figure 2, Figure 2—figure supplement 1, Figure 3, Figure 5, Figure 5—figure supplements 1 and 2 and Figure 7—figure supplement 2, as well as Models 1 and 2 with Hill functions for the weights and , used in Figure 2—figure supplement 1, Figure 3—figure supplement 1 and Figure 7—figure supplement 2.

Parameter values for the repression interactions of the 3-gene models.

| Model version | |||||||||

|---|---|---|---|---|---|---|---|---|---|

| Symmetric | 0.4 | 0.4 | 0.4 | 0.4 | 0.4 | 0.4 | 0.4 | 0.4 | 0.4 |

| Weak asymmetry | 0.4 | 0.4 | 0.4 | 0.36 | 0.36 | 0.4 | 0.4 | 0.4 | 0.4 |

| Strong asymmetry | 0.4 | 0.4 | 0.4 | 0.32 | 0.32 | 0.36 | 0.36 | 0.4 | 0.4 |

| Randomized asymmetry | 0.4 | 0.4 | 0.4 | 0.3825 | 0.3560 | 0.4334 | 0.4102 | 0.3802 | 0.4038 |

Weights of the dynamic and static terms of the 3-gene models.

| Weights | Model 1 | Model 2 | Model 1 with hill functions | Model 2 with hill functions |

|---|---|---|---|---|

| g | ||||

2.1.2 Model of Tribolium segmentation

In the model of Figure 1—figure supplement 2, the interactions between hunchback (hb), Krüppel (Kr), mille-pattes (mlpt), giant (gt) and an unidentified gene X are modeled (see the supplement of Zhu et al., 2017). Note that the role of parameter g is played by () in the model for Tribolium segmentation.

2.2 Gene-free models

In the gene-free model, ODEs encode flows in an abstract 2D phase space. The two geometric variables are named arbitrarily y and z:

where parameter (resp. and ) controls the position of the unstable fixed point (resp. the stable fixed points) of the static term along the y axis. Parameter is set to 0 in the symmetric version of the model used in Figure 4, Figure 4—figure supplements 1, 2, 3, 4 and 5, Figure 4—videos 1, 2, 3, 4, Figure 6, Figure 7, Figure 7—figure supplement 1 and Figure 7—video 1, as well as in the asymmetric versions of Figure 6—figure supplement 1 and Figure 7—video 1. In Figure 6, parameter is set to 0.05 and 0.1 to model different levels of asymmetry in the basins of attraction. In Figure 7—figure supplement 1, parameter is set to 0.02 for Model 1 and 0.05 for Model two to obtain a similar level of asymmetry in the final pattern generated by the two models. We set and for all versions of the gene-free models, except for the asymmetric versions used to generate the results of Figure 6—figure supplement 1 and Figure 7—video 1. In the former, the stable fixed points of the static module are placed outside the region delimited by the limit cycle of the dynamic module by setting and , while in the latter, we set and .

To obtain a supercritical Hopf bifurcation with the gene-free model, we followed a similar approach than for the 3-gene model. We reasoned that the sum of the weights of the dynamic and static modules should become smaller than a degradation-like term for values of g around 0.5. For this reason, an ‘intermediate term’ is introduced in the ODE. The intermediate term is weighted by the function . Equation 1 of the main text thus becomes:

Recall that in a given cell, only the dynamic module should be present at the beginning of the simulation, when . Similarly, only the static module should be present at the end of the simulation, when . Therefore, we set the weight of the intermediate module equal to , which is zero at both and . Since this weight is of the order two in g, we make the weights of the dynamic and static modules of the order three in g to ensure that they become smaller than the weight of the intermediate term for g around 0.5. To obtain subcritical Hopf bifurcations with the gene-free model, we used a slightly different dynamic module:

Appendix 1—table 3 lists the weights used for all gene-free models: Model one used to generate the results of Figure 4—figure supplements 1, 2 and 5, Figure 4—video 1, Figure 6, Figure 6—figure supplement 1, Figure 7—figure supplements 1 and 2, and Figure 7—video 1, Model two used to generate the results of Figure 4, Figure 4—figure supplements 1 and 5, Figure 4—video 2, Figure 6, Figure 6—figure supplement 1, Figure 7, Figure 7—figure supplements 1 and 2, and Figure 7—video 1, Model three used to generate the results of Figure 4—figure supplements 3 and 5, Figure 4—video 3 and Figure 7—figure supplement 2, and Model four used to generate the results of Figure 4—figure supplements 4 and 5, Figure 4—video 4 and Figure 7—figure supplement 2.

Weights of the dynamic, static and intermediate terms of the gene-free models.

| Weights | Models 1 and 3 | Models 2 and 4 |

|---|---|---|

| g | ||

| 0 |

2.3 Infinite-period scenarios of Figure 1 and Figure 7

The infinite-period scenario of Figure 1B–F is a simplified version of the model of the appendix of Palmeirim et al., 1997. The dynamics of the phase of the oscillators are modeled directly using the following ODE:

The infinite-period scenario of Figure 7A–E is the 1D model of coupled oscillators from Gillespie, 2001. In brief, the dynamics of the phase of the oscillators are described by the following ODE:

where represents the coupling strength between a cell and its two nearest neighbors, a is the average cell diameter (cd), and τ is the time delay in the coupling. The spatio-temporal profile of the frequency of the oscillators is given by the following formula:

where represents the characteristic intrinsic frequency of the oscillators, v is the speed at which the spatial frequency profile moves along the posterior direction, and σ controls the spatial steepness of the frequency profile. Appendix 1—table 4 lists the parameter values used to generate the results of Figure 7A–E. See Gillespie, 2001 for more details.

Parameter values for the ODE of the phase oscillators in the infinite-period scenario of Figure 7.

| 0.07 | 1 | 0 | 0.3886 | 0.255 | 36 |

2. 4 Hopf scenario of Figure 1

The Hopf scenario of Figure 1G–K is the cell-autonomous model evolved in silico in François et al., 2007. The model describes the dynamics of two proteins, the effector protein and the repressor protein , under the control of morphogen g via ODEs with time delays:

The subscript of a closed parenthesis indicates the time at which the expression inside the parenthesis is evaluated. If no such parenthesis with a subscript is present in a given expression, this expression is evaluated at time t. The values of all parameters are given in Appendix 1—tables 5 and 6