Multiple decisions about one object involve parallel sensory acquisition but time-multiplexed evidence incorporation

Abstract

The brain is capable of processing several streams of information that bear on different aspects of the same problem. Here, we address the problem of making two decisions about one object, by studying difficult perceptual decisions about the color and motion of a dynamic random dot display. We find that the accuracy of one decision is unaffected by the difficulty of the other decision. However, the response times reveal that the two decisions do not form simultaneously. We show that both stimulus dimensions are acquired in parallel for the initial ∼0.1 s but are then incorporated serially in time-multiplexed bouts. Thus, there is a bottleneck that precludes updating more than one decision at a time, and a buffer that stores samples of evidence while access to the decision is blocked. We suggest that this bottleneck is responsible for the long timescales of many cognitive operations framed as decisions.

Introduction

Decisions are often informed by several aspects of a problem, each guided by different sources of information. In many instances, these aspects are combined to support a single judgment. For example, an observer might judge the distance of an animal by combining perspective cues, binocular disparity and motion parallax. In other instances, the aspects are distinct dimensions of the same object. For example, the animal’s distance and its identity as potential predator or prey. The former problem of cue combination (Jacobs, 1999; Ernst and Banks, 2002) is a topic of study in what has been termed the Bayesian Brain (Knill and Pouget, 2004). The latter is the subject of this paper. It arises in a wide variety of problems whose solutions depend on identifying a set of conjunctions such as the ingredients of a favorite dish, or when one must make multiple judgments, or decisions, about the same stimulus.

The neuroscience of decision-making has focused largely on perceptual decisions, contrived to promote the integration of noisy evidence over time toward a categorical choice about one stimulus dimension. A well-studied example is a decision about the net direction of motion of randomly moving dots. In such binary decisions (e.g. left or right), behavioral and neural studies have shown that humans and monkeys accumulate noisy samples of evidence and commit to a choice when the accumulated evidence reaches a threshold (Ratcliff, 1978; Palmer et al., 2005; Gold and Shadlen, 2007; Stine et al., 2020). The framework has been extended to more than two categories (e.g. Churchland et al., 2008; Bogacz et al., 2007; Ditterich, 2010) but it remains focused on a common stream of evidence bearing on a single stimulus feature. Less is known about how multiple streams of evidence are accumulated for a multidimensional decision (Lorteije et al., 2015). Given the parallel organization of the sensory systems, one might expect all available evidence to be integrated simultaneously. However, there are also reasons to suspect that two decisions cannot be made in parallel. This is based on a variety of experiments that expose a ‘psychological refractory period’ (PRP; Welford, 1952). When participants are asked to make two decisions in a rapid succession, it appears that the second decision is delayed until the first decision is complete (Pashler, 1994). Based on such observations, it has been argued that there is a structural bottleneck in the response selection step, such that only one response can be selected at a time (Sigman and Dehaene, 2005).

Here, we develop a task in which the participant views one visual stimulus and makes two decisions about the same object. The stimulus comprises elements that give rise to two streams of evidence bearing on their motion and color, and the participant must decide on both aspects and report the combined category. The task was designed to allow participants to integrate both streams of evidence simultaneously from the same location in the visual field and to indicate both choices with just one response. We show that, even in this situation, the two streams of evidence are accumulated one at a time, and moreover, this seriality arises despite the parallel access of the visual system to both streams. We suggest that seriality is explained by a bottleneck between the parallel acquisition of evidence and its incorporation into separate decision processes. We elaborate a model of bounded evidence accumulation, used previously to explain both the speed and accuracy of motion (Palmer et al., 2005) and color decisions (Bakkour et al., 2019), and show that these accumulations must occur in series. The results have implications for a variety of psychological observations concerning sequential vs. parallel operations, and they address the fundamental question of why mental processes take the time they do.

Results

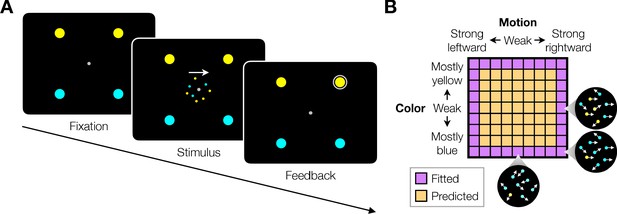

We studied variants of a perceptual task that required binary decisions about two properties of a dynamic random dot display. Human participants decided the dominant color and direction of motion in a small patch of dynamic random dots (Figure 1). The stimulus is similar to one introduced by Mante et al., 2013, who studied the problem of gating when making a decision about only a single dimension, either color or motion. On each video frame, each dot has a probability of being colored blue or yellow and it has another probability of being plotted either at a displacement relative to a dot shown 40 ms earlier or, alternatively, at a random location in the display. We refer to the probability of a displacement as the coherence or strength and use its sign to designate the direction. We use an analogous signed probability for the color coherence or strength (see Materials and methods). In the main tasks, participants reported their answer by making an eye or hand movement to select one of four choice targets. We refer to this as a double-decision and refer to the two aspects as stimulus dimensions. We employed several variants of this basic task in our study.

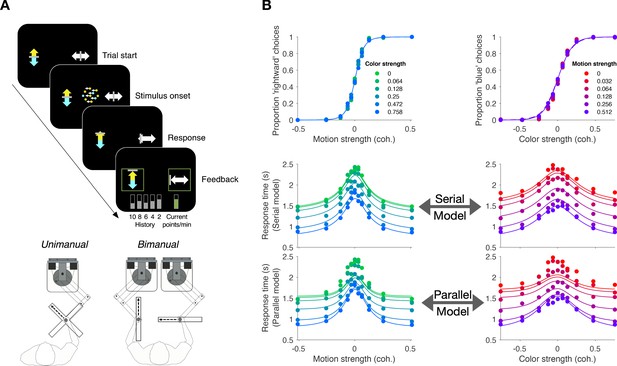

Double decision task.

(A) Timeline of the behavioral task. Participants fixated a gray dot at the center of the screen. A dynamic random dot stimulus was displayed and the participant was asked to judge the overall motion direction and the dominant color (the arrow is for visualization purposes only and was not presented to the subject). They reported this double-decision by selecting one of four targets to indicate motion direction (left and right target for leftward and rightward motion, respectively) and color (top yellow vs. bottom blue targets). The response was deemed correct when both motion and color judgments were correct. Participants received auditory feedback as to whether they were correct and the correct target was also indicated by a white ring. Across the experiments the targets could be selected with an eye movement or a hand movement, either when the participant was ready to report (reaction time) or when the dot display was extinguished (experimenter-controlled duration). (B) Motion and color strengths were varied independently across trials, represented by a matrix of combinations of difficulty levels (here shown for the eye reaction-time experiment with 81 combinations; see Materials and methods for Exp. 1-eye). Insets illustrate typical motion and color for three of the conditions. For feedback only, choices on the weakest motion strength (0% coherence) were deemed correct randomly; same for the weakest color strength. For the combinations shown in purple, at least one stimulus dimension was at its strongest value (easiest). For some analyses, the data from these combinations are used to fit a model, which is evaluated by predicting the data from the remaining combinations (amber).

Roadmap of the experimental results

We first present the main finding using a free response paradigm, what we term double-decision reaction time (Experiment 1). It demonstrates no interference in choice accuracy—that is, the difficulty of the color decision does not affect the accuracy of motion decisions, and vice versa—but critically, the double-decision (2D) time is the sum of the two single-decision (1D) times. The analysis suggests that the motion and color decisions are not formed at the same time. This establishes the prediction that with brief stimulus presentations, successful color decisions ought to be attained at the expense of motion, and vice versa—that is, choice interference. We then test this prediction (Experiment 2) and fail to confirm it. We show that color and motion can be acquired in parallel but are unable to update the decision simultaneously. This confirms the response selection bottleneck predicted by Pashler (Fagot and Pashler, 1992) and it implies the existence of buffers (Sperling, 1960; Kamienkowski and Sigman, 2008), where sensory information can be held before it updates a decision variable—the accumulated evidence for color or motion.

The combination of a buffer and serial updating leads to a revised prediction that interference in accuracy should occur over a narrow range of stimulus viewing duration, controlled by the experimenter. We confirm this prediction (Experiment 3), showing that there is no interference at short viewing times, but that there is a narrow regime of the stimulus duration in which accuracy on one dimension suffers because a limited amount of deliberation time needs to be shared with the other dimension, which reconciles conflicting observations of parallel and serial patterns of decision-making in the literature (e.g. Schumacher et al., 2001; Tombu and Jolicoeur, 2004). We then introduce a bimanual version of the task (Experiment 4) which affords direct reports of both the color and motion termination times. It confirms the assumption that the double-decision time is the sum of two sequential sampling processes, each with its own stopping time, and it shows that the color and motion decisions compete before the first decision terminates. This implies some form of time-multiplexed alternation. In the last experiment, we ask participants to judge whether the motion in a pair of patches is the same or different (Experiment 5) and find that this binary decision, based only on motion processing, also exhibits additive decision times. Finally, we introduce a conceptual model of the double-decision process that serves as a platform to connect the computational elements with known and unknown neural mechanisms.

Experiment 1. Double-decision reaction time (eye and unimanual)

Participants were asked to judge both the net direction (left or right) and dominant color (yellow or blue) of a patch of dynamic random dots and to indicate both decisions with a single movement to one of four choice targets (Figure 1A). Different groups of participants performed the task by indicating their choices with an eye movement (Exp. 1-eye, N = 3) or a reach (Exp. 1-unimanual, N = 8; see Figure 5A). On each trial the strength and direction of motion as well as the strength and sign of color dominance were chosen independently, leading to 81 (9 × 9 in Exp. 1-eye) or 121 (11 × 11 in Exp. 1-unimanual) combinations. The single movement furnished two decisions and one response time (RT; n.b., We use the terms, response time and reaction time, interchangeably to respect usage in psychology and neurophysiology literatures). Participants were given feedback that the decision was correct if the motion and color were both correct (see Materials and methods). After initial training, each participant in Exp. 1-eye performed 4,624–10,969 trials over 11–17 sessions; each participant in Exp. 1-unimanual performed 2304 trials over two sessions.

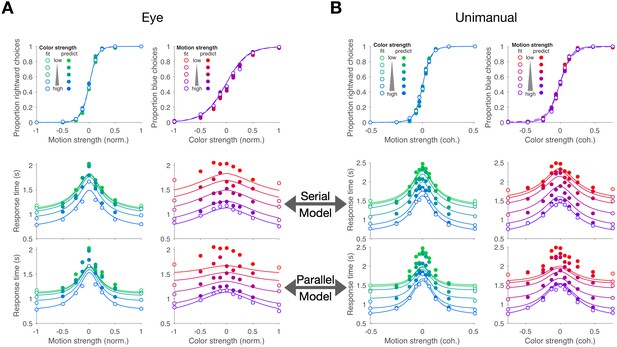

Figure 2A,B shows choices and mean RT as a function of stimulus strength for the eye and unimanual tasks, respectively. The graphs in the left column of each panel show the data plotted as a function of motion strength and direction. Each color on this graph corresponds to a different difficulty of the other dimension (i.e. the color decision). Similarly, the graphs in the right columns show the data plotted as a function of color strength and dominance; the strength of the uninformative dimension, motion, is shown by the purple/red shading. Unsurprisingly, the proportion of rightward choices increased as a function of the sign and strength of the motion coherence, and the proportion of blue choices increased as a function of the sign and strength of color coherence. The striking feature of these graphs is that sensitivity to variation in the stimulus along each dimension is unaffected by the difficulty along the uninformative dimension. This is evident from the superposition of the colored data points. It is also supported by a logistic regression analysis, which favored a choice model in which the sensitivity along one dimension is not influenced by the stimulus strength along the other dimension (ΔBIC = 23 and 22 for motion and color in the eye task, respectively; ΔBIC = 34 and 52 for the unimanual task; positive values are support for the regression model of Equation 13 without the term). It implies that the two stimulus dimensions do not interfere with each other. This is consistent with the well-established idea that color and motion are processed by parallel, independent channels (Livingstone and Hubel, 1988; Ramachandran and Gregory, 1978; Carney et al., 1987; Cavanagh et al., 1984). However, another possibility is that the two dimensions do not interfere because they are not processed simultaneously but serially.

Double-decisions exhibit additive response times but no interference in accuracy (Experiment 1).

Participants judged the dominant color and direction of dynamic random dots and indicated the double-decision by an eye movement (A; Exp. 1-eye) or reach (B; Exp. 1-unimanual) to one of four choice-targets. All graphs show the behavioral measure (proportion of choices, top row; mean RT, rows 2 and 3) as a function of either signed motion or color strength. Positive and negative color strength indicate blue- or yellow-dominance, respectively. Positive and negative motion strength indicate rightward or leftward, respectively. Colors of symbols and traces indicate the difficulty (unsigned coherence) of the other stimulus dimension (e.g., color, for the graphs with abscissae labeled 'Motion strength'). Symbols are combined data from three participants (Exp. 1-eye) and eight participants (Exp. 1-unimanual). Open symbols identify the conditions used to fit the serial (middle row) and parallel (bottom row) models. These are the conditions in which at least one of the two stimulus strengths was at its maximum (purple shading, Figure 1B). In the top row, fits of the serial and parallel models are shown by solid and dashed lines, respectively. The models comprise two bounded drift-diffusion processes, which explain the choices and decision times as a function of either color or motion. They differ only in the way they combine the decision times to explain the double-decision RT. For the serial model, the double-decision time is the sum of the color and motion decision times. For the parallel model, the double-decision time is the longer of the color and motion decisions (see Materials and methods). Smooth curves are the predictions based on the fits to the open symbols. Both models predict no interaction on choice (top row). The predictions of RT are superior for the serial model (middle row) compared to the parallel model (bottom row). Data are the same in the lower two rows. Stimulus strengths in A were not identical for the three participants and were normalized to a common ±1 scale before averaging, so the psychometric curves for eye and hand cannot be compared visually (see Appendix 1—table 1 for comparison of parameters from the fits). For simplicity, only correct (and all 0% coherence trials) are shown in the RT graphs (see Materials and methods).

The RTs support this serial hypothesis. The RTs, plotted as a function of either motion or color, are bell-shaped curves, such that longer RTs are associated with the most difficult stimulus strength and the fastest with the easiest. In contrast to the choice functions, the uninformative dimension—that is, with respect to the dimension of the abscissa—affects the scale of these RTs, giving rise to a stacked family of bell-shaped curves. The more difficult the other dimension, the longer the RT.

We attempted to explain the choice-RT data in Figure 2 with models of bounded evidence integration (e.g. drift-diffusion; Ratcliff, 1978; Palmer et al., 2005). Such models provide excellent accounts of choice and RT on the motion-only and color-only versions of these tasks (Palmer et al., 2005; Bakkour et al., 2019). To explain the double-decision data set, we pursued two variants of these models under the assumption that motion and color are processed in parallel or in series. The curves in Figure 2 are a mixture of fits and predictions. To fit the data, we used all trials in which at least one of the dimensions was at its strongest level (open symbols Figure 2; 32 purple conditions in Figure 1B for the eye task and 40 conditions for the unimanual task). We used these fits to predict the data from the remaining conditions (filled symbols; 49 amber conditions in Figure 1B for the eye task; 81 for the unimanual task).

Both models are consistent with no interference in the choice functions. The models can be distinguished on the basis of the RT data. For an experiment with only a single dimension (e.g. motion), the RT is the sum of the amount of time that evidence is integrated to reach a terminating bound (the decision time, or , for motion and color choice, respectively) plus time delays that are not affected by task difficulty, such as sensory and motor delays, termed the non-decision time (). If the color and motion decisions are made in parallel, then the total decision time should be determined by the slower process (), whereas if the decisions are made serially, the total decision time would be determined by the sum of the two decision times (). In both cases, we expect both motion and color strengths to affect the RT. In the serial case, an increase in the difficulty of color, say, should augment the total RT by the same amount for all motion strengths, giving rise to stacked functions of the same shapes (solid curves, middle row, Figure 2A,B). In the parallel case, an increase in the difficulty of color should augment the total RT by an amount that depends on the difficulty of motion (solid curves, bottom row Figure 2A,B). The color dimension is likely to determine the total RT when motion is strong, but it has less control when the motion is weak. The logic should produce stacked bell-shaped functions that pinch together in the middle of the graph. The data are better explained by the serial predictions (e.g. large mismatches by the parallel model when both dimensions are weak). Formal model comparison provides strong support for the serial models overall (geometric mean of Bayes factor across participant and task combinations: ) and for 9 of 11 participants individually (Figure 2—figure supplement 1).

The systematic underestimate of RT by both models on the doubly difficult stimulus strengths arises for two reasons. First, the drift-diffusion model implements a restrictive assumption that the variance of the noisy momentary evidence is the same for all stimulus strengths. The variance of the momentary evidence is likely to be smaller near 0% (see Materials and methods). The overestimate of the variance in the model would lead to an underestimate of the RT in the middle of the graphs of RT vs. motion strength, and along the top of the graphs of RT vs. color strength. Second, the inclusion of all trials at 0% coherence tends to inflate the mean RT because just under half of the trials resemble errors in the sense that the choice is opposite the sign of the component of the drift rate that instantiates a direction or color bias (e.g. , Equation 2). Importantly, we pursued a second approach to compare serial and parallel models which shows that the superiority of the serial model does not rest on the systematic underestimates of RT in Figure 2.

In the second approach, we focus specifically on the decision times. It considers only the distribution of RTs and attempts to account for them under serial and parallel logic. Instead of fitting diffusion models, this empirical approach explains the observed double-decision RT distributions as either the serial or parallel combination of latent (i.e. unobservable) distributions of color and motion decision times, as well as the four distributions (one for each choice). We estimate these latent distributions with gamma distributions. For the serial case, the predicted double-decision RT distributions are established by convolution of the latent single-dimension distributions and the distribution of . For the parallel case, the latent distributions are combined using the max logic, and the result is convolved with the appropriate distribution of (see Materials and methods). Figure 2—figure supplement 2 shows fits to the double-decision RT distributions for the more informative conditions for the serial and parallel models. The model comparisons, based on all the data, yield ‘decisive’ support (Kass and Raftery, 1995) for the serial processing of motion and color (geometric mean of Bayes factor for participant and task combinations with all but one out of 11 participants individually supporting the serial rule; Figure 2—figure supplement 3). We also display the mean RTs derived from the fits in the same format as Figure 2 (Figure 2—figure supplement 4 and Figure 2—figure supplement 5). Both approaches support the conclusion that the color-motion double-decisions are formed serially from two independent decision processes, each with its own termination rule. However, neither analysis discerns the nature of the serial processing (e.g. whether they alternate or one is prioritized). We will consider this issue later.

Experiment 2. Brief stimulus presentation (eye)

The results from the double-decision RT experiment support sequential updating of two decision variables, which represent accumulated evidence for the motion and color choices. If this is true, it leads to a straightforward prediction. If the stimulus duration is not controlled by the decision maker but by the experimenter, and if it is brief, then the two stimulus dimensions would compete for the limited processing time, and we ought to observe choice-interference. We therefore conducted a second experiment with the participants from Exp. 1-eye (N = 3). In this experiment, we presented the same motion/color coherence combinations, but limited the duration of the stimulus viewing time to just 120 ms. We know from previous experiments with 1D tasks that performance continues to increase with stimulus duration up to at least one half second (Kiani et al., 2008; Waskom and Kiani, 2018). Thus, it is reasonable to assume that performance accuracy would suffer if it is not possible to make use of the full 120 ms of evidence for both motion and color. We predicted that sensitivity to both color and motion should be worse on the double-decision task than on color-only and motion-only versions of the identical task. Each participant performed a total of 7305–7741 trials (4052–4275 1D trials and 3240–3466 2D trials) over 12–19 days.

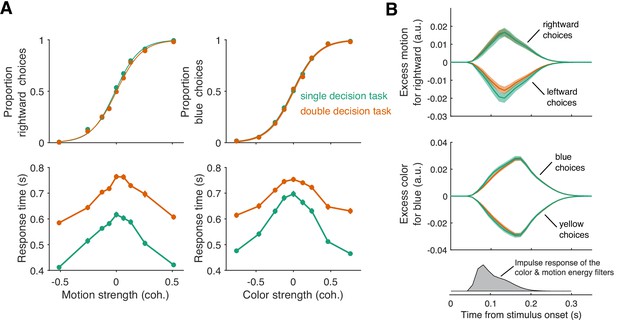

To our surprise, double-decisions were just as accurate as their 1D controls (Figure 3A). We also observed no change in the sensitivity to color across the range of motion difficulties, and vice versa (ΔBIC = 7.2 and 9.7 for motion and color choices, respectively, in support of no interaction; Equation 12, ). This suggests that evidence for color and motion was acquired simultaneously, in parallel, and without interference. Further support for this conclusion is adduced from an analysis of the stimulus information used to make the decisions—what is known as psychophysical reverse correlation (Beard and Ahumada, 1998; Okazawa et al., 2018). Figure 3B displays the degree to which trial-by-trial variation in the noisy displays influences the choice (see Materials and methods). It shows that these stimulus fluctuations influenced choices almost identically in the double-decision task and 1D controls.

Parallel acquisition and serial incorporation of a brief color-motion pulse (Experiment 2).

Participants completed a short-duration variant of the double-decision task in which the stimulus was presented for only 120 ms. They also performed blocks in which they were asked to report only the color or only the motion direction (single decision in which they could ignore the irrelevant dimension). Data from double- and single-decision blocks are indicated by color. (A) Choices and RTs for single and double-decision blocks. Top-left, proportion of rightward choices as a function of motion strength. Top-right, proportion of blue choices as a function of color strength. The solid lines are logistic fits. They are nearly identical for single- and double-decisions. Bottom row, RT for the single- and double-decisions plotted as a function of motion strength (left) and color strength (right). For double-decisions, these are the same data plotted as a function of either the motion or color dimension. Data points show the average RT as a function of motion or color coherence, after grouping trials across participants and all strengths of the ‘other’ dimension (i.e. color, left; motion, right). Error bars indicate s.e.m. across trials. Although the stimulus was presented for only 120 ms, RTs were modulated by decision difficulty. Importantly, RTs were longer in the double-decision task than in the single-decision task. (B) Psychophysical reverse correlation analysis. Top, Time course of the motion information favoring rightward, extracted from the random-dot display on each trial, that gave rise to a left or right choice. Shading indicates s.e.m. Middle, Time course of the color information favoring blue, extracted from the random-dot display on each trial, that gave rise to a blue or yellow choice. Shading indicates s.e.m. The similarity of the green and orange curves indicates that participants were able to extract the same amount of information from the stimulus when making single- and double-decisions. Bottom, Impulse response of the filters used to extract the motion and color signals (see Materials and methods). They explain the long time course of the traces for the 120 ms duration pulse.

At first glance, the observation seems to be at odds with our interpretation of the double-decision RT experiment, which provided strong support for serial processing, primarily in the pattern of RTs. In Experiment 2, the entire stimulus stream lasts only 120 ms, which is less than a typical saccadic latency to a bright spot. Nevertheless, participants exhibited variation in the time of their responses as a function of stimulus strength (Figure 3A, bottom panels) and these RTs were surprisingly long. The fastest were ∼300 ms longer than the stimulus (RT>400 ms). Importantly, they are approximately 100–200 ms longer in the double-decisions than in single decisions. It is difficult to make too much of this observation, because the participants might have procrastinated for reasons unrelated to the dynamics of the decision process. However, procrastination would not explain the difference between the two conditions. As parallel acquisition of the 120 ms color and motion take the same amount of time as acquisition of either of the streams alone (by definition), the extra time in the double-decision is probably explained by serial incorporation of evidence into the two decisions. This observation also implies the existence of buffers that store the information from one stream as it awaits incorporation into the decision.

Our results so far suggest that color and motion information are acquired in parallel but are incorporated into the decision in series. We therefore wondered if the same schema might apply to the double-decision RT task. For this to hold, some kind of alternation must occur such that segments of one or the other stimulus stream is not incorporated into its decision. Suppose, for example, that at ms, motion information had been incorporated into decision variable, , and color information had been stored in a buffer. Suppose further that motion continues to update the decision variable, , until it reaches a termination bound at , and only then can the buffered color information be incorporated into decision variable, . From then on color information could update until this decision terminates. In this imagined scenario, the color information between 0.12 s and is not incorporated in the decision.

One might also imagine two alternatives to the latter part of this scenario. In both, the information from color continues to update the buffer (but not ) throughout the motion decision without loss. Then at either (i) all the information about color is incorporated immediately into or (ii) the buffered information is incorporated in over time (e.g. as if the recorded color information is played back). The first alternative is equivalent to the parallel model that is inconsistent with the data. The second alternative, implausible as it may seem, implies the color decision is blind to the color information in the display during the playback of the recorded color information. These alternatives are not intended as serious models but to convey two general intuitions. First, if there is a buffer at play in the double-decision RT task then it must take time for the buffered information to be incorporated, or the RTs would have conformed to the parallel logic. Second, if the duration of the buffer is finite, when both 1D processes require more processing time than the duration of the buffer, there will be portions of the color and/or motion stimulus that do not affect the decision.

One might therefore ask why the second point does not lead to a reduction in sensitivity (or accuracy) in color, say, when motion is weak and competes with color for processing time. The answer is that when the decision maker controls the termination of the decision, they can compensate for the missing information by collecting more, until the level reaches the same terminating bound. This leads to a straightforward prediction. If the experimenter controls the termination of the evidence stream, then missing portions of the color and/or motion stimulus might impair performance, especially when the other stimulus dimension is weak.

Experiment 3. Variable-duration stimulus presentation (eye)

We therefore predicted that under conditions in which the experimenter controls the viewing duration, there is an intermediate range of viewing durations, greater than 120 ms and less than the average RT of difficult double-decisions, where we might observe interference in sensitivity. To appreciate this prediction, it is essential to recognize that when the experimenter controls viewing duration of a random dot display, the decision maker applies a termination criterion, as they do in choice-RT experiments (Kiani et al., 2008). There is no overt manifestation of this termination, although it can be identified by introducing perturbations to the stimulus (see also Kang et al., 2017). Before such termination, sensitivity improves by the square root of the stimulus viewing duration () as expected for perfect integration of signal-plus-noise. In a double-decision, when the two decision processes are splitting the time equally, the sensitivity of each should only improve by . However, when one process terminates, the rate of improvement of the other process should recover, until that process reaches its terminating bound. The model predicts a range of stimulus strengths and viewing durations in which interference in accuracy ought to be evident. It also predicts that the range and degree of interference might depend on which stimulus dimension the participant prioritizes. Here, we set out to test this prediction.

Two participants each performed ∼11,800 trials over 12–16 sessions. The task was identical in structure to the brief-duration experiment. However, stimuli were presented at fixed durations ranging from 120 to 1200 ms (in steps of 120 ms). Only three levels of difficulty were used for each dimension: one easy and two difficult coherence levels. The two difficult coherence levels were adjusted individually to yield 80% and 65% accuracy, respectively, ensuring above-chance performance despite the high difficulty level. The easy coherence level was fixed at the highest motion/color coherence from Experiment 1-unimanual, as this coherence typically supports perfect accuracy. The number of coherence levels was reduced compared to Experiments 1 and 2 in light of the large number of conditions (6 signed motion coherences × 6 signed color coherences × 10 stimulus durations). The key comparison here is sensitivity to difficult color, say, when (i) motion is difficult and therefore likely to compete with the color for decision time vs. (ii) motion is easy and less likely to wrest time away from color. This comparison within the double-decision task is more appropriate than a comparison between the double and single decision tasks as these tasks are likely to elicit different termination bounds, as they have different error rates—0.75 and 0.5—on difficult trials (see Materials and methods).

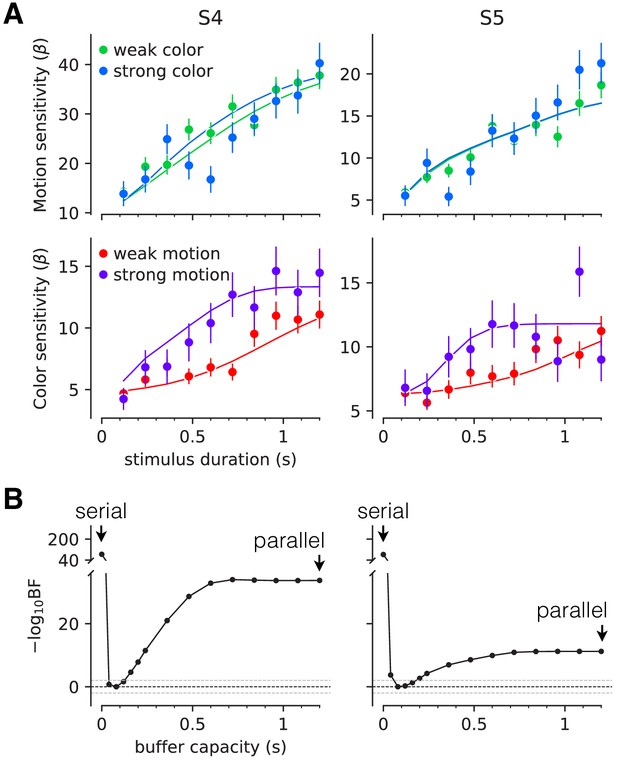

Figure 4 shows the sensitivity to motion and color as a function of stimulus duration, when the other stimulus dimension was easy or difficult. The sensitivity is the slope of a logistic fit of the motion (or color) choices to the three levels of difficulty. Notice that for both participants there is no difference in sensitivity at the shortest stimulus duration (120 ms), consistent with the findings above. However both participants exhibited lower sensitivity at intermediate durations when color choices were coupled with difficult motion. This difference implies an interference. It is less compelling, if present at all, when motion choices are coupled with difficult color. This pattern, in which motion difficulty affects color sensitivity but not vice-versa, is consistent with participants prioritizing one decision over the other. This would arise if participants consistently monitored the motion stream first and turned to color after the motion decision terminated. In this case, the difficulty of the color would not affect the decisions for motion, but harder motion would take longer to terminate, thereby leaving less time for color processing. We therefore fit a model in which one decision was prioritized over another by including a parameter that determined the probability that motion would be processed first. We also included a parameter that controls the duration of the stimulus streams that can be held in the buffer. This is, effectively, the amount of stimulus information that can be acquired in parallel. The best fits of the model, shown by the smooth curves (Figure 4A), suggest a buffer capacity of approximately 80 ms worth of stimulus information (Figure 4B and Figure 4—figure supplement 1) and prioritization of motion on approximately 80–96% of trials. The serial and parallel models are special cases of this model in which the buffer durations are very short or long, respectively. Both such buffer capacities provide very poor fits to the data (Figure 4—figure supplement 2). Note that we continue to refer to the model as serial because even in the parallel phase, the decision is updated serially.

Interference in choice accuracy can be elicited at intermediate viewing durations (Experiment 3).

Two participants (columns) performed the color-motion double-decision task with a random dot display presented for 120–1200 ms. (A) Top, Motion sensitivity as a function of stimulus duration and color strength. Symbols are the slope of a logistic fit of the proportion of rightward choices as a function of signed motion strength, for each stimulus duration. Data are split by whether the color strength was strong (blue) or weak (green). Error bars are s.e. Bottom, Analogous color-sensitivity split by whether the motion strength was strong (purple) or weak (red). Curves are fits to the data from each participant using two bounded drift diffusion models that operate serially after an initial stage of parallel acquisition, here termed the buffer capacity. During the serial phase, one of the dimensions is prioritized until it terminates. The prioritization favored motion for both participants ( and 0.96, for participants S4 and S5, respectively). (B) Negative log likelihood of the model fits as a function of the buffer capacity, relative to the model fit at 80 ms capacity. The model is equivalent to a purely serial model, when the buffer capacity is zero, and to a purely parallel model when the buffer capacity exceeds the maximum stimulus duration. Negative log likelihoods were computed for a discrete set of buffer capacities (black points). Horizontal lines at indicate Bayes factor = 1. Dashed lines show where the Bayes factor = ± 100 (‘decisive’ evidence; Kass and Raftery, 1995).

The findings therefore support our prediction, and in doing so, they support the hypothesis that a common principle underlies double-decisions ranging from a tenth to at least two seconds, independent of whether this duration is controlled by the experimenter or by the decision maker. Namely, there is parallel acquisition but serial incorporation of color and motion into the double-decision process. The interference in choice accuracy demonstrated in this experiment is the only example of choice interference in our study. It is remarkably elusive, because it can be observed only for stimulus durations for which three conditions are satisfied: (i) the duration of the stimulus is long enough that parallel acquisition is no longer possible; (ii) the duration of the stimulus is short enough that accuracy on one dimension would benefit from additional sensory evidence; (iii) the duration should support termination of the other dimension for strong but not weak stimuli. The interference is also deceptive. It is explained by a competition for processing time, not by an interaction affecting the fidelity of the sensory streams themselves. It is an example of resource sharing (Tombu and Jolicoeur, 2002; Tombu and Jolicoeur, 2005; Kahneman, 1973), but the resource is time, specifically.

Experiment 4. Two-effector double-decision reaction time (bimanual)

There are two important features of the serial model: the existence of two decision variables that are terminated independently, and that these accumulations are not updated at the same time but in series. A limitation in the experiments so far is that we had access to the completion of the double-decision but not to the completion of each component. Therefore, we could only speculate about which decision completed first, and when. Without knowledge of the first decision time, we cannot tell how often a participant switched between updating the motion and color decision variables. For example, the prioritization considered in the previous section could arise by completing one decision before deliberating on the second or by alternating back and forth on a schedule that allocates more time to motion. We therefore conducted an experiment in which participants indicated their choice and RT for each stimulus dimension using separate effectors.

The eight participants who performed the unimanual version of the double-decision RT task (Exp. 1-unimanual) also performed a bimanual version of the same task (Figure 5A). In the unimanual version, participants used a handle to move a cursor to one of four targets that simultaneously communicated color and motion decisions. In the bimanual version, participants indicated their motion decision by moving one of the handles in a left/right direction and indicated their color decision with a forward/backward movement of the other handle. Participants were instructed to report each 1D decision as soon as it was made. To facilitate this, they received extensive training, consisting of blocks in which one of the stimulus dimensions was set at its easiest level. Both the order of the tasks (unimanual and bimanual) and the hand assignments (left/right × color/motion) were balanced between the participants (see Materials and methods). Trial numbers (2304) and motion-color coherence levels (11 × 11 combinations of signed coherence levels) were identical for the uni- and bimanual version of the task.

Replication of double-decision choice-reaction time when the decisions are reported with two effectors (Experiment 4).

(A) Participants performed the color-motion double-decision choice-reaction task, but indicated the double-decision with either a unimanual movement to one of four choice-targets or a bimanual movement in which each hand reports one of the stimulus dimensions (N = 8 participants performed both tasks in a counterbalanced order). In both conditions, the hand or hands were constrained by a robotic interface to move only in directions relevant for choice (rectangular channels). The display was the same in the unimanual and bimanual tasks, with up-down movement reflecting color choice and left-right movement reflecting motion choice. A scrolling display of proportion correct was used to encourage accuracy. In the unimanual trials both choices were indicated simultaneously. However, in the bimanual trials each choice could be indicated separately and the dot display disappeared only when the second hand left the home position. (B) Choice proportions and double-decision mean RT on the bimanual task. The double-decision RT on the bimanual task is the latter of the two hand movements. The data are plotted as a function of either signed motion or color strength (abscissae), with the other dimension shown by color (same conventions as in Figure 2). Solid traces are identical to the ones shown in Figure 2B for the unimanual task, generated by the method of fitting the conditions containing at least one stimulus condition at its maximum strength and predicting the rest of the data. They establish predictions for the bimanual data from the same participants. The agreement supports the conclusion that the participants used the same strategy to solve the bimanual and unimanual versions of the task. Note that a few symbols are occluded by others.

Before tackling the questions that motivate the bimanual experiment, we first ascertained whether participants used the same strategy to make bimanual double-decisions as they did on the unimanual version. It seemed conceivable that by using separate hands to indicate the motion and color decisions, participants could achieve parallel decision formation, for example, as a pianist reads the treble and bass staves with the right and left hands, typically. We therefore conducted a model comparison similar to that of Figure 2. To fit the models, we used the color and motion choice on each trial along with the second response time (RT2nd) regardless of whether it was to indicate direction or color. This allows us to fit models that are identical to those used in the unimanual task (Figure 2). In the bimanual task, the final RTs (RT2nd) are well described by the fits to the unimanual double-decision RTs (Figure 5). We illustrate this in two ways. In the figure, the solid traces are not fits to the bimanual data; they are fits to the unimanual data shown in Figure 2B. Clearly the choice probabilities and RTs in the bimanual task are well captured by the model fit to the unimanual data. The fits to the bimanual data are shown in Figure 5—figure supplement 1, and model comparison favors the serial over the parallel model for seven of the eight participants (Figure 2—figure supplement 1). Importantly, the participants’ behavior was strikingly similar in the unimanual and bimanual versions of the task. The similarity between the two versions of the task is also supported with a model-free analysis. In Figure 5—figure supplement 2, we superimpose the accuracy and the RTs for the unimanual and bimanual tasks. There is an almost perfect overlap between these two aspects of choice behavior, providing further support for a common set of processes operating in both versions of the task.

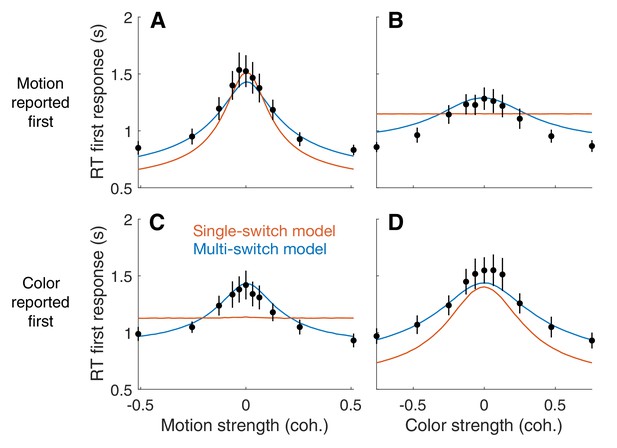

The bimanual task allows us to distinguish between two variants of the serial model that were not distinguishable in the unimanual task. In the first variant, the single-switch model, the decision maker only switches from one decision to the next when the first decision is completed. Thus, the decision that terminates first (D1st) is the one that is evaluated first, and only then the other decision is evaluated. In the second variant, the multi-switch model, the decision maker can alternate between decisions even before finalizing one of them. If little time is wasted when switching, these two models make similar predictions for the RT in the unimanual task: the RT will be the sum of the two decision times plus the non-decision latencies. However, the models make qualitatively different predictions for how the response time for D1st depends on the difficulty of the other decision.

The single-switch model predicts that the RT for D1st is independent of the difficulty of the decision reported second (D2nd). This is because D2nd is not evaluated until the first decision is completed. The prediction of the multi-switch model is less straightforward. Suppose that in a given trial the motion decision is easy and the color decision is difficult. If the color was reported first, the motion was probably not evaluated at all before committing to D1st, since if it had been evaluated it would most likely have ended before the color decision. In contrast, if both dimensions were difficult, which decision was reported first is largely uninformative about the number of alternations between color and motion that occurred before committing to the first decision; since both decisions take longer to complete, it is possible that both have been evaluated before one of them terminated. Therefore, the multi-switch model predicts that the first decision takes longer the more difficult the other decision is: when D2nd is easy, it is more likely that it was not considered before committing to the D1st decision and thus the average RT1st is shorter.

To disambiguate between the single-switch and multi-switch models, we fit both models to the data from the bimanual task. First, we fit a serial model identical to that of Figure 2 to the data from the bimanual task. We used the same procedure as in Figure 2; that is, we ignore RT1st and fit RT2nd and the choices given to the two decisions. Then, we used three additional parameters to attempt to explain RT1st. These parameters are the average time between switches (), the probability of starting the trial evaluating the motion decision (), and the non-decision time for the first decision (, see Materials and methods). These parameters only affect RT1st; they do not influence RT2nd or the choice probabilities. The three parameters were fit to minimize the mean-squared error between the models' predictions and the data points (Figure 6; Appendix 1—table 2). The single switch model is a special case of the multi-switch model where is very large (i.e. longer than the slowest first decision time).

First response times in the bimanual task suggest multiple switches in decision updating (Experiment 4).

For bimanual double-decisions, participants indicate two RTs per trial. Whereas up to now we have only considered the RT corresponding to completion of both color and motion decisions, the analyses in this figure concern the RT of the first of the two. Symbols are means ± s.e. (N = 8 participants). Curves are fits to single- and multi-switch model (orange and blue, respectively). (A) RT as a function of motion strength when motion was reported first. (B) RT as a function of color strength when motion was reported first. (C) RT as a function of motion strength when color was reported first. (D) RT as a function of color strength when color was reported first. In panels A and D, the first response corresponds to the stimulus dimension represented on the abscissa. The data exhibit the expected pattern of fast RT when the stimulus is strong and slow RT when the stimulus is weak (i.e. near 0). This would occur if the serial processing of motion and color ensued one after the other (single-switch) or with more than one alternation (multi-switch), although the latter provides a better account of the data. In panels B and C, the first response corresponds to the stimulus dimension that is not represented on the abscissa. Here the single-switch model fails to account for the data. If there were only one switch and color terminates first, then the strength of motion is irrelevant, because all processing time was devoted to color. Similarly, if there were only one switch and motion terminates first, then the strength of color is irrelevant, because all processing time was devoted to motion.

The model comparison provides clear support for multiple switches. Figure 6 shows the average RT for the decision reported first (RT1st), split by whether the first decision was color or motion, and grouped by either color or motion strength. Both the single- and multi-switch models provide a good explanation of the RT1st when grouped as a function of the coherence of the decision that was reported first (Figure 6, panels A and D). However, only the multi-switch model could explain the interaction between RT1st and the coherence of D2nd (Figure 6B and C). The graphs show that RT1st is longer when D2nd is more difficult, and this effect was well explained by the multi-switch model. Unlike what is seen in the data, the single-switch model predicts that RT1st should not vary with the coherence of D2nd (flat orange lines in panels B and D). Because we fit the models for each participant individually, we can analyze the frequency of alterations predicted by the model with multiple switches. For one of the participants, the best-fitting inter-switch interval was greater than the slowest decision time, and thus the model was no different from the single-switch model. For the other seven participants, alternations were sparse: the average inter-switch interval was 704 ± 205 ms (mean ± s.e.m. across participants).

To summarize, the bimanual version of the double-decision task allowed us to infer not only that the two dimensions were addressed serially, but that people may alternate between both attributes of the stimulus in a time-multiplexed manner. The model suggests that alternations were sparse, as if the participants considered one decision for several hundred milliseconds, and switched temporarily to the other decision if they found no conclusive evidence about the first. Moreover, it provides direct evidence for two termination events, as assumed in our model fits. This rules out a class of models of the double-decision as a race among four accumulations for each of the color-motion combinations, what we term target-wise integration, as these models preclude completion of one decision before the other.

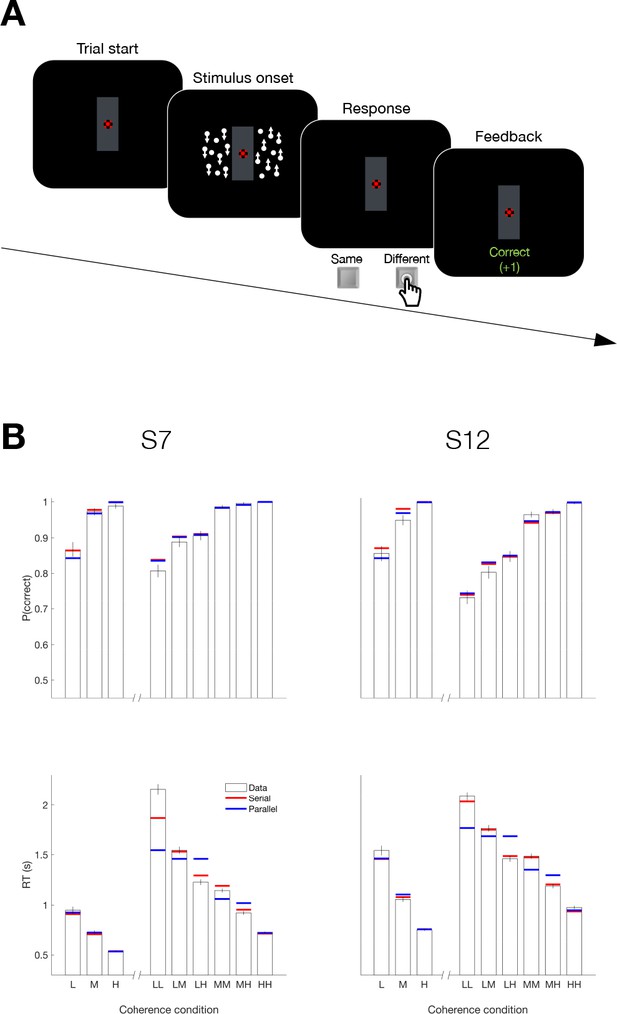

Experiment 5. Binary-response double-decision reaction time

Up to now, we have observed serial decision making when participants had to provide two answers—that is, four possible responses. A possible concern is that the reason we observed the serial pattern of double-decisions was that it required a quaternary response. We therefore designed a task that involves a double-decision but only a binary choice. Two participants, who had participated in Experiment 4, were asked to report whether the net direction in two patches of random dots were the same or different by pressing one of two response keys with the index fingers of each hand; (Figure 7A). The two motion stimuli were presented to the left and right of a central fixation cross Figure 7A. The direction (up or down) and strength of motion (three coherence levels) were controlled independently in the two stimuli. Each participant completed four sessions (3072 trials) of this task and additionally completed a single session (768 trials) of a 1D task in which they judged the up/down direction of a single stimulus presented on the left or right side of the screen.

Serial decision making in a Same vs. Different task (Experiment 5).

(A) Task. Two dynamic random dot motion displays were presented in rectangular patches to the left and right of a central fixation cross. The direction and motion strength were randomized from trial to trial and between the patches (up or down × three motion strengths). Participants judged whether the dominant direction of the left and right patches is the same or different and indicated the decision when ready by pressing a response key with their left or right index finger. At the end of each trial, participants received feedback. In a separate block, participants also performed a 1D direction discrimination task in which only one patch of random dots was displayed. (B) Results and fits for two participants (columns). Top, Proportion of correct choices as a function of the level of motion strength (i.e. unsigned coherence; L = low; M = medium; H = High). Bottom, Response times for each level of motion strength. The first three bars represent the direction task where only a single motion stimulus was presented. The six bars on the right of each plot represent the same-different task. Horizontal red and blue lines are fits of serial and parallel drift-diffusion models to the means. Only correct trials were included for RT analyses.

Both participants exhibited accuracy-RT functions that depended on the difficulty of both motion stimuli. Figure 7B shows the proportion of correct choices plotted as a function of the coherences for both the 1D (up-down) and 2D (same-difference) trials. The RTs associated with same-different judgment were almost twice as long as the RTs from a 1D direction judgment. Part of this difference might be attributed to the conversion from two direction judgments to the same-different response, but that should not depend on difficulty and it is hard to reconcile this with the magnitude of the difference. Instead they suggest additive decision times. The horizontal red and blue lines in Figure 7B are fits to a drift diffusion model that assume the 2D same/different decision is formed from two 1D direction decisions under serial and parallel models, respectively. We constrained the fits in the 1D and 2D tasks to share the same sensitivity to motion strength and the same up-down bias (see Materials and methods, Equation 2 and Appendix 1—table 3). Bayes factor for both participants favored the serial model ( and 31.6 for S7 and S12, respectively).

The comparison of serial vs. parallel rests on an understanding of the way distributions of the two up/down decision times are combined to generate the same/different decision times. A possible concern is that the parsimonious drift diffusion models used to estimate these latent distributions is wanting. For example, they assume stationary bounds, which distorts the shapes of the distributions (Drugowitsch and Moreno-Bote, 2014). We therefore conducted the model comparison using an empirical method that uses only the observed same/different RTs and tries to account for them solely through combination of latent distributions of up/down decision times (Figure 7—figure supplement 1). This analysis also provides strong support for the serial account (Figure 7—figure supplement 1; for both participants). Like the color-motion task, there is every reason to assume that the acquisition of evidence from the two patches of random dots occurs in parallel. Yet once again, the pattern of RTs supports serial incorporation into the double decision. The use of a binary response in the same-different task rules out the possibility that the long decision times in our 2D experiments are explained by the doubling of alternatives (Hick’s law; Hick, 1952; Luce, 1986; Usher et al., 2002). Moreover, the findings demonstrate that the serial incorporation of evidence into a double-decision is not restricted to different perceptual modalities, such as color and motion.

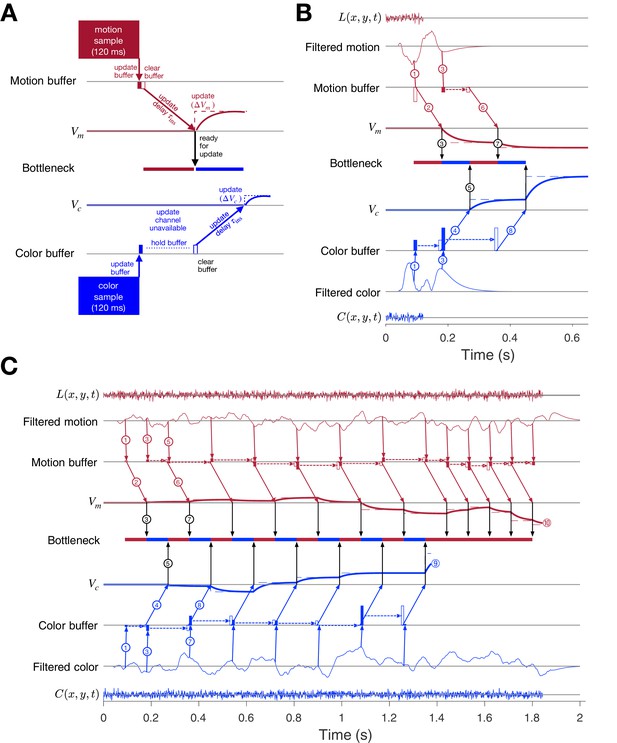

Parallel acquisition with serial incorporation model

Taken together, the results from our five experiments suggest that the prolongation of RTs in double-decisions is the result of serial integration of evidence during the decision-making process, independent of the modality of choice implementation and number of response options. Parallel acquisition of the two sensory streams followed by serial incorporation into decision variables reconciles the findings of the short duration experiment (Experiment 2) with those of the double-decision RT experiment (Experiment 1). The variable duration (Experiment 3) and bimanual (Experiment 4) experiments suggest that (i) parallel acquisition and serial incorporation is not limited to the short duration experiment and (ii) serial alternation of color and motion can occur before one process terminates. Here, we develop a conceptual framework that accommodates the findings from all five experiments with what is known about the neurobiology of similar 1D perceptual decisions. We will proceed by illustrating the steps that underlie the acquisition of evidence samples, their temporary storage in buffers, and their incorporation into the decision variables that govern choice and the two decision times. We first make the case for the buffer using a simulated trial from the short duration experiment. We then elaborate the diagram to account for the serial pattern of decision times when the stimulus duration is longer.

Consider the example in Figure 8A of a process leading to a decision in the short duration task. Suppose that visual processing of the 120 ms motion stream gives rise to a single sample of evidence that captures the information from the brief pulse, and the same is true for the color stream. These samples of evidence are acquired in parallel and placed in buffers, where they can be stored temporarily. The values in these buffers may be thought of as latent instructions to a cortical circuit to update a decision variable ( or ) by some amount ( or ). While the samples can be acquired simultaneously, only one sample can update the corresponding decision variable at a time. This is the bottleneck that imposes serial multiplexing in the 2D tasks. One of the samples must be held (buffered) until the other update operation has cleared. If motion is the first to be updated, then cannot be updated until the circuit receiving the motion-update instruction has received it (black arrow). This takes some amount of time, (for instruct). The update instruction is realized by an integrator with a time constant ( ms) leading to slow cortical dynamics (maroon and blue traces).

Parallel acquisition of evidence and serial updating of two decision variables.

An elaborated drift diffusion model permits reconciliation of the serial processing implied by the double-decision choice-RT experiment and the failure to observe interference in choice accuracy when the color-motion stimulus is restricted to a brief pulse. The main components of the model are introduced in panel A and elaborated in panels B and C. In all panels, maroon and blue indicate motion and color processes, respectively. (A) Simulated trial from the short duration experiment (Experiment 2). Information flows from top to middle graphs for motion; and from bottom to middle graphs for color. Time is left to right. The evidence from both color and motion is extracted from the 120 ms random dot stimulus in parallel. Both can be stored temporarily in separate buffers (filled rectangles), which send an instruction to the circuits representing the respective decision variables in their persistent firing rates. The instruction is to change the firing rate by an amount ( or ). This latency from clearance of the sample from the buffer to receipt of the instruction takes time (, diagonal arrows), and this is followed by the realization of the instruction in the evolving firing rates of cortical neurons (smooth colored curves). In the example, the is the first to update. A central bottleneck precludes updating . The bottleneck is unblocked when the instruction is received by the circuit that represents the motion decision variable (black arrow). This allows the buffered evidence for color to update . Open rectangle represents clearance of the buffer content, which occurs immediately for motion and after a delay for color in this example. Dashed lines associated with the decision stage show the instructed change in the decision variable ( and ). Smooth colored curves show the evolution of the decision variables. (B) Elaboration of the example in panel-A. The boxes representing the 120 ms stimulus are replaced by the two outer rows: (i) raw luminance and color data stream, and , respectively, represented as biased Wiener processes (duration 120 ms); (ii) filtered evidence streams containing the relevant motion (right minus left) and color (blue minus yellow) signals. The filters introduce a delay and smoothing. The filtered signals can be sampled by the buffer every ms, so long as the buffer is available (i.e. empty). The bottleneck shows the process that is accessing the update channel. Other than the first sample, the prioritization is equal and alternating. Only one process can update at a time. Circled numbers identify the key events described in Results. Events sharing the same number are approximately coincidental. (C) Example of a double-decision in the choice-RT task. The first eight steps parallel the logic of the process shown in panel B. The decision variables then continue to update serially, in alternation, until reaches a terminating bound . The decisions then continues as a single-dimension motion process until reaches a terminating bound (). Note that the sampling rate is the same as it was in the parallel phase, whereas during alternation it was half this rate for each dimension. Bound height is indicated by and .

In this example, each buffer receives all the information available in the stimulus. Were there additional samples in the stimulus, the motion buffer would be ready to receive another sample when it sends its content, whereas the color buffer cannot be updated until it is cleared, later. The bottleneck is between the buffer and the update of the decision variable, more specifically, the initiation of the dynamic process that implements this update in a cortical circuit. In this case, there is no consequence beyond a delay, because there is no more evidence from the stimulus after 120 ms.

Figure 8B elaborates the diagram in panel A using another trial from the short duration experiment. We now represent the transformation of sensory data to evidentiary samples by applying a stage of signal processing to the raw luminance and color data, and . These functions are just shorthand for the noisy spatiotemporal displays. The motion filter is meant to capture the impulse response of direction selective simple and complex cells in the visual cortex (Movshon et al., 1978a; Movshon et al., 1978b; Adelson and Bergen, 1985; Britten et al., 1993; DeAngelis et al., 1993), and we assume a similar operation on the stimulus color stream. They are also shorthand for a difference signal, such as right minus left and blue minus yellow. The filtering introduces a delay and a smearing of these streams. While the motion filters must sample the at rates sufficient to support the extraction of fast fluctuations and fine spatial displacement, the neurons ultimately pool these signals nonlinearly over space and time (Britten et al., 1993; Zylberberg et al., 2016). These are the signals represented by the maroon filter traces in Figure 8B. This is the convolution of and the function in Figure 3B (bottom). The same filter is applied to to make the filtered color traces (blue). Importantly, for purposes of integrating the information in the color-motion random dot displays, 11 Hz sampling ( ms) is sufficient. Notice that the filtered representation lasts longer than the stimulus. Therefore, in this case, the decision is based on at least two samples of evidence per sensory stream.

The buffers acquire their first samples at ms (Figure 8B, arrows and ). The motion buffer is cleared as soon as it is acquired (open maroon rectangle) to instruct a change in (arrow ). The instruction is received ms later. Thus, it is 180 ms after stimulus onset that the neurons representing begin to reflect the motion evidence. We set ms mainly to simplify the figure (but see Figure 8—figure supplement 1). This unblocks the bottleneck (③), thereby allowing the first color sample to be cleared from its buffer (open blue rectangle) and replaced by a second color sample (filled blue rectangle, ). Notice that the second motion sample is also acquired at ms, that is, after the first acquisition (and its immediate clearance). The first color sample instructs , thus blocking other updates for () and is first registered by at ms, which unblocks the bottleneck (⑤). Because we are assuming alternation in this example, this leads to the second update of (). With the motion buffer available, it would be possible to obtain a third sample from the motion stream at ms, but the filtered signal has decayed to nearly zero, and we assume extinction of the stimulus is registered by the brain in time to terminate sampling. Upon receipt of , the bottleneck is unblocked ( ms; ⑦) and the second color sample is cleared from its buffer ( ms) to instruct (). There is no signal left to integrate, and the decision is made based on the signs of and . Thus, the decision is based on simultaneous (parallel) acquisition of two samples of evidence, which are incorporated serially into their respective decision variables.

The exercise helps us appreciate how a stream of evidence lasting only 120 ms could lead to a double-decision 400–600 ms later (Figure 3A). It also illustrates the compatibility of parallel acquisition and serial incorporation into the decisions, and it suggests that serial processing is imposed at the step between buffered samples and incorporation into the decision variables. This is the ‘response selection’ bottleneck hypothesized by Pashler, 1994 and others (e.g. Marti et al., 2012; see Discussion).

The idea extends naturally to double-decisions that are extended in time. Figure 8C illustrates a simulated double-decision in a free response task. The double-decision is made once both decision variables reach their terminating bounds. The example follows the same initial steps as the short duration experiment, except that when the second motion and color samples are cleared from their respective buffers, they are replaced with a third sample. Notice that beginning with the third motion sample, the interval to the next sample has doubled (180 ms), because the example posits regular alternation (for purposes of illustration only; see Figure 8—figure supplement 2 and Figure 8—figure supplement 3). This longer interval begins with the second sample. From that point forward, until the color decision terminates, the streams are effectively undersampled. Decision processes ignore approximately half of the evidence supplied by the stimulus. This is because both streams supply independent samples of evidence at a rate greater than 5.5 Hz (i.e. an interval of 180 ms).

In the example, it is that reaches the bound first ( s; ). There may be no overt behavior associated with this terminating event, as in the eye and unimanual reaching tasks, but direct evidence for this termination is adduced from the bimanual reaching task. From this point forward, the processing is devoted solely to motion until it terminates at a negative value of (). Notice that when the bottleneck is unblocked, there is always a buffered sample ready to be cleared, and this occurs at intervals of ms. The process is now as efficient as a single decision process. Indeed, a simple 1D decision about motion (or color) is likely to involve the same instruction delays and bottleneck (Figure 8—figure supplement 1). If , then like the first sample of motion, all subsequent samples of motion could pass immediately from the buffer to update without loss of information. The model is thus a variant of standard symmetrically bounded random walk or drift-diffusion (Laming, 1968; Link, 1975; Ratcliff, 1978; Shadlen et al., 2006; Ratcliff and Rouder, 1998; Palmer et al., 2005). It is compatible with the long time it takes for visual evidence to impact the representation of the decision variable in cortical areas like the FEF and LIP (e.g., ∼180 ms).

The diagrams in Figure 8 are intended for didactic purposes, to lay out the need for a buffer and the seriality imposed by a bottleneck between the buffer and the update of the DV in circuits associated with working memory. The values for the delays and time constants, and , were chosen mainly to simplify an already complex diagram, and the same holds for the assumption of strict alternation. The logic does not change if the serial processing were to involve many updates of color or motion before switching to the other dimension (Figure 8—figure supplement 2 and Figure 8—figure supplement 3). The important assumption is that it takes time to update a decision variable, and during this update there is a bottleneck that precludes another update. Importantly, whether alternating, as in Figure 8C, or starting one process after completing the other, as in Figure 8—figure supplement 2, there is a period of time in which information in the sensory stream is not affecting one of the decisions. This loss is apparent in the additivity of decision times, but it leads to no interference in accuracy in the RT task, because the termination criterion has not changed, and this (and the stimulus strength) determines accuracy. This is the insight that led to the prediction that under certain conditions in which the experimenter controls the duration of the color-motion display, there ought to be interference between color and motion sensitivity (Figure 4).

Discussion

In one sense, the present study extends the framework of bounded evidence accumulation to more complex decisions composed of the conjunction of two decisions about two distinct features. In another more important sense, the findings highlight a bottleneck in information processing that touches on the very speed of thought. The experimental findings demonstrate that a double-decision about the dominant color and direction of motion of a patch of random dots is formed serially. This is surprising, because color and motion are canonical examples of parallel visual pathways from the retina through the visual and extrastriate visual association cortex, and there are compelling demonstrations of this parallel processing on conscious perception (Cavanagh et al., 1984; Cavanagh et al., 1985; Carney et al., 1987). Moreover, the stimulus was designed to minimize interference or competition for spatial attention. It was restricted to a small aperture in the center of the visual field, and the same individual dots supply the motion and color information. It seems fair to say that the deck was stacked in favor of parallel processing. Indeed we confirmed that the color and motion information in the random dot stimulus used here was acquired in parallel.

With one notable exception, there was not a hint of an interaction between color or motion on choice performance in our experiments. That is, changing the difficulty of one dimension, say color, did not affect the perceptual accuracy—or more precisely, sensitivity—to the other dimension, say motion. This held over a wide range of difficulties spanning chance to perfect performance. The one exception was when we controlled viewing duration (Figure 4) and this turns out to be explained by a competition of the two streams for processing time, not by an interaction affecting the fidelity of the sensory streams themselves. Had we attended solely to the choice data, we would have likely concluded that the motion and color decisions were formed in parallel, consistent with 40 years of vision science (Livingstone and Hubel, 1988; Ramachandran and Gregory, 1978).

Evidence for seriality of the decision process is adduced mainly from the pattern of double-decision RTs. The RT is the time from the onset of the color-motion stimulus to the initiation of the movement used to indicate the decision: the sum of the time it takes to complete the double decision, plus time delays that are not affected by task difficulty, termed the non-decision time (). If the color and motion decisions are made in parallel, then the double-decision time is the larger of the two decision times, . If the decisions are made serially, the double-decision time is the sum, . We focused on the max vs. sum distinction using a combination of fitting and prediction. The simplest approach relies only on empirical fits of the double-decision RT distributions (Figure 2—figure supplement 2) derived from a smaller set of latent distributions of one-dimensional color and motion decision-times, under the appropriate operations for parallel and serial combination (Equation 6 and convolution, respectively). The approach focuses solely on the RTs and was therefore essential for Experiment 5, where we had no access to the direction choices in the two patches of random dots. It reveals ‘decisive’ support (Kass and Raftery, 1995) for seriality in all but one of the 11 participants (Figure 2—figure supplement 3). A drawback of the approach is that it does not constrain the relationship between choice accuracy and decision time. For this, we used a variety of bounded drift-diffusion models. These are the fits shown in Figure 2. Here too, we attempted to contrast the max and sum logic by predicting the RT distribution for the majority of conditions. We fit the choice-RT data from the subset of conditions in which at least one of the stimulus dimensions was at its strongest level. The fits, under the max or sum rule, supply the marginal distributions of color and motion decision times to predict the RT of the remaining conditions, through application of the same rule. This approach also provides decisive support for the serial model (see Figure 2—figure supplement 1).

The case for buffers and bottlenecks

The strong support for serial processing does not specify where in the processing chain the seriality arises. The answer to this question resolves the apparent contradiction with vision science, and highlights a connection with a body of literature from psychology that addresses the topic of dual task interference, more specifically the psychological refractory period. The key is the short and variable duration experiments (Figures 3 and 4). If seriality were imposed at the level of sensory acquisition then when both color and motion are difficult, accuracy on one dimension should come at the expense of accuracy on the other, on average. We did not observe this at short durations, and not for lack of power, as made clear by the interference that was detected at intermediate durations. Nor did we observe any reduction in accuracy compared to single decisions, and there was no difference in the magnitude and time course over which momentary fluctuations of color and motion predicted the individual choices on single- and double-decisions (Figure 3B). These observation also rule out the possibility that there was interference but it was balanced across trials—that is, a mixture of trials in which successful motion processing impaired color processing on half the trials and successful color processing impaired motion processing on the other half.

Thus, the short duration experiment demonstrates parallel processing and the necessity of at least one buffer. The results in the variable duration experiment might lead us to entertain the possibility that only color is buffered, because motion was prioritized. However, the bimanual task demonstrates that motion is not always processed first, and both color and motion are processed before the first process terminates. We therefore conclude that there are two buffers which are capable of holding a sample of evidence about color or motion, respectively, while the other dimension is incorporated into the decision. This places the bottleneck between the buffered evidence and the representation of the decision variable. We believe the bottleneck arises because of an anatomical constraint. It is simply impossible to connect in parallel every possible source of evidence with the neural circuits responsible for representing a proposition or plan. As Zylberberg et al., 2010 theorized, the brain's routing problem holds the key to why many mental operations operate serially. We will return to this idea after interpreting our results in the context of the neurobiology of decision making. We do this by pursuing the neural correlates of a computational model that supports parallel acquisition of sensory evidence and its serial incorporation into two decisions.

Connecting computational models to neurobiology

The operations depicted in Figure 8 are intended to reconcile what is known about the neurobiology of simple 1D decisions with the constraints introduced by the double-decision task. The mathematical instantiation of the model requires only minor modifications of two bounded drift-diffusion processes with temporal multiplexing (see Materials and methods). However, the architecture implied by Figure 8B,C facilitates interpretation of the experimental findings in relation to neural processing. In the mathematical depiction of drift-diffusion, the momentary evidence is a biased Wiener process. However, in reality the stimulus is not a Wiener process, nor is the representation of momentary evidence by neurons (Zylberberg et al., 2016), which arise through application of a transfer function that effectively spreads the impact of a pair of displaced dots over 100–150 ms (Figure 3B, bottom; Adelson and Bergen, 1985). Thus, the neural representation of the motion can be approximated by the leaky integral of a biased Wiener process (Cain et al., 2013; Barlow and Tripathy, 1997). Such smoothing would not be warranted for the detection of fast changes, but it is adequate for a signal that is to be integrated over time. We know less about the filtration of a color difference, but the same logic applies.