Learning developmental mode dynamics from single-cell trajectories

Abstract

Embryogenesis is a multiscale process during which developmental symmetry breaking transitions give rise to complex multicellular organisms. Recent advances in high-resolution live-cell microscopy provide unprecedented insights into the collective cell dynamics at various stages of embryonic development. This rapid experimental progress poses the theoretical challenge of translating high-dimensional imaging data into predictive low-dimensional models that capture the essential ordering principles governing developmental cell migration in complex geometries. Here, we combine mode decomposition ideas that have proved successful in condensed matter physics and turbulence theory with recent advances in sparse dynamical systems inference to realize a computational framework for learning quantitative continuum models from single-cell imaging data. Considering pan-embryo cell migration during early gastrulation in zebrafish as a widely studied example, we show how cell trajectory data on a curved surface can be coarse-grained and compressed with suitable harmonic basis functions. The resulting low-dimensional representation of the collective cell dynamics enables a compact characterization of developmental symmetry breaking and the direct inference of an interpretable hydrodynamic model, which reveals similarities between pan-embryo cell migration and active Brownian particle dynamics on curved surfaces. Due to its generic conceptual foundation, we expect that mode-based model learning can help advance the quantitative biophysical understanding of a wide range of developmental structure formation processes.

Editor's evaluation

This work proposes a method to obtain a reduced description of the collective dynamics of thousands of cells moving together during zebrafish gastrulation as a few fundamental modes, and to derive effective dynamics for these modes. This well-written work enables a simplified picture of the key features of cellular collective motion, that will be useful to physicists and biologists looking for a quantitative understanding of morphogenesis.

https://doi.org/10.7554/eLife.68679.sa0Introduction

Embryogenesis, the development of a multicellular organism from a single fertilized egg cell, requires coordinated collective motions of thousands of cells across a wide range of length and time scales (Gilbert and Barresi, 2016; Solnica-Krezel, 2005). Understanding how a highly reproducible and robust tissue organization arises from the dynamics and interactions of individual cells presents a major interdisciplinary challenge (Collinet and Lecuit, 2021). Recent advances in high-resolution live imaging make it possible to track the internal biological states and physical movements of many individual cells on pan-embryonic scales throughout various stages of development (Stelzer, 2015; Power and Huisken, 2017; Hartmann et al., 2019; Shah et al., 2019). This unprecedented wealth of data poses two intertwined compression problems of equal practical and conceptual importance. The first concerns the efficient reduction of high-dimensional tracking data without loss of relevant information; the second relates to inferring predictive low-dimensional models for the developmental dynamics. Mathematical solutions to the first problem are aided by taking into account the geometry and symmetries of the developing embryo, which suggest suitable basis functions for a coarse-grained and sparse mode representation of raw data (Levy, 2006). Efficient algorithmic approaches tackling the second problem appear within reach thanks to recent advances in the direct inference of dynamical systems equations from data (Brunton et al., 2016; Rackauckas et al., 2021). Building on these ideas, we construct and demonstrate here a computational framework that translates developmental single-cell trajectory data on curved surfaces into quantitative models for the dominant hydrodynamic modes.

Widely applied in physics (Kac, 1966; Goldenfeld and Woese, 2011; Kantsler and Goldstein, 2012; Bhaduri et al., 2020), engineering (Soong and Grigoriu, 1993; Heydari et al., 2021), and spectral computing (Driscoll et al., 2014; Burns et al., 2020; Fortunato et al., 2021), mode representations (Schmid, 2010; Tu et al., 2014) provide a powerful tool to decompose and study system dynamics at and across different energetic, spatial and temporal scales. In quantum systems, for example, mode representations in the form of carefully constructed eigenstates are used to characterize essential energetic system properties (Slater and Koster, 1954; Jaynes and Cummings, 1963). Similarly, turbulence theory has seen significant progress by studying the coupling between Fourier modes that represent dynamics at different length scales. This approach enabled a better understanding of energy cascades (Kolmogorov, 1941; Wang et al., 2021) and provided insights into the nature of turbulence in non-living (Kraichnan and Montgomery, 1980; Pope, 2000) and in living (Dunkel et al., 2013; Bratanov et al., 2015; Ramaswamy and Jülicher, 2016; Alert et al., 2020) systems. Additionally, the multi-scale nature of many biological processes make them particularly amenable to a representation in terms of spatial and temporal modes (Marchetti et al., 2013). Despite this fact, however, mode representations are not yet widely used to characterize and compress cell tracking data, or to infer dynamic models from such data.

To demonstrate the practical potential of mode representations for the description of multicellular developmental processes, we develop here a computational framework that takes cell tracking data as inputs, translates these data into a sparse mode representation by exploiting symmetries of the biological system, and utilizes recently developed ODE inference techniques (Rackauckas et al., 2021) to infer a predictive dynamical model. The model will be specified in terms of a learned Green’s function that propagates initial cell density and flux data forward in time. To validate the approach, we demonstrate that it correctly recovers the hydrodynamic equations for active Brownian particle (ABP) dynamics on curved surfaces. Subsequently, as a first example application to experimental single-cell tracking data, we consider the pan-embryonic cell migration during early gastrulation in zebrafish (Shah et al., 2019), an important vertebrate model system for studying various morphogenetic events (Solnica-Krezel, 2005; Krieg et al., 2008; Morita et al., 2017). During gastrulation, complex migratory cell movements organize several thousand initially undifferentiated cells into different germlayers that lay out the primary body plan (Rohde and Heisenberg, 2007). The underlying high-dimensional single-cell data make this process a prototypical test problem for illustrating how spatio-temporal information can be efficiently compressed to analyze and model biological structure formation.

Results

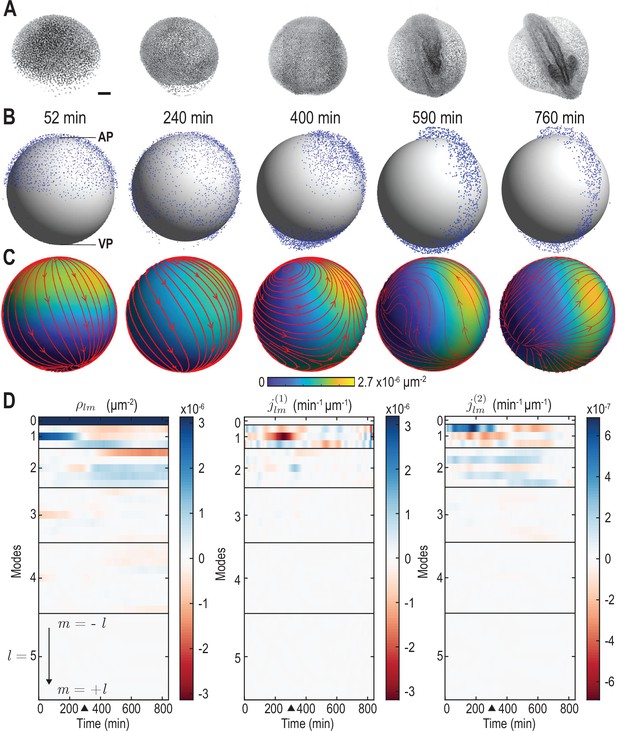

Broadly, our goal is to translate experimentally measured single-cell trajectories on a curved surface into a quantitative model of collective cell migration dynamics. As a specific example, we consider recently published lightsheet microscopy data that captures the individual movements of thousands of cells during early zebrafish development from epiboly onset at 4 hours post-fertilization (hpf) to about 18 hpf (Shah et al., 2019). This developmental period is characterized by a collective symmetry breaking event during which cells collectively migrate over the yolk cell surface (Rohde and Heisenberg, 2007). Namely, they rearrange from an initial localization around the animal pole (AP) (Figure 1A, left) into a more elongated configuration that already indicates the basic geometry of the fully developed zebrafish larva (Figure 1A, right). Working with a two-dimensional (2D) sphere projection of the experimental data, we first describe a coarse-graining approach that faithfully captures cell-mass transport on a curved surface. We then construct a sparse mode representation of the resulting hydrodynamic fields in terms of scalar and vector spherical harmonic basis functions, discuss mode signatures of morphogenetic symmetry breaking events, and connect them to the dynamics of topological defects in the cellular flux. We validate this mode representation framework and the subsequent model inference using synthetic data of ABPs on a sphere, for which coarse-grained fields and learned models can be directly compared against analytical predictions. Finally, we infer a linear model for the mode dynamics of the experimental zebrafish data, which enables us to study the characteristics of cell interactions through kernels that couple cell density and flux and compare their features with the hydrodynamic mean-field signatures of ABPs on a sphere.

From single-cell tracking data to sparse mode amplitude representations.

(A) Microscopic imaging data of early zebrafish development (adapted from Figure 1b in Kobitski et al., 2015) shows cell migration from an initially homogeneous pole of cells (left) toward an elongated structure that indicates the head-tail axis of the fully developed organism. Scale bar, . (B) Experimental single-cell tracking data from Shah et al., 2019 (blue dots) during similar developmental time points (±20 min) as in A. min for the indicated time points in B corresponds to a developmental time of 4 hours post fertilization. The -axis points from the ventral pole (VP) to the animal pole (AP). (C) Coarse-grained relative cell density (color) and associated coarse-grained flux (streamlines) determined from single cell positions and velocities from data in B via Equation 2a. Thickness of streamlines is proportional to the logarithm of the spatial average of (see Video 1). (D) Dynamic harmonic mode representation of the relative density (Equation 4, left panel) and of the flux (Equation 5, middle and right panel) for fields shown in C. The modes correspond to compressible, divergent cell motion, the modes describe incompressible, rotational cell motion. Mode amplitudes become negligible for (Video 2). For all panels, horizontal black lines delineate blocks of constant harmonic mode number and black triangles denote the end of epiboly phase.

Coarse-graining of cellular dynamics on a spherical surface

The experimentally observed cell motions are approximately two-dimensional (2D): The radius of the yolk cell surface on which the dynamics takes place is much larger than the average height changes of the evolving cell mass (Shah et al., 2019). We therefore adopt a thin film approximation, in which the cellular motion is represented on an effective spherical mid-surface (gray surface in Figure 1B); refined future models should aim to account for the full 3D dynamics. Focusing here on the in-plane dynamics, we project all cell positions and velocities onto a spherical mid-surface of radius . On this spherical surface, each cell has a position and in-plane velocity .

As a second processing step, a coarse-grained representation of the single-cell dynamics on a spherical surface is determined. To facilitate the applicability of our framework to a wide range of experimental inputs, we propose a coarse-graining approach that can flexibly integrate cell number variations stemming from cell divisions, but also those from experimental uncertainties in cell imaging and tracking. Consequently, we first consider an idealized scenario in which the total cell number is approximately constant. In this case, mass conservation informs the construction of self-consistent coarse-graining kernels on a spherical surface. In a second step, we describe how this approach generalizes when there are variations in the total cell number.

Consistent coarse-graining of idealized microscopic data

Our specific aim is to translate microscopic cell positions and velocities into a continuous cell surface density and an associated flux at any point of the spherical mid-surface. For an approximately constant total number of cells, the fields and are related by the mass conservation equation

Here, denotes the in-plane divergence of the cell number flux. To convert cell position and velocities into a normalized cell surface density and an associated normalized flux , we consider a kernel coarse-graining of the form (Appendix 1)

where is the total number of cells and is the angular velocity of a given cell on a reference unit sphere (Appendix 1). The kernels and are given by a scalar and a matrix-valued function, respectively. The matrix kernel takes into account contributions of a particle with velocity at to nearby points on the sphere, which involves an additional projection to ensure that is everywhere tangent to the spherical surface (Appendix 1). Importantly, the mass conservation Equation 1 implies a non-trivial consistency relation between the kernels and in Equation 2a, Equation 2b. The kernels that obey this condition represent different coarse-graining length scales (Appendix 1—figure 2). Throughout, we fix an intermediate coarse-graining length scale to enable a sparse representation of the experimental data, while ensuring that spatial details of the dynamics remain sufficiently well resolved. The final surface density and the associated normalized flux , computed from Equation 2a and Equation 2b using a kernel with an effective great-circle coarse-graining width of , are shown in Figure 1C (see also Video 1).

Time evolution of the pre-processed cell tracking data (point cloud, see Materials and methods), and of the density field (colormap) and associated flux (streamlines) corresponding to the harmonic modes shown in Figure 1D.

This mode representation was determined by the coarse-graining and projection procedure described in the main text. Streamline thickness is proportional to the logarithm of the average flux amplitude . For visualization purposes, cell distances to the origin were rescaled by a factor of , where is the average cell distance from center at time and is the mid-surface radius.

Consequences of cell number variations in experimental data

Because cell divisions are essential to most developmental processes, total cell numbers will in many cases – including early zebrafish gastrulation (Kobitski et al., 2015) – vary over time. True cell numbers and cell number changes are often difficult to measure due to experimental uncertainties arising from single-cell imaging and tracking within dense cellular aggregates. We therefore merely assume here that single cells are tracked in a representative fashion so that local relative surface densities found from Equation 2a reflect the probability that cells are present at a given point . In the absence of further information on cell deaths and cell divisions, we additionally make the more restrictive assumption that cell appearances or disappearances are everywhere proportional to the local cell density. With these assumptions, we can define a cell number surface density , where is the cell number at time and is the normalized surface density given in Equation 2a. Similarly, a cell number flux is given by , where the flux is computed from the data as described by Equation 2b. Using these definitions in Equation 1, we find that the fields and obey a continuity equation

where denotes a time-dependent effective growth rate. Importantly, under the two above assumptions, Equation 3 encodes for any time-dependent total cell number the same information as Equation 1 for coarse-grained normalized surface density and associated flux given by Equation 2a and Equation 2b, respectively. In the following analysis, we hence focus on these normalized fields.

Spatial mode representation on a spherical surface

To obtain a sparse mode representation of the hydrodynamic fields and on the spherical surface, we expand them in terms of scalar and vector spherical harmonics (SHs) (Arfken et al., 2013; Sandberg, 1978) (Appendix 2.A). SHs are defined on points of the unit sphere, where μm is the mid-surface radius. In this basis, the scalar density field is represented as

which conveniently separates the time- and space-dependence of into mode amplitudes and scalar harmonic functions , respectively. The maximal mode number is a proxy for the maximal spatial resolution at which is faithfully represented. Similarly, the vector-valued flux can be decomposed into time-dependent mode amplitudes and , while its spatial dependence is described by vector SHs and (Sandberg, 1978) (Appendix 2, Video 2).

Reconstruction of the hydrodynamics fields in real space by adding consecutive scalar and vector spherical harmonic modes of progressively higher order .

Surface coloring depicts the density field , the associated flux is indicated by streamlines. Streamline thickness is proportional to the logarithm of the average flux amplitude . The shown fields correspond to the time point min in Video 1.

Besides the in-plane divergence that leads to local density changes (see Equation 1), the cell number flux also contains an in-plane curl component that is associated with locally rotational cell flux. The two sets of vector SHs and conveniently decompose the flux into these contributions: Because , and (Sandberg, 1978), we see from Equation 5 that corresponds to modes that drive density changes and represents modes of local rotational cell motion that change relative cell positions but do not change local density. Indeed, using harmonic mode representations of the cell number density Equation 4 and the cell number flux Equation 5 directly in the continuity Equation 1, we find a set of ordinary differential equation in mode space

where and for each value of , . Equation 6 offers an alternative way of determining the modes directly from the modes of the coarse-grained cell number density (see Equation 4 and Equation 2a), while ensuring that the resulting fields obey mass conservation exactly. In practice, the modes found from a vector harmonic representation of the coarse-grained cell number flux (Equation 2b) will often deviate from modes determined from Equation 6, even if cell numbers are expected to be conserved. This can be, for example, due to limited accuracy in determining velocities from noisy single-cell trajectories , or due to spatially inhomogeneous appearances and disappearances of cells in tracking data. Consistent with our simplifying assumption that cell number changes in the data can be sufficiently well approximated by a globally homogeneous growth rate (compare Equation 1 with Equation 3), the subsequent analysis uses the modes as determined from the density modes via Equation 6, together with modes from the explicit velocity coarse-graining Equation 2b. The complete construction is detailed in Appendix 2 and the full coarse-grained dynamics is shown in Video 1.

The representation of and in terms of spherical harmonic modes with leads in total to mode amplitude trajectories, displaying only a few dominant contributions (Figure 1D) with almost no signal remaining for (Figure 1—figure supplement 1, Video 2). This demonstrates that the underlying coarse-grained experimental data is sufficiently smooth and implies that a spectral representations is indeed meaningful. Thus, the coarse-graining approach outlined above provides a sparse spectral representation of high-dimensional microscopic single-cell data. The associated harmonic basis functions and vectors have an intuitive physical meaning, convenient algebraic properties and, as we will see, encode information about the length scales and symmetries of the collective dynamics.

Temporal mode representation

We further compress the dynamical information by representing the time series of the modes in terms of Chebyshev polynomial basis functions (Driscoll et al., 2014; Mason and Handscomb, 2002). To simplify notation, we define a dynamic mode vector that collects all the modes up to determined in the previous section and consider an expansion

in terms of the spatio-temporal mode coefficients with temporal mode number (Appendix 2). This compression allows us to accurately evaluate time derivatives of the mode amplitudes (Supekar et al., 2021), an important step when using Equation 6 to determine flux modes directly from density modes . Fixing and in the remainder, the initial single-cell data set of about 1.4 million recorded cell position entries, or 4.2 million degrees of freedom, has thus been reduced to 2250 mode coefficients, corresponding to a compression ratio .

Characterization of the developmental mode dynamics

A harmonic mode decomposition naturally integrates the geometry of the underlying domain and simultaneously provides useful insights into spatial scales and symmetries of the dynamics. For each mode in the sets of SHs , and , the integer index indicates the spatial scale of the harmonic, with being a constant and larger indicating progressively finer spatial scales. The second index provides additional information about the orientation of the harmonic scalar function or vector field. The modes and are particularly useful for characterizing the symmetry of spatial patterns on a spherical surface (Mietke et al., 2019; Scholich et al., 2020): Modes with indicate patterns with a global polar symmetry, whereas modes with represent spatial patterns with a global nematic symmetry. We now exploit these features for a detailed characterization of the symmetry breaking that takes place during cellular rearrangements and to study the properties of the cellular flux in more detail. To this end, we discuss spatial averages

of different real-space observables over the mid-surface .

Mode signatures of developmental symmetry breaking

To study how different developmental stages and their associated symmetry breaking events are reflected in the mode representation, we first consider the average cell surface density fluctuations

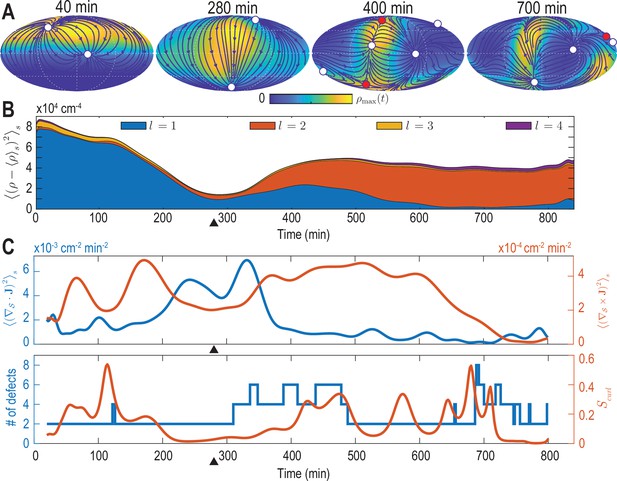

For each mode , the power spectrum in Equation 9 provides a rotationally invariant quantity (Çetingül et al., 2012; Schwab et al., 2013) that can effectively serve as an order parameter to characterize the symmetry of cell density patterns on the spherical surface. The dynamics of the density fluctuations given in Equation 9 broken down into contributions from each mode is shown in Figure 2B. Several features of this representation are particularly striking and can be directly related to specific developmental stages. First, patterns of cell surface density fluctuations evolve from a dominantly polar symmetry () into density patterns with a prominent nematic symmetry (). These mode signatures intuitively reflect the essential symmetry breaking that takes place when cells collectively reorganize from an initially localized cell dome (Figure 1B, 52 min) into an elongated shape that wraps in an open ring-like pattern around the yolk cell (Figure 1B, 760 min). Second, during this transition at around 300 min (9 hpf) (black triangle in Figure 2B), the cell surface density is most homogeneous as fluctuations become minimal for all modes . Interestingly, this time point approximately marks the completion of epiboly, when the different cell layers have fully engulfed the yolk. Finally, although in a less pronounced manner, the power spectrum of the mode also exhibits an increased amplitude towards later times, indicating the formation of structures at finer spatial scales as development progresses. We find that mode signatures of the symmetry breaking and progression through developmental stages are robust (Figure 2—figure supplement 1B, D), illustrating that mode-based analysis can provide a systematic and meaningful characterization of developmental symmetry breaking events.

Mode signatures of developmental symmetry breaking and topological defects in cellular flux.

(A) Two-dimensional Mollweide projection of the compressed coarse-grained density field (colormap) and of the coarse-grained cell flux (streamlines) at different time points of zebrafish gastrulation.White circles depict topological defects of charge +1 in the flux vector field, red circles depict defects with charge -1. The total defect charge is 2 at all times. Defects are seen to ‘lead’ the large-scale motion of cells and later localize mostly along the curve defined by the forming spine. Animal pole (AP) and ventral pole (VP) are located at top and bottom, respectively. (B) Density fluctuations as a function of developmental time (see Equation 9), broken down in contributions from different harmonic modes . The underlying symmetry breaking is highlighted prominently by this representation: During the first 75% of epiboly (0–280 min) cells migrate away from, but are still mostly located near the animal pole, presenting a density pattern with polar symmetry (). During the following convergent extension phase cells converge towards a confined elongated region that is ‘wrapped’ around the yolk, corresponding to a density pattern with nematic symmetry (). Black triangles indicate transition from epiboly to convergent extension. (C) Comparison of surface averaged divergence and curl of the cellular flux computed via Equation 10a and Equation 10b, respectively (top). A relative curl amplitude computed from these quantities via Equation 11 correlates with the appearance of an increased number of topological defects in the cell flux (bottom), suggesting that incompressible, rotational cell flux is associated with the formation of defects.

Mode signatures of emergent topological defects in cellular flux

The vectorial nature of the cell number flux on a spherical surface implies the presence of topological defects (colored circles in Figure 2A, see Materials and methods) (Kamien, 2002). Several recent experimental results pertaining to the self-organization of multicellular systems suggest an important role of such topological defects in organizing morphogenetic events (Doostmohammadi et al., 2016; Saw et al., 2017; Guillamat et al., 2020; Copenhagen et al., 2020; Meacock et al., 2020; Maroudas-Sacks et al., 2020). We therefore analyze how defects within the cell number flux are dynamically organized during early zebrafish gastrulation and if signatures of defect formation and annihilation are present in the mode representation Equation 5. We first consider the average squared divergence and curl of the cell number flux given by

which are shown in Figure 2C (top). The two contributions to the collective cellular dynamics – locally compressible, divergent flux quantified by the divergence and locally incompressible, rotational cell motion characterized by the curl – are independently determined by the modes and . Therefore, each contribution can be evaluated conveniently and with high accuracy from a representation of in terms of vector SHs. From Figure 2C (top), we see that the most significant divergent flux (blue curve) occurs around 300 min at the transition from epiboly towards the convergence and extension stage. A quantification of the incompressible rotational flux relative to the total cell number flux is shown in Figure 2C (bottom), where we plotted the relative curl amplitude

This measure suggests a correlation between incompressible rotational cell motion and the occurrence of topological defects (circles in Figure 2A) in the cell flux . The total number of topological defects present at any time point is depicted in Figure 2C (bottom, blue curve). Because the vector-valued flux is defined on a sphere, we observe that the total topological charge always sums to +2 (Kamien, 2002), while additional defect pairs with opposite charge (red +1 and white -1 circles in Figure 2A) can be created, resulting in total defect numbers greater than two (see Figure 2C, bottom). Interestingly, the relative curl amplitude defined in Equation 11 indicates that increased contributions from incompressible rotational flux are associated with the formation of topological defects in the cell number flux, a feature that is robustly identified by our framework (Figure 2—figure supplement 1A, C, Figure 2—figure supplement 3, Figure 2—figure supplement 4). The appearance of additional defects at the end of epiboly, when the developing embryo begins to extrude more significantly in the radial direction, suggests that topological defects in the 2D projected cellular flux fields could signal the start of the formation of more complex structures in three dimensions.

Learning a linear hydrodynamic model of the developmental mode dynamics

The results in Figure 2 confirm that a low-dimensional mode representation can capture essential characteristics of developmental symmetry breaking processes. The mode representation therefore provides a natural starting point for the inference of hydrodynamic models from coarse-grained cell-tracking data. For a given time-dependent mode vector that contains all modes up to , the simplest hydrodynamic model corresponds to the linear dynamical equation

where the constant coefficient matrix encodes the couplings between different modes. Intuitively, Equation 12 aims to describe an experimentally observed density and flux dynamics in terms of a relaxation process, starting from inhomogeneous initial conditions represented by . The mathematical learning problem is then to find a coefficient matrix such that the linear model Equation 12 holds for the mode vector time series that was determined from the coarse-graining procedure described in the previous sections.

Validation of the learning framework using active Brownian particle dynamics

Before applying the combined coarse-graining and inference framework to experimental data, we illustrate and validate the learning approach on synthetic data for which coarse-graining results and hydrodynamic mean-field equations are analytically tractable. To this end, we consider the stochastic dynamics of non-interacting active Brownian particles (ABPs) on the unit sphere of radius (Sknepnek and Henkes, 2015; Fily et al., 2016; Castro-Villarreal and Sevilla, 2018). Similar to a migrating cell, an ABP at position moves across the unit sphere at constant speed v0 in the direction of its fluctuating orientation unit vector . The strength of the orientational Gaussian white noise is characterized by a rotational diffusion constant (Figure 3A, Appendix 3).

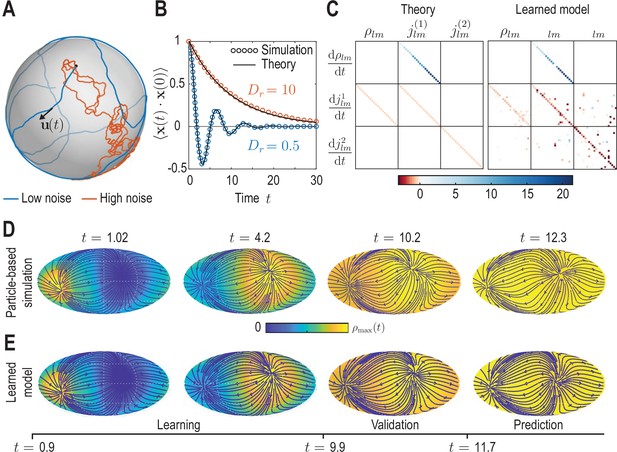

Learning active Brownian particle (ABP) dynamics on a sphere.

(A) ABPs move on a unit sphere (radius ) with angular speed along a tangential unit vector that is subject to stochastic in-plane fluctuations (see Appendix 3 for further details). Example single-particle trajectories are shown in the high-noise (orange, in units of ) and in the low-noise regime (blue, ). Time is measured in units of in all panels. (B) Position correlation function averaged over independent ABP trajectories show distinct oscillations of period in the low-noise regime, as ABPs orbit the spherical surface more persistently (see Video 3). Standard error of the mean is smaller than symbol size. (C) Analytically predicted (left) and inferred (right) dynamical matrices (see Equation 12) describing the mean-field dynamics of a large collection of non-interacting ABPs (see Equation 13a, Equation 13b, Equation 13c and Appendix 3) show good quantitative agreement. (D) Mollweide projections of coarse-grained ABP simulations with and using cell positions from the first time point in the zebrafish data (Figure 1) as the initial condition: At each position 60 particles with random orientation were generated and their ABP dynamics simulated, amounting to approximately particles in total. The density fields homogenize over time, where the maximum density at has decayed to about 5% of the maximum density at . Blue lines and arrows indicate streamlines of the cell flux . (E) Simulation of the learned linear model, Equation 12 with shown in (C) (right), for the same initial condition as in D. Marked time points indicate intervals of learning, validation and prediction phases of the model inference (see Appendix 4).

Coarse-grained dynamics of active Brownian particles on the unit sphere in the low-noise () and high-noise () regime.

Data from independent ABP simulations was coarse-grained using the kernels and () described in Appendix 1. Initial ABP positions were sampled from an axisymmetric distribution with . Mollweide projections in the left and right column are color-coded for density and flux magnitude , respectively. Colormaps are normalized by the maximum values of density and flux magnitude fields across all time points.

Compared with conventional passive Brownian motion, self-propulsion of an ABP along its orientation direction introduces a persistence to the particle’s motion that is reduced as rotational noise is increased. Additionally, the topology of the spherical surface implies that in the low-noise regime, , particles are expected to return to the vicinity of their starting points after a duration . The conjunction of persistent motion and topology then leads to oscillatory dynamics in the positional correlation (blue dots in Figure 3B, Appendix 3). Comparing correlations from stochastic ABP simulations in different noise regimes with theoretical predictions (solid lines in Figure 3B) validates our numerical ABP simulation scheme.

To generate a test data set for our coarse-graining and inference framework, we simulated non-interacting ABPs in both the low-noise () and the high-noise () regime with initial positions drawn from the experimental data shown in Figure 1. Specifically, at each cell position present in the data, we generated 60 particles with random orientation, amounting to approximately particles in total, and simulated their dynamics on a unit sphere. The resulting trajectory data were coarse-grained following the procedure outlined in the previous sections, yielding dynamic density fields and fluxes (Video 3), together with their mode representations and .

In the second ‘learning’ step, we infer a sparse mode coupling matrix that approximates the dynamics Equation 12 for the dynamical mode vectors obtained from the coarse-grained simulated ABP data. Our inference algorithm combines adjoint techniques (Rackauckas et al., 2021) and a multi-step sequential thresholding approach inspired by the Sparse Identification of Nonlinear Dynamics (SINDy) algorithm introduced by Brunton et al., 2016. The full algorithm is detailed in Appendix 4 and illustrated in the summary flowchart Appendix 4—figure 1. Importantly, we perform the sparse regression using dynamical mode vectors rescaled by their median absolute deviation (MAD) to compensate for substantial scale variations between different modes. The final output matrix of this learning algorithm is shown in the right panel of Figure 3C and can be compared against the analytically coarse-grained dynamics of ABPs on curved surfaces (Fily et al., 2016; Castro-Villarreal and Sevilla, 2018). Under suitable closure assumptions (Appendix 3), the mean-field dynamics of ABPs on a unit sphere is given in harmonic mode space by

from which we can read off the mode coupling matrix shown in the left panel of Figure 3C. A direct comparison between the theoretical and the inferred matrices shows that our framework recovers both the structure and the quantitative values of with good accuracy. Due to the finite number of ABPs used to determine the coarse-grained fields, we do not expect that the theoretically predicted coupling matrix is recovered perfectly from the data. Instead, some mode couplings suggested by Equation 13a may not be present or modified in the particular realization of the ABP dynamics that was coarse-grained. Indeed, direct simulation of the learned model projected in real space (Figure 3E) reveals a density and flux dynamics that agrees very well with the dynamics of the the coarse-grained input data (Figure 3D). Altogether, these results demonstrate that the proposed inference framework enables us to to faithfully recover expected mean-field dynamics from coarse-grained fields of noisy particle-based data.

Learning developmental mode dynamics from experimental data

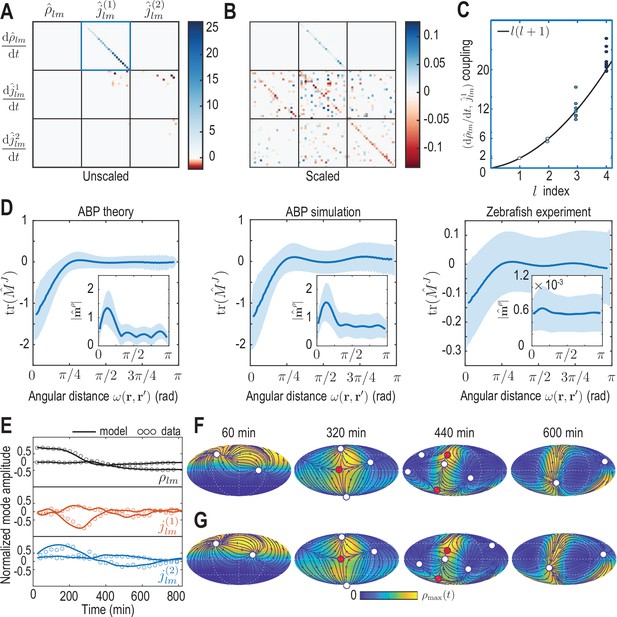

The same inference framework can now be directly applied to the coarse-grained experimental zebrafish embryo data shown in Figure 1C and D, yielding a sparse coefficient matrix (Figure 4A and B) that encodes the dynamics of the developmental mode vector according to Equation 12. The inferred coupling between the time derivative of density modes and flux modes faithfully recovers mass conservation (Figure 4C; see Equation 6). Overall, the learned matrix has 395 non-zero elements, effectively providing further compression of the experimental data, which required 2,250 spatio-temporal mode coefficients collected in (see Equation 7) for its representation. Using the mode vector of the first experimental time point as the initial condition, the inferred minimal model Equation 12 with shown in (Figure 4A and B) faithfully recovers both the mode and real-space dynamics seen in the coarse-grained fields of the experimental input data (Figure 4E–G, Video 4).

Model learning for experimental data of collective cell motion during early zebrafish development.

(A) Visualization of the constant mode coupling matrix that was learned from experimental data (see Appendix 4) and describes the dynamics of the mode vector via Equation 12. Dimensionless fields are defined by and () with and min. (B) Scaling the learned matrix by the Mean Absolute Deviation (MAD) of the modes (see Appendix 4) reveals structures reminiscent of the mode coupling matrix learned for ABPs (Figure 3C). (C) The learned model recovers mass conservation in mode space (Equation 6). (D) Comparison of theoretical and inferred real-space kernels (see Equation 14 and Appendix 4) for the ABP dynamics and for the experimental data of collective cell motion. The trace of the non-dimensional kernel (the only non-zero eigenvalue, Appendix 4—figure 2) indicates a localized flux-flux coupling with a similar profile among both systems. The oscillating magnitude of the non-dimensionalized density-flux kernel (insets) in the ABP system indicates a gradient-like coupling and is consequence of the persistent ABP motion. In the experimental data, a first peak around is also visible, but less pronounced. All kernel properties were computed by averaging over pairs of positions that are separated by the same angular distance . Solid lines indicate mean, shaded areas indicate standard deviation. (E) Comparison of experimental mode dynamics (circles) with numerical solution (solid line) of the minimal model Equation 12 for learned matrix visualized in A. For clarity, the comparison is shown for the two dominant modes of each set of harmonic modes and . (F, G) Mollweide projections of the experimental data (F) and of the numerical solution of the learned model (G) show very good agreement (Video 4). Blue lines and arrows illustrate streamlines defined by the cell flux , circles depict defects with topological charge +1 (white) and -1 (red).

Comparison of dynamics of the experimental and learned density (colormap) and flux fields (streamlines) represented in a Mollweide projection.

White circles depict topological defects of charge +1 in the vector field , red circles depict defects with charge -1. The total defect charge is 2 at all times. Top row depicts the coarse-grained (see Equation 2a) and projected (see Equations 4–7) experimental data, snapshots in the bottom row are obtained by reintegrating the ordinary differential equation model Equation 12 using the learned matrix (see Figure 4A). The colorbar is at each time point scaled to the interval [0, ].

It is instructive to analyze the inferred matrix and the linear model it encodes in more detail. Comparing the MAD-rescaled matrix (see Appendix 4) learned for the experimental zebrafish data (Figure 4B) with the non-dimensionalized matrix learned for the active Brownian particle dynamics (Figure 3C), we find similar patterns of prominent diagonal and block-diagonal couplings. Consistent with the analysis of single cell trajectories (Shah et al., 2019), this suggests that a random, but persistent movement of cells akin to ABPs moving on a sphere partially contributes to the early gastrulation process in zebrafish. This is complemented in the minimal model of the experimental dynamics by significant off-diagonal contributions (Figure 4B), which are absent in the non-interacting ABP model. Such off-diagonal contributions represent effective linear approximations of cell-cell interactions, environmental influences or other external stimuli reflected in the experimental time-series data. Ultimately, such contributions to the mode coupling matrix help realize the symmetry breaking process observed in the underlying experimental data (Figure 2).

The inferred mode coupling matrix shown in Figure 4B together with Equation 12 provides a highly robust minimal model. Specifically, despite being linear, it is numerically stable over a period approximately four times as long as the input data from which the matrix was learned. Furthermore, simulations with modified initial conditions (see Figure 4—figure supplement 1) still exhibit a characteristic symmetry breaking and lead to the emergence of density and flux patterns similar to those seen in Figure 4F and G. For example, simulating Equation 12 using the initial condition of a different experimental data set (Figure 2—figure supplement 1) leads to final patterns with the same symmetry as in the original training data, further corroborating that the observed symmetry breaking is directly encoded in the interactions represented by the matrix . A similar robustness is observed under moderate perturbations of the initial condition, such as a rotation of initial cell density patterns relative to the coordinate system in which was inferred, or a local depletion of the initial density, emulating a partial removal of cells as experimentally realized in Morita et al., 2017. Taken together, these numerical experiments demonstrate that the inferred mode coupling matrix meaningfully captures the dynamics and interactions of cells that facilitate the symmetry breaking observed during early zebrafish development.

Green’s function representation of learned models in real space

To characterize the inferred spatial interactions in more detail, we can analyze the real-space representation of the learned mode coupling matrix . While the density dynamics represented by (the first row in Figure 4AB) simply reflects mass conservation Equation 1 in real space, the dynamics of the flux (the second and third row in Figure 4A and B) corresponds in real space to the integral equation (Appendix 4)

where is the spherical surface area element. The vector-valued kernel in Equation 14 connects the distribution of cell density across the surface to dynamic changes of the flux at a given point . Similarly, the matrix-valued kernel describes how the distribution of cell fluxes at affects temporal changes of the flux at .

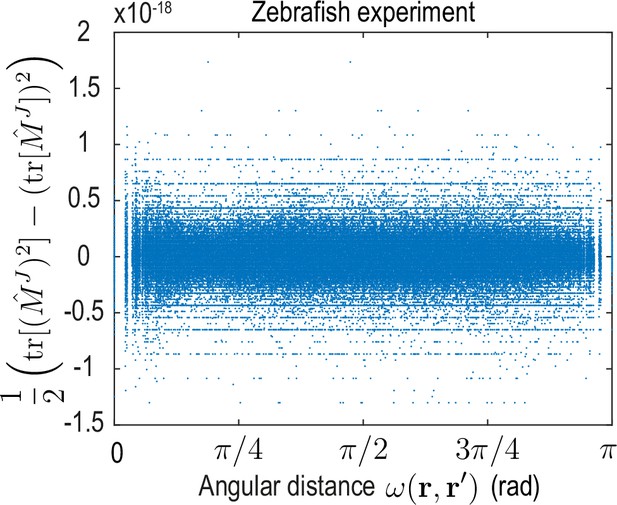

To analyze the spatial range of interactions between points and , we use the fact that the matrix-valued kernel has only one non-zero eigenvalue (Appendix 4—figure 2). Consequently, the trace serves as a proxy for the distance-dependent interaction strength mediated by . Averages of over point-pairs with the same angular distance are shown for the ABP dynamics and for the minimal model inferred from experimental data in Figure 4D. Note that to make the models amenable to comparison, we compute from the known mean-field model of ABPs (Equation 13a) using the same finite number of modes as used to represent the ABP and the zebrafish data (). In theory, one expects for the ABP dynamics a highly localized, homogeneous kernel , so that an exact spectral representation would require an infinite number of modes (see Appendix 4). In practice, using a finite number of modes leads to a wider kernel range (Figure 4D ’ABP theory’) and introduces an apparent spatial inhomogeneity, as indicated by the non-zero standard deviation of at fixed distance (blue shades). Both the quantitative profile of and its variation are successfully recovered by applying the inference framework to stochastic simulations of ABPs (Figure 4D ’ABP simulation’) where was computed from the learned mode coupling matrix shown in Figure 3C. For the inferred minimal model of the cell dynamics (Figure 4D ’Zebrafish experiment’), we find a similar short-ranged flux-flux coupling mediated by . However, the increased variability of at fixed distances indicates more substantial spatial inhomogeneities of the corresponding interactions. These inhomogeneities are absent in a non-interacting system of ABPs and represent an interpretable real-space signature of the symmetry-breaking mechanisms built into the underlying mode coupling matrix .

A similar analysis can be performed for the kernel that couples the density at position to dynamics of fluxes at position (see Equation 14), where we average the magnitude over pairs (, ) with the same angular distance (Figure 4D insets). Using a finite number of modes to compute this kernel in the different scenarios again introduces apparent spatial inhomogeneities in all cases. Additionally, all kernel profiles exhibit a distinct maximum at short range, indicating a coupling between density gradients and the flux dynamics that emerges microscopically from a persistent ABP and cell motion (see Appendix 3 and 4) – an observations that is consistent with the similar block-diagonal structure of both inferred matrices (compare Figures 3C and 4B).

In conclusion, the real-space analysis and comparison of inferred interaction kernels further highlights potential ABP-like contributions to the collective cellular organization during early zebrafish development and reveals an effectively non-local coupling between density and flux dynamics. The latter could result, for example, from unresolved fast-evolving morphogens (Hannezo and Heisenberg, 2019), through mechanical interactions with the surrounding material (Münster et al., 2019) or due to other relevant degrees of freedom that are not explicitly captured in this linear hydrodynamic model. More generally, a real-space representation of kernels provides an alternative interpretable way to study the interactions and symmetry-breaking mechanisms encoded by models directly learned in mode space.

Discussion

Leveraging a sparse mode representation of collective cellular dynamics on a curved surface, we have presented a learning framework that translates single-cell trajectories into quantitative hydrodynamic models. This work complements traditional approaches to find quantitative continuum models of complex multicellular processes (Etournay et al., 2015; Hannezo et al., 2015; Morita et al., 2017; Streichan et al., 2018; Münster et al., 2019) that match problem-specific constitutive relations of active materials in real-space with experimental observations. We have demonstrated here that length scales and symmetries associated with a mode representation can directly inform about the character of symmetry breaking transitions and topological features of collective cellular motion even before a model is specified. The successful applications to synthetic ABP simulation data and experimental zebrafish embryo data show that model learning in mode space provides a promising and computationally feasible approach to infer quantitative interpretable models in complex geometries.

The learned linear minimal model for cell migration during early zebrafish morphogenesis quantitatively recapitulates the spatiotemporal dynamics of a complex developmental process (Figure 4F and G), and highlights similarities between collective cell migration and analytically tractable ABP dynamics on a curved surface. An extension to nonlinear mode-coupling models or an integration of additional, experimentally measured degrees of freedom, such as concentration fields of morphogens involved in mechanochemical feedbacks (Hannezo and Heisenberg, 2019), is in principle straightforward by including nonlinear terms in Equation 12. Furthermore, the above framework could be generalized to describe the dynamics within a spherical shell of finite height by complementing the surface vector SHs used in this work by their radial counterpart (Barrera et al., 1985).

To provide a concrete example, we focused here on applying the model learning framework to single-cell tracking data of early zebrafish morphogenesis. However, the essentially spherical organization of cells during gastrulation observed in zebrafish is shared by many species whose early development occurs through a similar discoidal cleavage (Gilbert and Barresi, 2016), and the framework introduced here is directly applicable once tracking data becomes available for these systems. More generally, as novel imaging technologies are being developed (Keller et al., 2010; Royer et al., 2016; Shah et al., 2019), we expect that even larger and more detailed imaging data will further facilitate the exploration of finer scales and length-scale bridging processes (Lenne et al., 2021) through learning approaches that directly built on mode-based data representations.

Materials and methods

Data pre-processing

Request a detailed protocolWe obtained two single-cell tracking data sets from the experiments described in Shah et al., 2019. These data consist of the Cartesian coordinates of each cell together with a tracking ID. Some of the data is accessible at https://idr.openmicroscopy.org with ID number idr0068. We first denoised each cell trajectory using MATLAB’s (Matlab, 2019) wavelet denoiser function wdenoise, and centered the cloud of cells by least-squares fitting a spherical surface through it and shifting the origin at each time to coincide with the center of this sphere. We then computed the velocity of each cell by using Tikhonov-regularized differentiation as described in Knowles and Renka, 2014 and implemented in the MATLAB third-party module rdiff (Wagner, 2020). After examination of the cells’ velocity distribution, we further removed outlier cells whose speed is in the 95th percentile or above and verified that this operation only removes aberrant cells. Finally, we rotated the data to align the animal pole of the embryo with the -axis, as determined by the direction of the center of mass of the initial cell distribution. The resulting single cell data are shown as point clouds in Figure 1B and in Video 1.

Topological defect tracking

Request a detailed protocolWe have developed a defect tracker that identifies topological defects in vector fields tangent to a spherical surface via integration along suitable Burger circuits. The corresponding software is available at (https://github.com/NicoRomeo/surf-vec-defects; Romeo, 2022, copy archived at swh:1:rev:6dc742c376b0d085e19ece65f932ac6935342aba).

Appendix 1

Consistent coarse-graining on curved surfaces

We describe the derivation of self-consistent coarse-graining kernels that are used in the main text to convert single cell information into a continuous density field and its associated fluxes on a spherical surface. We first motivate this problem for a flat surface and then proceed with a detailed derivation for the case of a spherical surface.

Kernel consistency in Euclidean space

It is instructive to first consider a set of particles at positions moving with velocities , where capitalized vectors indicate position and velocity in Euclidean space, e.g. particles move on a flat surface or within some three-dimensional volume. A coarse-grained density and a mass flux can be defined from this microscopic information by

where and represent a scalar-valued and a matrix-valued kernel function, respectively. At the same time, in a system with a constant number of particles, mass conservation implies, in general,

relating the density and the mass flux of particles. Using the coarse-graining prescriptions Equation 15a and Equation 15b directly in Equation 16 and assuming the resulting relation must hold for any set of particle trajectories, one finds a general kernel consistency relation

This condition is automatically satisfied for any translationally invariant and isotropic pair of kernels and , where is the unit matrix. Coarse-graining with such kernels is frequently employed in practice: Positions and velocities can be, for example, simply convolved with a Gaussian function of mean zero (Supekar et al., 2021).

Kernel consistency on a curved surface

For a surface parameterized by with generalized coordinates , two tangential basis vectors are defined by (). Partial derivatives are, in the following, denoted . The metric tensor is given by . The mean curvature is defined by , where denotes the unit surface normal and the Einstein summation convention is used. The covariant form of mass conservation Equation 1 (main text) on a curved surface reads

with and denotes the covariant derivative. In general, we are interested in describing an effective dynamics for cell positions and velocities that are projected onto a common reference sphere of radius . Such a description can be found by first formulating the coarse-graining approach for a unit sphere, on which particle positions and velocities are fully determined by angular coordinates and corresponding angular velocities, and finally rescaling the density and flux fields by suitable factors of . The corresponding coarse-graining Equation 2b (main text) of in-plane angular velocities for particles on a unit sphere reads covariantly

where and we drop the dependence on time to simplify the notation. The two-point kernel tensor (a ‘bitensor’) is evaluated in the tangent space of for its first index and in the tangent space of at the second, primed index (Appendix 1—figure 1). Mass conservation on a curved surface, Equation 18, together with the coarse-graining prescriptions Equation 2a (main text) and Equation 19 then implies a covariant kernel consistency relation

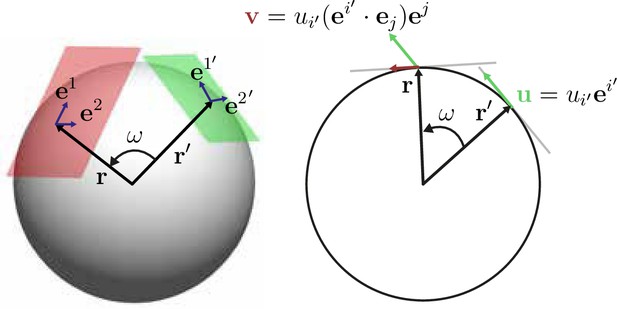

Illustration of the action of the coarse-graining tensor kernel Equation 19.

Left: acts in the two tangent space at points and that are separated by an angular distance . Each tangent plane has corresponding basis vectors, for . Right: The tensor kernel projects vectors in the tangent space of and generates a vector tangent at .

Solving the kernel consistency relation on a sphere

We solve Equation 20 in the following on the unit sphere, such that corresponds to the surface normal. The final result can simply be rescaled to any spherical surface of radius . Furthermore, we note that the parameter

provides a measure for the great circle distance between two points on a sphere. Hence, we consider an ansatz for the kernels in Equation 20 of the form

with two unknown scalar functions and . The relevant derivatives of the ansatz Equation 22a and Equation 22b can readily be evaluated to

Here, ⊗ denotes a dyadic product and we use and , which follows from Equation 21, as well as in the second equation, which holds on a unit sphere and follows from the definition of the mean curvature. We then use the expansion of the identity matrix in on the spherical basis , such that in our case with we have . Hence, Equation 23b becomes

Using Equation 24 and Equation 23a in the kernel consistency relation Equation 20 and dividing by (at , for which , Equation 20 is obeyed for any , ), we find that the scalar functions in the kernel ansatz Equation 22a and Equation 22b have to obey

Hence, the general covariant consistency relation Equation 20 implies for the kernel ansatz Equation 22a and Equation 22b that the weighting functions and must be related by

Kernel functions with compact support

In the last step, we determine a family of kernel functions and defined on the interval that satisfy Equation 25, along with the requirements:

and must be regular on

on

is normalized to one on the unit sphere.

Recalling with angular distance between and , a family of functions fulfilling these conditions is given by

where is an indicator function that is one if and vanishes otherwise (Appendix 1—figure 2). In this work, we have chosen the kernels Equation 22a and Equation 22b with and for . For these kernels derived here, densities and associated fluxes that are coarse-grained on a unit sphere can be converted into effective densities and fluxes on a spherical surface of radius through the rescaling and . Equivalently, rescaled kernels and can be used directly, as was done in Equation 2a and Equation 2b of the main text to generate the data shown in Figure 1 (main text).

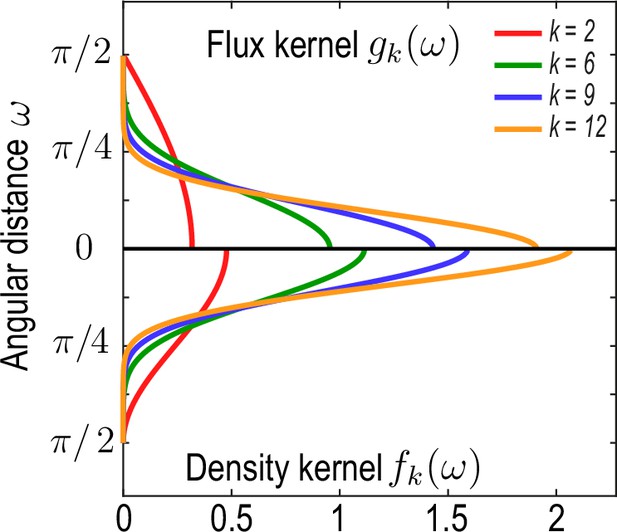

Family of kernel functions and given in Equation 26a.

These functions represent weights of the coarse-graining kernels Equation 22a and Equation 22b that are defined such that the kernels satisfy the consistency relation Equation 19. denotes angular distances between and. Coarse-graining of a conserved number of particles on a sphere to determine a density field (Equation 2a, main text) requires a different weighting – – than the coarse-graining of an associated flux (Equation 2b , main text), which requires a weighting instead to ensure that coarse-grained fields obey mass conservation Equation 18. A characteristic coarse-graining length scale associated with these kernels is the half-width at half-maximum (HWHM), which is related to by HWHM .

Appendix 2

Spatio-temporal mode decomposition

In this section, we provide explicit expressions for the scalar and spherical harmonic basis functions (‘spatial modes’), as well as for the Chebyshev basis functions (‘temporal modes’) used in this work. Additionally, we describe a systematic approach to determine the minimal number of modes needed to describe the coarse-grained data, while preserving a high level of accuracy in the representation.

Spatial basis: spherical harmonics

In this work, we use the real spherical harmonics defined in spherical coordinates by Arfken et al., 2013 as

where is the associated Legendre polynomial of degree and order , and

Vector spherical harmonics can be defined and expressed as vector fields in 3D or covariantly as (Sandberg, 1978; Mietke et al., 2019)

where denotes the gradient operator an a unit sphere, is the covariant Levi-Civita tensor, and the metric tensor. Scalar harmonics and either vector harmonic are orthogonal:

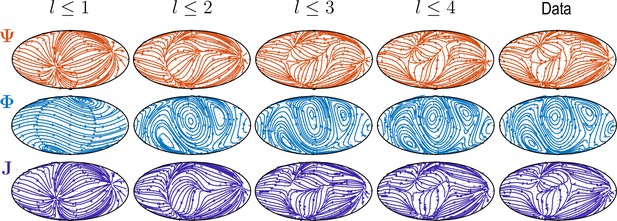

where . The increasing complexity of patterns and accuracy of reconstruction with larger is illustrated in Appendix 2—figure 1 and Video 2.

Sequentially adding vector spherical harmonics and – equivalent to increasing in Equation 5 – resolves increasing levels of details present in experimental flux fields ("Data").

Main features of the data are captured already by a relatively small number of modes ( used throughout this work).

Temporal basis: Chebyshev polynomials

Chebyshev polynomials of the first kind are defined by Arfken et al., 2013.

Chebyshev polynomials form an orthogonal basis of continuous functions on the interval , such that an expansion

uniformly converges as (Driscoll et al., 2014). This representation also allows computing derivatives spectrally from

Information loss through coarse-graining

Coarse-graining microscopic data into smooth fields is an irreversible operation, during which some of the original particle information is irretrievably lost. The choice of coarse-graining scale is thus dictated by a trade-off between smoothness and information content - choosing larger coarse-graining scales leads to smoother fields but blurs finer scale structures which may be of interest. To inform our choice of coarse-graining scale, we quantify the loss of information incurred by the coarse-graining operation.

The measure we introduce to quantify information loss is based on the the well-known relationship between the smoothness of functions in real space and Fourier space (Stein and Shakarchi, 2011): A smooth function in real space should have a peaked, quickly decaying spectrum in Fourier space while a collection of point-like objects such as delta functions should have a uniform non-decaying spectrum. Specifically, we describe a uniformly sampled field as a matrix with components being the field values . In our case, represents either the density field or any of the Cartesian components of the flux vector field at a given time point. We find the complex discrete Fourier spectrum of this matrix using the two-dimensional fast Fourier transform. We then calculate the power spectral density (PSD) of the Fourier spectrum as and interpret the normalized PSD.

as a discrete probability distribution. The spectral entropy characterizing the information content of the field is then defined by

Smooth fields are sharply peaked in Fourier space and have a low spectral entropy, whereas fields that resolve discrete single particle information are rather flat in Fourier space and have a large spectral entropy. The difference in entropy between particle data and smoothed fields then measures the information eliminated by the coarse-graining procedure. If we additionally normalize by the entropy of the spectral entropy of the raw particle data, we finally obtain a relative measure of the information that is lost in the coarse-graining process. In general, a measure as given in Equation 34 can be defined for any transform with the property that smoothness in real space leads to a fast decaying spectrum in transform space.

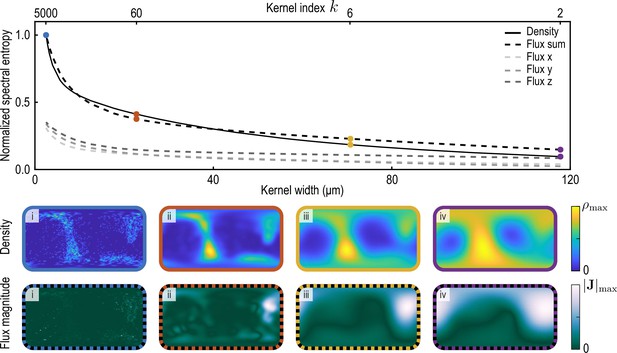

We compute the spectral entropy of density and flux component fields at a representative time point and for varying coarse-graining length scales (Appendix 2—figure 2). Specifically, we coarse-grain density and flux through the procedure described in the main text and in Appendix 1 for different values of the kernel parameter (see Equation 26a). Large values of correspond to small coarse-graining length scales, with the effective half-width at half-maximum (HWHM) of the kernels given in Equation 22a and Equation 22b with weight functions Equation 26a and Equation 26b scaling as HWHM . Normalized spectral entropies with are then computed using Equation 34. For the flux field, we define ("Flux sum" in Appendix 2—figure 2) and interpret the sum of these three contributions ("Flux x", "Flux y", "Flux z" in Appendix 2—figure 2) as the total information contained in the flux field. We find that the spectral entropies of all fields show similar features. In particular, an increasing coarse-graining width first results in a sharp loss of information as individual particle positions are blurred, followed by less steep information loss as continuous fields progressively lose details of finer structures. In this work, we use an intermediate value of the coarse-graining parameter (yellow data in Appendix 2—figure 2).

Normalized spectral entropy as a function of the coarse-graining kernel width (top) computed for density and flux field using Equation 34.

To evaluate the spectral entropy for the vector-valued flux, we define (‘Flux sum’). The coarse-graining width – the half-width at half-maximum (HWHM) of the coarse-graining kernels Equation 22a and Equation 22b with weight functions Equation 26a – is varied by varying the kernel index , where (see Appendix 1—figure 2). The fields and are shown in the two bottom rows for different values of i. (blue, data used to compute the reference spectral entropies and ) ii. (brown) iii. (yellow, used in main text) iv. (purple).

Optimal compression in space and time

Spectral representations are exact in the limit of an infinite number of modes. In practice, we choose a maximal harmonic mode number and maximal Chebyshev mode number . A too large value of and provides little compression benefit, while too small values suffer accuracy penalties. Hence, there is a compression-accuracy trade-off that we seek to optimize. To evaluate the trade-off quantitatively, we define a heuristic compression metric by

where is the number of sampled time steps and is the number of spatial grid points used for coarse-graining. Larger values of correspond to a higher compression factor. To define accuracy metrics, we consider the norm

where the sum runs over regularly sampled time points ti. We denote a particular mode representation of the data that was coarse-grained via Equation 2a and Equation 2b (main text) for as the ‘uncompressed’ reference. A measure to characterize the accuracy of a mode-truncated ‘compressed’ data representation is then given by a relative average mode reconstruction error

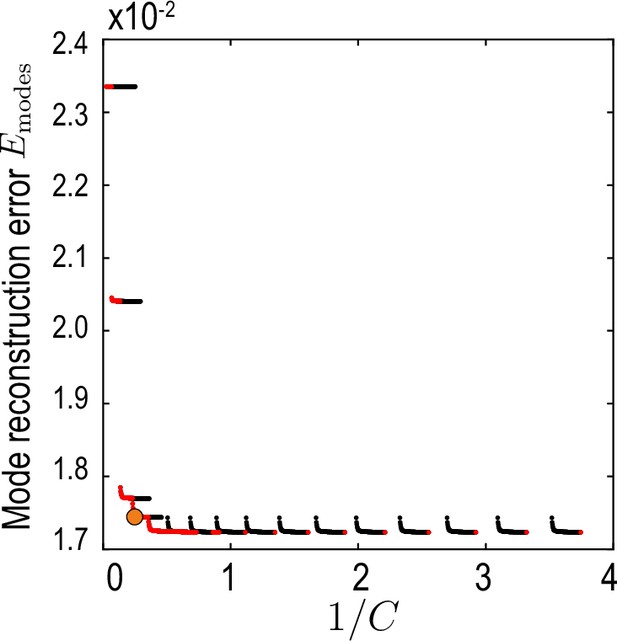

This measure compares the compressed mode representation , truncated at maximal Chebychev mode number (temporal representation Equation 32, Appendix 2) and maximal harmonic mode number (spatial representation, Equations 4; 5, main text) with the reference modes . To find a compromise between accuracy, characterized by , and compression defined in Equation 35, the aim is to find a pair on the Pareto front (Jin and Sendhoff, 2008) of vs. (red dots in Appendix 2—figure 3).

Relative average mode reconstruction error Equation 36.

As a function of the inverse of the compression defined in Equation 35. Red points indicate the Pareto front (Jin and Sendhoff, 2008) of this compression-accuracy approximation trade-off. Orange circle indicates the final value used for our analysis.

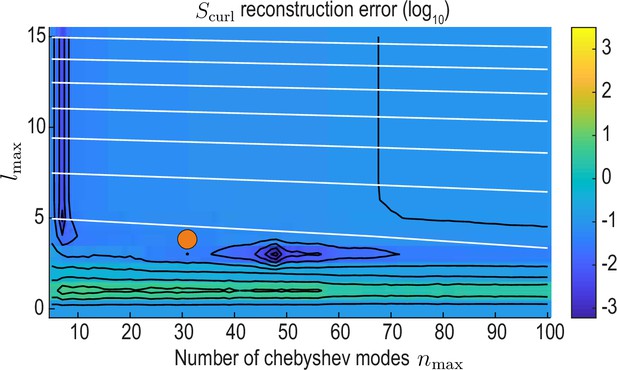

Note that the modes and are so far omitted from this analysis, because the latter are in practice found directly from density modes via Equation 6 (main text). However, taking temporal derivatives of using Equation 33 to determine introduces undesirable oscillations for too large Chebychev cut-offs . This implies an additional trade-off between the need for accuracy (higher ) and stability (lower ). In practice, we wish to find values of such that relative amplitudes of pairs and are preserved by the compression. This can be achieved by comparing the relative curl amplitude

to the analog quantity computed from the reference modes and analyzing the curl reconstruction error

as a function of and (Appendix 2—figure 4). From this, we find a region of low error around , which also is on the Pareto front of the accuracy vs. compression trade-off (orange circles in Appendix 2—figures 3 and 4) and represents the final values used throughout this work.

reconstruction error landscape (log scale) as a function of and .

Black contour lines indicate iso-error lines (see Equation 37, const.), whereas white contour lines indicate iso-compression levels (see Equation 35, const.). Orange circle indicates the final value used for our analysis.

Appendix 3

Active Brownian particles on the sphere

In this section, we describe the stochastic dynamics of non-interacting, active Brownian particles (ABPs) (Romanczuk et al., 2012) on curved surfaces and derive analytically coarse-grained mean-field equations, as well as a kernel representation of ABP dynamics. These results are used in the main text to validate our coarse-graining and inference framework.

We consider active Brownian particles at position that move with speed v0 on the surface of a unit sphere (radius ) in the direction of their unit orientation vector . Since at all times, we can interpret v0 as the particle’s angular speed on the unit sphere. The orientation vector is at all times tangential to the surface, but is subject to random in-plane fluctuations characterized by a rotational diffusion coefficient . The corresponding dynamics of and is given by the stochastic differential equations (in units )

where the stochastic differential Equation 38b is interpreted in the Stratonovich sense, as denoted by the symbol "o" (Braumann, 2007). It follows from Equation 38a that and are normalized at all times. In the absence of rotational diffusion (), the vectors and rotate over time by an angle around the axis . Consequently, particle trajectories in the absence of noise trace out great circles in the plane defined by .

Spatial correlation of APBs on a sphere

To illustrate how ABPs on a sphere differ from ABPs in Euclidean space, we study first the correlation function , where the angled brackets denote a Gaussian white-noise average. To this end, we rewrite the ABP dynamics Equation 38a in their equivalent Itô form given by

In the Itô formulation any smooth function obeys , such that (Winkler et al., 2015)

and

which yields a damped harmonic oscillator equation for the correlation function

Normalization and orthogonality of and imply the initial conditions and at . The behavior of solutions of Equation 40 is a function of the rotational Péclet number that quantifies the ratio between active motion and orientational diffusion. For , (‘high-noise regime’), the position correlation function decays according to Equation 40 monotonically to zero. For , (‘low -noise regime’) position correlations exhibit damped oscillations. To validate our simulation method (described in the following section), analytic predictions for are in Figure 3B (main text) compared against the ensemble average over simulated ABPs.

Stochastic simulation of active Brownian particles on the sphere

To ensure a numerically exact normalization of the particle’s position and orientation vectors on the unit sphere, we simulated the dynamics

We numerically solve the Itô formulation of this system using the Euler-Mayurama scheme (Higham., 2001), and confirm that this system reproduces the correlation dynamics predicted by Equation 40 (Figure 3B, main text).

Fokker-Planck equation

To study the continuum dynamics of a large number of non-interacting ABPs on a sphere, we determine the dynamics of the probability density of particle positions and orientations at time . To do so, it is convenient to express particle positions in terms of a parameterisation that defines tangential basis vectors by () and a metric tensor . By definition, we have and Equation 38a can be rewritten as

General tangential vectors on the surface can be written as and on a unit sphere the surface normal can be identified with particle positions . Hence, on the unit sphere the Gauss-Weingarten relation reads , where denote Christoffel symbols and is the curvature tensor. This implies together with Equation 42 the geometric relation

Comparing this identity with the stochastic dynamics in Equation 38b and using that for unit vectors on the unit sphere, we find the covariant stochastic differential equation

In Equation 43, denotes the Levi-Civita tensor on the unit sphere.

In this covariant basis, we define the scalar probability density

where denotes a Dirac function. Combining Equations 42; 43, standard methods (Fily et al., 2016; Castro-Villarreal and Sevilla, 2018) allow us to obtain the Fokker-Planck equation for as

Using the identity , the dynamics of the probability density is finally given by

which agrees with the result in Castro-Villarreal and Sevilla, 2018.

Hydrodynamic expansion

To connect the Fokker-Planck dynamics given in Equation 46 to hydrodynamic fields, we define (probability) density and fluxes by , and . Their dynamics on the unit sphere is given by Castro-Villarreal and Sevilla, 2018.

where couplings to higher order fields are neglected, as they vanish at shorter time-scales due to the presence of rotational noise. Expressing Equation 47a and Equation 47b in terms of scalar and vector spherical harmonics (see Appendix 2) for an arbitrary sphere radius R0 yields the mode dynamics Equation 13a, Equation 13b and Equation 13c the main text.

Appendix 4

Learning and interpreting the linear model

We describe details about the inference procedure used to learn the linear ordinary differential equation (ODE) model considered in the main text. We then discuss how the matrix found by this procedure can be further studied in terms of its real-space kernel representation and derive this kernel for the ABP dynamics introduced in Appendix 4.

Inference of the dynamical mode coupling matrix M

Given a dynamical mode vector , the goal is to learn a linear minimal model

of the mode dynamics. Here, is an unknown mode coupling matrix, where generally . In systems with global mass conservation, as considered in this work, one can additionally use that the mode is constant and eliminate the corresponding couplings from .

To describe the algorithm that was used to infer the mode coupling matrix , we parameterize by a vector that contains all non-zero entries and introduce a function that represents the underlying matrix structure. Together, they generate the explicit form of the mode coupling matrix. Imposing structure on the matrix, such as rank constraints, or sparsity leads to a shorter vector and modifies the definition of accordingly. Denoting as the result of numerically integrating the system of ODEs Equation 48 up to time from initial condition with , we define the loss function

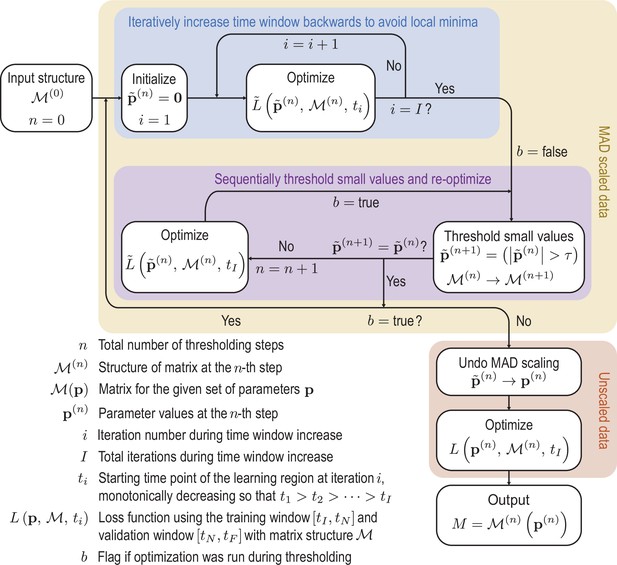

where the ti are time points in an interval at which the data and the ODE solution are sampled. Using the ODE solvers and optimization functions provided by the Julia modules DifferentialEquations.jl and DiffEqFlux.jl (Rackauckas et al., 2021), we can differentiate through the ODE solver to calculate derivatives of the loss function Equation 49 with respect to parameters and subsequently apply gradient-based optimization algorithms. The loss function is minimized using the ADAM algorithm (Kingma and Ba, 2017), followed by the Broyden-Fletcher-Goldfarb-Shannon (BFGS) algorithm (Nocedal and Wright, 2006). To increase the robustness of the optimization and promote sparsity, we use a sequentially thresholded algorithm (Supekar et al., 2021; Brunton et al., 2016; Reinbold, 2020). A complete overview of this procedure is shown in Appendix 4—figure 1 and the details of the specific design decisions made in the algorithm are discussed in the following:

1. To account for the variation in scale between the different modes in the data , each mode is normalized by its median absolute deviation (MAD) across the full time-span in which the data are available. Specifically, we scale each mode by

where and the median is taken over all time-points, giving rise to a scaled mode vector . Losses analogous to Equation 49 that are computed using scaled data are denoted in the following by .

2. To prevent over-fitting, we divide the data into two regions, a learning region from to and a validation region from to . Only data from the learning region is used in the optimization of the loss function Equation 49. However, the model is integrated into the validation region, and a corresponding validation loss using only the data in the validation region is calculated. During each optimization run, we choose the model with the lowest loss in the validation region, lowering the likelihood of over-fitting to the specific data in the learning region.

3. To prevent the optimization from getting stuck in local minima, we incrementally increase the time-span of the data included in the optimization objective (blue box in Appendix 4—figure 1). We increase the time window backward from a fixed endpoint , choosing each iteration an earlier initial condition at time . The advantage of stepping backward rather than forward from a fixed initial condition is twofold: first, the validation region stays unchanged throughout the optimization, making comparisons of the validation loss easy. Second, because the initial condition changes with each run, the learned matrix tends to be more robust to fluctuations in the initial condition.

4. After the optimization step, sparsity is promoted by thresholding the elements in the matrix (Brunton et al., 2016), removing small magnitude elements that do not noticeably contribute to the mode dynamics (purple box in Appendix 4—figure 1). The optimization procedure is then repeated until the thresholding converges. The threshold is chosen to generate a sparse matrix that still reproduces the dynamics faithfully.

5. Once the sparsity pattern is obtained from the sequential thresholding and optimization procedure a final run of the optimization is performed on the unscaled mode data to find the final dynamical matrix , which removes any potential slight bias the MAD scaling might have introduced in the parameter values .

Finally, the numerical stability of the model can be checked by examining the eigenvalues of the learned matrix. For the ABP test data, we learn a matrix for which the largest real part of its eigenvalues is at machine precision. For the experimental data, the largest real part in the eigenvalues is , which corresponds to a time scale of around 675 mins. While the corresponding dynamics will eventually become unstable, solutions remain bound over a period of approximately 45 hours, which is four times as long as the input data from which the mode coupling matrix was learned.

Learning and validation regions used in this work

For the ABP data, the first 15 frames are excluded, so that – consistent with coarse-graining assumptions (see Appendix 3, Equation 47a, Equation 47b) any remnants of higher orientational order introduced by the initial conditions have decayed. The subsequent 140 frames are used as the learning region, followed by a validation region of 20 frames. Each frame corresponds to a time interval of approximately 0.06 in units of . We exclude the first and last 10 frames of the experimental zebrafish data and split the remaining data into a learning region of 360 frames, with the remaining 40 frames used for validation. Each frame corresponds to a time interval of 2 min.

Initially the data is rescaled using the median absolute deviation (MAD) defined in Equation 50 to account for variation in scales across the modes.

Scaled variables are denoted by tildes. To avoid local minima of the optimization function, we iteratively feed more data into the cost function. Next we sequentially threshold the small terms in the matrix until convergence is reached. These procedures are repeated until the sparsity pattern converges. Finally the scaling is undone and the parameters are optimized on the unscaled data to produce the final matrix. Schematic of the learning procedure.

Green’s function representation of the learned matrix

The learned matrix consists of 9 blocks each with entries. Each block relates a mode family to time derivatives of another and we write

We denote the components of each block by , where , and , are multi-indices that represent the harmonic modes . Using the mode representation Equation 5 and the form of the linear minimal model Equation 48, we find

Using Equation 30a, Equation 51 can be cast into the dynamic kernel Equation 14 given in the main text, where we defined the vector kernel

and the matrix kernel