COVID-19 pandemic dynamics in South Africa and epidemiological characteristics of three variants of concern (Beta, Delta, and Omicron)

Abstract

Severe acute respiratory syndrome coronavirus 2 (SARS-CoV-2) variants of concern (VOCs) have been key drivers of new coronavirus disease 2019 (COVID-19) pandemic waves. To better understand variant epidemiologic characteristics, here we apply a model-inference system to reconstruct SARS-CoV-2 transmission dynamics in South Africa, a country that has experienced three VOC pandemic waves (i.e. Beta, Delta, and Omicron BA.1) by February 2022. We estimate key epidemiologic quantities in each of the nine South African provinces during March 2020 to February 2022, while accounting for changing detection rates, infection seasonality, nonpharmaceutical interventions, and vaccination. Model validation shows that estimated underlying infection rates and key parameters (e.g. infection-detection rate and infection-fatality risk) are in line with independent epidemiological data and investigations. In addition, retrospective predictions capture pandemic trajectories beyond the model training period. These detailed, validated model-inference estimates thus enable quantification of both the immune erosion potential and transmissibility of three major SARS-CoV-2 VOCs, that is, Beta, Delta, and Omicron BA.1. These findings help elucidate changing COVID-19 dynamics and inform future public health planning.

Editor's evaluation

This paper proposes a modeling framework that can be used to track the complex behavioral and immunological landscape of the COVID-19 pandemic over multiple surges and variants in South Africa, which has been validated previously for other regions and time periods. This work may be useful for infectious disease modelers, epidemiologists, and public health officials as they navigate the next phase of the pandemic or seek to understand the history of the epidemic in South Africa.

https://doi.org/10.7554/eLife.78933.sa0Introduction

Since its emergence in late December 2019, the severe acute respiratory syndrome coronavirus 2 (SARS-CoV-2) has spread globally, causing the coronavirus disease 2019 (COVID-19) pandemic (Koelle et al., 2022). In just 2 years, SARS-CoV-2 has caused several pandemic waves in quick succession in many places. Many of these repeated pandemic waves have been driven by new variants of concern (VOCs) or interest (VOIs) that erode prior immunity from either infection or vaccination, increase transmissibility, or a combination of both. However, while laboratory and field studies have provided insights into these epidemiological characteristics, quantifying the extent of immune erosion (or evasion) and changes to transmissibility for each VOC remains challenging.

Like many places, by February 2022 South Africa had experienced four distinct pandemic waves caused by the ancestral SARS-CoV-2 and three VOCs (Beta, Delta, and Omicron BA.1). However, South Africa is also unique in that the country had the earliest surge for two of the five VOCs identified to date – namely, Beta (Tegally et al., 2021) and Omicron (Viana et al., 2022). To better understand the COVID-19 dynamics in South Africa and variant epidemiological characteristics, here we utilize a model-inference system similar to one developed for study of SARS-CoV-2 VOCs, including the Beta variant in South Africa (Yang and Shaman, 2021c). We use this system to reconstruct SARS-CoV-2 transmission dynamics in each of the nine provinces of South Africa from the pandemic onset during March 2020 to the end of February 2022 while accounting for multiple factors modulating underlying transmission dynamics. We then rigorously validate the model-inference estimates using independent data and retrospective predictions. The validated estimates quantify the immune erosion potential and transmissibility of three major SARS-CoV-2 variants, that is, Beta, Delta, and Omicron (BA.1), in South Africa. Our findings highlight several common characteristics of SARS-CoV-2 VOCs and the need for more proactive planning and preparedness for future VOCs, including development of a universal vaccine that can effectively block SARS-CoV-2 infection as well as prevent severe disease.

Results

Model fit and validation

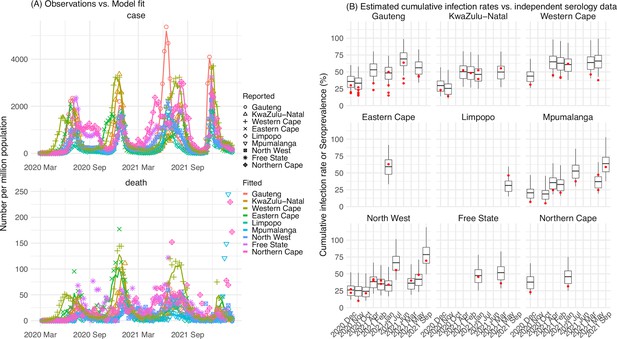

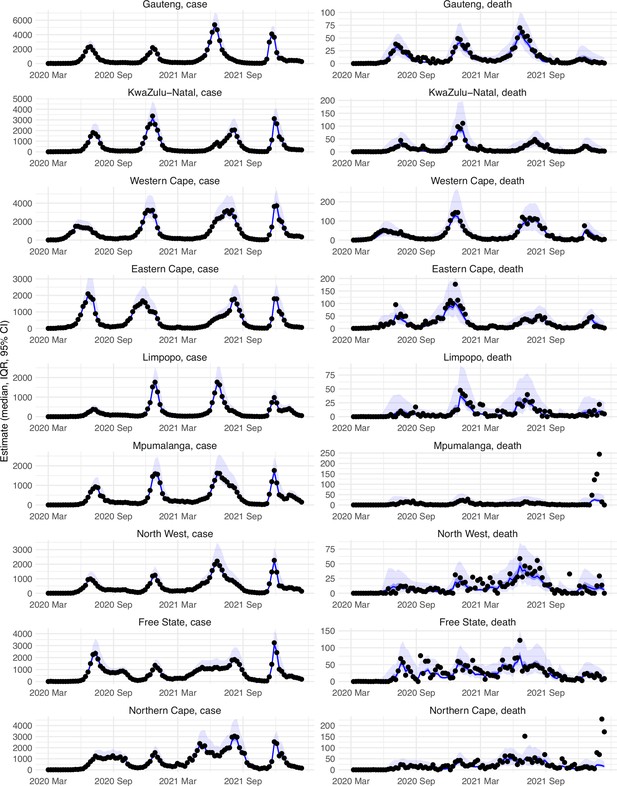

The model-inference system uses case and death data to reconstruct the transmission dynamics of SARS-CoV-2, while accounting for under-detection of infection, infection seasonality, implemented nonpharmaceutical interventions (NPIs), and vaccination (see Materials and methods). Overall, the model-inference system is able to fit weekly case and death data in each of the nine South African provinces (Figure 1A, Appendix 1—figure 1, and additional discussion in Appendix 1). Additional testing (in particular, for the infection-detection rate) and visual inspections indicate that posterior estimates for the model parameters are consistent with those reported in the literature, or changed over time and/or across provinces in directions as would be expected (see Appendix 1).

Pandemic dynamics in South Africa, model-fit and validation using serology data.

(A) Pandemic dynamics in each of the nine provinces (see legend); dots depict reported weekly numbers of cases and deaths; lines show model mean estimates (in the same color). (B) For validation, model estimated infection rates are compared to seroprevalence measures over time from multiple sero-surveys summarized in The South African COVID-19 Modelling Consortium, 2021. Boxplots depict the estimated distribution for each province (middle bar = mean; edges = 50% CrIs) and whiskers (95% CrIs), summarized over n=100 model-inference runs (500 model replica each, totaling 50,000 model realizations). Red dots show corresponding measurements. Note that reported mortality was high in February 2022 in some provinces (see additional discussion in Appendix 1).

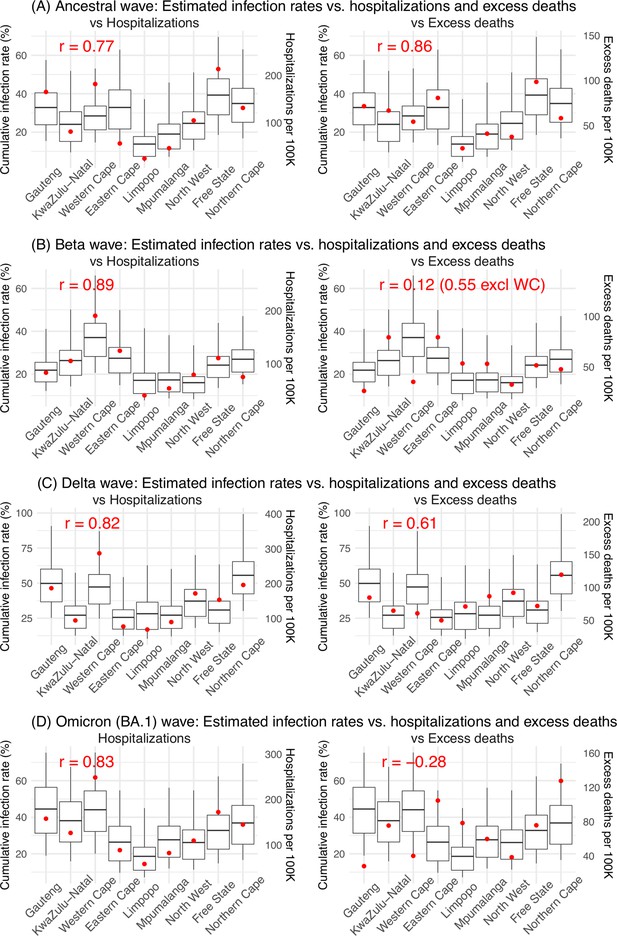

We then validated the model-inference estimates using three independent datasets. First, we used serology data. We note that early in the pandemic serology data may reflect underlying infection rates but later, due to waning antibody titers and reinfection, likely underestimate infection. Compared to seroprevalence measures taken at multiple time points in each province, our model estimated cumulative infection rates roughly match corresponding serology measures and trends over time; as expected, model estimates were higher than serology measures taken during later months (Figure 1B). Second, compared to hospital admission data, across the nine provinces, model estimated infection numbers were well correlated with numbers of hospitalizations for all four pandemic waves caused by the ancestral, Beta, Delta, and Omicron (BA.1) variants, respectively (r>0.75, Appendix 1—figure 2A–D). Third, model-estimated infection numbers were correlated with age-adjusted excess mortality for both the ancestral and Delta wave (r=0.86 and 0.61, respectively; Appendix 1—figure 2A and C). For the Beta wave, after excluding Western Cape, a province with a very high hospitalization rate but low excess mortality during this wave (Appendix 1—figure 2B), model-estimated infection numbers were also correlated with age-adjusted excess mortality for the remaining provinces (r=0.55; Appendix 1—figure 2B). For the Omicron (BA.1) wave, like many other places, due to prior infection and/or vaccination (Nyberg et al., 2022; Wolter et al., 2022), mortality rates decoupled from infection rates (Appendix 1—figure 2D). Overall, comparisons with the three independent datasets indicate our model-inference estimates align with underlying transmission dynamics.

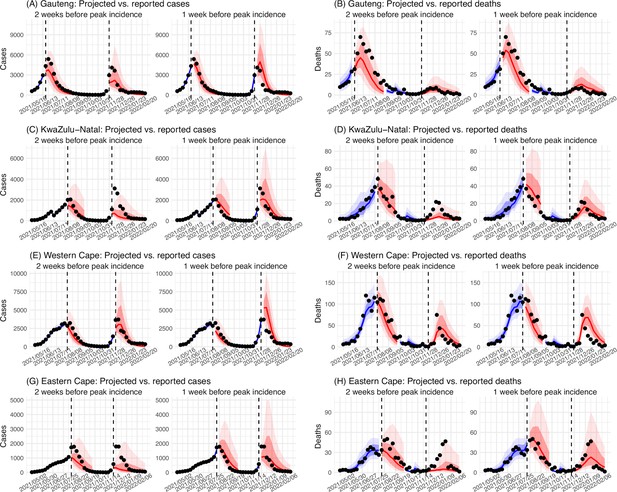

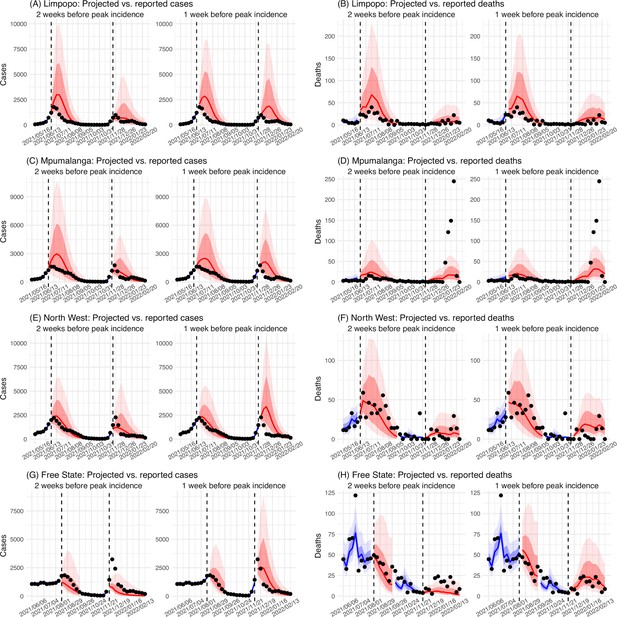

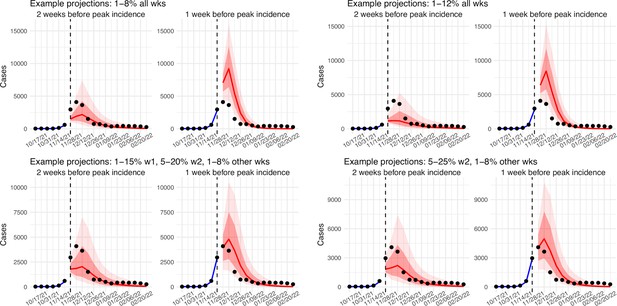

In addition, as a fourth model validation, we generated retrospective predictions of the Delta and Omicron (BA.1) waves at two key time points, that is 2 weeks and 1 week, separately, before the observed peak of cases (approximately 3–5 weeks before the observed peak of deaths; Figure 2). To accurately predict a pandemic wave caused by a new variant, the model-inference system needs to accurately estimate the background population characteristics (e.g. population susceptibility) before the emergence of the new variant, as well as changes in population susceptibility and transmissibility due to the new variant. This is particularly challenging for South Africa, as the pandemic waves there tended to progress quickly, with cases surging and peaking within 3–7 weeks before declining. As a result, often only 1–6 weeks of new variant data were available for model-inference before generating the prediction. Despite these challenges, 1–2 weeks before the case peak and 3–5 weeks before the observed death peak, the model was able to accurately predict the remaining trajectories of cases and deaths in most of the nine provinces for both the Delta and Omicron (BA.1) waves (Figure 2 for the four most populous provinces and Appendix 1—figure 3 for the remainder). These accurate model predictions further validate the model-inference estimates.

Model validation using retrospective prediction.

Model-inference was trained on cases and deaths data since March 15, 2020 until 2 weeks (1st plot in each panel) or 1 week (2nd plot) before the Delta or Omicron (BA.1) wave (see timing on the x-axis); the model was then integrated forward using the estimates made at the time to predict cases (left panel) and deaths (right panel) for the remaining weeks of each wave. Blue lines and surrounding shades show model fitted cases and deaths for weeks before the prediction (line = median, dark blue area = 50% CrIs, and light blue = 80% CrIs, summarized over n=100 model-inference runs totaling 50,000 model realizations). Red lines show model projected median weekly cases and deaths; surrounding shades show 50% (dark red) and 80% (light red) CIs of the prediction (n = 50,000 model realizations). For comparison, reported cases and deaths for each week are shown by the black dots; however, those to the right of the vertical dash lines (showing the start of each prediction) were not used in the model. For clarity, here we show 80% CIs (instead of 95% CIs, which tend to be wider for longer-term projections) and predictions for the four most populous provinces (Gauteng in A and B; KwaZulu-Natal in C and D; Western Cape in E and F; and Eastern Cape in G and H). Predictions for the other five provinces are shown in Appendix 1—figure 3.

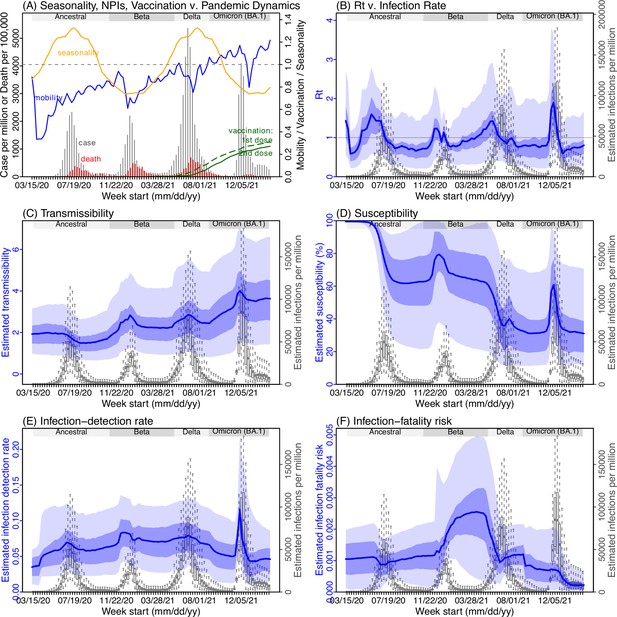

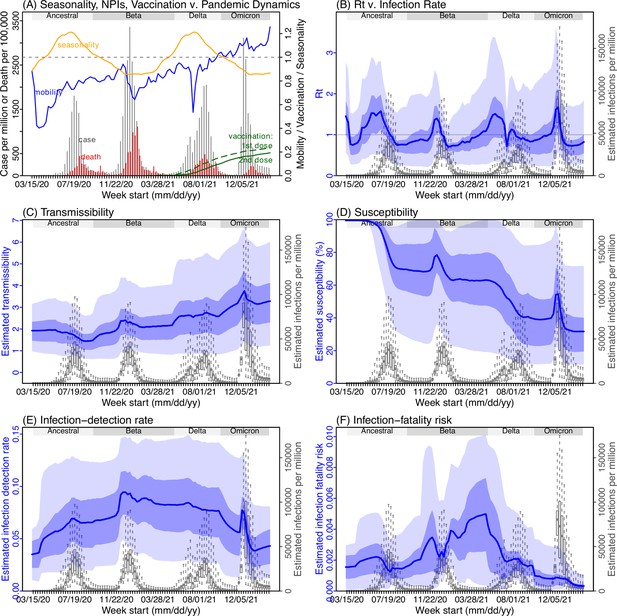

Pandemic dynamics and key model-inference, using Gauteng province as an example

Next, we use Gauteng, the province with the largest population, as an example to highlight pandemic dynamics in South Africa thus far and develop key model-inference estimates (Figure 3 for Gauteng and Appendix 1—figures 4–11 for each of the other eight provinces). Despite lower cases per capita than many other countries, infection numbers in South Africa were likely much higher due to under-detection. For Gauteng, the estimated infection-detection rate during the first pandemic wave was 4.59% (95% CI: 2.62–9.77%), and increased slightly to 6.18% (95% CI: 3.29–11.11%) and 6.27% (95% CI: 3.44–12.39%) during the Beta and Delta waves, respectively (Appendix 1—table 1). These estimates are in line with serology data. In particular, a population-level sero-survey in Gauteng found 68.4% seropositivity among those unvaccinated at the end of the Delta wave (Madhi et al., 2022). Combining the reported cases at that time (~6% of the population size) with undercounting of infections in sero-surveys due to sero-reversions and reinfections suggests that the overall detection rate would be less than 10%.

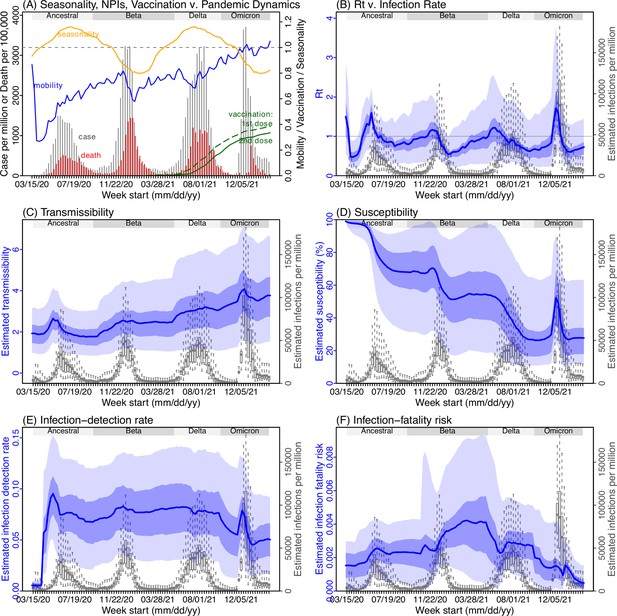

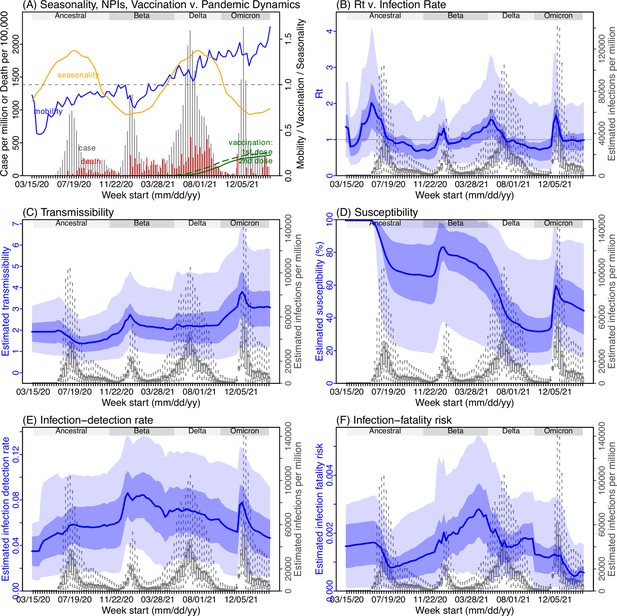

Example model-inference estimates for Gauteng.

(A) Observed relative mobility, vaccination rate, and estimated disease seasonal trend, compared to case and death rates over time. Key model-inference estimates are shown for the time-varying effective reproduction number Rt (B), transmissibility RTX (C), population susceptibility (D, shown relative to the population size in percentage), infection-detection rate (E), and infection-fatality risk (F). Grey shaded areas indicate the approximate circulation period for each variant. In (B) – (F), blue lines and surrounding areas show the estimated mean, 50% (dark) and 95% (light) CrIs; boxes and whiskers show the estimated mean, 50% and 95% CrIs for estimated infection rates. All summary statistics are computed based on n=100 model-inference runs totaling 50,000 model realizations. Note that the transmissibility estimates (RTX in C) have removed the effects of changing population susceptibility, NPIs, and disease seasonality; thus, the trends are more stable than the reproduction number (Rt in B) and reflect changes in variant-specific properties. Also note that infection-fatality risk estimates were based on reported COVID-19 deaths and may not reflect true values due to likely under-reporting of COVID-19 deaths.

Using our inferred under-detection (Figure 3E), we estimate that 32.83% (95% CI: 15.42–57.59%, Appendix 1—table 2) of the population in Gauteng were infected during the first wave, predominantly during winter when more conducive climate conditions and relaxed public health restrictions existed (see the estimated seasonal and mobility trends, Figure 3A). This high infection rate, while with uncertainty, is in line with serology measures taken in Gauteng at the end of the first wave (ranging from 15% to 27% among 6 sero-surveys during November 2020; Figure 1B) and a study showing 30% sero-positivity among participants enrolled in the Novavax NVX-CoV2373 vaccine phase 2a-b trial in South Africa during August – November 2020 (Shinde et al., 2021).

With the emergence of Beta, another 21.87% (95% CI: 12.16–41.13%) of the population in Gauteng – including reinfections – is estimated to have been infected, even though the Beta wave occurred during summer under less conducive climate conditions for transmission (Figure 3A). The model-inference system estimates a large increase in population susceptibility with the surge of Beta (Figure 3D; note population susceptibility is computed as S / N×100%, where S is the estimated number of susceptible people and N is population size). This dramatic increase in population susceptibility (vs. a likely more gradual change due to waning immunity), to the then predominant Beta variant, suggests Beta likely substantially eroded prior immunity and is consistent with laboratory studies showing low neutralizing ability of convalescent sera against Beta (Garcia-Beltran et al., 2021; Wall et al., 2021). In addition, an increase in transmissibility is also evident for Beta, after accounting for concurrent NPIs and infection seasonality (Figure 3C; note transmissibility is computed as the product of the estimated variant-specific transmission rate and the infectious period; see Materials and methods for detail). Notably, in contrast to the large fluctuation of the time-varying effective reproduction number over time (Rt, Figure 3B), the transmissibility estimates are more stable and reflect changes in variant-specific properties. Further, consistent with in-depth epidemiological findings (Abu-Raddad et al., 2021a), the estimated overall infection-fatality risk for Beta was about twice as high as the ancestral SARS-CoV-2 (0.19% [95% CI: 0.10–0.33%] vs. 0.09% [95% CI: 0.05–0.20%], Figure 3F and Appendix 1—table 3). Nonetheless, these estimates are based on documented COVID-19 deaths and are likely underestimates.

With the introduction of Delta, a third pandemic wave occurred in Gauteng during the 2021 winter. The model-inference system estimates a 49.82% (95% CI: 25.22–90.79%) attack rate by Delta, despite the large number of infections during the previous two waves. This large attack rate was possible due to the high transmissibility of Delta, as reported in multiple studies (Public Health England, 2021; Allen et al., 2022; Challen et al., 2021; Earnest et al., 2021; Vöhringer et al., 2021), the more conducive winter transmission conditions (Figure 3A), and the immune erosive properties of Delta relative to both the ancestral and Beta variants (Dhar et al., 2021; Liu et al., 2021; de Oliveira and Lessells, 2021).

Due to these large pandemic waves, prior to the detection of Omicron (BA.1) in Gauteng, estimated cumulative infection numbers surpassed the population size (Figure 4B), indicating the large majority of the population had been infected and some more than once. With the rise of Omicron (BA.1), the model-inference system estimates a very large increase in population susceptibility (Figure 3D), as well as an increase in transmissibility (Figure 3C); however, unlike previous waves, the Omicron (BA.1) wave progresses much more quickly, peaking 2–3 weeks after initiating marked exponential growth. These estimates suggest that several additional factors may have also contributed to the observed dynamics, including changes to the infection-detection rate (Figure 3E and Appendix 1), a summer seasonality increasingly suppressing transmission as the wave progressed (Figure 3A), as well as a slight change in population mobility suggesting potential behavior changes (Figure 3A). By the end of February 2022, the model-inference system estimates a 44.49% (95% CI: 19.01–75.30%) attack rate, with only 4.26% (95% CI: 2.46–9.72%) of infections detected as cases, during the Omicron (BA.1) wave in Gauteng. In addition, consistent with the reported 0.3 odds of severe disease compared to Delta infections (Wolter et al., 2022), estimated overall infection-fatality risk during the Omicron (BA.1) wave was about 30% of that during the Delta wave in Gauteng (0.03% [95% CI: 0.02–0.06%] vs. 0.11% [95% CI: 0.06–0.21%], based on documented COVID-19 deaths; Appendix 1—table 3).

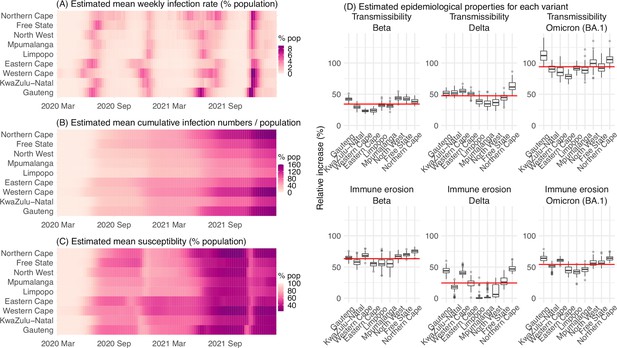

Model-inferred epidemiological properties for different variants across SA provinces.

Heatmaps show (A) Estimated mean infection rates by week (x-axis) and province (y-axis), (B) Estimated mean cumulative infection numbers relative to the population size in each province, and (C) Estimated population susceptibility (to the circulating variant) by week and province. (D) Boxplots in the top row show the estimated distribution of increases in transmissibility for Beta, Delta, and Omicron (BA.1), relative to the Ancestral SARS-CoV-2, for each province (middle bar = median; edges = 50% CIs; and whiskers = 95% CIs; summarized over n=100 model-inference runs); boxplots in the bottom row show, for each variant, the estimated distribution of immune erosion to all adaptive immunity gained from infection and vaccination prior to that variant. Red lines show the mean across all provinces.

Model inferred epidemiological characteristics across the nine provinces in South Africa

Across all nine provinces in South Africa, the pandemic timing and intensity varied (Figure 4A–C). In addition to Gauteng, high cumulative infection rates during the first three pandemic waves are also estimated for Western Cape and Northern Cape (Figure 1C–E, Figure 4B and Appendix 1—table 2). Overall, all nine provinces likely experienced three large pandemic waves prior to the growth of Omicron (BA.1); estimated average cumulative infections ranged from 60% of the population in Limpopo to 122% in Northern Cape (Figure 4B). Corroboration for these cumulative infection estimates is derived from mortality data. Excess mortality before the Omicron (BA.1) wave was as high as 0.47% of the South African population by the end of November 2021 (The South African Medical Research Council (SAMRC), 2021), despite the relatively young population (median age: 27.6 years (Anonymous, 2020b) vs. 38.5 years in the US [United States Census Bureau, 2020]) and thus lower expected infection-fatality risk (Levin et al., 2020; O’Driscoll et al., 2021). Assuming an infection-fatality risk of 0.5% (similar to estimates in COVID-19 Forecasting Team, 2022 for South Africa), these excess deaths would convert to a 94% infection rate.

We then use these model-inference estimates to quantify the immune erosion potential and increase in transmissibility for each VOC. Specifically, the immune erosion (against infection) potential is computed as the ratio of two quantities – the numerator is the increase of population susceptibility due to a given VOC and the denominator is population immunity (i.e. complement of population susceptibility) at wave onset. The relative increase in transmissibility is also computed as a ratio, that is, the average increase due to a given VOC relative to the ancestral SARS-CoV-2 (see Materials and methods). As population-specific factors contributing to transmissibility (e.g. population density and average contact rate) would be largely cancelled out in the latter ratio, we expect estimates of the VOC transmissibility increase to be generally applicable to different populations. However, prior exposures and vaccinations varied over time and across populations; thus, the level of immune erosion is necessarily estimated relative to the local population immune landscape at the time of the variant surge and should be interpreted accordingly. In addition, this assessment does not distinguish the sources of immunity or partial protection against severe disease; rather, it assesses the overall loss of immune protection against infection for a given VOC.

In the above context, we estimate that Beta eroded immunity among 63.4% (95% CI: 45.0–77.9%) of individuals with prior ancestral SARS-CoV-2 infection and was 34.3% (95% CI: 20.5–48.2%) more transmissible than the ancestral SARS-CoV-2. These estimates for Beta are consistent across the nine provinces (Figure 4D, 1st column and Table 1), as well as with our previous estimates using national data for South Africa (Yang and Shaman, 2021c). Additional support for the high immune erosion of Beta is evident from recoverees of ancestral SARS-CoV-2 infection who were enrolled in the Novavax NVX-CoV2373 vaccine phase 2a-b trial (Shinde et al., 2021) and found to have a similar likelihood of COVID-19, mostly due to Beta, compared to those seronegative at enrollment.

Estimated increases in transmissibility and immune erosion potential for Beta, Delta, and Omicron (BA.1).

The estimates are expressed in percentage for the median (and 95% CIs). Note that estimated increases in transmissibility for all three variants are relative to the ancestral strain, whereas estimated immune erosion is relative to the composite immunity combining all previous infections and vaccinations accumulated until the surge of the new variant. See main text and Methods for details.

| Province | Quantity | Beta | Delta | Omicron (BA.1) |

|---|---|---|---|---|

| All combined | % Increase in transmissibility | 34.3 (20.5, 48.2) | 47.5 (28.4, 69.4) | 94 (73.5, 121.5) |

| % Immune erosion | 63.4 (45, 77.9) | 24.5 (0, 53.2) | 54.1 (35.8, 70.1) | |

| Gauteng | % Increase in transmissibility | 42.2 (35.6, 48.3) | 51.8 (44.5, 58.7) | 112.6 (96.2, 131.8) |

| % Immune erosion | 65 (57, 72.2) | 44.3 (36.4, 54.9) | 64.1 (56, 74.2) | |

| KwaZulu-Natal | % Increase in transmissibility | 29.7 (22.9, 36.6) | 52.5 (44.8, 60.8) | 90.6 (77.9, 102.4) |

| % Immune erosion | 58.1 (48.3, 71.3) | 17.3 (1.4, 27.6) | 51.1 (39.3, 58.1) | |

| Western Cape | % Increase in transmissibility | 23.4 (20.2, 27.4) | 55.2 (48.2, 62.7) | 86.1 (72.6, 102.6) |

| % Immune erosion | 68.9 (62.5, 76.4) | 41.5 (35.6, 53.5) | 61 (55.5, 67.3) | |

| Eastern Cape | % Increase in transmissibility | 24.1 (18, 29.7) | 50.2 (40.5, 57.4) | 78.4 (67.6, 89.2) |

| % Immune erosion | 54.6 (45.1, 61.2) | 24.2 (15.4, 36.2) | 45.3 (34.5, 57.2) | |

| Limpopo | % Increase in transmissibility | 32.6 (24.9, 39.8) | 38.9 (31.5, 50.5) | 91.8 (82.6, 102.4) |

| % Immune erosion | 56.3 (38.4, 76.2) | 1.8 (0, 21.2) | 42.1 (33.2, 53.2) | |

| Mpumalanga | % Increase in transmissibility | 31.2 (25.4, 38.6) | 35.3 (24.9, 48.2) | 88.6 (72.8, 104.3) |

| % Immune erosion | 55.6 (39.8, 70) | 3.1 (0, 21.7) | 45.9 (37.7, 55.7) | |

| North West | % Increase in transmissibility | 43.8 (36.9, 52.1) | 36.8 (25.6, 47.5) | 100 (81.7, 121.1) |

| % Immune erosion | 67 (58.4, 75.4) | 12.4 (0.4, 30.5) | 56.6 (48.2, 68.8) | |

| Free State | % Increase in transmissibility | 42.7 (35, 49.8) | 43.8 (31.9, 52.1) | 92.2 (77.4, 106.9) |

| % Immune erosion | 70 (64.5, 76.2) | 27.7 (17.6, 41.6) | 57 (49.5, 66.6) | |

| Northern Cape | % Increase in transmissibility | 38.6 (32.6, 44.8) | 63.1 (50.4, 79.2) | 106 (94.7, 119.6) |

| % Immune erosion | 75 (67.4, 82) | 47.9 (40.5, 59.1) | 64 (57.3, 72.6) |

Estimates for Delta vary across the nine provinces (Figure 4D, 2nd column), given the more diverse population immune landscape among provinces after two pandemic waves. Overall, we estimate that Delta eroded 24.5% (95% CI: 0–53.2%) of prior immunity (gained from infection by ancestral SARS-CoV-2 and/or Beta, and/or vaccination) and was 47.5% (95% CI: 28.4–69.4%) more transmissible than the ancestral SARS-CoV-2. Consistent with this finding, and in particular the estimated immune erosion, studies have reported a 27.5% reinfection rate during the Delta pandemic wave in Delhi, India (Dhar et al., 2021) and reduced ability of sera from Beta-infection recoverees to neutralize Delta (Liu et al., 2021; de Oliveira and Lessells, 2021).

For Omicron (BA.1), estimates also vary by province but still consistently point to its higher transmissibility than all previous variants (Figure 4D, 3rd column). Overall, we estimate that Omicron (BA.1) is 94.0% (95% CI: 73.5–121.5%) more transmissible than the ancestral SARS-CoV-2. This estimated transmissibility is higher than Delta and consistent with in vitro and/or ex vivo studies showing Omicron (BA.1) replicates faster within host than Delta (Garcia-Beltran et al., 2022; Hui et al., 2022). In addition, we estimate that Omicron (BA.1) eroded 54.1% (95% CI: 35.8–70.1%) of immunity due to all prior infections and vaccination. Importantly, as noted above, the estimate for immune erosion is not directly comparable across variants, as it is relative to the combined population immunity accumulated until the rise of each variant. In the case of Beta, it is immunity accumulated from the first wave via infection by the ancestral SARS-CoV-2. In the case of Omicron (BA.1), it includes immunity from prior infection and re-infection of any of the previously circulating variants as well as vaccination. Thus, the estimate for Omicron (BA.1) may represent a far broader capacity for immune erosion than was evident for Beta. Supporting the suggestion of broad-spectrum immune erosion of Omicron (BA.1), studies have reported low neutralization ability of convalescent sera from infections by all previous variants (Rössler et al., 2022; Cele et al., 2022), as well as high attack rates among vaccinees in several Omicron (BA.1) outbreaks (Brandal et al., 2021; Helmsdal et al., 2022).

Discussion

Using a comprehensive model-inference system, we have reconstructed the pandemic dynamics in each of the nine provinces of South Africa. Uncertainties exist in our findings, due to incomplete and varying detection of SARS-CoV-2 infections and deaths, changing population behavior and public health interventions, and changing circulating variants. To address these uncertainties, we have validated our estimates using three datasets not used by our model-inference system (i.e. serology, hospitalization, and excess mortality data; Figure 1B and Appendix 1—figure 2) as well as retrospective prediction (Figure 2 and Appendix 1—figure 4). In addition, as detailed in the Results, we have showed that estimated underlying infection rates (Figure 1B and Appendix 1—figure 2) and key parameters (e.g. infection-detection rate and infection-fatality risk) are in line with other independent epidemiological data and investigations. The detailed, validated model-inference estimates thus allow quantification of both the immune erosion potential and transmissibility of three major SARS-CoV-2 VOCs, that is, Beta, Delta, and Omicron (BA.1).

The relevance of our model-inference estimates to previous studies has been presented in the Results section. Here, we make three additional general observations, drawn from global SARS-CoV-2 dynamics including but not limited to findings in South Africa. First, high prior immunity does not preclude new outbreaks, as neither infection nor current vaccination is sterilizing. As shown in South Africa, even with the high infection rate accumulated from preceding waves, new waves can occur with the emergence or introduction of new variants. Around half of South Africans are estimated to have been infected after the Beta wave (Appendix 1—table 2), yet the Delta variant caused a third large pandemic wave, followed by a fourth wave with comparable infection rates by Omicron BA.1 (Figure 4B, Appendix 1—table 2, and Appendix 1—table 4 for a preliminary assessment of reinfection rates).

Second, large numbers of hospitalizations and/or deaths can still occur in later waves with large infection surges, even though prior infection may provide partial protection and to some extent temper disease severity. This is evident from the large Delta wave in South Africa, which resulted in 0.2% excess mortality (vs. 0.08% during the first wave and 0.19% during the Beta wave [The South African Medical Research Council (SAMRC), 2021]). More recently, due to the Omicron BA.4/BA.5 subvariants that have been shown to evade prior immunity including from BA.1 infection (Cao et al., 2022; Khan et al., 2022), a fifth wave began in South Africa during May 2022, leading to increases in both cases and hospitalizations (Sarah et al., 2022). Together, the continued transmission and potential severe outcomes highlight the importance of continued preparedness and prompt public health actions as societies learn to live with SARS-CoV-2.

Third, multiple SARS-CoV-2 VOCs/VOIs have emerged in the two years since pandemic inception. It is challenging to predict the frequency and direction of future viral mutation, in particular, the level of immune erosion, changes in transmissibility, and innate severity. Nonetheless, given high exposure and vaccination in many populations, variants capable of eroding a wide spectrum of prior immunity (i.e. from infection by multiple preexisting variants and vaccination) would have a greater chance of causing new major outbreaks. Indeed, except for the Alpha variant, the other four important VOCs (i.e. Beta, Gamma, Delta, and Omicron) all produced some level of immune erosion. In addition, later VOCs, like Delta and Omicron, appear to have been more genetically distinct from previous variants (van der Straten et al., 2022). As a result, they are likely more capable of causing re-infection despite diverse prior exposures and in turn new pandemic waves. Given this pattern, to prepare for future antigenic changes from new variants, development of a universal vaccine that can effectively block SARS-CoV-2 infection in addition to preventing severe disease (e.g. shown in Mao et al., 2022) is urgently needed (Morens et al., 2022).

The COVID-19 pandemic has caused devastating public health and economic burdens worldwide. Yet SARS-CoV-2 will likely persist in the future. To mitigate its impact, proactive planning and preparedness is paramount.

Materials and methods

Data sources and processing

Request a detailed protocolWe used reported COVID-19 case and mortality data to capture transmission dynamics, weather data to estimate infection seasonality, mobility data to represent concurrent NPIs, and vaccination data to account for changes in population susceptibility due to vaccination in the model-inference system. Provincial level COVID-19 case, mortality, and vaccination data were sourced from the Coronavirus COVID-19 (2019-nCoV) Data Repository for South Africa (COVID19ZA)(Data Science for Social Impact Research Group at University of Pretoria, 2021). Hourly surface station temperature and relative humidity came from the Integrated Surface Dataset (ISD) maintained by the National Oceanic and Atmospheric Administration (NOAA) and are accessible using the ‘stationaRy’ R package (Iannone, 2020a; Iannone, 2020b). We computed specific humidity using temperature and relative humidity per the Clausius-Clapeyron Equation (Wallace and Hobbs, 2006). We then aggregated these data for all weather stations in each province with measurements since 2000 and calculated the average for each week of the year during 2000–2020.

Mobility data were derived from Google Community Mobility Reports (Google Inc, 2020); we aggregated all business-related categories (i.e. retail and recreational, transit stations, and workplaces) in all locations in each province to weekly intervals. For vaccination, provincial vaccination data from the COVID19ZA data repository recorded the total number of vaccine doses administered over time; to obtain a breakdown for numbers of partial (one dose of mRNA vaccine) and full vaccinations (one dose of Janssen vaccine or two doses of mRNA vaccine), separately, we used national vaccination data for South Africa from Our World in Data (Anonymous, 2020a; Mathieu et al., 2021) to apportion the doses each day. In addition, cumulative case data suggested 18,586 new cases on November 23, 2021, whereas the South Africa Department of Health reported 868 (Department of Health Republic of South Africa, 2021a). Thus, for November 23, 2021, we used linear interpolation to fill in estimates for each province on that day and then scaled the estimates such that they sum to 868.

Model-inference system

The model-inference system is based on our previous work estimating changes in transmissibility and immune erosion for SARS-CoV-2 VOCs including Alpha, Beta, Gamma, and Delta (Yang and Shaman, 2021c; Yang and Shaman, 2022). Below we describe each component.

Epidemic model

Request a detailed protocolThe epidemic model follows an SEIRSV (susceptible-exposed-infectious-recovered-susceptible-vaccination) construct per Equation 1:

where S, E, I, R are the number of susceptible, exposed (but not yet infectious), infectious, and recovered/immune/deceased individuals; N is the population size; and ε is the number of travel-imported infections. In addition, the model includes the following key components:

Virus-specific properties, including the time-varying variant-specific transmission rate , latency period Zt, infectious period Dt, and immunity period Lt. Of note, the immunity period Lt and the term R/Lt in Equation 1 are used to model the waning of immune protection against infection. Also note that all parameters are estimated for each week (t) as described below.

The impact of NPIs. Specifically, we use relative population mobility (see data above) to adjust the transmission rate via the term mt, as the overall impact of NPIs (e.g. reduction in the time-varying effective reproduction number Rt) has been reported to be highly correlated with population mobility during the COVID-19 pandemic.(Yang et al., 2021b; Lasry et al., 2020; Kraemer et al., 2020) To further account for potential changes in effectiveness, the model additionally includes a parameter, et, to scale NPI effectiveness.

The impact of vaccination, via the terms v1,t and v2,t. Specifically, v1,t is the number of individuals successfully immunized after the first dose of vaccine and is computed using vaccination data and vaccine effectiveness (VE) for 1st dose; and v2,t is the additional number of individuals successfully immunized after the second vaccine dose (i.e. excluding those successfully immunized after the first dose). In South Africa, around two-thirds of vaccines administered during our study period were the mRNA BioNTech/Pfizer vaccine and one-third the Janssen vaccine (Department of Health Republic of South Africa, 2021b). We thus set VE to 20%/85% (partial/full vaccination) for Beta, 35%/75% for Delta, and 10%/35% for Omicron (BA.1) based on reported VE estimates (Abu-Raddad et al., 2021b; Lopez Bernal et al., 2021; Andrews et al., 2021).

Infection seasonality, computed using temperature and specific humidity data as described previously (see supplemental material of Yang and Shaman, 2021c). Briefly, we estimated the relative seasonal trend (bt) using a model representing the dependency of the survival of respiratory viruses including SARS-CoV-2 to temperature and humidity (Biryukov et al., 2020; Morris et al., 2021), per

In essence, the seasonality function in Equation 2 assumes that humidity has a bimodal effect on seasonal risk of infection, with both low and high humidity conditions favoring transmission [i.e. the parabola in 1st set of brackets, where q(t) is weekly specific humidity measured by local weather stations]; and this effect is further modulated by temperature, with low temperatures promoting transmission and temperatures above a certain threshold limiting transmission [i.e. 2nd set of brackets, where T(t) is weekly temperature measured by local weather stations and Tc is the threshold]. As SARS-CoV-2 specific parameters (a, b, c, Tc, and Texp in Equation 2) are not available, to estimate its seasonality using Equation 2, as done in Yang and Shaman, 2021c, we use parameters estimated for influenza (Yuan et al., 2021) and scale the weekly outputs [i.e., ] by the annual mean (i.e. ) per Equation 3. In doing so, the scaled outputs (bt) are no longer specific to influenza; rather, they represent the relative, seasonality-related transmissibility by week, general to viruses sharing similar seasonal responses. As shown in Figure 2A, bt estimates over the year averaged to 1 such that weeks with bt >1 (e.g. during the winter) are more conducive to SARS-CoV-2 transmission, whereas weeks with bt <1 (e.g. during the summer) have less favorable climate conditions for transmission. The estimated relative seasonal trend, bt, is used to adjust the relative transmission rate at time t in Equation 1.

Observation model to account for under-detection and delay

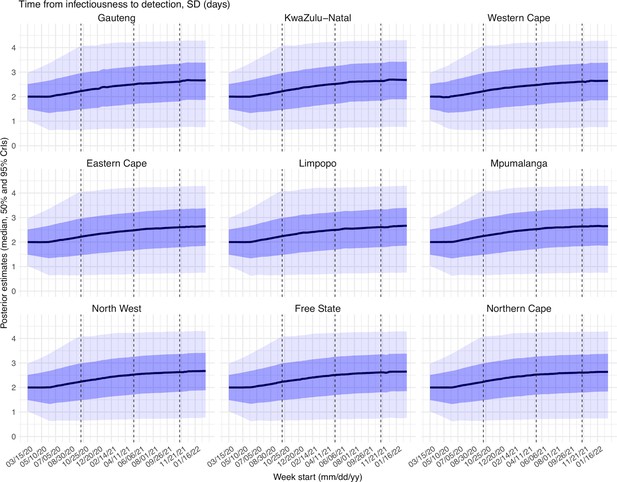

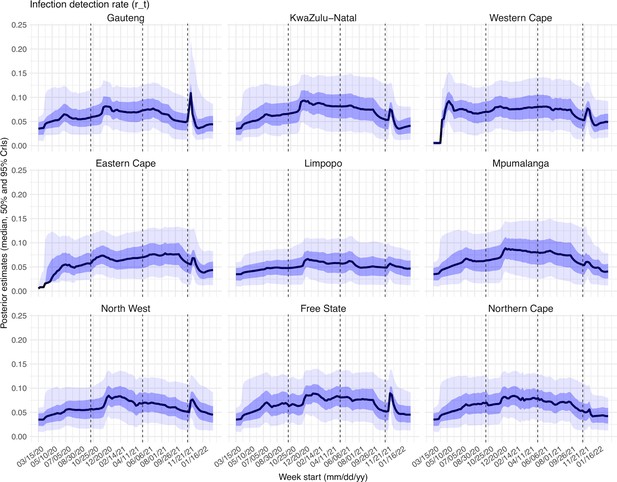

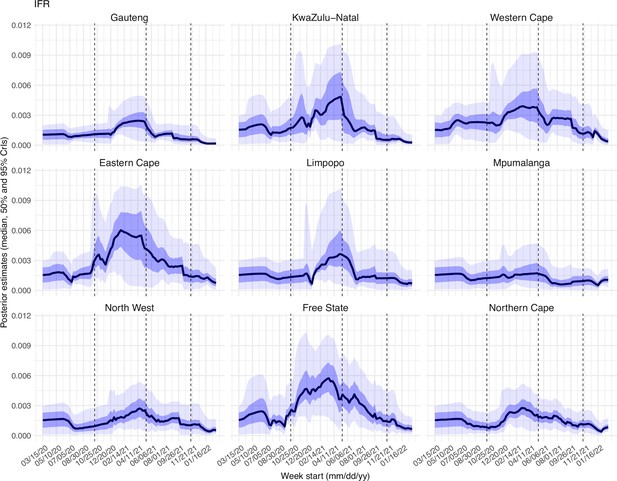

Request a detailed protocolUsing the model-simulated number of infections occurring each day, we further computed the number of cases and deaths each week to match with the observations, as done in Yang et al., 2021a. Briefly, we include (1) a time-lag from infectiousness to detection (i.e. an infection being diagnosed as a case), drawn from a gamma distribution with a mean of Td,mean days and a standard deviation of Td, sd days, to account for delays in detection (Appendix 1—table 5); (2) an infection-detection rate (rt), that is the fraction of infections (including subclinical or asymptomatic infections) reported as cases, to account for under-detection; (3) a time-lag from infectiousness to death, drawn from a gamma distribution with a mean of 13–15 days and a standard deviation of 10 days; and (4) an infection-fatality risk (IFRt). To compute the model-simulated number of new cases each week, we multiplied the model-simulated number of new infections per day by the infection-detection rate, and further distributed these simulated cases in time per the distribution of time-from-infectiousness-to-detection. Similarly, to compute the model-simulated deaths per week and account for delays in time to death, we multiplied the simulated-infections by the IFR and then distributed these simulated deaths in time per the distribution of time-from-infectious-to-death. We then aggregated these daily numbers to weekly totals to match with the weekly case and mortality data for model-inference. For each week, the infection-detection rate (rt), the infection-fatality risk (IFRt)., and the two time-to-detection parameters (Td, mean and Td, sd) were estimated along with other parameters (see below).

Model inference and parameter estimation

Request a detailed protocolThe inference system uses the ensemble adjustment Kalman filter (EAKF [Anderson, 2001]), a Bayesian statistical method, to estimate model state variables (i.e. S, E, I, R from Equation 1) and parameters (i.e. , Zt, Dt, Lt, et, from Equation 1 as well as rt, IFRt and other parameters from the observation model). Briefly, the EAKF uses an ensemble of model realizations (n=500 here), each with initial parameters and variables randomly drawn from a prior range (see Appendix 1—table 5). After model initialization, the system integrates the model ensemble forward in time for a week (per Equation 1) to compute the prior distribution for each model state variable and parameter, as well as the model-simulated number of cases and deaths for that week. The system then combines the prior estimates with the observed case and death data for the same week to compute the posterior per Bayes' theorem (Anderson, 2001). During this filtering process, the system updates the posterior distribution of all model variables and parameters for each week. For a further discussion on the filtering process and additional considerations, see the Appendix 1; diagnosis of model posterior estimates for all parameters are also included in the Appendix 1 and Appendix 1—figures 15–23.

Estimating changes in transmissibility and immune erosion for each variant

Request a detailed protocolAs in Yang and Shaman, 2021c, we computed the variant-specific transmissibility () as the product of the variant-specific transmission rate () and infectious period (Dt). Note that Rt, the time-varying effective reproduction number, is defined as To reduce uncertainty, we averaged transmissibility estimates over the period a particular variant of interest was predominant. To find these predominant periods, we first specified the approximate timing of each pandemic wave in each province based on: (1) when available, genomic surveillance data; specifically, the onsets of the Beta wave in Eastern Cape, Western Cape, KwaZulu-Natal, and Northern Cape, were separately based on the initial detection of Beta in these provinces as reported in Tegally et al., 2021; the onsets of the Delta wave in each of the nine provinces, separately, were based on genomic sequencing data from the Network for Genomic Surveillance South Africa (NGS-SA)(The National Institute for Communicable Diseases (NICD) of the National Health Laboratory (NHLS) on behalf of the Network for Genomics Surveillance in South Africa (NGS-SA), 2021); and (2) when genomic data were not available, we used the week with the lowest case number between two waves. The specified calendar periods are listed in Appendix 1—table 6. During later waves, multiple variants could initially co-circulate before one became predominant. As a result, the estimated transmissibility tended to increase before reaching a plateau (see, e.g. Figure 2C). In addition, in a previous study of the Delta pandemic wave in India (Yang and Shaman, 2022), we also observed that when many had been infected, transmissibility could decrease a couple months after the peak, likely due to increased reinfections for which onward transmission may be reduced. Thus, to obtain a more variant-specific estimate, we computed the average transmissibility () using the weekly RTX estimates over the 8-week period starting the week prior to the maximal Rtx during each wave; if no maximum existed (e.g. when a new variant is less transmissible), we simply averaged over the entire wave. We then computed the change in transmissibility due to a given variant relative to the ancestral SARS-CoV-2 as .

To quantify immune erosion, similar to Yang and Shaman, 2021c, we estimated changes in susceptibility over time and computed the change in immunity as ΔImm = St+1 – St +it, where St is the susceptibility at time-t and it is the new infections occurring during each week-t. We sum over all ΔImm estimates for a particular location, during each wave, to compute the total change in immunity due to a new variant, . Because filter adjustment could also slightly increase S, to avoid overestimation, here we only included substantial increases (i.e. ΔImm per week >0.5% of the total population) when computing changes due to a new variant. As such, we did not further account for smaller susceptibility increases due to waning immunity [for reference, for a population that is 50% immune and a 2-year mean immunity period, 0.5 / (52×2)×100% = 0.48% of the population would lose immunity during a week due to waning immunity]. We then computed the level of immune erosion as the ratio of to the model-estimated population immunity prior to the first detection of immune erosion, during each wave. That is, as opposed to having a common reference of prior immunity, here immune erosion for each variant depends on the state of the population immune landscape –that is, combining all prior exposures and vaccinations – immediately preceding the surge of that variant.

For all provinces, model-inference was initiated the week starting March 15, 2020 and run continuously until the week starting February 27, 2022. To account for model stochasticity, we repeated the model-inference process 100 times for each province, each with 500 model realizations and summarized the results from all 50,000 model estimates.

Model validation using independent data

Request a detailed protocolTo compare model estimates with independent observations not assimilated into the model-inference system, we utilized three relevant datasets:

Serological survey data measuring the prevalence of SARS-CoV-2 antibodies over time. Multiple serology surveys have been conducted in different provinces of South Africa. The South African COVID-19 Modelling Consortium summarizes the findings from several of these surveys (see Figure 1A of The South African COVID-19 Modelling Consortium, 2021). We digitized all data presented in Figure 1A of The South African COVID-19 Modelling Consortium, 2021 and compared these to corresponding model-estimated cumulative infection rates (computed mid-month for each corresponding month with a seroprevalence measure). Due to unknown survey methodologies and challenges adjusting for sero-reversion and reinfection, we used these data directly (i.e. without adjustment) for qualitative comparison.

COVID-19-related hospitalization data, from COVID19ZA (Data Science for Social Impact Research Group at University of Pretoria, 2021). We aggregated the total number of COVID-19 hospital admissions during each wave and compared these aggregates to model-estimated cumulative infection rates during the same wave. Of note, these hospitalization data were available from June 6, 2020 onwards and are thus incomplete for the first wave.

Age-adjusted excess mortality data from the South African Medical Research Council (SAMRC)(The South African Medical Research Council (SAMRC), 2021). Deaths due to COVID-19 (used in the model-inference system) are undercounted. Thus, we also compared model-estimated cumulative infection rates to age-adjusted excess mortality data during each wave. Of note, excess mortality data were available from May 3, 2020 onwards and are thus incomplete for the first wave.

Model validation using retrospective prediction

Request a detailed protocolAs a fourth model validation, we generated model predictions at 2 or 1 weeks before the week of highest cases for the Delta and Omicron (BA.1) waves, separately, and compared the predicted cases and deaths to reported data unknown to the model. Predicting the peak timing, intensity, and epidemic turnaround requires accurate estimation of model state variables and parameters that determine future epidemic trajectories. This is particularly challenging for South Africa as the pandemic waves tended to progress quickly such that cases surged to a peak in only 3–7 weeks. Thus, we chose to generate retrospective predictions 2 and 1 weeks before the peak of cases in order to leverage 1–6 weeks of new variant data for estimating epidemiological characteristics. Specifically, for each pandemic wave, we ran the model-inference system until 2 weeks (or 1 week) before the observed peak of cases, halted the inference, and used the population susceptibility and transmissibility of the circulating variant estimated at that time to predict cases and deaths for the remaining weeks (i.e. 10–14 weeks into the future). Because the infection detection rate and fatality risk are linked to observations of cases and deaths, changes of these quantities during the prediction period could obscure the underlying infection rate and accuracy of the prediction. Thus, for these two parameters specifically, we used model-inference estimates for corresponding weeks to allow comparison of model-predicted cases and deaths with the data while focusing on testing the accuracy of other key model estimates (e.g. transmissibility of the new variant). As for the model-inference, we repeated each prediction 100 times, each with 500 model realizations and summarized the results from all 50,000 ensemble members.

Data Availability

Request a detailed protocolAll data used in this study are publicly available as described in the “Data sources and processing” section.

Code availability

Request a detailed protocolAll source code and data necessary for the replication of our results and figures are publicly available at https://github.com/wan-yang/covid_SouthAfrica (copy archived at swh:1:rev:40c0e5ac5ab65005b600a4ca646fec04b0870b81) (Yang, 2022).

Appendix 1

Supplemental results and discussion

A brief note on reported COVID-19 mortality and model-inference strategy in this study

COVID-19 mortality data in some South African provinces appeared irregular with very high weekly death counts for some weeks even though cases in preceding weeks were low (see, e.g., COVID-19 related deaths in Mpumalanga and Northern Cape in Appendix 1—figure 1). A likely explanation is the audit and release of mortality data including deaths that occurred in previous time periods, which were not redistributed according to the actual time of death. Such instances have occurred in multiple countries (see, e.g., some of the documentations by Financial Times in ref (FT Visual & Data Journalism team, 2020), under the header “SOURCES”). Here, we could not adjust for this possibility due to a lack of information on these apparent data releases. Instead, to account for potential data errors, the ensemble adjustment Kalman filter (EAKF) algorithm (Anderson, 2001), used in the model-inference system, includes an estimate of observational error variance for computing the posterior estimates. In this study, the observational error variance was scaled to corresponding observations (thus, weeks with higher mortality would also have larger observational errors). In doing so, the EAKF reduces the weight of observations with larger observational errors (e.g., for weeks with very large death counts), which reduces their impact on the inference of model dynamics. As such, the posterior estimates for mortality tend to (intentionally) miss very high outlying data points (see Figure 1 and Appendix 1—figure 1). In addition, posterior estimates for the infection-fatality risk (IFR) are more stable over time, including for weeks with outlying death counts (see, e.g., Appendix 1—figure 23, IFR estimates for Mpumalanga).

In light of these COVID-19 related mortality data patterns, we computed the overall IFR during each pandemic wave using two methods. The first method computes the wave-specific IFR as the ratio of the total reported COVID-19 related deaths to the model-estimated cumulative infection rate during each wave. Because reported COVID-19 related mortality is used as the numerator, this method is more heavily affected by the aforementioned data irregularities. The second method computes the wave-specific IFR as a weighted average of the weekly IFR estimates during each wave, a measure for which both the numerator and denominator are model-inference derived; the weights are the estimated fraction of infections during each week. As shown in Appendix 1—table 3, for provinces with consistent case and mortality trends (e.g., Gauteng), the two methods generated similar IFR estimates. In contrast, for provinces with mortality trends inconsistent with case trends (e.g., Mpumalanga), the second method generated IFR estimates more comparable to other provinces than the first method.

Considerations in parameter prior choice and the EAKF inference algorithm

The model-inference system included 9 parameters, namely, the variant-specific transmission rate , latency period Zt, infectious period Dt, immunity period Lt, scaling factor of NPI effectiveness et, infection-detection rate rt, IFRt, and two parameters for the distribution of time from infectiousness to case detection (i.e., the mean and standard deviation, for a gamma distribution). The initial prior distributions were randomly drawn from uniform distributions with ranges listed in Appendix 1—table 5. For parameters with previous estimates from the literature (e.g., transmission rate β, incubation period Z, infectious period D, and immunity period L; see Appendix 1—table 5, column “Source/rationale”), we set the prior range accordingly. For parameters with high uncertainty and spatial variation (e.g., infection-detection rate), we preliminarily tested initial prior ranges by visualizing model prior and posterior estimates, using different ranges. For instance, for the infection-detection rate, when using a higher prior range (e.g., 5 –20% vs 1 –10%), the model prior tended to overestimate observed cases and underestimate deaths. Based on the initial testing, we then used a wide range able to reproduce the observed cases and deaths relatively well and then derived estimates of unobserved state variables and parameters.

Importantly, the EAKF used here is an iterative filtering algorithm. After initialization using the initial prior distributions, it iteratively incorporates additional observations at each time step (here, each week) to compute and update the model posterior (including all model state variables and parameters) using the model prior and the latest observations. For the model state variables, the prior is computed per the dynamic model (here, Equation 1); for the model parameters, the prior is the posterior from the last time step. As such, the influence of the initial prior range tends to be less pronounced compared to methods such as Markov Chain Monte Carlo (MCMC). In addition, to capture potential changes over time (e.g., likely increased detection for variants causing more severe disease), we applied space reprobing (SR) (Yang and Shaman, 2014), a technique that randomly replaces parameter values for a small fraction of the model ensemble, to explore a wider range of parameter possibilities (Appendix 1—table 5). Due to both the EAKF algorithm and space reprobing, the posterior parameter estimates can migrate outside the initial parameter ranges (e.g., for the transmission rate during the circulation of new variants).

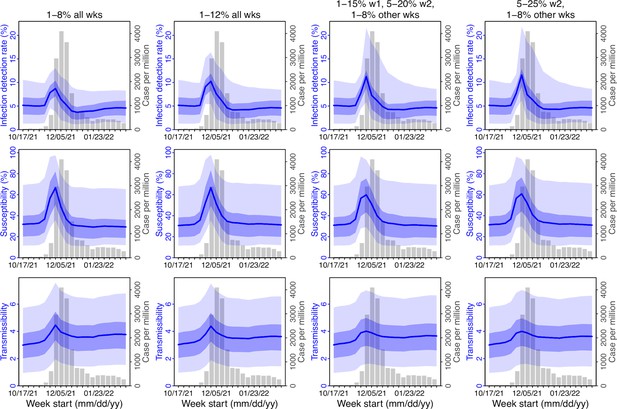

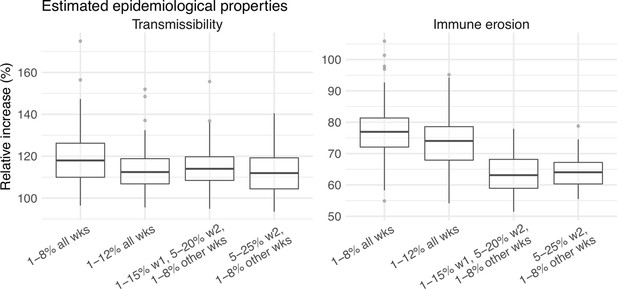

Testing of the infection-detection rate during the Omicron (BA.1) wave in Gauteng

A major challenge for this study is inferring the underlying transmission dynamics of the Omicron (BA.1) wave in Gauteng, where Omicron was initially detected and had the earliest case surge. In Gauteng, the number of cases during the first week of reported detection (i.e., the week starting 11/21/21) increased 4.4 times relative to the previous week; during the second week of report (i.e., the week starting 11/28/21) cases increased another 4.9 times. Yet after these two weeks of dramatic increases, cases peaked during the third week and started to decline afterwards. Initial testing suggested substantial changes in infection-detection rates during this time; in particular, detection could increase during the first two weeks due to awareness and concern for the novel Omicron variant and decline during later weeks due to constraints on testing capacity as well as subsequent reports of milder disease caused by Omicron. To more accurately estimate the infection-detection rate and underlying transmission dynamics, we ran and compared model-inference estimates using 4 settings for the infection-detection rate.

As noted above, with the model-EAKF filtering algorithm, parameter posterior is iteratively updated and becomes the prior at the next time step such that information from all previous time steps is sequentially incorporated. Given the sequential nature of the EAKF, rather than using a new prior distribution for the infection-detection rate, to explore new state space (here, potential changes in detection rate), we applied SR (Yang and Shaman, 2014), which randomly assigns the prior values of a small fraction of the model ensemble while preserving the majority that encodes prior information. In previous studies (Yang and Shaman, 2021c; Yang and Shaman, 2014), we have showed that the model ensemble posterior would remain similar if there is no substantial change in the system and more efficiently migrate towards new state space if there is a substantial change. Here, to explore potential changes in infection detection rates during the Omicron (BA.1) wave, we tested 4 SR settings for the infection-detection rate: (1) Use of the same baseline range as before (i.e., 1%–8%; uniform distribution, same for other ranges) for all weeks during the Omicron (BA.1) wave; (2) Use of a wider and higher range (i.e., 1%–12%) for all weeks; (3) Use of a range of 1%–15% for the 1st week of Omicron reporting (i.e., week starting 11/21/21), 5%–20% for the 2nd week of Omicron reporting (i.e., the week starting 11/28/21), and 1%–8% for the rest; and (4) Use of a range of 5%–25% for the 2nd week of reporting and 1%–8% for all others.

Estimated infection-detection rates in Gauteng increased substantially during the first two weeks of the Omicron (BA.1) wave and decreased afterwards under all four SR settings (Appendix 1—figure 12, 1st row). This consistency suggests a general trend in infection-detection rates at the time in accordance with the aforementioned potential changes in testing. Without using a higher SR range (e.g., 1%–8% and 1%–12% in columns 1–2 of Appendix 1—figure 12 vs 5%–20% and 5%–25% for week 2 in columns 3–4), the estimated increases in infection-detection rate were lower; instead, the model-inference system attributed the dramatic case increases in the first two weeks to higher increases in population susceptibility and transmissibility (Appendix 1—figure 12, 2nd and 3rd row, compare columns 1–2 vs. 3–4). However, the higher estimates for population susceptibility and transmissibility contradicted with the drastic decline in cases shortly afterwards such that the model-inference system readjusted the transmissibility to a lower level during later weeks (see the uptick in estimated transmissibility in Appendix 1—figure 12, 3rd row, first 2 columns). In contrast, when higher infection-detection rates were estimated for the first two weeks using the last two SR settings, the transmissibility estimates were more stable during later weeks (Appendix 1—figure 12, 3rd row, last 2 columns). In addition, model-inference using the latter two SR settings also generated more accurate retrospective predictions for the Omicron (BA.1) wave in Gauteng (Appendix 1—figure 13).

Given the above results, we used the 4th SR setting in the model-inference for Gauteng (i.e., replace a fraction of the infection detection rate using values randomly drawn from U[5%, 25%] for the week starting 11/28/21 and U[1%, 8%] for all other weeks during the Omicron wave). Reported cases in other provinces did not change as dramatically as in Gauteng; therefore, for those provinces, we used the baseline setting, i.e., values drawn from U[1%, 8%], for re-probing the infection-detection rate. Nonetheless, we note that the overall estimates for changes in transmissibility and immune erosion of Omicron (BA.1) were slightly higher under the first two SR settings but still consistent with the results presented in the main text (Appendix 1—figure 14).

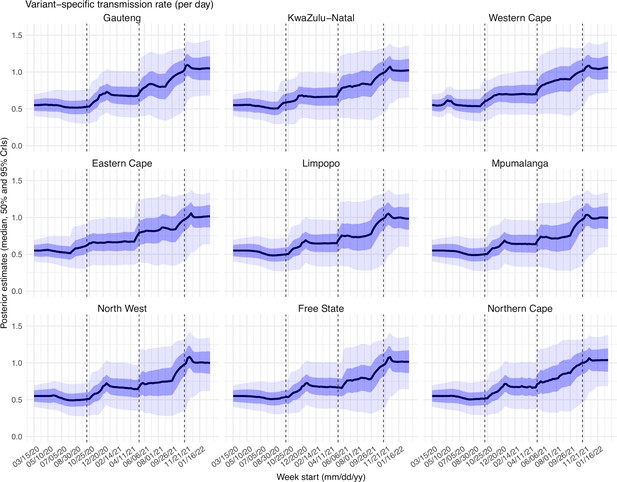

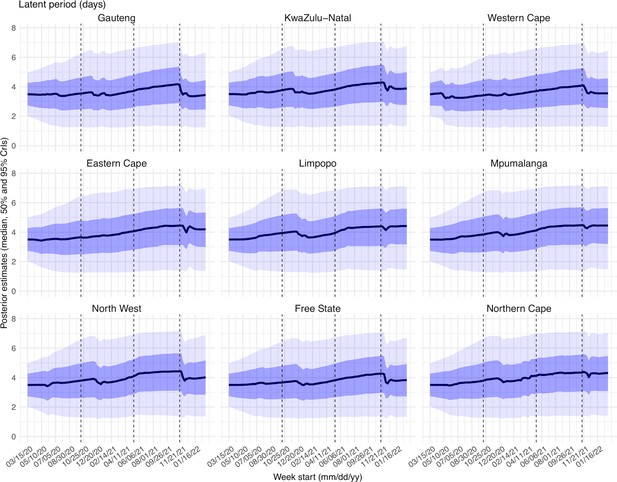

Examination of posterior estimates for all model parameters

To diagnose posterior estimates for each parameter, we plotted the posterior median, 50% and 95% credible intervals (CrIs) estimated for each week during the entire study period, for each of the nine provinces (Appendix 1—figure 15 – 23). As shown in Appendix 1—figure 15, the estimated transmission rate was relatively stable during the ancestral wave; it then increased along with the surge of the Beta variant around October 2020 and leveled off during the Beta wave. Similarly, following the initial surge of the Delta and Omicron variants, estimated transmission rates increased before leveling off when the new variant became predominant. Similar patterns are estimated for all provinces, indicating the model-inference system is able to capture the changes in transmission rate due to each new variant.

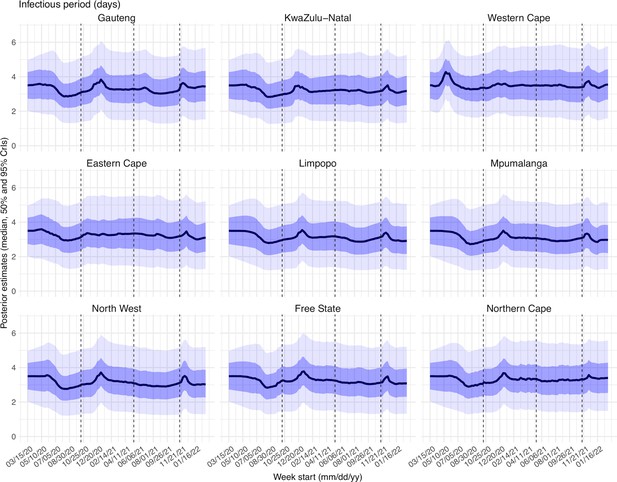

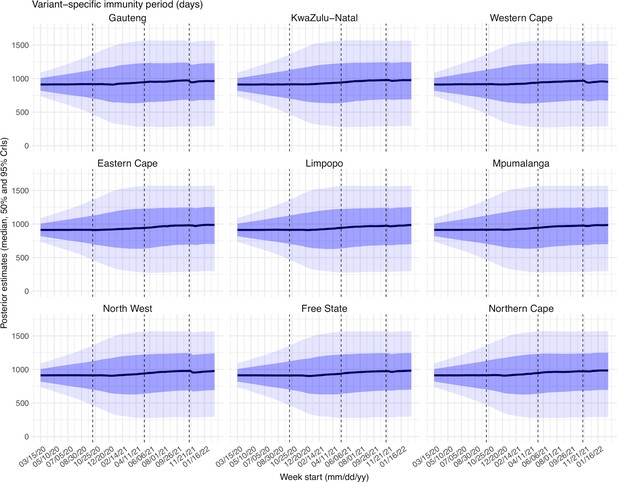

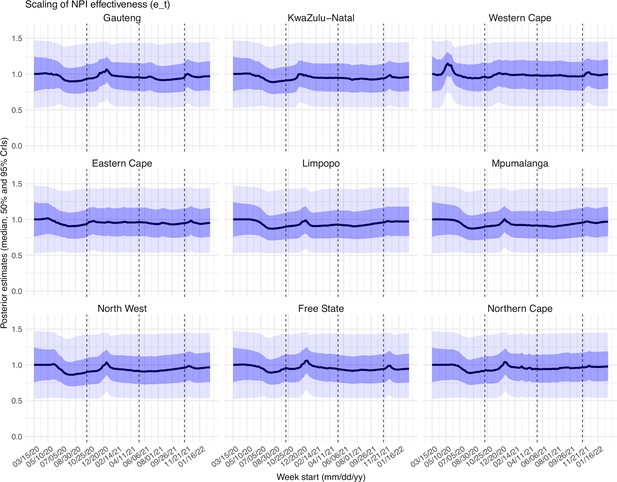

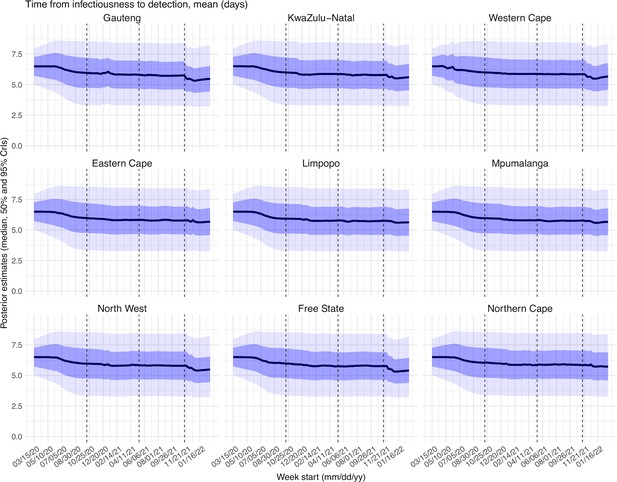

Estimated latent period (Appendix 1—figure 16), infectious period (Appendix 1—figure 17), immunity period (Appendix 1—figure 18), and the scaling factor of NPI effectiveness (Appendix 1—figure 19) all varied somewhat over time, but to a much less extent compared to the transmission rate. Estimated time from infectiousness to case detection decreased slightly over time, albeit with larger variations in later time periods (see Appendix 1—figure 20 for the mean and Appendix 1—figure 21 for the standard deviation). It is possible that the model-inference system could not adequately estimate the nuanced changes in these parameters using aggregated population level data.

Estimated infection-detection rates varied over time for all provinces (Appendix 1—figure 22). The infection-detection rate can be affected by (1) testing capacity, e.g., lower during the first weeks of the COVID-19 pandemic, and sometimes lower near the peak of a pandemic wave when maximal capacity was reached; (2) awareness of the virus, e.g., higher when a new variant was first reported and lower near the end of a wave; and (3) disease severity, e.g., higher when variants causing more severe disease were circulating. Overall, the estimates were consistent with these expected patterns.

Lastly, estimated IFRs also varied over time and across provinces (Appendix 1—figure 23). IFR can be affected by multiple factors, including infection demographics, innate severity of the circulating variant, quality and access to healthcare, and vaccination coverage. For infection demographics, IFR tended to be much lower in younger ages as reported by many (e.g., Levin et al., 2020). In South Africa, similar differences in infection demographics occurred across provinces. For instance, (Giandhari et al., 2021) noted a lower initial mortality in Gauteng, as earlier infections concentrated in younger and wealthier individuals. For the innate severity of the circulating variant, as noted in the main text, in general estimated IFRs were higher during the Beta and Delta waves than during the Omicron wave. In addition, as shown in Appendix 1—figure 23, estimated IFRs were substantially higher in four provinces (i.e., KwaZulu-Natal, Western Cape, Eastern Cape, and Free State) than other provinces during the Beta wave. Coincidentally, the earliest surges of the Beta variant occurred in three of those provinces (i.e., KwaZulu-Natal, Western Cape, Eastern Cape)(Tegally et al., 2021). Nonetheless, and as noted in the main text and the above subsection, the IFR estimates here should be interpreted with caution, due to the likely underreporting and irregularity of the COVID-19 mortality data used to generate these estimates.

A proposed approach to compute the reinfection rates using model-inference estimates

It is difficult to measure or estimate reinfection rate directly. In this study, we have estimated the immune erosion potential for three major SARS-CoV-2 variants of concern (VOCs) and the infection rates during each pandemic wave in South Africa. These estimates can be used to support estimation of the reinfection rate for a given population. In-depth analysis is needed for such estimations. Here, as an example, we propose a simple approach to illustrate the possibility.

Consider the estimation in the context of the four waves in South Africa in this study (i.e., ancestral, Beta, Delta, and Omicron BA.1 wave). Suppose the cumulative fraction of the population ever infected before the beta wave is (this is roughly the attack rate during the ancestral wave) and estimated immune erosion potential for Beta is . To compute the reinfection rate during the Beta wave, we can assume that are protected by this prior immunity, and that the remaining (i.e. those lost their immunity due to immune erosion) have the same risk of infection as those never infected, such that the reinfection rate/fraction among all infections, zbeta, during the Beta wave (i.e., zbeta is the attack rate by Beta) would be:

The reinfection rate/fraction among the entire population would be:

Combining the above, the cumulative fraction of the population ever infected by the end of the Beta wave and before the Delta wave would be:

Note that the fraction of the population ever infected, c, is updated to compute the subsequent fraction of the population protected by prior immunity, because the immune erosion potential here is estimated relative to the combined immunity accumulated until the rise of a new variant. We can repeat the above process for the Delta wave and the Omicron wave. See an example calculation in Appendix 1—table 4.

Work to refine the reinfection estimates (e.g., sensitivity of these estimates to assumptions and uncertainty intervals) is needed. Nonetheless, these example estimates (Appendix 1—table 4) are consistent with reported serology measures [4th column vs. e.g. ~90% seropositive in March 2022 after the Omicron BA.1 wave reported in Bingham et al., 2022] and reinfection rates reported elsewhere [5th and 6th columns vs. e.g., reported much higher reinfection rate during the Omicron wave in Pulliam et al., 2022]. Importantly, these estimates also show that, in addition to the innate immune erosive potential of a given new variant, the reinfection rate is also determined by the prior cumulative fraction of the population ever infected (4th column in Appendix 1—table 4) and the attack rate by each variant (3rd column in Appendix 1—table 4). That is, the higher the prior cumulative infection rate and/or the higher the attack rate by the new variant, the higher the reinfection rate would be for a new variant that can cause reinfection. For instance, despite the lower immune erosion potential of Delta than Beta, because of the high prior infection rate accumulated up to the Delta wave onset, the estimated reinfection rate by Delta among all Delta infections was higher compared to that during the Beta wave (6th column in Appendix 1—table 4). With the higher attack rate during the Delta wave, the reinfection rate among the entire population was much higher for Delta than Beta (5th column in Appendix 1—table 4). Thus, these preliminary results suggest that reinfection rates observed for each variant and differences across different variants should be interpreted in the context of the innate immune erosion potential of each variant, the prior cumulative infection rate of the study population, and the attack rate of each variant in the same population.

Model-fit to case and death data in each province.

Dots show reported SARS-CoV-2 cases and deaths by week. Blue lines and surrounding area show model estimated median, 50% (darker blue) and 95% (lighter blue) credible intervals. Note that reported mortality was high in February 2022 in some provinces with no clear explanation.

Model validation using hospitalization and excess mortality data.

Model estimated infection rates are compared to COVID-related hospitalizations (left panel) and excess mortality (right panel) during the Ancestral (A), Beta (B), Delta (C), and Omicron (D) waves. Boxplots show the estimated distribution for each province (middle bar = mean; edges = 50% CrIs and whiskers = 95% CrIs). Red dots show COVID-related hospitalizations (left panel, right y-axis) and excess mortality (right panel, right y-axis); these are independent measurements not used for model fitting. Correlation (r) is computed between model estimates (i.e., median cumulative infection rates for the nine provinces) and the independent measurements (i.e., hospitalizations in the nine provinces in left panel, and age-adjusted excess mortality in the right panel), for each wave. Note that hospitalization data begin from 6/6/20 and excess mortality data begin from 5/3/20 and thus are incomplete for the ancestral wave.

Model validation using retrospective prediction, for the remaining 5 provinces.

Model-inference was trained on cases and deaths data since March 15, 2020 until 2 weeks (1st plot in each panel) or 1 week (2nd plot) before the Delta or Omicron wave (see timing on the x-axis); the model was then integrated forward using the estimates made at the time to predict cases (left panel) and deaths (right panel) for the remaining weeks of each wave. Blue lines and surrounding shades show model fitted cases and deaths for weeks before the prediction (line = median, dark blue area = 50% CrIs, and light blue = 80% CrIs). Red lines show model projected median weekly cases and deaths; surrounding shades show 50% (dark red) and 80% (light red) CIs of the prediction. For comparison, reported cases and deaths for each week are shown by the black dots; however, those to the right of the vertical dash lines (showing the start of each prediction) were not used in the model. For clarity, here we show 80% CIs (instead of 95% CIs, which tend to be wider for longer-term projections) and predictions for the five least populous provinces (Limpopo in A and B; Mpumalanga in C and D; North West in E and F; Free State in G and H; and Northern Cape in I and J). Predictions for the other 4 provinces are shown in Figure 2.

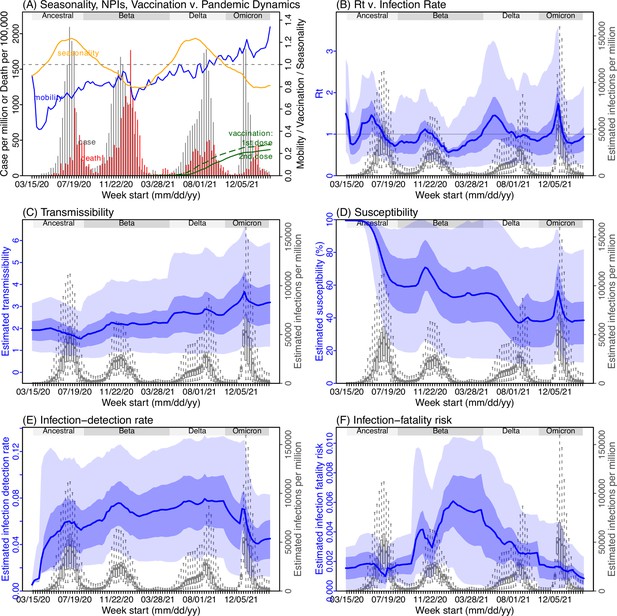

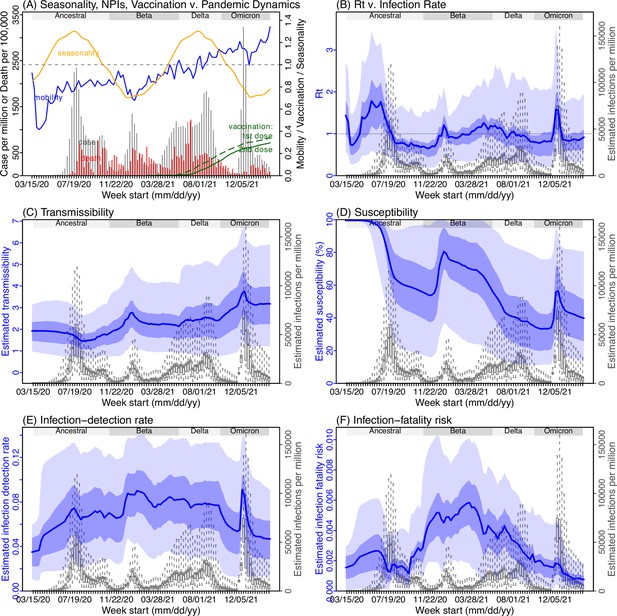

Model inference estimates for KwaZulu-Natal.

(A) Observed relative mobility, vaccination rate, and estimated disease seasonal trend, compared to case and death rates over time. Key model-inference estimates are shown for the time-varying effective reproduction number Rt (B), transmissibility RTX (C), population susceptibility (D, shown relative to the population size in percentage), infection-detection rate (E), and infection-fatality risk (F). Grey shaded areas indicate the approximate circulation period for each variant. In (B) – (F), blue lines and surrounding areas show the estimated mean, 50% (dark) and 95% (light) CrIs; boxes and whiskers show the estimated mean, 50% and 95% CrIs for estimated infection rates. Note that the transmissibility estimates (RTX in C) have removed the effects of changing population susceptibility, NPIs, and disease seasonality; thus, the trends are more stable than the reproduction number (Rt in B) and reflect changes in variant-specific properties. Also note that infection-fatality risk estimates were based on reported COVID-19 deaths and may not reflect true values due to likely under-reporting of COVID-19 deaths.

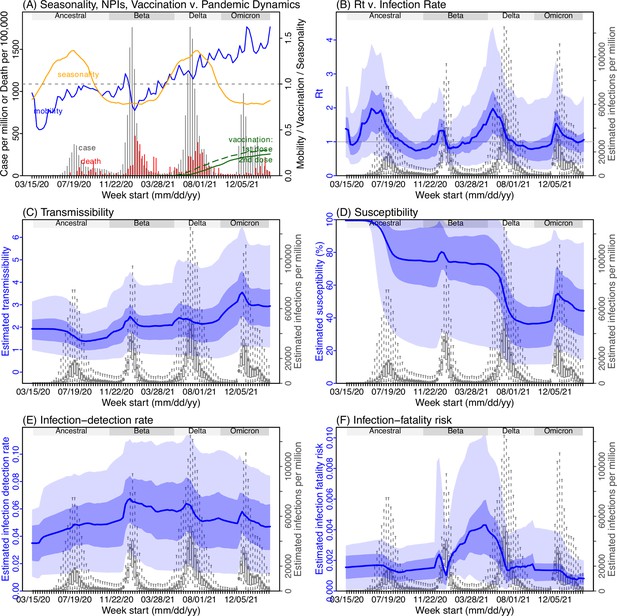

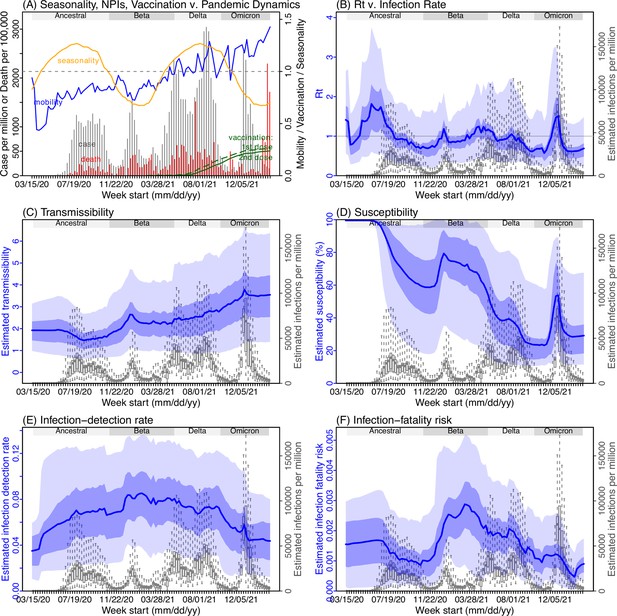

Model inference estimates for Western Cape.

(A) Observed relative mobility, vaccination rate, and estimated disease seasonal trend, compared to case and death rates over time. Key model-inference estimates are shown for the time-varying effective reproduction number Rt (B), transmissibility RTX (C), population susceptibility (D, shown relative to the population size in percentage), infection-detection rate (E), and infection-fatality risk (F). Grey shaded areas indicate the approximate circulation period for each variant. In (B) – (F), blue lines and surrounding areas show the estimated mean, 50% (dark) and 95% (light) CrIs; boxes and whiskers show the estimated mean, 50% and 95% CrIs for estimated infection rates. Note that the transmissibility estimates (RTX in C) have removed the effects of changing population susceptibility, NPIs, and disease seasonality; thus, the trends are more stable than the reproduction number (Rt in B) and reflect changes in variant-specific properties. Also note that infection-fatality risk estimates were based on reported COVID-19 deaths and may not reflect true values due to likely under-reporting of COVID-19 deaths.

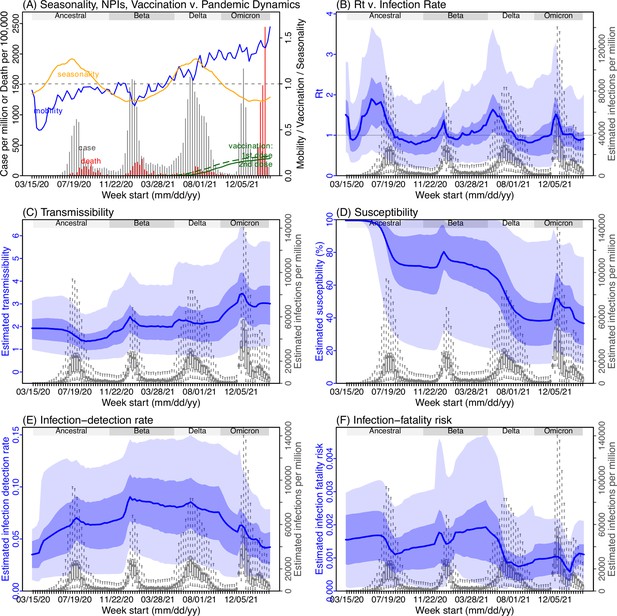

Model inference estimates for Eastern Cape.

(A) Observed relative mobility, vaccination rate, and estimated disease seasonal trend, compared to case and death rates over time. Key model-inference estimates are shown for the time-varying effective reproduction number Rt (B), transmissibility RTX (C), population susceptibility (D, shown relative to the population size in percentage), infection-detection rate (E), and infection-fatality risk (F). Grey shaded areas indicate the approximate circulation period for each variant. In (B) – (F), blue lines and surrounding areas show the estimated mean, 50% (dark) and 95% (light) CrIs; boxes and whiskers show the estimated mean, 50% and 95% CrIs for estimated infection rates. Note that the transmissibility estimates (RTX in C) have removed the effects of changing population susceptibility, NPIs, and disease seasonality; thus, the trends are more stable than the reproduction number (Rt in B) and reflect changes in variant-specific properties. Also note that infection-fatality risk estimates were based on reported COVID-19 deaths and may not reflect true values due to likely under-reporting of COVID-19 deaths.

Model inference estimates for Limpopo.

(A) Observed relative mobility, vaccination rate, and estimated disease seasonal trend, compared to case and death rates over time. Key model-inference estimates are shown for the time-varying effective reproduction number Rt (B), transmissibility RTX (C), population susceptibility (D, shown relative to the population size in percentage), infection-detection rate (E), and infection-fatality risk (F). Grey shaded areas indicate the approximate circulation period for each variant. In (B) – (F), blue lines and surrounding areas show the estimated mean, 50% (dark) and 95% (light) CrIs; boxes and whiskers show the estimated mean, 50% and 95% CrIs for estimated infection rates. Note that the transmissibility estimates (RTX in C) have removed the effects of changing population susceptibility, NPIs, and disease seasonality; thus, the trends are more stable than the reproduction number (Rt in B) and reflect changes in variant-specific properties. Also note that infection-fatality risk estimates were based on reported COVID-19 deaths and may not reflect true values due to likely under-reporting of COVID-19 deaths.

Model inference estimates for Mpumalanga.

(A) Observed relative mobility, vaccination rate, and estimated disease seasonal trend, compared to case and death rates over time. Key model-inference estimates are shown for the time-varying effective reproduction number Rt (B), transmissibility RTX (C), population susceptibility (D, shown relative to the population size in percentage), infection-detection rate (E), and infection-fatality risk (F). Grey shaded areas indicate the approximate circulation period for each variant. In (B) – (F), blue lines and surrounding areas show the estimated mean, 50% (dark) and 95% (light) CrIs; boxes and whiskers show the estimated mean, 50% and 95% CrIs for estimated infection rates. Note that the transmissibility estimates (RTX in C) have removed the effects of changing population susceptibility, NPIs, and disease seasonality; thus, the trends are more stable than the reproduction number (Rt in B) and reflect changes in variant-specific properties. Also note that infection-fatality risk estimates were based on reported COVID-19 deaths and may not reflect true values due to likely under-reporting of COVID-19 deaths.

Model inference estimates for North West.

(A) Observed relative mobility, vaccination rate, and estimated disease seasonal trend, compared to case and death rates over time. Key model-inference estimates are shown for the time-varying effective reproduction number Rt (B), transmissibility RTX (C), population susceptibility (D, shown relative to the population size in percentage), infection-detection rate (E), and infection-fatality risk (F). Grey shaded areas indicate the approximate circulation period for each variant. In (B) – (F), blue lines and surrounding areas show the estimated mean, 50% (dark) and 95% (light) CrIs; boxes and whiskers show the estimated mean, 50% and 95% CrIs for estimated infection rates. Note that the transmissibility estimates (RTX in C) have removed the effects of changing population susceptibility, NPIs, and disease seasonality; thus, the trends are more stable than the reproduction number (Rt in B) and reflect changes in variant-specific properties. Also note that infection-fatality risk estimates were based on reported COVID-19 deaths and may not reflect true values due to likely under-reporting of COVID-19 deaths.

Model inference estimates for Free State.

(A) Observed relative mobility, vaccination rate, and estimated disease seasonal trend, compared to case and death rates over time. Key model-inference estimates are shown for the time-varying effective reproduction number Rt (B), transmissibility RTX (C), population susceptibility (D, shown relative to the population size in percentage), infection-detection rate (E), and infection-fatality risk (F). Grey shaded areas indicate the approximate circulation period for each variant. In (B) – (F), blue lines and surrounding areas show the estimated mean, 50% (dark) and 95% (light) CrIs; boxes and whiskers show the estimated mean, 50% and 95% CrIs for estimated infection rates. Note that the transmissibility estimates (RTX in C) have removed the effects of changing population susceptibility, NPIs, and disease seasonality; thus, the trends are more stable than the reproduction number (Rt in B) and reflect changes in variant-specific properties. Also note that infection-fatality risk estimates were based on reported COVID-19 deaths and may not reflect true values due to likely under-reporting of COVID-19 deaths.

Model inference estimates for Northern Cape.