Signatures of Bayesian inference emerge from energy-efficient synapses

eLife assessment

This important study provides deep insight into a ubiquitous, but poorly understood, phenomenon: synaptic noise (primarily due to failures). Through a combination of theoretical analysis, simulations, and comparison to existing experimental data, this paper makes a compelling case that synapses are noisy because reducing noise is expensive. It touches on probably the most significant feature of living organisms -- their ability to learn -- and will be of broad interest to the neuroscience community.

https://doi.org/10.7554/eLife.92595.3.sa0Important: Findings that have theoretical or practical implications beyond a single subfield

- Landmark

- Fundamental

- Important

- Valuable

- Useful

Compelling: Evidence that features methods, data and analyses more rigorous than the current state-of-the-art

- Exceptional

- Compelling

- Convincing

- Solid

- Incomplete

- Inadequate

During the peer-review process the editor and reviewers write an eLife Assessment that summarises the significance of the findings reported in the article (on a scale ranging from landmark to useful) and the strength of the evidence (on a scale ranging from exceptional to inadequate). Learn more about eLife Assessments

Abstract

Biological synaptic transmission is unreliable, and this unreliability likely degrades neural circuit performance. While there are biophysical mechanisms that can increase reliability, for instance by increasing vesicle release probability, these mechanisms cost energy. We examined four such mechanisms along with the associated scaling of the energetic costs. We then embedded these energetic costs for reliability in artificial neural networks (ANNs) with trainable stochastic synapses, and trained these networks on standard image classification tasks. The resulting networks revealed a tradeoff between circuit performance and the energetic cost of synaptic reliability. Additionally, the optimised networks exhibited two testable predictions consistent with pre-existing experimental data. Specifically, synapses with lower variability tended to have (1) higher input firing rates and (2) lower learning rates. Surprisingly, these predictions also arise when synapse statistics are inferred through Bayesian inference. Indeed, we were able to find a formal, theoretical link between the performance-reliability cost tradeoff and Bayesian inference. This connection suggests two incompatible possibilities: evolution may have chanced upon a scheme for implementing Bayesian inference by optimising energy efficiency, or alternatively, energy-efficient synapses may display signatures of Bayesian inference without actually using Bayes to reason about uncertainty.

Introduction

The synapse is the major site of inter-cellular communication in the brain. The amplitude of synaptic postsynaptic potentials (PSPs) are usually highly variable or stochastic. This variability arises primarily presynaptically: the release of neurotransmitter from presynaptically housed vesicles into the synaptic cleft has variable release probabilities and variable quantal sizes (Lisman and Harris, 1993; Branco and Staras, 2009; Brock et al., 2020). Unreliable synaptic transmission seems puzzling, especially in light of evidence for low-noise, almost failure-free transmission at some synapses (Paulsen and Heggelund, 1994; Paulsen and Heggelund, 1996; Bellingham et al., 1998). Moreover, the degree to which a synapse is unreliable does not just vary from one synapse type to another, there is also an heterogeneity of precision amongst synapses of the same type (Murthy et al., 1997; Dobrunz and Stevens, 1997). Given that there is capacity for more precise transmission, why is this capacity not used in more synapses?

Unreliable transmission degrades accuracy but Laughlin et al., 1998, showed that the synaptic connection from a photoreceptor to a retinal large monopolar cell could increase its precision by increasing the number of synapses, averaging the noise away, but this comes at the cost of extra energy per bit of information transmitted. Moreover, Levy and Baxter, 2002, demonstrated that there is a value for the precision which optimises the energy cost of information transmission. In this paper, we explore this notion of a performance-energy tradeoff.

However, it is important to consider precision and energy cost in the context of neuronal computation; the brain does not simply transfer information from neuron to neuron, it performs computation through the interaction between neurons. However, models outlining a synaptic energy-performance tradeoff, (Laughlin et al., 1998; Levy and Baxter, 2002; Goldman, 2004; Harris et al., 2012; Harris et al., 2019; Karbowski, 2019), predominantly consider information transmission between just two neurons and the corresponding information-theoretic view treats the synapse as an isolated conduit of information (Shannon, 1948). In contrast, in reality, a single synapse is just one unit of the computational machinery of the brain. As such, the performance of an individual synapse needs to be considered in the context of circuit performance. To perform computation in an energy-efficient way the circuit as a whole needs to allocate resources across different synapses to optimise the overall energy cost of computation (Yu et al., 2016; Schug et al., 2021).

Here, we consider the consequences of a tradeoff between network performance and energetic reliability costs that depend explicitly upon synapse precision. We estimate the energy costs associated with precision by considering the biological mechanisms underpinning synaptic transmission. By including these costs in a neural network designed to perform a classification task, we observe a heterogeneity in synaptic precision and find that this ‘allocation’ of precision is related to signatures of synapse ‘importance’, which can be understood formally on the grounds of Bayesian inference.

Results

We proposed energetic costs for reliable synaptic transmission and then measured their consequences in an artificial neural network (ANN).

Biophysical costs

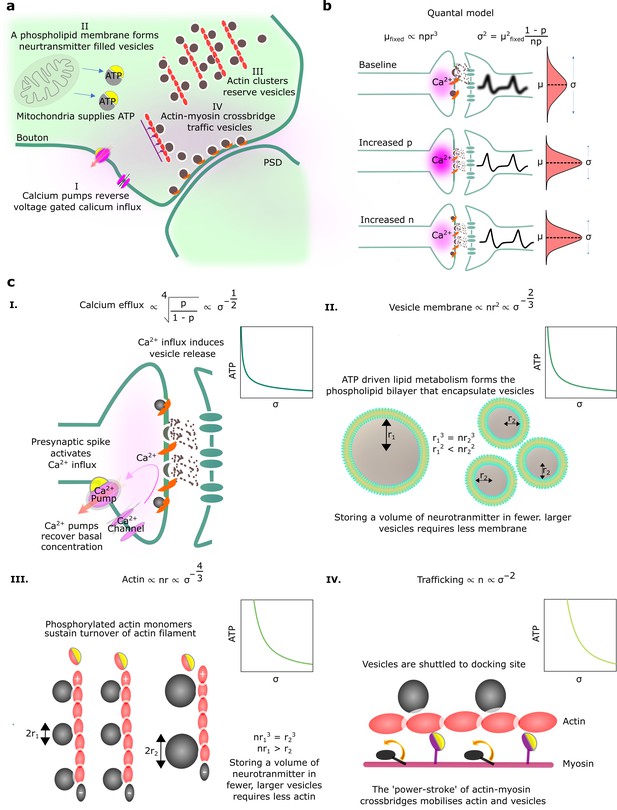

Here, we seek to understand the biophysical energetic costs of synaptic transmission, and how those costs relate to the reliability of transmission (Figure 1a). We start by considering the underlying mechanisms of synaptic transmission. In particular, synaptic transmission begins with the arrival of a spike at the axon terminal. This triggers a large influx of calcium ions into the axon terminal. The increase in calcium concentration causes the release of neurotransmitter-filled vesicles docked at axonal release sites. The neurotransmitter diffuses across the synaptic cleft to the postsynaptic dendritic membrane. There, the neurotransmitter binds with ligand-gated ion channels causing a change in voltage, i.e., a PSP. This process is often quantified using the Katz and Miledi, 1965, quantal model of neurotransmitter release. Under this model, for each connection between two cells, there are docked, readily releasable vesicles (see Figure 1a for an illustration of a single synaptic connection with multi-vesicular release).

Physiological reliability costs.

(a) Physiological processes that influence postsynaptic potential (PSP) precision. (b) A binomial model of vesicle release. For fixed PSP mean, increasing or decreases PSP variance. We have substituted to reflect that vesicle volume scales quantal size (Karunanithi et al., 2002). (c) Four different biophysical costs of reliable synaptic transmission. (I) Calcium pumps reverse the calcium influx that triggers vesicle release. A high probability of vesicle release requires a large influx of calcium, and extruding this calcium is costly. Note that represents the vesicle radius. (II) An equivalent volume of neurotransmitter can be stored in few large vesicles or shared between many smaller vesicles. Sharing a fixed volume of neurotransmitter among many small vesicles reduces PSP variability but increases vesicle surface area, creating greater demand for phospholipid metabolism and hence greater energetic costs. (III) Actin filament supports the structure of vesicle clusters at the terminal. Many and large vesicles require more actin and higher rates of ATP-dependent actin turnover. (IV) There are biophysical costs that scale with the number of vesicles (Laughlin et al., 1998; Attwell and Laughlin, 2001), e.g., vesicle trafficking driven by myosin-V active transport along actin filaments.

An alternative interpretation of this model might consider the number of uni-vesicular connections between two neurons. When the presynaptic cell spikes, each docked vesicle releases with probability and each released vesicle causes a PSP of size . Thus, the mean, μ, and variance, , of the PSP can be written (see Figure 1b),

where is considered a scaling variable. An assertion in our model is that variability in PSP strength is the result of variable numbers of vesicle release, not variability in ; here, during any PSP, is assumed constant across vesicles. While there is some suggestion that intra- and inter-site variability in is a significant component of PSP variability (see Silver, 2003), we ultimately expect quantal variability to be small relative to the variability attributed to vesicular release. This is supported by the classic observation that PSP amplitude histograms have a multi-peak structure (Boyd and Martin, 1956; Holler et al., 2021), and by more direct measurement and modelling of vesicle release (Forti et al., 1997; Raghavachari and Lisman, 2004).

We considered four biophysical costs associated with improving the reliability of synaptic transmission, while keeping the mean fixed, and derived the associated scaling of the energetic cost with PSP variance.

Calcium efflux. Reliability is higher when the probability of vesicle release, , is higher. As vesicle release is triggered by an increase in intracellular calcium, greater calcium concentration implies higher release probability. However, increased calcium concentration implies higher energetic costs. In particular, calcium that enters the synaptic bouton will subsequently need to be pumped out. We take the cost of pumping out calcium ions to be proportional to the calcium concentration, and take the relationship between release probability and calcium concentration to be governed by a Hill Equation, following Sakaba and Neher, 2001. The resulting relationship between energetic costs and reliability is (Figure 1cI; see Appendix 1, ‘Reliability costs’ for further details).

Vesicle membrane surface area. There may also be energetic costs associated with producing and maintaining a large amount of vesicle membrane. Purdon et al., 2002, argues that phospholipid metabolism may take a considerable proportion of the brain’s energy budget. Additionally, costs associated with membrane surface area may arise because of leakage of hydrogen ions across vesicles (Pulido and Ryan, 2021). Importantly, a cost for vesicle surface area is implicitly a cost on reliability. In particular, we could obtain highly reliable synaptic release by releasing many small vesicles, such that stochasticity in individual vesicle release events averages out. However, the resulting many small vesicles have a far larger surface area than a single large vesicle, with the same mean PSP. Thus, a cost on surface area implies a relationship between energetic costs and reliability; in particular (Figure 1cII; see Appendix 1, ‘Reliability costs’ for further details).

Actin. Another cost for small but numerous vesicles arises from a demand for structural organisation of the vesicles pool by filaments such as actin (Cingolani and Goda, 2008; Gentile et al., 2022). Critically, there are physical limits to the number of vesicles that can be attached to an actin filament of a given length. In particular, if vesicles are smaller we can attach more vesicles to a given length of actin, but at the same time, the total vesicle volume (and hence the total quantity of neurotransmitter) will be smaller (Figure 1cIII). A fixed cost per unit length of actin thus implies a relationship between energetic costs and reliability of, (see Appendix 1, ‘Reliability costs’).

Trafficking. A final class of costs is proportional to the number of vesicles (Laughlin et al., 1998). One potential biophysical mechanism by which such a cost might emerge is from active transport of vesicles along actin filaments or microtubles to release sites (Chenouard et al., 2020). In particular, vesicles are transported by ATP-dependent myosin-V motors (Bridgman, 1999), so more vesicles require a greater energetic cost for trafficking. Any such cost proportional to the number of vesicles gives rise to a relationship between energetic cost and PSP variance of the form, (Figure 1cIV; see Appendix 1, ‘Reliability costs’).

Costs related to PSP mean/magnitude. While costs on precision are the central focus of this paper, it is certainly the case that other costs relating to the mean PSP magnitude constitute a major cost of synaptic transmission. For example, high amplitude PSPs require a large quantity of neurotransmitter, high probability of vesicle release, and a large number of postsynaptic receptors (Attwell and Laughlin, 2001). These can be formalised as costs on the PSP mean, μ , and can additionally be related to L1 weight decay in a machine learning context (Rosset and Zhu, 2006; Sacramento et al., 2015).

Reliability costs in ANNs

Next, we sought to understand how these biophysical energetic costs of reliability might give rise to patterns of variability in a trained neural network. Specifically, we trained ANNs using an objective that embodied a tradeoff between performance and reliability costs,

The ‘performance cost’ term measures the network’s performance on the task, for instance in our classification tasks we used the usual cross-entropy cost. The ‘magnitude cost’ term captures costs that depend on the PSP mean, while the ‘reliability cost’ term captures costs that depend on the PSP precision. In particular,

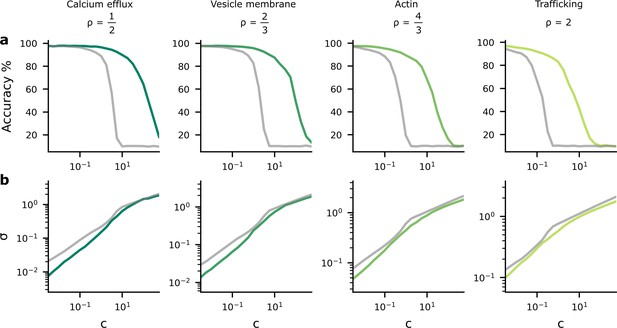

Here, indexes synapses, and recall that σi is the standard deviation of the ith synapse. The multiplier in the reliability cost determines the strength of the reliability cost relative to the performance cost. Small values for imply that the reliability cost term is less important, permitting precise transmission and higher performance. Large values for give greater importance to the reliability cost encouraging energy efficiency by allowing higher levels of synaptic noise, causing detriment to performance (see Figure 2).

Accuracy and postsynaptic potential (PSP) variance as we change the tradeoff between reliability and performance costs.

We changed the tradeoff by modifying , in Equation 4, which multiplies the reliability cost. (a) As the reliability cost multiplier, , increases, the accuracy decreases considerably. The green lines show the heterogeneous noise setting where the noise level is optimised on a per-synapse basis, while the grey lines show the homogeneous noise setting, where the noise is optimised, but forced to be the same for all synapses. (b) When the reliability cost multiplier, , increases, the synaptic noise level (specifically, the average standard deviation, σ) increases.

We trained fully connected, rate-based neural network to classify MNIST digits. Stochastic synaptic PSPs were sampled from a Normal distribution,

where, recall, μi is the PSP mean and σi2 is the PSP variance for the ith synapse. The output firing rate was given by

Here, can be understood as the somatic membrane potential, and represents the relationship between somatic membrane potential and firing rate; we used ReLU (Fukushima, 1975). We optimised network parameters and using Adam (Kingma and Ba, 2014) (see Materials and methods for details on architecture and hyperparameters).

The tradeoff between accuracy and reliability costs in trained networks

Next we sought to understand how the tradeoff between accuracy and reliability cost manifests in trained networks. Perhaps the critical parameter in the objective (Equation 2 and Equation 4) was , which controlled the importance of the reliability cost relative to the performance cost. We trained networks with a variety of different values of , and with four values for motivated by the biophysical costs (the different columns).

In practice all the reliability costs and others we may have overlooked should together constitute an overall energetic reliability cost. However, it is difficult to estimate the specific contributions of different costs, i.e., the individual values of . While Attwell and Laughlin, 2001; Engl and Attwell, 2015, estimate the ATP demands for various synaptic processes, it is difficult to relate these to the relative scale of each cost at a synapse level. Therefore, for simplicity, we kept each cost separate, training neural networks with just one choice of reliability cost; emphasising results shared across all costs. It is possible that one cost dominates all the others, but if that is not the case it will be necessary to use a more complicated reliability cost. However, since we have considered four costs with very different power-law behaviours, it is likely the behaviour will not be significantly different to what we have observed.

As expected, we found that as increased, performance fell (Figure 2a) and the average synaptic standard deviation increased (Figure 2b). Importantly, we considered two different settings. First, we considered an homogeneous noise setting, where is optimised but kept the same across all synapses (grey lines). Second, we considered an heterogeneous noise setting, where is allowed to vary across synapses, and is optimised on a per-synapse basis. We found that heterogeneous noise (i.e. allowing the noise to vary on a per-synapse basis) improved accuracy considerably for a fixed value of , but only reduced the average noise slightly.

The findings in Figure 2 imply a tradeoff between accuracy and average noise level, , as we change . If we explicitly plot the accuracy against the noise level using the data from Figure 2, we see that as the synaptic noise level increases, the accuracy decreases (Figure 3a). Further, the synaptic noise level is associated with a reliability cost (Figure 3b), and this relationship changes in the different columns as they use different values of associated with different biological mechanisms that might give rise to the dominant biophysical reliability cost. Thus, there is also a relationship between accuracy and reliability costs (Figure 3c), with accuracy increasing as we allow the system to invest more energy in becoming more reliable, which implies a higher reliability cost. Again, we plotted both the homogeneous (grey lines) and heterogeneous noise cases (green lines). We found that heterogeneous noise allowed for considerably improved accuracy at a given average noise standard deviation or a given reliability cost.

The performance-reliability cost tradeoff in artificial neural network (ANN) simulations.

(a) Accuracy decreases as the average postsynaptic potential (PSP) standard deviation, , increases. The grey lines are for the homogeneous noise setting where the PSP variance is optimised but isotropic (i.e. the same across all synapses), while the green lines are for the heterogeneous noise setting, where the PSP variances are optimised individually on a per-synapse basis. (b) Increasing reliability by reducing leads to greater reliability costs, and this relationship is different for different biophysical mechanisms and hence values for (columns). (c) Higher accuracy therefore implies larger reliability cost.

Energy-efficient patterns of synapse variability

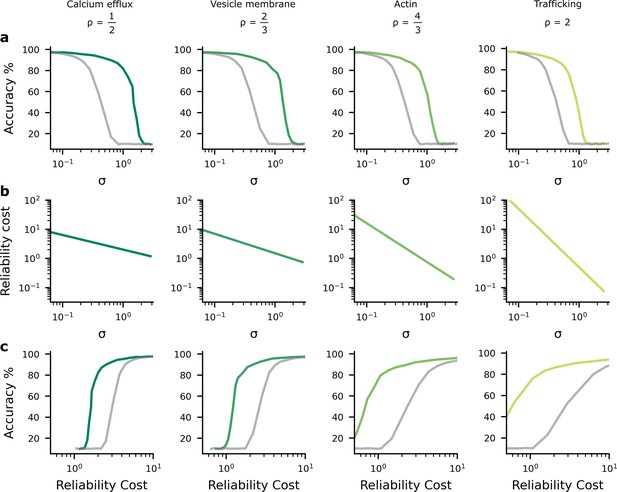

We found that the heterogeneous noise setting, where we individually optimise synaptic noise on a per-synapse basis, performed considerably better than the homogeneous noise setting (Figure 3). This raised an important question: how does the network achieve such large improvements by optimising the noise levels on a per-synapse basis? We hypothesised that the system invests a lot of energy in improving the reliability for ‘important’ synapses, i.e., synapses whose weights have a large impact on predictions and accuracy (Figure 4a). Conversely, the system allows unimportant synapses to have high variability, which reduces reliability costs (Figure 4b). To get further intuition, we compared both and on the same plot (Figure 4c). Specifically, we put the important synapse, from Figure 4a, on the horizontal axis, and the unimportant synapse, from Figure 4b, on the vertical axis. In Figure 4c, the relative importance of the synapse is now depicted by how the cost increases as we move away from the optimal value of the weight. Specifically, the cost increases rapidly as we move away from the optimal value of , but increases much more slowly as we move away from the optimal value of . Now, consider deviations in the synaptic weight driven by homogeneous synaptic variability (Figure 4c left, grey points). Many of these points have poor performance (i.e. a high performance cost), due to relatively high noise on the important synapse (i.e. ). Next, consider deviations in the synaptic weight driven by heterogeneous, optimised variability (Figure 4c left, green points). Critically, optimising synaptic noise reduces variability for the important synapse, and that reduces the average performance cost by eliminating large deviations on the important synapse. Thus, for the same overall reliability cost, heterogeneous, optimised variability can achieve much lower performance costs, and hence much lower overall costs than homogeneous variability (Figure 4d).

Schematic depiction of the impact of synaptic noise on synapses with different importance.

(a) First, we considered an important synapse for which small deviations in the weight, , e.g., driven by noise, imply a large increase in the performance cost. This can be understood as a high curvature of the performance cost as a function of . (b) Next we considered an unimportant synapse, for which deviations in the weights cause far less increase in performance cost. (c) A comparison of the impacts of homogeneous and optimised heterogeneous variability for synapses and from (a and b). The performance cost is depicted using the purple contours, and realisations of the postsynaptic potentials (PSPs) driven by synaptic variability are depicted in the grey/green points. The grey points (left) depict homogeneous noise while the green points (right) depict optimised, heterogeneous noise. (d) The noise distributions in panel c are chosen to keep the same reliability cost (diagonally hatched area); but the homogeneous noise setting has far a higher performance cost, primarily driven by larger noise in the important synapse, .

To investigate experimental predictions arising from optimised, heterogeneous variability, we needed a way to formally assess the ‘importance’ of synapses. We used the ‘curvature’ of the performance cost: namely the degree to which small deviations in the weights from their optimal values will degrade performance. If the curvature is large (Figure 4a), then small deviations in the weights, e.g., those caused by noise, can drastically reduce performance. In contrast, if the curvature is smaller (Figure 4b), then small deviations in the weights cause a much smaller reduction in performance. As a formal measure of the curvature of the objective, we used the Hessian matrix, . This describes the shape of the objective as a function of the synaptic weights, the : specifically, it is the matrix of second derivatives of the objective, with respect to the weights, and measures the local curvature of objective. We were interested in the diagonal elements, ; the second derivatives of the objective with respect to .

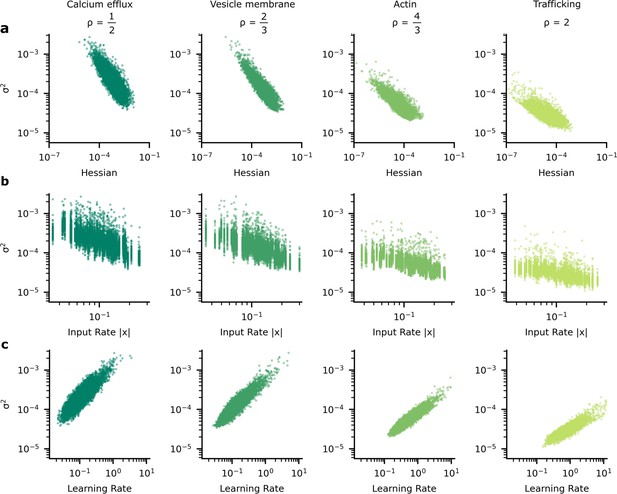

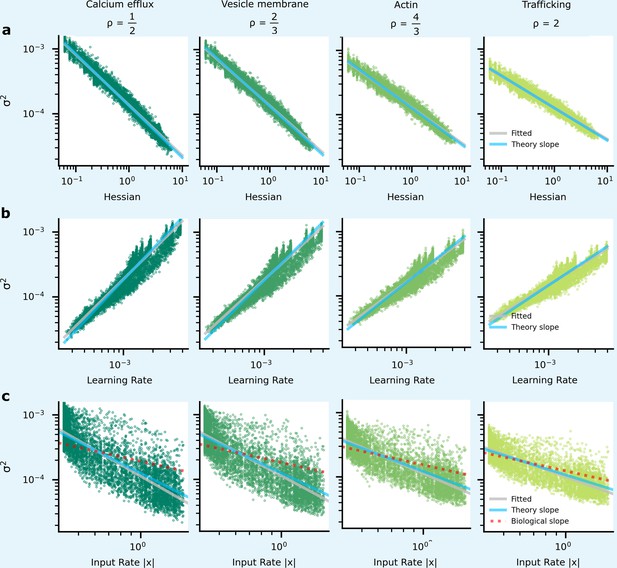

We began by looking at how the optimised synaptic noise varied with synapse importance, as measured by the curvature or, more formally, the Hessian (Figure 5a). The Hessian values were estimated using the average-squared gradient, see Appendix 3, ‘Synapse importance and gradient magnitudes’. We found that as the importance of the synapse increased, the optimised noise level decreased. These patterns of synapse variability make sense because noise is more detrimental at important synapses and so it is worth investing energy to reduce the noise in those synapses.

The heterogeneous patterns of synapse variability in artificial neural networks (ANNs) optimised by the tradeoff.

We present data patterns on logarithmic axis between signatures of synapse importance and variability for 10,000 (100 neuron units, each with 100 synapses) synapses that connect two hidden layers in our ANN. (a) Synapses whose corresponding diagonal entry in the Hessian is large have smaller variance. (b) Synapses with high variance have faster learning rates. (c) As input firing rate increases, synapse variance decreases.

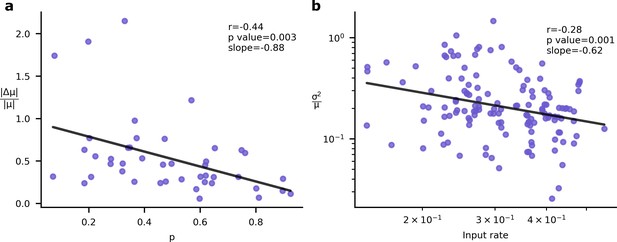

However, this relationship (Figure 5a) between the importance of a synapse and the synaptic variability is not experimentally testable, as we are not able to directly measure synapse importance. That said, we are able to obtain two testable predictions. First, the input rate in our simulations was negatively correlated with optimised synaptic variability (Figure 5b). Second, the optimised synaptic variability was larger for synapses with larger learning rates (Figure 5c). Critically, similar patterns have been observed in experimental data. In Figure 6a we present the negative correlation between learning rate and synaptic reliability presented by Schug et al., 2021, from in vitro measurements of V1 (layer 5) pyramidal synapses before and after STDP-induced long-term plasticity (LTP) conducted by Sjöström et al., 2001. Furthermore, a relationship between input firing rate and synaptic variability was observed by Aitchison et al., 2021, using in vivo functional recordings from V1 (layer 2/3) (Ko et al., 2013; Figure 6b).

Experimental signatures of Bayesian synapses.

The Bayesian synapse hypothesis predicts relationships between synapse reliability, learning rate, and input rate. (a) Synapses with higher probability of release, , demonstrate smaller increases in synaptic mean following long-term plasticity (LTP) induction. This pattern was originally observed by Schug et al., 2021. (b) As input firing rates are increased, normalised EPSP variability decreases with (Aitchison et al., 2021).

To understand why these patterns of variability emerge in our simulations and in data, we need to understand the connection between synapse importance, synaptic inputs (Figure 5b, Figure 6b), and synaptic learning (Figure 5c, Figure 6a). Perhaps the easiest connection is between the synapse importance and the input firing rate. If the input cell never fires, then the synaptic weight cannot affect the network output, and the synapse has zero importance (and also zero Hessian; see Appendix 2, ‘High input rates and high precision at important synapses’). This would suggest a tendency for synapses with higher input firing rates to be more important, and hence to have lower variability. This pattern is indeed borne out in our simulations (Figure 5b; also see Appendix 6—figure 1), though of course there is a considerable amount of noise: there are a few important synapses with low input rates, and vice versa.

Next, we consider the connection between learning rate and synapse importance. To understand this connection, we need to choose a specific scheme for modulating the learning rate as a function of the inputs. While the specific scheme for modulating the learning rate is ultimately an assumption, we believe modern deep learning offers strong guidance as to the optimal family of schemes for modulating the learning rate. In particular, modern, state-of-the-art, update rules for ANNs almost always use an adaptive learning rate. These adaptive learning rates, (including the most common such as Adam and variants), almost always use a normalising learning rate which decreases in response to high incoming gradients,

Specifically, the local learning rate for the ith synapse, ηi, is usually a base learning rate, ηbase, divided by the root-mean-squared gradient at this synapse . Critically, the root-mean-squared gradient turns out to be strongly related to synapse importance. Intuitively, important synapses with greater impact on network predictions will have larger gradients (see Appendix 3, ‘Synapse importance and gradient magnitudes’).

In vivo performance requires selective formation, stabilisation, and elimination of LTP (Yang et al., 2009), raising the questions as to which biological mechanisms are able to provide this selectivity. Reducing updates at historically important synapses is one potential approach to determining which synapses should have their strengths adjusted and which should be stabilised. Adjusting learning rates based on synapse importance enables fast, stable learning (LeCun et al., 2002; Kingma and Ba, 2014; Khan et al., 2018; Aitchison, 2020; Martens, 2020; Jegminat et al., 2022).

For our purposes, the crucial point is that when training using an adaptive learning rate such as Equation 7, important synapses have higher root-mean-squared gradients, and hence lower learning rates. Here, we use a specific set of update rules which uses this adaptive learning rate (i.e. Adam: Kingma and Ba, 2014; Yang and Li, 2022). Thus, we can use learning rate as a proxy for importance, allowing us to obtain the predictions tested in Figure 5b which match Figure 5a/c.

The connection to Bayesian inference

Surprisingly, our experimental predictions obtained for optimised, heterogeneous synaptic variability (Figure 5) match those arising from Bayesian synapses presented in Figure 6 (i.e. synapses that use Bayes to infer their weights; Aitchison et al., 2021). Our first prediction was that lower variability implies a lower learning rate. The same prediction also arises if we consider Bayesian synapses. In particular, if variability and hence uncertainty is low, then a Bayesian synapse is very certain that it is close to the optimal value. In that case, new information should have less impact on the synaptic weight, and the learning rate should be lower. Our second prediction was that higher presynaptic firing rates imply less variability. Again, this arises in Bayesian synapses: Bayesian synapses should become more certain and less variable if the presynaptic cell fires more frequently. Every time the presynaptic cell fires, the synapse gets a feedback signal which gives a small amount of information about the right value for that synaptic weight. So the more times the presynaptic cell fires, the more information the synapse receives, and the more certain it becomes.

This match between observations for our energy-efficient synapses and previous work on Bayesian synapses led us to investigate potential connections between energy efficiency and Bayesian inference. Intuitively, there turns out to be a strong connection between synapse importance and uncertainty. Specifically, if a synapse is very important, then the performance cost changes dramatically when there are errors in that synaptic weight. That synapse therefore receives large gradients, and hence strong information about the correct value, rapidly reducing uncertainty.

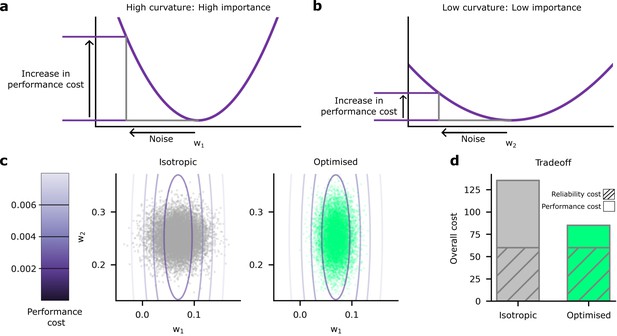

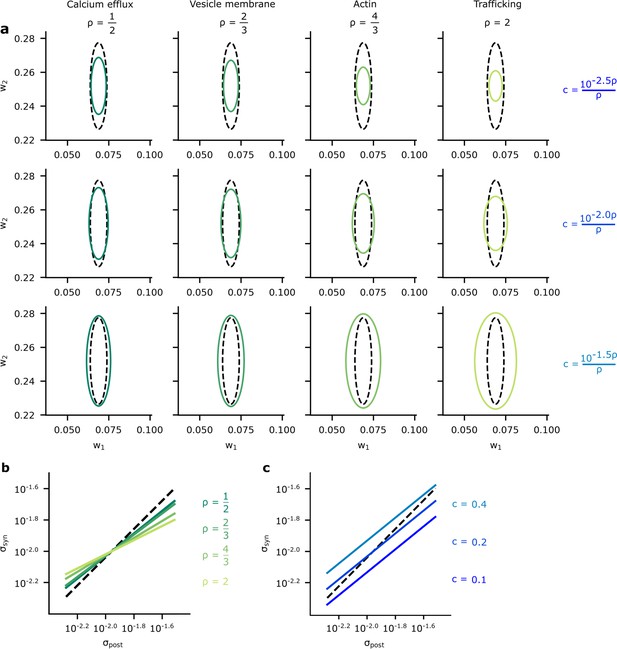

To assess the connection between Bayesian posteriors and energy-efficient variability in more depth, we estimated and plotted the posterior variance against the optimised synaptic variability (Figure 7a) (see Materials and methods). We considered our four different biophysical mechanisms (values for ; Figure 7a, columns), and values for (Figure 7a, rows). In all cases, there was a clear correlation between the posterior and the optimised variability: synapses with larger posterior variance also had large optimised variance. To further assess this connection, we used the relation between the Hessian and posterior variance given by Equation 54c and the analytic result given in Appendix 5, ‘Analytic predictions for ’ to plot the relationships between and the posterior variability, , as a function of (Figure 7b) and as a function of (Figure 7c). Again, these plots show a clear correlation between synapse variance and posterior variance, though the relationship is far from perfect. For a perfect relationship, we would expect the lines in Figure 7b and c to all lie along the diagonal with slope equal to one. In contrast, these lines actually have a slope smaller than one, indicating that optimised variability is less heterogeneous than posterior variance (Figure 7b and c). Interestingly, the slope increases towards one as the associated is decreased, this suggests that synapse variability best approximates the posterior when is small.

A comparison of optimised synaptic variability and posterior variance.

(a) Posterior variance (grey-dashed ellipses) plotted alongside optimised synaptic variability (green ellipses) for different values of (columns) and (rows) for an illustrative pair of synapses. Note that using fixed values of for different ’s dramatically changed the scale of the ellipses. Instead, we chose as a function of to ensure that the scale of the optimised noise variance was roughly equal across different . This allowed us to highlight the key pattern: that smaller values for give optimised variance closer to the true posterior variances, while higher values for tended to make the optimised synaptic variability more isotropic. (b) To understand this pattern more formally, we plotted the synaptic variability as a function of the posterior variance for different values of . Note that we set to to avoid large additive offsets (see Connecting the entropy and the biological reliability cost – Equation 48 for details). (c) The synaptic variability as a function of the posterior variance for different values of (3 DP). As increases (lighter blues) we penalise reliability more, and hence the optimised synaptic noise variability increases. (Here, we fixed across different settings for .)

This strong, but not perfect, connection between the patterns of variability in Bayesian inference and energy-efficient networks motivated us to seek a formal connection between Bayesian and efficient synapses. As such, in the Appendix, we derive a theoretical connection between our overall performance cost and Bayesian inference (see Appendix 4, ‘Energy-efficient noise and variational Bayes for neural network weights’). Moreover, this connection is subsequently used to provide an explanation for why synapse variability aligns closer to posterior variance for small (see Equation 51), specifically, variational inference, a well-known procedure for performing (approximate) Bayesian inference in NNs (Hinton and van Camp, 1993; Graves, 2011; Blundell et al., 2015). Variational inference optimises the ‘evidence lower bound objective’ (ELBO) (Barber and Bishop, 1998; Jordan et al., 1999; Blei et al., 2017), which surprisingly turns out to resemble our performance cost. Specifically, the ELBO includes a term which encourages the entropy of the approximating posterior distribution (which could be interpreted as our noise distribution) to be larger. This resembles a reliability cost, as our reliability costs also encourage the noise distribution to be larger. Critically, the biological power-law reliability cost has a different form from the ideal, entropic reliability cost. However, we are able to derive a formal relationship: the biological power-law reliability costs bound the ideal entropic reliability cost. Remarkably, this implies that our overall cost (Equation 2) bounds the ELBO, so reducing our cost (Equation 2) tightens the ELBO bound and gives an improved guarantee on the quality of Bayesian inference.

Discussion

Comparing the brain’s computational roles with associated energetic costs provides a useful means for deducing properties of efficient neurophysiology. Here, we applied this approach to PSP variability. We began by looking at the biophysical mechanisms of synaptic transmission, and how the energy costs for transmission might vary with synaptic reliability. We modified a standard ANN to incorporate unreliable synapses and trained this on a classification task using an objective that combined classification accuracy and an energetic cost on reliability. This led to a performance-reliability cost tradeoff and heterogeneous patterns of synapse variability that correlated with input rate and learning rate. We noted that these patterns of variability have been previously observed in data (see Figure 6). Remarkably, these are also the patterns of variability predicted by Bayesian synapses (Aitchison et al., 2021) (i.e. when distributions over synaptic weights correspond with the Bayesian posterior). Finally, we showed empirical and formal connections between the synaptic variability implied by Bayesian synapses and our performance-reliability cost tradeoff.

The reliability cost in terms of the synaptic variability (Equation 4) is a critical component of the numerical experiments we present here. While the precise form of the cost is inevitably uncertain, we attempted to mitigate the uncertainty by considering a wide range of functional forms for the reliability cost. In particular, we considered four biophysical mechanisms, corresponding to four power-law exponents, (). Moreover, these different power-law costs already cover a reasonably wide-range of potential penalties and we would expect the results to hold for many other forms of reliability cost as the intuition behind the results ultimately relies merely on there being some penalty for increasing reliability.

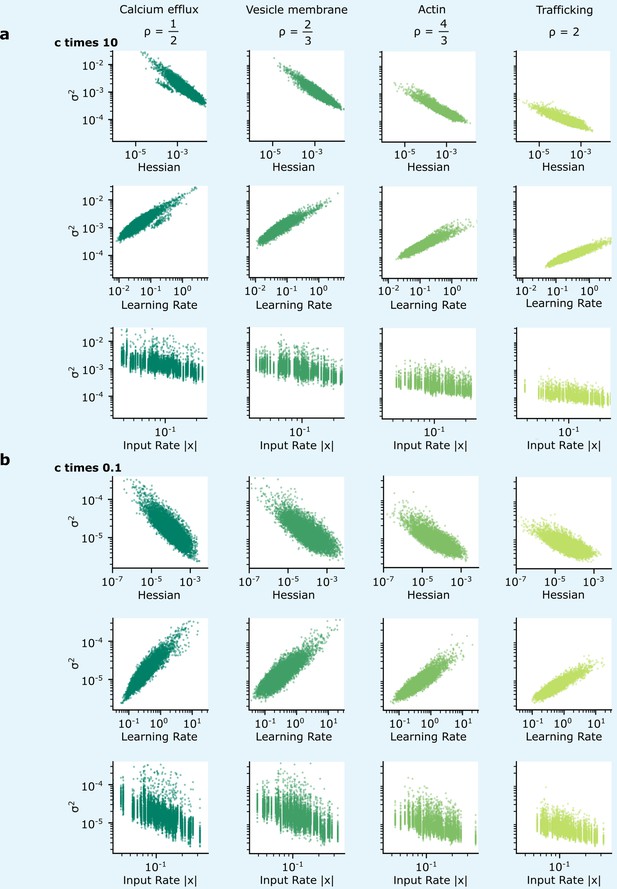

The biophysical cost also includes a multiplicative factor, , which sets the magnitude of the reliability cost. In fact, the patterns of variability exhibited in Figure 5 are preserved as is changed: this was demonstrated for values of which are 10 times larger and 10 times smaller, Appendix 6—figure 2. This multiplicative factor should be understood as being determined by the properties of the physics and chemistry underpinning synaptic dynamics, e.g., it could represent the quantity of ATP required by the metabolic costs of synaptic transmission (although this factor could vary, e.g. in different cell types).

Our ANNs used backpropagation to optimise the mean and variance of synaptic weights. While there are a number of schemes by which biological circuits might implement backpropagation (Whittington and Bogacz, 2017; Sacramento et al., 2018; Richards and Lillicrap, 2019), it is not yet clear whether backpropagation is implemented by the brain (see Lillicrap et al., 2020, for a review on the plausibility of propagation in the brain). Regardless, backpropagation is merely the route we used in our ANN setting to reach an energy-efficient configuration. The patterns we have observed are characteristic of an energy-efficient network and therefore should not depend on the learning rule that the brain uses to achieve energy efficiency.

Our results in ANNs used MNIST classification as an example of a task; this may appear somewhat artificial, but all brain areas ultimately do have a task: to maximise fitness (or reward as a proxy for fitness). Moreover, our results all ultimately arise from trading off biophysical reliability costs against the fact that if a synapse is important to performing a task, then variability in that synapse substantially impairs performance. Of course performance, in different brain areas, might mean reward, fitness, or some other measures. In contrast, if a synapse is unimportant, variability in that synapse impairs performance less. In all tasks there will be some synapses that are more, and some synapses that are less important, and our task, while relatively straightforward, captures this important property.

Our results have important implications for the understanding of Bayesian inference in synapses. In particular, we show that energy efficiency considerations give rise to two phenomena that are consistent with predictions outlined in previous work on Bayesian synapses (Aitchison et al., 2021). First, that normalised variability decreases for synapses with higher presynaptic firing rates. Second, that synaptic plasticity is higher for synapses with higher variability.

Specifically, these findings suggest that synapses connect their uncertainty in the value of the optimal synaptic weight (see Aitchison et al., 2021, for details) to variability. This is in essence a synaptic variant of the ‘sampling hypothesis’. Under the sampling hypothesis, neural activity is believed to represent a potential state of the world, and variability is believed to represent uncertainty (Hoyer and Hyvärinen, 2002; Knill and Pouget, 2004; Ma et al., 2006; Fiser et al., 2010; Berkes et al., 2011; Orbán et al., 2016; Aitchison and Lengyel, 2016; Haefner et al., 2016; Lange and Haefner, 2017; Shivkumar et al., 2018; Bondy et al., 2018; Echeveste et al., 2020; Festa et al., 2021; Lange et al., 2021; Lange and Haefner, 2022). This variability in neural activity, representing uncertainty in the state of the world, can then be read out by downstream circuits to inform behaviour. Here, we showed that a connection between synaptic uncertainty and variability can emerge simply as a consequence of maximising energy efficiency. This suggest that Bayesian synapses may emerge without any necessity for specific synaptic biophysical implementations of Bayesian inference.

Importantly though, while the brain might use synaptic noise for Bayesian computation, these results are also consistent with an alternative interpretation: that the brain is not Bayesian, it just looks Bayesian because it is energy efficient. To distinguish between these two interpretations, we ultimately need to know whether downstream brain areas exploit or ignore information about uncertainty that arises from synaptic variability.

Materials and methods

The ANN simulations were run in PyTorch with feedforward, fully connected neural networks with two hidden layers of width 100. The input dimension of 784 corresponded to the number of pixels in the greyscale MNIST images of handwritten digits, while the output dimension of 10 corresponded to the number of classes. We used the reparameterisation trick to backpropagate with respect to the mean and variance of the weights, in particular, we set , where (Kingma et al., 2015). MNIST classification was learned through optimisation of Gaussian parameters with respect to a cross-entropy loss in addition to reliability costs using minibatch gradient descent under Adam optimisation with a minibatch size of 20. To prevent negative values for the σs, they were reparameterised using a softplus function with argument , with . The base learning rate in Equation 7 is . The μis were initialised homogeneously across the network from and the σis were initialised homogeneously across the network at . Hyperparameters were chosen via grid search on the validation dataset to enable smooth learning, high performance, and rapid convergence. In the objective used to train our simulations, we also add an L1 regularisation term over synaptic weights, , where .

Plots in Figure 2 present mappings from hyperparameter, , to accuracy and . A different neural network was trained for each , after 50 training epochs the average across synapses was computed, and accuracy was evaluated on the test dataset. Plots in Figure 3 present mappings of this against accuracy and reliability cost. The reliability cost was computed using fixed (see Equation _48).

To compute the Hessian in Figure 5 and elsewhere, we used the empirical Fisher information approximation (Fisher, 1922), . This was evaluated by taking the average at over 10 epochs after full training for 50 epochs. The average learning rate and the average input rate were also evaluated over 10 epochs following training. The data presented illustrate these variables with regard to the weights of the second hidden layer. We set hyperparameter (see Equation 47) in these simulations.

For geometric comparisons between the distribution over synapses and the Bayesian posterior presented in Figure 7 we used the analytic results in Appendix 5, ‘Analytic predictions for ’. To estimate the posterior used in Figure 7, we optimised a factorised Gaussian approximation to the posterior over weights using variational inference and Bayes by backpropagation (Blundell et al., 2015). We then took from two optimised weights. For the variance and slope comparisons between Bayesian and efficient synapses in Figure 7, we used the analytic results in Appendix 5, ‘Analytic predictions for ’.

Source code used in simulations is available at: https://github.com/JamesMalkin/EfficientBayes copy archived at Malkin, 2024.

Appendix 1

Reliability costs

The difficulty in determining reliability costs is that depends on three variables: , the number of vesicles, the probability of release, and , the quantal size, which measures the amount of neurotransmitter in each vesicle:

However, these variables also determine the mean

so a straightforward optimisation of under reliability costs will also change μ and one pitfall is to accidentally consider only those changes in reliability that derive from changes in mean. A solution to this problem is to eliminate one of the variables so that is a function of μ, and the remaining variables. Eliminating gives

The idea is to consider energetic costs associated with and and relate these to while holding μ fixed. To simplify the biological motivation, we assume that during changes to the synapse aimed at manipulating the energetic cost, will also change to compensate for any collateral changes in μ keeping μ constant. Hence, a ‘compensatory variable’. Moreover, there is biological evidence that is the mechanism used in real synapses to fix μ (Turrigiano et al., 1998; Karunanithi et al., 2002). Fixing μ through a compensatory variable is termed homeostatic plasticity. Fixing μ through is described as ‘quantal scaling’. For reviews on homeostatic plasticity, see Turrigiano and Nelson, 2004; Davis and Müller, 2015.

In what follows four different energy costs are considered, the first depends on , the next two on , the situation for the final one is less clear, but in each case we derive a reliability cost in the form for some value of ρ. Of course, since we are considering costs for fixed μ the coefficient of variation is proportional to ; it may be helpful to think of these calculations as finding the relationship between the cost and .

Calcium efflux –

Calcium influx into the synapse is an essential part of the mechanism for vesicle release. Presynaptic calcium pumps act to restore calcium concentrations in the synapse; this pumping is a significant portion of synaptic transmission costs (Attwell and Laughlin, 2001). By rearranging the Hill equation defined by Sakaba and Neher, 2001, it can be shown that vesicle release has an interaction coefficient of four, this means the odds of release per vesicle are related to intracellular calcium amplitude via the fourth power (Heidelberger et al., 1994; Sakaba and Neher, 2001):

To recover basal synaptic calcium concentration, the calcium influx is reversed by ATP-driven calcium pumps, where :

Since this physiological cost does not depend on we assume it is fixed and so the odds of release is proportional to . Thus,

or .

Vesicle membrane –

There is a cost associated with the total area of vesicle membrane. Evidence in Pulido and Ryan, 2021, suggest stored vesicles emit charged H+ ions, with the number emitted proportional to the number of v-glut, glutamate transporters, on the surface of vesicles. v-ATPase pumps reverse this process maintaining the pH of the cell. It is suggested that this cost is 44% of the resting synaptic energy consumption. In addition, metabolism of the phospholipid bilayer that form the membrane of neurotransmitter-filled vesicles has been identified as a major energetic cost (Purdon et al., 2002). Provided the total volume is the same, release of the same amount of neurotransmitter into the synaptic cleft can involve many smaller vesicles or fewer larger ones. However, while having many small vesicles will be more reliable, it requires a greater surface area of costly membrane. With fixed μ and ,

Since and using this give

Since this reliability cost depends on , so is regarded as constant, so and hence .

Actin –

Actin polymers are an energy costly structural filament that support the structural organisation of vesicle reserve pools (Cingolani and Goda, 2008), with the vesicles strung out along the filaments. We assume each vesicle to require a length of actin roughly proportional to its diameter, this means that the total length of actin is proportional to . Hence,

The calculation then proceeds much as for the membrane cost, but with instead of giving .

Trafficking –

ATP-fuelled myosin motors drive trains of actin filament along with associated cargo such as vesicles and actin-myosin trafficking moves vesicles from vesicle reserve pools to release sites sustaining the readily releasable pool following vesicle release (Bridgman, 1999; Gramlich and Klyachko, 2017). This gives a cost for vesicle recruitment proportional to , the number of vesicles released:

so if is regarded as the principal way this cost is changed, with fixed then and so . This is the view point we are taking to motivate examining reliability costs with . This is certainly useful in considering the range of model behaviours over a broad range of values.

Nonetheless, it is sensible to ask whether the likely biological mechanism behind a varying trafficking cost is one which changes itself. In this case, since a constant μ for varying means , we have

which, is, again, of the form cost provided is small. For larger , however, it depends on exactly how changes as changes.

Generally, throughout these calculation we have supposed that is a compensatory variable and that either or changes in the process that changes the cost at a synapse. The benefit of this is that we are able to model costs directly in terms of reliability; in the future, though, it might be interesting to consider models which use , , and instead of μ and ; this would certainly be convenient for the sort of comparisons we are making here and interesting, although two formulations seem equivalent, this does not mean that the learning dynamics will be the same.

Appendix 2

High input rates and high precision at important synapses

Here, we show that under a linear model, important synapses, as measured by the Hessian, have high input rates. Additionally, we show that energy-efficient synapses imply that these important synapses have low optimized variability.

We consider a simplified linear model, with targets , inputs , and weights . We have

We take the performance cost to be

where is the number of datapoints. We take the network weights to be drawn from a multivariate Gaussian, with diagonal covariance, , i.e., ,

All our derivations rely on looking at the quadratic form for the performance cost. In particular,

Expanding the brackets,

and evaluating the expectations,

where the trace,

includes a sum over both the synapse index and the time index .

To measure synapse importance, we use the Hessian, i.e., the second derivative of the performance cost with respect to the mean. From Equation 24, we can identify this as

where, as before, is the time index.

Since the the only term in the performance cost that depends on the variance, , is the trace, we can identify that optimises the performance and reliability cost. This allows us to observe how synapse variability relates to synapse importance in the tradeoff between performance and reliability costs:

which means

or

Thus, more important synapses (as measured by the Hessian, ) have lower variability if the synapse is energy efficient. Moreover, through Equation 26, we expect synapses with higher input rates to be more important.

Appendix 3

Synapse importance and gradient magnitudes

In our ANN simulations we train synaptic weights using the most established adaptive optimisation scheme, Adam (Kingma and Ba, 2014), which has recently been realised using biologically plausible mechanisms (Yang and Li, 2022). Adam uses a synapse-specific learning rate, , which decreases in response to high gradients at that synapse,

Specifically, the local learning rate for the ith synapse, , is usually a base learning rate, , divided by , the root-mean-square of the gradient for each datapoint or minibatch.

The key intuition is that if the gradients for each datapoint/minibatch are large, that means that this synapse is important, as it has a big impact on the predictions for every datapoint. In fact, these mean-squared gradients can be related to our formal measure of synapse importance, the Hessian. Specifically, for data generated from the model, the Hessian (or Fisher information; Fisher, 1922) is equivalent to the mean-squared gradient, where the gradient is taken over each datapoint separately,

where the expectation is evaluated over data generated by the model and is defined,

Of course, in practice, the data is not drawn from the model, so the relationship between the squared gradients and the Hessian computed for real data is only approximate. But it is close enough to induce a relationship between synapse importance (measured as the diagonal of the Hessian) and learning rates (which are inversely proportional to the root-mean-squared gradients) in our simulations.

Appendix 4

Energy-efficient noise and variational Bayes for neural network weights

Introduction to variational Bayes for neural network weights

One approach to performing Bayesian inference for the weights of a neural network is to use variational Bayes. In variational Bayes, we introduce a parametric approximate posterior, , and fit the parameters of that approximate posterior using gradient descent on an objective, the ELBO (Blundell et al., 2015). In particular,

where is the entropy of the approximate posterior. Maximising the ELBO is particularly useful for selecting models as it forms a lower bound on the marginal likelihood, or evidence, (MacKay, 1992b; Barber and Bishop, 1998). When using variational Bayes for neural network weights, we usually use Gaussian approximate posteriors (Blundell et al., 2015),

Note that optimising the ELBO with respect to the parameters of is difficult, because is the distribution over which the expectation is taken in Equation 33. To circumvent this issue, we use the reparameterisation trick (Kingma et al., 2015), which involves writing the weights in terms of IID standard Gaussian variables, ,

Thus, we can write the ELBO as an expectation over , which has a fixed IID standard Gaussian distribution,

Note that we use w, μ, etc. without indices in this expression to indicate all the weights/mean weights.

Identifying the log-likelihood and log-prior

Following the usual practice in deep learning, we assume the likelihood is formed by a categorical distribution, with probabilities obtained by applying the softmax function to the output of the neural network. The log-likelihood for a categorical distribution with softmax probabilities is the negative cross-entropy (Murphy, 2012),

where is the output of the network with weights, , and inputs, . We can additionally identify a Laplace prior with the magnitude cost. Specifically, if we take the prior to be Laplace, with scale ,

where we identify the magnitude cost using Equation 3.

Connecting the entropy and the biological reliability cost

Now, we have identified the log-likelihood and log-prior terms in the ELBO (Equation 33) with terms in our biological cost (Equation 2). We thus have two terms left: the entropy in the ELBO (Equation 33) and the reliability cost in the biological cost (Equation 2). Critically, the entropy term also acts as a reliability cost, in that it encourages more variability in the weights (as the entropy is positive in the ELBO [Equation 33] and we are trying to maximise the ELBO, the entropy term gives a bonus for more variability). Intuitively, we can think of the entropy term in the ELBO (Equation 33) as being an ‘entropic reliability cost’, as compared to the ‘biological reliability cost’ in Equation 2.

This intuitive connection suggests that we might be able to find a more formal link. Specifically, we can define an entropic reliability cost as simply the negative entropy,

Our goal is to write the biological reliability cost , as a bound on . By rearranging and introducing and ,

and noting that ,

we can demonstrate that any reliability cost expressed as a generic power-law forms an upper bound on the entropic cost

Here, is the biological reliability cost for a synapse, which formally bounds the entropic reliability cost from VI,

We can also sum these quantities across synapses,

The parameter sets the importance of the reliability cost within the performance-reliability cost tradeoff (see Figure 2)

Note that while both and represent the biological reliability costs, they are slightly different in that includes an additive constant. Importantly, this additive constant is independent of and , so it does not affect learning and can be ignored.

Given that the biological reliability cost forms a bound on the ideal entropic reliability cost we can consider using the biological reliability cost in place of the entropic reliability cost,

we find that our overall biological cost (Equation 2) forms a bound on the ELBO, which itself forms a bound on the evidence, . Thus, pushing down the overall biological cost (Equation 2) pushes up a bound on the model evidence.

Predictive probabilities arising from biological reliability costs

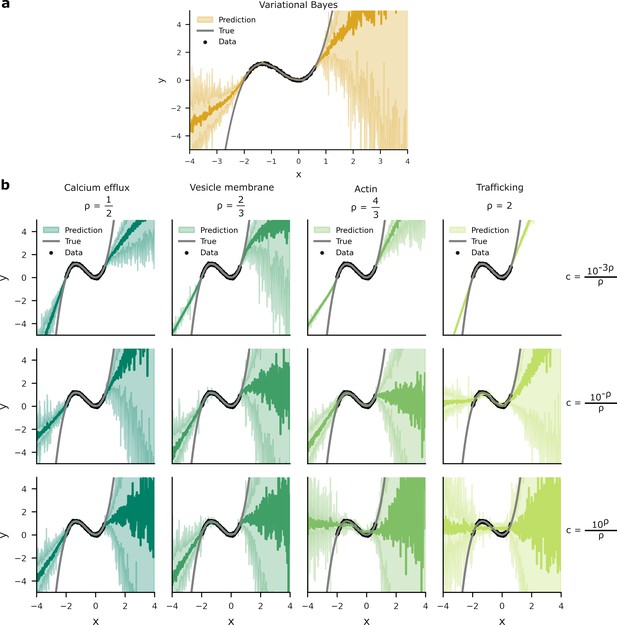

Given the connections between our overall cost and the ELBO, we expect that optimising our overall cost will give a similar result to variational Bayes. To check this connection, we plotted the distribution of predictions induced by noisy weights arising from variational Bayes (Appendix 4—figure 1a) and our overall costs (Appendix 4—figure 1b). Variational Bayes maximises the ELBO, therefore its predictive distribution is optimised to reflect the data distribution from which data is drawn (MacKay, 1992a). We found comparable patterns for the predictive distributions learned through variational Bayes and our overall costs, albeit with some breakdown in predictive performance with higher values for .

Predictive distributions from variational Bayes and our overall costs are similar.

We trained a one hidden layer network with 20 hidden units on data (black dots). Network targets, , were drawn from a ‘true’ function, (grey line) with additive Gaussian noise of variance . Two standard deviations of the predictive distributions are depicted in the shaded areas. (a) The predictive distribution produced by variational Bayes have a larger density of predictions where there is a higher probability of data. Where there is an absence of data, the model has to extrapolate and the spread of the predictive distribution increases. (b) Optimising the overall cost with small generates narrow response distributions. This is most noticeable in the upper-right panel, where the spread of predictive distribution is unrelated to the presence or absence of data. In contrast, while the predictive density for larger do vary according to the presence or absence of data, these distributions poorly predict . This is most apparent in the lower-right panel where the network’s predictive distribution transects the inflections of .

Interpreting , , and . As discussed in the main text, and are the fundamental parameters, and they are set by properties of the underlying biological system. It may nonetheless be interesting to consider the effects of and on the tightness of the bound of the biological reliability cost on the variational reliability cost. In particular, we consider settings of and for which the bound is looser or tighter (though again, there is no free choice in these parameters: they are set by properties of the biological system).

First, the biological reliability cost becomes equal to the ideal entropic reliability cost in the limit as .

Thus, taking and ,

Thus,

This explains the apparent improvement in predictive performance (Appendix 4—figure 1) and in matching the posteriors (Figure 7) with lower values of .

Second, the biological reliability cost becomes equal to the ideal entropic reliability cost when ,

as the first term in Equation 43 cancels. However, cannot be set individually across synapses, but is instead roughly constant, with a value set by underlying biological constraints. In particular, can be written as a function of and (Equation 47), and and are quantities that are roughly constant across synapses, with their values set by biological constraints. Thus, biological implications of a tightening bound as tends to are not clear.

Appendix 5

Analytic predictions for

At various points in the main text, we note a connection between the Hessian, synapse importance, and optimal variability. We start with Equation 29, which relates the optimal, energy-efficient noise variance, , to the Hessian, which gives Equation 54a. Then, we combine this with the form for the Hessian (Equation 26), which gives Equation 54b. Finally, we note Hessian describes the log-likelihood (Equation 26 and Equation 20). Thus, assuming the prior variance is large, we have , which gives Equation 54c,

To test these predictions, we performed a simpler simulation classifying MNIST in a network with no hidden layers. We found that the analytic results closely matched the simulations, and that the biological slopes tend to match the better than the other values for . However, while the direction of the slope was consistent in deeper networks, the exact value of the slope was not consistent (Figure 5 and Appendix 6—figure 1), so it is unclear whether we can draw any strong conclusions here.

Comparing analytic predictions for synapse variability with simulations and experimental data in a zero hidden layer network for MNIST classification.

The green dots show simulated synapses, and the grey line is fitted to these simulated points. The blue line is from our analytic predictions, while the red-dashed line is taken from experimental data (Figure 6b).

Appendix 6

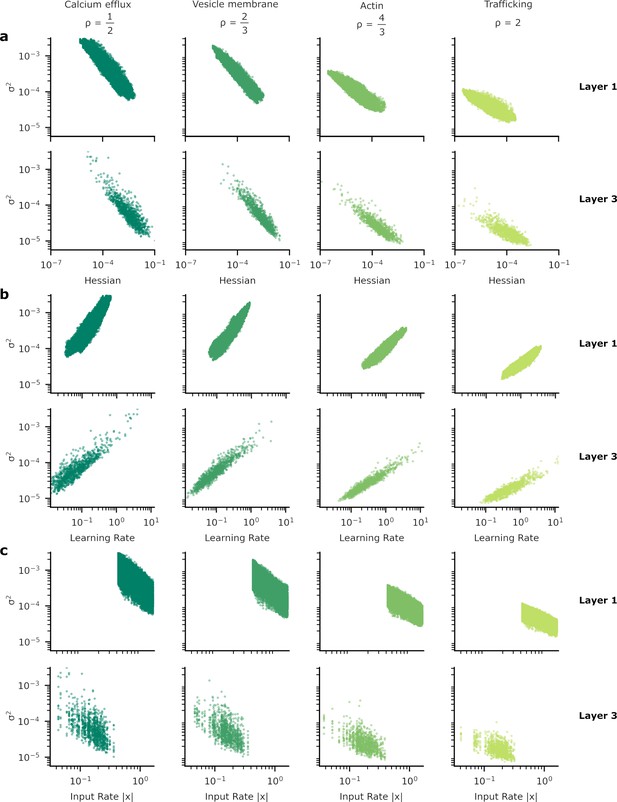

Patterns of synapse variability for the remaining layers of the neural network used to provide our results.

In Figure 5 we showed the heterogeneous patterns of synapse variability for the synapses connecting the two hidden layers of our artificial neural network (ANN). Here, we exhibit the equivalent plots for the other synapses, those between the input and the first hidden layer (layer 1) and from the final hidden layer to the output layer (layer 3). As in Figure 5 we show the relationship between synaptic variance and (a) the Hessian; (b) learning rate; and (c) input rate. The patterns do not appear substantially different from layer to layer.

Patterns of synapse variability are robust to changes in the reliability.

We show that the patterns of variability of synapses connecting the two hidden layers presented in Figure 5 are preserved over a wide range of . (a, b) When the reliability cost multiplier, , is either increased (a) or decreased (b) by a factor of 10, overall synapse variability increases or decreases accordingly, but the qualitative correlations seen in Figure 5 are preserved.

Data availability

The current manuscript is a computational study, so any data generated is simulated data. The previously published dataset listed below and data from Ko et al., 2013 were used. All newly generated data is available at: https://github.com/JamesMalkin/EfficientBayes, copy archived at Malkin, 2024.

-

Dryad Digital RepositoryData from: Unified pre- and postsynaptic long-term plasticity enables reliable and flexible learning.https://doi.org/10.5061/dryad.p286g

References

-

The hamiltonian brain: efficient probabilistic inference with excitatory-inhibitory neural circuit dynamicsPLOS Computational Biology 12:e1005186.https://doi.org/10.1371/journal.pcbi.1005186

-

ConferenceBayesian filtering unifies adaptive and non-adaptive neural network optimization methodsAdvances in Neural Information Processing Systems. pp. 18173–18182.

-

Synaptic plasticity as Bayesian inferenceNature Neuroscience 24:565–571.https://doi.org/10.1038/s41593-021-00809-5

-

An energy budget for signaling in the grey matter of the brainJournal of Cerebral Blood Flow & Metabolism 21:1133–1145.https://doi.org/10.1097/00004647-200110000-00001

-

Ensemble learning in Bayesian neural networksNato ASI Series F Computer and Systems Sciences 168:215–238.

-

Developmental changes in EPSC quantal size and quantal content at a central glutamatergic synapse in ratThe Journal of Physiology 511 (Pt 3):861–869.https://doi.org/10.1111/j.1469-7793.1998.861bg.x

-

Variational inference: a review for statisticiansJournal of the American Statistical Association 112:859–877.https://doi.org/10.1080/01621459.2017.1285773

-

ConferenceWeight uncertainty in neural networkInternational conference on machine learning PMLR. pp. 1613–1622.

-

The end-plate potential in mammalian muscleThe Journal of Physiology 132:74–91.https://doi.org/10.1113/jphysiol.1956.sp005503

-

The probability of neurotransmitter release: variability and feedback control at single synapsesNature Reviews Neuroscience 10:373–383.https://doi.org/10.1038/nrn2634

-

Myosin Va movements in normal and dilute-lethal axons provide support for a dual filament motor complexThe Journal of Cell Biology 146:1045–1060.https://doi.org/10.1083/jcb.146.5.1045

-

A practical guide to using CV analysis for determining the locus of synaptic plasticityFrontiers in Synaptic Neuroscience 12:11.https://doi.org/10.3389/fnsyn.2020.00011

-

Actin in action: the interplay between the actin cytoskeleton and synaptic efficacyNature Reviews. Neuroscience 9:344–356.https://doi.org/10.1038/nrn2373

-

Homeostatic control of presynaptic neurotransmitter releaseAnnual Review of Physiology 77:251–270.https://doi.org/10.1146/annurev-physiol-021014-071740

-

Non-signalling energy use in the brainThe Journal of Physiology 593:3417–3429.https://doi.org/10.1113/jphysiol.2014.282517

-

Statistically optimal perception and learning: from behavior to neural representationsTrends in Cognitive Sciences 14:119–130.https://doi.org/10.1016/j.tics.2010.01.003

-

On the mathematical foundations of theoretical statisticsPhilosophical Transactions of the Royal Society of London 222:309–368.https://doi.org/10.1098/rsta.1922.0009

-

Cognitron: A self-organizing multilayered neural networkBiological Cybernetics 20:121–136.https://doi.org/10.1007/BF00342633

-

Enhancement of information transmission efficiency by synaptic failuresNeural Computation 16:1137–1162.https://doi.org/10.1162/089976604773717568

-

ConferencePractical variational inference for neural networksAdvances in Neural Information Processing Systems 24 (NIPS 2011).

-

Energy-efficient information transfer at thalamocortical synapsesPLOS Computational Biology 15:e1007226.https://doi.org/10.1371/journal.pcbi.1007226

-

ConferenceKeeping the neural networks simple by minimizing the description length of the weightsProceedings of COLT-93. pp. 5–13.https://doi.org/10.1145/168304.168306

-

ConferenceInterpreting neural response variability as Monte Carlo sampling of the posteriorAdvances in Neural Information Processing Systems.

-

Learning as filtering: Implications for spike-based plasticityPLOS Computational Biology 18:e1009721.https://doi.org/10.1371/journal.pcbi.1009721

-

An introduction to variational methods for graphical modelsMachine Learning 37:183–233.https://doi.org/10.1023/A:1007665907178

-

Metabolic constraints on synaptic learning and memoryJournal of Neurophysiology 122:1473–1490.https://doi.org/10.1152/jn.00092.2019

-

Quantal size and variation determined by vesicle size in normal and mutant Drosophila glutamatergic synapsesThe Journal of Neuroscience 22:10267–10276.https://doi.org/10.1523/JNEUROSCI.22-23-10267.2002

-

The measurement of synaptic delay, and the time course of acetylcholine release at the neuromuscular junctionProceedings of the Royal Society of London. Series B, Biological Sciences 161:483–495.https://doi.org/10.1098/rspb.1965.0016

-

ConferenceFast and scalable bayesian deep learning by weight-perturbation in adamInternational conference on machine learning PMLR. pp. 2611–2620.

-

ConferenceVariational dropout and the local reparameterization trickAdvances in Neural Information Processing Systems.

-

The Bayesian brain: the role of uncertainty in neural coding and computationTRENDS in Neurosciences 27:712–719.https://doi.org/10.1016/j.tins.2004.10.007

-

Characterizing and interpreting the influence of internal variables on sensory activityCurrent Opinion in Neurobiology 46:84–89.https://doi.org/10.1016/j.conb.2017.07.006

-

A confirmation bias in perceptual decision-making due to hierarchical approximate inferencePLOS Computational Biology 17:e1009517.https://doi.org/10.1371/journal.pcbi.1009517

-

Task-induced neural covariability as a signature of approximate Bayesian learning and inferencePLOS Computational Biology 18:e1009557.https://doi.org/10.1371/journal.pcbi.1009557

-

BookEfficient backpropIn: LeCun Y, editors. Neural Networks: Tricks of the Trade. Springer. pp. 9–50.https://doi.org/10.1007/3-540-49430-8_2

-

Energy-efficient neuronal computation via quantal synaptic failuresThe Journal of Neuroscience 22:4746–4755.https://doi.org/10.1523/JNEUROSCI.22-11-04746.2002

-

Backpropagation and the brainNature Reviews. Neuroscience 21:335–346.https://doi.org/10.1038/s41583-020-0277-3

-

Quantal analysis and synaptic anatomy--integrating two views of hippocampal plasticityTrends in Neurosciences 16:141–147.https://doi.org/10.1016/0166-2236(93)90122-3

-

Bayesian inference with probabilistic population codesNature Neuroscience 9:1432–1438.https://doi.org/10.1038/nn1790

-

A practical bayesian framework for backpropagation networksNeural Computation 4:448–472.https://doi.org/10.1162/neco.1992.4.3.448

-

The evidence framework applied to classification networksNeural Computation 4:720–736.https://doi.org/10.1162/neco.1992.4.5.720

-

SoftwareEfficient bayes, version swh:1:rev:a341bff3e9241f958f351cb460b30047ec60d3a3Software Heritage.

-

New insights and perspectives on the natural gradient methodThe Journal of Machine Learning Research 21:5776–5851.

-

Energy consumption by phospholipid metabolism in mammalian brainNeurochemical Research 27:1641–1647.https://doi.org/10.1023/a:1021635027211

-

Properties of quantal transmission at CA1 synapsesJournal of Neurophysiology 92:2456–2467.https://doi.org/10.1152/jn.00258.2004

-

Dendritic solutions to the credit assignment problemCurrent Opinion in Neurobiology 54:28–36.https://doi.org/10.1016/j.conb.2018.08.003

-

Sparse, flexible and efficient modeling using L 1 regularizationFeature Extraction: Foundations and Applications 01:375–394.https://doi.org/10.1007/978-3-540-35488-8_17

-

Energy efficient sparse connectivity from imbalanced synaptic plasticity rulesPLOS Computational Biology 11:e1004265.https://doi.org/10.1371/journal.pcbi.1004265

-

ConferenceDendritic cortical microcircuits approximate the backpropagation algorithmAdvances in Neural Information Processing Systems.

-

Quantitative relationship between transmitter release and calcium current at the calyx of held synapseThe Journal of Neuroscience 21:462–476.https://doi.org/10.1523/JNEUROSCI.21-02-00462.2001

-

A mathematical theory of communicationBell System Technical Journal 27:379–423.https://doi.org/10.1002/j.1538-7305.1948.tb01338.x

-

ConferenceA probabilistic population code based on neural samplesAdvances in Neural Information Processing Systems.

-

Homeostatic plasticity in the developing nervous systemNature Reviews. Neuroscience 5:97–107.https://doi.org/10.1038/nrn1327

Article and author information

Author details

Funding

Engineering and Physical Sciences Research Council (EP/T517872/1)

- James Malkin

Leverhulme Trust (RPG-2019-229)

- Cian O'Donnell

Biotechnology and Biological Sciences Research Council (BB/W001845/1)

- Cian O'Donnell

Leverhulme Trust (RF-2021-533)

- Conor J Houghton

The funders had no role in study design, data collection and interpretation, or the decision to submit the work for publication.

Acknowledgements

We are grateful to Dr Stewart whose philanthropy supported GPU compute used in this project. JM was funded by the Engineering and Physical Sciences Research Council (EP/T517872/1). COD was funded by the Leverhulme Trust (RPG-2019-229) and Biotechnology and Biological Sciences Research Council (BB/W001845/1). CH is supported by the Leverhulme Trust (RF-2021-533).

Version history

- Preprint posted:

- Sent for peer review:

- Reviewed Preprint version 1:

- Reviewed Preprint version 2:

- Version of Record published:

- Version of Record updated:

Cite all versions

You can cite all versions using the DOI https://doi.org/10.7554/eLife.92595. This DOI represents all versions, and will always resolve to the latest one.

Copyright

© 2023, Malkin et al.

This article is distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use and redistribution provided that the original author and source are credited.

Metrics

-

- 1,615

- views

-

- 115

- downloads

-

- 3

- citations

Views, downloads and citations are aggregated across all versions of this paper published by eLife.

Citations by DOI

-

- 2

- citations for umbrella DOI https://doi.org/10.7554/eLife.92595

-

- 1

- citation for Reviewed Preprint v1 https://doi.org/10.7554/eLife.92595.1