Pan-cortical 2-photon mesoscopic imaging and neurobehavioral alignment in awake, behaving mice

eLife assessment

This important paper presents a thoroughly detailed methodology for mesoscale-imaging of extensive areas of the cortex, either from a top or lateral perspective, in behaving mice. The examples of scientific results to be derived with this method offer promising and stimulating insights. Overall, the method and results presented are convincing and will be of interest to neuroscientists focused on cortical processing in rodents and beyond.

https://doi.org/10.7554/eLife.94167.3.sa0

Important: Findings that have theoretical or practical implications beyond a single subfield

Convincing: Appropriate and validated methodology in line with current state-of-the-art

During the peer-review process the editor and reviewers write an eLife Assessment that summarises the significance of the findings reported in the article (on a scale ranging from landmark to useful) and the strength of the evidence (on a scale ranging from exceptional to inadequate).

Abstract

The flow of neural activity across the neocortex during active sensory discrimination is constrained by task-specific cognitive demands, movements, and internal states. During behavior, the brain appears to sample from a broad repertoire of activation motifs. Understanding how these patterns of local and global activity are selected in relation to both spontaneous and task-dependent behavior requires in-depth study of densely sampled activity at single neuron resolution across large regions of cortex. In a significant advance toward this goal, we developed procedures to record mesoscale 2-photon Ca2+ imaging data from two novel in vivo preparations that, between them, allow for simultaneous access to nearly all of the mouse dorsal and lateral neocortex. As a proof of principle, we aligned neural activity with both behavioral primitives and high-level motifs to reveal the existence of large populations of neurons that coordinated their activity across cortical areas with spontaneous changes in movement and/or arousal. The methods we detail here facilitate the identification and exploration of widespread, spatially heterogeneous neural ensembles whose activity is related to diverse aspects of behavior.

Introduction

Recent advances in large-scale neural recording technology, such as widefield imaging (Musall et al., 2019; Gallero-Salas et al., 2021; Esmaeili et al., 2021), large field-of-view (FOV) 2-photon (2p) imaging (Sofroniew et al., 2016; Stringer et al., 2019; Yu et al., 2022), and Neuropixels high-density extracellular electrophysiology recordings (Jun et al., 2017; Steinmetz et al., 2019) have allowed for rapid advancement in our understanding of the relationships between brain-wide neural activity and both spontaneous and task-engaged behavior in mice. For example, these techniques can now be deployed in recently developed behavioral paradigms that allow for the temporal separation of periods during which cortical activity is dominated by activity related to stimulus representation, choice/decision, maintenance of choice, and response or implementation of choice during different intra-trial epochs of 2-alternative forced choice (2-AFC) discrimination tasks (Guo et al., 2014), the standardization of training and performance analysis across laboratories (Aguillon-Rodriguez et al., 2021; eLife), and the separation of context-dependent rule representation and choice in a working memory task (Wu et al., 2020).

One of the striking features of neocortical neuronal activity is how strongly changes in behavioral state, such as task engagement, movement, or arousal, affect the spontaneous and evoked activity of neurons within visual, auditory, somatosensory, motor and other cortical regions (Niell and Stryker, 2010; McGinley et al., 2015; Musall et al., 2019; Stringer et al., 2019). However, this is not to say that these effects are uniform across the cerebral cortex (Morandell et al., 2023; Wang et al., 2023). Neurons exhibit diversity in the dependence of their neural activity on arousal and behavioral state both between and within cortical areas (Niell and Stryker, 2010; McGinley et al., 2015; Shimaoka et al., 2018), and these areas are active at different times during a rewarded task (Salkoff et al., 2020; Esmaeili et al., 2021; Gallero-Salas et al., 2021). Furthermore, the arousal dependence of membrane potential across cortical areas has been shown to be diverse and predictable by a temporally filtered readout of pupil diameter and walking speed (Shimaoka et al., 2018).

For this reason, directly combining and/or comparing the correlations between behavior and neural activity across regions imaged in separate sessions may not reveal the true differences in the relationship between behavior and neural activity across cortical areas, due to a ‘temporal filtering effect’. In other words, the correlations between behavior and neural activity in each region appear to depend on the exact time since the behavior began (Shimaoka et al., 2018). In our view, this makes the simultaneous recording of multiple cortical areas essential for proper comparison of the dependencies of their neural activities on arousal/movement, because only then are the distributions of behavioral state dwell times the same across cortical areas.

Areas involved in sensory decision making are often far from each other (Gallero-Salas et al., 2021) and can exhibit coordinated state-dependent changes in functional coupling (Clancy et al., 2019). Also, multimodal sensory information is multiplexed and combined as it ascends across the cortical hierarchy (Coen et al., 2023). For these reasons, understanding the brain activity underlying optimal performance during multimodal, task-engaged behavior will require dense sampling of many brain areas at single neuron resolution across lateral, dorsal, and frontal cortices simultaneously at a temporal resolution high enough to describe both spontaneous behavioral state transitions and the neural dynamics relevant for a given task.

Dense intra-cortical sampling at a fixed depth/cortical layer across many areas is not possible with current Neuropixels probes (Steinmetz et al., 2021), 1-photon (1p) widefield imaging can be contaminated by neuropil and hemodynamic signal (Waters, 2020; Valley et al., 2020) and typically does not achieve single cell resolution (but see Yoshida et al., 2018; Kauvar et al., 2020), and standard 2p imaging is limited by scanning speed (see Gong et al., 2015) and field of view spatial extent (FOV; i.e. simultaneously imageable, either contiguous or non-contiguous, regions; Allen et al., 2017; Hattori et al., 2019; but see Yu et al., 2021). Note that although some recent advances in 1p widefield imaging have allowed for the imaging of individual cells, both in head-fixed and freely moving mice, they do not achieve true single cell resolution in practice (they get close to ~10 μm xy or ‘lateral’ resolution, with undefined z or ‘depth’ resolution) and rely in part on the sparse labeling of neurons with Ca2+ indicators either within or across cortical layers (Cai et al., 2016; Hope et al., 2023, bioRxiv; Xie et al., 2023).

Recent advancements in cranial window preparations have enabled imaging over large portions of the dorsal cortex (Kim et al., 2016; Stringer et al., 2023, bioRxiv). However, simultaneous 2p imaging over a large cortical area including both dorsal and lateral regions, particularly in a preparation that allows for simultaneous imaging of the major primary sensory cortices (auditory, visual, and somatosensory) and frontal motor/choice areas (M1, M2) in awake, behaving mice has not been previously shown.

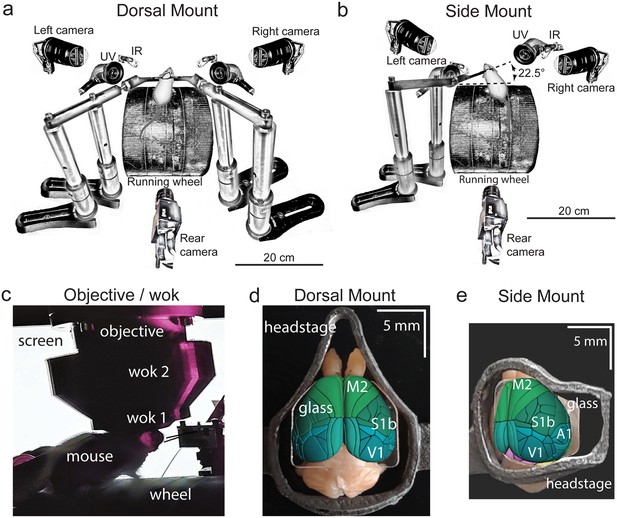

Here, we developed a set of new techniques and integrated them with existing technologies in order to overcome the limitations of current state-of-the-art methods and provide the first pan-cortical 2p assays at single-cell resolution in awake, behaving mice. To achieve this, we designed custom 3D-printed titanium headposts, mounting devices, adapters, and cranial windows, and modified (‘Crystal Skull’; Kim et al., 2016; Figure 1a, d, Figure 1—figure supplement 1c, upper, e) or developed (‘A1/V1/M2’ or ‘temporo-parietal’; Figure 1b, e, Figure 1—figure supplement 1a, f) two in vivo surgical preparations, which we will henceforth refer to as the ‘dorsal mount’ and ‘side mount’, respectively.

Technical adaptations for dual-mount in vivo pan-cortical imaging with the Thorlabs 2p-RAM mesoscope.

(a) Mesoscope behavioral apparatus for the dorsal mount preparation. Mouse is mounted upright on a running wheel with headpost fixed to dual adjustable support arms mounted on vertical posts (1” diameter). Behavior cameras are fixed at front-left, front-right, and center-posterior, with ultraviolet and infrared light-emitting diodes aligned with goose-neck supports parallel to right and left cameras. (b) Mesoscope behavioral apparatus for side mount preparation. Same as in (a), except that the mouse is rotated 22.5 degrees to its left so that the objective lens (at angle 0) is orthogonal to the center-of-mass of the preparation across the right cortical hemisphere. The objective can rotate +/-20 degrees medio-laterally, if needed, to optimize imaging of any portion of the cortex under the cranial window. The right behavior camera is positioned more posterior and lower than in (a), to allow for imaging of the eye under the acute angle formed with the horizontal light shield, shown in (c). (c) Mouse running on wheel with side mount preparation receiving visual stimulation from an LED screen positioned on the left side, with linear motor-positioned dual lick-spouts in place and 3D printed vertical light shield (wok 2) attached to rim of flat shield (wok 1) to block extraneous light from entering the objective. (d) Overhead view of dorsal mount preparation with 3D printed titanium headpost, custom cranial window, and Allen CCF aligned to paraformaldehyde-fixed mouse brain. Motor region = light green, somatosensory = dark green, visual = dark blue, retrosplenial = light blue. Olfactory bulbs (anterior) at top, cerebellum (posterior) at bottom of image. Note ridge along perimeter of headpost for fitted horizontal light shield (wok 1) attachment. (e) Rotated dorsal view (22.5 degrees right) of side mount preparation with 3D printed titanium headpost, custom cranial window, and Allen CCF aligned to paraformaldehyde-fixed mouse brain. 
Auditory region shown in light blue, ventral and anterior to visual areas, and ventral and posterior to somatosensory areas (right side of image is lateral/ventral, and left side of headpost perimeter is medial/dorsal). Other regions shown with the same color scheme as in (d). UV = ultraviolet, IR = infrared, M2 = secondary motor cortex, S1b = primary somatosensory barrel cortex, V1 = primary visual cortex, A1 = primary auditory cortex.

The dorsal mount, which was based on the earlier ‘Crystal Skull’ preparation (Kim et al., 2016; see also Ghanbari et al., 2019 for a similar preparation), included modifications such as a novel headpost and support arms, along with other hardware and novel surgical, data acquisition, and analysis methods, and enabled simultaneous imaging across nearly all of bilateral dorsal cortex. Our novel side mount preparation, on the other hand, allowed for simultaneous imaging of much of the dorsal and lateral cortex across the right hemisphere (although our design can easily be ‘flipped’ across a mirror-image plane to allow for imaging of the left hemisphere, if desired).

These two novel preparations enabled mesoscale 2p imaging (here, we used the 2p-RAM mesoscope, Thorlabs; Sofroniew et al., 2016) of up to ~7,500 individual neurons simultaneously at ~3 Hz across a 5x5 mm FOV (Figure 2, Figure 3, Figure 1—figure supplement 2; Videos 1, 2, 8 and 9; Protocol 1), or up to 800 neurons combined across four 660x660 µm FOVs at ~10 Hz (Figure 1—figure supplement 2d, e; Protocol 1; see Supplementary methods and materials). Although these recording speeds are not fast enough to capture the full dynamics of rapid neural processing involved in, for example, initial cortical sensory encoding and decision making, they are faster than earlier similar mesoscale recordings in visual cortex (Stringer et al., 2019), and they are likely fast enough to capture important components of spontaneous arousal and movement related fluctuations in neural dynamics across dorsal and lateral cortex. The total number of imaged neurons can be significantly increased in our preparations by using other mouse lines or different imaging or analysis parameters.

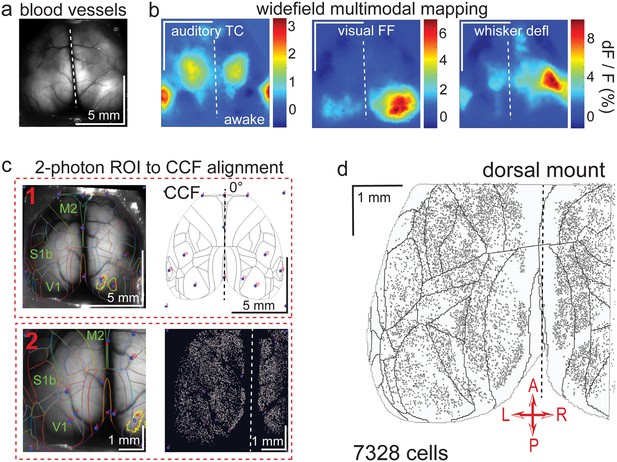

Widefield-imaging-based multimodal mapping (MMM) and CCF alignment, ROI detection, and areal assignment of GCaMP6s+ excitatory neurons for the dorsal mount preparation.

(a) Mean projection of 30 s, 470 nm excitation epifluorescence widefield movie of vasculature performed through a dorsal mount cranial window. Midline indicated by a dashed white line. (b) Mean 1 s baseline subtracted dF/F images of 1 s responses to ~20–30 repetitions of left-side presentations of a 5–40 kHz auditory tone cloud (auditory TC; left), visual full field (FF) isoluminant Gaussian noise stimulation (center), and 100 ms x 5 Hz burst whisker deflection (right) in an awake dorsal mount preparation mouse. (c) Example two-step CCF alignment to dorsal mount preparation, performed in Python on MMM masked blood-vessel image (upper left), rotated outline CCF (upper right), and Suite2p region of interest (ROI) output image containing exact position of all 2p imaged neurons (lower right). Yellow outlines show the area of masks created from the thresholded mean dF/F image for, in this example, repeated full-field visual stimulation. In step 1 (top row), the CCF is transformed and aligned to the MMM image using a series of user-selected points (blue points with red numbered labels, set to matching locations on both left and right images) defining a bounding-box and known anatomical and functional locations. In step 2 (bottom row), the same process is applied to transformation and alignment of Suite2p ROIs onto the MMM with user-selected points defining a bounding box and corresponding to unique, identifiable blood-vessel positions and/or intersections. Finally, a unique CCF area name and number are assigned to each Suite2p ROI (i.e. neuron) by applying the double reverse-transformation from Suite2p cell-center location coordinates, to MMM, to CCF. (d) CCF-aligned Suite2p ROIs from an example dorsal mount preparation with 7328 neurons identified in a single 30-min session from a spontaneously behaving mouse. 
TC = tone cloud, FF = full-field, defl = deflection, dF/F = change in fluorescence over baseline fluorescence, CCF = Allen common coordinate framework version 3.0, M2 = secondary motor cortex, S1b = primary somatosensory barrel cortex, V1 = primary visual cortex, A = anterior, P = posterior, R = right, L = left.
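
The two-step alignment described in (c) can be illustrated with a minimal sketch. It assumes that each step is an affine transform fitted by least squares to the user-selected point pairs; the caption does not state the transform family, and the authors' own code in their GitHub repository may differ. All function names, landmark coordinates, and the toy label image below are hypothetical.

```python
import numpy as np

def fit_affine(src, dst):
    """Least-squares 2D affine transform mapping src landmarks onto dst landmarks.

    src, dst: (n_points, 2) arrays of matching (x, y) points, n_points >= 3.
    Returns a 3x3 homogeneous matrix A with [x', y', 1]^T = A @ [x, y, 1]^T.
    """
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)
    src_h = np.hstack([src, np.ones((len(src), 1))])   # homogeneous coordinates
    M, *_ = np.linalg.lstsq(src_h, dst, rcond=None)    # solves src_h @ M = dst
    A = np.eye(3)
    A[:2, :] = M.T
    return A

def apply_affine(A, pts):
    """Apply a 3x3 homogeneous transform to (n_points, 2) coordinates."""
    pts = np.asarray(pts, dtype=float)
    pts_h = np.hstack([pts, np.ones((len(pts), 1))])
    return (pts_h @ A.T)[:, :2]

def lookup_area(label_img, pts_xy):
    """Read an integer area label at each (x, y) point (nearest pixel)."""
    cols = np.clip(np.rint(pts_xy[:, 0]).astype(int), 0, label_img.shape[1] - 1)
    rows = np.clip(np.rint(pts_xy[:, 1]).astype(int), 0, label_img.shape[0] - 1)
    return label_img[rows, cols]

# Step 1 (toy landmarks): CCF outline -> MMM widefield image
ccf_pts = np.array([[0, 0], [10, 0], [0, 10], [10, 10]], dtype=float)
mmm_pts_for_ccf = ccf_pts * 2.0 + np.array([5.0, 3.0])
A_ccf_to_mmm = fit_affine(ccf_pts, mmm_pts_for_ccf)

# Step 2 (toy landmarks): Suite2p ROI image -> MMM widefield image
s2p_pts = np.array([[0, 0], [4, 0], [0, 4], [4, 4]], dtype=float)
mmm_pts_for_s2p = s2p_pts * 5.0 + np.array([5.0, 3.0])
A_s2p_to_mmm = fit_affine(s2p_pts, mmm_pts_for_s2p)

# Suite2p -> MMM -> CCF: apply step 2 forward, then step 1 in reverse
A_s2p_to_ccf = np.linalg.inv(A_ccf_to_mmm) @ A_s2p_to_mmm

cell_centers_s2p = np.array([[2.0, 2.0], [4.0, 0.0]])
cell_centers_ccf = apply_affine(A_s2p_to_ccf, cell_centers_s2p)

# Hypothetical CCF label image: area 0 on the left, area 1 on the right
ccf_labels = np.zeros((11, 11), dtype=int)
ccf_labels[:, 6:] = 1
area_ids = lookup_area(ccf_labels, cell_centers_ccf)
```

Composing the two fitted matrices once and then transforming all cell centers in a single call keeps the per-neuron area assignment cheap, which matters when a session contains several thousand ROIs.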

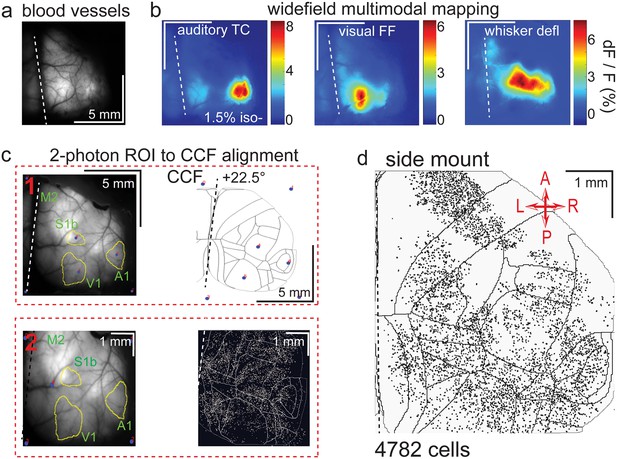

Widefield-imaging-based multimodal mapping (MMM) and CCF alignment, ROI detection, and areal assignment of GCaMP6s+ excitatory neurons for the side mount preparation.

(a) Mean projection of 30 s, 470 nm excitation fluorescence widefield movie of vasculature performed through a side mount cranial window. Midline indicated by a dashed white line. (b) Mean 1 s baseline subtracted dF/F images of 1 s responses to ~20–30 repetitions of left-side presentations of a 5–40 kHz auditory tone cloud (auditory TC; left), visual full field (FF) isoluminant Gaussian noise stimulation (center), and 100 ms x 5 Hz burst whisker deflection (right) in an example side mount preparation mouse under 1.5% isoflurane anesthesia. (c) Example two-step CCF alignment to side mount preparation, performed in Python on MMM masked blood-vessel image (upper left), rotated outline CCF (upper right), and Suite2p region of interest (ROI) output image containing exact position of all 2p imaged neurons (bottom right). Yellow outlines show the area of masks from thresholded mean dF/F image for repeated auditory, full-field visual, and/or whisker stimulation. In step 1 (top row), the CCF is transformed and aligned to the MMM image using a series of user-selected points (blue points with red numbered labels, set to matching locations on both left and right images) defining a bounding-box and known anatomical and functional locations. In step 2 (bottom row), the same process is applied to transformation and alignment of Suite2p ROIs onto the MMM with user-selected points defining a bounding box and corresponding to unique, identifiable blood-vessel positions and/or intersections. Finally, a unique CCF area name and number are assigned to each Suite2p ROI (i.e. neuron) by applying the double reverse-transformation from Suite2p cell-center location coordinates, to MMM, to CCF. (d) CCF-aligned Suite2p ROIs from an example side mount preparation with 4782 neurons identified in a single 70 min session from a mouse performing our 2-alternative forced choice (2-AFC) auditory discrimination task. 
TC = tone cloud, FF = full-field, defl = deflection, dF/F = change in fluorescence over baseline fluorescence, CCF = Allen common coordinate framework version 3.0, M2 = secondary motor cortex, S1b = primary somatosensory barrel cortex, A1 = primary auditory cortex, V1 = primary visual cortex, A = anterior, P = posterior, R = right, L = left.

Dorsal mount.

Upper left, 3D printed titanium headpost for dorsal mount (left; i.materialise.com and sculpteo.com) and accompanying cranial window for dorsal mount (labmaker.org, or TLCInternational.com and glaswerk.com), shown in top and side orthogonal projection, followed by isometric projection (AutoDesk Inventor, Adobe Acrobat Pro 3D viewer). Upper right, 3D printed plastic (PLA) light-shields or ‘woks’, same views as upper left. Horizontal light shield (left) fits onto perimeter of dorsal mount headpost and is attached with Sylgard 170 Fast Cure silicone elastomer, and vertical light shield fits onto vertical perimeter ridge of horizontal light shield and is held by gravity. Bottom, simultaneous and temporally aligned high-resolution videography from three points-of-view of a mouse under spontaneously behaving conditions (shown at 3x speed or 90 Hz; left and right cameras are GigE Teledyne Dalsa M2050 cameras, and posterior camera is a FLIR Grasshopper USB3 camera). Example pose tracking labeling by DeepLabCut (Mathis et al., 2018) is shown (right camera).

Side mount: same as in dorsal mount (Video 1), but for side mount hardware.

Note that the mouse’s headpost is retained by a single fixed support arm, rotated 22.5 degrees to the left, whereas the mouse in the dorsal mount example is held by dual orthogonally positioned fixed support arms.

Finally, we designed a custom, LabView-controlled behavioral setup with up to 3 high-speed body and face cameras (Figure 1a, b; Videos 1 and 2). This behavioral monitoring allowed us to compare widespread, densely sampled, high-resolution neural activity to movement and behavioral arousal state variation in head-fixed, awake, ambulatory (i.e. running atop a cylindrical wheel) mice under conditions of spontaneous behavior (Figure 4, Figure 5, Figure 6, Figure 4—figure supplements 1 and 2, Figure 5—figure supplements 1 and 2, Figure 6—figure supplement 1), passive sensory stimulation (Figures 1—3, Figure 1—figure supplement 2), or 2-alternative forced choice (2-AFC) task engagement (Figure 1c, Protocol 1).

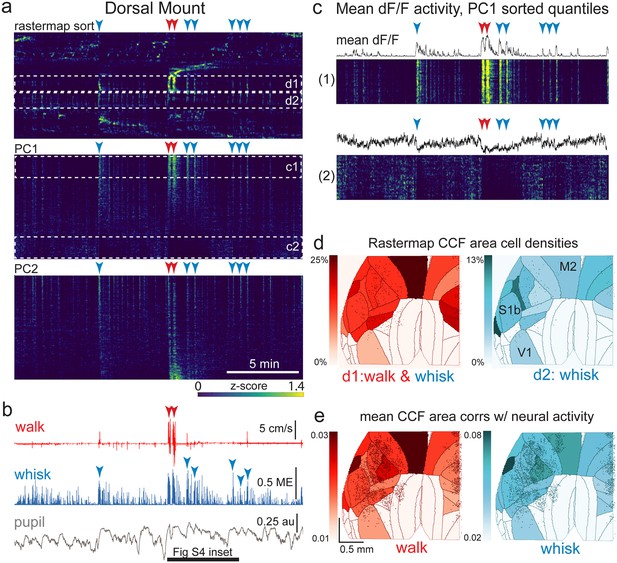

The relationship between spontaneous behavioral measures and neural activity across the dorsal cortex is globally heterogeneous.

(a) Rastermap (top), first principal component (PC1; middle), and second principal component (PC2, bottom) sorting of normalized, rasterized, neuropil subtracted dF/F neural activity for 3096 cells from a single 20-min duration example dorsal mount 2p imaging session. Each row in the display corresponds to a ‘superneuron’, or average activity of 50 adjacent neurons in the Rastermap sort (Stringer et al., 2023, bioRxiv). Activity for each superneuron is separately z-scored, then displayed as low activity in blue, intermediate activity in green, and maximum activity level in yellow (standard viridis color look-up table). Red and blue arrowheads show alignment of walking and whisking bouts, respectively, to neural activity. White dashed boxes indicate dual selections highlighted in panels (c) and (d). Scale bar shows color look-up map for each separately z-scored, individually displayed superneuron in the raster displays. (b) Behavioral arousal primitives of walk speed, whisker motion energy, and pupil diameter shown temporally aligned to the rasterized neural activity traces in (a), directly above. Horizontal black bar indicates time of expanded inset in Figure 4—figure supplement 2. (c) Expanded insets of top and bottom fifths of rasterized PC1 sorting from the middle segment of panel (a), with mean dF/F activity traces shown above each. Red and blue arrowheads indicate the same walking and whisking bouts, respectively, as in (a) and (b). (d) Normalized density of neurons in each CCF area belonging to two example Rastermap sorted groups (d1, left, red: MIN = 0%, MAX = 25%; d2, right, blue: MIN = 0%, MAX = 13%), with rasterized activities shown in corresponding labeled white dashed boxes in the top segment of panel (a). The neurons present in selected Rastermap groups are shown as Suite2p footprint outlines. 
The type of behavioral arousal primitive activity typically concurrently active with high neural activity for each Rastermap group is indicated below each Rastermap group’s CCF density map (i.e. walk and whisk for group d1, left (red), and whisk for group d2, right (blue)). (e) Normalized mean correlations of neural activity and walk speed (left, red; mean: MIN = 0.01, MAX = 0.03) and whisker motion energy (right, blue; mean: MIN = 0.02, MAX = 0.08) per CCF area (i.e. average correlation of all neurons in each area) are shown for this example session. Mean walk speed correlations with neural activity (dF/F) were significantly greater than zero (p<0.001, median t(3095)=3.7, single-sample t-test; python: scipy.stats.ttest_1samp) for 15 of the 19 CCF areas with at least 20 neurons present. The areas with mean correlations not significantly larger than zero were right VISam, right SSp_ll, left VIS_rl, and left AUDd. Mean whisker motion energy correlations with neural activity (dF/F) were significantly more than zero (p<0.001, median t(3095)=4.4, single-sample t-test) for 16 of the 19 CCF areas with at least 20 neurons present. The areas with mean correlations not significantly larger than zero were right VISam, left VISam, and left VISrl. PC = principal component, ME = motion energy, au = arbitrary units, M2=secondary motor cortex, S1b = primary somatosensory barrel cortex, V1 = primary visual cortex.
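
The ‘superneuron’ display in (a) can be sketched as follows. This is a minimal illustration and not the authors' pipeline: the sort order is obtained here from PC1 loadings as a stand-in (the Rastermap ordering would be substituted where available), the toy data are random, and dropping an incomplete trailing bin is an assumption. Function names are hypothetical.

```python
import numpy as np

def pc1_sort_order(dff):
    """Order neurons by their loading on the first principal component.

    dff: (n_neurons, n_timepoints) array of dF/F traces.
    """
    centered = dff - dff.mean(axis=1, keepdims=True)
    U, S, _ = np.linalg.svd(centered, full_matrices=False)
    loadings = U[:, 0] * S[0]
    return np.argsort(loadings)

def superneurons(dff, sort_order, bin_size=50):
    """Average bin_size adjacent neurons in the sort, then z-score each row."""
    sorted_dff = dff[sort_order]
    n_bins = sorted_dff.shape[0] // bin_size        # assumption: drop the remainder
    trimmed = sorted_dff[: n_bins * bin_size]
    sn = trimmed.reshape(n_bins, bin_size, -1).mean(axis=1)
    mu = sn.mean(axis=1, keepdims=True)
    sd = sn.std(axis=1, keepdims=True)
    sd[sd == 0] = 1.0                               # guard against flat traces
    return (sn - mu) / sd

# Toy data: 500 neurons, 300 time points
rng = np.random.default_rng(0)
toy_dff = rng.standard_normal((500, 300))
order = pc1_sort_order(toy_dff)
sn_z = superneurons(toy_dff, order, bin_size=50)    # one row per superneuron
```

Because each superneuron is z-scored separately, the color scale in the raster reflects each row's own dynamic range rather than absolute dF/F amplitude.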

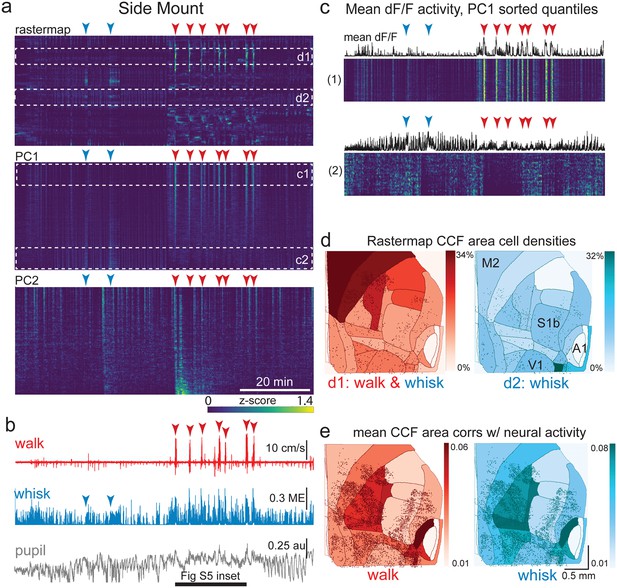

The relationship between spontaneous behavioral measures and neural activity across the lateral cortex is regionally patterned.

(a) Rastermap (top), first principal component (PC1; middle), and second principal component (PC2, bottom) sortings of normalized, rasterized, neuropil subtracted dF/F neural activity for 5678 cells from a single 90 min duration example side mount 2p imaging session. Each row in the display corresponds to a ‘superneuron’, or average of 50 adjacent neurons in the Rastermap sort (Stringer et al., 2023, bioRxiv). Low activity (z-scored) in blue, intermediate activity in green, maximum activity level in yellow. Red and blue arrowheads show alignment of walking and whisking bouts, respectively, to neural activity. White dashed boxes indicate selections highlighted in panels (c) and (d). Scale bar shows color look-up map for each separately z-scored, individually displayed superneuron in the raster displays. (b) Behavioral arousal primitives of walk speed, whisker motion energy, and pupil diameter shown temporally aligned to the rasterized neural activity traces in (a), directly above. (c) Expanded insets of top and bottom fifths of rasterized PC1 sorting from the middle segment of panel (a), with mean activity traces shown above each. Red and blue arrowheads indicate the same walking and whisking bouts, respectively, as in (a) and (b). Horizontal black bar indicates time of expanded inset shown in Figure 5—figure supplement 1. (d) Normalized density of neurons in each CCF area belonging to two example Rastermap sorted groups (d1, left, red: MIN = 0%, MAX = 34%; d2, right, blue: MIN = 0%, MAX = 32%), with rasterized activities shown in corresponding labeled white dashed boxes in the top segment of panel (a). Only cells in selected Rastermap groups are shown. The type of behavioral arousal primitive (i.e. walk and whisk, left, in red; whisk, right, in blue) that was typically concurrently active with high neural activity is indicated below each Rastermap group’s CCF density map. 
(e) Normalized mean correlations of neural activity and walk speed (left, red; mean: MIN = 0.01, MAX = 0.06; standard deviation: MIN = 0.000, MAX = 0.029) and whisker motion energy (right, blue; mean: MIN = 0.01, MAX = 0.08; standard deviation: MIN = 0.000, MAX = 0.043) per CCF area for this example session. Mean walk speed correlations with neural activity (dF/F) were significantly more than zero (p<0.001, median t(5677)=4.4, single-sample t-test; python: scipy.stats.ttest_1samp) for 20 of the 24 CCF areas with at least 20 neurons present. The areas with mean correlations not significantly larger than zero were left VISp, right SSpn, right AUDpo, and right TEa. Mean whisker motion energy correlations with neural activity (dF/F) were significantly more than zero (p<0.001, median t(5677)=7.0, single-sample t-test) for 21 of the 24 CCF areas with at least 20 neurons present. The areas with mean correlations not significantly larger than zero were right SSpn, right AUDpo, and right TEa. PC = principal component, ME = motion energy, au = arbitrary units, M2 = secondary motor cortex, S1b = primary somatosensory barrel cortex, V1 = primary visual cortex, A1 = primary auditory cortex.
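
The per-area statistics in (e) can be sketched as below, under stated assumptions: Pearson correlation between each neuron's dF/F trace and one behavioral trace, grouping by CCF area, exclusion of areas with fewer than 20 neurons, and a one-sample t statistic against zero. The t statistic is computed by hand with NumPy; `scipy.stats.ttest_1samp(r_area, 0.0)`, which the caption names, returns the same statistic together with a p-value. Function names and the toy data are hypothetical.

```python
import numpy as np

def corr_with_behavior(dff, behavior):
    """Pearson r between each neuron's trace and a single behavioral trace."""
    x = dff - dff.mean(axis=1, keepdims=True)
    y = behavior - behavior.mean()
    return (x @ y) / (np.linalg.norm(x, axis=1) * np.linalg.norm(y))

def area_summary(r, area_ids, min_neurons=20):
    """Mean r and one-sample t statistic (H0: mean r = 0) for each area."""
    summary = {}
    for area in np.unique(area_ids):
        r_area = r[area_ids == area]
        n = r_area.size
        if n < min_neurons:
            continue                                 # too few neurons to report
        t_stat = r_area.mean() / (r_area.std(ddof=1) / np.sqrt(n))
        summary[int(area)] = {"n": int(n), "mean_r": float(r_area.mean()),
                              "t": float(t_stat), "df": int(n - 1)}
    return summary

# Toy data: 60 neurons in three hypothetical areas, 500 time points
rng = np.random.default_rng(0)
walk_speed = rng.standard_normal(500)
toy_dff = rng.standard_normal((60, 500))
toy_dff[:30] += 0.5 * walk_speed                     # area 1 follows walking
toy_area_ids = np.array([1] * 30 + [2] * 25 + [3] * 5)

r = corr_with_behavior(toy_dff, walk_speed)
summary = area_summary(r, toy_area_ids)              # area 3 is excluded (n < 20)
```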

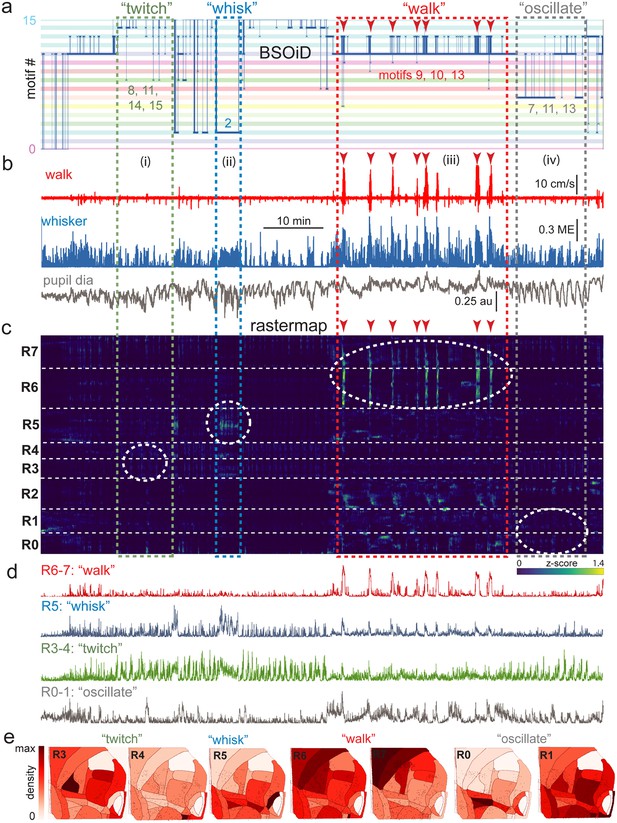

Alignment of activity sorted neural ensembles and spontaneous behavioral motifs reveals sparse, distributed encoding of arousal measures across dorsolateral cortex in the side mount preparation.

(a) Manual identification of high-level, qualitative behaviors (twitch, green dashed box; whisk, blue dashed box; walk, red dashed box; pupil oscillate, gray dashed box), aligned to sets of raw, unfiltered behavioral motifs (B-SOiD) extracted from pose-estimates (DeepLabCut) for a single example session. Numbered B-SOiD motifs (trained on a set of four sessions from the same mouse, motif # indicated by the position of the thick blue line relative to the y-axis at each time point) are color-coded (horizontal bars) across the entire 90-min session. (b) High-level behaviors (dashed colored boxes, i–iv) and B-SOiD motifs from (a) are vertically temporally aligned to the behavioral primitive movement and arousal measures of walk speed, whisker motion energy, and pupil diameter. Expanded alignments of high-level behaviors ii (whisk) and iii (walk) are shown in Figure 6—figure supplement 1a, b. Red arrowheads indicate temporal alignment positions for multiple walk bouts across B-SOiD motifs (a), behavioral arousal primitives (b), and Rastermap sorted neural data (c). (c) Rastermap sorted, z-scored, rasterized, neuropil subtracted dF/F neural activity (10th percentile baseline, rolling 30 s window) from the same side mount session, temporally aligned to the behavioral data directly above. Numbers at left (R0–R7) indicate Rastermap motif numbers, selected manually ‘by-eye’ for this session, and the horizontal dashed white lines on the Rastermap-sorted neural data indicate the separation between neighboring Rastermap motifs. Each row in the display corresponds to a ‘superneuron’, or average of 50 adjacent neurons in the Rastermap sort (Stringer et al., 2023, bioRxiv). Colored, dashed boxes indicate alignment of high-level behaviors with neural activity epochs from the same example session. White dashed ovals indicate areas of Rastermap group-aligned active neural ensembles during periods of defined high-level behaviors. The 2p sampling rate for this session was 3.38 Hz. 
(d) Normalized mean activity traces for all neurons in each neural ensemble indicated in (c) (white dashed ovals). Each trace also corresponds and is aligned to the indicated Rastermap groups. (e) Color-coded neuron densities per CCF area corresponding to Rastermap groups shown in (c), shown grouped by qualitative high-level behaviors, as indicated in (a, b). Cross-indexing with neurobehavioral alignments (white dashed ovals) in (c) allows for visualization of spatial distribution of neurons active during identified high-level behaviors (a) consisting of defined patterns of behavioral primitive movement and arousal measures (b). Corresponding maximum cell density percentages in each CCF area (white to red scale bar, top right) for each normalized Rastermap (R0–7, excluding R2) heatmap color lookup table are 11.4, 31.8, 22.9, 24.1, 38.4, 33.2, and 24.0%, respectively (left to right: R3, R4, R5, R6, R7, R0, and R1). This list of cell density percentages, therefore, corresponds to that of the CCF area filled with the darkest shade of red in each Rastermap group. White CCF areas in each Rastermap group are areas where no cells of that group were found in this example session, or where the total number of cells was less than 20 and therefore the density estimate was deemed unreliable and not reported. ME = motion energy, au = arbitrary units, CCF = common coordinate framework.
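
The dF/F normalization mentioned in (c) (10th percentile baseline, rolling 30 s window) can be sketched as follows. This is a minimal illustration under assumptions the caption does not specify: the window is centered on each sample, the trace edges are padded by repeating the first and last values, and the input is already neuropil subtracted. The function name and toy trace are hypothetical.

```python
import numpy as np

def rolling_percentile_dff(f, fs, window_s=30.0, percentile=10):
    """dF/F using a rolling-percentile baseline F0.

    f: 1D fluorescence trace; fs: sampling rate in Hz.
    """
    f = np.asarray(f, dtype=float)
    half = int(round(window_s * fs / 2))             # half-window in samples
    padded = np.pad(f, half, mode="edge")            # assumption: edge padding
    windows = np.lib.stride_tricks.sliding_window_view(padded, 2 * half + 1)
    f0 = np.percentile(windows, percentile, axis=1)  # one baseline value per sample
    return (f - f0) / f0

# Toy trace at the session's 2p sampling rate: flat baseline plus one transient
fs = 3.38
trace = np.full(1000, 100.0)
trace[500:510] += 50.0
dff = rolling_percentile_dff(trace, fs)
```

A low percentile is used so that the baseline tracks slow drift in fluorescence without being pulled upward by the transients themselves.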

We present here detailed methods, along with example recording sessions during spontaneous behavior from both our dorsal and side mount preparations, to demonstrate the feasibility of widespread 2p cortical neuronal imaging simultaneously with behavioral monitoring. For a graphical schematic overview of our methods, please see the following supplementary material: https://github.com/vickerse1/mesoscope_spontaneous/blob/main/pancortical_workflow_diagrams.pdf; Vickers, 2024b.

Results

Recent studies employing large-scale imaging (e.g. widefield 1p and mesoscale 2p) and/or electrophysiology (e.g. Neuropixels) recording technologies have suggested that a significant percentage of the variance of neural activity across neocortex can be accounted for by rapid spontaneous fluctuations in arousal and self-directed movement during both spontaneous behavior and task performance (Musall et al., 2019; Steinmetz et al., 2019; Stringer et al., 2019; Jacobs et al., 2020; Salkoff et al., 2020; Stringer et al., 2023, bioRxiv). Given that arousal state- and movement-related activity appears to be a ubiquitous feature of cortical activity across all regions, it would be advantageous to develop methodologies that allow for simultaneous, single neuron resolution, contiguous monitoring of neuronal activity during second-to-second movements and changes in arousal, during both spontaneous and trained behaviors. Here, we harness new imaging and analysis technologies in order to both address this methodological gap and provide a proof of principle test of these methods by examining the relationship of behavioral arousal and movement to detailed spatial patterns of neural activity across the dorsal and lateral neocortex.

The overall workflow for our mouse preparations, data acquisition, and data analysis can be found in the following supplementary document: https://github.com/vickerse1/mesoscope_spontaneous/blob/main/pancortical_workflow_diagrams.pdf; Vickers, 2024b. The remainder of our supplementary materials are also hosted on the main /mesoscope_spontaneous folder of our GitHub repository, including documented analysis code, design and 3D-component printable files, grayscale versions of all figures, and supplementary figures and movies. Related data for example recording sessions shown in main and supplementary figures are publicly available on FigShare+ at: https://doi.org/10.25452/figshare.plus.c.7052513.

Large field of view 2-photon imaging in behaving mice

In order to simultaneously monitor the activity of cortical neurons across a large area (~25 mm2, or up to 36 mm2 in some cases) of either the bilateral dorsal cortex (dorsal mount), or of both dorsal and lateral cortex across the right hemisphere (side mount) in awake, behaving mice, we chose to utilize 2p imaging of GCaMP6s fluorescence using an existing and commercially available 2p random access mesoscope (Thorlabs; 2p-RAM; Sofroniew et al., 2016). We chose this form of data collection for use in head-fixed mice because it allows for: (1) rapid scanning (i.e. it uses a resonant scanner coupled to a virtually conjugated galvo pair) over a large (5x5 mm fully corrected, or up to 6x6 mm imageable), field-curvature corrected field of view (FOV); (2) subcellular resolution in the z-axis to avoid region-of-interest (ROI) contamination by neuropil and neighboring cells (0.61 x 0.61 x 4.25 µm xyz point-spread function at 970 nm laser excitation wavelength); (3) correction for aberrations at all wavelengths between 900–1070 nm, to allow for combined imaging of multiple fluorophores. We achieved these three objectives in head-fixed mice that were able to walk freely on a running wheel (See Figure 1a, b, Video 1, and Video 6), to clearly view visual monitors on both sides, and to lick either of two water spouts (left, right) for 2-alternative forced choice responses, while we performed left, right, and rear videography to monitor each mouse’s face, pupil, and body.

The neuronal imaging methodology we chose (see below) is not the only one available. For example, another promising and recently developed alternative implementation, Diesel 2p, allows for similarly flexible and large FOV imaging at single-cell resolution, but with a significantly larger z-axis point-spread function (~8–10 µm; Yu et al., 2021). Despite this drawback, its use of dual scan engines allows for true simultaneous imaging of different cortical areas, while the 2p RAM mesoscope (Thorlabs) accomplishes this by rapid jumping (~1 ms) between FOVs. Both systems offer excellent corrected field curvatures on the order of +/-25 µm over ~5 mm.

Several primary design constraints needed to be met to achieve our goal of monitoring neuronal activity over a large portion of the mouse lateral and/or dorsal cortices. These ranged from aspects of the surgical preparation, mounting, and imaging, to neural recording (Figure 1—figure supplement 1, Videos 1 and 2, Protocols II-III). To increase the extent of the neocortex over which we could monitor neural activity, we developed two distinct headpost/cranial window preparations. In the first, the dorsal mount, the mouse is head-fixed upright on a cylindrical running wheel and the objective is vertical (Figure 1a). Here, both wings of the headpost are attached to support arms, and each support arm is mounted on two vertical 1” diameter posts secured with flexing feet for maximum stability. This preparation allowed for monitoring of neural activity over large regions of both cerebral hemispheres, from the posterior aspects of visual cortex to the anterior aspects of motor cortex, and laterally to the dorsal-most aspects of auditory cortex, depending on the placement of the 2p mesoscope objective (Figure 1d).

The second preparation, the side mount, required the head of the mouse to be rotated 22.5° to the left (or right, not shown), with the microscope objective vertical or tilted 1–5 degrees to the right, so as to extend the 5x5 mm imaging FOV to include the auditory cortex and other neighboring ventral/lateral cortical areas (Figure 1b and e). For the side mount, the headpost had only a single, left wing so that the right side of the mouse’s face would not be occluded. The deep lateral/ventral extent of the side mount headpost prevented easy addition of a right headpost wing. Attaching the left wing to a single support arm supported by two 1” diameter vertical posts mounted with flexing feet, as in the dorsal mount preparation, was sufficient to minimize movement artifacts. A key additional difference to note here is that two small screws were used to attach the left wing of the side mount headpost to the support arm, thus giving it additional stability. The dorsal mount preparation used only one screw (the outermost hole) on each of the left and right side headpost wings. Thus, both preparations were secured by a total of two mounting screws, each countersunk into headpost arms. An important design feature is that the headpost wings fit into recessed rectangular slots machined into the support arms, facilitating stability.

We observed that mice adapt well to head tilt in the side mount preparation, and will readily whisk, walk or run, and learn to perform lick response tasks in this configuration. Keeping the microscope objective in a vertical or near-vertical orientation, and rotating the mouse’s head (instead of rotating the objective), significantly improved the manageability of the water meniscus between the objective and the cranial window. However, if needed, the objective of the Thorlabs mesoscope may be rotated laterally up to +/- 20° for direct access to more ventral cortical areas, for example if one wants to use a smaller, flat cortical window that requires the objective to be positioned orthogonally to the target region. In general, setups such as Sutter’s MOM system and Thorlabs’ Bergamo microscope, which offer even greater degrees of microscope objective rotation, would allow enhanced versatility with our preparations.

Preliminary comparisons across mice indicated that side and dorsal mount mice showed a similar degree of behavioral variability both within and across sessions. An example of the relative ease with which mice adapted to the ~22.5 degree neck rotation can be seen in Video 2 and Video 10. It was in general important to make sure that the distance between the wheel and all four limbs was similar when using either preparation. In particular, careful attention must be paid to the positioning of the front limbs in the side mount mice so that they are not too high off the wheel. This can be accomplished by a slight forward angling of the left support arm. With dorsal mount mice, it was important to not place them too close to the wheel, in which case they would exert pressure on the headpost and support arms, leading to increased movement artifacts, or too far above the wheel, which can significantly reduce walking bout frequency.

Although it was in principle possible to image the side mount preparation in the same optical configuration without rotating the mouse (by rotating the objective to 20 degrees to the right), we found that the last 2–3 degrees of unavailable, yet necessary, rotation (our preparation is rotated 22.5 degrees left, which is more than the full available 20 degrees rotation of the objective), along with several other factors, made this undesirable. First, it was difficult or impractical to attach the horizontal light shield and to establish a water meniscus with the objective fully rotated. One could use gel instead of water (although, in our hands, gel was optically inferior), but without the horizontal light shield, light from the UV and IR LEDs can reach the photomultiplier tubes (PMTs) via the objective and contaminate the image or cause tripping of the PMT. Second, imaging the right pupil and face of the mouse was difficult under these conditions because the camera would need the same optical access angle as the objective, or would need to be moved down toward the air table and rotated up 20 degrees, in which case its view would be blocked by the running wheel and other objects mounted on the air table. A system of mirrors for imaging the animal could be used to overcome this problem, but would further complicate the current setup.

Cortical imaging in the novel side mount preparation was restricted to one hemisphere and a thin medial strip (~1 mm wide) of the contralateral hemisphere. This allowed for imaging from roughly the anterio-medial areas of V1 to medial and medio-lateral secondary regions of motor cortex (or slightly farther, if the anterior-posterior axis of the brain is oriented along the diagonal of the 5x5 mm FOV, or if one images to the full allowable but partially uncorrected 6x6 mm extent with ScanImage). This anterior-posterior reach was similar to that observed in the dorsal mount preparation. However, the side mount windowing and the accompanying mounting procedure significantly increased our ability to image neural activity in lateral (ventral) cortical aspects, including auditory, somatosensory, and association cortical regions (Figure 1e), notably without the need for substantial rotation of the objective.

To achieve 2p neuronal imaging from broad cortical regions, we created large and stable, custom (designed in AutoDesk Inventor) 3D-printed titanium (laser sintered powder with active cooling and shot-peened post-processing) headposts (Figure 1d, e and Figure 1—figure supplement 1e, f; Suppl Design Files; i.materialise.com and sculpteo.com) and glass (0.21 mm thick, Schott D263T) cranial windows (Figure 1, Figure 1—figure supplement 1a, c, d, e, and f ; Videos 1 and 2; Suppl Design files: https://github.com/vickerse1/mesoscope_spontaneous (copy archived at Vickers, 2024a); Labmaker.org for dorsal mount; TLC International for cutting, and GlasWerk for bending, for the side mount and early prototypes of the dorsal mount). We incorporated these custom made headposts and cranial windows into the dorsal mount (Figure 1a and d, Figure 1—figure supplement 1c upper, d upper left, e), and side mount (Figure 1b, e, Figure 1—figure supplement 1a, c, lower, d, lower left and right, and f, upper right) preparations. The dorsal mount preparation allowed for imaging over roughly the same extent of bilateral dorsal cortex as in Kim et al., 2016, although we were able to image up to ~25 mm2 at a time, compared to the more typical maximum 2p FOV of ~1 mm2. In addition, the area of bilateral cortex imageable with this preparation is comparable to that of many recent studies employing widefield 1-photon imaging, such as Musall et al., 2019. The side mount preparation allowed for imaging of an extent of right hemisphere comparable to that of Esmaeili et al., 2021, although they used through-the-skull widefield 1-photon imaging, while our cranial window preparation allows for either widefield 1-photon imaging, or single neuron resolution 2p imaging, in the same mouse.

To mimic the curvature of the brain and reduce tissue compression, which is a problem with flat coverslips with a diameter larger than ~3 mm, cranial windows were curved by heating pre-cut (TLC International) glass pieces over 9 or 10 mm bend-radius molds (GlasWerk). The bend radius (i.e. radius of half cylinder mold over which the melted glass is bent to achieve its curved shape) was fixed at 10 mm for Labmaker.org dorsal mount windows (Figure 1—figure supplement 1e, right; Video 1; early attempts with 11 or 12 mm bend radii failed for all but the largest adult male mice). For windows custom designed in collaboration with GlasWerk (Figure 1—figure supplement 1f, right; Video 2), the bend radius was set at either 9 mm, for a tight fit to ventral auditory areas, or at 10 mm, to enable simultaneous imaging of the entire preparation at a single focal depth. In our experience, successful cranial windows last between 100 and 150 days, or in some rare cases up to 300 days: https://github.com/vickerse1/mesoscope_spontaneous/blob/main/window_preparation_stability.pdf; Vickers, 2024b. The keys to this stability were even pressure across the surface of the window, complete enclosure of the glass-skull interface with flow-it, causing minimal damage to the dura during the craniotomy, and constant attention to the minimization of infection either across the surface of the skull, between the headpost and window surgeries, or around the external perimeter of the headpost, after the window surgery (See Protocol III and Figure 1—figure supplement 1).

The success rate for window implantation, for both dorsal mount and side mount preparations, was ~65% (Figure 1—figure supplement 1g; see also Protocol III). However, with extensive practice this can be raised to 75–80%, especially if care is taken to both maximize the area of the craniotomy and to leave the dental cement attaching the headpost to the skull undamaged during drilling, so that the perimeter of the window fits fully inside the craniotomy, the window ‘floats’ or sits freely directly on the surface of the brain, and the headpost remains firmly attached to the skull. This was accomplished in later iterations of our designs and methods by making the side mount window slightly smaller at the anterior edge of M2, and by using an adjustable support arm (Figure 1—figure supplement 1b, lower) mounted on a small breadboard attached underneath the base of the stereotax in order to fix the mouse in its 22.5 degree left-rotated position during the window surgery so that it would not move or vibrate during drilling of the craniotomy. This also acted to minimize ‘break-throughs’ of the drill bit tip through the skull and into the brain.

The main causes of death during cranial window surgery were exsanguination during the surgery following a ruptured major blood vessel on the surface of the brain (usually either sagittal or lateral), damage to the dura caused by the skull during the removal step of the craniotomy or by the sharp tip of a tool used to remove the skull, failure to properly pressurize the window across the entire cortical surface, or failure to completely seal the window to the skull fragment around its entire perimeter with flow-it (here, rapid UV light application during flow-it application can help). In some cases where the mouse survived the window surgery it was still considered a failure if a bleed re-erupted and occluded at least 30% of the window, or if glue occluded at least 30% of the window either above or below the surface of the window. In general, most bleeds were cleared by cerebrospinal fluid, without intervention, within a week if there was no direct clotting on the surface of the brain.

Changes in vasculature following cranial window surgery were usually minimal but could involve the following: (i) sometimes a vessel is displaced or moved during the window surgery, (ii) sometimes a vessel, in particular the sagittal sinus, will enlarge or increase its apparent diameter over time if it is not properly pressured by the cranial window, and (iii) sometimes an area experiencing window pressure that is too low will, over time, show outgrowth of fine vascular endings. The most common of these was (i), and the least common was perhaps (iii). In general the vasculature is quite stable, and window preparations that we observed to be of high quality at ~1 week post-surgery showed minimal changes over the first ~150 days (see supplementary materials: https://github.com/vickerse1/mesoscope_spontaneous/blob/main/window_preparation_stability.pdf; Vickers, 2024b).

Within a field of view of 5x5 mm, curvature of the brain, especially in the mediolateral direction, is a significant problem. To partially compensate for brain curvature, online field-curvature correction (FCC) was applied in ScanImage (Vidrio Technologies, MBF Biosciences) during mesoscope image acquisition. In addition, complementary fast-z ‘sawtooth’ or ‘step’ corrections were applied when all ROIs were acquired at the same or different z-planes, respectively (see Sofroniew et al., 2016). Despite this, small uncorrected discrepancies in the depth of the imaging plane below the pial surface may have remained, especially along the long-axis of acquisition ROIs in the side mount preparation (i.e. mediolateral). It is likely that optical effects of the curvature of the glass partially compensated for these differences in targeted imaging depth (i.e. by refraction to normalize approach of the laser to the pial surface), although we did not quantify this effect. In cases where we imaged multiple small ROIs, nominal imaging depth was adjusted in an attempt to maintain a constant relative cortical layer depth (i.e. depth below the pial surface).

We estimate that we experienced ~200 μm of depth offset across 2.5 mm. If the objective is orthogonal to our 10 mm bend window and centered at the apex of its convexity, a small ROI located at the lateral edge of the side mount preparation would need to be positioned around 200 μm below that of an equivalent ROI placed near the apex, and would be at close to the same depth as an ROI placed at or near the midline, at the medial edge of the window. We determined this by examining the geometry of our cranial windows in CAD drawings (available at https://github.com/vickerse1/mesoscope_spontaneous/tree/main/cranial_windows), and by comparing z-depth information from adjacent sessions in the same mouse, the first of which used a large FOV and the second of which used multiple small FOVs optimized so that they sampled from the same cortical layers across areas.
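The purely geometric part of this estimate can be illustrated with a minimal sketch. It assumes an idealized cylindrical window of fixed bend radius with the objective centered on and orthogonal to its apex, and it ignores refraction through the glass and the true shape of the brain. The function name `window_sag_um` is hypothetical and is not part of our analysis code.

```python
import math


def window_sag_um(lateral_offset_mm: float, bend_radius_mm: float = 10.0) -> float:
    """Drop (in µm) of an idealized cylindrical window surface below its apex.

    Uses the sagitta of a circle: s = R - sqrt(R^2 - x^2), where R is the
    bend radius and x is the lateral distance from the apex.
    """
    if abs(lateral_offset_mm) > bend_radius_mm:
        raise ValueError("lateral offset cannot exceed the bend radius")
    sag_mm = bend_radius_mm - math.sqrt(bend_radius_mm**2 - lateral_offset_mm**2)
    return sag_mm * 1000.0


# Offsets chosen for illustration only; 2.5 mm is half of the 5 mm corrected FOV.
for offset_mm in (1.0, 2.0, 2.5):
    print(f"{offset_mm:.1f} mm from apex: {window_sag_um(offset_mm):.0f} µm")
```

Under these assumptions the idealized geometry yields offsets in the low hundreds of micrometers over 2 to 2.5 mm, the same order of magnitude as the ~200 μm estimated from the CAD drawings and z-depth comparisons.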

The mouse was restrained by fixation of the headpost with two countersunk screws to either a fixed (Figure 1—figure supplement 1b, top) or adjustable aluminum support arm (custom; Figure 1a, b, Figure 1—figure supplement 1b, bottom) and mounting apparatus (Figure 1a and b). For the dorsal mount preparation, two headpost support arms, one on each side of the head, were used (Figure 1a, Figure 1—figure supplement 1c, top; outer screw of both headpost wings used) while for the side mount preparation, a single, left side support arm was sufficient (Figure 1—figure supplement 1, bottom; inner and outer screws in the left headpost wing were both used). It was important to fix the headpost mounting arms to the top of the airtable with 1-inch diameter vertical mounting posts (Thorlabs RS6P8, RSH4, PF175), making sure all joints were clean of debris and tightened securely, to reduce movement artifacts.

To further minimize movement artifacts, it was also important to mount the mouse at a distance from the surface of the running wheel that was not too low, which would allow the mouse to push up with its legs, or too high, so that part of the mouse’s body weight would be supported by the headpost. A final consideration to minimize movement artifacts was to ensure that proper, even pressure was maintained across the entire interface between the brain and the cranial window during window implantation, both by making the craniotomy large enough so that the entire window could be set freely on the brain surface, and by using the 3D-printed window stabilizer properly during the application of flow-it glue to attach the edge of the window to the skull (see Protocols II, III).

Both preparations were found to be relatively free of vibration and movement artifacts and allowed for micro-adjustments (i.e. pitch, yaw, and roll) of the mouse relative to both the objective and the running wheel (Figure 1a, b and c). Increased positioning flexibility of the support-arm assembly was achieved through either of two ways: (1) by positioning it on a distal base-attached ball-joint mount (Thorlabs, SL20 articulating base; not shown); (2) through the use of a custom adjustable headpost support arm that was proximally adjusted around a ball-and-joint assembly (Figure 1—figure supplement 1b, bottom). This adjustable support arm operated through the use of a custom wrench and reverse-threaded (left-handed) nut for initial coarse tightening, and a micro-clamp for rotational stabilization during tightening. Four micro-hex wrench driven set-screws, two on either side of the ball-and-joint fitting, were used to achieve the final, fully locked state for imaging. The adjustable support arm was found to be superior to the base-attached ball joint mount in allowing for iterative micro-positioning of the animal in relation to the running wheel, objective, and lick spouts after initial fixation (mounting) of the headstage to the support bar.

In order to perform 2p neuronal imaging, the large 2p-RAM water-immersion objective (~10x net magnification, 0.6 NA, ~1.3 kg, 12 mm diameter tip, 25.6 mm shaft) must be positioned between 2.2 and 2.8 mm (i.e. ~working distance minus window thickness) from the curved glass surface of the cranial window while maintaining a stable water meniscus. Providing a base for the water meniscus while also blocking incident light entry was achieved by creating a custom 3D printed plastic light shield (AutoDesk Inventor, MakerGear M3 printer, PLA) that was attached to the protruding, fitted rim of the headpost with 170 FAST CURE Sylgard (‘wok one’; Figure 1c and Figure 1—figure supplement 1c, d). A second light shield (‘wok two’) served to further block extraneous light entry into the objective and was attached to the base of the lower light shield (‘wok one’) by fitting of a U-shaped profile over a vertically protruding single edge profile along the perimeter of the first, lower light shield (‘wok one’; Figure 1c).

Together, these two custom-printed light shields prevented incident light (from the video stimulus monitor, the ultraviolet LEDs used for controlling the baseline and dynamic range of pupil diameter, and the infrared LEDs used to illuminate the mouse) from entering the imaging objective and thereby either contaminating the image or tripping the photomultiplier tubes (PMTs). Line-of-sight for the animal to the video stimulus monitor was retained, and this design allowed for free vertical and rotational (over a limited range) movement of the objective lens, which was contacted directly only by the water meniscus.

Direct left, right, and posterior camera angles, or ‘lines of sight’, were preserved to enable reliable recording and proper illumination of pupil diameter, whisker pad movement, and the movement of other body parts such as the nose, mouth, ear, paws, and tail (Figure 1a and b; see Videos 1 and 2). Placement of the rotated mouse in the side mount preparation near the left side of the running wheel allowed for the animal’s left and right fields of view to not be significantly obstructed. Adjustable positioning of two vertically and laterally offset conductance-based lick spouts for 2-alternative forced choice (2-AFC) task performance (lick left, lick right) and reward delivery was achieved with a rapidly translatable motorized linear stage (Figure 1c; 63.7 ms total travel time over 7 mm; Zaber Technologies). This system allowed for rapid withdrawal of lick spouts between trials, and presentation of the lick spouts during the response period of each trial.

Pseudo-widefield imaging and optogenetic stimulation hardware modifications of the Thorlabs 2-photon mesoscope

Examining neuronal activity at the single cell level and relating it to stimulus-evoked activity observed at the widefield level (Figures 2 and 3), as well as manipulating regions of the cortex through localized light delivery and optogenetics (see Video 11), required precise optical alignment across all three of these methods. First, a standardized cortical map needed to be fitted onto images of skull landmarks and the cortical vasculature by widefield multimodal sensory mapping (MMM; Figures 2 and 3a, b, and c, top). Then, a method was needed for transferring this cortical map onto the field of view of the mesoscope to both allow for online FOV targeting and for post-hoc assignment of each imaged neuron to a designated cortical area on the Allen common-coordinate framework (CCF v3.0) map for subsequent analyses (Figures 2 and 3c, bottom, d). Finally, the spatial coordinate system of the 1p opto-stimulation laser routed in through an auxiliary light-path of the mesoscope needed to be aligned to that of the 2p laser so that specific cortical subregions could be targeted for optogenetic inhibition by light activated, ChR2-mediated excitation of parvalbumin interneurons in Thy1-RGECO x PV-Cre x Ai32 mice (Video 11).

In order to align 2p maps of single neuron activity with functional 1p cortical maps determined through pseudo-widefield imaging (i.e. combined reflected and fluorescence light imaging with a standard ‘non-widefield’ format CCD camera), we designed a method for reliably aligning a standardized cortical map (i.e. the Allen CCF v3.0; we used either a 0 degree, https://github.com/vickerse1/mesoscope_spontaneous/blob/main/python_code/CCF_map_rotation/0deg/CCF_MMM.png; Vickers, 2024b, or 22.5 degree, https://github.com/vickerse1/mesoscope_spontaneous/blob/main/python_code/CCF_map_rotation/22deg/CCF_MMM.png; Vickers, 2024b, rotated CCF map outline created with the following code: https://github.com/vickerse1/mesoscope_spontaneous/blob/main/python_code/CCF_map_rotation/Rotate_CCF.py; Vickers, 2024b), to an image of the cortical vasculature pattern, which can be directly visualized with both widefield and 2p imaging techniques for each brain (see Protocol V). The epifluorescence light source (Excelitas) for the pseudo-widefield imaging, which was parfocal with the 2p imaging coordinate system, was controllable by a dial, shutter, and remote foot-pedal for optimal ease of positioning the objective in the water meniscus at a distance of ~2.2 mm from the headpost and cranial window without crashing into the preparation (Figure 1c and Figure 1—figure supplement 1d, top and bottom right). This allowed us to ‘drive’ the position of the 2p mesoscope FOV to the desired Allen CCF cortical area by moving the objective to a location where the observed vasculature pattern matched that of a processed image we created ahead of time on our 1-photon widefield imaging rig that combined vasculature, sensory responses, and skull landmarks from each mouse.
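The idea behind a rotated map outline can be illustrated with a minimal sketch. This is not a reproduction of Rotate_CCF.py: the axis convention (mediolateral, anterior-posterior, dorsoventral), the sign of the rotation, the orthographic projection, and the example vertices are all assumptions made for illustration.

```python
import math
from typing import List, Tuple

# (ml, ap, dv) in mm; this axis convention is an assumption for the sketch.
Point3D = Tuple[float, float, float]


def project_rotated(points: List[Point3D], angle_deg: float) -> List[Tuple[float, float]]:
    """Rotate surface points about the anterior-posterior axis, then project
    them orthographically along the (vertical) optical axis."""
    theta = math.radians(angle_deg)
    cos_t, sin_t = math.cos(theta), math.sin(theta)
    projected = []
    for ml, ap, dv in points:
        # Mediolateral coordinate as seen from above after the head rotation.
        ml_rot = ml * cos_t - dv * sin_t
        # A rotation about the ap axis leaves the ap coordinate unchanged.
        projected.append((ml_rot, ap))
    return projected


# Hypothetical outline vertices on a curved cortical surface (not real CCF data).
outline = [(0.0, 0.0, 0.0), (2.0, 0.0, 0.5), (4.0, 1.0, 2.0)]
dorsal_view = project_rotated(outline, 0.0)   # corresponds to the 0 degree map
side_view = project_rotated(outline, 22.5)    # corresponds to the 22.5 degree map
```

In this simplified picture, the 0 degree outline is the ordinary top-down projection, while the 22.5 degree outline changes the apparent mediolateral spacing of lateral areas as seen through a vertical objective.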

This technique required several modifications of the auxiliary light-paths of the Thorlabs mesoscope, and would also likely involve similar modifications in other comparable microscope setups, such as Diesel 2p (Yu et al., 2021). For switchable blue/green widefield imaging and 2p imaging in our original configuration (see (a) in https://github.com/vickerse1/mesoscope_spontaneous/blob/main/mesoscope_optical_path_opto_switching.pdf; Vickers, 2024b), we used a 469/35 Semrock excitation filter, 466/40 Semrock dichroic (1st, top cube), and mirror (2nd, bottom cube). For the combination of pseudo-widefield imaging and rapid, targetable optogenetic 1-photon (1p) stimulation with concurrent 2p imaging (see (b) in https://github.com/vickerse1/mesoscope_spontaneous/blob/main/mesoscope_optical_path_opto_switching.pdf), which we used with PV-Cre x Ai32 x Thy1-RGECO mice (Video 11), we established dual coupling of the broadband fluorescence light-source (Excelitas) used in the original configuration and a 473 nm laser driver (SF4C 473, Thorlabs), coupled via liquid light guide, to converging, dual input auxiliary light paths of the Thorlabs mesoscope via two in-series, magnetically secured, switchable filter cubes (DFM1T1 cube, Thorlabs; see https://github.com/vickerse1/mesoscope_spontaneous/blob/main/mesoscope_filterCube_schematic_dual_opto_Jan0320.jpg; Vickers, 2024b; the top cube can be switched to allow pseudo-widefield imaging of either GCaMP6s or Thy1-RGECO, and the bottom cube can be switched to allow either pseudo-widefield imaging or optogenetic stimulation).

The 473 nm (blue) laser driver was connected to a single open loop, high speed buffer (50 LD, Thorlabs), and targeted to the coordinate system of the 2p laser with a grid-calibrated, auxiliary galvo-galvo scanner (GVSM002, Thorlabs). This allowed for 2p imaging and blue 1p laser optogenetic stimulation to occur pseudo-simultaneously, because rapid, electronic control of the PMT1 and PMT2 shutters was able to limit interruption of image acquisition to a brief period during presentation of the optogenetic stimulus (~100 ms; see Video 11; see https://github.com/vickerse1/mesoscope_spontaneous/blob/main/mesoscope_optical_path_opto_switching.pdf). Here, we replaced the switchable mirror behind the objective, which blocked 2p imaging during pseudo-widefield imaging in our original configuration, with a dichroic that, when positioned in the light path, allowed both 920 nm (2p excitation) and 473 nm (opto-excitation) light to reach the mouse brain without requiring any additional slow switching of optical components.
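For downstream analysis, frames acquired while the PMT shutters were closed would typically need to be identified and excluded. The following is a minimal sketch of one way to flag them, assuming that frame start times and stimulus onsets are available on a common clock. The function name, the 0.2 s frame period, and the example times are hypothetical; only the ~100 ms interruption comes from the text above.

```python
from typing import List, Sequence


def blanked_frames(
    frame_starts_s: Sequence[float],
    frame_period_s: float,
    stim_onsets_s: Sequence[float],
    blank_s: float = 0.1,
) -> List[bool]:
    """Return True for each frame whose acquisition interval overlaps any
    shutter-closed window [onset, onset + blank_s]."""
    flags = []
    for start in frame_starts_s:
        end = start + frame_period_s
        # Two intervals overlap if each one begins before the other ends.
        overlaps = any(start < onset + blank_s and onset < end for onset in stim_onsets_s)
        flags.append(overlaps)
    return flags


# Illustrative values only: four frames at a 0.2 s period, one stimulus at 0.45 s.
flags = blanked_frames([0.0, 0.2, 0.4, 0.6], 0.2, [0.45])
```

Here only the third frame overlaps the interruption window and would be masked; the others are unaffected.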

Reduction of resonant scanner noise

Resonant scanners in 2p microscopes emit intense sound at the resonant mirror frequency, which is well within the hearing range of mice (~12.5 kHz emitted in the Thorlabs mesoscope). Because scanning precision requires the scanner to remain at a stable, elevated temperature, the scanner must remain on during the entire experimental session (i.e. up to ~2 hr per mouse). The unattenuated or native high-frequency background noise generated by the resonant scanner causes stress to both mice (Sadananda et al., 2008) and experimenters (Fletcher et al., 2018), and likely acts to prevent mice from achieving maximum performance in auditory mapping, spontaneous activity sessions, auditory stimulus detection, and auditory discrimination sessions/tasks.

To reduce this acoustic noise, we encased the resonant scanner and attached light path tubes with a custom 3-dimensional (3D)-printed assembly containing dense interior insulating foam. See Supplementary methods and materials for diagrams: https://github.com/vickerse1/mesoscope_spontaneous/blob/main/resonant_scanner_baffle/closed_cell_honeycomb_baffle_for_noise_reduction_on_resonant_scanner_devices.pdf; Vickers, 2024b, and for a text description: https://github.com/vickerse1/mesoscope_spontaneous/blob/main/resonant_scanner_baffle/closed_cell_honeycomb_baffle_methodology_summary.pdf; Vickers, 2024b; ‘3D scanning and honeycomb patterned nylon print’ (University of Oregon Innovation Disclosure #DIS-23–001, US provisional patent application UOR-145-PROV). It was critical to use 3D scanning of encased components in the design of the noise reduction shield so that the sound-reduction assembly would closely follow all of the surface contours and fit accurately in spaces with tight tolerances.

The result of this encasement was a large reduction in resonant scanner sound, from ~60 dB to ~5 dB measured at the head of the mouse (Bruel and Kjaer ¼” pressure-field microphone 4938 A-011; i.e. below the mouse hearing threshold at 12.5 kHz of roughly 15 dB; Zheng et al., 1999). By comparison, encasements designed using standard 3D-design and printing techniques (i.e. not based on a 3D scan) were, in our hands, only able to achieve a noise reduction of ~30 dB, to a level still audible to both mice and humans. This difference was due to the enhanced precision of encasement fit enabled by using the 3D-scanned map of the microscope’s surface contours.
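The arithmetic behind this comparison can be made explicit with a short sketch. It assumes the reported levels follow the usual sound pressure convention, in which a level difference of L dB corresponds to a pressure ratio of 10^(L/20). The function names are hypothetical, and the numbers are the approximate values quoted above.

```python
def attenuation_db(level_before_db: float, level_after_db: float) -> float:
    """Attenuation achieved by an enclosure, in dB."""
    return level_before_db - level_after_db


def pressure_ratio(attenuation: float) -> float:
    """Factor by which sound pressure is reduced for a given attenuation in dB
    (assumes the 20*log10 sound pressure convention)."""
    return 10.0 ** (attenuation / 20.0)


NATIVE_DB = 60.0           # approximate unattenuated level at the mouse's head
MOUSE_THRESHOLD_DB = 15.0  # approximate mouse hearing threshold at 12.5 kHz

scan_based = attenuation_db(NATIVE_DB, 5.0)  # enclosure designed from a 3D scan
standard_residual_db = NATIVE_DB - 30.0      # level left by a ~30 dB reduction

print(f"scan-based attenuation: {scan_based:.0f} dB "
      f"(pressure reduced ~{pressure_ratio(scan_based):.0f}-fold)")
print(f"standard design residual: {standard_residual_db:.0f} dB, "
      f"{standard_residual_db - MOUSE_THRESHOLD_DB:.0f} dB above threshold")
```

Under this convention, a ~30 dB reduction leaves a residual level above the ~15 dB threshold, whereas the ~55 dB reduction brings the scanner sound below it.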

Cortical alignment to the common coordinate framework (CCF) v3.0 map

Interpretation of the diversity of activity of thousands of neurons simultaneously identifiable with the mesoscope requires proper cortical areal localization, including that of areas anatomically or functionally distant from primary sensory cortices. Such mapping is not routinely possible directly on the 2p mesoscope when performing neuronal-level imaging, due to both the heterogeneity of responses within primary sensory cortices at the single neuron level, and to animal-to-animal variations in the spatial extent of cortical regions (de Vries et al., 2020; Bimbard et al., 2023). To facilitate the assignment of neurons to cortical areas, we sought to align the Allen Institute Common Coordinate Framework (CCF v3.0; Wang et al., 2020) to our 2p neuronal imaging results, using blood vessels, skull landmarks, and widefield imaging responses as intermediaries. We used an overlaid image of these features that we refer to as the ‘multimodal map’ (MMM) as on-the-fly guidance for FOV placement during 2p mesoscope imaging sessions, and then performed a precise post-hoc CCF alignment based on the MMM and vasculature patterns observed during 2p imaging to assign a unique CCF area identifier to each Suite2p-identified ROI/neuron during preprocessing stages of our data analysis (see https://github.com/vickerse1/mesoscope_spontaneous/tree/main/matlab_code for creation of the MMM, and https://github.com/vickerse1/mesoscope_spontaneous/tree/main/python_code for generation of rotated CCF map outlines and precise neuron assignment to CCF areas, which takes place in the following jupyter notebook: https://github.com/vickerse1/mesoscope_spontaneous/blob/main/python_code/mesoscope_pre_proc/meso_pre_proc_1.ipynb).

Alignment of the CCF to the dorsal surface of cortex and subsequent image-stack registration based on widefield imaging Ca2+ fluorescence data has been performed elsewhere with a variety of techniques. These include the use of skull landmarks (e.g. bregma and lambda; Musall et al., 2019), responses to unimodal sensory stimuli (Gallero-Salas et al., 2021), visual field mapping (Zhuang et al., 2017), and/or autocorrelation maps of spontaneous activity (Peters et al., 2021). Few studies, however, have performed such alignments with rotated cortex (i.e. imaging lateral portions of the cortex at an angle; but, see Esmaeili et al., 2021).

To summarize our approach, for both the dorsal mount (Figure 2, Figure 1—figure supplement 2a) and side mount (Figure 3, Figure 1—figure supplement 2b) preparations, we first imaged 1p widefield GCaMP6s responses to unimodal passive sensory stimulation (e.g. visual, auditory, and somatosensory) to create an MMM consisting of multiple sensory area masks on top of the cortical vasculature. We then overlaid skull landmarks (e.g. bregma and lambda), imaged with reflected green light, onto the MMM. This intermediate overlay image was created using custom MATLAB code to extract mean widefield dF/F sensory responses (https://github.com/vickerse1/mesoscope_spontaneous/blob/main/matlab_code/SensoryMapping_Vickers_Jun2520.m; Vickers, 2024b), followed by z-projection and selection masking techniques in Fiji/ImageJ, and was used to guide selection of the FOV during 2p mesoscope imaging sessions. The Allen CCF was then warped onto the vasculature/skull/MMM overlay, and the mean 2p image was warped onto the MMM by vasculature alignment using custom code (see https://github.com/vickerse1/mesoscope_spontaneous/blob/main/python_code/mesoscope_pre_proc/meso_pre_proc_1.ipynb for full initial processing steps of 2p data, or https://github.com/vickerse1/mesoscope_spontaneous/blob/main/python_code/mesoscope_preprocess_MMM_creation.ipynb; Vickers, 2024b for a short notebook containing only the relevant CCF alignment steps). As with all of our Jupyter notebooks, these should be run in the ‘uobrainflex’ Python environment (https://github.com/sjara/uobrainflex; Jaramillo, 2020). Finally, each neural ROI in the 2p image was assigned, post-hoc, to a position in the MMM x-y coordinate system and a corresponding CCF area based on the final overall alignment of the MMM, CCF, and 2p image. The following sections describe these steps in more detail.

Widefield multimodal mapping

To create the multimodal map, each mouse was put under light isoflurane anesthesia (~1.5%) after head-post surgery, but before implantation of the cranial window, and exposed to 5 min each of full-field visual stimulation (vertical and horizontal stationary grating patches; 0.16 cpd, 30 deg; Michaiel et al., 2019), tone-cloud auditory stimulation (a series of overlapping 30 ms duration tones randomly selected from a frequency range of 5–40 kHz and presented at 100 Hz; Xiong et al., 2015), and piezo-driven whisker deflection (or, in some cases, forelimb and trunk stimulation; see Gallero-Salas et al., 2021). For whisker deflection, we used a 1 s, 5 Hz burst of five posterior-to-anterior sweeps (i.e. each sweep consisted of 100 ms of forward movement followed by 100 ms of backward movement). The whisker deflector was a custom 3D-printed triangular polylactic acid (PLA) piece mounted on a 21-gauge needle and attached to a PL140.11 piezo-actuator (PI Ceramic) with epoxy glue. It was actuated with a Physik-Instrumente controller driven by a custom pulse sequence generated in Spike2 and delivered from an analog output of a CED Power 1401, with an inter-stimulus interval of ~10 s (Figure 2b, right, and Figure 3b, right; see also Videos 3–7).
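The timing of the whisker stimulus described above can be checked with a short sketch. This is an illustration only, not the Spike2 sequence used in the experiments: the triangular ramp shape, the 1 kHz command rate, the unit amplitude, and the function name `whisker_burst` are all assumptions introduced here. Only the five sweeps of 100 ms forward and 100 ms backward movement come from the text.

```python
import numpy as np

def whisker_burst(fs=1000, n_sweeps=5, forward_ms=100, backward_ms=100,
                  amplitude=1.0):
    """Return (t, command) for one burst of whisker sweeps.

    fs, amplitude and the linear ramp shape are illustrative assumptions;
    the sweep count and durations follow the description in the text.
    """
    n_fwd = int(round(fs * forward_ms / 1000))
    n_bwd = int(round(fs * backward_ms / 1000))
    # One sweep: ramp from rest to full deflection, then ramp back to rest.
    forward = np.linspace(0.0, amplitude, n_fwd, endpoint=False)
    backward = np.linspace(amplitude, 0.0, n_bwd, endpoint=False)
    sweep = np.concatenate([forward, backward])
    # Repeat the sweep back-to-back to form the burst.
    command = np.tile(sweep, n_sweeps)
    t = np.arange(command.size) / fs
    return t, command

fs = 1000
t, command = whisker_burst(fs=fs)
burst_duration_s = command.size / fs      # total burst length in seconds
sweep_rate_hz = 5 / burst_duration_s      # sweeps per second within the burst
```

With these parameters, five 200 ms sweeps fill exactly 1 s, which is how a 100 ms forward movement is compatible with a 5 Hz sweep rate.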

Dorsal mount multimodal mapping: example widefield (1p) imaging of GCaMP6s fluorescence responses during a visual multimodal mapping session.

Top right, full field, left-side isoluminant Gaussian noise stationary grating patches (vertical and horizontal stationary grating patches; 0.16 cpd, 30 deg; Michaiel et al., 2019) presented to elicit a visual response in right cortex, with small upper left-corner alternating white/black box positioned under photodiode to record precise stimulus presentation times. Top left, pixelwise dF/F response of the entire image for a single trial, recorded at 50 Hz and shown at 0.5x speed. The baseline was calculated as the median of a 1 s period leading up to the stimulus onset. The dashed white circle indicates the putative primary visual cortex (right V1). A = anterior, L = left, R = right, P = posterior, dF/F = change in fluorescence divided by baseline fluorescence; midline extends vertically near the center of frame from bottom to top edge roughly between the ‘A’ and ‘P’ labels. Bottom, trace of mean dF/F for all pixels inside dashed white circle (mask), expressed as percent change. Vertical black line indicates stimulus onset. The visual stimulus is present through the end of the epoch shown. Upper left, overlay: mean of 33 dF/F responses (mean of 1 s after stimulus onset minus mean of 1 s leading up to stimulus onset) in a single dorsal mount session under 2–3% isoflurane anesthesia. ITI = inter-trial interval, measured from beginning of one stimulus to beginning of the next stimulus.

Whisker stimulation: same as in dorsal mount multimodal mapping visual stimulation example video (Video 3), with same mouse on same day, except with 5 Hz, 100 ms duration forward swipes with custom 3D printed plastic (PLA) whisker-deflector, as indicated by vertical deflections in stimulus trace, mid-right.

Example video of mouse shown from a different session than dF/F data, because mouse face video was typically not recorded during multimodal alignment sessions. Red S1b (and dashed line) = right primary whisker barrel cortex. A = anterior, P = posterior, L = left, R = right, V1 = primary visual cortex.

Side mount multimodal mapping: Same as in Video 3 but for side mount preparation. 1.5–3% isoflurane anesthesia was used in all 3 sessions.

Auditory: 1 s tone cloud with tones between 2 and 40 kHz presented for 0.5 s starting at black vertical dashed line (bottom). Sonogram display from Spike2 (CED) shows individual tones as horizontal green lines, where y-axis is sound frequency (~0–25 kHz) and x-axis is time (0–1 s). Movies shown at 0.25x speed. As in other example videos, the mouse shown is from a different session type, in which videography was enabled, but is exposed to the same stimulus at the indicated time, to show the normal response of the mouse to the stimulus. ml = midline, A1 = primary auditory cortex, A = anterior, L = left, R = right, P = posterior, dF/F = change in fluorescence divided by baseline fluorescence.

Same as in Video 5 but for visual stimulation, with different side mount example mouse.

ml = midline, A1 = primary auditory cortex, A = anterior, L = left, R = right, P = posterior, dF/F = change in fluorescence divided by baseline fluorescence.

Same as in Videos 5 and 6 but for whisker stimulation, with same side mount example mouse as in Video 5.

ml = midline, A1 = primary auditory cortex, A = anterior, L = left, R = right, P = posterior, dF/F = change in fluorescence divided by baseline fluorescence.

Light anesthesia was used to minimize unwanted cortical activity due to spontaneous movements and arousal fluctuations, and to prevent the spread of cortical sensory responses to areas downstream of primary sensory areas. Imaging through the skull between the headpost and cranial window implantation surgeries was done to allow for coregistration of skull landmarks with vasculature and sensory responses, and in general yielded more contiguous, easily interpretable multimodal maps than the same widefield mapping done through the cranial window. Although the resulting overlay of vasculature and the multimodal sensory map was useful for cranial window placement, it was not strictly necessary. Additional MMM sessions through the cranial window were performed every 30–60 days, or as necessary due to slight changes in vasculature. In general, vasculature was stable throughout the entire ~150–200 day lifespan of a successful cranial window preparation (see https://github.com/vickerse1/mesoscope_spontaneous/blob/main/window_preparation_stability.pdf; Vickers, 2024b), although in some cases slight increases in the diameter of major blood vessels (e.g. sagittal sinus), or outgrowth of fine arteriole endings, were observed.

Averaged, baseline-subtracted dF/F responses (Figures 2b and 3b) were thresholded, masked, outlined, and layered onto the blood vessel image (Figure 2a, c, Figure 3a, c, Figure 1—figure supplement 2a, b) along with bregma and lambda skull landmarks (Figure 1—figure supplement 1a) identified with 530 nm (green) reflected-light skull-imaging using a custom protocol/macro in Fiji (ImageJ; Figure 2c, Figure 3c, Figure 1—figure supplement 2a, b; Videos 3–7). This multimodal mapping procedure and alignment to the CCF and skull landmarks was repeated if significant changes in vasculature pattern occurred over the days/weeks of the experiment. More precise maps of visual cortical areas can be achieved through visual field mapping using a topographic stimulus consisting of a bar sweeping in azimuth or elevation (Garrett et al., 2014). This technique was not applied here, because our goal was global alignment of the Allen Institute cortex-wide CCF to our dorsal or side mount views, and not precise alignment to visual subareas per se.
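As a rough illustration of the averaging and thresholding steps, the sketch below computes a trial-averaged response map from a widefield movie and derives a binary area mask. It is not the MATLAB/Fiji pipeline linked above. The median pre-stimulus baseline and the "1 s after minus 1 s before" contrast follow the video captions, whereas the function names, the synthetic movie, and the half-maximum threshold are assumptions made here for demonstration.

```python
import numpy as np

def response_map(movie, onsets, fs=50.0, window_s=1.0):
    """Trial-averaged dF/F response map.

    movie  : array (n_frames, height, width) of raw fluorescence
    onsets : frame indices of stimulus onsets
    Baseline F0 is the per-pixel median of the pre-stimulus window.
    """
    n = int(round(fs * window_s))
    maps = []
    for onset in onsets:
        if onset - n < 0 or onset + n > movie.shape[0]:
            continue  # skip trials without a full pre/post window
        pre = movie[onset - n:onset]
        post = movie[onset:onset + n]
        f0 = np.median(pre, axis=0)
        dff_pre = (pre - f0) / f0
        dff_post = (post - f0) / f0
        # Mean dF/F after onset minus mean dF/F before onset.
        maps.append(dff_post.mean(axis=0) - dff_pre.mean(axis=0))
    if not maps:
        raise ValueError("no trial has a complete pre/post window")
    return np.mean(maps, axis=0)

def threshold_mask(resp, frac=0.5):
    """Binary mask of pixels at or above frac * peak (assumed criterion)."""
    return resp >= frac * resp.max()

# Synthetic example: flat baseline of 100 with a 2 x 2 pixel patch that
# brightens by 10% for 1 s after each of two stimulus onsets.
movie = np.full((300, 8, 8), 100.0)
onsets = [100, 200]
for onset in onsets:
    movie[onset:onset + 50, 2:4, 2:4] += 10.0

resp = response_map(movie, onsets)
mask = threshold_mask(resp)
```

In practice the threshold would be chosen per modality and per session; the half-maximum value here is only a placeholder.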

Co-alignment of 2-photon image to vasculature and CCF

The MMM was aligned to an overlay of the rotated CCF edges and region of interest (ROI) masks using custom Python code and the ‘PiecewiseAffineTransform’ function (as provided by, e.g., scikit-image), given a user-supplied series of bounding-box and alignment points common to both images (Figures 2c and 3c (upper, part 1)). A second, similar alignment was then performed between the multimodal map and all neural ROI locations (i.e. Suite2p-identified neurons) relative to the 2p image plane; here, vasculature is inferred from the pattern of gaps (e.g. vascular ‘shadows’) in the spatial distribution of neurons (Figures 2c and 3c (lower, part 2)), and can be confirmed by parfocal pseudo-widefield (i.e. combined reflected and epifluorescence light) imaging directly on the 2p Thorlabs mesoscope. Note that in 2p imaging below the pial surface, the effective/apparent width of the vasculature, or its ‘shadow’, is significantly larger than that of the actual blood vessel, and its width increases with depth. The transforms resulting from each of these two alignments were applied in series (i.e. multimodal map to CCF, then multimodal map to 2p neural image; Figures 2c and 3c) to overlay the neural image directly onto the cortical map, using outlines, and to assign a unique CCF area identifier (i.e. name and ID number) to each Suite2p-extracted, 2p-imaged neuron using CCF masks (Figures 2d and 3d). Note that, while the final assignment of CCF area identifiers to each Suite2p-identified neuron (Figure 2c (2), right) was performed post-hoc, the intermediate blood-vessel/MMM overlay image (Figure 2 (1) and (2), left) was used for online guidance of 2p mesoscope FOV selection during imaging sessions.
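The logic of chaining two landmark-based alignments and then reading an area identifier from a label mask can be sketched as follows. To keep the example dependent on NumPy alone, a single global affine fit is swapped in for the piecewise affine transform named in the text, so this is a simplified stand-in rather than the authors' code. The function names, the toy landmark coordinates, the direction of each fit (2p to MMM, then MMM to CCF), and the two-area label image are all hypothetical.

```python
import numpy as np

def fit_affine(src, dst):
    """Least-squares 2D affine transform mapping src (n, 2) onto dst (n, 2).

    Returns a 3 x 3 homogeneous matrix. Stand-in for a piecewise affine fit.
    """
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)
    A = np.hstack([src, np.ones((len(src), 1))])
    coeffs, *_ = np.linalg.lstsq(A, dst, rcond=None)  # shape (3, 2)
    M = np.eye(3)
    M[:2, :] = coeffs.T
    return M

def apply_affine(M, pts):
    """Apply a 3 x 3 homogeneous transform to (n, 2) x-y points."""
    pts = np.asarray(pts, dtype=float)
    homog = np.hstack([pts, np.ones((len(pts), 1))])
    return (homog @ M.T)[:, :2]

def assign_areas(roi_xy_2p, M_2p_to_mmm, M_mmm_to_ccf, ccf_labels):
    """Map ROI centroids from 2p space into CCF space and look up area IDs.

    ROIs that land outside the label image receive ID 0 (unassigned).
    """
    M_total = M_mmm_to_ccf @ M_2p_to_mmm  # 2p -> MMM first, then MMM -> CCF
    xy = apply_affine(M_total, roi_xy_2p)
    cols = np.rint(xy[:, 0]).astype(int)
    rows = np.rint(xy[:, 1]).astype(int)
    inside = ((rows >= 0) & (rows < ccf_labels.shape[0]) &
              (cols >= 0) & (cols < ccf_labels.shape[1]))
    ids = np.zeros(len(xy), dtype=int)
    ids[inside] = ccf_labels[rows[inside], cols[inside]]
    return ids

# Toy label image: area 1 occupies the left half, area 2 the right half.
ccf_labels = np.ones((100, 100), dtype=int)
ccf_labels[:, 50:] = 2

# Hypothetical user-picked landmark pairs (x, y) common to each image pair.
M_2p_to_mmm = fit_affine([[0, 0], [10, 0], [0, 10], [10, 10]],
                         [[5, 5], [25, 5], [5, 25], [25, 25]])
M_mmm_to_ccf = fit_affine([[0, 0], [10, 0], [0, 10]],
                          [[10, 0], [20, 0], [10, 10]])

roi_xy_2p = np.array([[5.0, 5.0], [20.0, 5.0], [60.0, 5.0]])
area_ids = assign_areas(roi_xy_2p, M_2p_to_mmm, M_mmm_to_ccf, ccf_labels)
```

A piecewise affine fit differs in that each triangle of the landmark mesh gets its own affine map, which accommodates local distortion that one global matrix cannot; the lookup step is unchanged.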

2-photon imaging across broad regions of the dorsal and lateral cortex in behaving mice

Previous 2p imaging studies have demonstrated that arousal and/or orofacial/body movement can explain a significant proportion of the variance in spontaneous and sensory evoked neural responses in visual and other restricted dorsal cortical regions (Musall et al., 2019; Stringer et al., 2019). Here, as a proof of principle, we examined the generality of this finding by simultaneously monitoring neuronal activity across broad regions of bilateral dorsal cortex, with our dorsal mount preparation, and both dorsal and lateral cortex across the right hemisphere, in our side mount preparation. Previous studies suggest that there may be both commonalities as well as heterogeneity in the effects of changes in arousal/movement on spontaneous and sensory-evoked responses across different cortical areas (McGinley et al., 2015; Musall et al., 2019; Stringer et al., 2019). For example, running typically enhances the gain of visually evoked responses in the mouse primary visual cortex (Niell and Stryker, 2010), while it significantly decreases that of evoked auditory responses in primary auditory cortex (Zhou et al., 2014; McGinley et al., 2015).

Assessing such potential differences between functionally distinct, and potentially distant, cortical areas required simultaneous imaging across multiple cortical regions while maintaining adequate imaging speed, quality, and resolution. To achieve this level of 2p imaging across several millimeters of cerebral cortex (e.g. from visual to motor or auditory cortical areas), we first sought to optimize the parameters of our mesoscope imaging methods and protocols.

Conventional 2p imaging using a preparation similar to our dorsal mount (“Crystal Skull”; Kim et al., 2016) previously employed serial acquisition of ~1 x 1 mm FOVs. Other 2p Thorlabs mesoscope imaging studies have used acquisition protocols targeting z-stacks of 600x600 µm FOVs in barrel cortex (3 planes at 7 Hz, 1.17 µm/pixel; Peron et al., 2015), 900x935 µm FOVs in visual cortex (11 planes at ~1 Hz; Stringer et al., 2019), and three adjacent 600x1800 µm FOVs in CA1 hippocampus (Sun et al., 2023; bioRxiv). Here, with our dorsal and side mount preparations, we were able to routinely image between 2000 and 7600 neurons per session over up to ~25 mm2 of dorsal cortex simultaneously at ~3 Hz with a resolution of 5 µm/pixel in GCaMP6s mice (combined total in both preparations for all large-FOV sessions: N=17 mice, n=91 sessions, ~350,000 neurons; Figure 1—figure supplement 2e, Video 8).
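For a sense of scale, the acquisition figures quoted above imply the following approximate pixel throughput. This back-of-the-envelope calculation assumes square pixels, a single contiguous imaging plane, and the upper-bound FOV area; actual mesoscope acquisitions may tile several ROIs and will differ in detail.

```python
# Values taken from the text; the single-plane, square-pixel layout is assumed.
fov_area_mm2 = 25.0      # up to ~25 mm^2 of cortex
pixel_size_um = 5.0      # 5 um/pixel
frame_rate_hz = 3.0      # ~3 Hz
max_neurons = 7600       # upper end of neurons per session

um2_per_mm2 = 1_000_000
pixels_per_frame = fov_area_mm2 * um2_per_mm2 / pixel_size_um ** 2
pixels_per_second = pixels_per_frame * frame_rate_hz
neurons_per_mm2 = max_neurons / fov_area_mm2
```

Under these assumptions a frame holds about one million pixels, or roughly three million pixels per second, and the densest sessions correspond to about 300 detected neurons per mm2.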

Dorsal mount.