Language: I see what you are saying

In the mid-1940s, the psychologist Alvin Liberman went to work with Franklin Cooper at the Haskins Laboratories in New Haven, Connecticut. He initially set out to create a device to turn printed letters into sounds so that blind people could ‘hear’ written texts (Liberman, 1996). His first foray involved shining a light through a slit onto the page in order to convert the lines of each letter into light and then into frequencies of sound. Liberman and colleagues reasoned that with enough training, blind users would be able to learn these arbitrary letter-sound pairs and so be able to understand the text.

The device was a spectacular failure: the users performed slowly and inaccurately. This led Liberman and colleagues to the realization that speech is not an arbitrary sequence of sounds, but a specific human code. They argued that the key to this code was the link between the speech sounds a person hears and the motor actions they make in order to speak. This important work led to decades of further research and helped lay the foundation for the psychological and neuroscientific study of speech.

When we watch and listen to someone speak, our brain combines the visual information of the movement of the speaker’s mouth with the speech sounds that are produced by this movement (McGurk and MacDonald, 1976). One of the core problems that researchers in this field are investigating is how these different sets of information are integrated to allow us to understand speech. Now, in eLife, Hyojin Park, Christoph Kayser, Gregor Thut and Joachim Gross of the University of Glasgow report that they have studied this integration by using a technique called magnetoencephalography to record the magnetic fields that are generated by the electrical currents of the brain (Park et al., 2016).

Park et al. presented volunteers with audio-visual clips of naturalistic speech and then asked them to complete a short questionnaire about the speech they heard and saw. In some cases, these clips were manipulated so that the audio did not match the video. In other cases, Park et al. presented a different speech signal to each ear and asked the volunteers to pay attention to just one signal. By analyzing these combinations, they could separate the brain activity that is associated with watching someone speak from the activity that processes the speech sounds themselves.

Park et al. found that a part of the continuous speech stream called the envelope, which is the slow rise and fall in the amplitude of the speech, was tracked in auditory areas of the brain (Figure 1). The visual cortex, in contrast, tracked mouth movements. These results replicate previous data recorded from both the auditory domain (Cogan and Poeppel, 2011; Gross et al., 2013; Luo and Poeppel, 2007) and the visual domain (Luo et al., 2010; Zion Golumbic et al., 2013). Park et al. then went further by asking: what role does tracking the lip movements of a speaker play in speech perception?
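To make the idea of a speech envelope concrete, the sketch below extracts a slow amplitude envelope from a synthetic signal. It is an illustration only and not the analysis pipeline of Park et al.: the function name `amplitude_envelope`, the `smooth_hz` parameter, and the test signal (a 200 Hz tone whose loudness rises and falls four times per second) are all assumptions made for this example.

```python
import numpy as np


def amplitude_envelope(signal, sample_rate, smooth_hz=10.0):
    """Return a smoothed amplitude envelope of a 1-D signal.

    Hypothetical helper for illustration: the envelope is the magnitude
    of the analytic signal (computed with an FFT), followed by a moving
    average so that only the slow rise and fall remains.
    """
    signal = np.asarray(signal, dtype=float)
    n = len(signal)

    # Build the analytic signal: keep DC, double the positive
    # frequencies, and zero out the negative frequencies.
    spectrum = np.fft.fft(signal)
    weights = np.zeros(n)
    weights[0] = 1.0
    if n % 2 == 0:
        weights[n // 2] = 1.0
        weights[1:n // 2] = 2.0
    else:
        weights[1:(n + 1) // 2] = 2.0
    analytic = np.fft.ifft(spectrum * weights)
    envelope = np.abs(analytic)

    # Moving average whose length is one period of smooth_hz.
    window = max(1, int(round(sample_rate / smooth_hz)))
    kernel = np.ones(window) / window
    return np.convolve(envelope, kernel, mode="same")


# Synthetic "speech-like" signal: a fast carrier with a slow modulation.
sample_rate = 1000.0
t = np.arange(2000) / sample_rate
slow_modulation = 1.0 + 0.5 * np.sin(2 * np.pi * 4 * t)
sound = slow_modulation * np.sin(2 * np.pi * 200 * t)

envelope = amplitude_envelope(sound, sample_rate)
# Compare away from the edges, where the moving average is incomplete.
similarity = np.corrcoef(envelope[200:-200], slow_modulation[200:-200])[0, 1]
print("correlation between envelope and slow modulation:", similarity)
```

In this toy case the recovered envelope follows the slow modulation rather than the fast carrier, which is the sense in which the envelope captures the slow rise and fall of the sound.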

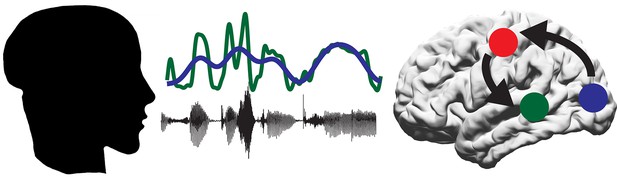

Figure 1. A proposed model for the role of the motor system in speech perception.

A person produces speech through the coordinated movement of their articulatory system. The listener hears the sound (black line) and sees the mouth of the speaker open and close (blue line). Some of the information in the sound is contained within the speech envelope (green line). The auditory regions of the brain (green circle) track the speech envelope, while the visual system (blue circle) tracks the visual movements of the mouth. The motor system (red circle) then decodes the intended mouth movement and integrates this with the response of the auditory regions to the incoming sounds.

To learn more about which parts of the brain track the lip movements of the speaker, Park et al. performed a partial regression on the lip movement, envelope and brain activity data to remove the response to sound and focus on just the effect of tracking the lip movements. This revealed two areas of the brain that actively track lip movements during speech. The first area, as found by previous researchers, was the visual cortex. This presumably tracks the lips as a visual signal. The second area was the left motor cortex.
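The logic of removing the response to sound can be illustrated with a small time-domain sketch. This is not the authors' method or data: it assumes a simple linear partial correlation on synthetic signals, and the names `residualize`, `partial_correlation`, `auditory_like` and `motor_like` are hypothetical. The envelope is regressed out of both the brain signal and the lip signal, and only what remains is compared.

```python
import numpy as np


def residualize(target, confound):
    """Remove the best linear fit of `confound` (plus an offset) from `target`."""
    design = np.column_stack([np.ones_like(confound), confound])
    coeffs, *_ = np.linalg.lstsq(design, target, rcond=None)
    return target - design @ coeffs


def partial_correlation(brain, lips, envelope):
    """Correlate brain and lip signals after regressing the envelope out of both."""
    brain_resid = residualize(brain, envelope)
    lips_resid = residualize(lips, envelope)
    return np.corrcoef(brain_resid, lips_resid)[0, 1]


# Synthetic data (assumed for illustration only).
rng = np.random.default_rng(0)
n = 5000
envelope = rng.standard_normal(n)
# Lip movements share some variance with the sound envelope.
lips = 0.7 * envelope + rng.standard_normal(n)
# A region driven only by the sound: it correlates with the lips
# solely because the lips and the envelope are themselves related.
auditory_like = envelope + 0.5 * rng.standard_normal(n)
# A region that follows the lips themselves.
motor_like = lips + rng.standard_normal(n)

print("raw correlation, auditory-like vs lips:",
      np.corrcoef(auditory_like, lips)[0, 1])
print("partial correlation, auditory-like vs lips:",
      partial_correlation(auditory_like, lips, envelope))
print("partial correlation, motor-like vs lips:",
      partial_correlation(motor_like, lips, envelope))
```

In this toy setup, the sound-driven signal loses its apparent relationship with the lips once the envelope is accounted for, whereas the signal that genuinely follows the lips retains it. That is the kind of separation the partialling step is meant to achieve.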

To further establish the role of the motor cortex during speech perception, Park et al. examined the comprehension scores from the questionnaire. These scores could be predicted from the extent to which neural activity in the motor cortex synchronized with the lip movements observed by the participant: higher scores correlated with a higher degree of synchronization. This indicates that the ability of the motor cortex to track lip movements is important for understanding audiovisual speech, and points to a new role for the motor system in speech perception. Park et al. interpret this finding as evidence that the motor system helps to predict the upcoming sound signal by simulating the speaker’s intended mouth movement (Arnal and Giraud, 2012; Figure 1).

While this is an important first step, it is still not clear how the lip movement tracked by the motor cortex is integrated with the response of auditory regions of the brain to speech sounds. Are mouth movements tracked specifically for ambiguous or difficult stimuli (Du et al., 2014) or is this tracking necessary for perceiving speech generally? Future work will hopefully clarify the specifics of this mechanism.

It is interesting and somewhat ironic that the motor cortex tracks the visual signals of mouth movement, given the early (and unsuccessful) efforts of Liberman and colleagues to help the blind ‘hear’ written texts. Indeed, just as these early researchers proposed, it seems that the link between the motor and auditory systems is a key to understanding how speech is represented in the brain.

References

- Arnal and Giraud (2012). Cortical oscillations and sensory predictions. Trends in Cognitive Sciences 16:390–398. https://doi.org/10.1016/j.tics.2012.05.003

- Cogan and Poeppel (2011). A mutual information analysis of neural coding of speech by low-frequency MEG phase information. Journal of Neurophysiology 106:554–563. https://doi.org/10.1152/jn.00075.2011

- Du et al. (2014). Noise differentially impacts phoneme representations in the auditory and speech motor systems. Proceedings of the National Academy of Sciences of the United States of America 111:7126–7131. https://doi.org/10.1073/pnas.1318738111

- Zion Golumbic et al. (2013). Visual input enhances selective speech envelope tracking in auditory cortex at a "cocktail party". Journal of Neuroscience 33:1417–1426. https://doi.org/10.1523/JNEUROSCI.3675-12.2013

Copyright

© 2016, Cogan

This article is distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use and redistribution provided that the original author and source are credited.
