The evolution of distributed sensing and collective computation in animal populations

Abstract

Many animal groups exhibit rapid, coordinated collective motion. Yet, the evolutionary forces that cause such collective responses to evolve are poorly understood. Here, we develop analytical methods and evolutionary simulations based on experimental data from schooling fish. We use these methods to investigate how populations evolve within unpredictable, time-varying resource environments. We show that populations evolve toward a distinctive regime in behavioral phenotype space, where small responses of individuals to local environmental cues cause spontaneous changes in the collective state of groups. These changes resemble phase transitions in physical systems. Through these transitions, individuals evolve the emergent capacity to sense and respond to resource gradients (i.e. individuals perceive gradients via social interactions, rather than sensing gradients directly), and to allocate themselves among distinct, distant resource patches. Our results yield new insight into how natural selection, acting on selfish individuals, results in the highly effective collective responses evident in nature.

https://doi.org/10.7554/eLife.10955.001eLife digest

In nature, we see many examples of highly coordinated movements of groups of individuals; think of a flock of birds turning swiftly in unison or a crowd of people filing through the exit of a building. A common feature of these behaviors is that they occur without any centralized control, and that they involve sudden and often dramatic changes in the 'collective state' of the group (i.e. speed, or the distances between individuals). In the past, researchers have likened these transitions in collective behavior to phase transitions in physical systems, for example, the transition between liquid water and water vapor. However, it is not clear how such collective responses could have evolved.

Natural selection is an evolutionary process whereby individuals with particularly 'fit' traits produce more offspring than others. Over many generations, these beneficial traits tend to become more common in the population. Hein, Rosenthal, Hagstrom et al. developed a mathematical model to investigate whether the capacity of a population to perform collective motions could evolve through natural selection.

The model shows that over many generations, populations consistently evolve a unique collective trait whereby small responses of individuals to an environmental cue can cause spontaneous changes in the collective state of the local population. These transitions in collective state greatly enhance the ability of individuals to locate and exploit resources. Hein, Rosenthal, Hagstrom et al.’s findings suggest that natural selection acting on the behavior of individuals can cause a population to evolve a distinctive, collective behavior.

The next challenge will be to identify a biological system in which the evolution of collective motion can be studied experimentally to test these predictions.

https://doi.org/10.7554/eLife.10955.002Introduction

In many highly coordinated animal groups, such as fish schools and bird flocks, the ability of individuals to locate resources and avoid predators depends on the collective behavior of the group. For example, when fish schools are attacked by predators, 'flash expansion' (Pitcher et al., 1993) and other coordinated collective motions, made possible above a certain group size, reduce individual risk (Handegard et al., 2012). Similarly, fish can track dynamic resource patches far more effectively when they are in a group (Berdahl et al., 2013). When an individual responds to a change in the environment (e.g., predator, resource cue), this response propagates swiftly through the group (Rosenthal et al., 2015), altering the group’s collective motion. How are such rapid, coordinated responses possible? These responses may occur, in part, because the nature of social interactions makes animal groups highly sensitive to small changes in the behavior of individual group members; theoretical (Couzin et al., 2002; D’Orsogna et al., 2006; Kolpas et al., 2007) and empirical (Tunstrøm et al., 2013; Buhl et al., 2006) studies of collective motion have revealed that minor changes in individual behavior, such as speed (Tunstrøm et al., 2013), can cause sudden transitions in group state, reminiscent of similarly sudden phase transitions between collective states in physical systems (such as the solid-liquid-gas transitions as a function of increasing temperature). It has been proposed that individuals may trigger such changes in collective state by responding to the environment, thereby initiating a coordinated response at the group level (e.g., Couzin et al. (2002); Kolpas et al. (2007); Couzin and Krause, 2003). This mechanism requires that the behavioral rules of individual animals within a population have evolved in a way that allows groups to transition adaptively among distinct collective states. The evolutionary processes that could lead to this population-level property, however, remain poorly understood.

The feedback between the behavioral phenotypes of individuals, the collective behaviors that these phenotypes produce, and individual-level fitness consequences has made it challenging to study how complex collective behaviors evolve (Torney et al., 2011). Many species, including fish and birds, form groups in which members have low genetic relatedness, which implies that kin selection alone cannot explain the evolution of collective behavior. Moreover, while natural selection acts on the behavioral phenotypes of selfish individuals, collective behaviors are group-level, or perhaps even population-level, properties rather than heritable individual phenotypes. To understand how collective behaviors evolve, then, one must first understand the mapping between individual phenotypes and collective behavior, and between collective behavior and individual fitness.

Here, we take advantage of detailed studies of the social interaction rules and environmental response behaviors of schooling fish (Berdahl et al., 2013; Katz et al., 2011) to develop a biologically-motivated evolutionary model of collective responses to the environment. Using analytical methods and evolutionary simulations, we study how individual behavioral rules produce collective behaviors, and how collective behaviors, in turn, govern the fitness and evolution of selfish individuals. To relate individual and collective behaviors to fitness, we consider a fundamental task faced by fish and other motile organisms: finding and exploiting dynamic resources (Stephens et al., 2007). In our model, individuals respond to the locations of near neighbors and also to local measurements of resource quality. Each individual achieves a fitness determined by the resource level it experiences over its lifetime. We use this framework to explore the evolution of complex collective responses to the environment, and how such responses are related to transitions in collective state.

Model development

Behavioral rules

We model the movement behaviors of each individual in a population of size using two experimentally-motivated (Berdahl et al., 2013; Katz et al., 2011) behavioral rules: a social response rule and an environmental response rule. The social response rule is motivated by experimental studies of pairwise interactions among golden shiners (Notemigonus crysoleucas) (Katz et al., 2011). Individual fish avoid others with whom they are in very close proximity. As the distance between individuals increases, however, interactions gradually change from repulsive to attractive, with maximum attraction occurring at a distance of two-four body lengths. For longer distances, individuals still attract one another but the strength of attraction decays in magnitude (Appendix section 1; Katz et al., 2011). As found in experimental studies of golden shiners (Katz et al., 2011) and mosquitofish (Gambusia holbrooki) (Herbert-Read et al., 2011) there need not be an explicit alignment tendency; rather alignment can be an emergent property of motion combined with the tendencies for repulsion and attraction described above.

To capture these observed social interactions (or ‘social forces’), we model the acceleration of individuals using a force-based method (Katz et al., 2011). The th individual responds to its neighbors using the following rule:

where is the social force on the th individual, is the position of the th individual, is the two-dimensional gradient operator, the term in brackets is a social potential, , , , and are constants that dictate the relative strengths and length scales of social attraction and repulsion, and the set is a set of the nearest neighbors of the th individual, where a neighbor is an individual within a distance of of the focal individual. Equation 1 does not include explicit alignment with neighbors. A similar model is discussed in D’Orsogna et al. (2006). In Equation 1, determines the length scale over which individuals are influenced by social interactions. If is greater than but less than , individuals repel one another at short distances but do not attract one another. We refer to such individuals as asocial (Appendix section 1). If is greater than both and , individuals repel one another at short distances and are attracted to one another at intermediate distances as observed by Katz et al. (2011). Finite ensures that individuals can only respond to a limited number of their neighbors in crowded regions of space and provides a simplified model of sensory-based social interactions (e.g., Rosenthal et al. (2015); Strandburg-Peshkin et al. (2013)). Finite also ensures that individuals are limited to finite local density (Appendix section 3).

To model the response of individuals to the environment, we develop an environmental response rule based on experimentally-observed environmental responses of golden shiners (Berdahl et al., 2013). In particular, in a dynamic, heterogeneous environment, individual golden shiners respond strongly to local sensory cues by slowing down in favorable regions of the environment, and speeding up in unfavorable regions. In contrast, fish respond only weakly to spatial gradients in environmental quality and instead adjust their headings primarily based on the positions of their near neighbors. Accordingly, we model the th individual’s environmental response as a function of the level of an environmental cue (in this case, the level of a resource) at its current position:

where is the autonomous force the th individual generates by accelerating or decelerating in response to the environment, is a monotonically decreasing function of the value of an environmental cue, is the cue value at the th individual’s position, is a damping term that limits individuals to a finite speed, and is the th individual’s velocity. In the absence of social interactions, individuals travel at preferred speed (for ). Changes in speed are crucial in the schooling behavior of fish (Tunstrøm et al., 2013; Berdahl et al., 2013), and as we show below, are also responsible for generating effective collective response in our model. Following the experimental results in Berdahl et al. (2013) we assume that individuals do not change their headings in response to the cue. In what follows, we refer to 'cue' and 'resource' interchangeably as we model the case where the cue is the resource itself (see e.g., Torney et al. (2009); Hein and McKinley (2012) for cases where the cue is not a resource).

Combining social and environmental response rules yields two equations that govern each individual’s movement (in two dimensions):

and

where is mass. D’Orsogna et al. (2006) explores the behavior of a similar model with constant over the full parameter space. Here we focus on a parameter regime that yields behavioral rules that match the experimental observations of Katz et al. (2011) and Berdahl et al. (2013).

We simulate a discretized version of the system described by Equations 3 and 4. In particular, we choose a time step, , within which the acceleration due to social influences (Equation 1) and resource value are assumed to be constant. Positions, speeds, and accelerations of all individuals at time are then given by the solutions to Equations 3 and 4 at time , with the values of and determined at time . A navigational noise vector of small magnitude and uniform heading 0 to 2 is added to the velocity of each agent at each time step. Taking the limit as goes to zero means that individuals are constantly acquiring information and instantaneously altering their actions in response. In Appendix section 3−6, we analyze a continuum approximation of this limiting model and below we discuss results of this analysis alongside simulation results.

The social interaction rule allows us to build an interaction network for the entire population. Two individuals are socially connected if at least one of them influences the other through Equation 1. We define a 'group' as a set of individuals that belong to the same connected component in this network.

Evolutionary dynamics

The natural environments in which organisms live are often heterogeneous and dynamic (Stephens et al., 2007). Consequently, we simulate populations of individuals in dynamic landscapes, where individuals make decisions in response to local sensory cues (local measurements of a resource) and these decisions have fitness consequences for the individuals within the population (Guttal and Couzin, 2010; Torney et al., 2011). In keeping with experimental observations (Berdahl et al., 2013), we assume individuals follow a simple environmental response function: , where dictates the th individual’s preferred speed when the level of the environmental cue is zero and determines how sensitive the th individual is to the cue value (Berdahl et al., 2013). Rather than prescribing values of and , we use an evolutionary framework similar to that developed by Guttal and Couzin (2010) to allow these two behavioral traits to evolve along with the maximum interaction length , which determines whether individuals are social ( length scale of social attraction) or asocial ( length scale of social attraction, Appendix section 1).

In each generation, individuals are located in a two-dimensional environment in which each point in space is associated with a resource value that changes over time (see Materials and methods). Individuals move through the environment using the interaction rules described above, and each individual has its own value of the , , and parameters. At the end of each generation, we compute each individual’s fitness as the mean value of the resource it experienced during that generation. Each individual then reproduces with a probability proportional to its relative fitness within the population. offspring comprise the next generation where each offspring inherits the traits of its parent modified by a small mutation (Appendix section 2). For reference, we compare the evolution of populations in which , , and are allowed to evolve, to the evolution of populations of asocial individuals, for which is set to a constant (Appendix section 1).

Results

Evolution of behavioral rules

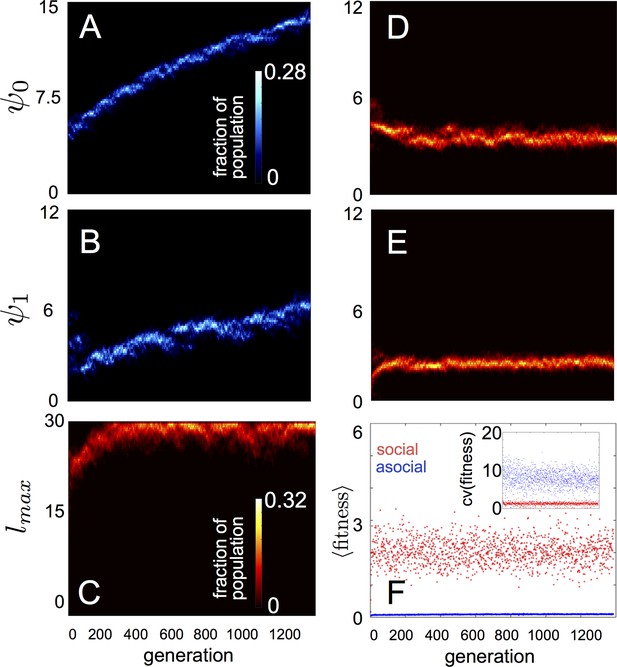

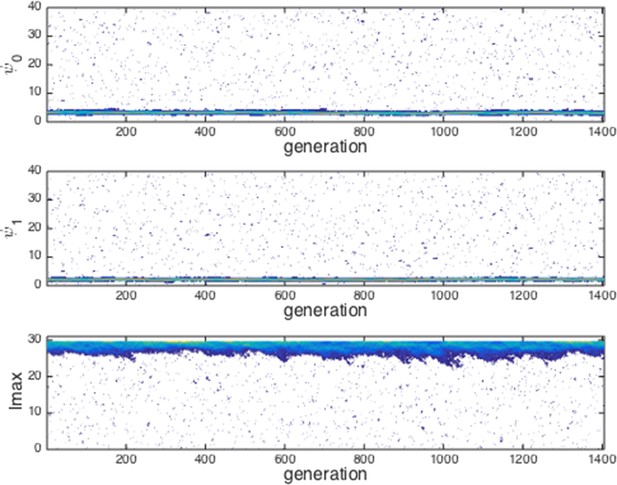

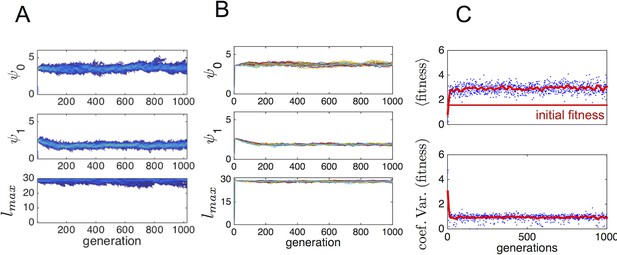

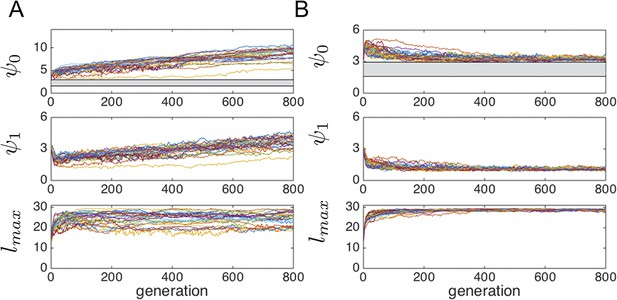

In populations of asocial individuals, the baseline speed parameter and environmental sensitivity increase consistently through evolutionary time (Figure 1A–B). Asocial individuals move through the environment, slowing down in regions where the resource value is high and speeding up when the resource value is low (Video 1). As one would expect from random walk theory (Schnitzer, 1993; Gurarie and Ovaskainen, 2013), individuals more rapidly encounter regions of the environment with high resource value when they travel at high preferred speeds (Equation A65; Gurarie and Ovaskainen, 2013), and the more they reduce speed in regions of the environment with high resource quality, the more time they spend in these regions (Schnitzer, 1993). Because of these two effects, the fittest asocial individuals have high baseline speeds (i.e., high ) and accelerate and decelerate rapidly in response to changes in the resource value (i.e., high ; Figure 1A–B, Appendix).

Evolution of behavioral rules.

(A, B) show evolutionary dynamics of populations of asocial individuals (i.e., maximum length scale of social interactions fixed; see text). (C-E) show evolutionary dynamics of individuals in which the maximum length scale of social interactions is allowed to evolve. Brightness of color indicates the frequency of a phenotype in the population. In asocial populations, baseline speed parameter (A) and environmental sensitivity (B) increase continually through evolutionary time. When is allowed to evolve (C), individuals quickly become social ( approaches maximum allowable value of 30), and baseline speed parameter (D) and environmental sensitivity (E) stabilize at intermediate values. Mean fitness of social populations (F, red points) is over five times higher than mean fitness of asocial populations (F, blue points), and the coefficient of variation in fitness is over four times lower in social populations (F inset). Unless otherwise noted, parameter values in all figures are as follows: , , , , , , , , , , , , , , and .

Asocial population.

Responses of population of asocial individuals (points) and dynamic resource peak (resource value shown in grayscale; dark regions have high resource value, light regions have low resource value). Length of tail proportional to speed. Peak centroid moves according to 2D Brownian motion with drift vector and standard deviation (see Materials and methods). In Videos 1–4, view is zoomed in to area surrounding moving resource peak (field of view is , where is the length scale of repulsion; full environment is projected onto a torus with edge length ). Behavioral parameters as follows: , , , , , , , , , . Environmental parameters in Videos 1–4 are: , , , , , , .

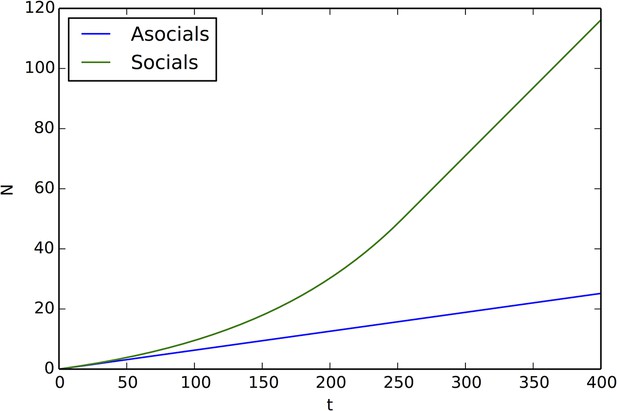

When populations are allowed to evolve sociality, the evolutionary process selects for very different behaviors (Figure 1C–E). Selection quickly favors sociality, and individuals evolve large maximum interaction lengths (Figure 1C). Over evolutionary time, selection removes individuals with high and low values of and from the population and an evolutionarily stable state (ESSt; Maynard Smith, 1982) emerges that is characterized by a single mode at the dominant value of each trait (Figure 1D–E; Appendix section 2). The ESSt resulting from selection on , , and is robust in that it is resistant to invasion by phenotypes near the ESSt, and by invaders with trait values far from the ESSt (Appendix section 2). Throughout evolution, populations of social individuals achieve mean fitness values that are approximately five times higher than those of asocial populations, and a coefficient of variation in fitness approximately four times lower than that of asocial individuals (Figure 1F).

Notably, a single individual drawn from a population at the ESSt can invade a resident population of asocial individuals and the social strategy quickly sweeps through the population (Appendix section 2). To understand why this invasion occurs, consider a population of asocial individuals that slow down in favorable regions of the environment. If the environment does not change too rapidly, such individuals will accumulate in regions where the resource level is high. This phenomenon has been studied mathematically in the context of position-dependent diffusion (Schnitzer, 1993), and will occur, in general, when individuals lower their speeds in response to the value of an environmental cue. A social mutant that responds to the environment, and to its neighbors, can take advantage of the correlation between density and resource quality by climbing the gradient in the density of its neighbors (Equation 1). In this case, the positions of neighbors contain information about the value of resources and social mutants quickly invade asocial populations leading to a rapid increase in mean fitness (Appendix section 2).

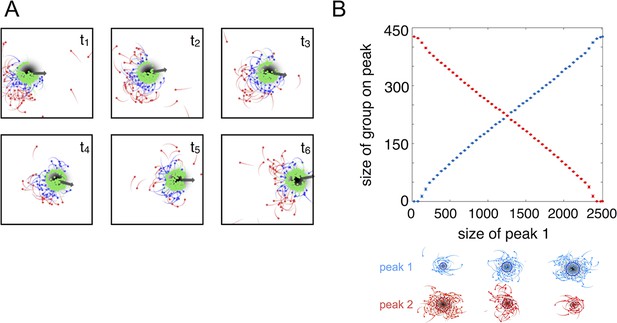

Evolved populations collectively compute properties of the environment

The high fitness of the evolved phenotype is due, in part, to a collective resource tracking ability, similar to that found in golden shiners (Berdahl et al., 2013). Evolved individuals can find and track resource peaks as they move through the environment (Figure 2A, Video 2; Materials and methods), whereas asocial individuals and social individuals with trait values far from the ESSt cannot (Videos 1, 3–4). Tracking occurs via a dynamic process. Individuals near the edge of the peak move rapidly, whereas individuals nearer to the peak center (where the resource value is high) move slowly (Equation 2). As in fish schools (Berdahl et al., 2013), individuals turn toward near neighbors (Equation 1) and travel toward the peak center. This collective tracking behavior is particularly important when the resource field changes rapidly over time. As a resource peak moves, individuals at its trailing edge experience a resource value that becomes weaker through time (Figure 2A). As the resource value becomes weaker, these individuals accelerate (Equation 2), but turn toward neighbors on the peak (Equation 1) and thus travel toward the moving peak (Figure 2A). When the environment contains multiple resource peaks, evolved populations fuse spontaneously to form groups whose sizes correspond to that of the peak they are tracking (Figure 2B), even though no individual is able to assess peak size, or know whether there are multiple peaks in the environment. This behavior is consistent with recent sonar observations of foraging marine fish showing that fish form shoals that match the sizes of dynamic resource patches (Bertrand et al., 2008; Bertrand et al., 2014). Our model demonstrates that collective tracking behavior similar to that observed in real fish schools can evolve through selection on the decision rules of individuals.

Collective tracking of dynamic resource and length-scale matching.

(A) Sequence (left to right, top to bottom) of individuals interacting with moving resource peak (resource value in grayscale, darker = higher resource value). Peak is drifting to the right (grey arrow). Colors indicate the regime into which each agent falls (red: , blue: , green: ). Length of tail is proportional to speed. Peak centroid moves according to 2D Brownian motion with drift (see Materials and methods). (B) When environments contain multiple resource peaks, evolved populations divide into groups that match peak sizes, e.g., in a two-peak environment, the size of group on each peak is proportional to peak size. Total size of two peaks is constant so that the larger the first peak (Peak 1, x-axis), the smaller the second peak. Peak size computed as the integral of the resource value over the entire peak (see Materials and methods). Group size is mean size of the group nearest each peak (mean taken over the last 2,500 time steps of each simulation). Points (and error bars) represent mean ( 2 standard errors) of 1,000 simulations for each combination of peak sizes. Parameters as in Figure 1 with and values of , , and taken from a population in the ESSt.

Population at the evolutionarily stable state (ESSt).

Responses of population of individuals evolved for 1500 generations to the ESSt to dynamic resource peaks. Behavioral parameters as in Video 1 with , , , and , where denotes mean over the population. Note rapid accumulation of individuals near peaks and dynamic peak-tracking behavior of groups.

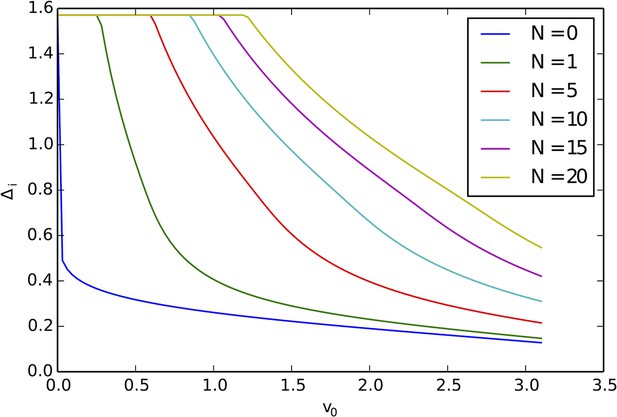

Evolved populations are poised near abrupt transitions in collective state

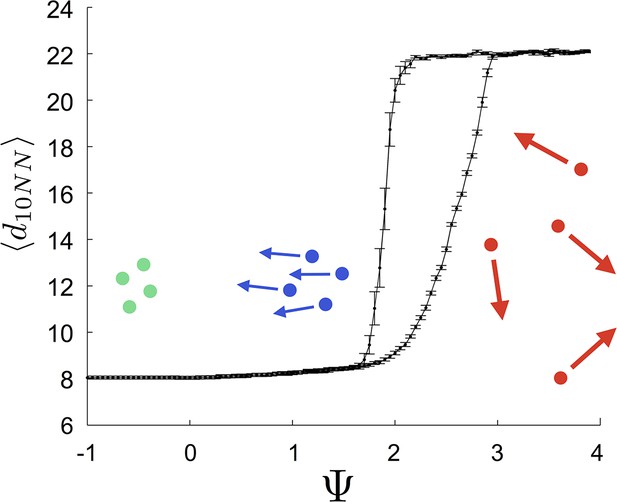

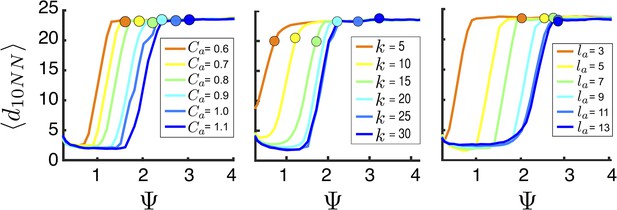

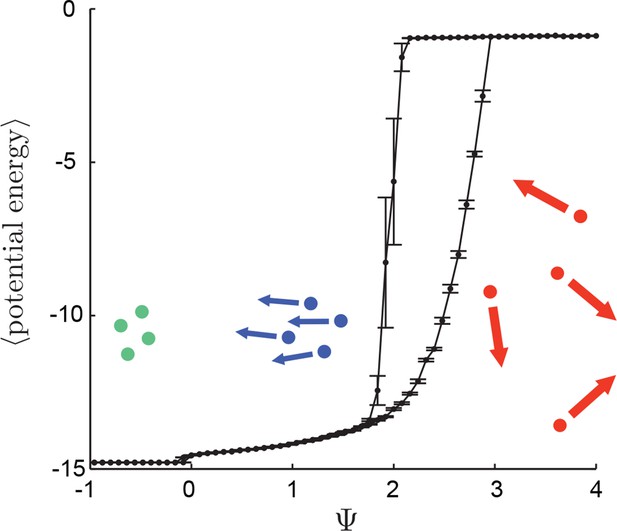

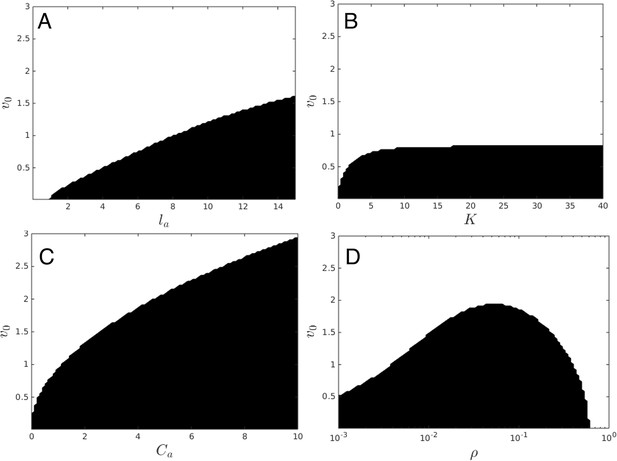

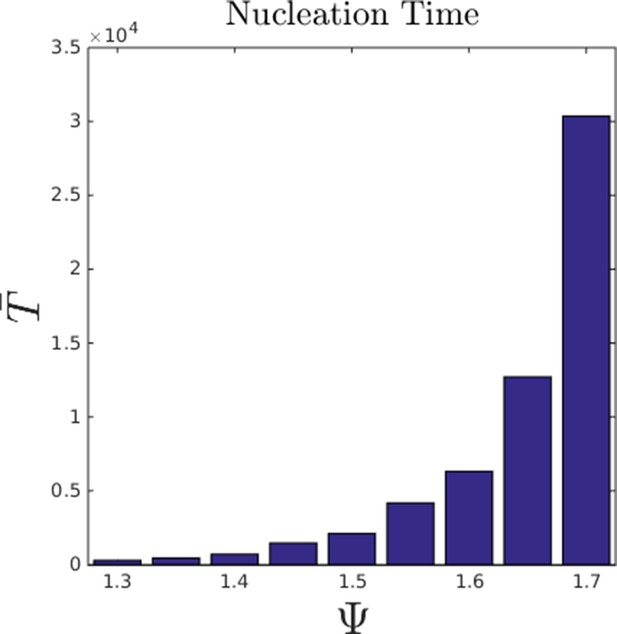

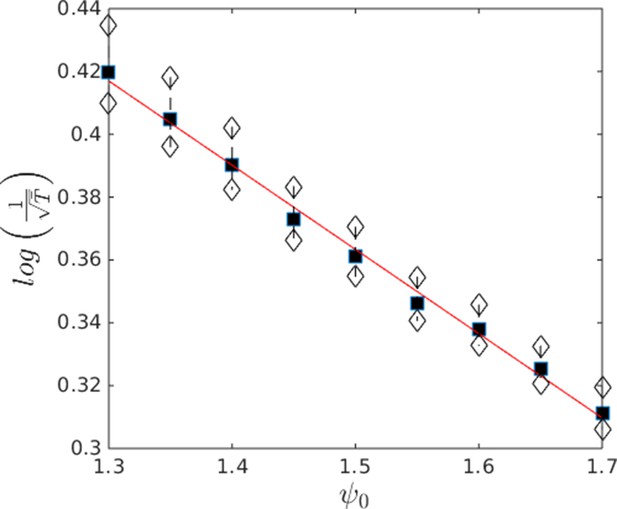

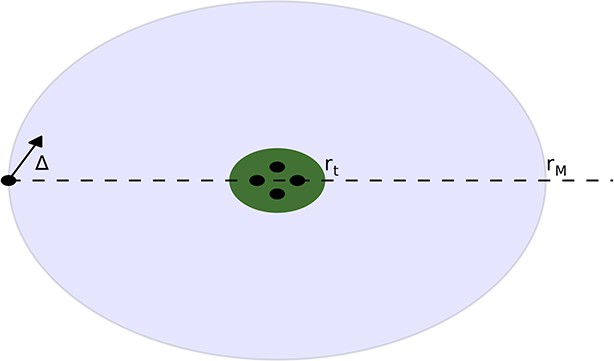

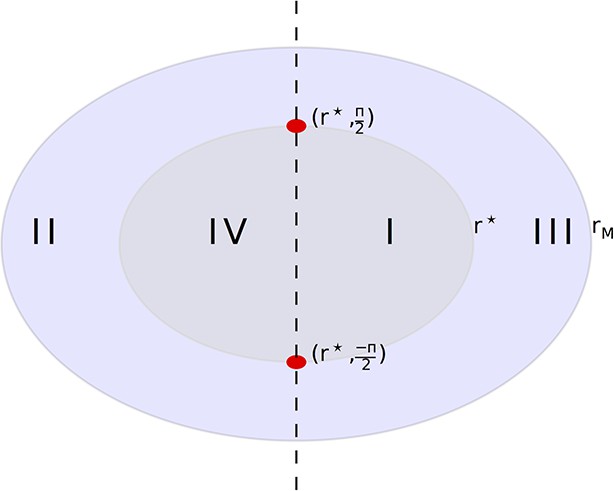

That individuals in evolutionarily stable populations have intermediate baseline speeds and intermediate environmental sensitivities (Figure 1D–E) raises a question: what determines the evolutionarily stable values of these traits? It is tempting to conclude that these trait values are determined by the nature of the environment alone. However, the fact that the evolutionary trajectories of social and asocial populations are so different (Figure 1), suggests that the collective behaviors discussed above strongly influence the outcome of evolution. Analysis of Equations 1–4 reveals that the preferred speed parameter divides the dynamical behavior of populations into distinct collective states (Figure 3; analysis in Appendix section 5). For , individuals have a preferred speed of zero and the inter-individual distances are governed by initial conditions. In this state, individuals resist acceleration due to social interactions. For small , individuals form relatively dense groups that move through the environment as collectives, either milling, swarming, or translating (D’Orsogna et al., 2006), the collective motions exhibited by real schooling fish (Tunstrøm et al., 2013). Individual speeds are relatively low and inter-individual distances are short. For large , inter-individual distances are large, and individuals move through the environment quickly. Dynamic changes among theses states are evident in Video 2. These collective states are also clearly distinguishable in Figure 3 ( and ) and Appendix Figure 9 (), and are separated by abrupt changes in the distances between near neighbors (the inverse of local density, Figure 3) or potential energy (Appendix Figure 9). The location of transitions between states depends on the parameters of the social response rule (e.g., number of neighbors an individual pays attention to ; Figure 4). The transitional regimes between these states are reminiscent of the first-order phase transitions that occur in some physical systems, for example at the transition between liquid water and water vapor. As in the liquid-vapor phase transition, transitions in collective state are characterized by strong hysteresis (Figure 3). If the population begins with large , mean distance to neighbors remains stable for decreasing and then decreases abruptly (Figure 3, Appendix Figure 9 upper curve). If is then increased, mean distance to neighbors increases but follows a different functional relationship with (Figure 3, lower curve). We refer to the collective states as station-keeping (; see Appendix Figure 9), cohesive (small ), and dispersed (large ). The analogy between transitions in collective state in our system and first order phase transitions in physical systems can be made more precise by analyzing the formation rate of groups when is in the hysteresis region. In the hysteresis region, the rate at which groups of individuals form spontaneously (and therefore nucleate a transition from the dispersed to cohesive state) depends strongly on ; when is near the upper bound of the hysteresis region, the time required for a group to form spontaneously is very long (see Appendix section 5.4). From a thermodynamic perspective, this makes the spontaneous formation of groups extremely unlikely, which explains why populations that begin in the dispersed state follow the upper branch of the hysteresis curve shown in Figure 3.

Hysteresis plot of the distance to 10 nearest neighbors, averaged over the entire population (points and error bars) as a function of preferred speed parameter in a uniform environment.

Figure produced by starting with a population with in a uniform environment. Population is allowed to equilibrate for 5000 time steps and is then computed. is then lowered. This process is repeated until , at which point the same procedure is used to increase . Upper curve corresponds to decreasing . Lower curve corresponds to increasing . Regimes where and correspond to transitions between collective states. Points and (error bars) correspond to mean ( 2 standard errors) of 50 replicate simulations. Parameters as in Figure 1 with .

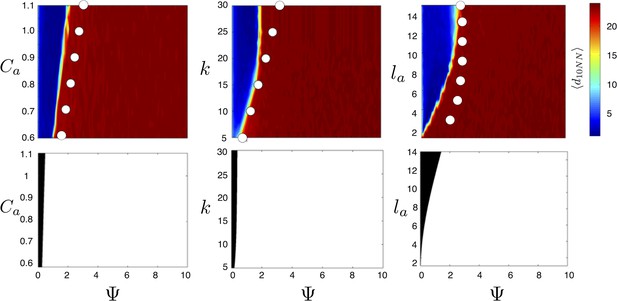

Evolved populations are positioned near transitions in collective state.

Upper panels show mean distance to 10 nearest neighbors (, color scale) from simulated populations. A separate populations is simulated in a uniform environment for each value of the social attraction strength (), number of neighbors an individual reacts to (), and the decay length of social attraction () parameters. Red is low density corresponding to dispersed state, and blue is high density corresponding to cohesive state. Points show the mean value of of populations in the EESt (populations evolved for 1,000 generations in an environment with dynamic resource peaks). Evolved populations are positioned near transition between cohesive and dispersed states. Lower panels are based on analytical calculations and show the predicted regions in which the dispersed state is stable (white) and unstable (black, Appendix section 5). Parameters as in Figure 1 with , , , , , and .

For a wide variety environmental conditions (Appendix section 2) and social parameters (Figure 4), the evolutionarily stable trait values have a notable feature: the evolved values of the baseline speed parameter, , place individuals in the population slightly above the transition between cohesive and dispersed states when (Figure 4, upper panels, Figure 5; points in both figures show mean values of population in the ESSt), and the evolved environmental sensitivity, , is large enough that locally, groups of individuals cross from the dispersed state through the cohesive and station-keeping states in regions of the environment where the resource value is high (Figure 2A, colors indicate instantaneous value of for each individual). In other words, the evolved values of and allow local subpopulations to undergo sudden changes from one collective state to another in the proximity of favorable regions of the environment. Importantly, the approximate location of the transition between cohesive and dispersed states can be predicted by directly analyzing Equations 1–4 without considering details of the environment, or the mapping between behavior and fitness (Figure 4 compare upper panels [simulation] to lower panels [analytical prediction]). While the precise evolutionarily stable values of depend on the parameters of the environment (Appendix section 2), the evolutionarily stable values of place the population near the cohesive-dispersed transition in many different kinds of environments (Appendix Figure 5). As we show below, being near this transition allows groups to respond quickly to changes in the environment. Our results demonstrate, that such locations in behavioral state-space are, in fact, evolutionary attractors.

Mean distance to nearest neighbors (curves) and ESSt value of (points) as a function of social parameters.

Points denote mean ESSt value of . Note abrupt transitions in density as function of , as shown in Figure 3. In all cases, ESSt value of causes populations to cross transition when resource value is high (i.e., , where is maximum resource value of each peak). Densities and ESSt values generated as described in Figure 4.

The evolutionary results presented in Figure 1 assume that individuals do not appreciably deplete the resource. We can explore an alternative scenario in which resource peaks are depleted through consumption (Appendix section 2.8). In that case, the th individual consumes resources at a rate per time step. We repeated evolutionary simulations assuming either a high or low rate of resource consumption . For high consumption rate (100 individuals can deplete a peak in roughly five time steps), still increases so that individuals are attracted to one another through social interactions, but selection for large is much weaker than the case shown in Figure 1C (see Appendix Figure 7). Moreover, and increase continually through evolutionary time. This result is intuitive because when resources are depleted rapidly, the locations of neighbors convey little information about the future location of resources and transitioning from the dispersed to cohesive state may actually be maladaptive. By contrast, when individuals consume the resource at a more moderate rate (Appendix Figure 7), evolutionary trajectories parallel the trajectory shown in Figure 1C–E; there is strong selection for high , reaches a stable value that is situated directly above the hysteresis region shown in Figure 3, and evolves to a stable value that is large enough to allow individuals to cross from dispersed to cohesive, and station-keeping states in regions of the environment where the resource value is high.

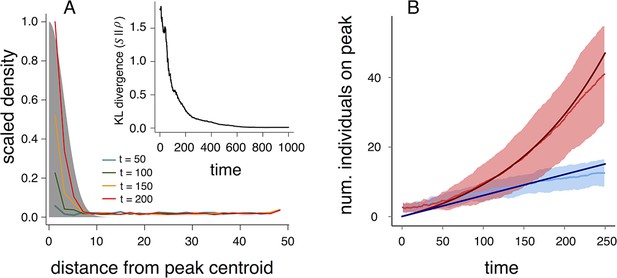

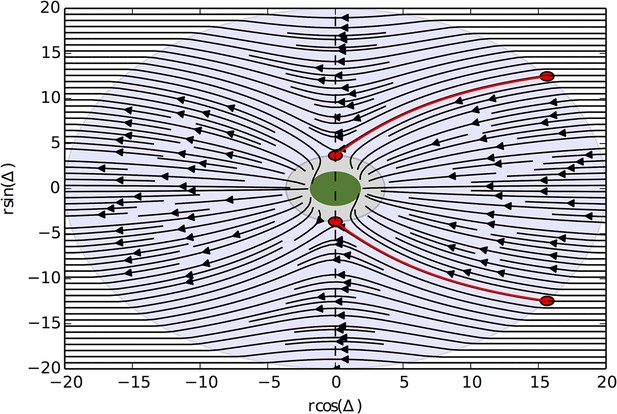

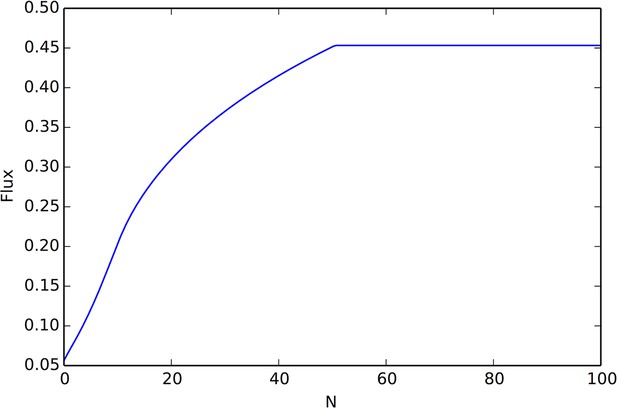

Changes in collective state allow for rapid collective computation of the resource distribution

Why do populations of selfish individuals evolve behavioral rules that place them near the transition between collective states? Dispersed, cohesive, and station-keeping states are each associated with a characteristic density (low, intermediate, and high, respectively; Figure 3, Appendix Figure 9). If individuals enter the cohesive and station-keeping states where the resource level is high, the density of individuals becomes strongly correlated with the resource distribution (Figure 6A). The similarity between the distribution of individuals and the distribution of the resource can be quantified by the Kullback-Leibler divergence (KL divergence), an information-theoretic concept that measures the distance between two distributions (Figure 6A inset). Though individuals cannot sense resource gradients, they can detect gradients in the density of their neighbors (Equation 1), and can therefore move up the resource gradient.

Collective computation and social gradient climbing.

(A) Collective computation of the resource distribution (grayscale represents resource value, normalized to maximum of 1). Curves show local density of individuals at different distances from the resource peak center (maximum value also normalized to 1). Note the rapid accumulation of individuals near the peak center. The distribution of individuals becomes increasingly concentrated in the region where the resource level is highest; inset shows that the Kullback-Leibler divergence between the resource distribution and the local density of individuals decreases through time as the two distributions become more similar. (B) Number of individuals near peak center (within one decay length, , of peak center) as a function of time. Red and blue points and confidence bands represent means sd. for 100 replicate simulations. Red points and band is ESSt population and blue points and band is an asocial population with the same parameter values. Curves are analytical predictions based on Equations 3 and 4 (Appendix section 6).

The abrupt transitions in the density of individuals between dispersed and cohesive states (Figure 3) mean that there is a strong density gradient in regions of the environment where individuals in the dispersed state border individuals in the cohesive state (e.g., Figure 2A, 6A, Video 2). This suggests that the behavior of an individual in this region can be approximated by considering only its interactions with individuals that are on the resource peak (i.e., where density is high). Using this assumption, we derive analytically the rate at which new individuals join (or rejoin) a group on the resource peak (Appendix section 6.5). Asocial individuals arrive at a resource peak at a rate , where is a constant (Figure 6B, blue curves and points; Equation A65). However, social individuals initially arrive at a rate that increases as more individuals reach the peak, such that the number of individuals on the peak, , increases exponentially with time: , where and are positive constants (Figure 6B, red curves and points; Equation A68–A70). Analytical calculations (Figure 6B, solid lines) agree well with results of numerical simulations (Figure 6B, points and confidence bands). The rapid accumulation shown in Figure 6 is especially important when the environment changes quickly with time; it allows groups to respond swiftly to changes in the resource field and enables the emergent resource tracking behavior described above.

The form of Equations (3–4) implies that an individual’s behavioral response combines personal information about the environment (Equation 2) with social cues (Equation 1). In fact, under a time rescaling, our model is equivalent to one in which the relative strength of social forces varies across the environment (Appendix section 4). The tradeoff between using social information and personal information is inherent in social decision-making (Couzin et al., 2005; Couzin, et al., 2011). This tradeoff means that individuals with large and are, by default, less responsive to their neighbors. Perturbing the values of and of individuals in populations at the ESSt show that, in populations with high mean , individuals fail to form large groups and are poor at tracking resource peaks (Appendix section 2.6, Appendix Figure 6). In populations with high mean values of , individuals form groups (Appendix section 2.7), but fail to exploit regions with the highest resource quality. Individuals with low values of or form groups but do not effectively track dynamic resources (Appendix section 2.7).

Discussion

Our model demonstrates that selection on the behavioral phenotypes of selfish individuals can lead to the rapid evolution of distributed sensing and collective computation. The mechanism that promotes this evolution involves the use of public information: when individuals respond to the environment by slowing down in regions of high resource quality – a behavior that is adaptive even in the absence of social interactions (Appendix Figure 2) – their positions become correlated with the locations of resources. Social individuals can exploit this public information by climbing gradients in the density of their neighbors. As in simple, game-theoretic models of social foraging (e.g., Clark and Mangel, 1984), social individuals gain a fitness advantage by using information about the environment gleaned by observing neighbors. Because of this, asocial populations are readily invaded by social mutants and collective behaviors evolve (Appendix section 2).

Evolutionarily stable populations occupy a distinctive location in behavioral state space: one in which small changes in individual behavior cause large changes in collective state (Figures 4, 5). When individuals respond to local environmental cues by accelerating or decelerating, local populations transition between the collective states shown in Figure 3 (e.g. Figure 2A). This creates the strong spatial gradient in population density (Figure 6A) and allows groups to track dynamic features in the environment rapidly. Perturbations of this evolutionarily stable state cause individuals either to weigh social information too heavily (i.e., small and/or ), in which case groups fail to explore effectively (Video 3, Appendix Figure 7), or to weigh personal information too heavily (i.e., large and/or ), in which case individuals fail to exploit the social information that enables dynamic resource tracking (Video 4, Appendix Figure 7). Because of this, mutants with phenotypes far from the evolutionarily stable state are removed from the population by natural selection. The transitions we observe in collective state bear a resemblance to phase transitions in physical systems, and our results lend credence to the hypothesis that natural selection can result in the evolution of biological systems that are poised near such bifurcation points in parameter space. Importantly, we show that these high-fitness regions of parameter space can be predicted a priori from the structure of individual decision rules, even without knowledge of the environment.

Population with mean below the ESSt value.

Responses of perturbed ESSt population to dynamic resource peaks. All parameters as in Video 2 except that each individual’s value of parameter is lowered so that the population mean . Note swarms of individuals form in regions of the environment that are far from resource peaks. Individuals explore poorly and therefore have low fitnesses.

Population with mean above the ESSt value.

Responses of perturbed ESSt population to dynamic resource peaks. All parameters as in Video 2 except that each individual’s value of parameter is increased so that the population mean . Note that individuals do not form large groups near resource peaks and fail to track peaks as they move.

Collective computation is a notion that has strongly motivated research on animal groups (Berdahl et al., 2013; Couzin, 2007; Cvikel, et al., 2015). In our model, populations perform a collective computation through their social and environmental response rules. When individuals are exposed to a heterogeneous resource environment, their responses to the environment cause a modification of the local population density; individuals aggregate in regions where the resource cue is strong. The population performs a physical computation in the formal sense (Schnitzer, 2002): physical variables – the positions and relative densities of neighbors – represent mathematical ones – spatially resolved estimates of the quality of resources in the environment. The environments considered in our study bear a strong resemblance to those encountered in dynamic coverage problems in distributed control theory (Bachmayer and Leonard, 2002), dynamic optimization problems (Passino, 2002), and Monte Carlo parameter estimation (McKay, 2003). Combining an evolutionary approach to algorithm design with collective interactions may therefore be a useful starting point for optimization schemes or control algorithms for autonomous vehicles, particularly if the structure of social interactions leads to bifurcation points in behavioral parameter space as in the model studied here.

Understanding the feedback loop between individual behavior, collective behavior of populations, and selection on individual fitness is a major challenge in evolutionary theory (Guttal and Couzin, 2010; Torney et al., 2011; Pruitt and Goodnight, 2014). Our framework closes this loop and demonstrates how distributed sensing and collective computation can evolve through natural selection on the decision rules of selfish individuals.

Materials and methods

Resource environment

Request a detailed protocolOur model of the resource environment incorporates three salient features of the resource environments that schooling fish and other social foragers encounter in nature. These features are: 1) spatial variation in resource quality, 2) temporal variation in resource quality, and 3) characteristic length scales of resource patches (Stephens et al., 2007; Bertrand et al., 2008; Bertrand et al., 2014). Accordingly, we model a two-dimensional environment in which the resource is distributed as a set of resource peaks. We assume the boundary of the environment is periodic such that individuals, inter-individual potentials, and resource peaks are all projected onto a torus. Each of the peaks decays like a Gaussian with increasing distance to the peak center. The value of the resource in a single peak at a location, , is given by

where is a constant that determines the resource value at the peak center and is a decay length parameter, and is the location of the centroid of the peak of interest. The total resource value the th individual experiences is the sum over all peaks in the environment. Each peak moves according to Brownian motion with drift vector and standard deviation . At each time step, each peak has a probability of disappearing and reappearing at a new location, chosen at random from all locations in the environment.

Appendix

1 Social interaction rules

1.1 Model of social interactions

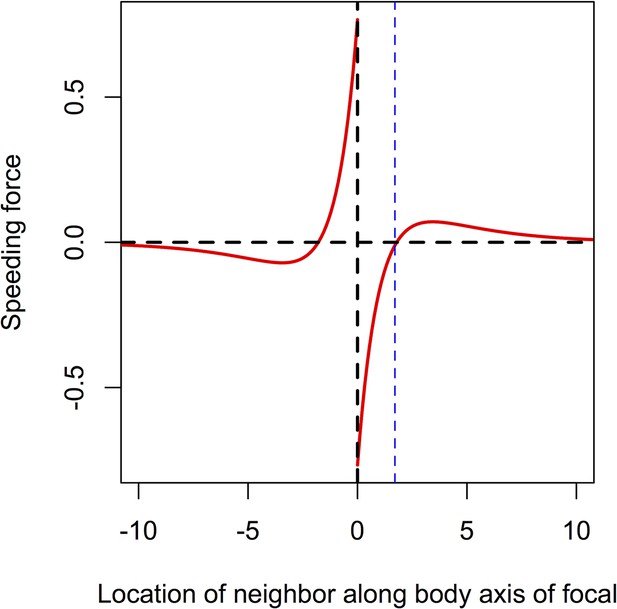

Past individual-based models that include social interactions have often depicted social interactions by assuming that individuals monitor metric 'zones'. Individuals avoid neighbors in a small zone of avoidance, and align and move toward neighbors within larger zones of social interactions (e.g., Guttal and Couzin, 2010; Couzin et al., 2002; Chou et al., 2012). Here, we use an alternative model that depicts social interactions as forces that act to modify individuals’ accelerations. This approach is closely related to force matching methods that have been applied to data to infer the strength of pairwise social interactions among individuals. We assume that social forces depend on distance in a way that creates short-range repulsion among individuals, strong intermediate range attraction, and weak attraction for longer ranges in agreement with results of Katz et al. (2011). We model the social forces on a focal individual, , by the following equation:

where, as described in the Main Text, and are the position and velocity of the th individual, respectively, is the two-dimensional gradient operator, the term in brackets is a social potential, , , , and are constants, and the set is a set of the nearest neighbors of the th individual, where a neighbor is an individual within a distance of of the focal individual. Appendix Figure 1 shows the effective force exerted on a focal individual by a neighbor located along the focal individual’s trajectory, either behind (-axis ) or in front of (-axis ) the focal individual [compare to Appendix Figure 2 of Katz et al. (2011)]. Unlike many past models of interactions among individuals, we do not assume that individuals explicitly align with one another. However, because the r.h.s of Equation A1 is proportional to the gradient of a social potential, social interactions can cause the focal individual to turn. This turning toward neighbors causes the social gradient climbing behavior described in the Main Text and discussed in detail in Appendix section 6 below.

Speeding force as a function of the location of a single neighbor.

On the left of the origin, the neighbor is behind the focal individual. For very short distances, the neighbor exerts a positive speeding force on the focal individual, causing it to accelerate. For longer negative distances, the speeding force on the focal individual is negative; the focal individual decelerates to come closer to its neighbor. To the right of the origin the neighbor is in front of the focal individual. For short distances, the speeding force on the focal individual is negative, causing it to slow down. For longer positive distances the speeding force exerted on the focal individual is positive; the focal individual speeds up, closing the distance between it and its neighbor. The distance corresponding to repulsion only is shown with the blue line. Parameters as follows: Ca = 1, Cr = 1.1, la = 3, lr = 1.

1.2 Definition of an asocial individual

To illustrate the collective behavior and evolution of social individuals it is useful to compare social individuals to individuals that are not influenced by social attraction. We refer to such individuals as 'asocial' and define them in terms of Equation A1 by setting to a value that corresponded to the distance at which the gradient of the social potential for a pairwise interaction is equal to zero (blue line in Appendix Figure 1: point at which potential crosses zero). We define asocial agents in this way because the short-range repulsion included in the inter-agent potential shown in Appendix Figure 1 represents collision-avoidance–a behavior that should be common to all individuals, regardless of whether they are socially attracted to one another. While this definition of an 'asocial' individual is more biologically sensible, we have also tried modeling asocial individuals by assuming that the r.h.s. of Equation A1 is equal to zero for all individuals (this assumes, for instance, that these individuals are not limited to finite local density); this approach does not qualitatively change the results presented below and in the Main Text.

2 Evolutionary dynamics

2.1 Selection algorithm

To understand the connection between the evolution of collective behaviors and selection on the performance of individuals, we implement a simple evolutionary algorithm similar to that used in Guttal and Couzin (2010). In the first generation, agents with heterogeneous values of and and are initiated in an environment with resource peaks. The number of agents remains constant across generations, and generations are non-overlapping. Each generation consists of a simulation run for 5,000 or 10,000 time steps over which we calculated the mean resource value experienced by each individual in the population. At the end of each generation, individuals are selected from the population (with replacement) to reproduce themselves, yielding a total of new offspring. An individual’s probability of being selected for reproduction is proportional to its mean resource value, normalized by sum of mean resource value over all individuals in the population. Individuals that perform well are more likely to be selected to reproduce and are likely to produce more offspring than individuals that perform poorly. The selection probability of the th individual is defined as follows:

where is the instantaneous resource value of individual , and angular brackets represent time-averaging over the particular generation under consideration. If an individual is selected for reproduction, a child is produced in the next generation with equal to that of the parent, with a small mutation. The value of an offspring is equal to the value of its parent, plus a normally distributed random number with mean zero and variance :

where is the value of an offspring of individual . The and traits of offspring were determined in the same way.

2.2 Evolution of asocial populations

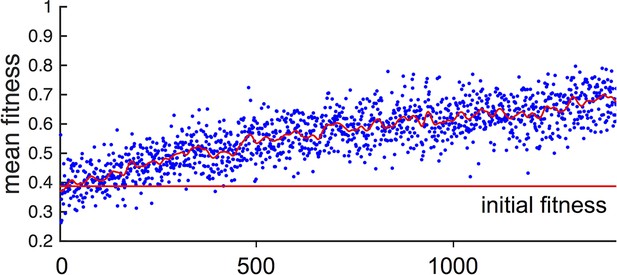

In general, populations of asocial individuals evolve to have increasing and values. While fitnesses of individuals in these populations are well below fitnesses of individuals in the evolutionarily stable states discussed below (see Main Text Figure 1F), selection on asocial populations still leads to an increase in mean fitness (Appendix Figure 2). This occurs because, as evolution progresses and and values evolve, asocial individuals spend more time in regions of the environment with high resource value.

Fitness of asocial population through evolutionary time.

Blue points indicate mean fitness of population in each generation. Horizontal red line indicates mean fitness of population over first ten generations. Corresponding and values for each generation are shown in Figure 1A,B of the Main Text.

2.3 Establishment of evolutionarily stable state (ESSt)

We allow populations to evolve according to the algorithm described above. Initial values of and phenotypes are drawn at random from uniform distributions between 0 and 6. Initial values of are drawn with uniform probability from the interval . The distribution of trait values quickly stabilizes for all three phenotypic traits as shown in Figure 1C–E of Main Text. We refer to this evolved state as an evolutionarily stable state (ESSt, Guttal and Couzin, 2010). The persistent variance in the distribution of , , and are partially due to mutations in the value of these traits, which are continually introduced into the population. We therefore expect such persistent of inter-individual variation in phenotype as a result of mutation-selection balance.

2.4 Robustness of ESSt

To evaluate the robustness of the evolutionarily stable state (ESSt) described in the Main Text, we performed evolution under invasion by phenotypes that are both near to, and far from the ESSt. We initiated the population with trait distributions from the ESSt (selected from the final generation of simulations used to establish ESSt). Then in each generation, we selected individuals to reproduce and applied ordinary mutations as described above. However, before initiating the next generation, a single individual was chosen to serve as an invader. That individual’s phenotype was replaced by values (, , and ). and were chosen with uniform probability from the interval and is chosen with uniform probability from the interval . Though these intervals are somewhat arbitrary, we note that and must ultimately be bounded above by limits on the speed that individuals can sustain, and by limits on the distance over which individuals can perceive one another, respectively. should also be bounded above because it is limited by the rate at which individuals can accelerate (decelerate) in response to changes in the measured value of an environmental cue. Thus, all three traits are bounded above due to physical constraints. Applying higher bounds on these trait values did not qualitatively change our conclusions.

Appendix Figure 3 shows a typical evolutionary progression when a population at ESSt acquires mutations (i.e., small changes in phenotype) and receives an invader in each generation. Although invaders from across the phenotype space are introduced into the population (Appendix Figure 3 blue dots across phenotype space), none of these invaders establishes for more than a few generations (Appendix Figure 3 blue dots become extinct after few generations). The ESSt is resistant to invasion by both nearby phenotypes, introduced through ordinary mutation, and phenotypes far from the ESSt, introduced through invaders. We therefore refer to the ESSt as robust.

Evolution of traits under invasion by mutants far from the ESSt.

Example evolutionary progression for , and . Note that invaders (blue points introduced across phenotype space) do not establish and the dominant trait values in the population do not change over evolutionary time. Color indicates frequency of phenotype in population (white = 0, blue = low frequency, orange = high frequency).

2.5 Invasion of asocial population by social strategy

To determine whether phenotypes from the ESSt could invade a population of purely asocial individuals, we performed another set of evolutionary simulations in which we initiated populations with asocial individuals and a single individual, chosen at random from the ESSt. Appendix Figure 4 shows evolutionary progressions from this initial state. In panel A, the full trait distribution of the population is shown. The social invader increases in frequency and sweeps the population of asocials. Replicate invasions show a very similar progression (Appendix Figure 4B). The final distribution of trait values matches the ESSt. The change in phenotypes that occur when the ESSt phenotype invades the asocial population lead to a dramatic increase in mean fitness (Appendix Figure 4C) and a decrease in the range of fitnesses of different individuals in the population.

Typical progression of evolution from initial state with N − 1 asocial individuals and individual selected from the ESSt population.

(A) Invader from ESSt increases in abundance and sweeps population. (B) Mean trait values in 50 replicate invasions (each line is a separate invasion from the same initial state with N − 1 asocial individuals). ESSt phenotype quickly invades and sweeps the population in all cases. (C) Mean and coeffient of variation in fitness corresponding to the invasion shown in A.

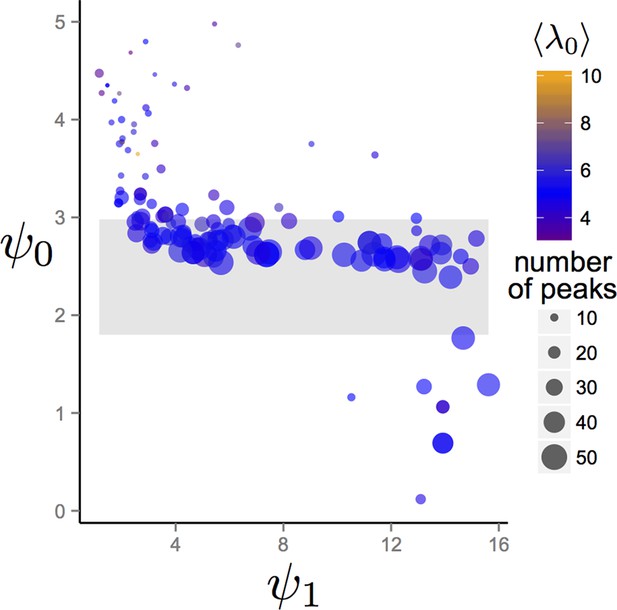

2.6 Dependence of evolutionary outcomes on the environment

One of the conclusions drawn in the Main Text is that the trait values of the evolved population at the EESt correspond to a location in behavioral state space where the population is in the dispersed state in regions of the environment with low resource quality, and that the population transitions from the dispersed to cohesive and station-keeping states in regions of the environment with high resource quality. We evaluated whether this conclusion holds, more generally, by evolving populations in more complex environments in which environmental properties were selected at random. We initialized trait values of populations as described in Establishment of evolutionarily stable state (ESSt) above. However, to generate the environment, we chose the number of Gaussian resource peaks at random from 1 to 50 with uniform probability. The maximum resource value of each peak and the variance of the two-dimensional Gaussian peak shape were also chosen at random. Maximum resource value was chosen with uniform probability from the interval and variance was chosen with uniform probability from the interval . Finally, the variances of all peaks in a given simulation were rescaled so that the sum of the integral of all peaks over the environment was equal to . We enforce this latter condition to ensure that resource peaks are small relative to the size of the environment. All other parameter values were those listed in Figure 1 of the Main Text, except that was 300.

We allowed populations to evolve for 1500 generations and recorded values of , , and that evolved. Appendix Figure 5 show mean and trait values after 1500 generations for evolutionary simulations with different environmental conditions. The gray band in Appendix Figure 5 corresponds to the region of hysteresis between cohesive and dispersed states shown in Figure 3 in the Main Text. With the exception of a small number of simulated evolutions (Appendix Figure 5, points below gray band) populations in all environments had mean trait values of in or above the hysteresis region. In all cases, the combination of and caused individuals to exhibit values of that were less than zero in the most favorable regions of the environment. Thus, for the large majority of random environmental conditions we generated, individuals transition from values that correspond to the dispersed state, through values that correspond to cohesive and station keeping states in favorable regions of the environment.

Trait values after 1500 generations of evolution in randomly generated environments.

Each point represents the mean trait values of a single population that has been allowed to evolve for 1500 generations. Point sizes denote the number of peaks that were present in the environment. Point colors represent the maximum resource value λ0 averaged over all peaks present in the environment. Gray region corresponds to the region of hysteresis shown in Figure 3 of the Main Text. Number of peaks and peak parameters were chosen at random. All other parameters as in Figure 1 of Main Text.

2.7 Perturbation of populations around the ESSt

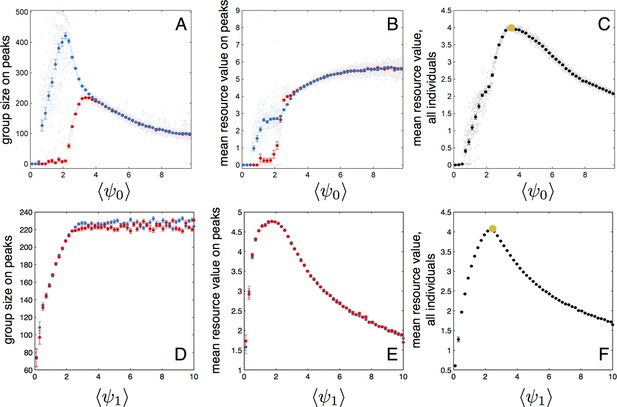

To further understand how the evolutionarily stable trait values lead to high individual fitness we perturbed the entire populations at ESSt by shifting either or of all individuals in the population. This resulted in a change in the mean value of these traits over the entire population. We then simulated the dynamic behaviors of the new perturbed population in a simplified environment containing two resource peaks. Initially, all individuals were located in a single group near one of the peaks (the starting peak). Appendix Figure 6 shows that, for fixed , group sizes and mean fitness vary strongly as a function of the mean value of of the population (both and are taken from a population at ESSt and values of are shifted to change of the population). For small values of , individuals track the starting peak but do not find the second peak (Appendix Figure 6A, blue and red points, respectively). As reaches approximately 2.2, individuals begin to form a group on the second resource peak (Appendix Figure 6A, red points denoting size of group nearest second peak begin to increase). Mean performance of individuals in the group nearest the second peak rapidly increases (Appendix Figure 6A, red points rapidly increase for ). When performance is averaged over the entire population, there is a clear maximum at (Appendix Figure 6C), the value corresponding to mean (and modal) for the evolved population in the ESSt (orange point in Appendix Figure 6C). Selection on fitnesses of individuals and optimization for maximum fitness of the entire population lead to the same value of . For larger values of , the average performance over all individuals begins to decline (Appendix Figure 6C) because fewer individuals aggregate near peaks. Perturbations of also lead do decreases in mean fitness at the population level (Appendix Figure 6F). For mean below that of the ESSt, individuals form small groups near peaks (Appendix Figure 6D). For mean above that of ESSt, individuals form large groups, but individuals in the groups near peaks have low fitness because they do not effectively aggregate near peak centers (Appendix Figure 6E).

Performance of populations near the evolutionarily stable state.

(A) The number of individuals on each peak (the starting peak, blue; the second peak, red) as a function of the mean baseline speed parameter, of a population perturbed from the ESSt. Below a of roughly 2.2, individuals do not form a large group on the second peak. (B) Mean resource value of individuals on the starting peak (blue) and second peak (red). (C) Resource value averaged over all individuals in the population (individuals in groups nearest each peak and all other individuals in the environment). Note maximum value occurs in the regime where individuals aggregate on both the starting and second peaks (3.6). Orange point indicates values corresponding to ESSt. (D-F) Group size (D), mean resource value of individuals on peaks (E), and mean resource value of all individuals (F) as a function of the mean environmental sensitivity parameter of a population perturbed from ESSt. Orange point in (F) indicates values corresponding to ESSt. Note rapid decrease in mean fitness for perturbations in both directions. Semitransparent points are results of 2000 individual simulation runs. To compute means and standard errors, simulation runs were divided into 50 evenly spaced bins. Bolded points and error bars show mean of each bin 2 standard errors.

2.8 Evolution with resource consumption

As described in the Main Text, social interactions confer a fitness advantage to social individuals at least in part because the positions and local densities of a given individual’s neighbors contain information about the spatial distribution of resources. However, if individuals quickly consume resources, this may break down. For example, areas in which the density of neighbors is currently high may no longer contain resources in the near future if those neighbors consume the resources. To explore how resource consumption affects evolutionary dynamics, we repeated evolutionary simulations assuming individuals consume the resource. To model resource consumption, we assume each individual consumes resources at a rate given by the product of the resource value at its position and a consumption rate constant . At each time step, the height of peak , , is reduced by the sum , where is the location of the peak and is the number of individuals in the population. We assume individuals abandon a resource when falls below . To keep the number of resource peaks constant and the total amount of resource on the landscape from being completely depleted, we allow resource peaks that reach a height of to regenerate at a new location chosen at random with equal probability from all points in the environment. The new peak has a peak height equal to the starting peak height, . Mean resource value for each agent is calculated in the same manner as in the case where peaks are not depleted.

Appendix Figure 7 shows the results of replicate evolutionary simulations with high (A) and low (B) rates of resource consumption. In the case of high consumption (Appendix Figure 7A), individuals evolve to have increasing mean values of and , and values are well above the hysteresis regime between collective states. While values of still enable individuals to be attracted to one another at intermediate to large distances, the variation in values among replicate simulations suggests that there is not strong selection for large . When individuals consume resources at a lower rate (Appendix Figure 7B), results parallel those shown in Figure 1 of the Main Text; populations evolve mean values of that are directly above the hysteresis region, approaches a stable value, and approaches the maximum allowable value of 30.

Evolution of behavioral traits when individuals consume resource.

Lines show means of independent evolutionary simulations. (A) High consumption rate corresponding to fast depletion of resource peaks. (B) Intermediate consumption rate corresponding to slower depletion of the peaks. Note different axis limits in the top panels of A and B. Grey region corresponds to hysteresis region between collective states shown in Main Text Figure 3. Parameters are as follows: s*=2, high consumption rate = 3.2*10–3 (time step−1), low consumption rate = 8.0*10–5 (time step−1), N = 300. All other parameters as in Figure 1 of the Main Text. These consumption rates correspond to the case where 100 individuals near the peak center can deplete a peak in roughly five time steps (fast depletion, A), and the case where the same task takes 200 time steps (slower depletion, B).

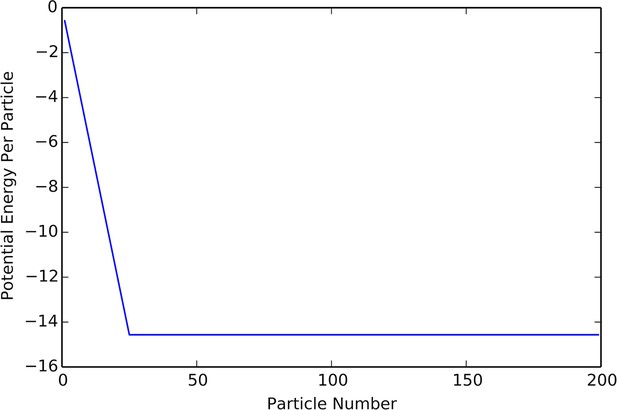

3 The cohesive state is characterized by a fixed, finite density

Agents obeying the equations described in the Main text exhibit several distinct collective states. One such state, which we call the cohesive state, is characterized by dense groups of agents occupying a fixed fraction of the environment. One of the salient properties of these groups is that they eventually reach a fixed density that becomes independent of group size. Using a small number of simple assumptions about the behavior of agents within a cohesive group, we are able to predict the density of agents directly from the model parameters. The motivation of our calculation comes from the structure of the equations, which include social potential terms and velocity-dependent self-propulsion terms. The social force on an agent is given by Equation A1. We will rewrite the social potential in Equation A1 (i.e., the term in brackets) as

The effect of the potential term in the equations is to exert a force on the entire system towards configurations where the potential energy is lower. The propulsive forces are non-conservative, causing phase-space volumes to contract, allowing the system to approach a potential energy minimum. We model the cohesive state as agents occupying a circular region of radius . Further, we assume that the probability distribution of agents within this circular region is uniform, so that agent density is given by:

The density lets us define an interaction radius which is expected to contain individuals:

This expression is valid when , which is the case we are interested in. When the interaction radius is simply the group radius, , and each agent interacts with every other agent. We calculate the expected potential by integrating over a circle of radius :

This integral evaluates to the following expression:

It is illustrative to write this expression after the substitution :

The density that minimizes will be the density of agents in the cohesive state. When written this way, it is clear that will not influence the location of the minimum of , as only appears in the expression as a constant multiplier. Thus, when we expect cohesive groups to have a constant density, so that the radius of a group grows like . These predictions match the results of our simulation quite closely, which one can see from comparisons between Appendix Figure 8 to the lower branch of the hysteresis plot in Appendix Figure 9.

Plot of potential energy per agent as a function of group size.

Cutoff at N = K indicates constant density.

Hysteresis plot of potential energy averaged over the entire population (compare with Figure 3 in Main Text).

Figure produced by starting with a population with Ψ = 4 in a uniform environment. Population is allowed to equilibrate for 5,000 time steps and then average potential energy is calculated using Equation 4 in the Main Text. Ψ is then lowered. This process is repeated until Ψ = −1, at which point the same procedure is used to increase Ψ. Upper curve corresponds to decreasing Ψ. Lower curve corresponds to increasing Ψ. Note drop in mean potential energy at Ψ = 0. We refer to the states on either side of this transition as station-keeping (Ψ < 0) and cohesive Ψ > 0 and below upper transitional regime at Ψ ∼ 1.7. Points and (error bars) correspond to mean (and 2 standard errors) of 50 replicate simulations. Parameters as in Figure 1 of the Main Text with lmax = 30.

That density is necessarily constant with increasing is a hallmark of topological interaction laws which are repulsive at short range. When there is no restriction on the number of interaction neighbors, an interaction law of the type that we use can give rise to catastrophic behavior, where the group density increases without bound with increasing (D’Orsogna et al., 2006). One feature of the topological interaction is that it allows for biological realism for agent parameter values that would otherwise lead to catastrophic behavior.

4 Relationship between and the relative strength of social forces

In order to better understand how changing parameters affect our model, we ignore stochasticity and consider the equations for the acceleration and velocity in a homogeneous environment without resources (so that the background velocity is constant). First we define:

The equations become:

Let be a characteristic length scale in this problem, let be a characteristic velocity scale, and let be a characteristic time scale. Then, let , the attraction coefficient, be the scale of the potential. We non-dimensionalize our equations by rewriting them in terms of the dimensionless variables:

The resulting dimensionless equations are:

The non-dimensional number measures the relative strength of the social potential. When becomes large, social forces become negligible. The reason for this effect is that agents begin moving too quickly for the social forces to have any appreciable effect on their trajectories. Therefore, for constant and , an alternative interpretation of is as a term that dictates the relative strengths of autonomous versus social forces.

5 Continuum description predicts cohesive and dispersed state

Figure 3 in the Main Text illustrates that populations exhibit distinct regimes, which we refer to as collective states, as a function of the preferred speed parameter. Two states are evident in Figure 3 in the Main Text: a state with short inter-individual distances for small and a state with large inter-individual distances for large . A third state is evident if the mean potential energy of all individuals in the population is plotted as a function of in a uniform environment, where potential energy is calculated from Equations 3 and 4 in the Main Text. For there is a distinct drop in potential energy for decreasing (Appendix Figure 9). We refer to the state that occurs for as station-keeping in the Main Text.

In order to better understand the behavior of agents in the context of our model, we have developed a continuum equation for the time evolution of agent density. In the context of a homogeneous environment this description can be used to predict the points in parameter space at which the uniform, purely solitary state becomes unstable, and to demonstrate that heterogeneous states cannot be stable at high enough background velocity. Although the continuum description is only an approximation, it is able to qualitatively predict many of the features of our multi-agent simulations, which makes the mechanisms responsible for this behavior mathematically more explicit. In order to derive continuum equations, we begin with a Liouville equation for the probability density of all the particles within the full phase space, and derive a hierarchy of equations by taking moments (Flierl et al., 1999; Born and Green, 1946). This hierarchy can be closed by assuming that stochastic forces are sufficiently strong to ensure independence of the individual agents. For the analysis presented here, we assume that the agents travel at a constant velocity (using the angular variable to describe the direction of the velocity), and that there is noise in the angular velocity driven by a Wiener process with variance per unit time. The assumption of a constant velocity implies that we have taken a limit where , , and go to infinity, though their ratios remain constant. We will let and stand for the limiting ratios of the original model. We will denote the space of positions by , which will be a 2-torus with length . The assumption of a constant velocity and of angular noise lead only to small quantitative changes in agent behavior, and they make it possible to analyze the resulting equations.

Therefore we begin with the following set of stochastic differential equations:

Here is the social force on agent , and is the angular direction of the social force on agent . We assume that this force is produced through a topological interaction of the following form:

Here is the set of closest neighbors to agent , and is an interaction kernel.

From these equations, we are able to write a Liouville equation by introducing a probability density on phase space, , where is the set of agent positions and is the set of agent directions. The value of at a given set of positions and directions is the probability that the each of the agents have the specified positions and directions. The Liouville equation is:

In order to simplify this equation, we assume that the probability density function can be factorized into the product of identical single particle probability density functions:

This assumption is equivalent to assuming statistical independence of the positions and directions of the agents, a condition which could be reached either through large stochasticity or ergodic single particle trajectories. The assumption allows us to derive a closed equation for the single particle probability density function , in a similar fashion to a closure of the usual BBGKY hierarchy in kinetic theory.

Then, we are able to write the following equation for the single agent probability density function (where we have replaced a binomial distribution with a Poisson distribution):

Here, the expression is the expected number of agents within a distance from the point , the function is the social potential between two particles, and the expression is the angle of force from an agent located at to an agent located at :

The presence of the terms involving are a consequence of the topological interaction law between the agents. This equation is most accurate in the limit where , where is a characteristic density and is the characteristic length scale of the interaction. In the examples which we considered in our study, this ratio is typically only slightly larger than 1 (see Peshkov et al., 2012; Chou et al., 2012) for derivations of continuum descriptions of topological interactions in a more collisional regime). Despite that, we find both quantitative and qualitative agreement between the continuum description and the agent based model. This kinetic description can be converted into a hierarchy of fluid equations by taking moments with respect to the angular direction variable .

We introduce the phase space particle density :

The Fourier series for gives the important macroscopic variables, for instance:

Here is the mean velocity of agents at each point in space. We will take moments of the kinetic equation through Fourier series:

The time evolution equation for the th Fourier coefficient is:

Here, we have simplified this expression by introducing two new functionals of the density, and , which represent the social force exerted at the point due to the density and the direction of that social force. Explicitly these are given by:

The evolution of the th moment depends on the value of the st moment, so that we have an infinite hierarchy of equations. Moments with high values of experience strong damping, and we can use this to justify discarding all moments with above a given threshold. In the following treatment we will set to zero all Fourier coefficients with , which is the simplest truncation of the hierarchy that leads to non-trivial equations.

The right hand side of the momentum equation leads to rapid equilibration, and we can eliminate the time derivative in this equation. This allows us to find an expression for in terms of only:

We can use this to write a single closed equation for . In order to facilitate the analysis of this equation, we make one further approximation, replacing the sum over Poisson factors with a Heaviside function that is equal to if the expected number of agents between inside a ball of size is less than , and otherwise. This captures the dominant qualitative feature of the topological interaction in a simple way: the effective interaction radius is a function of the local density. This approximation is quantitatively consistent with the assumptions of the previous section and the results of our simulations. The resulting equation is:

The advection-diffusion equation will be used to understand the behavior of our multi-agent simulations. From its form one can see the effects of : large enhances the diffusivity and reduces the effects of the potential.

5.1 Formation of the cohesive state and stability of the dispersed state

Any constant density function is an equilibrium solution of Equation A36. A crucial property of our multi-agent model is that depending on the background velocity, the agents have the ability to spontaneously form a dense state which we call the cohesive state. In order for this to be possible, the uniform state must be unstable. One advantage of the continuum description is that it allows us to investigate such questions within a much simpler framework than in the original agent based model. In order to do so, we select a uniform state with value and linearize around it, neglecting terms second order or higher in the deviation away from :

This is translationally invariant, and we have periodic boundary conditions, so we consider the Fourier coefficients of :

The term can be calculated by application of the convolution theorem and integration by parts, using the fact that the integrals are radially symmetric (which is true as long as ):

Here stand for the corresponding Bessel functions of the first kind. Linear stability is determined by the sign of the coefficient on on the right hand side of the following expression: