Minimal-assumption inference from population-genomic data

Abstract

Samples of multiple complete genome sequences contain vast amounts of information about the evolutionary history of populations, much of it in the associations among polymorphisms at different loci. We introduce a method, Minimal-Assumption Genomic Inference of Coalescence (MAGIC), that reconstructs key features of the evolutionary history, including the distribution of coalescence times, by integrating information across genomic length scales without using an explicit model of coalescence or recombination, allowing it to analyze arbitrarily large samples without phasing while making no assumptions about ancestral structure, linked selection, or gene conversion. Using simulated data, we show that the performance of MAGIC is comparable to that of PSMC’ even on single diploid samples generated with standard coalescent and recombination models. Applying MAGIC to a sample of human genomes reveals evidence of non-demographic factors driving coalescence.

https://doi.org/10.7554/eLife.24836.001Introduction

The continuing progress in genetic sequencing technology is enabling the collection of vast amounts of data on the genomic diversity of populations. These data are potentially our richest source of new information on evolutionary history. The challenge now is to figure out how to extract this information – how to learn as much as possible about the history of populations from modern data sets of many densely-sequenced individuals.

Perhaps the best-established approach to historical inference from genetic data is to fit demographic models to the site frequency spectrum (SFS) (e.g., Gutenkunst et al. (2009); Excoffier et al. (2013); Liu and Fu (2015)). The SFS is easy to calculate, even from very large samples, and demographic models can be fit to it without a specific model of recombination, but it neglects all information about how diversity is distributed across the genome, treating each site independently. This is a natural approximation for samples sequenced only at a sparse set of weakly-linked loci, but in large whole-genome samples much of the information is contained in associations among different polymorphisms. Because SFS-based approaches cannot use this information, the number of model parameters they can reliably estimate is limited by the sample size, regardless of how much of the genome is sequenced (Myers et al., 2008; Bhaskar and Song, 2014).

Recently, an alternative approach has been developed in which a hidden Markov model is used to explicitly model recombination along the genome (the ‘sequential Markovian coalescent’, SMC or SMC’, McVean and Cardin (2005); Marjoram and Wall (2006); Paul et al. (2011)), vastly increasing the amount of information that can be gleaned from samples of a small number of individuals (Hobolth et al., 2007; Li and Durbin, 2011; Harris and Nielsen, 2013; Sheehan et al., 2013; Schiffels and Durbin, 2014; Steinrücken et al., 2015). But this requires modeling coalescence and recombination throughout the analysis, and as a result becomes computationally intractable for large samples (while still being far less computationally intensive than modeling the full ancestral recombination graph). Additionally, for an increasing number of populations, we have multiple genomic sequences but know almost nothing about their natural histories, including plausible historical demographies and patterns of recombination and selection; this is true even for some model organisms (see Alfred and Baldwin (2015) and other articles in series). It is generally unclear how deviations from the underlying models, e.g., past population structure or gene conversion, affect the inferences of these methods.

Here we present a method for Minimal-Assumption Genomic Inference of Coalescence (MAGIC) that infers the patterns of ancestry and recombination in an arbitrarily large sample of genomes while making only minimal, generic assumptions about recombination, selection, and demography. MAGIC finds approximate distributions of times to different common ancestors of the sample. These distributions can then be used to fit and test potential models for the history of the population, including the simplest model of a single time-dependent ‘effective population size’, . MAGIC strikes a balance between the SFS- and SMC-based approaches, using the distribution of diversity across genomic windows of varying size to generate a description of the single-locus coalescent process that contains far more information than the simple SFS without using a detailed model for recombination.

Results

Approach

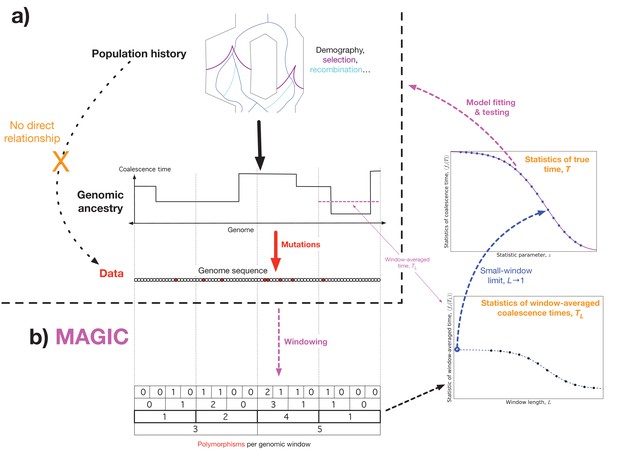

The key fact underlying MAGIC is that the relationship between the population parameters (such as recombination rates, historical demography, and selection) and genomic data is entirely mediated by the coalescent history of the sample (Figure 1a). We therefore take it as our goal to learn the coalescence time distribution directly from the data without needing a model for the population dynamics. Once one knows the coalescent history, the genomic data typically contains no additional information about the population parameters (unless, e.g., the selective values of different alleles are known), and one can fit or evaluate a wide range of models without having to re-analyze the full data set every time. In simple cases, such as when the population is described by a single effective population size, , this can be done analytically (Gattepaille et al., 2016), while in general it can be done via Approximate Bayesian Computation (ABC).

Outline of approach.

(a) Concept: we would like to infer the history of the population (top) from the sequence data (bottom), but the causal connection between the two is entirely mediated by the coalescent history of the sample (middle). This suggests that it should be possible to extract much of the coalescent information from the data without making strong assumptions about the population dynamics. (b) Schematic algorithm: MAGIC first splits the sample into small windows and counts the polymorphisms within each window, then progressively merges pairs of adjacent windows together (bottom left). For each window length , the histogram of window diversity is used to estimate parameters of the distribution of the window-averaged coalescence time (bottom right). Taking the limit of these as goes to 1 gives the parameters of the distribution of the true coalescence time (top right), which are then used to fit and test models for the underlying dynamics.

Essentially, MAGIC uses the variability in the density of polymorphisms across a wide range of length scales to learn the genome-wide distribution of coalescent histories. This technique is inspired by Li and Durbin (2011)’s method, PSMC, and its successor MSMC (Schiffels and Durbin, 2014), which use the fact that SNPs tend to be dense in regions with a long time to the most recent common ancestor (TMRCA), and sparse in regions with short TMRCAs (Figure 1, top left and middle left). Thus, the distribution of SNPs across the genome can be used to infer the distribution of local coalescence times. But while PSMC and MSMC use models for coalescence and recombination to assign a coalescence time to each locus, MAGIC estimates the genomic distribution of times directly, bypassing the need for explicit modeling. To do this, MAGIC first splits the genome into windows and finds the distribution of genetic diversity across windows, that is, the histogram of the number of polymorphic sites per window of a given length (Figure 1, bottom left; Figure 6, top left). (This can be seen as a simplified version of the ‘blockwise counts of SFS types (bSFS)’ introduced by (Bunnefeld et al., 2015).) This histogram is then used to estimate the distribution of window-averaged coalescence times (Figure 1, bottom right; Figure 6, bottom). For small windows, these times are essentially the true single-locus coalescence times, but the inference is noisy due to the small number of mutations in each window. For large windows, the inference of the window-averaged distribution is more precise, but this distribution is far from the single-locus distribution because windows typically span multiple segments with different coalescence histories. The basic trick of MAGIC is that rather than choosing one window length, it integrates the information gathered from a wide range of different window lengths to find the small-length limit – the true single-locus distribution (Figure 1, center right).

MAGIC’s accuracy is comparable to the state-of-the-art model-driven method MSMC (Schiffels and Durbin, 2014) on small data sets that conform to MSMC’s assumptions (see ‘Single diploid samples’ in ‘Results’). More importantly, it can also analyze larger samples, and can be useful in analyzing data from populations with features (such as gene conversion, ancestral structure, or linked selection) that violate MSMC’s assumptions, either as a stand-alone inference method or as a way of testing models inferred by other methods that include these features. MAGIC’s algorithm, described in ‘Methods’ below, is designed to be as simple and modular as possible, allowing one to incorporate additional assumptions in situations where more information is available. This also enables the inference of a wide range of parameters, including the distribution of map lengths of blocks of identity-by-descent across the genome (Ralph and Coop, 2013). Finally, MAGIC can use the dependence of the window-averaged distributions on window size to learn about the rate of recombination and the variation in recombination rate and the coalescent process across the genome. The variation in the coalescent process is particularly interesting because it is a signature of the effects of non-demographic forces.

Representing coalescence time distributions

For single diploid samples, the coalescent history is completely described by a single time at each locus. Thus the pairwise coalescence time distribution could equivalently be described by the hazard function (the pairwise coalescence rate, as in Schiffels and Durbin (2014)) or its reciprocal (the ‘effective population size’ , as in Li and Durbin (2011)). However, when estimating the distribution from noisy data, the procedure that minimizes the error for one description will not in general minimize the error for the others. We focus on estimating the coalescence time distribution itself (rather than its hazard function or ), primarily because it naturally generalizes to arbitrary sets of coalescent tree branch lengths for larger samples (see below). This also lets us emphasize that the idea that the full coalescent can be described by a single is a model that can be tested (as in Figure 3 and the right panel of Figure 4). For plotting results from analyzing simulations (Figure 2 and Figure 3), we show cumulative distributions rather than densities so that we can plot the actual coalescent histories of samples (black curves in Figure 2 and left panels of Figure 3), which consist of discrete sets of events, and also because the density estimates are very poorly constrained by the data (Ralph and Coop, 2013). For the analysis of human data, we plot the more familiar (Figure 4, left panel).

We must also choose how to summarize coalescence time distributions within MAGIC’s analysis (Figure 1, right-hand side). We will use the Laplace transform of the density, . For a formal definition of this quantity and how we estimate it, see the section ‘Laplace transforms’ of the Methods below. Intuitively, represents what the homozygosity would be if the mutation rate had been larger by a factor of , e.g., is the observed homozygosity, and is what it would have been if the mutation rate had been twice as high (Lohse et al., 2011). This means that very roughly corresponds to the probability of coalescing within generations, where is the mutation rate, that is, large tell us about the recent past while small tell us about the distant past. The mean coalescence time corresponds to , where is the observed heterozygosity of the sample. We choose this statistic both because of this natural interpretation and because it can be obtained from the observed diversity distribution directly with only very weak assumptions, allowing us to delay introducing stronger assumptions until the very last step of the analysis, when we invert the transform to find the full coalescence time distribution (see Materials and methods below).

Single diploid samples

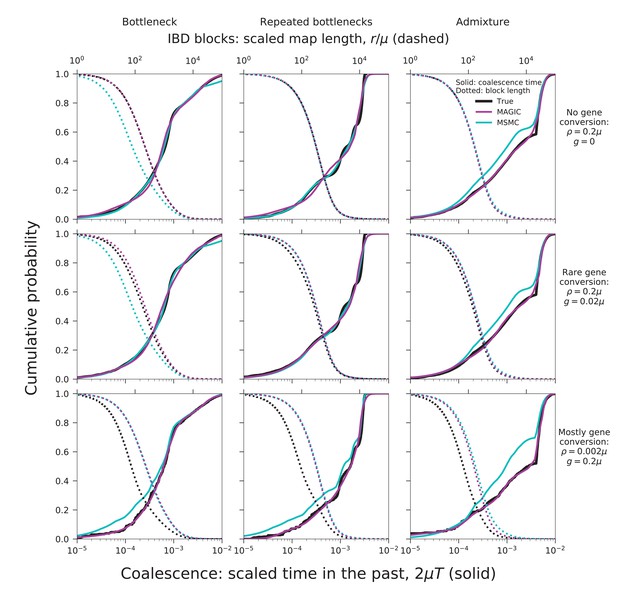

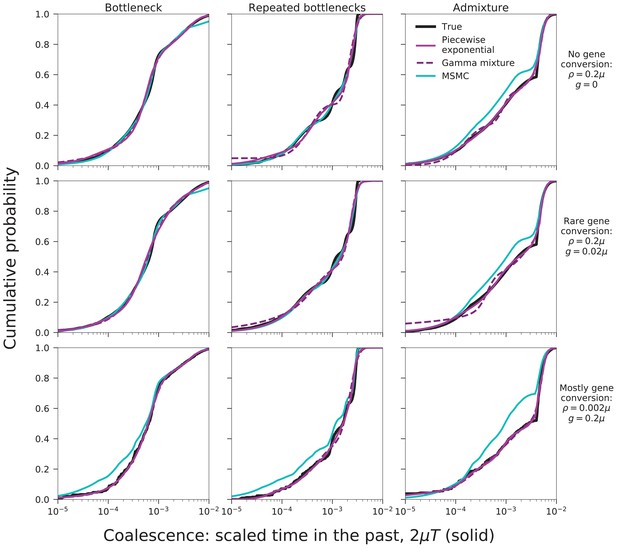

To validate our approach, we have tested MAGIC on single diploid samples generated under a range of coalescent models simulated with ms (Hudson, 2002). MAGIC accurately infers the distribution of coalescent times from samples with map length and polymorphism density similar to that of a human genome (Figure 2, top two rows, solid curves; see Figure 9 and Methods for detailed parameters and additional tests). MAGIC performs nearly as well as MSMC (Kolmogorov-Smirnov distance to the true distribution of for the simulations shown, compared to for MSMC). Both methods tend to smooth out sharp transitions in the coalescence distribution as a consequence of regularization. The distribution of map lengths of blocks of identity by descent (IBD) can be inferred with very high accuracy (Figure 2, top two rows, dotted curves), improving on MSMC, which sometimes overestimates the amount of very deep coalescence, and correspondingly erroneously estimates a large number of very short blocks. The part of the block-length distribution estimated by MAGIC is complementary to the very long blocks that can be observed directly (as in, e.g., Ralph and Coop (2013)). MAGIC is also accurate on genomes simulated with ms under a model in which recombination is dominated by gene conversion (Figure 2, bottom row); this can be seen as loosely corresponding to a primarily asexual population, with gene conversion representing homologous recombination. In this case, MSMC’s recombination model breaks down and MAGIC’s inferences are more reliable.

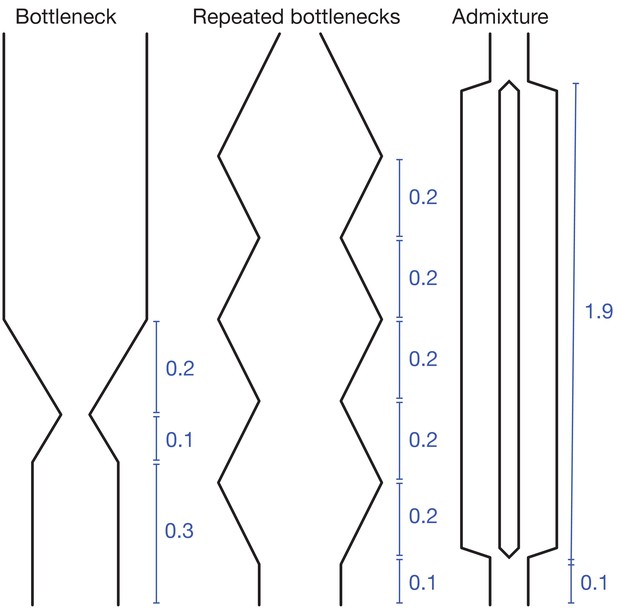

MAGIC accurately infers the distribution of coalescence times (solid curves) and lengths of blocks of identity-by-descent (IBD, dotted curves) for pairwise data simulated with ms under several demographic scenarios (Figure 9): a single bottleneck (left column), repeated expansions and contractions (middle), and recent admixture of two diverged populations (right).

The coalescence time plots show the cumulative distribution, while the IBD block-length plots show the survival function. When crossovers are frequent and gene conversion is rare (top two rows), MAGIC and MSMC are comparably accurate for coalescence times. MAGIC very accurately infers the IBD block length distribution, while MSMC sometimes is inaccurate (e.g., ‘Bottleneck’ scenario), but otherwise produces a curve nearly indistinguishable from MAGIC’s. For frequent gene conversion and rare crossovers (bottom row), the details of the gene conversion process have a strong effect on the IBD block lengths, and neither method can infer their distribution, but MAGIC can still infer the coalescence times. All simulations are of a genome consisting of independent chromosomes, each base pairs long, with per-base mutation rate and present population size such that . Recombination is via crossovers occurring at rate per base, and via gene conversion being initiated at rate per base with mean tract length . See Materials and methods for values of other simulation parameters.

Larger samples

For samples of more than two haplotypes, the coalescent history at each locus is described by a tree, rather than a single time. The space of possible trees grows very rapidly with the sample size, so that even with long genomes it is impossible to directly estimate the full distribution. Instead, MAGIC infers the distribution of some small set of features of the trees, such as mean pairwise distance and total branch length, chosen either because they are important in and of themselves or because they are sufficient statistics for some model of the coalescent process. For example, MAGIC can fit the basic time-dependent effective population size model by estimating the distribution of pairwise coalescence times, and then check whether the fitted model correctly predicts the distributions of other tree features. MAGIC is particularly suited for model-checking, since for all the features beyond the basic pairwise coalescence time one can skip the final, most difficult step in the algorithm – inverting the Laplace transforms – and simply compare the distributions at the level of Laplace transforms.

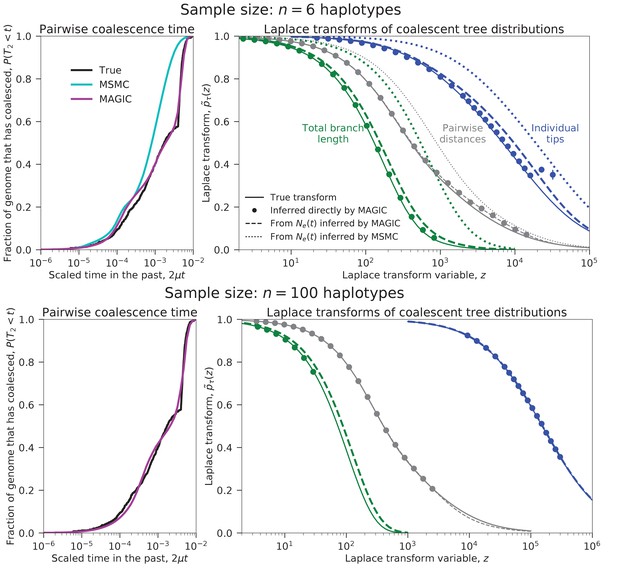

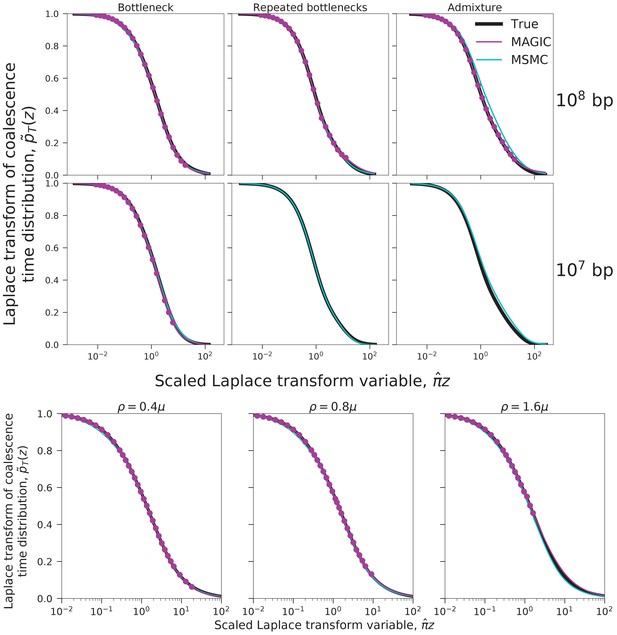

We test this approach on a sample of six haplotypes from a recently admixed population simulated with ms (demographic parameters in the last column of Figure 2 and Figure 9). MAGIC accurately estimates the distributions of pairwise coalescence times, while MSMC is relatively inaccurate (Figure 3, top left). MAGIC also accurately infers the Laplace transforms of the distributions of the total branch lengths and the lengths of the tips of the full coalescent trees (Figure 3, top right). Comparing these inferences to the predictions of the models corresponding to the pairwise inferences shows that MAGIC is more accurate than MSMC but is still missing features of the tree distributions, indicating that the model is not a good description of the population history. (The differences appear small in the plot, but are far larger than the uncertainties in the inferences and correspond to substantial differences in the actual distributions.) This failure of the may be why MSMC is inaccurate in this case.

Coalescence time distributions and their Laplace transforms for a simulated population with ancestral structure, as inferred from samples of intermediate and large size.

Top row: sample size haplotypes. MAGIC accurately estimates the distribution of the pairwise coalescence time (top left), and the Laplace transforms of the distributions of the total branch length and the lengths of the tips of the branches (top right, green and blue, respectively). The gap between the estimated Laplace transforms (circles) and that obtained by simulating the estimated from the pairwise coalescence time distribution (green and blue dashed curves) suggests that the model is inaccurate. The pairwise Laplace transforms are shown in gray for comparison. MSMC’s inferences are less accurate. Bottom row: sample size haplotypes (too large for MSMC). The gap remains between the estimated Laplace transform of the total branch length distribution and that derived from the pairwise , showing that the coalescent process cannot be described by a single . However, the tip length distribution matches that predicted from the pairwise comparisons, showing that the model has predictive power for recent times.

MAGIC’s running time on large samples is dominated by the time to read all the data through memory, so it grows only linearly with sample size, meaning that the method can be run on essentially arbitrarily large samples. MAGIC accurately estimates the distribution of pairwise times and the Laplace transforms of the total branch length and tip length distributions in a sample of 100 haplotypes from the same admixed population (Figure 3, bottom row). (Curves are not shown for MSMC because it cannot analyze large samples; a sample size of eight from this population caused it to crash.) The total branch length Laplace transform remains different from that predicted by the effective population size model, but the tip Laplace transform matches almost exactly, indicating that the model is accurately describing recent times (post-admixture, , roughly corresponding to ) but not ancient times (pre-admixture, , roughly corresponding to ), as expected.

In the example above, we have only inferred the distributions (or their Laplace transforms) of pairwise coalescence times, total branch lengths, and tip lengths. But to be able to consider a wide range of models for the population, one must be able to estimate a wide range of parameters. MAGIC can infer the distribution of the total length of any specified set of tree branches. For a given set, MAGIC first filters the polymorphisms in the original data for those that correspond to mutations on the desired branches, and then proceeds with the same analysis as in the basic case of a single diploid sample. For example, to find the distribution of total branch length, MAGIC analyzes the genomic distribution of all polymorphic sites, while to find the distribution of tip lengths, it only looks at singletons on specific haplotypes. For data with no linkage information, the tree features that can be estimated correspond to components of the site-frequency spectrum (SFS), but while the SFS just gives estimates of the means of the lengths of different sets of branches, MAGIC infers full distributions.

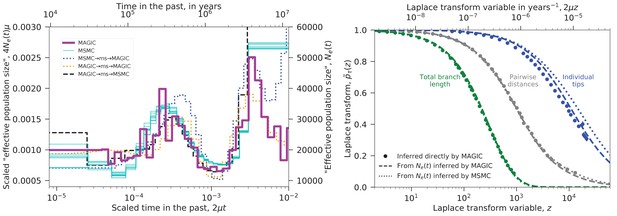

Human data

We used MAGIC to analyze the nine diploid sequences from unrelated Yoruban individuals in Complete Genomics’ ‘69 genomes’ data set (Drmanac et al., 2010). The pairwise coalescence time distribution inferred from the heterozygosity distribution was similar to that obtained with MSMC run on individual samples. These distributions are plotted in the left panel of Figure 4 as ‘effective population sizes’ (solid lines), while the underlying Laplace transform estimate is plotted in the right panel. The distributions differ mainly in the distant past where the data is limited. The fact that MSMC finds results from single individuals similar to those that MAGIC finds from the whole sample shows that MSMC is the more powerful method in this case. (MAGIC does not consistently detect the hump in at when run on single individuals.)

We re-ran the analysis on samples simulated with ms using the inferred trajectories; the results are shown in the left panel of Figure 4 as dotted lines. These show that MAGIC’s results are more accurate than MSMC’s for the distant past: MAGIC recaptures a simulated ancient decrease in (orange) and partially recaptures a large ancient (blue), while MSMC misses the ancient decrease in (black). The fine-scale fluctuations that appear in MAGIC’s inferred for are somewhat puzzling. The fact that MAGIC reproduces a smooth simulated trajectory (blue) and that MSMC fails to capture simulated fluctuations (black) would seem to suggest that they are a real feature of the data, but the fact that MAGIC also fails to capture simulated fluctuations (orange) argues against this. Instead, the fluctuations may be an artifact caused by some non-demographic feature of the real data that is missing in the simulations, potentially related to biases in sequencing coverage or SNP-calling.

Inferred evolutionary history of Yoruban individuals.

Left: Inferred ‘effective population size’ for the nine unrelated Yoruban individuals from the 69 Genomes data set (Drmanac et al., 2010). MAGIC’s inference from the full sample is similar to MSMC’s inference from each individual, differing mostly in the distant past where there is limited data. Dotted lines show inference from samples simulated under the inferred ’s, indicating that MAGIC’s inference in the distant past is likely to be more accurate than MSMC’s, but that its inference of the fine-scale structure in is unreliable. Right: Laplace transforms of the distributions of different coalescence times for the same sample. The pairwise coalescence time (corresponding to in the left plot) is in gray, while the total branch length is in green and the distribution of tip lengths is in blue. Points show the values inferred directly by MAGIC, while solid and dashed curves show the results of simulations based on the curves inferred by MAGIC and MSMC, respectively. The pairwise accurately describes the distribution of total branch lengths, but overestimates the tip length Laplace transform (i.e., underestimates the tip lengths). MAGIC’s overestimate roughly corresponds to a underestimate of the median tip length, while MSMC’s corresponds to a underestimate, indicating that both underestimate recent from pairwise data.

We also inferred the Laplace transforms of the distributions of two other coalescence times: the total branch length, and the lengths of the tips of the coalescent trees (Figure 4, right), and compared them to ms simulations of the inferred demographies. The total branch length is close to that predicted by pairwise , but the tips of the trees are substantially longer. This is consistent with the fact that the pairwise inferences do not detect the recent Yoruban population growth, that is, underestimate the recent effective population size. (It could also be a signature of false-positive singleton SNPs.) Note that even if we did not know that the Yoruban population had been recently increasing, MAGIC’s result for the tips would tell us that the estimates of the left tail of the pairwise coalescence time distribution were inaccurate. To reconcile the results, one could try to find an trajectory that fits all the points in the right panel of Figure 4. This could be done via ABC as in ABLE (Beeravolu Reddy et al., 2016), simulating the coalescent under different and accepting trajectories that fit better. Unlike in ABLE, all the points are single-locus statistics, so these could be just single-locus simulations with no recombination, greatly reducing the computational requirements. Alternatively, one could simply try another inference method that is better able to resolve recent times (e.g., Terhorst et al. (2017)’s SMC++) and then check whether the resulting matches MAGIC’s inferences. Both of these approaches could also be generalized to include coalescents that cannot be described by an (e.g., for population structure, one could include it in an ad hoc ABC model, or one could use an existing method such as ABLE and test its results).

Inferring differences among chromosomes: recombination and coalescence

MAGIC’s coalescence-time inference is designed to be robust to the form of recombination, but it can also be used to learn about recombination. To do this, rather than simply taking the small-window limit of Laplace transforms of the window-averaged coalescence time, one can look at how they change as a function of window size. In general, besides the small-window limit in which almost all windows lie within IBD blocks, there should also be a long-window limit in which almost all windows contain many IBD blocks. In between these two there is a transitional regime where the window length lies within the bulk of the distribution of IBD block lengths; finding this transitional length gives an estimate of the recombination rate.

Because the transition from the small-window to the large-window limit is not very sharp (Figure 6, bottom right), this estimate of the recombination rate is very rough. A more precise estimation requires a specific model of recombination and coalescence, like the one used by MSMC. But even if one does not have a good model for the dynamics of a population, one can make the assumption that all autosomes are experiencing roughly the same dynamics, whatever they are. (This assumption is implicit in all demographic inference from full genomes.) In that case, the dependence of the Laplace transforms of the window-averaged coalescence time on window length should be similar across autosomes, and differences in average recombination rates across chromosomes should be detectable as rescalings of the window lengths. MAGIC can therefore use these rescalings to precisely estimate relative recombination rates.

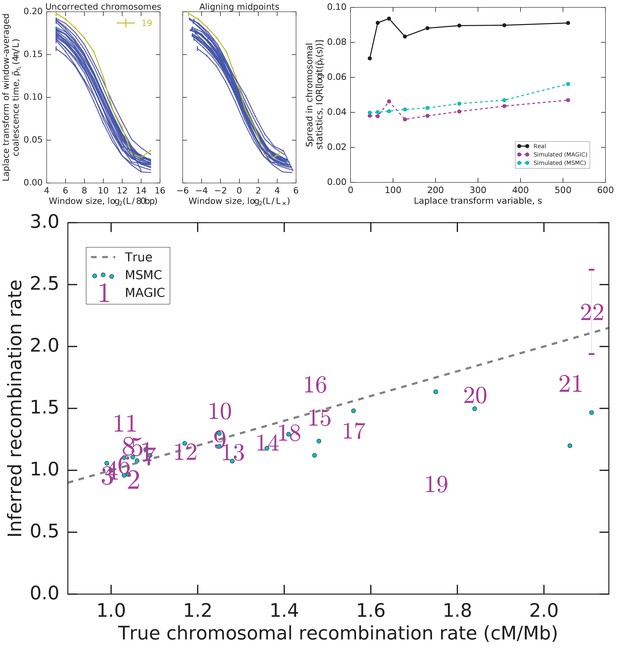

As an example of this approach, we analyze each autosome across the nine unrelated Yoruban individuals in the data set. We find that the Laplace transforms of their window-averaged coalescence time distributions all show a similar dependence on window length (Figure 5, top left). Up to a rescaling in length, the autosomes appear very similar (Figure 5, top center), with the exception of 19. The collapse of the remaining 21 autosomes suggests that they differ primarily in the amount of very recent coalescence (rescaling the heterozygosity) and in average recombination rates (rescaling the window lengths). The scaling factors for the window lengths therefore are an estimate of relative recombination rates, and are indeed very close to values measured by Kong et al. (2002) (Figure 5, bottom), with the exception of chromosome 19, as expected.

It is no surprise that chromosome 19 is an outlier in coalescence: it has a much higher gene density than the other chromosomes (Grimwood et al., 2004), and is therefore likely to have a much higher fraction of loci under selection and affected by linked selection (Hernandez et al., 2011). However, the other autosomes do not have identical gene densities, and there are several large regions with unusual patterns of diversity, such as the MHC locus and flanking regions on chromosome 6. Indeed, even after rescaling, there is still more residual variation in coalescence across the 21 similar autosomes than would be expected by chance under the genomic coalescence time distributions inferred by MAGIC and MSMC (Figure 5, top right). This variation must be due to non-demographic factors driving coalescence; the fact that they are readily detectable suggests that one should be cautious in interpreting the details of the results of model-based inference in terms of demography.

Integrating diversity patterns across length scales allows to compare recombination rates among chromosomes and to test whether the observed patterns can be explained by a single shared demographic history.

Top left: One value of the Laplace transform of the window-averaged coalescence time distribution as a function of window size for each autosome. The curves for most autosomes appear similar, but shifted both vertically and horizontally. Top center: Much inter-autosomal variation can be explained by variation in recombination rates: the curves are similar under a rescaling of window lengths (a horizontal shift such that the midpoints of the curves align), except for chromosome 19 (yellow), which appears to have a different pattern of coalescence. Top right: There is substantial variation in the asymptotes that cannot be explained by variation in recombination rates, and is more than expected from intrinsic coalescent stochasticity. Plot shows the interquartile range (IQR) of the Laplace transform of the coalescence time distribution across chromosomes for the actual data as well as simulations of the pairwise coalescent histories inferred by MAGIC and MSMC (Figure 4). (Note that IQR, unlike variance, is insensitive to outliers.) Bottom: The rescaling of window sizes needed to align the different autosomes gives an estimate of their relative recombination rates which is very close to the values obtained by Kong et al. (2002) (‘True’). For chromosomes other than 22, the inferred error bars are smaller than the size of the markers.

Discussion

The MAGIC algorithm bridges the gap between fast but limited SFS-based approaches to demographic inference and model-based approaches that are limited to small sample sizes, allowing far more information to be extracted from large, high-coverage samples. To see the difference between MAGIC and an SFS-based approach, consider the information that can be gained from sites with singleton polymorphisms. Under an approach that treats sites independently, these can be summarized by one number – their genomic frequency – which can only be used to estimate one number – the mean total tip length of coalescent trees. MAGIC, in contrast, also considers how clustered the sites are over a wide range of lengthscales, allowing it to estimate not just the mean, but the whole distribution of total tip lengths.

While this manuscript was in preparation, two other methods extending the SFS by using linkage information were posted. Terhorst et al. (2017)’s SMC++ uses the SFS to augment the pairwise coalescent inference approach of PSMC; Beeravolu Reddy et al., 2016’s ABLE uses ms to fit demographic models to Bunnefeld et al., 2015’s ‘blockwise SFS’ statistics. These methods share MAGIC’s aim of taking advantage of linkage information for large samples, and as mentioned above, ABLE’s initial summary of the genomic diversity is related to MAGIC’s. The fundamental difference is that both SMC++ and ABLE use explicit coalescence and recombination processes (SMC’ for SMC++, ms’s ancestral recombination graph for ABLE) to fit specified demographic models, while MAGIC focuses on just estimating the coalescence time distributions.

MAGIC is designed to be complementary to the existing inference methods, which largely rely on fitting simplified demographic models that neglect selection (Schraiber and Akey, 2015). Because MAGIC makes no assumptions about whether coalescence is driven by demography or selection, and only minimal assumptions about mutation and recombination, it can be used as a first-pass analysis of genomes from species whose natural histories are not already well-known, with its results informing the choice of more detailed, model-based methods that use additional information outside of the sample sequences. Conversely, MAGIC can be used for a necessary final step missing in many demographic inference projects: model checking. To evaluate a model produced by other methods, one can use MAGIC to estimate additional parameters beyond those used to fit the model, and then test whether the model reproduces those values. If it does, this can be a crucial sign that the model is capturing important features of the underlying history, while if it does not, the deviations can point to ways in which the model needs to be adjusted (as with the inferences of the recent past of the Yoruban population above).

Finally, MAGIC’s estimated Laplace transforms could also be used directly to fit population models (including non-standard ones incorporating, for instance, linked selection, that are not implemented in existing inference methods). Because MAGIC converts the genomic distribution of diversity into the Laplace transforms of single-locus coalescent times, fitting models to its results requires only single-locus coalescent simulations or calculations, which are much less computationally intensive than multi-locus ones. They can thus reasonably be found analytically or via Approximate Bayesian Computation for many models.

Even for populations for which there are good a priori models, the minimal-assumption approach has advantages. Because MAGIC has a modular structure and is not tailored to a specific population model, it can be used to quickly analyze many populations with very different dynamics, with each population’s model incorporated in just the last step of the analysis. Similarly, for any given population, MAGIC can estimate many different parameters describing coalescence and recombination to answer multiple questions about the historical dynamics. Finally, not using any explicit model of coalescence and recombination keeps MAGIC’s algorithm simple enough that it runs quickly even on very large sample sizes, and that users familiar with Python can understand and modify it.

There are a number of potential modifications to MAGIC that users could make. At a minimum, there are likely to be technical improvements to the estimation methods that would allow it to get more information out of the data. More interestingly, the range of parameters estimated by MAGIC could be extended. In particular, MAGIC currently infers the distributions of features of coalescent trees that can be found from unphased, unpolarized polymorphism data, but it could be extended to take advantage of this extra information when available. It would also be possible to extend MAGIC so that it would infer joint distributions of different coalescence times, rather than just all the marginal distributions. This would greatly increase the amount of information that could be extracted from extremely large data sets such as are likely to be available in the near future.

Materials and methods

Diversity across genomic windows

Request a detailed protocolA sample set of genomes will comprise many blocks of sequence with different coalescent histories; by looking at the distribution of genetic diversity across blocks, one can estimate the coalescence time distribution of the population the sample was drawn from. Li and Durbin (2011) and Schiffels and Durbin (2014) try to do this by considering all possible boundaries between the blocks using a hidden Markov model. However, block boundaries are only easy to identify when mutation rates are much larger than recombination rates, which is generally not the case, and describing every possible block becomes impractical for larger sample sizes as the number of blocks proliferates. Instead, we simply divide the genome into windows of a fixed length , and consider the distribution of histories of windows. MAGIC estimates the distribution of a single coalescence time (i.e., coalescent tree parameter) across genomic positions . For single diploid samples, is the total branch length (twice the time to the most recent common ancestor at position ) and completely characterizes the coalescent history. For larger samples, MAGIC can be used to estimate the distributions of multiple statistics one at a time.

We assume the infinite sites model, in which each mutation occurs at a unique locus. Under this model, the diversity (e.g., heterozygosity in a single diploid sample) in a window of length starting at position has a distribution that depends only on the window-averaged coalescence time, defined as

If is smaller than most block lengths, then windows will typically lie within blocks, and the distribution of will be close to the distribution of . For very large , each window will average over many blocks, and will have a narrow support around the mean of . Usually, there will be a wide range of intermediate values of for which windows lie inside long blocks but cover multiple short blocks. Ideally, we would like to use information from windows with lengths throughout this range, preferentially selecting the ones that lie inside long blocks.

Given that approaches , as decreases, one might be tempted to take to be as small as possible, but the problem of course is that we cannot see directly; we need to infer it from the number of SNPs in the window. Given , and assuming a constant mutation rate per base, the number of SNPs will be approximately Poisson-distributed with mean . (Here we are assuming that is always small enough that each base has only a small chance of having mutated; this allows us to approximate the underlying sum of binomially-distributed random variables with a Poisson that depends only on .) The smaller is, the lower the signal-to-noise ratio in the number of SNPS will be and the less power we will have to distinguish different values of . Thus, we expect that we will get the most information about from an intermediate value of , and should be able to do better still by integrating information from multiple values of .

The total probability that there are SNPs in a window of length is the average of the Poisson distribution over all possible values of :

where is the window-averaged coalescence time scaled such that it is equal to the expected number of SNPs in the window: is a Poisson mixture distribution, with mixing distribution given by , the cumulative distribution function of , i.e., the fraction of the genome that is expected to have coalesced by a given scaled time. The observed SNP count distributions thus give us information about the window-averaged coalescence-time distributions . We could try to estimate the full distribution , but we are primarily interested in the true single-locus coalescence-time distribution (where ). We will therefore focus on estimating just features of that can then be combined to estimate .

Laplace transforms

Request a detailed protocolWe need to choose which set of parameters describing to estimate. The Laplace transform , evaluated at a set of points , is a natural choice, as it is closely related to the diversity distribution (Lohse et al., 2011): Equation 2 shows that is the Taylor coefficient of about . This has two implications. First, the similarity to the proportion of homozygous windows means that the Laplace transform has a natural interpretation as an estimate for the proportion of windows of length that would be homozygous if the mutation rate were multiplied by . (It is also closely related to the distribution of lengths of IBD blocks – see below.) Second, we can quickly and easily estimate using the plug-in estimator:

The estimate lt of the Equation 3 transform will be accurate for close to 1, but will blow up for large – we cannot accurately estimate the amount of very recent coalescence. To make this precise, we need to estimate the error in Equation 3 due to the stochastic mutation accumulation process. But this depends on the unobserved distribution , so we will need a rough estimate of this distribution. We use Ghosh et al., 1983’s estimator to estimate the value of for every window from the observed number of SNPs ; roughly, this gives , with a correction given by their Equation (2.17) that slightly shrinks the estimated values. (This shrinkage improves the estimate for small values of .) For , where would give , implying that mutations could never occur, we adjust the formula to , where is the number of windows with 0 SNPs, to account for the fact that coalescence times may not be exactly 0. We then calculate the standard error that would be introduced by the stochastic mutation accumulation process under :

where is the total number of windows. The accuracy of Equation 3 and Equation 4 could be improved by using more sophisticated estimators, but the current ones are the easiest to compute.

Combining length scales

Request a detailed protocolTo combine information from different window lengths, we need to correct for the increase in window-wide mutation rate . We can therefore consider the quantity as a function of , holding fixed, as shown in the bottom panels of Figure 6. When is nearly independent of , this quantity should be nearly constant. (To see this, note that , with no explicit dependence.) This is the case for very large , when each window averages over many coalescent blocks, and for very small , where each window falls within a coalescent block and approaches , the distribution we are interested in. We therefore fit a sigmoid curve (specifically, Richards’ curve) to as a function of ,

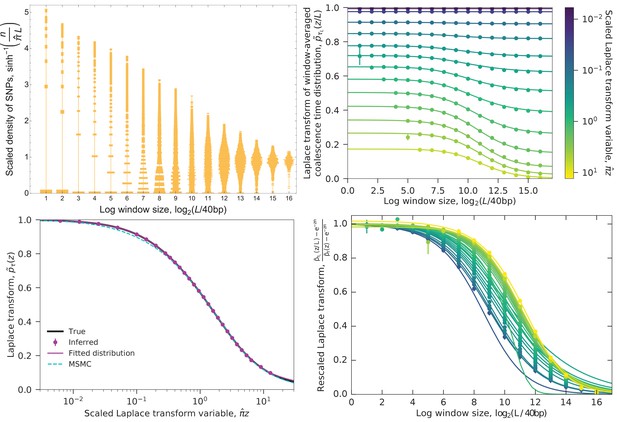

Example of converting the distribution of SNPs to the coalescence time distribution.

Data are from the simulated ‘bottleneck’ demography (Figure 2 and Figure 9, left columns). Top left: Distribution of SNP densities across windows of different lengths, normalized by the heterozygosity . The width of a bar represents the fraction of windows with a given SNP density. The densities are shown on an arcsinh scale (approximately logarithmic for large values but linear for small values). At short lengths, the distribution is concentrated at zero, while at long lengths it is bunched near the mean, with the best spread in between. Top right: The Laplace transform of the window-averaged coalescence time distribution as a function of window length and Laplace transform variable . Points show the estimates derived from the SNP density distribution (using Equation 3, with error bars given by Equation 4), curves are sigmoid fits (Equation 5). For small (long times), small windows can be used to estimate the left asymptote , while for large (short times), the estimates from small windows diverge (missing points with )) and longer windows need to be used. Typical coalescence times correspond to (blue-green). Bottom left: Laplace transform of the coalescence time distribution. Points are the left asymptotes of the curves in the top right panel. Black curve shows the true transform, magenta curve shows MAGIC’s fitted distributions (the gamma mixture and piecewise exponential forms give indistinguishable curves), cyan curve shows the Laplace transform of MSMC’s estimated coalescence time distribution. All the curves are close, but differ slightly for very large , corresponding to very recent times, with MSMC also differing for small (ancient times). Bottom right: Same as top right, rescaled to show that as increases, longer windows are sufficient to accurately estimate . But the increase in the minimum sufficient window length is slower than the increase in the minimum length for which can be accurately estimated (top right, leftmost points), putting a limit on the maximum for can be estimated (bottom left, rightmost point).

and take the left asymptote as an estimate of . The right asymptote is the long-window limit, and can therefore be estimated directly from the genomic density of SNPs . ( is the heterozygosity in the basic case when is the pairwise TMRCA, but more generally it is the density of SNPs corresponding to the branch statistic being estimated.) To fit the remaining parameters, we use the curve_fit function in SciPy’s optimize package on the lowest- points that have small estimated errors. curve_fit also returns a standard error , estimated under the assumption that the errors in are independent for different values of . This is obviously not true (all estimates are from the same set of mutations), but even for lengthscales that are only separated by factors of , the correlations in the error appear to be small in simulations, giving final error bars of roughly the right magnitude. While contains the information about the single-locus coalescent, the values of other parameters, particularly , are informative about recombination – see ‘Inferring recombination rates’ and Figure 5.

The sigmoid form sigmoid is flexible enough to fit all of the simulated and real data that we have examined, but we only trust our estimate of if the data are close enough to the left asymptote so that the estimate is not very sensitive to the choice of functional form. Effectively, this means that the estimate is close to for the smallest for which the estimated error bars are small, with corrections based on the next few higher lengthscales. But this smallest depends on , so while for any given value of only a few lengthscales are important, every lengthscale is important for estimating the Laplace transform for some . Short lengthscales are useful for small (i.e., long coalescence times), while long lengthscales are useful for large (short coalescence times) (Figure 6, bottom panels). To see why this is, recall that our estimate is most accurate for arguments near 1, so for each our most accurate estimate of comes from windows of length . Our smallest window size therefore puts a lower limit on the values of for which we can estimate , that is, an upper limit on the times we can characterize. Similarly, there is an upper limit on the values (lower limit on timescale) that we can resolve. This can occur at the value of at which the windows become so large that we only have a few per chromosome and no longer have good statistics, or, as in Figure 6, at values such that the windows are so long that even the most recently-coalesced ones cover multiple recombination breakpoints, that is, is far from the asymptote .

Coalescence-time distributions

Once we have estimates for the Laplace transform of the coalescence time distribution at a set of , we would like to invert the transform to obtain . The moments of are trivial to find, simply by taking derivatives of at , but beyond the first moment these tend to be dominated by rare, deep coalescence events and so are not informative about the bulk of the distribution. Unfortunately, inverting Laplace transforms is a fundamentally hard problem (Epstein and Schotland, 2008), and we need to assume some kind of parametric form for . We have implemented two possibilities in MAGIC.

First, one can assume that can be written as a mixture of gamma distributions:

where and all , , and are positive. This can also be extended to include a possible point mass at when estimating the distribution of features for which it is possible that some trees will have a value of exactly 0. For instance, in a sample of 10 haplotypes, there will typically be some loci for which the coalescent tree has no branches that are ancestral to exactly 5 loci. (More generally, this should be considered when estimating the distribution of lengths of branches that are ancestral to exactly out of sample of individuals, for any .) Alternatively, one can assume that can be written as a piecewise-exponential function, as in MSMC and PSMC:

where , , and . For pairwise coalescence times, has a natural interpretation as the instantaneous coalescence rate, that is, the inverse of .

Both of these forms have the computational advantage of having analytic Laplace transforms:

with an additional constant term if a point mass at is included. This means that we can simply fit Equation 8 to the values without having to deal with inverse Laplace transforms directly. We use the basin-hopping optimization algorithm implemented in SciPy to find the parameters that minimize the scaled squared error . For the gamma mixture, we optimize . For the piecewise exponential, we fix the to be evenly spread in log space between and where and optimize the rates , with a quadratic penalty on for regularization. These forms are flexible enough to fit all the data that we have tried (see, e.g., Figure 6, bottom left – the two forms give indistinguishable curves). The piecewise-exponential form is better-suited for estimating from pairwise coalescence times, and appears to tend to be more accurate in the tails of the distribution (Figure 7); we have used it in all curves except the ones in Figure 7 specifically labeled as showing the gamma mixture form.

Comparison of the functional forms for the coalescence time distribution.

The inferred piecewise exponential (solid magenta) and gamma mixture (dashed purple) distributions are very similar, but where they differ, the piecewise exponential form is closer to the true distribution (black). Simulations are the same as shown in Figure 2. The two forms’ predicted block-length distributions (dotted curves in Figure 2 are indistinguishable.

Block-length distributions

Request a detailed protocolIdentical-by-descent (IBD) blocks are stretches of the genome that have not undergone recombination since the common ancestor of the block. The distribution of block lengths can be used to infer patterns of relatedness and ancestry (Li and Durbin, 2011; Ralph and Coop, 2013), but it is hard to measure except for long blocks with very recent common ancestors, because the recombination events are not directly observable. Under the standard assumptions that coalescence is mostly driven by neutral processes (rather than linked selection) and that recombination primarily occurs via crossovers, the distribution of the genetic map lengths of these blocks across the genome is closely related to the Laplace transform of the coalescence time distribution:

We can estimate the block-length distribution in Morgans simply by approximating the derivative of , a much easier problem than inverting the transform. If we want to convert map lengths to numbers of bases, we need to estimate the crossover frequency per base. The dependence of on (i.e., in Equation 5 above) provides a rough estimate; MSMC gives a more precise one. blocklength gives the distribution of lengths of blocks that are not interrupted by even ‘ghost’ recombination events where the coalescent tree does not change ([Marjoram and Wall, 2006]’s ‘R class’ of events). In the simplest case of a single diploid sample from a well-mixed population evolving neutrally under the Kingman coalescent, these ghost events can be ignored by simply scaling all recombination rates by , but in general they must be included, even though they are not directly observable.

Required sequence length and polymorphism density

Request a detailed protocolFor MAGIC to accurately estimate the probability of coalescence within a time interval, the underlying genomic data must contain many regions that coalesced within that interval, and these regions must have enough polymorphisms to put reasonable limits on their coalescence times. For these to be true, there must be at least some minimum length of sequence, and recombination must not be too frequent relative to mutation (or else regions short enough to have a single coalescent history will not contain multiple polymorphisms). To explore this first condition, we tried decreasing the amount of simulated data in Figure 2 from the original bases. MAGIC was consistently able to infer the Laplace transform (and therefore the probability distribution) when using only bases (i.e., approximately one human chromosome), but when using just bases succeeded in only some simulations (Figure 8, top). To explore the second condition, we re-simulated the ‘bottleneck’ history with increasing values of the crossover rate, (and without gene conversion). MAGIC accurately inferred the full Laplace transform until crossovers became more frequent than mutations, (Figure 8, bottom), at which point it was only able to infer for small values of , where the inference primarily depends on short windows (Figure 6, right panels).

Limits on inferring the Laplace transform of simulated pairwise coalescence distributions.

Symbols and colors are as in the bottom left panel of Figure 6. Top: MAGIC can usually accurately infer the Laplace transform from sequenced bases (top row), but often fails when the data is limited to bases (bottom row, right two panels). Even under limited data, the inferences that it does manage to make remain accurate (bottom row, left panel). Other simulation parameters are as in the top row of Figure 2. Bottom: MAGIC can infer most of the Laplace transform when the crossover rate, , is less than the mutation rate, (left two panels), but for (right panel), it becomes limited to large . Simulation parameters are as in the top left panel of Figure 2, with increased.

Implementation

Request a detailed protocolThe code for MAGIC is written in Python and is available at https://github.com/weissmanlab/magic (Weissman, 2017). It uses the same input format as MSMC and the msmc-tools suite. A copy is archived at https://github.com/elifesciences-publications/magic.

Data processing

Request a detailed protocolWe use the 69 Genomes Diversity Panel from Complete Genomics (Drmanac et al., 2010), and use msmc-tools (Schiffels and Durbin, 2014) to turn the data into a list of SNPs. We split the genome into windows of 80 bp, count the number of SNPs in each window, and then repeatedly merge all windows in pairs and re-count to get the SNP count distribution at successively larger length scales (Figure 1, bottom left; Figure 6, top left). (To correct for uneven sequencing coverage across windows, all windows with coverage were dropped, and all with coverage were down-sampled to .) This gives us SNP count distributions at a range of length scales for every chromosome of every individual in the data set.

Simulated data

Request a detailed protocolAll coalescent simulations were done in ms (Hudson, 2002). To make the simulations computationally tractable, genomes were assembled from independently simulated ‘chromosomes’ of bases each.

For the test demographic scenarios in Figure 2 and Figure 3, the per-base mutation rate was . (ms is parametrized in terms of the present population size, .) For the ‘bottleneck’ scenario, the demography was given by the command ‘-eG .3 10 -eG .4–10 -eG .6 0’; for ‘repeated bottlenecks’, by ‘-eG .1–10 -eG .3 10 -eG .5–10 -eG .7 10 -eG .9–10 -eG 1.1 10 ”; and for ‘admixture’, by ‘-es .1 1 .5 -ej 2 1 2’. See Figure 9 for schematics. For the pairwise simulations, each sample consisted of chromosomes with recombination rates as listed in Figure 2. For the larger-sample simulations in Figure 3, each sample consisted of chromosomes with per-base rate of crossovers , and per-base rate of initiation of gene conversion with mean tract length .

Demographic scenarios simulated.

All time intervals are in units of . In the ‘bottleneck’ and ‘repeated bottlenecks’ scenarios, the population grows and shrinks exponentially at rate .

Data availability

-

69 GenomesPublicly available at the 69 Genomes Data website (download link: ftp://ftp2.completegenomics.com/).

References

-

Descartes' rule of signs and the identifiability of population demographic models from genomic variation dataThe Annals of Statistics 42:2469–2493.https://doi.org/10.1214/14-AOS1264

-

Construction of improved estimators in multiparameter estimation for discrete exponential familiesThe Annals of Statistics 11:351–367.https://doi.org/10.1214/aos/1176346143

-

A high-resolution recombination map of the human genomeNature Genetics 31:241–247.https://doi.org/10.1038/ng917

-

Exploring population size changes using SNP frequency spectraNature Genetics 47:555–559.https://doi.org/10.1038/ng.3254

-

Approximating the coalescent with recombinationPhilosophical Transactions of the Royal Society B: Biological Sciences 360:1387–1393.https://doi.org/10.1098/rstb.2005.1673

-

Can one learn history from the allelic spectrum?Theoretical Population Biology 73:342–348.https://doi.org/10.1016/j.tpb.2008.01.001

-

Methods and models for unravelling human evolutionary historyNature Reviews Genetics 16:727–740.https://doi.org/10.1038/nrg4005

Article and author information

Author details

Funding

Simons Foundation (Simons Investigator Award)

- Oskar Hallatschek

National Institute of General Medical Sciences (R01GM115851)

- Oskar Hallatschek

The funders had no role in study design, data collection and interpretation, or the decision to submit the work for publication.

Acknowledgements

We thank Paul Marjoram, Magnus Nordborg, Stephan Schiffels, and Diethard Tautz for their very thoughtful and helpful review, with additional thanks to Dr. Schiffels for help with MSMC. We also thank Kelley Harris and Rasmus Nielsen for help with human population genetic data, Peter Ralph and Benjamin H. Good for discussions of the mathematical analysis, Julia Palacios for comments on the preprint, Miaoyan Wang for comments on the beta version of the software, and Razib Khan for suggesting the name of the method.

Copyright

© 2017, Weissman et al.

This article is distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use and redistribution provided that the original author and source are credited.

Metrics

-

- 3,071

- views

-

- 404

- downloads

-

- 22

- citations

Views, downloads and citations are aggregated across all versions of this paper published by eLife.

Citations by DOI

-

- 22

- citations for umbrella DOI https://doi.org/10.7554/eLife.24836