Signal denoising through topographic modularity of neural circuits

Abstract

Information from the sensory periphery is conveyed to the cortex via structured projection pathways that spatially segregate stimulus features, providing a robust and efficient encoding strategy. Beyond sensory encoding, this prominent anatomical feature extends throughout the neocortex. However, the extent to which it influences cortical processing is unclear. In this study, we combine cortical circuit modeling with network theory to demonstrate that the sharpness of topographic projections acts as a bifurcation parameter, controlling the macroscopic dynamics and representational precision across a modular network. By shifting the balance of excitation and inhibition, topographic modularity gradually increases task performance and improves the signal-to-noise ratio across the system. We demonstrate that in biologically constrained networks, such a denoising behavior is contingent on recurrent inhibition. We show that this is a robust and generic structural feature that enables a broad range of behaviorally relevant operating regimes, and provide an in-depth theoretical analysis unraveling the dynamical principles underlying the mechanism.

Editor's evaluation

This manuscript puts forward the new idea that topography in neural networks helps to remove noise from inputs. The authors show that a critical level of topography is needed for a network to denoise its inputs.

https://doi.org/10.7554/eLife.77009.sa0

Introduction

Sensory inputs are often ambiguous, noisy, and imprecise. Due to volatility in the environment and inaccurate peripheral representations, the sensory signals that arrive at the neocortical circuitry are often incomplete or corrupt (Faisal et al., 2008; Renart and Machens, 2014). However, from these noisy input streams, the system is able to acquire reliable internal representations and extract relevant computable features at various degrees of abstraction (Friston, 2005; Okada et al., 2010; DiCarlo et al., 2012). Sensory perception in the mammalian neocortex thus relies on efficiently detecting the relevant input signals while minimizing the impact of noise.

Making sense of the environment also requires the estimation of features not explicitly represented by low-level sensory inputs. These inferential processes (Młynarski and Hermundstad, 2018; Parr et al., 2019) rely on the propagation of internal signals such as expectations and predictions, the accuracy of which must be evaluated against the ground truth, that is, the sensory input stream. In a highly dynamic environment, this translates to a continuous process whose precision hinges on the fidelity with which external stimuli are encoded in the neural substrate. Additionally, as the system is modular and hierarchical (strikingly so in the sensory and motor components; Meunier et al., 2010; Park and Friston, 2013), it is critical that the external signal permeates the different processing modules despite the increasing distance from the sensory periphery (the input source) and the various transformations it is exposed to along the way, which degrade the signal via the interference of task-irrelevant and intrinsic, ongoing activity.

Accurate signal propagation can be achieved in a number of ways. One obvious solution is the direct routing and distribution of the signal, such that direct sensory input can be fed to different processing modules, which may be partially achieved through thalamocortical projections (Sherman and Guillery, 2002; Nakajima and Halassa, 2017). Another possibility, which we explore in this study, is to propagate the input signal through tailored pathways that route the information throughout the system, allowing different processing stages to retrieve it without incurring much representational loss. Throughout the mammalian neocortex, the existence and characteristics of structured projections (topographic maps) present a possible substrate for such signal routing. By preserving the relative organization of tuned neuronal populations, such maps imprint spatiotemporal features of (noisy) sensory inputs onto the cortex (Kaas, 1997; Bednar and Wilson, 2016; Wandell and Winawer, 2011). In a previous study (Zajzon et al., 2019), we discovered that structured projections can create feature-specific pathways that allow the external inputs to be faithfully represented and propagated throughout the system, but it remains unclear which connectivity properties are critical and what the underlying mechanism is. Moreover, beyond mere sensory representation, there is evidence that such structure-preserving mappings are also involved in more complex cognitive processes in associative and frontal areas (Hagler and Sereno, 2006; Silver and Kastner, 2009; Patel et al., 2014), suggesting that topographic maps are a prominent structural feature of cortical organization.

In this study, we hypothesize that structured projection pathways allow sensory stimuli to be accurately reconstructed as they permeate multiple processing modules. We demonstrate that, by modulating effective connectivity and regional E/I balance, topographic projections additionally serve a denoising function, not merely allowing the faithful propagation of input signals, but systematically improving the system’s internal representations and increasing signal-to-noise ratio. We identify a critical threshold in the degree of modularity in topographic projections, beyond which the system behaves effectively as a denoising autoencoder (note that the parallel is established here on conceptual, not formal, grounds as the system is capable of retrieving the original, uncorrupted input from a noisy source, but bears no formal similarity to denoising autoencoder algorithms). Additionally, we demonstrate that this phenomenon is robust, with the qualitative behavior persisting across very different models. Theoretical considerations and network simulations show that it hinges solely on the modularity of topographic projections and the presence of recurrent inhibition, with the external input and single-neuron properties influencing where/when, but not if, denoising occurs. Our results suggest that modular structure in feedforward projection pathways can have a significant effect on the system’s qualitative behavior, enabling a wide range of behaviorally relevant and empirically supported dynamic regimes. This allows the system to: (1) maintain stable representations of multiple stimulus features (Andersen et al., 2008); (2) amplify features of interest while suppressing others through winner-takes-all (WTA) mechanisms (Douglas and Martin, 2004; Carandini and Heeger, 2011); and (3) dynamically represent different stimulus features as stable and metastable states and stochastically switch among active representations through a winnerless competition (WLC) effect (McCormick, 2005; Rabinovich et al., 2008; Rost et al., 2018).

Our key finding, that the modulation of information processing dynamics and the fidelity of stimulus/feature representations results from the structure of topographic feedforward projections, provides new meaning and functional relevance to the pervasiveness of these projection maps throughout the mammalian neocortex. Beyond routing feature-specific information from sensory transducers through brainstem, thalamus, and into primary sensory cortices (notably tonotopic, retinotopic, and somatotopic maps), their maintenance within the neocortex (Patel et al., 2014) ensures that even cortical regions that are not directly engaged with the sensory input (higher-order cortex), can receive faithful representations of it, and that these internal signals, emanating from lower-order cortical areas, can dramatically skew and modulate the circuit’s E/I balance and local functional connectivity, resulting in fundamental differences in the systems’ responsiveness.

Results

To investigate the role of structured pathways between processing modules in modulating the fidelity of stimulus representations, we study a network comprising up to six sequentially connected sub-networks (SSNs, see Materials and methods and Figure 1a). Each SSN is a balanced random network (see e.g. Brunel, 2000) of 10,000 sparsely and randomly coupled leaky integrate-and-fire (LIF) neurons (80% excitatory and 20% inhibitory). In each SSN, neurons are assigned to sub-populations associated with a particular stimulus. Excitatory neurons belonging to such stimulus-specific sub-populations then project to the subsequent SSN with a varying degree of specificity. We refer to a set of stimulus-specific sub-populations across the network and the structured feedforward projections among them as a topographic map. The specificity of the map is determined by the degree of modularity of the corresponding projection matrices (see e.g. Figure 1a). Modularity is thus defined as the relative density of connections within a stimulus-specific pathway (i.e., connecting sub-populations associated with the same stimulus; see Materials and methods and Figure 1a). In the following, we study the role of topographic specificity in modulating the system’s functional and representational dynamics and its ability to cope with noise-corrupted input signals.
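To make the notion of modularity concrete, the following minimal sketch builds a feedforward connection mask between two sub-networks. The parametrization is an assumption made purely for illustration: connections within a pathway are drawn with probability `p_in`, connections across pathways with `p_out = (1 - m) * p_in`, and `p_in` is chosen so that the overall density stays at `p`. Sub-populations are assumed to be equally sized and non-overlapping. The definition actually used in the study is given in Materials and methods; the function name `modular_mask` and all argument names are hypothetical.

```python
import numpy as np

def modular_mask(n_pre, n_post, n_maps, m, p, rng):
    """Return (mask, same_pathway) for a feedforward projection.

    mask[i, j] is True if pre-synaptic neuron j projects to post-synaptic
    neuron i. Assumed parametrization (illustrative only): m = 0 gives a
    uniform random projection, m = 1 confines all connections to pathways.
    """
    frac = 1.0 / n_maps  # fraction of neuron pairs lying within a pathway
    # Keep the overall density fixed at p: p = frac*p_in + (1 - frac)*p_out
    p_in = p / (frac + (1.0 - frac) * (1.0 - m))
    p_out = (1.0 - m) * p_in
    if p_in > 1.0:
        raise ValueError("density p is too high for this modularity")
    # Assign neurons to equally sized, non-overlapping sub-populations
    pre_label = np.arange(n_pre) * n_maps // n_pre
    post_label = np.arange(n_post) * n_maps // n_post
    same_pathway = post_label[:, None] == pre_label[None, :]
    prob = np.where(same_pathway, p_in, p_out)
    return rng.random((n_post, n_pre)) < prob, same_pathway

rng = np.random.default_rng(0)
for m in (0.0, 0.5, 1.0):
    mask, same = modular_mask(500, 500, n_maps=10, m=m, p=0.05, rng=rng)
    print(f"m={m:.1f}: within={mask[same].mean():.3f}, "
          f"across={mask[~same].mean():.3f}, total={mask.mean():.3f}")
```

Holding the total density constant, as assumed here, separates the effect of how connections are distributed from the effect of how many there are.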

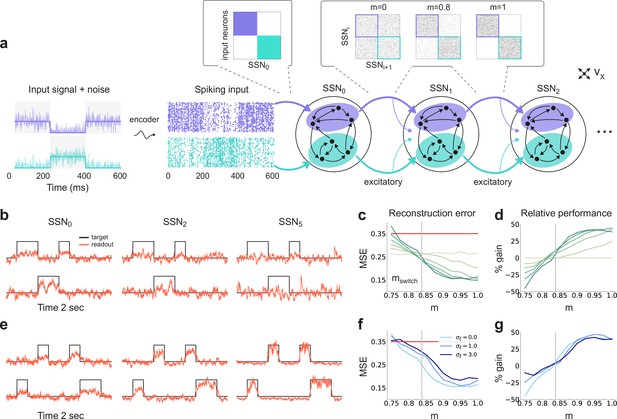

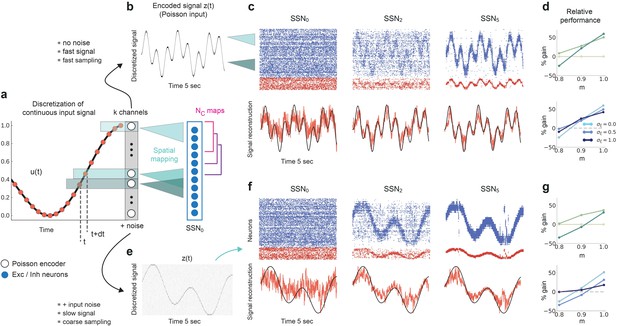

Sequential denoising spiking architecture.

(a) A continuous step signal is used to drive the network. The input is spatially encoded in the first sub-network (SSN0), whereby each input channel is mapped exclusively onto a sub-population of stimulus-specific excitatory and inhibitory neurons (schematically illustrated by the colors; see also inset, top left). This exclusive encoding is retained to variable degrees across the network, through topographically structured feedforward projections (inset, top right) controlled by the modularity parameter (see Materials and methods). This is illustrated explicitly for both topographic maps (purple and cyan arrows). Projections between SSNs are purely excitatory and target both excitatory and inhibitory neurons. (b) Signal reconstruction across the network. Single-trial illustration of target signal (black step function) and readout output (red curves) in three different SSNs, for and no added noise (). For simplicity, only two out of ten input channels are shown. (c) Signal reconstruction error in the different SSNs for the no-noise scenario shown in (b). Color shade denotes network depth, from SSN0 (lightest) to SSN5 (darkest). The horizontal red line represents chance level, while the gray vertical line marks the transition (switching) point (see main text). Figure 1—figure supplement 1 shows the task performance for a broader range of parameters. (d) Performance gain across the network, relative to SSN0, for the setup illustrated in (b). (e) as in (b) but for . (f) Reconstruction error in SSN5 for the different noise intensities. Horizontal and vertical dashed lines as in (c). (g) Performance gain in SSN5, relative to SSN0.

Figure 1—source data 1

Code and data for Figure 1 and related figure supplements.

- https://cdn.elifesciences.org/articles/77009/elife-77009-fig1-data1-v2.zip

Sequential denoising through structured projections

By systematically varying the degree of modular specialization in the feedforward projections (modularity parameter, , see Materials and methods and Figure 1), we can control the segregation of stimulus-specific pathways across the network and investigate how it influences the characteristics of neural representations as the signal propagates. If the feedforward projections are unstructured or moderately structured (), information about the input fails to permeate the network, resulting in a chance-level reconstruction accuracy in the last sub-network, SSN5, even in the absence of noise (see Figure 1b, c). However, as approaches a switching value , there is a qualitative transition in the system’s behavior, leading to a consistently higher reconstruction accuracy across the sub-networks (Figure 1b–e), regardless of the amount of noise added to the signal (Figure 1f, g).

Beyond this transition point, reconstruction accuracy improves with depth, that is, the signal is more accurately represented in SSN5 than in the initial sub-network, SSN0, with an effective accuracy gain of over 40% (Figure 1d, g). While the addition of noise does impair the absolute reconstruction accuracy in all cases (see Figure 1—figure supplement 1), the denoising effect persists even if the input is severely corrupted (, see Figure 1f, g). This is a counter-intuitive result, suggesting that topographic modularity is not only necessary for reliable communication across multiple populations (see Zajzon et al., 2019), but also supports effective denoising, whereby representational precision increases with depth, even if the signal is profoundly distorted by noise.
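As an illustration of how a reconstruction error and a depth-wise performance gain of this kind can be quantified, the sketch below fits a linear readout to population states and compares two synthetic populations. Everything in it is an assumption for illustration: the ridge-regression readout, the normalized root-mean-square error, the definition of gain as relative error reduction, and the synthetic "shallow" and "deep" states (the latter simply carries less noise). The readout and metrics used in the study are described in Materials and methods.

```python
import numpy as np

def ridge_readout(states, target, alpha=1e-3):
    """Fit weights W minimizing ||states @ W - target||^2 + alpha * ||W||^2."""
    gram = states.T @ states + alpha * np.eye(states.shape[1])
    return np.linalg.solve(gram, states.T @ target)

def nrmse(prediction, target):
    """Root-mean-square error normalized by the target's standard deviation."""
    return np.sqrt(np.mean((prediction - target) ** 2)) / np.std(target)

def performance_gain(error_ref, error_deep):
    """Relative error reduction; positive if the deeper readout is better."""
    return (error_ref - error_deep) / error_ref

def readout_error(states, target):
    """Train on the first half of the samples, evaluate on the second half."""
    half = len(target) // 2
    weights = ridge_readout(states[:half], target[:half])
    return nrmse(states[half:] @ weights, target[half:])

# Synthetic stand-ins (hypothetical): two step-signal channels observed
# through 30 units, with more noise in the "shallow" population.
rng = np.random.default_rng(1)
steps = rng.integers(0, 2, size=(50, 2)).astype(float)
target = np.repeat(steps, 20, axis=0)  # 1000 samples of a step signal
mixing = rng.normal(size=(2, 30))
shallow = target @ mixing + rng.normal(scale=2.0, size=(1000, 30))
deep = target @ mixing + rng.normal(scale=0.5, size=(1000, 30))

gain = performance_gain(readout_error(shallow, target),
                        readout_error(deep, target))
print(f"relative gain of the cleaner population: {gain:.2f}")
```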

Noise suppression and response amplification

The sequential denoising effect observed beyond the transition point results in an increasingly accurate input encoding through progressively more precise internal representations. In general, such a phenomenon could be achieved through noise suppression, stimulus-specific response amplification, or both. In this section, we examine these possibilities by analyzing and comparing the input-driven dynamics of the different sub-networks. The strict segregation of stimulus-specific sub-populations in SSN0 is only fully preserved across the system if , in which case signal encoding and transmission primarily rely on this spatial segregation. Spiking activity across the different SSNs (Figure 2a) demonstrates that the system gradually sharpens the segregation of stimulus-specific sub-populations; indeed, in systems with fully modular feedforward projections, activity in the last sub-network is concentrated predominantly in the stimulated sub-populations. This effect can be observed in both excitatory (E) and inhibitory (I) populations, as both are equally targeted by the feedforward excitatory projections. The sharpening effect consists of both noise suppression and response amplification (Figure 2b), measured as the relative firing rates of the non-stimulated and stimulated sub-populations , respectively. For , noise suppression is only marginal and responses within the stimulated pathways are not amplified ().
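The two quantities can be computed directly from per-neuron firing rates. The sketch below assumes, following the caption of Figure 2b, that each measure is the quotient of mean firing rates in the last and first sub-network, taken separately over stimulated and non-stimulated neurons. The function name, the use of a single boolean mask for both sub-networks, and the example numbers are hypothetical.

```python
import numpy as np

def rate_quotients(rates_first, rates_last, stimulated):
    """Return (amplification, suppression) as last/first mean-rate quotients.

    amplification > 1: stimulated neurons fire more in the last sub-network.
    suppression  < 1: non-stimulated neurons fire less in the last sub-network.
    """
    rates_first = np.asarray(rates_first, dtype=float)
    rates_last = np.asarray(rates_last, dtype=float)
    stimulated = np.asarray(stimulated, dtype=bool)
    amplification = rates_last[stimulated].mean() / rates_first[stimulated].mean()
    suppression = rates_last[~stimulated].mean() / rates_first[~stimulated].mean()
    return amplification, suppression

# Made-up rates (spikes/s) for four neurons, two of them stimulated
amp, sup = rate_quotients([10, 10, 5, 5], [20, 20, 1, 1],
                          [True, True, False, False])
print(f"amplification={amp:.2f}, suppression={sup:.2f}")
```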

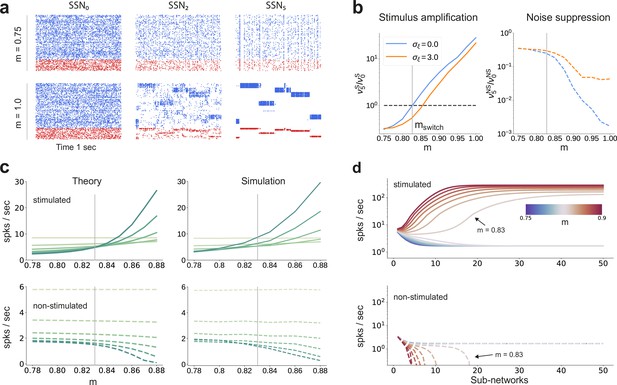

Activity modulation and representational precision.

(a) One second of spiking activity observed across 1000 randomly chosen excitatory (blue) and inhibitory (red) neurons in SSN0, SSN2 and SSN5, for and (top) and (bottom). (b) Mean quotient of firing rates in SSN5 and SSN0 for stimulated (S, left) and non-stimulated (NS, right) sub-populations for different input noise levels, describing response amplification and noise suppression, respectively. (c) Mean firing rates of the stimulated (top) and non-stimulated (bottom) excitatory sub-populations in the different SSNs (color shade as in Figure 1), for . For modularity values facilitating an asynchronous irregular regime across the network, the firing rates predicted by mean-field theory (left) closely match the simulation data (right). (d) Mean-field predictions for the stationary firing rates of the stimulated (top) and non-stimulated (bottom) sub-populations, in a system with 50 sub-networks and . Note that all reported simulation data correspond to the mean firing rates acquired over a period of 10 s and averaged across 5 trials per condition. Figure 2—figure supplement 1 shows the firing rates as a function of the input intensity .

Figure 2—source data 1

Code and data for Figure 2 and related figure supplements.

- https://cdn.elifesciences.org/articles/77009/elife-77009-fig2-data1-v2.zip

Mean-field analysis of the stationary network activity (see Materials and methods and Appendix B) predicts that the firing rates of the stimulus-specific sub-populations increase systematically with modularity, whereas the untuned neurons are gradually silenced (Figure 2c, left). At the transition point , mean firing rates across the different sub-networks converge, which translates into a globally uniform signal encoding capacity, corresponding to the zero-gain convergence point in Figure 1d, g. As the degree of modularity increases beyond this point, the self-consistent state is lost again as the functional dynamics across the network shift toward a gradual response sharpening, whereby the activity of stimulus-tuned neurons becomes increasingly dominant (Figure 2a–c). The effect is more pronounced for the deeper sub-networks. Note that the analytical results match well with those obtained by numerical simulation (Figure 2c, right).

In the limit of very deep networks (up to 50 SSNs, Figure 2d) the system becomes bistable, with rates converging to either a high-activity state associated with signal amplification or a low-activity state driven by the background input. The transition point is observed at a modularity value of , matching the results reported so far. Below this value, elevated activity in the stimulated sub-populations can be maintained across the initial sub-networks (<10), but eventually dies out; the rate of all neurons decays and information about the input cannot reach the deeper populations. Importantly, for , the transition toward the high-activity state is slower. This allows the input signal to faithfully propagate across a large number of sub-networks (), without being driven into implausible activity states.
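The qualitative picture of a deep chain settling into one of two activity states can be reproduced with a deliberately simple toy model. The sketch below is not the mean-field theory of the study: it iterates a one-dimensional sigmoidal rate map from one sub-network to the next, with normalized rates in [0, 1]. The parameter `gain` is a hypothetical stand-in for the effective feedforward coupling along the stimulated pathway, and `steepness` and `threshold` are arbitrary illustrative choices.

```python
import math

def propagate(rate_in, gain, depth, steepness=10.0, threshold=0.5):
    """Iterate a toy sigmoidal rate map across `depth` sub-networks.

    Returns the list of normalized rates, starting with the input rate.
    """
    rates = [rate_in]
    for _ in range(depth):
        drive = steepness * (gain * rates[-1] - threshold)
        rates.append(1.0 / (1.0 + math.exp(-drive)))
    return rates

# Same input rate, different (hypothetical) effective gains
for gain in (0.7, 0.85, 1.0):
    final = propagate(0.6, gain, depth=50)[-1]
    print(f"gain={gain:.2f}: rate after 50 sub-networks = {final:.3f}")
```

In this toy map, a sufficiently weak gain leaves only the low-activity fixed point, so any initial elevation decays with depth, whereas a stronger gain adds a high-activity fixed point that inputs above an intermediate threshold converge to.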

E/I balance and asymmetric effective couplings

The departure from the balanced activity in the initial sub-networks can be better understood by zooming in on the synaptic level and analyzing how topography influences the synaptic input currents. The segregation of feedforward projections into stimulus-specific pathways breaks the symmetry between excitation and inhibition (see Figure 3a) that characterizes the balanced state (Haider et al., 2006; Shadlen and Newsome, 1994), for which the first two sub-networks were tuned (see Materials and methods). E/I balance is thus systematically shifted toward excitation in the stimulated populations and toward inhibition in the non-stimulated ones. Neurons belonging to sub-populations associated with the active stimulus receive significantly more net excitation, whereas the other neurons become gradually more inhibited. This disparity grows not only with modularity but also with network depth. Overall, across the whole system, increasing modularity results in an increasingly inhibition-dominated dynamical regime (inset in Figure 3a), whereby stronger effective inhibition silences non-stimulated populations, thus sharpening stimulus/feature representations by concentrating activity in the stimulus-driven sub-populations.

Asymmetric effective couplings modulate the E/I balance and support sequential denoising.

(a) Mean synaptic input currents for neurons in the stimulated (solid curves) and non-stimulated (dashed curves) excitatory sub-populations in the different SSNs. To avoid clutter, data for SSN0 are only shown by markers (independent of ). Inset shows the currents (in pA) averaged over all excitatory neurons in the different sub-networks; increasing modularity leads to a dominance of inhibition in the deeper sub-networks. Color shade represents depth, from SSN1 (light) to SSN5 (dark). (b) Mean-field approximation of the effective recurrent weights in SSN5. Curve shade and style as in (a). (c) Spectral radius of the effective connectivity matrices as a function of modularity. (d) Eigenvalue spectra for the effective coupling matrices in SSN5, for (top) and (bottom). The largest negative eigenvalue (outlier, see Materials and methods), characteristic of inhibition-dominated networks, is omitted for clarity.

Figure 3—source data 1

Code and data for Figure 3.

- https://cdn.elifesciences.org/articles/77009/elife-77009-fig3-data1-v2.zip

To gain an intuitive understanding of these effects from a dynamical systems perspective, we linearize the network dynamics around the stationary working points of the individual populations (Tetzlaff et al., 2012) in order to obtain the effective connectivity of the system (see Materials and methods and Appendix B). The effective impact of a single spike from a presynaptic neuron on the firing rate of a postsynaptic neuron (the effective weight ) is determined not only by the synaptic efficacies , but also by the statistics of the synaptic input fluctuations to the target cell that determine its excitability (see Materials and methods, Equation 6). This analysis reveals that there is an increase in the effective synaptic input onto neurons in the stimulated sub-populations as a function of modularity (Figure 3b). Conversely, non-stimulated neurons effectively receive weaker excitatory (and stronger inhibitory) drive and become increasingly less responsive (see Figure 3a, b). The role of topographic modularity in denoising can thus be understood as a transient, stimulus-specific change in effective connectivity.

For low and moderate topographic precision (), denoising does not occur as the effective weights are sufficiently similar to maintain a stable E/I balance across all populations and sub-networks (Figure 3a, b), resulting in a relatively uniform global dynamical state (indicated in Figure 3c by a constant spectral radius for , see also Materials and methods) and stable linearized dynamics ().

However, as the feedforward projections become more structured, the system undergoes qualitative changes: after a weak transient () the spectral radius in the deep SSNs expands due to the increased effective coupling to the stimulated sub-population (Figure 3b); the spectral radius eventually () contracts with increasing modularity (Figure 3c, d). Given that is determined by the variance of , that is heterogeneity across connections (Rajan and Abbott, 2006), this behavior is expected: most weights are in the non-stimulated pathways, which decrease with larger and network depth (Figure 3b). Strong inhibitory currents (Figure 3a) suppress the majority of neurons, thereby reducing noise, as demonstrated by the collapse of the bulk of the eigenvalues toward the center for larger (Figure 3d). Indicative of a more constrained state space, this contractive effect suggests that population activity becomes gradually entrained by the spatially encoded input along the stimulated pathway, whereas the responses of the non-stimulated neurons have a diminishing influence on the overall behavior.
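The link between weight heterogeneity and spectral radius can be checked numerically in a simplified setting. The sketch below assumes a matrix with independent, zero-mean Gaussian entries of standard deviation `sigma`, for which the eigenvalue bulk fills a disc of radius approximately `sigma * sqrt(n)`. This is a simplification: the effective connectivity in the study has distinct excitatory and inhibitory statistics and an outlier eigenvalue, neither of which is modeled here. The matrix size and the values of `sigma` are arbitrary.

```python
import numpy as np

def spectral_radius(matrix):
    """Largest eigenvalue modulus of a square matrix."""
    return np.max(np.abs(np.linalg.eigvals(matrix)))

rng = np.random.default_rng(0)
n = 400
for sigma in (0.02, 0.05):
    weights = rng.normal(0.0, sigma, size=(n, n))
    print(f"sigma={sigma}: measured radius={spectral_radius(weights):.3f}, "
          f"sigma*sqrt(n)={sigma * np.sqrt(n):.3f}")
```

Reducing the spread of the weights shrinks the disc, which is the contraction of the eigenvalue bulk toward the center described above.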

By biasing the effective connectivity of the system, precise topography can thus modulate the balance of excitation and inhibition in the different sub-networks, concentrating the activity along specific pathways. This results in both a systematic amplification of stimulus-specific responses and a systematic suppression of noise (Figure 2b). The sharpness/precision of topographic specificity along these pathways thus acts as a critical control parameter that largely determines the qualitative behavior of the system and can dramatically alter its responsiveness to external inputs.

Modulating inhibition

How can the system generate and maintain the elevated inhibition underlying such a noise-suppressing regime? On the one hand, feedforward excitatory input may increase the activity of certain excitatory neurons in of sub-network , which, in turn, can lead to increased mean inhibition through local recurrent connections. On the other hand, denoising could depend strongly on the concerted topographic projections onto . Such structured feedforward inhibition is known to play important functional roles in, for example, sharpening the spatial contrast of somatosensory stimuli (Mountcastle and Powell, 1959) or enhancing coding precision throughout the ascending auditory pathways (Roberts et al., 2013).

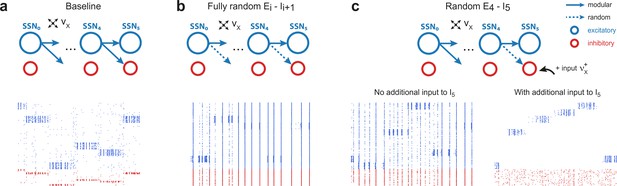

To investigate whether recurrent activity alone can generate sufficiently strong inhibition for signal transmission and denoising, we maintained the modular structure between the excitatory populations and randomized the feedforward projections onto the inhibitory ones ( for , compare top panels of Figure 4a, b). This leads to unstable firing patterns in the downstream sub-networks, characterized by significant accumulation of synchrony and increased firing rates (see bottom panels of Figure 4a, b and Figure 4—figure supplement 1a, b). These effects, known to result from shared pre-synaptic excitatory inputs (see e.g. Shadlen and Newsome, 1998; Tetzlaff et al., 2003; Kumar et al., 2008a), are more pronounced for larger and network depth (see Figure 4—figure supplement 1). Compared with the baseline network, whose activity shows clear spatially encoded stimuli (sequential activation of stimulus-specific sub-populations [Figure 4a, bottom]), removing structure from the projections onto inhibitory neurons abolishes the effect and prevents accurate signal transmission.

Modular projections to inhibitory populations stabilize network dynamics.

Raster plots show 1 s of spiking activity of 1000 randomly chosen neurons in SSN5, for different network configurations. (a) Baseline network with . (b) Unstructured feedforward projections to the inhibitory sub-populations lead to highly synchronized network activity, hindering signal representation. (c) Same as the baseline network in (a), but with random projections for and additional but unspecific (Poissonian) excitatory input to controlled via . Without such input (, left), the activity is strongly synchronous, but this is compensated for by the additional excitation, reducing synchrony and restoring the denoising property ( spikes/s, right). Figure 4—figure supplement 1 depicts the activity statistics in the last two modules, for the different scenarios.

Figure 4—source data 1

Code and data for Figure 4 and related figure supplements.

- https://cdn.elifesciences.org/articles/77009/elife-77009-fig4-data1-v2.zip

These effects of unstructured inhibitory projections are so marked that they can be observed even if a single set of projections is modified: this can be seen in Figure 4c, where only the connections are randomized. It is worth noting, however, that the excessive synchronization that results from unstructured inhibitory projections (Figure 4c, bottom left, no additional input condition) can be easily counteracted by driving (the inhibitory population that receives only unstructured projections) with additional uncorrelated external input. If strong enough (), this additional external drive pushes the inhibitory population into an asynchronous regime that restores the sharp, stimulus-specific responses in the excitatory population of the corresponding sub-network (see Figure 4c, bottom right, and Figure 4—figure supplement 1c).

These results emphasize the control of inhibitory neurons’ responsiveness as the main causal mechanism behind the effects reported. Elevated local inhibition is strictly required, but whether this is achieved by tailored, stimulus-specific activation of inhibitory sub-populations or by uncorrelated excitatory drive onto all inhibitory neurons appears to be irrelevant: both conditions result in sharp, stimulus-tuned responses in the excitatory populations.

A generalizable structural effect

We have demonstrated that, by controlling the different sub-networks’ operating point, the sharpness of feedforward projections allows the architecture to systematically improve the quality of internal representations and retrieve the input structure, even if profoundly corrupted by noise. In this section, we investigate the robustness of the phenomenon in order to determine whether it can be entirely ascribed to the topographic projections (a structural/architectural feature) or if the particular choices of models and model parameters for neuronal and synaptic dynamics contribute to the effect.

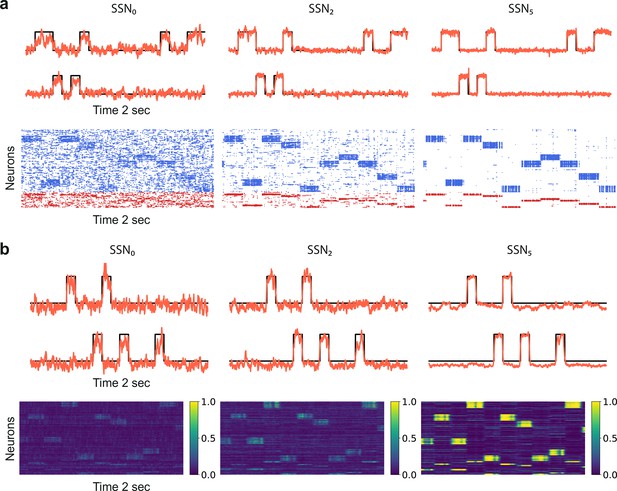

To do so, we test two alternative model systems on the signal denoising task. These are structured similarly to the baseline system explored so far, comprising separate sequential sub-networks with modular feedforward projections among them (see Figure 1 and Materials and methods), but vary in total size and in neuronal and synaptic dynamics. In the first test case, only the models of synaptic transmission and corresponding parameters are altered. To increase biological verisimilitude and following Zajzon et al., 2019, synaptic transmission is modeled as a conductance-based process, with different kinetics for excitatory and inhibitory transmission, corresponding to the responses of and receptors, respectively (see Materials and methods and Supplementary file 3 for details). The results, illustrated in Figure 5a, demonstrate that task performance and population activity across the network follow a similar trend to the baseline model (Figures 1 and 2a, b). Despite severe noise corruption, the system is able to generate a clear, discernible representation of the input as early as SSN2 and can accurately reconstruct the signal. Importantly, the relative improvement with increasing modularity and network depth is retained. In comparison to the baseline model, the transition occurs for a slightly different topographic configuration, , at which point the network dynamics converge toward a low-rate, stable asynchronous irregular regime across all populations, facilitating a linear firing rate propagation along the topographic maps (Figure 5—figure supplement 1).

Denoising through modular topography is a robust structural effect.

(a) Signal reconstruction (top) and corresponding network activity (bottom) for a network with leaky integrate-and-fire (LIF) neurons and conductance-based synapses (see Materials and methods). Single-trial illustration of target signal (black step function) and readout output (red curves) in three different SSNs, for and strong noise corruption (). For simplicity, only two out of ten input channels are shown. Figure 5—figure supplement 1 shows additional activity statistics. (b) As in (a) for a rate-based model with and (see Materials and methods for details).

Figure 5—source data 1

Code and data for Figure 5 and related figure supplements.

- https://cdn.elifesciences.org/articles/77009/elife-77009-fig5-data1-v2.zip

The second test case is a smaller and simpler network of nonlinear rate neuron models (see Figure 5b and Materials and methods) which interact via continuous signals (rates) rather than discontinuities (spikes). Despite these profound differences in the neuronal and synaptic dynamics, the same behavior is observed, demonstrating that sequential denoising is a structural effect, dependent on the population firing rates and thus less sensitive to fluctuations in the precise spike times. Moreover, the robustness with respect to the network size suggests that denoising could also be performed in smaller, localized circuits, possibly operating in parallel on different features of the input stimuli.

Variable map sizes

Despite their ubiquity throughout the neocortex, the characteristics of structured projection pathways are far from uniform (Bednar and Wilson, 2016), exhibiting marked differences in spatial precision and specificity, aligned with macroscopic gradients of cortical organization. This non-uniformity may play an important functional role supporting feature aggregation (Hagler and Sereno, 2006) and the development of mixed representations (Patel et al., 2014) in higher (more anterior) cortical areas. Here, we consider two scenarios in the baseline (current-based) model to examine the robustness of our findings to more complex topographic configurations.

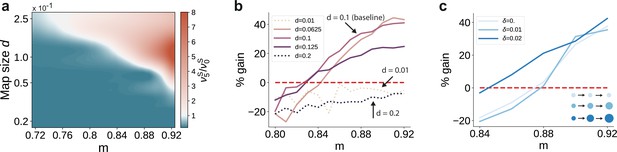

First, we varied the size of stimulus-tuned sub-populations (parametrized by , see Materials and methods) but kept it fixed across the network. For small sub-populations and intermediate degrees of topographic modularity, the activity along the stimulated pathway decays with network depth, suggesting that input information does not reach the deeper SSNs (see Figure 6a and Figure 6—figure supplement 1). These results place a lower bound on the size of stimulus-tuned sub-populations below which no signal propagation can occur, as reflected by the negative gain in performance for (Figure 6b). Whereas denoising is robust to variation around the baseline value of that yielded perfect partitioning of the feedforward projections (see Supplementary Materials), an upper bound may emerge due to increasing overlap between the maps ( in Figure 6b). In this case, the activity may ‘spill over’ to pathways other than the stimulated one, corrupting the input representations and hindering accurate transmission and decoding. This can be alleviated by reduced or no overlap (as in Figure 6a), in which case signal propagation and denoising are successful for larger map sizes ( also for ). We thus observe a trade-off between map size, overlap and the degree of topographic precision that is required to accurately propagate stimulus representations (see Discussion).

Variation in the map sizes.

(a) Ratio of the firing rates of the stimulated sub-populations in the first and last sub-networks, , as a function of modularity and map size (parameterized by and constant throughout the network, that is , see Materials and methods). Depicted values correspond to stationary firing rates predicted by mean-field theory, smoothed using a Lanczos filter. Note that, in order to ensure that every neuron was uniquely tuned, that is, that there is no overlap between stimulus-specific sub-populations, the number of sub-populations was chosen to be proportional to the map size (). (b, c) Performance gain in SSN5 relative to SSN0 (ten stimuli, as in Figure 1d, g), for varying properties of structural mappings: (b) fixed map size () with color shade denoting map size, and (c) linearly increasing map size () and a smaller initial map size . The results depict the average performance gains measured across five trials, using the current-based model illustrated in Figure 1 (ten stimuli) and no input noise (). Figure 6—figure supplement 1 further illustrates how the activity varies across the modules as a function of the map size.


Figure 6—source data 1

Code and data for Figure 6 and related figure supplements.

- https://cdn.elifesciences.org/articles/77009/elife-77009-fig6-data1-v2.zip

Second, we took into account the fact that these structural features are known to vary with hierarchical depth resulting in increasingly larger sub-populations and, consequently, increasingly overlapping stimulus selectivity (Smith et al., 2001; Patel et al., 2014; Bednar and Wilson, 2016). To capture this effect, we introduce a linear scaling of map size with depth ( for , see Materials and methods). The ability of the circuit to gradually clean the signal’s representation is fully preserved, as illustrated in Figure 6c. In fact, for intermediate modularity () broadening the projections can further sharpen the reconstruction precision (compare curves for and ).

Taken together, these observations demonstrate that a gradual denoising of stimulus inputs can occur entirely as a consequence of the modular wiring between the subsequent processing circuits. Importantly, this effect generalizes well across diverse neuron and synapse models, as well as key system properties, making modular topography a potentially universal circuit feature for handling noisy data streams.

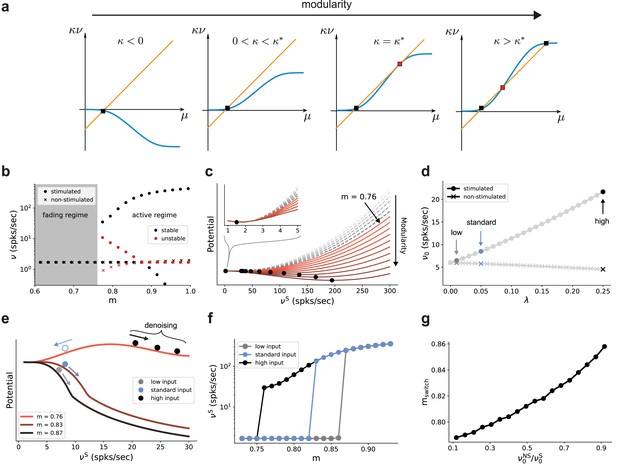

Modularity as a bifurcation parameter

The results so far indicate that the modular topographic projections, more so than the individual characteristics of neurons and synapses, lead to a sequential denoising effect through a joint process of signal amplification and noise suppression. To better understand how the system transitions to such an operating regime, it is helpful to examine its macroscopic dynamics in the limit of many sub-networks (Toyoizumi, 2012; Cayco-Gajic and Shea-Brown, 2013; Kadmon and Sompolinsky, 2016). We apply standard mean-field techniques (Fourcaud and Brunel, 2002; Helias et al., 2013; Schuecker et al., 2015) to find the asymptotic firing rates (fixed points across sub-networks) of the stimulated and non-stimulated sub-populations as a function of topography (Figure 2d). For this, we can approximate the input μ to a group of neurons as a linear function of its firing rate with a slope that is determined by the coupling within the group and an offset given by inputs from other groups of neurons (orange line in Figure 7a). With an approximately sigmoidal rate transfer function, the self-consistent solutions are at the intersections marked in Figure 7a.
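The graphical construction described above can be illustrated with a minimal numerical sketch. It is not the mean-field model of the paper: the sigmoidal `transfer` function, the normalized rate scale, and the values of `slope` and `offset` are all illustrative assumptions, chosen only to show how the number of intersections between a sigmoid and a straight line changes when the slope of the linear input relation changes sign.

```python
import numpy as np

# All constants are illustrative assumptions, with rates normalized to [0, 1].
NU_MAX, MU_HALF, WIDTH = 1.0, 0.5, 0.1

def transfer(mu):
    """Hypothetical sigmoidal rate transfer function of the mean input mu."""
    return NU_MAX / (1.0 + np.exp(-(mu - MU_HALF) / WIDTH))

def fixed_points(slope, offset, n_grid=20001):
    """Rates nu in [0, NU_MAX] that satisfy nu = transfer(slope * nu + offset)."""
    nu = np.linspace(0.0, NU_MAX, n_grid)
    mismatch = transfer(slope * nu + offset) - nu  # zero at a fixed point
    roots = []
    # A sign change between neighboring grid points brackets one fixed point.
    for i in np.flatnonzero(np.sign(mismatch[:-1]) != np.sign(mismatch[1:])):
        lo, hi = nu[i], nu[i + 1]
        for _ in range(60):  # refine the bracket by bisection
            mid = 0.5 * (lo + hi)
            same_side = np.sign(transfer(slope * mid + offset) - mid) == np.sign(mismatch[i])
            lo, hi = (mid, hi) if same_side else (lo, mid)
        roots.append(0.5 * (lo + hi))
    return roots

def is_stable(nu, slope, offset):
    """Stable if the map nu -> transfer(slope * nu + offset) contracts at nu."""
    phi = transfer(slope * nu + offset)
    d_phi = phi * (1.0 - phi / NU_MAX) / WIDTH  # derivative of the sigmoid
    return bool(abs(slope * d_phi) < 1.0)

fading = fixed_points(slope=-0.5, offset=0.2)  # net inhibitory coupling
active = fixed_points(slope=1.0, offset=0.05)  # net excitatory coupling
print(len(fading), len(active))
print([is_stable(nu, 1.0, 0.05) for nu in active])
```

With these toy values, a negative slope yields a single low-rate intersection, whereas a positive slope yields a low-rate and a high-rate stable solution separated by an unstable one.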

Modularity changes the fixed point structure of the system.

(a) Sketch of the self-consistent solution (for the full derivation, see Appendix B) for the firing rate of the stimulated sub-population (blue curves) and the linear relation (orange lines), in the limit of infinitely deep networks. Squares denote stable (black) and unstable (red) fixed points where input and output rates are the same. (b) Bifurcation diagram obtained from numerical evaluation of the mean-field self-consistency equations, Equations 9 and 10, showing a single stable fixed point in the fading regime, and multiple stable (black) and unstable (red) fixed points in the active regime where denoising occurs. (c) Potential energy of the mean activity (see Materials and methods and Equation 22 in Appendix B) for increasing topographic modularity. A stable state, corresponding to a local minimum in the potential, exists at a low non-zero rate in every case, including for (gray dashed curves, inset). For (colored solid curves), a second fixed point appears at progressively larger firing rates. (d) Theoretical predictions for the stationary firing rates of the stimulated and non-stimulated sub-populations in SSN0, as a function of stimulus intensity (, see Materials and methods). Low, standard, and high denote values of 0.01, 0.05 (baseline value used in Figure 1), and 0.25, respectively. (e) Sketch of attractor basins in the potential for different values of . Markers correspond to the highlighted initial states in (d), with solid and dashed arrows indicating attraction toward the high- and low-activity state, respectively. (f) Firing rates of the stimulated sub-population as a function of modularity in the limit of infinite sub-networks, for the three different marked in (d). (g) Modularity threshold for the active regime shifts with increasing noise in the input, modeled as additional input to the non-stimulated sub-populations in SSN0. Figure 7—figure supplement 1 shows the dependency of the effective feedforward couplings on different parameters.
Note that all panels (except (a)) show theoretical predictions obtained from numerical evaluation of the mean-field self-consistency equations.


Figure 7—source data 1

Code and data for Figure 7 and related figure supplements.

- https://cdn.elifesciences.org/articles/77009/elife-77009-fig7-data1-v2.zip

Formally, all neurons in the deep sub-networks of one topographic map form such a group as they share the same firing rate (asymptotic value). The coupling within this group comprises not only recurrent connections of one sub-network but also modular feedforward projections across sub-networks. For small modularity, the group is in an inhibition-dominated regime () and we obtain only one fixed point at low activity (Figure 7a, left). Importantly, the firing rate of this fixed point is the same for stimulated and non-stimulated topographic maps. Any influence of input signals applied to SSN0 therefore vanishes in the deeper sub-networks and the signal cannot be reconstructed (fading regime). As topographic projections become more concentrated (larger ), changes sign and gradually leads to two additional fixed points (as conceptually illustrated in Figure 7a and quantified in Figure 7b by numerically solving the self-consistent mean-field equations, see also Appendix B): an unstable one (red) that eventually vanishes with increasing and a stable high-activity fixed point (black). The bistability opens the possibility to distinguish between stimulated and non-stimulated topographic maps and thereby reconstruct the signal in deep sub-networks: in the active regime beyond the critical modularity threshold (here ), a sufficiently strong input signal can drive the activity along the stimulated map to the high-activity fixed point, such that it can permeate the system, while the non-stimulated sub-populations still converge to the low-activity fixed point. Note that this critical modularity represents the minimum modularity value for which bistability emerges. It typically differs from the actual switching point , which additionally depends on the input intensity.

In the potential energy landscape (see Materials and methods), where stable fixed points correspond to minima, the bistability that emerges for more structured topography can be understood as a transition from a single minimum at low rates (Figure 7c, inset) to a second minimum associated with the high-activity state (Figure 7c). Even though the full dynamics of the spiking network away from the fixed point cannot be entirely understood in this simplified potential picture (see Appendix B), qualitatively, more strongly modular networks cause deeper potential wells, corresponding to more attractive dynamical states and higher firing rates (see Figure 9—figure supplement 2).
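The correspondence between stable fixed points and potential minima can be made concrete with a small sketch. It assumes one common construction, in which the potential is the negative integral of the mismatch between output and input rate; the definition used in the paper (Equation 22 in Appendix B) may differ in detail. The sigmoidal `transfer` function and all parameter values are hypothetical and serve only to illustrate the transition from one to two minima.

```python
import numpy as np

def transfer(mu, mu_half=0.5, width=0.1):
    """Hypothetical sigmoidal transfer function (normalized rates in [0, 1])."""
    return 1.0 / (1.0 + np.exp(-(mu - mu_half) / width))

def potential(slope, offset, n_grid=2001):
    """Assumed potential U(nu) = -integral of [transfer(slope * x + offset) - x] dx."""
    nu = np.linspace(0.0, 1.0, n_grid)
    drift = transfer(slope * nu + offset) - nu
    # Cumulative trapezoidal integration of the drift, starting from U(0) = 0.
    steps = 0.5 * (drift[1:] + drift[:-1]) * np.diff(nu)
    return nu, -np.concatenate(([0.0], np.cumsum(steps)))

def local_minima(nu, energy):
    """Rates at which the sampled potential has a strict interior minimum."""
    inner = (energy[1:-1] < energy[:-2]) & (energy[1:-1] < energy[2:])
    return nu[1:-1][inner]

for slope, offset in [(-0.5, 0.2), (1.0, 0.05)]:  # inhibitory vs. excitatory coupling
    nu, energy = potential(slope, offset)
    print(slope, local_minima(nu, energy))
```

In this toy setting, the inhibition-dominated case has a single well at a low rate, while the excitatory case has an additional well close to the maximal rate.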

Because the intensity of the input signal dictates the rate of different populations in the initial sub-network SSN0 (Figure 7d), it also determines, for any given modularity, whether the rate of the stimulated sub-population is in the basin of attraction of the high-activity (see Figure 7e, solid markers and arrows) or low-activity (dashed, blue marker and arrow) fixed point. Denoising, and therefore increasing signal reconstruction, is thus achieved by successively (across sub-networks) pushing the population states toward the self-consistent firing rates.

As reported above, for the baseline network and (standard) input () used in Figures 1 and 2, the switching point between low and high activity is at (blue markers in Figure 7d, f). Stronger input signals move the switching point toward the minimal modularity of the active regime (black markers in Figure 7d, f), while weaker inputs only induce a switch at larger modularities (gray markers in Figure 7d, f).

Noise in the input simply shifts the transition point to the high-activity state in a similar manner, with more modular connectivity required to compensate for stronger jitter (Figure 7g). However, as long as the mean firing rate of the stimulated sub-population in SSN0 is slightly higher than that of the non-stimulated ones (up to 0.5 spks/sec), it is sufficient to position the system in the attracting basin of the high-rate fixed point and the system is able to clean the signal representation. This indicates a remarkably robust denoising mechanism.

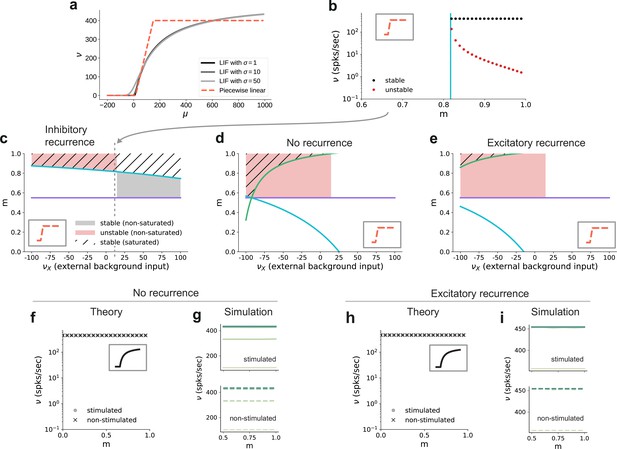

Critical modularity for denoising

In addition to properties of the input, the critical modularity marking the onset of the active regime is also influenced by neuronal and connectivity features. To build some intuition, it is helpful to consider the sigmoidal activation function of spiking neurons (Figure 8a). The nonlinearity of this function prevents us from obtaining quantitative, closed-form analytical expressions for the critical modularity and requires a numerical solution of the self-consistency equations (Figure 7b). However, since the continuous rate model shows a qualitatively similar behavior to the spiking baseline model (see Section ‘A generalizable structural effect’), we can study a fully analytically tractable model with a piecewise linear activation function (Figure 8a, b) to expose the dependence of the critical modularity on both neuron and network properties (see detailed derivations in Appendix B).

Dependence of critical modularity on neuron and connectivity features.

(a) Activation function for leaky integrate-and-fire model as a function of the mean input μ for (black to gray) and piecewise linear approximation with qualitatively similar shape (red). (b) Bifurcation diagram as in Figure 7b, but for piecewise linear activation function shown in inset. Low-activity fixed points at zero rate are not shown, which is the case throughout for the non-stimulated sub-populations. This panel corresponds to the cross-section marked by the gray dashed lines in (c), at . Likewise, the vertical cyan bar corresponds to the lower bound on modularity depicted by the cyan curve in (c) for the same value . (c) Analytically derived bounds on modularity (purple line corresponds to Equation 1, cyan curve to Equation 2) as a function of external input for the baseline model with inhibition-dominated recurrent connectivity (). Shaded regions denote positions of stable (black) and unstable (red) fixed points with and . Hatched area represents region with stable fixed points at saturated rates. Denoising occurs in all areas with stable fixed points (hatched and black shaded regions). Negative values on the x-axis correspond to inhibitory external background input with rate . (d) Same as panel (c) for networks with no recurrent connectivity within the SSNs (green curve defined by Equation 3). (e) Same as panel (c) for networks with excitation-dominated connectivity within SSNs (). (f) Same as Figure 7b, obtained through numerical evaluation of the mean-field self-consistent equations for the spiking model. All non-zero fixed points are stable, with points representing stimulated (circle) and non-stimulated (cross) populations overlapping. (g) Mean firing rates across the SSNs in the current-based (baseline) model with no recurrent connections, obtained from 5 s of network simulations and averaged over five trials. (h, i) Same as (f, g) for networks with excitation-dominated connectivity.


Figure 8—source data 1

Code and data for Figure 8 and related figure supplements.

- https://cdn.elifesciences.org/articles/77009/elife-77009-fig8-data1-v2.zip

In this simple model, the output is zero for inputs below and at maximum rate for inputs above . In between these two bounds, the output is linearly interpolated . As discussed before, successful denoising is achieved if the non-stimulated sub-populations are silent, , and the stimulated sub-populations are active, . Note that in the following we focus on this ideal scenario representing perfect denoising, but, in principle, intermediate solutions with may also occur and could still be considered as successful denoising. Analyzing for which neuron, network and input properties this scenario is achieved, we obtain multiple conditions for the modularity that need to be fulfilled.
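For concreteness, the piecewise linear activation function described above can be written in a few lines. The parameter names `mu_min`, `mu_max`, and `nu_max` (lower and upper input bounds and maximum rate) and the numerical values in the example call are placeholders introduced here for illustration; they are not the values used in the model.

```python
import numpy as np

def piecewise_linear_rate(mu, mu_min, mu_max, nu_max):
    """Output rate: zero below mu_min, nu_max above mu_max, linear in between."""
    mu = np.asarray(mu, dtype=float)
    # Fraction of the dynamic range covered by the input, clipped to [0, 1].
    fraction = np.clip((mu - mu_min) / (mu_max - mu_min), 0.0, 1.0)
    return nu_max * fraction

# Hypothetical inputs below, inside, and above the dynamic range.
print(piecewise_linear_rate([-1.0, 0.5, 2.0], mu_min=0.0, mu_max=1.0, nu_max=100.0))
```

In this picture, the ideal denoising scenario corresponds to the non-stimulated sub-populations receiving a total input below the lower bound (zero output) while the stimulated sub-populations receive an input above it.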

The first condition illustrates the dependence of the critical modularity on the neuron model (Figure 8c, purple horizontal line)

where is the number of stimulus-specific sub-populations and (typically with a value of 0.25) represents the (reduced) noise ratio in the deeper sub-networks, with scaling the noise and scaling the feedforward connections (see Materials and methods). This is necessary to ensure that the total excitatory input to each neuron is consistent across the network. In particular, the critical modularity depends on the dynamic range of input and output . The condition represents a lower bound on the modularity required for denoising. Importantly, while it depends on the effective coupling strength , the noise ratio and the number of maps (see Materials and methods), it does not depend on the nature of the recurrent interactions (E/I ratio) and the strength of the external background input. In addition, we find two additional critical values of the modularity (cyan and green curves in Figure 8c–e), both of which do depend on the strength of the external background input and the recurrent connectivity (E/I ratio ):

Depending on the external input strength , these are either upper or lower bounds. In the denominator of these expressions, the total input (recurrent and external) is compared to the limits of the dynamic range of the neuron model. The cancellation between recurrent and external inputs in the inhibition-dominated baseline model typically yields a total input within the dynamic range of the neuron, such that modularity in feedforward connections can decrease the input of the non-stimulated sub-populations to silence them, and increase the input of the stimulated sub-populations to support their activity. The competition between the excitatory and inhibitory contributions ensures that the total input does not lead to a saturating output activity. Thus, for inhibitory recurrence, denoising can be achieved at a moderate level of modularity over a large range of external background inputs (shaded black and hatched regions in Figure 8c), which demonstrates a robust denoising mechanism even in the presence of changes in the input environment.

In contrast, if recurrent connections are absent, strong inhibitory external background input is required to counteract the excitatory feedforward input and achieve a denoising scenario (Figure 8d). Fixed points at non-saturated activity are also present for low excitatory external input, but unstable due to the positive recurrent feedback. This is because in networks without recurrence, there is no competition between the recurrent input and the external and feedforward inputs. As a result, the input to both the stimulated and non-stimulated sub-populations is typically high, such that modulation of the feedforward input via topography cannot lead to a strong distinction between the pathways as required for denoising. In these networks, one typically observes high activity in all populations. A similar behavior can be observed in excitation-dominated networks (Figure 8e), where the inhibitory external background input must be even stronger to compensate for the excitatory feedforward and recurrent connectivity and reach a stable denoising regime.

Note that inhibitory external input is not in line with the excitatory nature of external inputs to local circuits in the brain and is therefore biologically implausible. One way to achieve denoising in excitation-dominated networks for excitatory background inputs would be to shift the dynamic range of the activation function (see Figure 8—figure supplement 1), which is, however, not consistent with the biophysical properties of real neurons (distance between threshold and rest as compared to typical strengths of postsynaptic potentials). In summary, we find that recurrent inhibition is crucial to achieve denoising in biologically plausible settings.

These results on the role of recurrence and external input can be transferred to the behavior of the spiking model. While details of the fixed point behavior depend on the specific choice of the activation function, Figure 8f, h shows that there is also no denoising regime for the spiking model in case of no or excitation-dominated recurrence and a biologically plausible level of external input. Instead, one finds high activity in both stimulated and non-stimulated sub-populations, as confirmed by network simulations (Figure 8g, i). Figure 8—figure supplement 2 further confirms that even reducing the external input to zero does not avoid this high-activity state in both stimulated and non-stimulated sub-populations for .

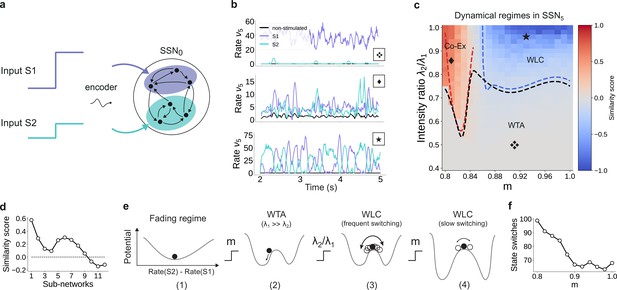

Input integration and multi-stability

The analysis considered in the sections above is restricted to a system driven with a single external stimulus. However, to adequately understand the system’s dynamics, we need to account for the fact that it can be concurrently driven by multiple input streams. If two simultaneously active stimuli drive the system (see illustration in Figure 9a), the qualitative behavior where the responses along the stimulated (non-stimulated) maps are enhanced (silenced) is retained if the strength of the two input channels is sufficiently different (Figure 9b, top panel). In this case, the weaker stimulus is not strong enough to drive the sub-population it stimulates toward the basin of attraction of the high-activity fixed point. Consequently, the sub-population driven by this second stimulus behaves as a non-stimulated sub-population and the system remains responsive to only one of the two inputs, acting as a WTA circuit. If, however, the ratio of stimulus intensities varies, two active sub-populations may co-exist (Figure 9b, center) and/or compete (bottom panel), depending also on the degree of topographic modularity.

For multiple input streams, topography may elicit a wide range of dynamical regimes.

(a) Two active input channels with corresponding stimulus intensities and , mapped onto non-overlapping sub-populations, drive the network simultaneously. Throughout this section, is fixed to the previous baseline value. (b) Mean firing rates of the two stimulated sub-populations (purple and cyan), as well as the non-stimulated sub-populations (black) for three different combinations of and ratios (as marked in (c)). (c) Correlation-based similarity score shows three distinct dynamical regimes in SSN5 when considering the firing rates of two, simultaneously stimulated sub-populations associated with S1 and S2, respectively: coexisting (Co-Ex, red area), winner-takes-all (WTA, gray), and winnerless competition (WLC, blue). Curves mark the boundaries between the different regimes (see Materials and methods). Activity for marked parameter combinations shown in (b). (d) Evolution of the similarity score with increasing network depth, for and input ratio of 0.86. For deep networks, the Co-Ex region vanishes and the system converges to either WLC or WTA dynamics. (e) Schematic showing the influence of modularity and input intensity on the system’s potential energy landscape (see Materials and methods): (1) in the fading regime there is a single low-activity fixed point (minimum in the potential); (2) increasing modularity creates two high-activity fixed points associated with S1 and S2, with the dynamics always converging to the same minimum due to ; (3) strengthening S2 balances the initial conditions, resulting in frequent, fluctuation-driven switching between the two states; (4) for larger values, switching speed decreases as the wells become deeper and the barrier between the wells wider. (f) Switching frequency between the dominating sub-populations in SSN5 decays with increasing modularity. Data computed over 10 s, for . 
Figure 9—figure supplement 1 and Figure 9—figure supplement 2 show the evolution of the Co-Ex region over 12 modules and the potential landscape, respectively.


Figure 9—source data 1

Code and data for Figure 9 and related figure supplements.

- https://cdn.elifesciences.org/articles/77009/elife-77009-fig9-data1-v2.zip

To quantify these variations in macroscopic behavior, we focus on the dynamics of SSN5 and measure the similarity (correlation coefficient) between the firing rates of the two stimulus-specific sub-populations as a function of modularity and ratio of input intensities (see Materials and methods and Figure 9c). In the case that both inputs have similar intensities but the feedforward projections are not sufficiently modular, both sub-populations are activated simultaneously (Co-Ex, red area in Figure 9c). This is the dynamical regime that dominates the earlier sub-networks. However, this is a transient state, and the Co-Ex region gradually shrinks with network depth until it vanishes completely after approximately 9–10 SSNs (see Figure 9d).

For low modularity, the system settles in the single stable state associated with near-zero firing rates, as illustrated schematically in the energy landscape in Figure 9e, (1) (see Materials and methods, Appendix B, and Supplementary Materials for derivations and numerical simulations). Above the critical modularity value, the system enters one of two different regimes. For and an input ratio below 0.7 (Figure 9c, gray area), one stimulus dominates (WTA) and the responses in the two populations are uncorrelated (Figure 9b, top panel). Although the potential landscape contains two minima corresponding to either population being active, the system always settles in the high-activity attractor state corresponding to the dominating input (Figure 9e, (2)).

If, however, the two inputs have comparable intensities and the topographic projections are sharp enough (), the system transitions into a different dynamical state where neither stimulus-specific sub-population can maintain an elevated firing rate for extended periods of time. In the extreme case of nearly identical intensities ( and high modularity, the responses become anti-correlated (Figure 9b, bottom panel), that is the activation of the two stimulus-specific sub-populations switches, as they engage in a dynamic behavior reminiscent of WLC between multiple neuronal groups (Lagzi and Rotter, 2015; Rost et al., 2018). The switching between the two states is driven by stochastic fluctuations (Figure 9e, (3)). The depth of the wells and width of barrier (distance between fixed points) increase with modularity (see Figure 9e, (4) and Figure 9—figure supplement 2), suggesting a greater difficulty in moving between the two attractors and consequently fewer state changes. Numerical simulations confirm this slowdown in switching (Figure 9f).
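The similarity score used to separate these regimes is a correlation coefficient between the firing rates of the two stimulated sub-populations. The sketch below computes such a score for three synthetic rate traces that loosely mimic the co-existing, winner-takes-all, and switching scenarios. The traces are hand-constructed illustrations and not output of the network model, and the exact score and regime boundaries used in the paper are defined in Materials and methods.

```python
import numpy as np

def similarity_score(rate_1, rate_2):
    """Pearson correlation coefficient between two firing-rate traces."""
    return float(np.corrcoef(rate_1, rate_2)[0, 1])

t = np.arange(1000)                      # time bins (arbitrary units)
wave = np.sin(2.0 * np.pi * t / 100.0)   # shared slow fluctuation
s1_dominant = (t // 100) % 2 == 0        # alternating dominance epochs

# Synthetic, hypothetical traces (spikes/s) for the two stimulated sub-populations.
coexisting = (20.0 + 5.0 * wave, 18.0 + 5.0 * wave)
winner_takes_all = (40.0 + 2.0 * wave, 1.0 + 0.5 * np.cos(2.0 * np.pi * t / 100.0))
switching = (np.where(s1_dominant, 40.0, 1.0), np.where(s1_dominant, 1.0, 40.0))

for name, (r1, r2) in [("Co-Ex", coexisting), ("WTA", winner_takes_all), ("WLC", switching)]:
    print(name, round(similarity_score(r1, r2), 3))
```

Jointly fluctuating rates give a score close to one, a dominant population next to a nearly silent one gives a score close to zero, and alternating dominance gives a strongly negative score.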

We wish to emphasize that the different dynamical states arise primarily from the feedforward connectivity profile. Nevertheless, even though the synaptic weights are not directly modified, varying the topographic modularity does translate to a modification of the effective connectivity weights (Figure 3b). The ratio of stimulus intensities also plays a role in determining the dynamics, but there is a (narrow) range (approximately between 0.75 and 0.8) for which all three regions can be reached by modifying the modularity alone. Together, these results demonstrate that topography can not only lead to spatial denoising but also enable various, functionally important network operating points.

Reconstruction and denoising of dynamical inputs

Until now, we have considered continuous but piecewise constant step signals, with each step lasting for a relatively long and fixed period of . This may give the impression that the denoising effects we report only work for static or slowly changing inputs, whereas naturalistic stimuli are continuously varying. Nevertheless, sensory perception across modalities relies on varying degrees of temporal and spatial discretization (VanRullen and Koch, 2003), with individual (sub-)features of the input encoded by specific (sub-)populations of neurons in the early stages of the sensory hierarchy. In this section, we will demonstrate that denoising is robust to the temporal properties of the input and its encoding, as we relax many of the assumptions made in previous sections.

We consider a sinusoidal input signal, which we discretize and map onto the network as depicted in Figure 10a. This approach is similar to previous work; for instance, it can mimic the movement of a light spot across the retina (Klos et al., 2018). By varying the sampling interval and number of channels , we can change the coarseness of the discretization from step-like signals to more continuous approximations of the input. If we choose a high sampling rate () and sufficient channels (), we can accurately encode even fast-changing signals (Figure 10b). Given that each input-driven SSN is inhibition-dominated and therefore close to the balanced state, the network exhibits a fast tracking property (van Vreeswijk and Sompolinsky, 1996) and can accurately represent and denoise the underlying continuous signal in the spiking activity (Figure 10c, top). This is also captured by the readout, with the tracking precision increasing with network depth (Figure 10c, bottom). In this condition, there is a performance gain of up to 50% in the noiseless case (Figure 10d, top) and similar values for varying levels of noise (Figure 10d, bottom).
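The temporal and spatial discretization of the input can be sketched as follows. This is a simplified illustration under stated assumptions, not the encoding code of the model: the function name `discretize`, the 1 ms time base, the signal period, and the hold duration of 20 steps are hypothetical, and the subsequent conversion to noisy input rates is omitted. Only the channel count of 40 is taken from the text.

```python
import numpy as np

def discretize(signal, n_channels, hold_steps):
    """Sample-and-hold a signal and bin each sample into one active channel.

    Returns a binary array of shape (n_channels, len(signal)) in which exactly
    one channel is active per time step. Assumes a non-constant signal.
    """
    signal = np.asarray(signal, dtype=float)
    steps = np.arange(signal.size)
    # Every sample is held for hold_steps time steps (the sampling interval).
    held = signal[(steps // hold_steps) * hold_steps]
    # Map the amplitude range linearly onto channel indices 0 .. n_channels - 1.
    lo, hi = signal.min(), signal.max()
    channel = ((held - lo) / (hi - lo) * n_channels).astype(int)
    channel = np.minimum(channel, n_channels - 1)  # the maximum falls in the last bin
    binary = np.zeros((n_channels, signal.size), dtype=int)
    binary[channel, steps] = 1
    return binary

t_ms = np.arange(2000)                           # assumed 1 ms resolution
sinusoid = np.sin(2.0 * np.pi * t_ms / 500.0)    # hypothetical 500 ms period
u = discretize(sinusoid, n_channels=40, hold_steps=20)
print(u.shape, u.sum(axis=0).min(), u.sum(axis=0).max())
```

Shorter hold durations and more channels give a finer approximation of the continuous signal, whereas long hold durations and few channels recover the step-like inputs considered in the earlier sections.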

Reconstruction of a dynamic, continuous input signal.

(a) Sketch of the encoding and mapping of a sinusoidal input onto the current-based network model. The signal is sampled at regular time intervals , with each sample binned into one of channels (which is then active for a duration of ). This yields a temporally and spatially discretized -dimensional binary signal , from which we obtain the final noisy input similar to the baseline network (see Figure 1 and Materials and methods). Unlike the one-to-one mapping in Figure 1, here we decouple the number of channels from that of topographic maps, (map size is unchanged, ). Because , the channels project to evenly spaced but overlapping sub-populations in SSN0, while the maps themselves overlap significantly. (b) Discretized signal and rate encoding for input , with and no noise (). (c) Top panel shows the spiking activity of 500 randomly chosen excitatory (blue) and inhibitory (red) neurons in SSN0, SSN2, and SSN5, for . Corresponding target signal (black) and readout output (red) are shown in bottom panel. (d) Relative gain in performance in SSN2 and SSN5 for (top). Color shade denotes network depth. Bottom panel shows relative gain in SSN5 for different levels of noise . (e–g) Same as (b–d), but for a slowly varying signal (sampled at ), and . Performance results are averaged across five trials. We used 20 s of data for training and 10 s for testing (activity sampled every 1 ms, irrespective of input discretization ).


Figure 10—source data 1

Code and data for Figure 10 and related figure supplements.

- https://cdn.elifesciences.org/articles/77009/elife-77009-fig10-data1-v2.zip

Note that due to the increased number of input channels (40 compared to 10) projecting to the same number of neurons in SSN0 as before , for the same the effective amount of noise each neuron receives is, on average, four times larger than in the baseline network. Moreover, the task was made more difficult by the significant overlap between the maps () as well as the resulting decrease in neuronal input selectivity. Nevertheless, similar results were obtained for slower and more coarsely sampled signals (Figure 10e–g).

We found comparable denoising dynamics for a large range of parameter combinations involving the map size, number of maps, number of channels, and signal complexity. Although there are limits with respect to the frequencies (and noise intensity) the network can track (see Figure 10—figure supplement 1), these findings indicate a very robust and flexible phenomenon for denoising spatially encoded sensory stimuli.

Discussion

The presence of stimulus- or feature-tuned sub-populations of neurons in primary sensory cortices (as well as in downstream areas) provides an efficient spatial encoding strategy (Pouget et al., 1999; Seriès et al., 2004; Tkacik et al., 2010) that ensures the relevant computable features are accurately represented. Here, we propose that beyond primary sensory areas, modular topographic projections play a key role in preserving accurate representations of sensory inputs across many processing modules. Acting as a structural scaffold for a sequential denoising mechanism, we show how they simultaneously enhance relevant stimulus features and remove noisy interference. We demonstrate this phenomenon in a variety of network models and provide a theoretical analysis that indicates its robustness and generality.

When reconstructing a spatially encoded input signal corrupted by noise in a network of sequentially connected populations, we find that a convergent structure in the feedforward projections is not only critical for successfully solving the task, but that the performance increases significantly with network depth beyond a certain modularity (Figure 1). Through this mechanism, the response selectivity of the stimulated sub-populations is sharpened within each subsequent sub-network, while others are silenced (Figure 2). Such wiring may support efficient and robust information transmission from the thalamus to deeper cortical centers, retaining faithful representations even in the presence of strong noise. We demonstrate that this holds for a variety of signals, from approximately static (stepwise) to smoothly and rapidly changing dynamic inputs (Figure 10). Thanks to the balance of excitation and inhibition, the network is able to track spatially encoded signals on very short timescales, and is flexible with respect to the level of spatial and temporal discretization. Accurate tracking and denoising require that the encoding is locally static/semi-stationary for only a few tens of milliseconds, which is roughly in line with psychophysics studies on the limits of sensory perception (Borghuis et al., 2019).

More generally, topographic modularity, in conjunction with other top-down processes (Kok et al., 2012), could provide the anatomical substrate for the implementation of a number of behaviorally relevant processes. For example, feedforward topographic projections on the visual pathway could contribute, together with various attentional control processes, to the widely observed pop-out effect in the later stages of the visual hierarchy (Brefczynski-Lewis et al., 2009; Itti et al., 1998). The pop-out effect, at its core, assumes that in a given context some neurons exhibit sharper selectivity to their preferred stimulus feature than the neighboring regions, which can be achieved through a winner-take-all (WTA) mechanism (see Figure 9 and Himberger et al., 2018).

The WTA behavior underlying the denoising is caused by a re-shaping of the E/I balance across the network (see Figure 3). As the excitatory feedforward projections become more focused, they modulate the system’s effective connectivity and thereby the gain on the stimulus-specific pathways, gating or allowing (and even enhancing) signal propagation. This change renders the stimulated pathway excitatory in the active regime (see Figure 7), leading to multiple fixed points such as those observed in networks with local recurrent excitation (Renart et al., 2007; Litwin-Kumar and Doiron, 2012). While the high-activity fixed point of such clustered networks is reached over time, in our model it unfolds progressively in space, across multiple populations. Importantly, in the range of biologically plausible numbers of cortical areas relevant for signal transmission (up to 10 for some visual stimuli, see Felleman and Van Essen, 1991; Hegdé and Felleman, 2007) and intermediate modularity, the firing rates remain within experimentally observed limits and do not saturate. The basic principle is similar to other approaches that alter the gain on specific pathways to facilitate stimulus propagation, for example through stronger synaptic weights (Vogels and Abbott, 2005), stronger nonlinearity (Toyoizumi, 2012), tuning of connectivity strength, and neuronal thresholds (Cayco-Gajic and Shea-Brown, 2013), via detailed balance of local excitation and inhibition (amplitude gating; Vogels and Abbott, 2009) or with additional subcortical structures (Cortes and van Vreeswijk, 2015). Additionally, our model also displays some activity characteristics reported previously, such as the response sharpening observed for synfire chains (Diesmann et al., 1999) or (almost) linear firing rate propagation (Kumar et al., 2010) (for intermediate modularity).

However, due to the reliance on increasing inhibitory activity at every stage, we speculate that denoising, as studied here, would not occur in such a system containing a single, shared inhibitory pool with homogeneous connectivity. In this case, inhibition would affect all excitatory populations uniformly, with stronger activity potentially preventing accurate stimulus transmission from the initial sub-networks. Nevertheless, this problem could be alleviated using a more realistic, localized spatial connectivity profile as in Kumar et al., 2008a, or by adding shadow pools (groups of inhibitory neurons) for each layer of the network, carefully wired in a recurrent or feedforward manner (Aviel et al., 2003; Aviel et al., 2005; Vogels and Abbott, 2009). In such networks with non-random or spatially dependent connectivity, structured (modular) topographic projections onto the inhibitory populations will likely be necessary to maintain stable dynamics and attain the appropriate inhibition-dominated regimes (Figure 3). Alternatively, these could be achieved through additional, targeted inputs from other areas (Figure 4), with feedforward inhibition known to provide a possible mechanism for context-dependent gating or selective enhancement of certain stimulus features (Ferrante et al., 2009; Roberts et al., 2013).

While our findings build on the above results, we here show that the experimentally observed topographic maps may serve as a structural denoising mechanism for sensory stimuli. In contrast to most works on signal propagation where noise mainly serves to stabilize the dynamics and is typically avoided in the input, here the system is driven by a continuous signal severely corrupted by noise. Taking a more functional approach, this input is reconstructed using linear combinations of the full network responses, rather than evaluating the correlation structure of the activity or relying on precise firing rates. Focusing on the modularity of such maps in recurrent spiking networks, our model also differs from previous studies exploring optimal connectivity profiles for minimizing information loss in purely feedforward networks (Renart and van Rossum, 2012; Zylberberg et al., 2017), also in the context of sequential denoising autoencoders (Kadmon and Sompolinsky, 2016) and stimulus classification (Babadi and Sompolinsky, 2014), which used simplified neuron models or shallow networks, made no distinction between excitatory and inhibitory connections, or relied on specific, trained connection patterns (e.g., chosen by the pseudo-inverse model). Although the bistability underlying denoising can, in principle, also be achieved in such feedforward networks or in networks without inhibition, our theoretical predictions and network simulations indicate that for biologically constrained circuits (i.e., where the background and long-range feedforward input is excitatory), inhibitory recurrence is indispensable for the spatial denoising studied here (see Section ‘Critical modularity for denoising’). Recurrent inhibition compensates for the feedforward and external excitation, generating competition between the topographic pathways and allowing the populations to rapidly track their input.

Moreover, our findings provide an explanation for how low-intensity stimuli (1–2 spks/sec above background activity, see Figure 2 and Supplementary Materials) could be amplified across the cortex despite significant noise corruption, and rely on a generic principle that persists across different network models (Figure 5) while also being robust to variations in the map size (Figure 6). We demonstrated the existence of both a lower and an upper (due to increased overlap) bound on their spatial extent for signal transmission, as well as an optimal region for which denoising was most pronounced. These results indicate a trade-off between modularity and map size, with larger maps sustaining stimulus propagation at lower modularity values, whereas smaller maps must compensate through increased topographic density (see Figure 6a and Supplementary Materials). In the case of smaller maps, progressively enlarging the receptive fields enhanced the denoising effect and improved task performance (Figure 6c), suggesting a functional benefit for the anatomically observed decrease in topographic specificity with hierarchical depth (Bednar and Wilson, 2016; Smith et al., 2001). One advantage of such a wiring could be spatial efficiency in the initial stages of the sensory hierarchy due to anatomical constraints, for instance the retina or the lateral geniculate nucleus. While we get a good qualitative description of how the spatial variation of topographic maps influences the system’s computational properties, the numerical values in general are not necessarily representative. Cortical maps are highly dynamic and exhibit more complex patterning, making (currently scarce) precise anatomical data a prerequisite for more detailed investigations. For instance, despite abundant information on the size of receptive fields (Smith et al., 2001; Liu et al., 2016; Keliris et al., 2019), there is relatively little data on the connectivity between neurons tuned to related or different stimulus features across distinct cortical circuits. Should such experiments become feasible in the future, our model provides a testable prediction: the projections must be denser (or stronger) between smaller maps to allow robust communication, whereas for larger maps fewer connections may be sufficient.

Finally, our model relates topographic connectivity to competition-based network dynamics. For two input signals of comparable intensities, moderately structured projections allow both representations to coexist in a decodable manner up to a certain network depth, whereas strongly modular connections elicit WLC-like behavior characterized by stochastic switching between the two stimuli (see Figure 9). Computation by switching is a functionally relevant principle (McCormick, 2005; Schittler Neves and Timme, 2012), which relies on fluctuation- or input-driven competition between different metastable (unstable) or stable attractor states. In the model studied here, modular topography induced multi-stability (uncertainty) in representations, alternating between two stable fixed points corresponding to the two input signals. Structured projections may thus partially explain the experimentally observed competition between multiple stimulus representations across the visual pathway (Li et al., 2016), and this is conceptually similar to an attractor-based model of perceptual bistability (Moreno-Bote et al., 2007). Moreover, this multi-stability across sub-networks can be ‘exploited’ at any stage by control signals, that is, additional (inhibitory) modulation could suppress one representation and amplify (bias) another.

Importantly, all these different dynamical regimes emerge progressively through the hierarchy and are not discernible in the initial modules. Previous studies reporting on similar dynamical states have usually considered either the synaptic weights as the main control parameter (Lagzi and Rotter, 2015; Lagzi et al., 2019; Vogels and Abbott, 2005) or studied specific architectures with clustered connectivity (Schaub et al., 2015; Litwin-Kumar and Doiron, 2012; Rost et al., 2018). Our findings suggest that in a hierarchical circuit a similar palette of behaviors can also be obtained given appropriate effective connectivity patterns modulated exclusively through modular topography. Although we used fixed projections throughout this study, these could also be learned and shaped continuously through various forms of synaptic plasticity (see e.g. Tomasello et al., 2018). To achieve such a variety of dynamics, cortical circuits most likely rely on a combination of all these mechanisms, that is, pre-wired modular connections (within and between distant modules) and heterogeneous gain adaptation through plasticity, along with more complex processes such as targeted inhibitory gating.

Overall, our results highlight a novel functional role for topographically structured projection pathways in constructing reliable representations from noisy sensory signals, and accurately routing them across the cortical circuitry despite the plethora of noise sources along each processing stage.

Materials and methods

Network architecture

We consider a feedforward network architecture where each sub-network (SSN) is a balanced random network (Brunel, 2000) composed of homogeneous LIF neurons, grouped into a population of excitatory and inhibitory units. Within each sub-network, neurons are connected randomly and sparsely, with a fixed number of local excitatory and local inhibitory inputs per neuron. The sub-networks are arranged sequentially, that is the excitatory neurons in project to both and populations in the subsequent sub-network (for an illustrative example, see Figure 1a). There are no inhibitory feedforward projections. Although projections between sub-networks have a specific, non-uniform structure (see next section), each neuron in receives the same total number of synapses from the previous SSN, .
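The local wiring rule described above (random, sparse connectivity with a fixed number of excitatory and inhibitory inputs per neuron) can be sketched in a few lines. This is only a minimal illustration: the function name, the population sizes, and the in-degrees are hypothetical placeholders rather than the values used in the model, and self-connections are not excluded here.

```python
import random

def fixed_indegree_sources(rng, n_targets, source_ids, k):
    """For each target neuron, draw k distinct presynaptic partners at random."""
    return [rng.sample(source_ids, k) for _ in range(n_targets)]

rng = random.Random(0)

# Hypothetical sizes and in-degrees, chosen only to keep the example small.
n_exc, n_inh = 80, 20
k_exc, k_inh = 8, 2
n_total = n_exc + n_inh

exc_ids = list(range(n_exc))            # excitatory neurons: 0 .. n_exc - 1
inh_ids = list(range(n_exc, n_total))   # inhibitory neurons: n_exc .. n_total - 1

# Every neuron (excitatory or inhibitory) receives the same number of
# local excitatory and local inhibitory inputs.
exc_inputs = fixed_indegree_sources(rng, n_total, exc_ids, k_exc)
inh_inputs = fixed_indegree_sources(rng, n_total, inh_ids, k_inh)
```

Each row of `exc_inputs` and `inh_inputs` lists the presynaptic partners of one neuron, so the in-degree is identical across neurons by construction while the identity of the partners is random.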

In addition, all neurons receive inputs from an external source representing stochastic background noise. For the first sub-network, we set , as it is commonly assumed that the number of background input synapses modeling local and distant cortical input is in the same range as the number of recurrent excitatory connections (see e.g. Brunel, 2000; Kumar et al., 2008b; Duarte and Morrison, 2014). To ensure that the total excitatory input to each neuron is consistent across the network, we scale by a factor of for the deeper SSNs and set , resulting in a ratio of 3:1 between the number of feedforward and background synapses.
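The synapse budget for the deeper SSNs can be illustrated with a short calculation. The sketch below assumes that the feedforward and background synapse counts of a deeper SSN sum to a fixed per-neuron total, and splits that total at the stated 3:1 ratio. The function name and the example total of 800 are hypothetical and serve only to make the arithmetic concrete.

```python
from fractions import Fraction

def split_synapse_budget(total, ff_to_bg_ratio=3):
    """Split a per-neuron excitatory synapse budget into feedforward and
    background parts so that feedforward : background = ff_to_bg_ratio : 1."""
    bg_fraction = Fraction(1, ff_to_bg_ratio + 1)  # 1/4 for a 3:1 ratio
    n_bg = int(total * bg_fraction)
    n_ff = total - n_bg
    return n_ff, n_bg

# Hypothetical total number of external excitatory inputs per neuron.
n_ff, n_bg = split_synapse_budget(800)
print(n_ff, n_bg)  # prints "600 200"
```

Under this assumption, a 3:1 ratio corresponds to scaling the background input to one quarter of the total, with the remaining three quarters supplied by the feedforward projections.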

Modular feedforward projections

Within each SSN, each neuron is assigned to one or more of sub-populations SP associated with a specific stimulus ( unless otherwise stated). This is illustrated in Figure 1a for . We choose these sub-populations so as to minimize their overlap within each , and control their effective size , through the scaling parameter . Depending on the size and number of sub-populations, it is possible that some neurons are not part of any or that some neurons belong to multiple such sub-populations (overlap).

Map size

In what follows, a topographic map refers to the sequence of sub-populations in the different sub-networks associated with the same stimulus. To enable a flexible manipulation of the map sizes, we constrain the scaling factor by introducing a step-wise linear increment , such that . Unless otherwise stated, we set and . Note that all SPs within a given SSN have the same size. In this study, we will only explore values in the range to ensure consistent map sizes across the system, that is, for all (see constraints in Appendix A).
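A step-wise linear increment of the scaling factor can be sketched as follows. The specific form used here (an initial value plus a constant increment per sub-network), the symbol names `d0` and `delta`, the example values, and the admissible interval (0, 1] are all assumptions made for illustration and are not taken from the model definition.

```python
def scaling_factors(d0, delta, n_subnetworks):
    """Scaling factor per sub-network under an assumed linear rule:
    d_i = d0 + i * delta, for i = 0 .. n_subnetworks - 1."""
    return [d0 + i * delta for i in range(n_subnetworks)]

def within_bounds(factors, lower=0.0, upper=1.0):
    """Check that every scaling factor lies in the assumed range (lower, upper]."""
    return all(lower < d <= upper for d in factors)

# Hypothetical example: maps that grow with depth across six sub-networks.
factors = scaling_factors(d0=0.1, delta=0.05, n_subnetworks=6)
valid = within_bounds(factors)
```

With `delta = 0` the maps have the same size in every sub-network, a positive `delta` enlarges them with depth, and the bounds check mirrors the requirement that the explored values keep the map sizes consistent across the system.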

Modularity