A saturation hypothesis to explain both enhanced and impaired learning with enhanced plasticity

Abstract

Across many studies, animals with enhanced synaptic plasticity exhibit either enhanced or impaired learning, raising a conceptual puzzle: how enhanced plasticity can yield opposite learning outcomes? Here, we show that the recent history of experience can determine whether mice with enhanced plasticity exhibit enhanced or impaired learning in response to the same training. Mice with enhanced cerebellar LTD, due to double knockout (DKO) of MHCI H2-Kb/H2-Db (KbDb−/−), exhibited oculomotor learning deficits. However, the same mice exhibited enhanced learning after appropriate pre-training. Theoretical analysis revealed that synapses with history-dependent learning rules could recapitulate the data, and suggested that saturation may be a key factor limiting the ability of enhanced plasticity to enhance learning. Optogenetic stimulation designed to saturate LTD produced the same impairment in WT as observed in DKO mice. Overall, our results suggest that the recent history of activity and the threshold for synaptic plasticity conspire to effect divergent learning outcomes.

https://doi.org/10.7554/eLife.20147.001eLife digest

All animals can learn from their experiences. One of the main ideas for how learning occurs is that it involves changes in the strength of the connections between neurons, known as synapses. The ability of synapses to become stronger or weaker is referred to as synaptic plasticity. High levels of synaptic plasticity are generally thought to be good for learning, while low levels of synaptic plasticity make learning more difficult.

Nevertheless, studies have also reported that high levels of synaptic plasticity can sometimes impair learning. To explain these mixed results, Nguyen-Vu, Zhao, Lahiri et al. studied mice that had been genetically modified to show greater synaptic plasticity than normal mice. The same individual mutant animals were sometimes less able to learn an eye-movement task than unmodified mice, and at other times better able to learn exactly the same task. The main factor that determined how well the mice could learn was what the mice had experienced shortly before they began the training.

Nguyen-Vu et al. propose that some experiences change the strength of synapses so much that they temporarily prevent those synapses from undergoing any further changes. Animals with these “saturated” synapses will struggle to learn a new task, even if their brains are normally capable of high levels of synaptic plasticity. Notably, even normal activity appears to be able to put the synapses of the mutant mice into a saturated state, whereas this saturation would only occur in normal mice under a restricted set of circumstances. Consistent with this idea, Nguyen-Vu et al. showed that a specific type of pre-training that desaturates synapses improved the ability of the modified mice to learn the eye-movement task. Conversely, a different procedure that is known to saturate synapses impaired the learning ability of the unmodified mice.

A future challenge is to test these predictions experimentally by measuring changes in synaptic plasticity directly, both in brain slices and in living animals. The results could ultimately help to develop treatments that improve the ability to learn and so could provide benefits to a wide range of individuals, including people who have suffered a brain injury or stroke.

https://doi.org/10.7554/eLife.20147.002Introduction

The prospect that learning might be enhanced by enhancing synaptic plasticity has captured the imagination of many, fueled by reports of super cognition in transgenic mice with enhanced synaptic plasticity (McConnell et al. 2009; Tang et al., 1999; Lee and Silva, 2009; Huh et al., 2000; Ito, 2002). Synaptic plasticity is a fundamental property of neural circuits, hence its enhancement has the potential to enhance a wide range of brain functions, and benefit a wide range of patients. It could accelerate the recovery of sensory, motor, or cognitive function after a stroke or other injury; counter the decline of learning and memory in the elderly; or be used in conjunction with behavioral therapy to enhance drug rehabilitation, treatment for post-traumatic stress disorder, speech therapy, or other kinds of rehabilitation. Rapid progress in our understanding of plasticity at the synaptic level is providing targets for drug development and other molecular strategies for enhancing synaptic plasticity (Lee and Silva, 2009). However, optimism for this approach has been tempered by the observation that the enhancement of synaptic plasticity can, in some cases, impair learning (Migaud et al., 1998; Uetani et al., 2000; Hayashi et al., 2004; Cox et al., 2003). The finding that manipulations to enhance synaptic plasticity can either enhance or impair learning has been reported for different brain regions, and for both associative LTP and LTD (Migaud et al., 1998; Uetani et al., 2000; Hayashi et al., 2004; Cox et al., 2003; Takeuchi et al., 2008; Koekkoek et al., 2005; McConnell et al. 2009). Yet, there have been no experimental tests of why enhanced synaptic plasticity can have these opposite effects at the behavioral level. Moreover, despite extensive theoretical study of how enhanced plasticity can impair memory (the plasticity-stability dilemma; Toulouse et al., 1986; Carpenter and Grossberg, 1987; Amit and Fusi, 1994; Amit and Fusi, 1992; Gerrow and Triller, 2010; Frey and Morris, 1997; Reymann and Frey, 2007; Clopath et al., 2008; Barrett et al., 2009; Redondo and Morris, 2011), there has been little theoretical treatment of how enhanced plasticity could impair learning. Hence, the principles governing the learning outcome under conditions of enhanced plasticity have remained elusive, as have the principles for promoting enhanced learning. This fundamental gap in our understanding of how enhanced synaptic plasticity functions in the context of an intact neural circuit is limiting the application of synaptic plasticity enhancers in patients who could potentially benefit from this approach. We combined experiment and theory to address this conceptual gap.

We measured learning in mice deficient in molecules of the class-I major histocompatibility molecule (MHCI) complex, which have enhanced synaptic plasticity in multiple brain regions, including the cortex, hippocampus, thalamus, and cerebellum (Huh et al., 2000; Syken et al., 2006; McConnell et al. 2009; Lee et al., 2014). We focused on the cerebellum to take advantage of the known links between synaptic plasticity and cerebellum-dependent oculomotor learning. In the cerebellum, classical MHCI H2-Kb (H2-Kb) and MHCI H2-Db (H2-Db) are highly expressed in Purkinje cells, and double-knockout mice, MHCI H2-Kb/H2-Db−/− (KbDb−/−; Vugmeyster et al., 1998; Schott et al., 2003; referred to as double knockout (DKO) here), exhibit enhanced associative LTD at the parallel fiber-Purkinje cell synapses (pf-Pk LTD) (McConnell et al. 2009). For many years, pf-Pk LTD was widely considered to be the mechanism of all cerebellum-dependent learning (Ito, 2002); however, recent evidence from animals with disrupted pf-Pk LTD suggests it contributes selectively to certain forms of cerebellum-dependent learning and not others (Boyden et al., 2006; Hansel et al., 2006; Titley et al., 2010; Schonewille et al., 2011; Aiba et al., 1994; Shibuki et al., 1996; Endo et al., 2009; Feil et al., 2003; Lee et al., 2009; Li et al., 1995; Miyata et al., 2001). We leveraged a set of closely related oculomotor learning tasks with different dependence on pf-Pk LTD to analyze how enhanced pf-Pk LTD functions in an intact circuit.

Results

Impaired motor learning in mice with enhanced cerebellar LTD

We tested the ability of DKO mice to adaptively modify their vestibulo-ocular reflex (VOR). The VOR is an eye movement response to a vestibular stimulus, which functions to stabilize images on the retina during head motion. In wild type mice, motor learning can adaptively increase or decrease the amplitude of the VOR to improve image stabilization. Previous work has suggested that LTD contributes selectively to VOR learning when training to increase the amplitude of the VOR is done using high-frequency (≥1 Hz) visual-vestibular stimuli, and much less so, if at all, when VOR learning is tested with other training paradigms (Boyden et al., 2006; Hansel et al., 2006; Titley et al., 2010; Schonewille et al., 2011; Aiba et al., 1994; Shibuki et al., 1996; Endo et al., 2009; Feil et al., 2003; Lee et al., 2009; Li et al., 1995; Miyata et al., 2001). We found that mice with enhanced pf-Pk LTD exhibited the same, specific VOR learning deficit. DKO mice were significantly impaired in learning to increase the amplitude of the VOR when training was done using 1 Hz visual-vestibular stimuli (Figure 1D, solid bars; Figure 1—source data 1). However, as previously reported in LTD-impaired mice (Boyden et al., 2006; Schonewille et al., 2011), there was no significant impairment of learning to increase the VOR when training was done with lower frequency visual-vestibular stimuli of 0.6 Hz (Figure 1—figure supplement 1, top), or when learning to decrease the VOR at either training frequency (Figure 1E, solid bars, Figure 1—figure supplement 1, bottom). Baseline performance of the VOR and visually-driven oculomotor behaviors were indistinguishable between DKO and WT mice (Figure 1—figure supplements 2–3), suggesting an impairment of the learning mechanism itself, rather than other sensory or motor deficits.

Rescue of H2-Db expression in adult Purkinje cells rescues learning impairment in DKO mice with enhanced cerebellar plasticity.

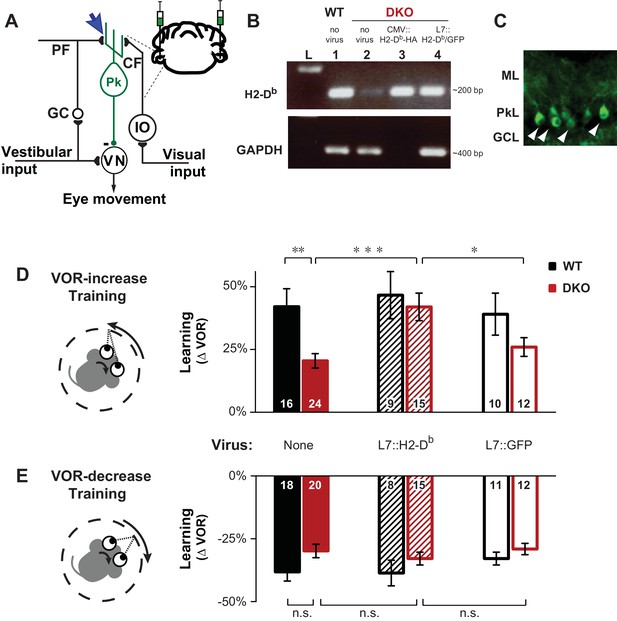

(A) Circuit for VOR learning. Vestibular input drives eye movements via a direct pathway through the vestibular nuclei (VN), and a side-loop through the granule cells (GC), parallel fibers (PF), and Purkinje cells (Pk) of the cerebellar flocculus. The climbing fiber (CF) input to the Purkinje cells from the inferior olive (IO) carries visual signals, and can trigger LTD in the parallel fiber-to-Purkinje cell synapses (blue arrow), which is enhanced in mice deficient in the Class-I major histocompatibility molecules H2-Kb and H2-Db (KbDb−/−; referred to as double knockout, DKO). A lentiviral construct expressing H2-Db under the control of the Purkinje cell-specific L7 promoter was injected bilaterally into the flocculi of adult mice (see Materials and methods for details). (B) RT-PCR confirmed the presence of H2-Db mRNA in the cerebellar flocculus of DKO mice injected with the L7::H2-Db-T2A-GFP virus (lane 4). Lane 1: Positive control, WT (thalamus); Lane 2: Negative control, DKO (spleen); Lane 3: DKO (flocculus) infected with CMV::H2-Db-HA; Lane 4: DKO (flocculus) infected with L7::H2-Db-T2A-GFP. Ladder in the left lane. (Lane 3, CMV::H2-Db-HA, is a positive control for detection of H2-Db expression in cerebellum, but because it was not restricted to Purkinje cells, it was not used further in this study; GAPDH was not loaded; full details in Materials and methods). (C) Floccular Purkinje cells of DKO mice infected with L7::H2-Db-T2A-copGFP virus (white arrowheads) and stained with anti-copGFP immunohistochemistry. Molecular layer (ML), Purkinje cell layer (PkL), Granule cell layer (GCL). (D) Training to increase the VOR. Left, A vestibular stimulus was paired with oppositely directed visual stimulus motion. Right, DKO mice (solid red) were impaired on VOR-increase learning compared to wild type mice (WT; solid black) (**p=0.004, t(38) = 3.08). Virally-mediated expression of H2-Db in Purkinje cells of the adult cerebellar flocculi (L7::H2–Db) rescued the learning deficit in DKO mice (hatched red; ***p=0.0005, t(37) = 3.81 vs DKO without virus, solid red), so that they learned as well as WT mice injected with the same virus (hatched black; n.s. p=0.65, t(22) = 0.46) and better than DKO mice that received virus expressing only GFP (L7::GFP, open red, *p=0.03, t(25) = 2.290). Virally-mediated expression of H2-Db had no significant effect on learning in the WT mice (hatched vs. solid black, n.s. p=0.70, t(23) = 0.39), and expression of GFP had no effect in DKO mice (open vs solid red; n.s. p=0.26, t(34) = 1.13) or WT mice (open vs. solid black; n.s. p=0.79, t(24) = 0.26). Mean ± s.e.m. In this and all figures, numbers in bars indicate n = number of animals. (E) Training to decrease the VOR. Left, A vestibular stimulus was paired with a visual stimulus that moved with the head. Right, VOR-decrease learning in DKO mice (solid red) was not significantly different from WT (solid black) (n.s. p=0.08, t(36) = 1.86). Expression of H2-Db had no significant effect on VOR-decrease learning in DKO mice (hatched vs. solid red; n.s. p=0.43, t(33) = 0.79), and was not different from mice that received control virus expressing only GFP (hatched vs. open red; n.s. p=0.29, t(25) = 1.08). Mean ± s.e.m.

-

Figure 1—source data 1

Rescue of H2-Db expression in adult Purkinje cells rescue learning impairment in DKO mice with enhanced cerebellar plasticity.

Data show VOR learning, after thirty minutes of either VOR-increase training or VOR-decrease training, for both DKO and WT mice in three different conditions (without virus, with L7::H2-Db virus, or control L7::GFP virus). The eye movement response to the vestibular stimulus alone, i.e. the VOR, was measured in total darkness, and learning was calculated as the percentage change in the VOR gain after training relative the baseline VOR gain measured before training. Positive values indicate increase VOR learning and negative values indicate decrease VOR learning corresponding to the respective training stimuli. Each number corresponds to the learning in an individual animal.

- https://doi.org/10.7554/eLife.20147.004

The impaired learning phenotype in DKO mice could be attributed to the loss of H2-Db expression in Purkinje cells, the post-synaptic site of pf-Pk LTD. Although H2-Kb and H2-Db expression is not exclusive to the cerebellar Purkinje cells, virally-mediated rescue of H2-Db expression specifically in Purkinje cells of the flocculus (Figure 1A–C), the cerebellar region necessary for VOR learning, of adult global DKO mice, was sufficient to rescue their impaired VOR-increase learning (Figure 1D, hatched bars). Thus, rescue of H2-Db expression in adult neurons can restore normal function, even in animals that developed in the absence of this molecule, indicating that the role of MHCI molecules is not confined to the developing nervous system, but actively regulates plasticity in adults as well. Expression of H2-Db had no effect on VOR-decrease learning (Figure 1E, hatched bars), which is insensitive to perturbations of pf-Pk LTD (Boyden et al., 2006; Hansel et al., 2006; Schonewille et al., 2011).

Climbing fiber stimulation in WT mice recapitulates the DKO phenotype

The similarity of the learning deficit in mice with enhanced pf-Pk LTD to that previously reported in mice with impaired pf-Pk LTD (Boyden et al., 2006) suggested the possibility of a similar underlying cause. We hypothesized that in both cases, the behavioral deficit could reflect the unavailability of pf-Pk LTD during training. In particular, the lower induction threshold for pf-Pk LTD in the DKO mice (McConnell et al. 2009) could allow normal basal activity in the circuit to aberrantly recruit LTD and deplete the pool of LTD-eligible synapses. Thus, the capacity for LTD could be exhausted, i.e., saturated, even before the start of training, rendering the circuit unable to support new learning that depends on pf-Pk LTD (Figure 2A).

Elevated LTD before training impairs LTD-dependent learning.

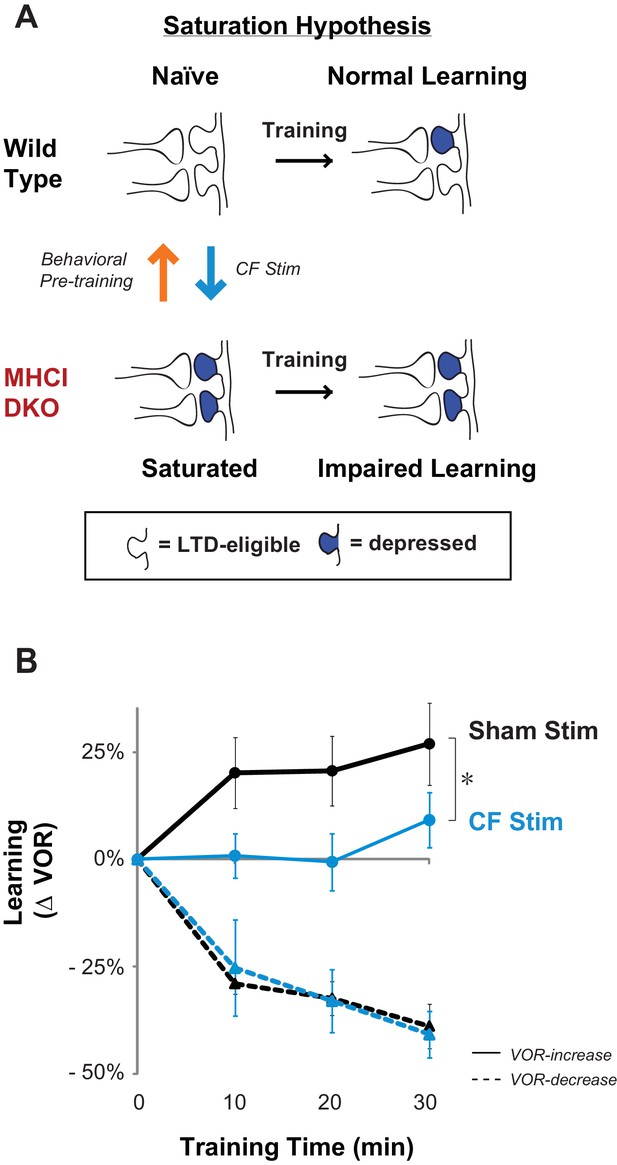

(A) Saturation hypothesis to explain impaired learning with enhanced synaptic plasticity. Top, In naïve WT mice, at the start of training, synapses are presumably available (white synaptic spines) to selectively undergo associative synaptic plasticity (long-term depression, LTD; blue spines) during training, thereby supporting normal learning. Bottom, In DKO mice, the lower induction threshold for LTD could enable spontaneous activity in the circuit to aberrantly recruit LTD at a random subset of spontaneously active synapses before training, thereby depleting the pool of synapses eligible to undergo LTD, and preventing normal learning. Behavioral pre-training (orange arrow) restores the capacity for LTD-dependent learning in the DKO mice (Figure 3). We tested whether LTD saturation and impairment of LTD-dependent learning can be induced in WT mice with climbing fiber stimulation (cyan arrow; Figure 2B). (B) Climbing fiber stimulation in WT mice before VOR training recapitulates the learning impairment in the DKO mice. Optogenetic stimulation of climbing fibers for 30 min, to induce pf-Pk LTD in the flocculus of WT mice, blocked subsequent VOR-increase learning (solid cyan trace; *p=0.03, F(1,10) = 5.912, two-factor repeated measures ANOVA, CF stim n = 6, Sham n = 6) but had no effect on VOR-decrease learning (dashed cyan trace; n.s. p=0.68, F(1,5) = 0.20) relative to sham stimulation controls in animals that did not express ChR2 in the climbing fibers (black). Mean ± s.e.m.

-

Figure 2—source data 1

Elevated LTD before training impairs LTD-dependent learning.

Climbing fiber stimulation in WT mice before VOR training recapitulates the learning impairment in the DKO mice. Data show time course of VOR learning in WT mice during 30 min of normal visual-vestibular VOR training following 30 min of optical stimulation to either optogenetically stimulate climbing fibers (CFs) or provide sham controls (light only in WT without ChR2 expressed in the CFs). Learning was calculated as the percentage change in VOR gain measured after each 10 min block of visual-vestibular training relative to the baseline VOR gain measured immediately before visual-vestibular training. Positive values indicate increase VOR learning and negative values indicate decrease VOR learning corresponding to the respective training stimuli. Each row of 4 numbers within the condition columns corresponds to the time course of learning in an individual animal.

- https://doi.org/10.7554/eLife.20147.009

To assess whether the saturation of LTD could produce a motor learning phenotype like that observed in the DKO mice, we conducted stimulation experiments. In other brain areas, direct stimulation of the relevant circuits to induce and saturate plasticity has been shown to occlude or impair subsequent learning. We used a similar approach to test whether saturation of LTD can produce the selective impairment of high-frequency VOR-increase learning observed in the DKO mice. Climbing-fiber stimulation is known to induce LTD in simultaneously active pf-Pk synapses (Crepel and Jaillard, 1991; Ekerot and Kano, 1985; Ito and Kano, 1982). Therefore, we opotogenetically stimulated the climbing fiber input to the cerebellar flocculus to elevate the level of pf-Pk LTD in WT mice prior to VOR training (Figure 2A, cyan arrow).

Climbing fibers were optogenetically stimulated for 30 min (250 ms trains of three 2 ms light pulses, repeated every 1 s) while the mouse was head-restrained in the dark without visual or vestibular stimuli. During normal VOR learning, climbing fiber activation is thought to induce LTD selectively in those pf-Pk synapses activated by the visual and vestibular stimuli used to induce learning. In contrast, optogenetic climbing fiber stimulation delivered in the absence of such stimuli should induce LTD randomly in spontaneously active pf-Pk synapses. This non-specific LTD-induction procedure did not affect the amplitude of the VOR, measured after climbing fiber stimulation (Figure 2—figure supplement 1B). This is consistent with the normal baseline VOR amplitude in DKO mice (Figure 1—figure supplement 2A), and in wild type mice after lesions of the flocculus (Rambold et al., 2002; Koekkoek et al., 1997; Katoh et al., 2005). Together, these observations indicate that non-specific manipulations of the flocculus are not sufficient to have a coordinated effect on the VOR behavior, and that LTD only increases the amplitude of the VOR if it is induced selectively in the appropriate subset of pf-Pk synapses. Nevertheless, if non-specific LTD depleted the pool of LTD-eligible synapses, it could impair subsequent LTD-dependent learning (Figure 2—figure supplement 2). Accordingly, VOR-increase learning was impaired after climbing fiber stimulation (Figure 2B, CF Stim, cyan vs. Sham Stim, black; Figure 2—source data 1). Sham stimulation controls exhibited VOR-increase learning, confirming that disrupted VOR-increase learning was specific to stimulation of climbing fibers, rather than reflecting nonspecific, optical or mechanical perturbation of the circuit. Notably, climbing fiber stimulation before training did not perturb subsequent VOR-decrease learning, which also relies on the cerebellar flocculus (Koekkoek et al., 1997; Rambold et al., 2002) but is insensitive to disruptions of pf-Pk LTD (Boyden et al., 2006) (Figure 2B, dashed traces). The specificity of the learning impairment in WT mice after climbing fiber stimulation indicates that elevated levels of pf-Pk LTD prior to training can produce a phenotype like that observed in the DKO mice (Figure 1D).

Behavioral pre-training converts impaired learning of DKO mice to enhanced learning

If elevated pf-Pk LTD prior to training is contributing to the learning impairment in the DKO mice, then any procedure that reverses pf-Pk LTD might reset the synapses to a state more capable of supporting LTD-dependent learning. Pf-Pk LTD can be actively reversed by post-synaptic LTP of the same synapses (Lev-Ram et al., 2003), providing a cellular mechanism for reversing LTD saturation. Moreover, behavioral VOR-decrease training has been shown to rapidly reverse any evidence of prior VOR-increase learning (Boyden and Raymond, 2003), suggesting that it rapidly reverses any pf-Pk LTD or other plasticity induced during VOR-increase learning. Therefore, we tested whether VOR-decrease training could put the VOR circuit of DKO mice into a state more capable of supporting LTD-dependent VOR-increase training.

Mice were given thirty minutes of VOR-decrease pre-training immediately before VOR-increase training. In the DKO mice, this pre-training significantly enhanced subsequent VOR-increase learning relative to DKO mice that did not receive the pre-training (Figure 3A, compare red traces and bars in middle vs. left panels; Figure 3—source data 1). Notably, pre-training had the opposite effect on WT mice, impairing subsequent VOR-increase learning (Figure 3A, compare black traces in middle vs. left panels). Since the pre-training enhanced learning in the DKO mice, but impaired learning in the WT mice, the DKO mice learned better than the WT after pre-training. Hence, the pre-training not only reversed the learning impairment, but also revealed a capacity for enhanced learning in the DKO mice relative to WT mice (Figure 3A, compare black vs. red traces in middle panel).

Behavioral pre-training reveals enhanced learning in mice with enhanced LTD.

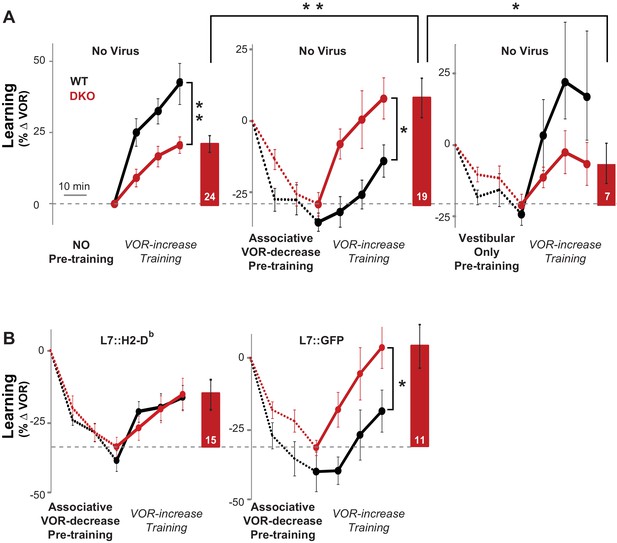

(A) The same VOR-increase training procedure induced dramatically different learning outcomes in the DKO mice with different pre-training procedures (p=0.01, F = 5.153, ANOVA). Left, Without pre-training, DKO mice with enhanced pf-Pk LTD were impaired on VOR-increase learning (**p=0.002, F(1,38) = 11.08, two-factor repeated measures ANOVA; WT n = 16,. DKO n = 24,). Middle, Pre-training with an associative VOR-decrease paradigm that was not significantly different between the genotypes (dotted lines, p=0.19, F(1,29) = 1.79; WT n = 12, DKO n = 19) reversed the learning impairment in DKO mice (red) so that they learned more than WT (black) during subsequent VOR-increase training (*p=0.02, F(1,29) = 5.95; WT n = 12, DKO n = 19). Right, Pre-training with a vestibular stimulus alone decreased the VOR gain comparably between the two genotypes (dotted line, p=0.30, F(1,17) = 1.25; WT n = 6, DKO n = 7), but there was no improvement of subsequent VOR-increase learning in the DKO mice relative to WT mice (p=0.13, F(1,11) = 2.70; WT n = 6, DKO n = 7). In DKO mice, VOR-increase learning was better after associative VOR-decrease pre-training compared with no pre-training (**p=0.005, Fischer’s LSD) or vestibular-only pre-training (*p=0.03) (compare red bar graphs and learning curves). In contrast, in WT mice, VOR-increase learning was worse after associative VOR-decrease pre-training compared with no pre-training (*p=0.037, Fischer’s LSD) or vestibular only pre-training (*p=0.049) (compare black learning curves). Learning is plotted on the same scale in each plot, and aligned on the values at the start of VOR-increase training for DKO mice. Mean ± s.e.m. (B) Virally-mediated rescue of H2-Db expression in floccular Purkinje cells (L7::H2-Db, left) eliminated the enhanced VOR-increase learning in DKO mice after associative VOR-decrease pre-training (compare with middle panel of A), so that learning was indistinguishable from WT mice injected with the same virus (VOR-increase learning, p=0.98, F(1,22) = 0.0004; VOR-decrease pre-training, p=0.53, F(1,22) = 0.40; two-factor repeated measure ANOVA; WT n = 9; DKO n = 15). The enhanced VOR-increase learning phenotype was present in DKO mice that received control virus expressing only GFP (L7::GFP, right, p=0.05, F(1,18) = 4.29; WT n = 9, DKO n = 11) although the VOR-decrease pre-training itself was not significantly different between the two genotypes (p=0.20, F(1,18) = 1.75; WT n = 9, DKO n = 11). Mean ± s.e.m.

-

Figure 3—source data 1

Behavioral pre-training reveals enhanced learning in mice with enhanced LTD.

The same VOR-increase training procedure induced dramatically different learning outcomes in the DKO mice with different pre-training procedures. Data show time course of VOR learning in WT and DKO mice during 30 min of different pre-training conditions (no pre-training, VOR-decrease pre-training, and vestibular only pre-training) followed by 30 min of normal VOR-increase training. Data are separated by pre-training condition and by whether mice received virus for rescue expression of H2-Db in Purkinje cells (without virus, with L7::H2-Db virus, or control L7::GFP virus). Learning was calculated as the percentage change in the VOR gain after each block of 10 min training relative the baseline VOR gain measured before any training occurred. Negative values indicate decrease VOR learning and positive values indicate increase VOR learning. However, in this case with pre-training, a reduction in negative values also indicates increase VOR learning. Each row of numbers within the condition columns corresponds to the time course of learning in an individual animal.

- https://doi.org/10.7554/eLife.20147.013

The enhanced learning in the DKO mice relative to WT mice after pre-training could not be explained by differences in the efficacy of VOR-decrease learning (Figure 3—figure supplement 1), nor could it be explained by more rapid forgetting of the effects of pre-training in the DKO mice, because VOR-decrease learning was retained normally (Figure 3—figure supplement 2). Also, the enhanced learning was unmasked specifically by pre-training with the associative VOR-decrease training paradigm, which paired visual and vestibular stimuli. Simply decreasing the VOR amplitude with a non-associative, habituation paradigm, which presented the vestibular stimulus alone, was not sufficient to unmask the enhanced learning (Figure 3A, right, Vestibular Only Pre-training). Thus, only the appropriate pre-training experience can put the circuit of the DKO mice into a state that enables their enhanced synaptic plasticity to support enhanced learning (Figure 2A, orange arrow).

The enhanced learning phenotype of the DKO mice had features in common with the impaired learning phenotype in these mice. First, the enhanced learning phenotype, like the impaired learning phenotype (Figure 1D, solid bars, Figure 1—figure supplement 1, top), was only observed when training was done using high-frequency (1.0 Hz) visual-vestibular stimuli (Figure 3A, middle panel), but not a slightly lower stimulus frequency of 0.6 Hz (Figure 3—figure supplement 3). In addition, the enhanced learning phenotype, like the impaired learning phenotype in the DKO mice, reverted to WT phenotype after virally-mediated expression of H2-Db in the Purkinje cells of adult DKO mice (compare Figure 3B, left panel, with Figure 3A, middle panel, and Figure 1D, hatched bars). The commonality of these features suggests that both the enhanced and impaired learning phenotypes of DKO mice share a common mechanism, involving the same set of synapses.

Modeling predicts strong saturation of LTD and difficult to reverse synaptic states

The experimental results above raise the possibility that the learning impairment of the DKO mice relative to WT mice could result from the saturation of LTD. We conducted computational modeling studies to further assess the plausibility of this hypothesis, and, more generally, to develop an understanding of the properties of synaptic plasticity that can support both enhanced and impaired learning, depending on recent experience. First, we examined how the competition between two opposing factors determines the learning outcome. One factor is the enhanced intrinsic propensity for synapses to undergo LTD, which alone would enhance learning. The other factor is the depletion of the number of synapses eligible for LTD, which alone would impair learning. Second, we characterized the properties of synaptic models that could reproduce the opposite effects of behavioral pre-training, namely impairing learning in WT mice, and enhancing learning in DKO mice. The modeling generates predictions about the essential features of synapses that could support both the impaired and enhanced learning outcomes we observed empirically. Moreover, it provides general insights about how the prior history of activity in the circuit interacts with the threshold for plasticity to determine whether learning is impaired or enhanced.

We adopted a theoretical framework (Fusi et al., 2005; Fusi and Abbott, 2007a; Lahiri and Ganguli, 2013) previously used to study memory, to compare different synaptic models. Each model incorporated three key experimental findings: (1) the selective contribution of pf-Pk LTD to VOR-increase and not VOR-decrease learning (Boyden et al., 2006); (2) the observation that pf-Pk LTD is easier to induce in the DKO mice (i.e., the ‘lower threshold’ for LTD induction McConnell et al. 2009); and (3) the ability of VOR-decrease training to reverse the effects of VOR-increase training (Boyden and Raymond, 2003).

The contribution of pf-Pk LTD to VOR-increase learning was modeled by using the rate at which synapses transitioned to a depressed state during training as the measure of VOR-increase learning (Ito, 1972). Training to increase the VOR was modeled by increasing the rate of LTD ‘events’, which can be thought of as the rate at which patterns of neural activity occur with the potential to induce LTD (see Appendix for details). The lower threshold for pf-Pk LTD in the DKO mice was modeled as an increase in the probability that an LTD ‘event’ (i.e., near-coincident parallel fiber and climbing fiber activation) will actually induce LTD, which we will refer to as the ‘intrinsic’ LTD rate.

In vitro, Pf-Pk LTD can be actively reversed by post-synaptic LTP of the same synapses (Lev-Ram et al., 2003). Therefore, the ability of VOR-decrease training to reverse the effects of VOR-increase training was modeled by increasing the rate of LTP events at the pf-Pk synapses during VOR-decrease training (Boyden and Raymond, 2003). However, we note that the mechanism of VOR-decrease learning is not currently known. Moreover, known asymmetries in VOR-increase and VOR-decrease learning (Boyden and Raymond, 2003; Kimpo et al., 2005) suggest that the mechanism of VOR decrease learning is not simply pf-Pk LTP. Therefore, we do not attempt to model the decrease in VOR gain itself, but to merely capture the effects of VOR-decrease training on the pf-Pk synapses, and, more specifically, its effect on the availability of pf-Pk synapses to undergo LTD during VOR-increase learning.

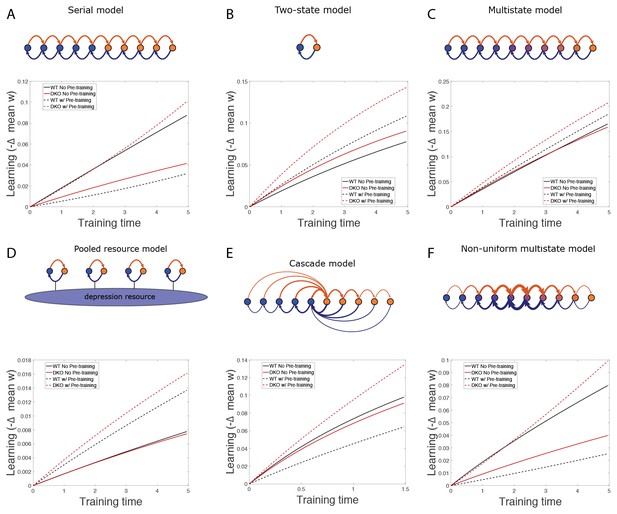

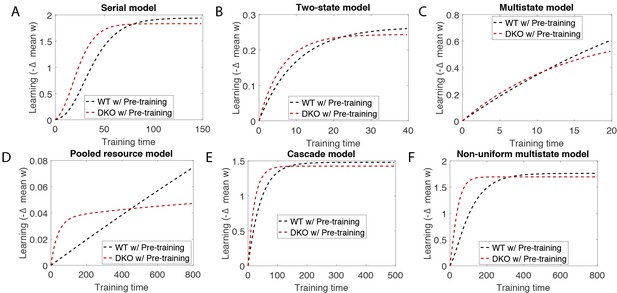

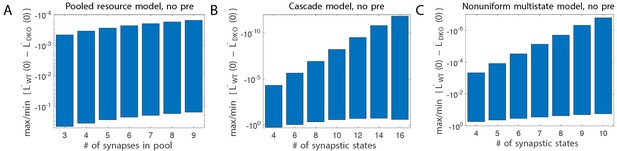

We implemented synaptic models with different numbers of potentiated and depressed states and different probabilities of transitioning between states (Montgomery and Madison, 2002; Petersen et al., 1998), and compared their ability to reproduce our empirical observations of VOR learning in wild type and DKO mice (Figure 4A). Specific synaptic models were considered for their analytical tractability and prevalence in theoretical treatment. In all of the models, the lower threshold for LTD in the DKO mice interacted with the rate of spontaneous LTD events caused by basal activity in the circuit (basal rate of parallel fiber and climbing fiber coactivation) to bias the initial distribution of synapses towards the depressed state(s) prior to learning (Figure 4B,D, top right, blue bars). Neurons in the depressed states were ineligible or less eligible to undergo additional LTD. Thus, for DKO mice, the outcome of VOR-increase training depended upon a competition between two opposing forces: (1) an enhanced intrinsic propensity for eligible synapses to undergo LTD, which alone would enhance learning; and (2) depletion of synapses eligible for LTD, i.e., saturation of LTD, which alone would impair learning.

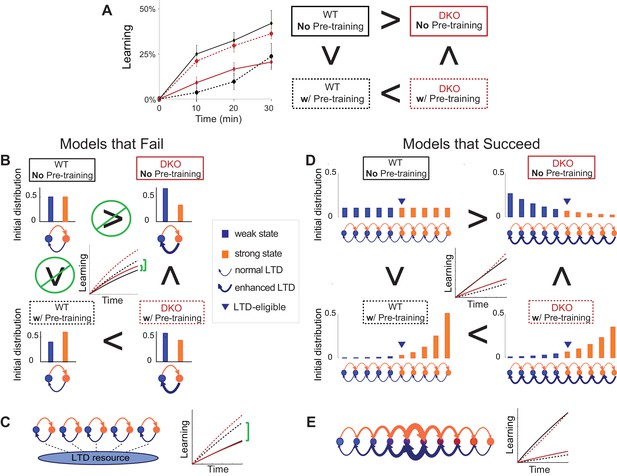

Synaptic models with amplified saturation effects and stubborn synaptic states account for learning in mice with normal and enhanced LTD.

(A) Four empirical comparisons constrain the models. Left, Empirical results replotted from Figure 3, with all curves aligned to the start of VOR-increase training, P values can be found in the legend for Figure 3. Right, Less than and greater than symbols (< and >) indicate which mice exhibited greater VOR-increase learning. In all panels of Figure 4: red, DKO mice; black, WT mice; solid lines, no pre-training; dashed lines, with VOR-decrease pre-training. (B) A binary synapse model with a strong synaptic state (orange) and a weak state (blue). Synapses transition between the two states at the rate of depression (blue curved arrow) and potentiation (orange curved arrow). The fraction of synapses in each state prior to VOR-increase learning is indicated by blue and orange bars. VOR-increase learning is measured by the decrease in synaptic weights during training. For DKO mice, the rate of depression was higher than WT, reflecting the lower threshold for LTD, (thick blue arrow), hence a greater fraction of the synapses were in the weak state (blue bars) prior to any VOR training. VOR-decrease pre-training (bottom panels) increased the fraction of synapses in the strong, LTD-eligible state (orange) in both WT and DKO mice. Center, The binary synapse model predicts enhanced learning in DKO vs. WT mice without pre-training (solid red vs solid black trace) and enhanced learning in WT mice with vs. without pre-training (dashed vs solid black trace), in contradiction to the empirical results in A (green brackets and green Ø). (C) The pooled resource model. Left, The probability of synaptic depression varied with the level of a shared resource that was depleted by the occurrence of depression at other synapses. Right, This model fails to account for the impaired learning in WT mice after pre-training (dashed black vs. solid black; green bracket). (D) The serial synaptic model with multiple strong (orange) and weak (blue) states, but only two values of synaptic strength, can account qualitatively for the effects of enhanced LTD and pre-training on learning (compare center panel with A). Before training, the synapses were strongly biased towards the weak state in the DKO mice, reducing the fraction of LTD-eligible synapses (blue arrowheads), and impairing learning relative to WT (solid red vs. solid black), as observed empirically. VOR-decrease pre-training shifted the bias towards the strong states (bottom panels). In DKO mice, this increased the fraction of LTD-eligible synapses (blue triangle), and enhanced learning (dashed red). In WT mice, pre-training biased the synapses to be too deep into the chain of potentiated states, so that the fraction of LTD-eligible synapses was reduced (blue triangle) and learning impaired (dashed black). (E) The non-uniform multistate model. Left, Each state is of varying strength from strong (orange) to weak (blue), and the transition probabilities between states decay exponentially the further the state is from the center. Right, This model qualitatively reproduced all of the empirical observations of learning.

Classical models of LTP and LTD did not reproduce our observation of impaired learning with enhanced plasticity. We tested a simple binary synapse model (Figure 4B), and a more generalized linear multistate model with multiple synaptic strengths (see Appendix). These models encapsulate classical notions of synaptic plasticity as straightforward changes in synaptic strength, to a maximal or minimal bound. However, one can show mathematically, that for all values of the parameters of these models, the enhanced intrinsic LTD rate dominates the saturation effect, at least for the initial phase of learning. Thus, these models incorrectly predict that enhanced plasticity would enhance VOR-increase learning (Figure 4B, solid red vs. black trace, green bracket; see Appendix for an analytical solution and predictions of models for longer time scales). Thus, classical models of synaptic plasticity could not readily account for the behavioral results observed empirically.

To predict impaired learning with enhanced plasticity, a mechanism to amplify the effect of depleting the synapses eligible for LTD was required. We first considered a synaptic model in which LTD driven by spontaneous activity in the circuit would not only deplete the LTD-eligible pool, but also retard LTD in the remaining LTD-eligible synapses (Figure 4C), as one might expect, for example, if a protein necessary for LTD induction was present in a cell in limited quantities. This resource-depletion model reproduced the impaired learning of DKO mice, however, it failed to predict the empirical observation of impaired learning after pre-training in WT mice (Figure 4C, dotted black vs. solid black trace, green bracket). To account for this latter observation, the synaptic architecture had to include ‘stubborn’ synaptic states whereby too many LTD-reversing events can impair the capacity for subsequent LTD.

One model that possesses both essential properties of amplified saturation effects and stubborn synaptic states is a serial model (Leibold and Kempter 2008; Ben Dayan Rubin and Fusi, 2007) with only two different synaptic weights, but with each weight associated with multiple, internal states (Figure 4D). Enhancing LTD in these models leads to an exponential distribution over synaptic states, which strongly depletes the pool of synapses available to express LTD. This exponential distribution of synapses (Figure 4D, top right) can account for the impaired learning phenotype in the DKO mice by providing sufficient depletion of LTD-eligible synapses by spontaneous basal activity to overwhelm the higher intrinsic LTD rate in the remaining LTD-eligible synapses. In this model, pre-training in the DKO mice reversed this saturation bias (Figure 4D, bottom right vs. top right), allowing the higher intrinsic LTD rate to dominate. In contrast, WT mice started with many LTD-eligible synapses, but pre-training pushed synapses deep into the chain of potentiated states, thereby reducing their ability to undergo a subsequent transition to a depressed state (Figure 4D, bottom left, orange bars). Notably, the model predicts that with extended VOR-increase training, the advantage conferred on the DKO mice relative to WT mice by pre-training should disappear (see Appendix).

Other synaptic models in which the capacity for a synaptic weight to change depends on the history of prior plasticity events could also account for our empirical observations. Models with such metaplasticity include the cascade model (Fusi et al., 2005) (see Appendix), and a multistate model with multiple synaptic strengths and lower transition probabilities for the deeper states (Figure 4E). Given appropriate parameters, these models are capable of reproducing all of the qualitative learning outcomes observed experimentally (Figure 4A, right), in contrast to the classical, binary or linear multistate models of plasticity, which are unable to do so for any choice of parameters. This successful class of models illustrates general principles about how the enhancement of plasticity at a given synapse can contribute to both impaired and enhanced learning, depending on the recent history of activity. In essence, under conditions where the recent activity leaves many synapses of WT mice in the labile, LTD-eligible states, enhancing plasticity tends to push the synapses out of these LTD-eligible states before training and thus impairs learning despite enhanced plasticity (compare Figure 4D, top-left with top-right). Under conditions where the recent activity leaves many synapses of WT mice in the ‘stubborn," potentiated states, enhancing plasticity can push them into the labile, LTD-eligible states, and enhance subsequent learning (compare Figure 4D, bottom-left with bottom-right). A history-dependent alteration in the capacity to undergo additional plasticity has been documented experimentally at some synapses in the hippocampus (Montgomery and Madison, 2002; Petersen et al., 1998). Such history-dependence in the plasticity of cerebellar synapses, required by our model to explain our observed behavioral phenotypes, constitutes a key prediction that can be tested in future empirical investigations of the synaptic physiology.

Discussion

Our results provide new insights about how enhanced synaptic plasticity can yield either enhanced or impaired learning, and begin to identify the factors that favor enhanced versus impaired learning when synaptic plasticity is enhanced. Although it was previously known that enhanced plasticity can have these opposite effects on behavior, these divergent results were obtained by different labs, using different learning tasks that depend on different brain regions, and different lines of mice with enhanced plasticity. Here, we established that the same individual animals with enhanced plasticity can respond to the same behavioral training with either enhanced or impaired learning, depending on the recent history of experience. Thus, the capacity for new learning is determined by a dynamic interplay between the threshold for synaptic plasticity and the recent history of activity.

Classical models of synaptic plasticity, in which an LTP event simply increases the synaptic strength and an LTD event simply decreases the synaptic strength, do not readily explain our behavioral data. We showed that in such models, enhancing plasticity led to enhanced learning, across the entire parameter space, and independent of the history of previous learning experiences. A critical, theoretical ingredient required to account for our data is a history-dependent form of synaptic plasticity (Montgomery and Madison, 2002; Petersen et al., 1998; Fusi et al., 2005) in which repeated LTD changes the internal state of the synapse into a less labile state. Then enhanced LTD can deplete the pool of labile synapses capable of supporting further learning, leading to impaired learning despite enhanced plasticity. Thus, the similar learning deficits in mice with enhanced pf-Pk LTD (Figure 1D) and impaired pf-Pk LTD (Boyden et al., 2006; Hansel et al., 2006) could reflect a similar underlying cause, namely the unavailability of pf-Pk LTD during training (Figure 2A).

More generally, our results suggest a new hypothesis for why enhanced plasticity can impair learning. Such impairments have generally been attributed to an over-recruitment of the enhanced plasticity mechanism at inappropriate synapses during training corrupting the memory trace (Migaud et al., 1998; Koekkoek et al., 2005; Martin et al., 2000). Our results raise the alternative possibility that enhancing the plasticity mechanism necessary for learning might lead, instead to its under-recruitment at appropriate synapses during learning, as a result of saturation (Figure 2A).

The possibility that synaptic plasticity can be saturated has long been recognized (Martin et al., 2000). Behavioral training paradigms that induce learning can occlude the subsequent induction of synaptic plasticity in brain slices (Schreurs et al., 1997; Pascoli et al., 2012; Rioult-Pedotti et al., 1998), and strong, artificial stimulation of neural activity in vivo to saturate a plasticity mechanism has been shown to impair subsequent learning in animals with normal synaptic plasticity (Martin et al., 2000; Moser et al., 1998), as we found for cerebellar climbing fiber stimulation (Figure 2B). Despite its consideration in these contexts, saturation has not been identified previously as a factor that could limit the ability of enhanced synaptic plasticity to enhance learning. Our results provide initial experimental evidence for this hypothesis. In particular, our results suggest that in mice with a low threshold for associative synaptic plasticity, saturation of the plasticity and occlusion of further learning may occur, not only in response to the patterns of elevated neural activity that can induce saturation in WT animals, but also in response to the normal, ongoing, basal levels of activity in a circuit, in the absence of any training or neural stimulation (compare Figure 1D and Figure 2B). Thus, enhanced synaptic plasticity, in the form of a lower threshold for induction, can be opposed by a tendency for plasticity to saturate, which, in turn, limits the capacity for new learning.

The capacity for new learning could be decreased or increased by manipulations that altered activity in the VOR circuit for a few tens of minutes. In WT mice, 30 min of elevated climbing-fiber activity induced a state that prevented subsequent learning (Figure 2B, CF Stim, solid cyan). Recovery from this saturation also occurred over a timescale of tens of minutes; although VOR-increase learning was profoundly suppressed after climbing fiber stimulation, there was evidence for recovery of learning during the last few minutes of the 30 min training session (Figure 2B, solid blue trace at 30 min), and full recovery a few days later (Figure 2—figure supplement 1C). Likewise, the natural manipulation of circuit activity caused by 30 min of behavioral pre-training was apparently sufficient to reverse saturation in the DKO mice and transform their learning impairment into a learning enhancement (Figure 3A). Thus, if the recent neural activity is appropriately patterned, rapid recovery from saturation is possible, creating the potential for enhanced plasticity to support enhanced learning. This dependence on the recent history of activity may explain the difference between the results from DKO mice in vivo versus in vitro. In vitro, the levels of spontaneous activity are much lower than in vivo, hence any saturation that is present in vivo would rapidly decay in vitro, revealing enhancement rather than saturation of pf-Pk LTD (McConnell et al., 2009). The dependence of learning on the recent history of activity may also explain the observation of either impaired or enhanced learning on behavioral tasks that depend on different parts of the cerebellum (McConnell et al., 2009), which have different levels of spontaneous activity (Zhou et al., 2014), and different dependence of LTD induction on the patterns of neural activation (Suvrathan et al., 2016). In sum, in both DKO and WT mice, the capacity for new learning was highly dependent on the recent history of activity in the circuit over the previous tens of minutes.

Our findings reinforce the idea that synaptic plasticity and learning are not isomorphic: one cannot predict the learning outcome from the synaptic properties alone. Rather, the capacity for new learning is determined by a dynamic interplay between the threshold for synaptic plasticity and the recent history of activity. A better understanding of this interaction is of great clinical significance, with the potential to guide the treatment of a wide range of patients who could benefit from enhanced neural plasticity, such as those recovering from brain injury. Our results suggest that synaptic plasticity enhancers may be most effective if combined with strategies for controlling basal levels of neural activity. In particular, suppression of neural activity before training may prime enhanced learning under conditions of enhanced associative plasticity. In addition, our finding that the appropriate behavioral pre-training can unmask enhanced learning in mice with enhanced synaptic plasticity (Figure 3A) raises the possibility that behavioral therapy could provide an alternative to drugs in patients with pathologically altered synaptic plasticity (Koekkoek et al., 2005; Yashiro et al., 2009; Baudouin et al., 2012).

A better understanding of how the threshold for synaptic plasticity affects function has the potential to influence many areas of neuroscience. Synaptic plasticity plays a role in nearly all brain functions, from the most basic sensory processing to the highest cognitive functions, and from early development through aging (Hübener and Bonhoeffer, 2014; Greenwood, 2007; Chen and Tonegawa, 1997; Meredith, 2015). To get traction on the broad issue of how enhanced synaptic plasticity influences learning, we harnessed the analytical power of the relatively simple and well-characterized vestibular and oculomotor systems, and leveraged manipulations informed by specific knowledge about signaling and plasticity in those systems. Yet the finding that the enhancement of synaptic plasticity can result in either enhanced or impaired learning has been reported for many different brain regions, and for both associative LTP and LTD. Therefore, saturation should be considered as a factor that could limit the ability of enhanced plasticity to enhance learning in neural circuits throughout the brain. One can speculate that in each brain area, neural circuits have evolved to optimize the threshold for plasticity to delicately balance the need to prevent inappropriate inputs from triggering and saturating plasticity, while allowing the appropriate inputs to drive learning.

Materials and methods

Animals

All experimental procedures were approved by the Administrative Panel on Laboratory Animal Care at Stanford University under the animal care and use committee (IACUC) Protocol #9143, titled ‘Vestibular and Visual Control of Eye Movements in Mice’. All mice were housed on a reversed 12 hr light/12 hr dark cycle, and experiments were conducted during the animals’ dark cycle. After implant surgery for behavioral experiments, mice were single-housed in individual cages. All other mice were maintained in group housing of up to five animals per cage. MHCI H2-Kb/H2-Db−/− (KbDb−/−; referred to as double knockout (DKO)) mice on a C57BL/6 genetic background (Vugmeyster et al., 1998; Schott et al., 2003) were maintained as a homozygous breeding colony. Age-matched C57BL/6 WT mice (RRID:IMSR_JAX:000664) were purchased from Jackson Laboratory.

Surgery

Eye coil and head post implants

Request a detailed protocolMice were surgically prepared for behavioral experiments as previously described (Boyden and Raymond, 2003). In brief, while under anesthesia with ketamine/dexmedetomidine followed by isoflurane, a custom-built head post was attached to the top of the skull using anchor screws and dental acrylic. A small, copper scleral search coil (IET, Marly, Switzerland), 1 mm in diameter, was implanted on the temporal side of one eye beneath the conjunctiva for use in measuring eye movements. The search-coil leads were threaded subcutaneously and soldered to a two-pin connector that was also cemented to the anchor screws with dental acrylic. Animals were allowed to recover from surgery for 4–5 days before behavioral experiments.

Virus injections in the cerebellar flocculus

Request a detailed protocolIn some mice, lentivirus carrying H2-Db, H2-Db/GFP or GFP under the L7/pcp-2 promoter (see below) was used to drive expression specifically in cerebellar Purkinje cells. Mice were anesthetized as described above and craniotomies were made on both sides of the skull over the periotic capsule, which overlies the cerebellar flocculus. Approaching through the pinna, 1 ul of lentivirus was injected at a rate of 0.15 ul/min into each flocculus at a depth of 1250 to 1500 um, using a Harvard Apparatus 11plus pump. A minimum of two weeks following injection was allowed for viral expression before the mice were tested.

Virus injections in the inferior olive

Request a detailed protocolFor optogenetic stimulation experiments, virus carrying ChR2 was injected into the dorsal cap of Kooy of the inferior olive (IO), to target ChR2 to the neurons giving rise to the climbing fiber inputs onto Purkinje cells in the cerebellar flocculus (Nguyen-Vu et al., 2013; Kimpo et al., 2014). Adeno-associated virus (AAV) carrying ChR2 under the CaMKIIα promoter (CaMKIIα-ChR2(H134R)-EYFP (Gradinaru et al., 2007) was obtained from the Neuroscience Gene Vector and Virus Core at the Stanford School of Medicine. Mice were anesthetized as described above and a craniotomy was made above the inferior olive using stereotaxic coordinates. One-half to 1 ul of the AAV solution was injected into the dorsal cap of Kooy over the course of 15–30 min. Following injection, a minimum of four weeks was allowed for viral expression before further surgeries. Mice then underwent surgical implantation of an eye coil and headpost as described above, during which a craniotomy was also made in the periotic capsule, and a cannula (Plastic One, Inc.) implanted, to allow fiber optic access to the cerebellar flocculus.

Lentivirus vectors

Request a detailed protocolLentivirus was used to drive expression of H2-Db in cerebellar Purkinje cells. H2-Db (Garstka et al., 2007) was cloned into the BamHI site in the fourth exon of the L7/pcp-2 gene (Zhang et al., 2001). L7::H2-Db or L7::GFP (Oberdick lab) was cloned into a pCDH-EF1-MCS-T2A-copGFP lentiviral vector backbone (System Biosciences, Mountain View, CA; where EF1 is the promoter for elongation factor 1α, MCS is the multiple cloning site, T2A is a self-cleaving peptide that allows expression of multiple proteins from a single transcript, and copGFP is a green fluorescent protein used as a reporter), using SwaI restriction sites, with the EF1 promoter removed to generate the following constructs: L7::H2-Db-T2A-copGFP, L7::H2-Db-(stop)-T2A-copGFP, and L7::GFP-T2A-copGFP.

In the L7::H2-Db-T2A-copGFP construct, the T2A enables separate expression of H2-Db and copGFP proteins, and is indicated as L7:: H2-Db/GFP. Because a few amino acid residues from the T2A would be left on H2-Db expressed from the L7::H2-Db-T2A-copGFP virus, a second construct was designed, L7::H2-Db-(stop)-T2A-copGFP, which used the stop codon to allow H2-Db expression without any additional amino acid residues from the T2A, and without expression of copGFP.

There was no significant behavioral difference between DKO mice injected with L7::H2-Db-T2A-copGFP (n = 7) and L7::H2-Db-(stop)-T2A-copGFP (n = 8) (VOR-increase learning at 1.0 Hz: p=0.23); both viruses rescued the learning deficit in the DKO mice, therefore the data from these two groups were pooled and indicated as L7::H2-Db. The L7::GFP-T2A-copGFP expressed GFP and copGFP, as a negative control for the L7 promoter, and is indicated as L7::GFP.

All three L7 constructs were sequenced and packaged by the Neuroscience Gene Vector and Virus Core at the Stanford School of Medicine. Lentiviruses were produced by polyethyleneimine-mediated transfection of 293 T cells with four separate plasmids encoding HIV-1 gag-pol, HIV-1 rev, VSV-G envelope, and the HIV-1 based genome vector. Virus-containing culture media was harvested 24 and 48 hr post-transfection, filtered through a 0.45 μm filter and concentrated by ultracentrifugation. Concentrated virus was stored in single-use aliquots at −80°C.

A fourth virus, CMV::H2-Db-HA, provided a positive control for detection of H2-Db in the cerebellum in initial validation experiments, but because its expression was not restricted to Purkinje cells, it was not used further in this study. An HA tag was fused to the c-terminus of H2-Db by PCR. The CMV promoter was PCRed from a pcDNA3 vector (Thermo Fisher Scientific). CMV::H2-Db-HA was then cloned into a pCDH lentivector backbone (System Biosciences). The construct was sequenced for verification and packaged into lentivirus by the Neuroscience Gene Vector and Virus Core at the Stanford School of Medicine.

Reverse-transcriptase (RT) PCR

Request a detailed protocolTwo to three weeks after virus injection, the cerebellar flocculi of two DKO mice injected with L7::H2-Db-T2A-copGFP virus were dissected for mRNA analysis by RT-PCR. Thalamus from one WT control and spleen from one DKO mouse were used as positive and negative control samples, respectively. Primers for H2-Db were designed to detect exon 2 and exon 3 regions of H2-Db. RNA was extracted from each sample using RNAqueous-4PCR (Ambion, Life Technologies, NY) and cDNA was synthesized using the iScript cDNA Synthesis Kit (Bio-Rad). PCR products were evaluated by gel electrophoresis to confirm the presence of PCR products of predicted size of ~250 bp. H2-Db primers:

Sense- 5’CAAGAGCAGTGGTTCCGAGTGAG-3’;

Antisense- 5’CTTGTAATGCTCTGCAGCACCACT-3’.

Reactions for RT-PCR were carried out as previously described (Lee et al., 2014) using 1 ug of cDNA as a template (5 min at 95°C followed by 40 cycles (30 s at 95°C, 30 s, at 60°C, 30 s, 72°C)) (Veriti 96-well Thermal Cycler, Applied Biosystems). Glyceraldehyde-3-phosphate dehydrogenase (GAPDH) was used as internal control. GAPDH primers: Sense- 5’ATTGTCAGCAATGCATCCTGC-3’ Antisense- 5’AGACAACCTGGTCCTCAGTGT-3’. The quality of cDNAs was confirmed by genotyping PCR reactions as described previously (Lee et al., 2014) using 0.5 ~ 1 µg of cDNA as template and the samples containing genomic DNAs were discarded.

Immunohistochemistry

Request a detailed protocolTwo weeks after virus injection, three DKO mice injected in the flocculus with L7::H2-Db-T2A-copGFP were deeply anesthetized and immediately perfused with 0.1M PBS followed by 4% paraformaldehye (w/v) in PBS. The brain was removed and post-fixed for 2 hr at 20°C. After fixation, the brain was placed in 30% sucrose (w/v) in PBS solution overnight at 4°C. Then brain was then embedded in OTC (Sakura Fine Tek) and frozen for cryosectioning. Coronal sections of 20 um were made through the cerebellar flocculi. Slices were then incubated for 1 hr at 20°C with blocking solution containing 10% normal goat serum and 1% BSA in PBS with 0.3% Triton-X (PBST), and then overnight at 4°C with primary antibodies diluted in blocking solution (anti-TurboGFP, Evrogen AB513). Slices were then washed three times with PBST and incubated for 1 hr at 20°C with secondary antibody (Alexa Fluor 488 goat anti rabbit, Invitrogen A2153). Fluorescence images were taken using a Nikon Eclipse E800 microscope.

Behavioral experiments

Request a detailed protocolDuring each behavioral experiment, the head of the mouse was immobilized by attaching the implanted head post to a restrainer. The restrainer was attached to a turntable (Carco Electronics, Pittsburgh, PA), which delivered a vestibular stimulus by rotating the mouse about an earth-vertical axis. Visual stimuli were delivered by moving an optokinetic drum made of white translucent plastic with black and white vertical stripes, each of which subtended 7.5° of visual angle. The optokinetic drum was back-lit by fiber-optic lights.

Horizontal eye position was measured using the eye coil method, and sampled at a rate of either 500 or 1000 Hz. Eye velocity was calculated by differentiating eye position measurements obtained from the eye coil. Any data segment containing a saccade or motion artifact was excluded from the analysis and then a sinusoid was fit to remaining data to extract the amplitude and phase of the eye velocity response to vestibular or visual stimuli. The VOR was measured as the eye-movement response to a sinusoidal vestibular stimulus (rotation of the head about an earth-vertical axis, 1.0 Hz or 0.6 Hz, ±10°/s peak velocity) in complete darkness (i.e., with no visual inputs), in 40 s blocks. The VOR gain was calculated as the ratio of the eye-to-head movement amplitudes. The optokinetic reflex (OKR) was measured as the eye-movement response to an optokinetic visual stimulus (1.0 Hz, ±10°/s peak velocity) delivered with the head stationary, and the gain of the OKR was calculated as the ratio of eye-to-visual stimulus amplitudes.

Training to increase the VOR gain consisted of pairing a ± 10°/s sinusoidal vestibular stimulus with oppositely directed ±10°/s sinusoidal optokinetic drum rotation, such that a VOR gain of 2 would be required to stabilize the image on the retina (Figure 1D). Training or pre-training to decrease the VOR gain consisted of pairing a ± 10°/s sinusoidal vestibular stimulus with ±10°/s sinusoidal rotation of an optokinetic drum (visual stimulus) in the same direction as the head, such that the visual stimulus was stationary relative to the mouse and required a VOR gain of zero to stabilize the image on the retina (Figure 1E). Vestibular-only pre-training consisted of 1 Hz, ±10°/s sinusoidal vestibular stimulation in the dark (i.e., no visual stimulus). Training and pre-training were each conducted in three ten-minute blocks. Before and after each block, the eye movement response to the vestibular stimulus alone, i.e., the VOR, was measured in total darkness, and learning was calculated as the change in VOR gain after each block of training. The frequency of the visual-vestibular training stimuli and the vestibular testing stimuli were either 1.0 Hz or 0.6 Hz.

Experimenters running the behavioral experiments were blind to the genotype of the mice. Individual mice were used for multiple behavioral experiments (subjected to different training and testing conditions), separated by at least two days, with no specific randomization in the order of experiments. If the longevity of the eye coil and head post implants allowed, some mice underwent the same type of behavioral experiment more than once, in which case, the results from the replications were averaged for that animal and a single averaged value was used in the group analysis.

Optical activation of climbing fibers

Request a detailed protocolIn some experiments, animals were subjected to 30 min of optogenetic climbing fiber stimulation prior to visual-vestibular VOR-increase or VOR-decrease training. Experiments were performed on WT mice injected in the inferior olive with virus carrying ChR2, and sham control mice that were not injected with any virus, but underwent that same surgical procedure of eye coil, head post and cannula implantation for behavioral testing. In both the experimental and sham control groups, optical stimulation was delivered unilaterally to the cerebellar flocculus through the implanted cannula via a 200 um optical fiber connected to a blue laser (473 nm, Laserglow). The optical stimulation consisted of 250 ms trains of 3 pulses, repeated every 1 s, with each pulse 2 ms in duration at an intensity of ≤3 mW. The optical stimulation was delivered in 10 min blocks over the course of 30 min while the animal was head-restrained and stationary in the dark. The gain of the VOR was assessed before and after each block of optical stimulation. VOR training began within 2 min of the end of the 30 min of optical stimulation, using vestibular-visual stimulus pairing to either increase or decrease the gain of the VOR.

Statistical analysis

Request a detailed protocolAdequate sample size was determined based on previous experiments of VOR behavior (Boyden and Raymond, 2003; Boyden et al., 2006) and optogenetic stimulation (Nguyen-Vu et al., 2013) in mice, as borne out the by results. Statistical analyses were performed using Microsoft Excel and Graphpad Prism (RRID:SCR_002798). Data are presented as means ± s.e.m unless otherwise indicated. The Kolmogorov-Smirnov test was used to test for normality, and Bartlett’s multiple sample test was used to determine equal variance. Unpaired Student's t tests (2-sided) were used to compare groups. When the time course data were compared, two-factor repeated measure ANOVA was used to test for a significant difference between groups. A Fischer’s LSD post-hoc test was used only when there was a significant difference between groups. For all tests, p<0.05 was considered to be statistically significant.

Computational models

Request a detailed protocolWe used a computational approach to determine the essential features of synaptic models that could account qualitatively for our central empirical observations: (1) without pre-training, enhanced plasticity impairs learning; (2) pre-training rescues learning in mice with enhanced plasticity; (3) pre-training impairs learning in WT mice; and (4) with appropriate pre-training, mice with enhanced plasticity learn faster than WT (Figure 4, left, reproduced from Figure 3A). In all cases, the contribution of pf-Pk LTD to VOR learning was modeled by measuring the initial rate of VOR-increase learning with the rate at which synapses transitioned to a depressed state during training(Ito, 1972). This rate was determined by three factors: (1) fdep, the rate of candidate LTD events, or the pattern of neural activity with the potential to induce LTD (i.e., near simultaneous activation of cerebellar parallel fibers and climbing fibers); (2) qdep, the intrinsic plasticity rate, which corresponded to the threshold for LTD induction, or the probability that a candidate LTD event would cause an eligible synapse to transition to a depressed state; and (3) pstrong, the number of synapses eligible to transition to a depressed state.

The lower induction threshold in DKO mice was modeled as an increase in the intrinsic plasticity rate, qdep(MHC) > qdep. Training to increase the VOR was modeled as an increase in the rate of candidate LTD events, fdep → fdep + Δf. Instead of explicitly modeling VOR-decrease learning, because its mechanism(s) are still unknown, we only modeled the component that reverses VOR-increase as an increase in the rate of LTP events: fpot → fpot + Δf. For illustration, we chose fpot = fdep = ½ in the absence of VOR training, though none of our results depend upon this balanced rate of candidate LTP and LTD events. We compared synaptic models with different numbers of potentiated and depressed states and different probabilities of transitioning between states. See Appendix for details.

Code availability

Request a detailed protocolComputational models were simulated using custom MATLAB (RRID:SCR_001622) code (MATLAB R2013b, The MathWorks Inc., Natick, Massachusetts). The code for simulating the computational models is publicly available.

Appendix 1

Mathematical formalism

Models of synapses

We make the following assumptions:

The synapses are identical and have internal functional states, where the states of different synapses are independent of each other.

Candidate potentiating/depressing plasticity event timings are distributed as Poisson processes with rates .

Candidate potentiation and depression events are described by Markov processes with transition probabilities .

The synaptic weights of the internal states are given by the column vector . This can only take values in a finite range that we can shift to . Most of the models will only use the two extreme values.

The overall rate of candidate plasticity events will only affect the units we measure time in. For the questions we are investigating, qualitative understanding of when learning is enhanced/impaired rather than detailed numerical matching of learning curves, this is completely irrelevant. Only the relative rates will matter. With this in mind, we define

where is the fraction of candidate plasticity events that are potentiating/depressing.

The independence and identicalness of synapses means that the state of the system can be completely described by the probability distribution over the internal states, the row vector which evolves as

where is a continuous time Markov transition matrix and is the identity matrix. Eventually, this will settle into the equilibrium distribution:

We model the DKO mice by changing to , which has larger off-diagonal matrix elements. This makes it easier to induce a decrease in synaptic weight, modelling the observed lower threshold for LTD (McConnell et al., 2009).

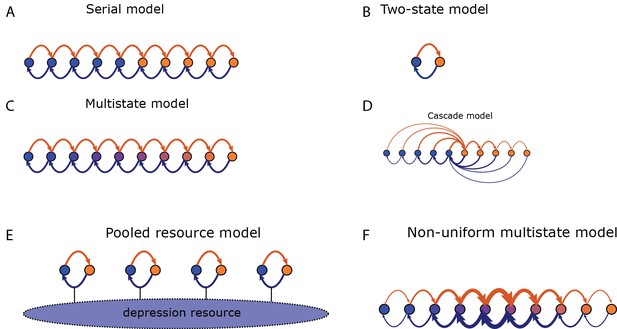

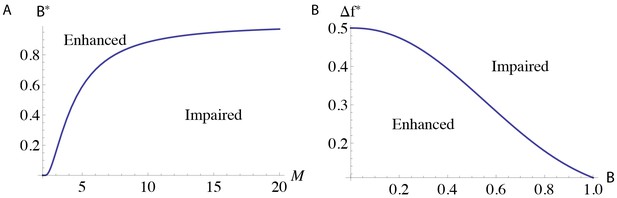

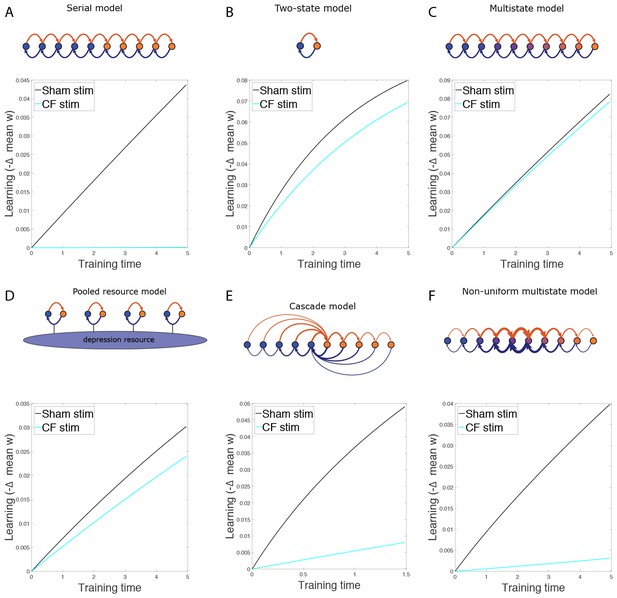

We will look at several different models, the serial model (see Leibold and Kempter, 2008; Ben-Dayan Rubin and Fusi, 2007 and Appendix 1—figure 1A) which has only two values for the synaptic weight, the two-state model (which can be thought of as a special case of the serial model, see Appendix 1—figure 1B), the multistate model (see Amit and Fusi, 1994; Fusi and Abbott, 2007a and Appendix 1—figure 1C) which has a linearly varying synaptic weight, and the cascade model (see Fusi et al., 2005 and Appendix 1—figure 1D). We will also look at a new, pooled resource model and a non-uniform version of the multistate model that we will define below.

Transition probabilities for different models.

Potentiation induces transitions indicated by orange arrows, depression indicated by blue arrows. States of strong/weak synaptic weight indicated by orange/blue circles. (A) In the serial model the transition probabilities for potentiation/depression are all equal and it is parameterised by these two values. The synaptic weight takes only two values, . (B) The two-state model is parameterised by the two transition probabilities. (C) In the multistate model the transition probabilities for potentiation/depression are all equal and it is parameterised by these two values. The synaptic weight varies linearly in the interval . (D) In the cascade model, the transition probabilities decay geometrically with a parameter (see Fusi et al., 2005) and synaptic weight takes only two values. (E) In the pooled resource model, Several two-state synapses share resources that are required for potentiation and depression. These resources are depleted as more synapses are potentiated or depressed. This pool of synapses can be modelled as one compound synapse. (F) In the non-uniform multistate model the synaptic weight varies linearly in the interval , similar to the multistate model, but the transition probabilities between adjacent states decays exponentially away from the central transition for both potentiation and depression.

For the serial, multistate and two state models, we will use the same value for , the transition rate between adjacent states, for potentiation in the wild-type and DKO models. We will use a larger value for for depression in the DKO models than in the wild-type.

For the cascade model, we will use the same value for the parameter (which controls the ratio of adjacent transition rates, see Fusi et al., 2005) for potentiation in the wild-type and DKO models. We will use a larger value for for depression in the DKO models than in the wild-type.

The values used for all these parameters in simulations are listed in Appendix 1—table 1.

Parameters used for simulations. For the serial, two state and multistate models, the plasticity parameter listed is the transition probability between adjacent states. For the pooled resource model, the plasticity parameters are the minimum and maximum transition probability for the constituent two-state synapses. For the cascade and non-uniform multistate models, the plasticity parameter is the ratio of adjacent transition probabilities.

| Model | # states | Plasticity parameter | ||||||

|---|---|---|---|---|---|---|---|---|

| Pot | WT dep | DKO dep | Base | Inc | Dec | |||

| Serial | 10 | 0.12 | 0.14 | 0.2 | 0.5 | 0.89 | 0.11 | 100 |

| Two-state | 2 | 0.1 | 0.1 | 0.2 | 0.5 | 0.6 | 0.4 | 5 |

| Multistate | 10 | 0.3 | 0.3 | 0.4 | 0.5 | 0.8 | 0.2 | 5 |

| Pooled res. | 7 | 0.008 | [0.0006, 0.6] | [0.001, 1] | 0.5 | 0.9 | 0.1 | 20 |

| Cascade | 14 | 0.386 | 0.398 | 0.466 | 0.478 | 0.63 | 0.002 | 200 |

| Non-uni. | 12 | 0.4 | 0.4 | 0.53 | 0.5 | 0.7 | 0.1 | 500 |

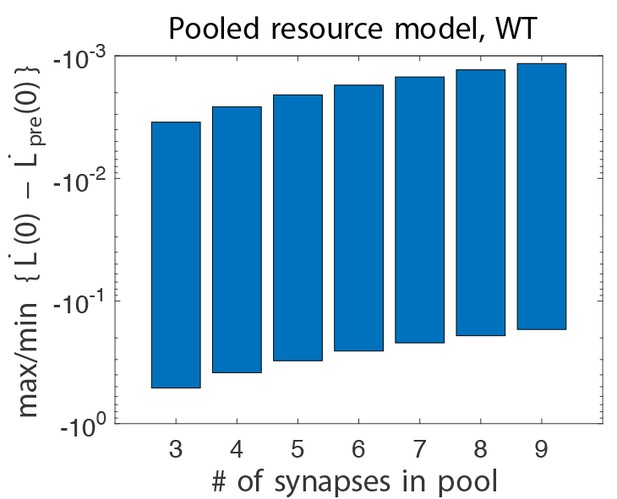

Pooled resource model

Suppose that there is some resource required for potentiation/depression that is shared between synapses and is depleted as more synapses are potentiated/depressed and replenished when this is reversed, as shown in Appendix 1—figure 1E. We can avoid going beyond the independent synapse model by modelling the pool of synapses that share the resource as a single compound synapse.

We will model the individual synapses with the two-state model. Let be the number of them that are potentiated. We will model the effect of resource depletion linearly with the potentiation/depression probabilities for the individual synapses:

At each plasticity event for the compound synapse, one of the individual synapses will be chosen randomly for update. This effectively reduces the transition probabilities by .

This compound synapse would seem to have internal states. However, we need only keep track of the number of potentiated synapses, not their identity. We can group those states of the pool of synapses that have the same total number of potentiated synapses into a single state of the equivalent compound synapse, leaving states. The transition network will then have a multistate topology (see Appendix 1—figure 1C) but the transition probabilities will no longer be uniform and the weight of the compound synapse is the mean of its constituent synapses:

The Markov process is lumpable when partitioning states by total number of potentiated synapses (see Kemeny et al., 1960; Burke and Rosenblatt, 1958; Ball and Yeo, 1993). The transition probabilities between lumps and is computed by choosing one state from lump and summing the transition probabilities to all states in lump , which must be the same for all states in lump .

For any state in lump , there are synapses that can be potentiated to go to lump . Each of these transition probabilities is . Similarly, there are synapses that can be depressed to go to lump . Each of these transition probabilities is . Thus:

with all other off-diagonal elements equal to zero. The diagonal elements are chosen so that the rows sum to one.

This model is parameterised by the range of values, , for potentiation and depression. We will use the same values for potentiation in the wild-type asnd DKO models. We will use larger values for depression in the DKO models than in the wild-type. We consider a version of this model that has resource depletion for depression only, so that potentiation transition probabilities are unaffected by the number of potentiated synapses. This is done by setting . The values of these parameters are listed in Appendix 1—table 1.

Non-uniform multistate model

This model, shown in Appendix 1—figure 1F, is similar to the multistate model (see Appendix 1—figure 1C), as it only has transitions between adjacent states and a linearly varying synaptic weight. However, like the cascade model (see Fusi et al., 2005 and Appendix 1—figure 1D), the transition probabilities decay exponentially away from the central transition. More precisely:

with all other off-diagonal elements equal to zero. The diagonal elements are chosen so that the rows sum to one.

This model is parameterised by the values of chosen for potentiation and depression. We will use a larger value for depression in the DKO models. The values used are listed in Appendix 1—table 1.

Model of VOR learning experiment

Training the animal will not change the internal dynamics of a synapse under potentiation or depression. It will change the environment, which will lead to a change in how often potentiation and depression occur. We will model this by changing , leaving unchanged. This approach was used to model motor learning by Smith et al., 2006.

The untrained case will be described by . Gain-increase training will be described by , and gain-decrease training will be described by . Note that the forgetting matrix Equation (1) and the equilibrium distribution Equation (2) depend on , which we will indicate with subscripts.

Before training, the synaptic distribution will be in the equilibrium distribution corresponding to . During gain-increase training, it will evolve according to Equation (1) with :

On the other hand, if the gain-increase training follows gain-decrease pre-training for some time period, :

We will describe the effect of training by the decrease in mean synaptic weight:

One can approximate the input to a Purkinje cell as some linear combination of the synaptic weights (weighted by the activities of the corresponding parallel fibres). If we are not keeping track of synaptic identity, the most natural linear combination to use would be an equal sum of them all. The behavioural output (VOR gain) will be some unknown, non-linear function of the synaptic weights, so the best we can hope for is to reproduce qualitative features of the experiment, such as whether learning is enhanced or impaired by the mutation or pre-training.

As the knockout produces no change in baseline performance, there must be a compensatory mechanism somewhere else. We will model this compensation as a simple linear offset, as could be produced by another population of neurons/synapses whose effect cancels with these neurons/synapses.

We will assume . This is because the relevant effects of the knockout are well localised to the Purkinje cells, as shown by the rescue data, so the activity of the parallel and climbing fibres should not change very much. Therefore the rates of potentiation and depression should not change very much either.

For the most part, we set , and , with . We use the same values for wild-type and DKO models for the reasons discussed above. We could adjust to keep unchanged, if so desired, but this would only change the overall timescale and would not affect any of the qualitative comparisons that we are concerned with here. The values of these parameters are listed in Appendix 1—table 1.

We are also assuming that the relation between VOR gain and mean synaptic weight is the same for DKO mice and wild-type, except for a linear offset to compensate for the difference in equilibrium weights mentioned above. This ensures that the qualitative questions mentioned above (enhancement or impairment of learning) will not be affected.