Impaired adaptation of learning to contingency volatility in internalizing psychopathology

Abstract

Using a contingency volatility manipulation, we tested the hypothesis that difficulty adapting probabilistic decision-making to second-order uncertainty might reflect a core deficit that cuts across anxiety and depression and holds regardless of whether outcomes are aversive or involve reward gain or loss. We used bifactor modeling of internalizing symptoms to separate symptom variance common to both anxiety and depression from that unique to each. Across two experiments, we modeled performance on a probabilistic decision-making under volatility task using a hierarchical Bayesian framework. Elevated scores on the common internalizing factor, with high loadings across anxiety and depression items, were linked to impoverished adjustment of learning to volatility regardless of whether outcomes involved reward gain, electrical stimulation, or reward loss. In particular, high common factor scores were linked to dampened learning following better-than-expected outcomes in volatile environments. No such relationships were observed for anxiety- or depression-specific symptom factors.

Introduction

Many of the situations we encounter in daily life are characterized by uncertainty. From small choices to large lifetime decisions, we rarely can know for sure the consequences that will stem from our actions. The uncertainty that pervades our daily decision-making is a source of greater distress, and even dysfunction, for some individuals than for others. In recognition of this, intolerance of uncertainty has been proposed as a core feature of generalized anxiety disorder (Freeston et al., 1994; Dugas et al., 1998; Dugas et al., 2001) and, more recently, a transdiagnostic marker of internalizing psychopathology more broadly (Gentes and Ruscio, 2011; Carleton et al., 2012; Boswell et al., 2013). However, these accounts are largely based on patients’ self-reported behavioral and emotional responses to exposure to uncertainty rather than experimental and computational investigations of the cognitive processes involved in choice under uncertainty. We address this in the current study. Here, we test the possibility that a deficit in adapting decision-making to uncertainty might be linked to internalizing psychopathology, in general, and observed across both aversive and reward-based learning.

Within the computational literature, advances have been made in formalizing different sources of uncertainty and their effects on decision making (Yu and Dayan, 2005; Behrens et al., 2007; Nassar et al., 2012; Payzan-LeNestour et al., 2013). One source of uncertainty is noise in the relationship between actions and outcomes, for instance when an action only leads to a given outcome on a proportion of the occasions it is performed. A second source of uncertainty is non-stationarity, or volatility, in the underlying causal structure; for example, when action-outcome contingencies switch and an action that was primarily associated with a given outcome becomes predominantly associated with another. Such volatility leads to uncertainty around the estimate of outcome probability; this can be conceived of as second-order uncertainty (Bach et al., 2011). Crucially, the level of second-order uncertainty determines the optimal response to unexpected outcomes. When contingencies are volatile, and hence second-order uncertainty is high, participants should adjust their probability estimates more rapidly than when contingencies are stable and second-order uncertainty is low. Behrens et al., 2007 reported that healthy adult participants are indeed able to adjust their rate of updating, that is, their learning rate, to match contingency volatility in this manner.

Failure to adapt correctly to the source of uncertainty present in a given situation may result in inaccurate predictions and suboptimal decisions. This might well be a source of distress and dysfunction in everyday life. In a prior study, we examined the relationship between trait anxiety and adaptation of decision-making to contingency volatility (Browning et al., 2015). Modeling participants’ performance on an aversive version of Behrens and colleagues’ probabilistic decision-making task, using electrical stimulation as outcomes, we found that trait anxiety was associated with reduced adaptation of learning rate to volatility. This finding raises the possibility that poor adjustment of probabilistic learning to volatility might reflect an inability to differentially respond to different forms of uncertainty and that this, in turn, might be an important marker of psychopathology.

Critically, this initial work leaves open the question of whether impaired adaptation of learning rate to volatility is specific to anxiety or a marker of internalizing psychopathology more broadly. Scores on the Spielberger Trait Anxiety Inventory (STAI, Spielberger et al., 1983), the measure of anxiety used in Browning et al., 2015, are typically elevated across patients with both anxiety and depressive disorders and correlate highly with scores on measures of depression such as the Beck Depression Inventory (BDI, Beck et al., 1961). Establishing whether impairment in adapting decision-making to volatility is specific to anxiety, or more broadly linked to internalizing psychopathology, requires a way of partitioning symptom variance into components that disentangle variance common to both anxiety and depression from that specific to anxiety or to depression. Bifactor analysis provides a principled method for achieving this goal. It has been used extensively to study the structure of internalizing symptomology (Clark et al., 1994; Steer et al., 1995; Zinbarg and Barlow, 1996; Steer et al., 1999; Simms et al., 2008; Steer et al., 2008; Brodbeck et al., 2011) and has consistently revealed a substantial amount of shared variance, often termed ‘general distress’ or ‘negative affect’ (Clark and Watson, 1991; Clark et al., 1994). In addition, separate specific factors for depression and anxiety are consistently observed, with the depression-specific factor tapping symptoms of anhedonia (Clark et al., 1994; Steer et al., 1999; Steer et al., 2008) and anxiety-specific factors tapping symptoms of anxious arousal (Clark et al., 1994; Steer et al., 1999; Steer et al., 2008) and worry (Brodbeck et al., 2011). Although bifactor modeling of internalizing symptoms is well established, it has not, to date, been used to inform studies of anxiety- and depression-related deficits in decision-making. Using bifactor analysis to estimate scores for each participant on latent dimensions of internalizing symptoms, we can investigate whether impoverished adjustment of learning rate to volatility is primarily linked to general factor scores (i.e. to symptom variance common to both anxiety and depression) or to anxiety-specific or depression-specific factor scores.

A second question of specificity pertains to the breadth of impairment in adjusting learning rate to volatility. There is evidence that the neural substrate, and affective consequences, of learning from unexpected outcomes may vary both with domain (reward versus punishment; Boureau and Dayan, 2011) and with whether outcomes are better or worse than expected (Frank et al., 2007; Cox et al., 2015; Eldar et al., 2016; Palminteri and Pessiglione, 2017). If impoverished adaptation of decision-making to volatility is potentially a core feature of internalizing psychopathology, it is important to establish if such impairment is, or is not, observed regardless of whether choice involves potential aversive outcomes (e.g. shock), reward gain or reward loss and both when outcomes exceed and fall short of our expectations.

Our aims were hence as follows. First, to use bifactor analysis of item-level responses to measures of internalizing symptoms to determine whether impaired adjustment of learning rate to volatility is linked to symptom variance common to both anxiety and depression (i.e. to general factor scores) or to symptom variance specific to anxiety or to depression. Second, to determine whether any such impairment is domain general (i.e. observed both when actions involves the pursuit of rewarding outcomes and the avoidance of punishing outcomes) or domain specific. And third, to determine whether this impairment is observed equally for learning from both positive and negative prediction errors (i.e. better-than-expected and worse-than-expected outcomes) or is differentially linked to one or the other.

Results

Outline

We addressed these aims through two experiments. The first experiment was conducted in our on-site testing facilities. The participant sample comprised patients diagnosed with major depressive disorder (MDD) or generalized anxiety disorder (GAD), healthy control participants screened to be free of any psychiatric diagnoses, and a community sample with naturally varying levels of symptoms (for further details see Materials and methods: Self-Report Measures). Participants completed two versions of the probabilistic decision-making under volatility task (Behrens et al., 2007; Browning et al., 2015). In one version, they chose between two shapes that were associated with potential receipt of electrical stimulation of varying magnitude. In the other version, the outcomes involved possible receipt of financial rewards of varying magnitude. In each case, the probability that a given shape would result in receipt of shock or reward had to be learned across trials. Action-outcome contingencies were stable in one period of the task and volatile in the other (see Materials and methods).

The second experiment was conducted online using Amazon’s Mechanical Turk platform. Here, we used reward gain and reward loss versions of the probabilistic decision-making under volatility task. As detailed further below, we used the same bifactor decomposition of internalizing symptoms and the same computational model of task performance across both experiments and all three versions of the volatility task. A hierarchical Bayesian framework was used for model estimation with participants’ scores on the latent factors of internalizing symptoms entered as predictors of behavioral model parameter values.

Experiment 1

Estimating latent factors of internalizing symptoms

Eighty-eight participants (51 females, mean age = 27 ± 8 years) took part in experiment 1. This was conducted at the Wellcome Centre for Integrative Neuroimaging (WIN) at the John Radcliffe Hospital with ethical approval obtained from the Oxford Central University Research Ethics Committee (CUREC). Twenty participants had a primary diagnosis of major depressive disorder (MDD), 12 participants had a primary diagnosis of generalized anxiety disorder (GAD), 26 ‘healthy control’ participants of approximately the same age and sex ratio were screened to ensure they were free of any psychiatric diagnosis, and 30 participants were members of the local community with a natural range of internalizing symptoms (see Appendix 1—table 1 for participant details). Participants who met criteria for any psychiatric diagnoses apart from anxiety or depressive disorders were excluded, as were participants with neurological conditions and those currently taking psychoactive medications or recreational drugs (see Materials and methods). Data from two control participants was excluded; one was a result of equipment failure; debriefing indicated the second misunderstood the task. This left 86 participants in total.

Participants completed a battery of standardized questionnaires comprising the Spielberger State-Trait Anxiety Inventory (STAI form Y; Spielberger et al., 1983), the Beck Depression Inventory (BDI; Beck et al., 1961), the Mood and Anxiety Symptoms Questionnaire (MASQ; Clark and Watson, 1995; Watson and Clark, 1991), the Penn State Worry Questionnaire (Meyer et al., 1990), the Center for Epidemiologic Studies Depression Scale (CESD; Radloff, 1977), and the 90-item Eysenck Personality Questionnaire (EPQ; Eysenck and Eysenck, 1975). These questionnaires were selected to measure a range of depressive and anxiety symptoms (e.g. anhedonia, negative mood, negative cognitive biases, worry, somatic symptoms) and to assess trait negative affect more broadly (via inclusion of the EPQ Neuroticism subscale; items from other subscales of the EPQ were not included in the bifactor analysis described below).

We sought to separate symptom variance common to both anxiety and depression from that specific to depression or to anxiety. Bifactor modeling of item level responses provides a simple approach to achieve this aim, as demonstrated previously within the internalizing literature (Clark et al., 1994; Steer et al., 1995; Steer et al., 1999; Simms et al., 2008; Brodbeck et al., 2011). Bifactor models decompose the item-level covariance matrix into a general factor and two or more specific factors. Here, we specified a model with one general and two specific factors. This decision drew on our theoretical aim, namely to separate symptom variance common to both anxiety and depression from that specific to depression and that specific to anxiety, and was informed by prior tripartite models of internalizing psychopathology (e.g. Clark and Watson, 1991). It was also supported by the results of eigenvalue decomposition of the covariance matrix. Only the first three eigenvalues were reliably distinguishable from noise—this was determined by comparison of the eigenvalues in descending order against eigenvalues obtained from a random normal matrix of equivalent size (Humphreys and Montanelli, 1975; Floyd and Widaman, 1995), see Figure 1—figure supplement 1 and Materials and methods for more details.

The Schmid-Leiman (SL) procedure was used to estimate the loadings of individual questionnaire items on each factor (Schmid and Leiman, 1957). This procedure performs oblique factor analysis followed by a higher order factor analysis on the lower order factor correlations to extract a general factor. All three factors are forced to be orthogonal to one another, which allows for easier interpretability. In line with previous findings (Clark et al., 1994; Steer et al., 1995; Zinbarg and Barlow, 1996; Steer et al., 1999; Simms et al., 2008; Steer et al., 2008; Brodbeck et al., 2011), the general factor had high loadings (>0.4) for multiple anxiety-related and depression-related items and moderately high loadings (>0.2) across almost all items. One specific factor had high loadings (>0.4) for questions related to anhedonia and depressed mood. The other specific factor had high loadings (>0.4) for questions related to worry and anxiety.

We validated this factor structure by conducting a confirmatory bifactor analysis on item-level responses to the same set of questionnaires completed by an independent online sample (n = 199). Participants were students at UC Berkeley (120 females, mean age = 20 ± 4). This group was fairly distinct from our first sample, being more homogenous in age and educational status and less homogenous in ethnicity, and not including individuals recruited to meet diagnosis for either GAD or MDD. Evaluating the fit of the factor structure obtained from experiment 1 in this second dataset is a strong test of its generalizability. In the confirmatory bifactor analysis, we used diagonally weighted least squares estimation and constrained the factor structure so that items were only allowed to load on a factor for which they had a loading of >0.2 in experiment 1 (see Materials and methods: Exploratory Bifactor Analysis). This constrained model showed a good fit to the data from this new participant sample, comparative fit index (CFI) = 0.962.

As a convergent analysis, we conducted an unconstrained (i.e. exploratory, not confirmatory) bifactor analysis in this second participant sample to see if a similar factor structure would emerge to that obtained in experiment 1. We again specified one common and two specific factors. The factor loadings obtained were highly congruent with the factor loadings obtained from the bifactor analysis in experiment 1 (cosine-similarity was 0.96 for the general factor loadings, 0.81 for the depression-specific factor loadings, and 0.77 for the anxiety-specific factor loadings). Congruence in factor loadings was assessed after matching the two specific factors according to the similarity of their loading content.

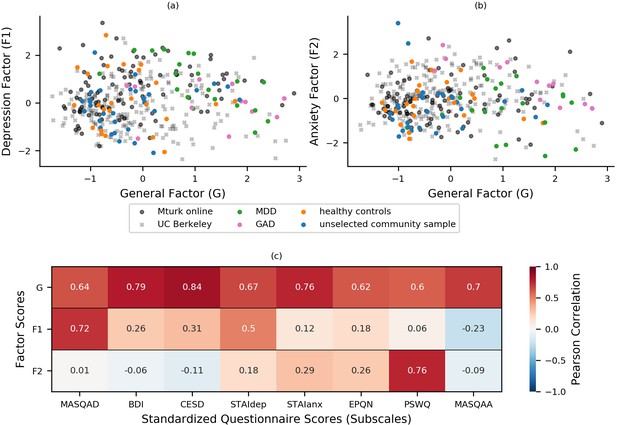

Factor loadings from experiment 1 were used to calculate factor scores for all participants from experiment 1 (n = 86) and the confirmatory factor analysis sample (n = 199). The resultant scores are plotted in Figure 1a–b. As an additional check of construct validity, participants’ scores on these factors were correlated with summary (scale or subscale) scores for the standardized questionnaires administered, Figure 1c. The questionnaires to which items belonged were not specified during the fitting of the bifactor model, hence these summary scores provide an independent measure of the construct validity of the latent factors extracted from the bifactor analysis. As can be seen in Figure 1c, participants’ scores on the general factor correlated strongly (r > 0.60) with summary scores for all the questionnaire measures, indicating that the general factor is indeed tapping variance linked to both anxiety and depressive symptoms. Scores on the depression-specific factor correlated strongly with scores for the MASQ anhedonia subscale (MASQ-AD; r = 0.72) and the STAI depression subscale (STAIdep; r = 0.53), and scores on the anxiety-specific factor correlated strongly with scores for the Penn State Worry Questionnaire (PSWQ; r = 0.76). This indicates that the two specific factors extracted from the bifactor analysis do indeed capture anxiety- and depression-related symptoms, respectively, as intended, and that these factors explain variance above that explained by the general factor. The latter conclusion can be drawn since the specific factors are orthogonal to the general factor and therefore their correlations with scale and subscale scores reflect independently explained variance. This can be further demonstrated by regressing variance explained by scores on the general factor out of scale and subscale scores and then examining the relationship between residual scores for each scale with scores on the two specific factors. As shown in Figure 1—figure supplement 2, after removing variance explained by general factor scores, nearly all the remaining variance in PSWQ scores could be captured by the anxiety-specific factor and nearly all the remaining variance in MASQ-AD scores could be captured by scores on the depression-specific factor.

Bifactor analysis of internalizing symptoms.

(a-b) Bifactor analysis of item-level scores from the STAI, BDI, MASQ, PSWQ, CESD, and EPQ-N (128 items in total) revealed a general ‘negative affect’ factor (xaxis) and two specific factors: one depression-specific (left panel, y-axis) and one anxiety-specific (right panel, y-axis). The initial bifactor analysis was conducted in a sample (n = 86) comprising participants diagnosed with MDD, participants diagnosed with GAD, healthy control participants and unselected community participants. The factor solution showed a good fit in a separate sample of participants (n = 199) recruited and tested online through UC Berkeley’s participant pool (x). Item loadings on a sub-set of questionnaires were used to calculate factor scores for a third set of participants recruited and tested online through Amazon’s Mechanical Turk (n = 147), see Experiment 2. It can be seen that both online samples show a good range of scores across the general and two specific factors that encompass the scores shown by patients with GAD and MDD. (c) Factor scores were correlated with summary scores for questionnaire scales and subscales to assess the construct validity of the latent factors. This was conducted using a combined dataset comprising data from both the exploratory (n = 86) and confirmatory (n = 199) factor analyses. Scores on the general factor correlated highly with all questionnaire summary scores, scores on the depression-specific factor correlated highly with measures of depression, especially anhedonic depression, and scores on the anxiety-specific factor correlated particularly highly with scores for the PSWQ. MASQAD = Mood and Anxiety Symptoms Questionnaire (anhedonic depression subscale); BDI = Beck Depression Inventory; CESD = Center for Epidemiologic Studies Depression Scale; STAIdep = Spielberger State-Trait Anxiety Inventory (depression subscale); STAIanx = Spielberger State-Trait Anxiety Inventory (anxiety subscale); EQN-N = Eysenck Personality Questionnaire (Neuroticism subscale); PSWQ = Penn State Worry Questionnaire; MASQAA = Mood and Anxiety Symptoms Questionnaire (anxious arousal subscale); MDD = major depressive disorder; GAD = generalized anxiety disorder.

As outlined earlier, we applied a bifactor model to item-level symptom responses as we sought to tease apart symptom variance common to anxiety and depression versus unique to anxiety or depression. With model selection, both the extent to which a given model can address the aim of the experiment and the fit of the given model to the data are important considerations. In addition to assessing the absolute fit of the bifactor solution in the confirmatory factor analysis (CFA) dataset, we can also consider its fit relative to that of alternate models. The bifactor model reported here showed a better fit to the novel (CFA) dataset than a ‘flat’ correlated two-factor model, a hierarchical three factor model with the higher order factor constrained to act via the lower level factors, and a unifactor model created by retaining only the general factor and neither of the specific factors (see Appendix 2: Additional Factor Analyses for further details). We note that none of these alternate models would enable us to separate symptom variance common to anxiety and depression versus unique to anxiety and depression, as desired.

Computational modeling of task performance: measuring the impact of block type (volatile, stable), task version (reward, aversive), and relative outcome value (good, bad) on learning rate

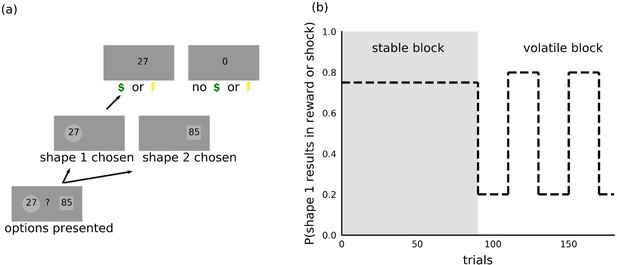

Participants completed both reward gain and aversive versions of a probabilistic decision-making under volatility task (Behrens et al., 2007; Browning et al., 2015). Full task details are provided in Figure 2 and in the Materials and methods. In short, participants were asked to choose between the same two shapes repeatedly across trials. On each trial, one of the two shapes resulted in reward receipt or shock receipt; the nature of the outcome depended on the version of the task. When making their choice, participants were instructed to consider both the magnitude of the reward or shock associated with each shape, which was shown to participants inside each shape and varied across trials, and the probability that each shape would result in reward or shock receipt. The outcome probability could be learned across trials, using the outcomes received. During the stable task period, the outcome probability was fixed such that one shape had a 75% probability of resulting in reward or shock receipt if chosen and the other 25%. During the volatile task period, the shape with the higher probability of shock or reward receipt switched every 20 trials (see Materials and methods for further details).

Task.

(a) On each trial, participants chose between two shapes. One of the two shapes led to receipt of shock or reward on each trial, the nature of the outcome depending on the version of the task. The magnitude of the potential outcome was shown as a number inside each shape and corresponded to the size of the reward in the reward version of the task or intensity of the electric shock in the aversive version of the task. (b) Within each task, trials were organized into two 90-trial blocks. During the stable block, one shape had a 75% probability of resulting in reward or shock receipt; the other shape resulted in shock or reward receipt on the remaining trials. During the volatile block, the shape with the higher probability (80%) of resulting in shock or reward receipt switched every 20 trials. Participants were instructed to consider the magnitude of the potential outcome, shown as a number inside each shape, as well as the probability that the outcome would occur if the shape was chosen.

We fitted participants’ choice behavior using alternate versions of simple reinforcement learning models. We focused on models that were parameterized in a sufficiently flexible manner to capture differences in behavior between experimental conditions (block type: volatile versus stable; task version: reward gain versus aversive) and differences in learning from better or worse than expected outcomes. We used a hierarchical Bayesian approach to estimate distributions over model parameters at an individual- and population-level with the latter capturing variation as a function of general, anxiety-specific, and depression-specific internalizing symptoms. Given our a priori interest in assessing the variance in choice behavior explained by the three internalizing factors, participants’ scores for the three factors were included in the estimation procedure for all models. We compared the fits of different models using Pareto smoothed importance sampling to approximate leave-one-out cross-validation accuracy (PSIS-LOO; Vehtari et al., 2017). PSIS-LOO is a popular method with which to estimate out of sample prediction accuracy as it is less computationally expensive than evaluating exact leave-one-out or k-fold cross-validation accuracy (Gelman et al., 2013). We note that comparing models using WAIC (Watanabe and Opper, 2010), an alternative penalization-based criterion for hierarchical Bayesian models, resulted in identical model rankings for our dataset. We elaborate briefly on model comparison here. Full details are provided in the Materials and methods; a summary of model comparison results is also provided in Appendix 3—table 1.

The models considered were informed by prior work (Lau and Glimcher, 2005; Behrens et al., 2007; Ito and Doya, 2009; Li and Daw, 2011; Berns and Bell, 2012; Akaishi et al., 2014; Browning et al., 2015; Donahue and Lee, 2015; Mkrtchian et al., 2017; Aylward et al., 2019). Each of the models included a parameter to allow for individual differences in the weighting of outcome probabilities against outcome magnitudes, an inverse temperature parameter to allow for differences in how noisily participants made choices as a function of this weighted combination of probability and magnitude, and a learning rate parameter that captured the extent to which participants adjusted probability estimates given the unexpectedness of the previous trial’s outcome. We parameterized dependence on experimental conditions by additive and interactive factors. As one example, the learning rate was divided into a baseline learning rate (), a difference in learning rates between the volatile and stable blocks (), a difference in learning rates between the reward gain and aversive versions of the volatility task , and the two-way interaction of those differences.

Parametrizing the effects of our experimental conditions in the manner described above allowed us to test how these effects varied as a function of between-participant differences in internalizing symptomatology. We parameterized the dependence on internalizing symptoms by adjusting the mean of the Bayesian hierarchical prior according to the general, depression-specific, and anxiety-specific factor scores of each participant, using population-level weights , respectively. These weights differed for each parameter component (e.g. ), but we hide this specificity for notational ease. Including participants’ scores on the three latent internalizing factors in the estimation procedure in this manner enables us to separate variance linked to internalizing symptoms from noise in participants’ choices when estimating model parameters (see Materials and methods: Hierarchical Bayesian Estimation for more details).

We used a form of stage-wise model construction to investigate the manner in which participants integrated outcome probability and outcome magnitude (additive or multiplicative) and the extent to which task performance could be better captured by inclusion of additional model parameters. At each stage of the model construction process, we added or modified a particular component of the model and compared the enriched model to the best model from the previous stage using leave-one-out cross-validation error approximated by Pareto smoothed importance sampling (PSIS-LOO; Vehtari et al., 2017).

In the first stage, we compared a model (#1) that combined outcome probability and outcome magnitude multiplicatively (i.e. by calculating expected value, similarly to Browning et al., 2015) with a model (#2) that combined outcome probability and outcome magnitude additively; see Materials and methods for full model details. We observed that the additive model fit participants’ choice behavior better (model #2 PSIS-LOO = 26,164 versus model #1 PSIS-LOO = 27,801; difference in PSIS-LOO = −1637; std. error of difference = 241; lower PSIS-LOO is better). This finding is consistent with observations of separate striatal representations for outcome magnitude and probability (Berns and Bell, 2012), as well as findings from work with non-human primates where additive models have also been reported to fit choice behavior better than expected value models (Donahue and Lee, 2015).

In both of the models in the first stage, all the parameters were divided into a baseline component, a component for the difference between volatile and stable blocks, a component for the difference between reward and aversive task versions, and a component for the interaction of these two experimental factors. During the second stage, we investigated whether task performance was better captured by additionally allowing for differences in learning as a result of positive and negative prediction errors. Specifically, we added a component for relative outcome value (good, bad) and two further components that captured the interaction of relative outcome value with block type (volatile, stable) and with task version (reward, aversive). We added these components for learning rate alone in one model (#3) and for learning rate, mixture weight, and inverse temperature in another model (#5); see Materials and methods for full model details. We defined a good outcome to be the receipt of a reward or the receipt of no shock and a bad outcome to be the receipt of no reward or the receipt of shock. Including effects of relative outcome value for all three model parameters, including the two-way interactions with block type and task version, improved PSIS-LOO (model #5 PSIS-LOO = 25,462 versus model #2 PSIS-LOO = 26,164; difference in PSIS-LOO = −702; std. error of difference = 142; lower PSIS-LOO is better). Adding the three-way interaction of block type, task version and relative outcome value worsened PSIS-LOO slightly (model #6 PSIS-LOO = 25,486 versus model #5 PSIS-LOO = 25,462; difference in PSIS-LOO = 24; std. error of difference = 9), indicating that two-way interactions were sufficient to capture important aspects of behavioral variation.

Additional stages of model comparison revealed that allowing subjective weighting of magnitude differences improved model fit (model #7 PSIS-LOO = 25,154 versus model #5 PSIS-LOO = 25,462; difference in PSIS-LOO = −308; std. error of difference = 104) as did the addition of a choice kernel that captures participants’ predisposition to repeating prior choices (model #11 PSIS-LOO = 25,037 versus model #7 PSIS-LOO = 25,154; difference in PSIS-LOO = −117; std. error of difference = 42). Both the subjective magnitude parameter and choice kernel inverse temperature were broken down by task version (reward, aversive); a single choice kernel update rate was used across conditions; see Materials and methods: Stage-wise Model Construction. In contrast to the above parameters, adding a lapse term did not improve model fit nor did allowing outcome probabilities to be separately updated for each shape; see Materials and methods for further details.

The best fitting model (#11) is presented in Equation 1a-d. The probability () that shape 1 and not shape 2 would result in reward or shock receipt if chosen is updated on each trial using a prediction error (the difference between the most recent outcome and the previous estimate ) scaled by the learning rate () (Equation 1a).

Next, the estimate of the difference between the two shapes in outcome probability is combined additively with the difference in outcome magnitude using a mixture weight () (Equation 1b). Here, the difference in outcome magnitude is nonlinearly scaled using to account for potential differences in subjective valuation ( and denote the magnitude for shapes 1 and shape 2, respectively; note that the sign for the difference in this equation is removed before exponentiating and then restored).

A choice kernel is also updated on each trial using the difference between the previous choice () and the choice kernel on the previous trial , scaled by an update rate () (Equation 1c).

Finally, the outcome value and the choice kernel determine the probability that shape 1 was chosen on that trial using a softmax choice rule with two separate inverse temperatures ( and ) (Equation 1d).

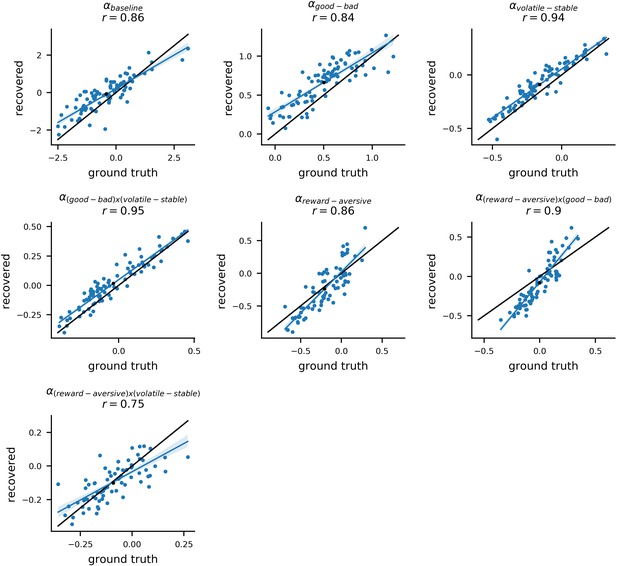

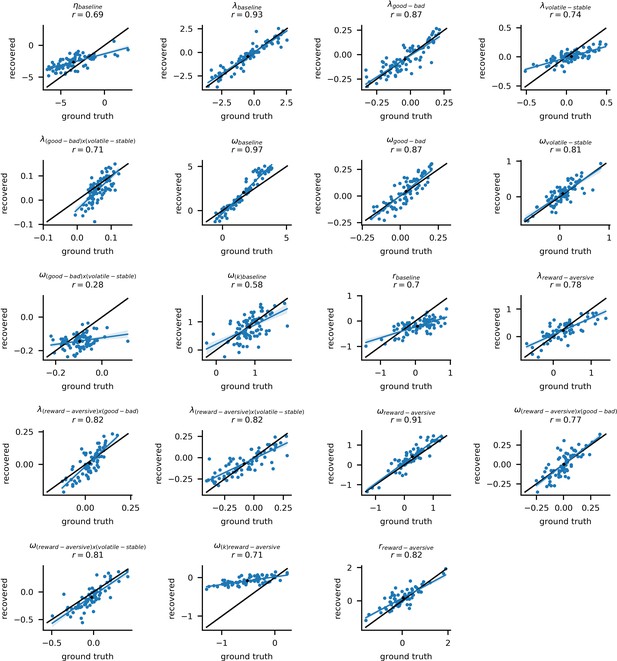

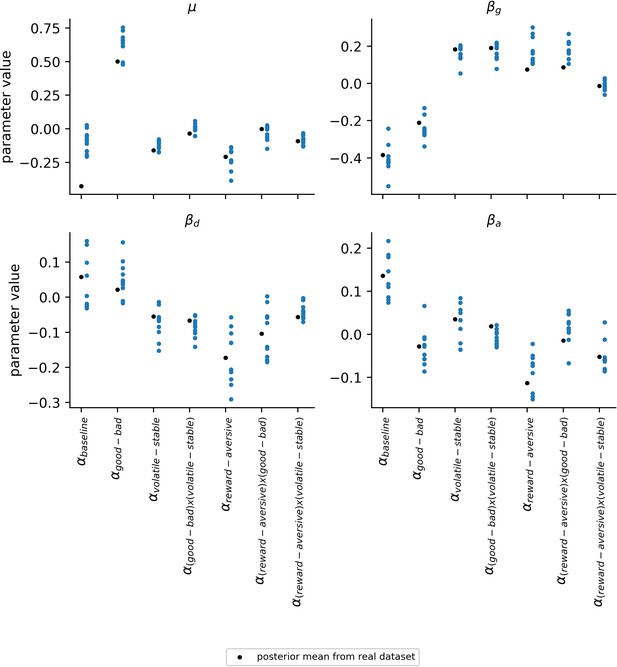

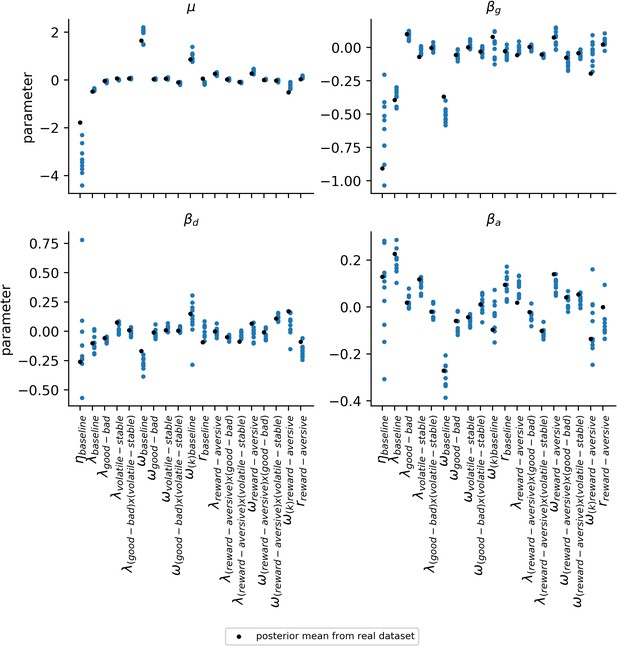

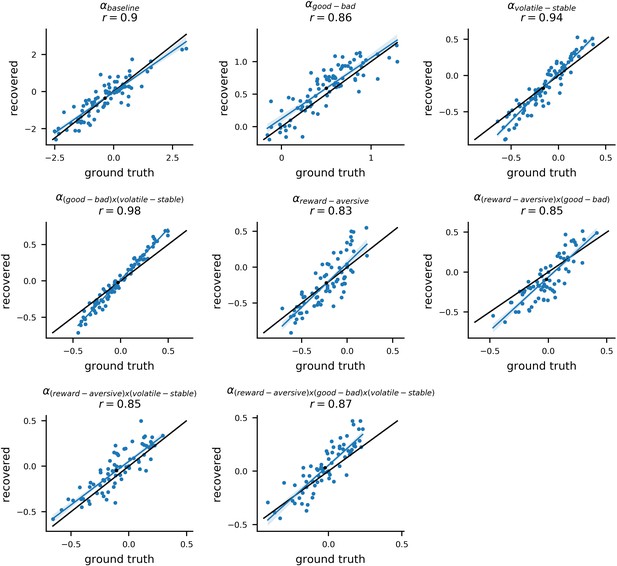

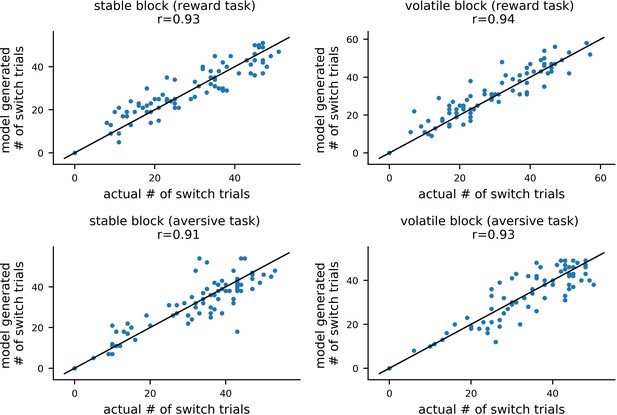

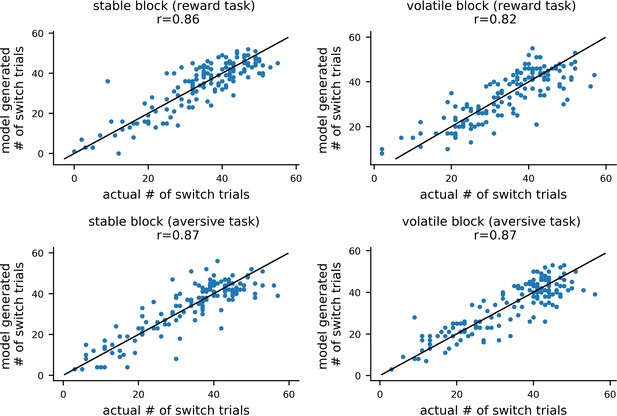

To validate the model estimation procedure, we treated participants’ estimated parameter values as the ground truth and used them to simulate 10 new datasets for each of the 86 participants. By fitting the model to these simulated datasets, we could compare the ground truth parameter values with the parameters estimated (i.e. recovered) from the simulated data. The recovered parameters from each dataset strongly correlated with the ground truth parameter values; the mean correlation across simulated datasets and across parameters was r = 0.76 (std = 0.15). For learning rate components, the average correlation was r = 0.88 (std = 0.13) (for more methodological details see Materials and methods: Parameter Recovery; for parameter recovery results see Appendix 4—figure 1 and Appendix 4—figure 2). This analysis indicates that individual model parameters were recoverable as desired. For estimates of noise in population-level parameters, see Appendix 4—figure 3 and Appendix 4—figure 4.

Cross-group results: participants adjust learning rate to contingency volatility

Having selected model #11, we fit this model to participants’ choice behavior and estimated distributions over model parameters at an individual- and population-level (as described above and detailed further in the Materials and methods). This included estimating population-level weights that captured the effect of internalizing factor scores upon each parameter component (e.g. ).

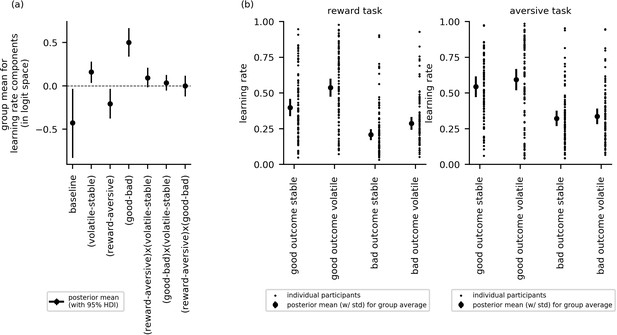

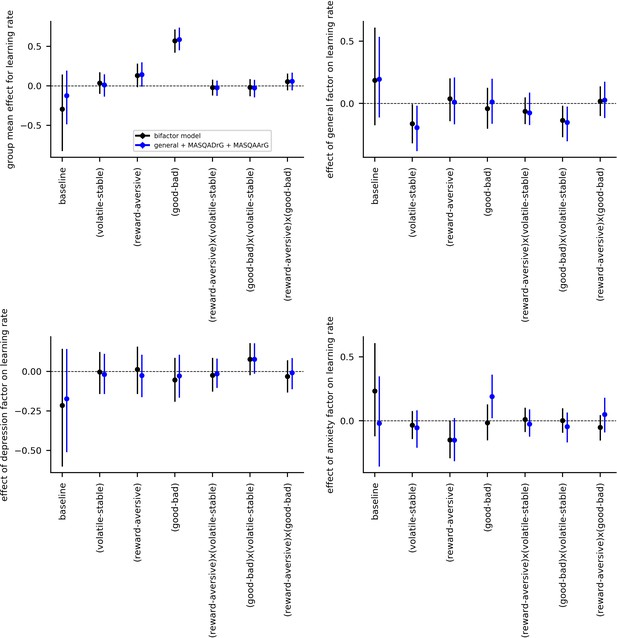

In this section, we report cross-group effects (i.e. across all participants). Here, we used the posterior distributions over the group mean for each learning rate component to examine whether learning rate varied as a function of block type (volatile or stable), task version (reward or aversive), or relative outcome value (i.e. trials following good or bad outcomes). Figure 3a shows the posterior means, along with their 95% highest posterior density intervals (HDIs), for each parameter component. If the 95% HDI for a given effect does not cross zero, the effect is considered statistically credible.

Cross-group results from experiment 1 for effects of block type (volatile, stable), task version (reward, aversive), and relative outcome value (good, bad) on learning rate (n = 86).

(a) This panel shows the posterior means along with the 95% highest posterior density intervals (HDI) for the group means () for each learning rate component (i.e. for baseline learning rate and the change in learning rate as a function of each within-subject factor and their two-way interactions). The 95% posterior intervals excluded zero for effect of block type upon learning rate (i.e. difference in learning rate for the volatile versus stable task blocks ). This was also true for the effect of task version, that is, whether outcomes entailed reward gain or electrical stimulation () and for the effect of relative outcome value, that is, whether learning followed a relatively good (reward or no stimulation) or relatively bad (stimulation or no reward) outcome (. Participants showed higher learning rates during the volatile block than the stable block, during the aversive task than the reward task, and on trials following good versus bad outcomes. None of the two-way interactions were statistically credible, that is the 95% posterior included zero. (b) In this panel, the learning rate components are combined to illustrate how learning rates changed across conditions. The posterior mean learning rate for individual participants (small dots) and the group posterior mean learning rate (large dots, error bars represent the associated posterior standard deviation) are given for each of the eight conditions; these values were calculated from the posterior distributions of the learning rate components ( etc.) and the group means ().

The effects of block type, task version and relative outcome value upon learning rate were statistically credible; that is, their HDIs did not cross zero: block type (), µ = 0.16, 95%-HDI = [0.04,0.28]; task version (), µ = −0.21, 95%-HDI = [−0.37, –0.03]; relative outcome value (), µ = 0.50, 95%-HDI = [0.34,0.67]. Participants had higher learning rates during the volatile block than the stable block, higher learning rates during the aversive task than the reward gain task, and higher learning rates on trials following good versus bad outcomes. None of the two-way interactions were statistically credible: block type by task version (), µ = 0.09, 95%-HDI [−0.02, 0.21]; block type by relative outcome value (), µ = 0.04, 95%-HDI [−0.05, 0.13]; task version by relative outcome value (), µ = 0.0, 95%-HDI [−0.12, 0.12]. Figure 3b illustrates differences in learning rates between experimental conditions for the reward and aversive versions of the task, respectively.

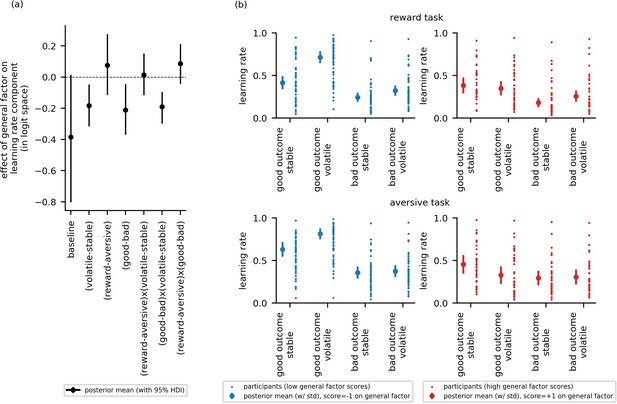

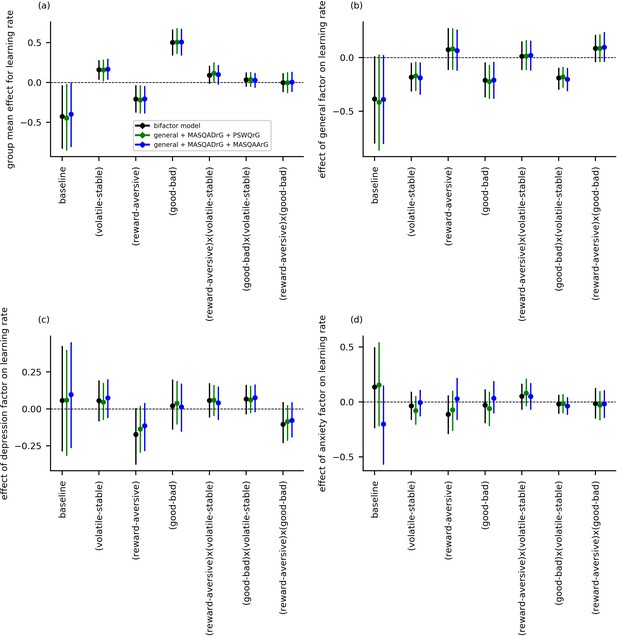

Elevated general factor scores are linked to reduced adjustment of learning rate to volatility, especially following better-than-expected outcomes, across both reward and aversive tasks

To address our first main research question—that is, whether impaired adjustment of learning rate to volatility is linked to symptom variance common to both anxiety and depression or to symptom variance specific to anxiety or to depression—we looked at whether the difference in learning rate between the volatile and stable blocks varied as a function of general factor scores or as a function of anxiety- or depression-specific factor scores. Examining learning rate difference between blocks, , the 95% HDI for the general factor regression coefficient excluded zero, = −0.18, 95%-HDI=[−0.32,–0.05], Figure 4a. Individuals with low scores on the general factor adjusted learning rate between the stable and volatile task blocks to a greater extent than individuals with high general factor scores. Neither anxiety-specific factor scores nor depression-specific factor scores credibly modulated learning rate difference between blocks, , = −0.03, 95%-HDI = [−0.16, 0.09], = 0.06, 95%-HDI=[−0.08, 0.19], respectively, Figure 4—figure supplement 1. This suggests that the ability to appropriately adjust learning rate to volatility, previously linked to trait anxiety (Browning et al., 2015), is actually common to both anxiety and depression and not specific to one or the other.

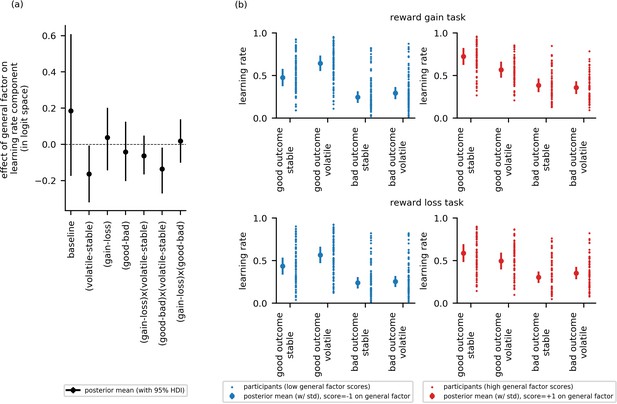

Experiment 1: Effect of general factor scores on learning rate (in-lab sample, n = 86).

Panel (a) shows posterior means and 95% highest posterior density intervals (HDI) for the effect of general factor scores () on each of the learning rate components. General factor scores credibly modulated the extent to which learning rate varied between the stable and volatile task blocks ( = −0.18, 95%-HDI = [−0.32,–0.05]), the effect of relative outcome value on learning rate ; = −0.21, 95%-HDI = [−0.37,–0.04]) and the interaction of these factors upon learning rate (; = −0.19, 95%-HDI = [−0.3,–0.1]). In each case, the 95% HDI did not include 0. (b) Here, we illustrate learning rate as a function of each within-subject factor and high versus low scores on the general factor of internalizing symptoms. To do this, we calculated the expected learning rate for each within-subject condition associated with scores one standard deviation above (‘high’, shown in red) or below (‘low’, shown in blue) the mean on the general factor. It can be seen that the largest difference in learning rates for participants with high versus low general factor scores is on trials following good outcomes during volatile task blocks. This effect is observed across both reward and aversive task versions. Small data points represent posterior mean parameter estimates for individual participants. Large points represent the posterior mean learning rates expected for participants with scores ± 1 standard deviations above or below the mean on the general factor. Error bars represent the posterior standard deviation for these expected learning rates.

To address our second research question—that is, whether the relationship between internalizing symptoms and adjustment of learning rate to volatility is domain general (i.e. holds across both aversive and reward task versions) or domain specific—we looked at whether there was an interaction between internalizing factor scores, block type and task version upon learning rate. Estimates for were not credibly modulated by scores on any of the three internalizing factors ( = 0.01, 95%-HDI = [−0.12,0.15]; = 0.05, 95%-HDI = [−0.07,0.17], = 0.06, 95%-HDI = [−0.06, 0.17]), see Figure 4a and Figure 4—figure supplement 1. Estimates for the main effect of task version on learning rate () also did not vary credibly as a function of internalizing factor scores, = 0.08, 95%-HDI = [−0.11; 0.28], = −0.11, 95%-HDI = [−0.29,0.06], = −0.17, 95%-HDI = [−0.38,0.01], see Figure 4a and Figure 4—figure supplement 1.

To address our third research question—that is, whether relative outcome value (good or bad) modulates the relationship between internalizing symptoms and learning rate adjustment to volatility—we looked at whether there was an interaction between internalizing factor scores, block type (volatile, stable) and relative outcome value (good, bad) upon learning rate. Estimates for were credibly modulated by scores on the general factor ( = −0.19, 95%-HDI = [−0.3,–0.1]), Figure 4a, but not by scores on the anxiety-specific factor or the depression-specific factor ( = −0.02, 95%-HDI = [−0.11, 0.07], = 0.07, 95%-HDI = [−0.04, 0.16]), Figure 4—figure supplement 1. In addition, scores on the general factor, but not the anxiety-specific or depression-specific factors, also credibly modulated the main effect of relative outcome value upon learning rate ( = −0.21, 95%-HDI = [−0.37, –0.04], see Figure 4a and Figure 4—figure supplement 1.

To illustrate these results, we calculated the expected learning rate for each within-subject condition associated with scores one standard deviation above or below the mean on the general factor, Figure 4b. Low general factor scores (shown in blue) were associated with higher learning rates following good versus bad outcomes, both when outcomes were reward-related (here a good outcome was a reward) and when they were aversive (here a good outcome was no shock delivery). Low general factor scores were also associated with a more pronounced difference in learning rate between volatile and stable task blocks following good outcomes. This was observed both when outcomes were reward-related and aversive. In contrast, high scores on the general factor (shown in red) were associated with smaller differences in learning rate between volatile and stable blocks and following good versus bad outcomes; this held across both reward and aversive versions of the task. In particular, the boost in learning from positive predictions errors under volatile conditions (i.e. from good relative outcomes in the volatile block) shown by individuals with low general factor scores was absent in individuals with high general factor scores; if anything individuals with high general factor scores’ learning after positive outcomes was reduced under volatile relative to stable conditions.

To confirm that the relationship between general factor scores and the interaction of block type by relative outcome value on learning rate did not vary as a function of task version, we fit an additional model that parametrized the three-way interaction of block type (volatile, stable), relative outcome value (good, bad) and task version (reward, aversive) for learning rate. Fitting this model to participants’ choice behavior confirmed that this three-way interaction was not statistically credible, nor was its modulation by general factor scores ( = 0.06, 95%-HDI = [−0.06; 0.18]). The effect of general factor scores on the interaction between block type (volatile, stable) and relative outcome value (good, bad) on learning rate remained credible ( = −0.21, 95%-HDI = [−0.34; −0.1]). We note that a parameter recovery analysis revealed successful recovery of the parameter representing the three-way interaction of block type, relative outcome value and task type on learning rate (Appendix 4—figure 5). This suggests that we did not simply fail to observe a three-way interaction due to lack of experimental power. Together, these findings support the conclusion that the negative relationship between general factor scores and increased learning rate following relative good outcomes in volatile environments did not differ credibly as function of task version (reward, aversive).

Experiment 2

We fit the behavioral model from experiment 1 to an independent online sample of participants, in order to test whether the reward gain findings replicated, and whether the findings for punishment would be replicated using loss of reward in place of primary aversive outcomes (shock). Specifically, we predicted that general factor scores would inversely correlate with adaptation of learning rate to volatility, that this would be observed to a greater extent following outcomes of positive relative value (reward gain or no reward loss) and that this would hold across task version (reward gain or reward loss).

One-hundred and seventy-two participants were recruited from Amazon’s Mechanical Turk platform to participate in Experiment 2 (see Materials and methods: Experiment 2). We excluded participants who missed 10 or more responses in either the reward gain or reward loss version of the volatility task (the task is detailed further below); this left 147 participants (n = 147; 65 females) whose data were included in the analyses. Participants filled out the Spielberger State-Trait Anxiety Inventory (STAI), the Mood and Anxiety Symptoms Questionnaire (MASQ), and the Beck Depression Inventory (BDI), showing similar distributions of scores across these three measures to participants in Experiment 1 (see Appendix 1—table 1 and Appendix 1—table 3). Participants’ scores on the three internalizing latent factors were calculated using the factor loadings estimated in experiment 1 for items from this subset of measures. As can be seen in Figure 1, participants in experiment 2 (denoted by dark gray os) showed a similar range of scores on the three factors to participants in experiment 1 despite no attempt being made to specifically recruit individuals who met diagnostic criteria for anxiety or depressive disorders.

As noted above, participants only completed three of the original questionnaires to reduce fatigue, incomplete datasets and participant drop-out. To check that factor scores could be reliably estimated using only items from this subset of the original questionnaires, we calculated factor scores using factor loadings estimated in experiment 1 and either the full or reduced set of questionnaire items. For this analysis, we pooled the questionnaire data from experiment 1 (n = 86) together with the online dataset used for the confirmatory factor analysis (n = 199). We correlated the factor scores calculated using either the full or reduced item set. This revealed little change in scores for the general factor (r = 0.97) or the depression-specific factor (r = 0.97). Scores on the anxiety-specific factor were only moderately correlated when using loadings from the full versus reduced set of items (r = 0.41); this likely reflects the omission of the PSWQ from the reduced questionnaire set. These differences reflect those seen between prior studies in the literature, where loadings on the general and depression factors are fairly consistent, but the anxiety factor can either reflect symptoms of anxious arousal, worry or both, with this varying as a function of measures included (Clark et al., 1994; Steer et al., 1999; Steer et al., 2008; Brodbeck et al., 2011). For the general factor and depression-specific factor, the high correlations between factor scores obtained using the full and reduced item set puts us in a strong position to draw conclusions across experiments 1 and 2. This is less true for the anxiety-specific factor. Our hypotheses center on the general factor; however, we include all scores on all three factors in the hierarchical model fitting procedure for consistency with experiment 1.

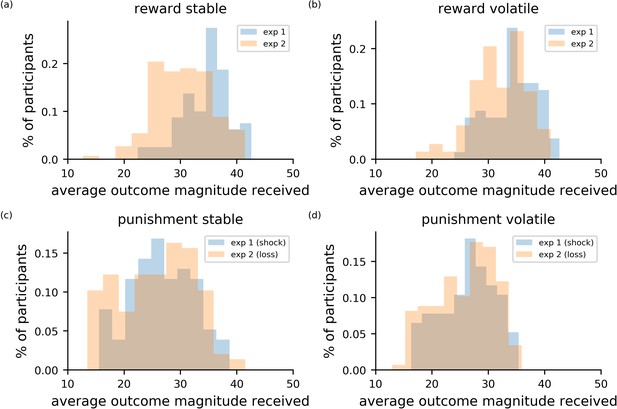

Each participant completed two versions of the probabilistic decision-making under volatility task (Browning et al., 2015): a reward gain and a reward loss task. The reward gain task was closely based on the in-lab reward task, differing only in the timing of intra- and inter-trial intervals, which were shortened slightly for the online version, and in the calculation of monetary bonuses (see Materials and methods). The reward loss task was parallel to the reward gain task, except that participants were allocated points at the start of the task and tried to avoid losing their points throughout the task. In this version of the task, outcome magnitudes corresponded the amount of points that would be subtracted from the point total. Participants’ general level of performance on these online versions of the volatility tasks, as indexed by average magnitude of outcomes received across trials, was broadly similar to that observed in experiment 1 (see Appendix 5—figure 1).

Experiment 2 results: general factor scores are linked to reduced adjustment of learning rate to volatility across both reward loss and reward gain. As in experiment 1, this was primarily observed following better-than-expected outcomes

At a group level, learning rates were credibly higher following good relative outcomes (reward, no loss) versus bad relative outcomes (no reward, loss) ( = 0.52, 95%-HDI = [0.42,0.71]. There was no effect of task version on learning rate = 0.13, 95%-HDI = [−0.02,0.28]. In contrast to experiment 1, there was also no credible group-level effect of block type on learning rate ( = 0.04, 95%-HDI = [−0.1,0.17], see Figure 5—figure supplement 1.

Although there was no effect of block type at the group level, in line with predictions, there was a credible interaction of block type by scores on the general factor: that is, the difference in learning rate between volatile and stable blocks, was inversely correlated with scores on the general factor ( = −0.16, 95%-HDI = [−0.32,–0.01]), Figure 5a. As in experiment 1, individuals with low general factor scores showed greater adjustment of learning rate to volatility, learning faster in the volatile block than the stable block, than participants with high general factor scores. Also as in experiment 1, neither depression nor anxiety-specific factor scores credibly modulated adjustment of learning rate to volatility (): = −0.03, 95%-HDI = [−0.14,0.08]; = 0.01, 95%-HDI = [−0.14,0.12], Figure 5—figure supplement 2.

Experiment 2: Effect of the general factor scores on learning rate (online sample, n = 147).

Panel (a) shows posterior means and 95% highest posterior density intervals (HDI) for the effect of general factor scores () on each of the learning rate components. Replicating findings from experiment 1, general factor scores credibly modulated the extent to which learning rate varied between the stable and volatile task block ( = −0.16, 95%-HDI = [−0.32,–0.01]) and the extent to which this in turn varied as a function of relative outcome value ( = −0.14, 95%-HDI = [−0.27,–0.02]). (b) Here, we illustrate learning rate as a function of each within-subject condition and high (+1 standard deviation, shown in red) versus low (−1 standard deviation, shown in blue) scores on the general factor. As in experiment 1, participants will low general factor scores showed a boost in learning under volatile conditions following receipt of outcomes of good relative value (reward gain or no reward loss). Once again, this boost is not evident in participants with high general factor scores. Small data points represent posterior mean parameter estimates for individual participants. Large points represent the posterior mean learning rates expected for participants with scores ± 1 standard deviations above or below the mean on the general factor. Error bars represent the posterior standard deviation for these expected learning rates. As in experiment 1, there were no cross-group effect of task version (gain - loss) and no effect of general factor scores, or of anxiety- or depression- specific factor scores, on learning components involving task version (gain - loss). As in experiment 1, baseline rates of learning were highly variable. Results for anxiety and depression specific factor scores are shown in Figure 5—figure supplement 2.

Once again, our second question of interest was whether deficits in adaptation of learning rate to volatility linked to elevated internalizing symptomatology would be domain general or domain specific. We examined if the relationship between scores on the three internalizing factors and the effect of block type (volatile, stable) on learning rate varied as a function of task version (reward gain, reward loss). As in experiment 1, none of the corresponding three-way interactions were statistically credible: general factor by block type by task version: = −0.06, 95%-HDI = [−0.17, 0.05]; anxiety-specific factor by block type by task version: = 0.01, 95%-HDI = [−0.09,0.1]; or depression-specific factor by block type by task version: = −0.03, 95%-HDI = [−0.13,0.09]. In addition, scores on the three internalizing factors did not credibly modulate the main effect of task version (reward gain, reward loss) upon learning rate: = −0.04, 95%-HDI = [−0.14,0.20]; = −0.15, 95%-HDI = [−0.28,0.0]; = 0.01, 95%-HDI = [−0.14,0.16].

Our third question of interest was whether deficits in adaptation of learning rate to volatility linked to elevated internalizing symptoms would vary depending on whether outcomes were better or worse than expected. Here, we replicated the finding from experiment 1. Specifically, general factor scores modulated the interaction of block type (volatile, stable) and relative outcome value (good, bad) upon learning rate, , = −0.14, 95%-HDI = [−0.27,–0.02], Figure 5a. As in experiment 1, individuals with low scores on the general internalizing factor showed greater increases in learning rates in the volatile relative to stable task block following better-than-expected versus worse-than-expected outcomes. This boost in learning from positive predictions errors under volatile conditions was absent in individuals with high general factor scores; as in experiment 1, if anything, individuals with high general factor scores’ learning after positive outcomes was reduced under volatile relative to stable conditions, Figure 5b. In contrast to these findings, neither scores on the anxiety-specific factor nor on the depression-specific factor credibly modulated the interaction of block type by relative outcome value on learning rate ( = 0.08, 95%-HDI = [−0.02,0.18]; = 0.01, 95%-HDI = [−0.09,0.1]), Figure 5—figure supplement 2.

These findings, alongside those from experiment 1, indicate that a failure to boost learning when contingencies are volatile, especially following a better than expected outcome, is a shared characteristic of both anxiety and depression. We note that, unlike in experiment 1, scores on the general factor were not associated with increased overall learning for good versus bad outcomes, (i.e. a main effect of relative outcome value, independent of its interaction with block type), = −0.04, 95%-HDI = [−0.20, 0.13], Figure 5a.

Other model parameters

The behavioral model contained five other parameters (inverse temperature , mixture weight for probability versus magnitude , choice kernel update rate , choice kernel inverse temperature , subjective magnitude ). We did not have a priori hypotheses pertaining to the relationship between these parameters and scores on the three internalizing factors. Hence, we looked for statistically credible effects that replicated across both experiments 1 and 2.

Participants as a group relied on outcome probability more during attempts to obtain reward (reward gain) than during attempts to avoid punishment (shock in experiment 1, reward loss in experiment 2); the 95% posterior intervals excluded zero for the difference in mixture weight by task version for both experiment 1 (mixture weight; µ = 0.26, 95%-HDI = [0.01,0.49]) and for experiment 2 (mixture weight; µ = 0.29, 95%-HDI = [0.02,0.55]). Excluding the learning rate results already presented, we did not observe any statistically credible associations between parameter values and scores on the three internalizing factors that replicated across both experiments 1 and 2.

Discussion

We examined how adaptation of probabilistic decision-making to contingency volatility varied as a function of internalizing symptomology. Through bifactor analysis of item-level responses to standardized questionnaire measures of anxiety and depression, we estimated participants’ scores on a general latent factor of internalizing symptomatology as well as their scores on anxiety-specific and depression-specific factors. We modeled participants’ performance on alternate versions of a probabilistic decision-making under volatility task using a hierarchical Bayesian framework and population-level parameters that captured variance attributable to each of the three internalizing factors. In experiment 1, participants including individuals diagnosed with GAD and MDD performed reward gain and aversive versions of the task. In experiment 2, participants recruited online from Amazon Mechanical Turk performed reward gain and reward loss versions of the task. Across both experiments, we observed that high scores on the general factor were associated with reduced adjustment of learning rate to volatility. No parallel relationship was observed for scores on the anxiety or depression-specific factors. These findings are in line with the contention that impoverished adaptation of learning to contingency volatility is linked to the common component of anxiety and depressive symptomatology.

An important, logical, next question concerns the generality of this impairment. In the current study, we addressed this by examining whether impoverished adaptation of learning to volatility in individuals with high scores on the general factor holds for both rewarding and punishing outcomes. In experiment 1, we examined performance on parallel versions of the task using financial reward gain or electrical stimulation (shock) as outcomes; in experiment 2, we compared task performance when outcomes entailed financial reward gain or financial reward loss. Neither experiment found any evidence to suggest that the relationship between impoverished adaptation of learning rate to volatility and scores on the general internalizing factor was modulated by outcome domain. Across all three versions of the task, individuals with low general factor scores showed greater adaptation of learning rate to volatility than individuals with high general factor scores. This suggests that the impairment in adjusting learning to volatility linked to elevated internalizing symptoms generalizes across both reward and punishment learning.

It has previously been suggested that anxiety might be especially associated with altered learning regarding punishment (Lissek et al., 2005), whereas depression might be especially associated with altered learning regarding reward (Elliott et al., 1997; Steele et al., 2007). However, recent reviews have suggested that evidence for the latter, at least, is far from clear cut (Robinson and Chase, 2017). In the case of the volatility task used here, two previous studies found elevated trait anxiety to be linked to impoverished learning rate adjustment when outcomes involved punishment (electric stimulation or financial loss; Browning et al., 2015; Pulcu and Browning, 2017). For outcomes involving reward gain, the correlations between trait anxiety and learning rate adaptation to volatility were not credibly different from zero but also not credibly different to that observed with punishing outcomes (Browning et al., 2015; Pulcu and Browning, 2017). The small sample size of these prior studies means that only effects of moderate size were detectable. In addition, as touched on in the Introduction, the measure of anxiety used (the STAI trait scale) does not provide a particularly pure measure of anxiety-specific affect, also showing moderate-to-high correlations with measures of depression. In the current study, our larger sample size and bifactor analysis of internalizing symptoms enables us to better clarify whether anxiety-specific or depression-specific symptoms are linked to differential adaptation of learning rate to volatility as a function of outcome domain.

Considering anxiety first, we found no evidence to support a relationship between anxiety-specific symptoms and enhanced learning following punishing versus rewarding outcomes or to suggest a differential relationship between anxiety-specific symptoms and adaptation of learning to volatility when outcomes are punishing versus rewarding. We note that omission of PSWQ administration in experiment 2 limited the range of anxiety symptoms that could be captured in that experiment. However, it was experiment 1 that critically used electrical stimulation as punishing outcomes (this, as opposed to reward loss, is arguably the version of punishment where anxiety-specific effects are most expected) and this revealed no credible relationship between anxiety-specific symptoms and differences in learning rate as a function of task version (reward, aversive) or volatility by task version. Further, supplementary analyses using residual scores on the PSWQ or MASQ-AA, controlling for variance explained by the general factor, in place of scores on the anxiety-specific factor also reveal no credible relationship between anxiety-specific symptoms and differences in learning rate as a function of task version (reward, aversive) or volatility by task version (see Appendix 6—figure 1 and Appendix 6—figure 2).

There was also no evidence to support a relationship between depression-specific symptoms and differential adaptation of learning to volatility as a function of outcome domain. These findings, together with those for the general factor reviewed above, suggest that the deficit in adapting learning rate to volatility is linked to symptom variance common to anxiety and depression and is general to both learning about reward and learning about punishment. We note that, in experiment 1, there was a trend toward depression-specific factor scores being linked to higher learning rates, in general, in the aversive versus reward version of the task. This did not reach significance and no equivalent effect was apparent in experiment 2. This might however be worth further exploration in future work.

Our third question of interest was whether impairment in adapting learning to volatility might differentially impact learning from better-than-expected versus worse-than expected outcomes. In the task used here, one shape predicts receipt of reward gain, loss or shock at 75–80% and the other at 20–25%; these contingencies are either stable or volatile depending on the block (following Behrens et al., 2007 and Browning et al., 2015, we used slightly more extreme probabilities in the volatile block to balance performance between conditions). Since outcome probability is always greater than 0 and less than 1, outcomes of relative positive value (reward gain, no shock, no reward loss) will be better than expected, to a varying extent, and should generate a positive prediction error. Similarly, outcomes of relative negative value (no reward gain, shock, reward loss) will be worse than expected, to a varying extent, and should generate a negative prediction error. It is widely accepted that both positive and negative prediction errors are used to inform our estimates of outcome probability—that is, we learn from both better-than-expected and worse-than-expected outcomes. However, learning need not be symmetric. Here, across both experiments, we observed that individuals with low scores on the general internalizing factor mainly showed a volatility-related boost to learning from positive prediction errors. This selective boost to learning from positive prediction errors when contingencies were volatile was not shown by individuals with high scores on the general internalizing factor.

In the current task, asymmetry in learning from positive versus negative prediction errors does not confer a performance advantage given that both outcome types are equally fully informative—receiving a good outcome following selection of shape 1 means shape 2 would have resulted in a bad outcome and vice versa. However, asymmetric learning from good versus bad outcomes under volatility might be valuable in many real-world situations. Conceivably, when the world changes, there might normally be fewer ways of performing well than performing badly, so learning more substantially from the former could be advantageous (Huys et al., 2015). As such, enhanced learning from positive prediction errors when the world is volatile might reflect a learning style acquired over time by individuals with low general factor scores and applied by default in new situations, such as the current tasks.

Given the ubiquity of volatility in the real-world, our findings suggest that the development of interventions aimed at assisting participants to better distinguish contingency noise from contingency volatility, and to adjust decisions accordingly, might be useful in treatment of both anxiety and depression. An important question is whether impairment in adapting learning rate to volatility is specific to internalizing psychopathology or might represent an even more general deficit, perhaps one than also characterizes individuals with high levels of externalizing or psychotic symptomatology. We hope to address this in future work. Understanding this will enable us to more broadly identify patients likely to benefit from cognitive interventions aimed at improving decision-making under different sources of uncertainty, in particular contingency noise versus contingency volatility. A second important future avenue entails research aimed at increasing our understanding of, and development of interventions for, deficits in decision-making uniquely linked to anxiety or depression-specific symptoms. The hope is that such interventions might valuably complement ones targeted at deficits linked to the general component of internalizing psychopathology.

In summary, our findings provide support for the contention that impoverished adjustment of learning rate to volatility is broadly linked to internalizing psychopathology. Bifactor modeling of internalizing symptoms and hierarchical Bayesian modeling of task performance enabled us to establish that this deficit is linked to symptoms shared by both anxious and depressed individuals, that is, to scores on a general internalizing factor, with no additional relationship being observed for anxiety or depression specific symptoms. The relationship between general factor scores and impaired adaptation of learning to volatility was observed in a sample including participants with anxiety and depressive disorders as well as in an online sample where no diagnostic interviews or pre-screening on symptoms was conducted. Further, this relationship held regardless of whether outcomes entailed receipt of reward, shock or reward loss. This speaks to the generality of the deficit in question. Intriguingly, individuals with low general factor scores were found to predominantly boost learning under volatility following better-than-expected outcomes. In other words, they learnt more from positive than from negative prediction errors under conditions of high contingency volatility. It is possible that in everyday life, a generally adaptive strategy when the world is rapidly changing is to learn from successful actions. The ability to do this could potentially confer resilience when faced with environments characterized by second-order uncertainty. In contrast, for individuals with high levels of internalizing symptoms, the inability to adjust behavior depending on whether unexpected outcomes are likely due to contingency noise or to contingency volatility might well comprise decision-making across multiple settings; this could explain findings that self-reported intolerance of uncertainty is elevated in individuals with both anxiety and depressive disorders. More generally, we believe that greater specification of the manner in which deficits in decision-making, and other cognitive functions, map onto latent dimensions of psychopathology will play an important role in the advancement of personalized medicine, allowing for empirically based stratification of individuals who participate in treatment trials, prediction of individuals at risk of clinically significant psychopathology, and development of interventions aimed at remediating core cognitive and computational deficits.

Materials and methods

Experiment 1

Participants

Potential participants of both sexes, between the ages of 18 and 55, were recruited continuously from the local community for a period of one year and 6 months between March 2015 and August 2016. Advertisements in local newspapers and on local mailing lists (e.g. Oxford University mailing lists) together with flyers at local primary care practices and geographically constrained Facebook advertisements were used to recruit participants with generalized anxiety disorder (GAD) and major depressive disorder (MDD). Diagnoses were determined using the research version of the Structured Clinical Interview for DSM-IV-TR (SCID) administered by trained staff and supervised by an experienced clinical psychologist. We excluded participants if they were currently receiving pharmacological treatment or had been prescribed psychotropic medication or taken non-prescribed psychoactive drugs (i.e. street drugs) within the past 3 months. Participants reporting a history of neurological disease or meeting diagnostic criteria for PTSD, OCD, bipolar disorder, schizophrenia or other psychotic disorders, or substance abuse were also excluded. In parallel, we recruited a healthy control group screened using the SCID to ensure they did not meet diagnostic criteria for any DSM-IV-TR Axis I disorder. Here, participants were also excluded if they reported a history of neurological disease or usage of psychoactive drugs (legal or illegal) within the last 3 months.

One-hundred and eight individuals came in for the SCID screening session. Of these, 42 individuals did not meet inclusion criteria and 8 individuals declined to participate in the subsequent experimental sessions. Our final participant sample (n = 58) comprised 12 participants who met diagnostic criteria for GAD, 20 participants who met diagnostic criteria for MDD (three of whom had a secondary diagnosis of GAD), and 26 healthy control participants.

We also included within our final sample an additional 30 participants who had been recruited from the local community to perform the same tasks in the context of an fMRI study. These participants showed broadly the same age-range and sex ratio as the patient and control groups (see Appendix 1—table 1). Here, potential participants were excluded if they reported a prior diagnosis of neurological or psychiatric illness other than GAD or MDD. In addition, participants reporting usage of psychoactive medication or street-drugs were also excluded.

We excluded data from either the reward gain or aversive version of the probabilistic decision-making under volatility task if there was equipment malfunction or if a participant reported after the session that they did not understand the task. In experiment 1, we excluded data from the aversive version of the volatility task from eight participants (three participants with MDD, one participant with GAD, and four control participants). Data from the reward gain version of the volatility task were excluded for six participants (two participants with GAD, one participant with MDD, two control participants, and one community member participant). Only two participants (both control subjects) had data excluded from both tasks. These exclusions left 86 participants in total. Power calculations indicated a sample size of 75 or higher would give 95% power to obtain effect sizes similar to that observed in our earlier work relating adaptation of learning in the aversive version of the volatility task to trait anxiety (Browning et al., 2015). See Appendix 1—table 1 for participant details by task.

Experimental procedure

Request a detailed protocolExperiment 1 was approved by the Oxford Central University Research Ethics Committee (CUREC) and carried out at the Wellcome Centre for Integrative Neuroimaging (WIN) within the John Radcliffe Hospital in compliance with CUREC guidelines. Written informed consent was obtained from each participant prior to participation. Participants recruited by community advertisement into the GAD and MDD patient groups or into the healthy control group were initially screened by phone. This was followed by an in-person screening session during which informed consent was obtained and the Structured Clinical Interview for DSM-IV-TR (SCID) was administered. Individuals meeting criteria for inclusion in the study were invited back for two additional sessions. During the second session, participants completed standardized self-report measures of anxiety and depression and then completed the aversive (shock) version of the volatility task. During the third session, participants completed the reward gain version of the volatility task. The second and third sessions were separated by at least 1 day and no more than 1 week. All three sessions were conducted within the Nuffield Department for Clinical Neurosciences at the John Radcliff Hospital. Participants were paid at a fixed rate of £20 per session and were also given a bonus of up to £10 based on their performance in the reward gain version of the volatility task.

To increase the number of participants and to fill in the spread of symptoms, 30 additional community-recruited participants (aged between 18 and 40 years, 14 females) were included in the sample for experiment 1. These participants were not administered the SCID, but any individuals reporting a history of psychiatric or neurological conditions were excluded as were individuals on psychotropic medication or taking illegal psychotropic agents. These participants completed the aversive and reward gain versions of the volatility task during two fMRI scanning sessions conducted a week apart in the Wellcome Centre for Integrative Neuroimaging at the John Radcliffe Hospital. Questionnaires were administered at the beginning of each of these sessions.

Self-report measures of internalizing symptoms

Request a detailed protocolParticipants completed standardized self-report measures of anxiety and depression. Measures included the Spielberger State-Trait Anxiety Inventory (STAI form Y; Spielberger et al., 1983), the Beck Depression Inventory (BDI; Beck et al., 1961), the Mood and Anxiety Symptoms Questionnaire (MASQ; Clark and Watson, 1995; Watson and Clark, 1991), the Penn State Worry Questionnaire (Meyer et al., 1990), and the Center for Epidemiologic Studies Depression Scale (CESD; Radloff, 1977). In addition, we administered the 80-item Eysenck Personality Questionnaire (EPQ; Eysenck and Eysenck, 1975) to be able to include items from the Neuroticism subscale in our bifactor analysis.

Exploratory bifactor analysis

View detailed protocolA bifactor analysis was conducted on item level responses (n = 128) to the MASQ anhedonia subscale, the MASQ anxious arousal subscale, the STAI trait subscale, the BDI, the CESD, the PSWQ, and the EPQ neuroticism (N) subscale. Item responses were either binary (0–1), quaternary (0–4), or quinary (0–5). Response categories that were endorsed by fewer than 2% of participants were collapsed into the adjacent category to mitigate the effects of extreme skewness. Reverse-scoring was implemented prior to inclusion of items in the bifactor analysis to facilitate interpretation of factor loadings. Polychoric correlations were used to adjust for the fact that categorical variables cannot have correlations across the full range of −1 to 1 (Jöreskog, 1994).